- The paper introduces CENTaUR, a finetuned LLaMA model that uses psychological experiment data to simulate human decision-making.

- It fits a linear layer on top of LLM embeddings, achieving lower negative log-likelihoods than traditional cognitive models on both decisions from descriptions and decisions from experience.

- Model simulations and a hold-out evaluation indicate that CENTaUR generalizes to a new task and captures individual differences, underscoring its potential in cognitive research.

Turning LLMs into Cognitive Models

Introduction

The paper "Turning LLMs into cognitive models" (arXiv:2306.03917) proposes turning large language models (LLMs) into cognitive models by finetuning them on data from psychological experiments. The authors examine whether finetuned LLMs can represent human behavior accurately, outperform traditional cognitive models, and predict human decisions in a task they were not finetuned on. The results suggest that pre-trained LLMs, when appropriately adapted, can serve as generalist cognitive models, with implications for cognitive psychology and the behavioral sciences.

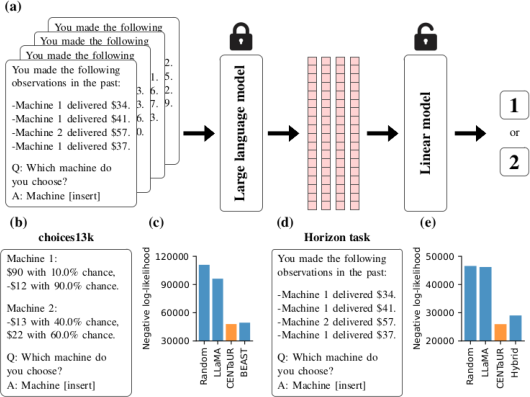

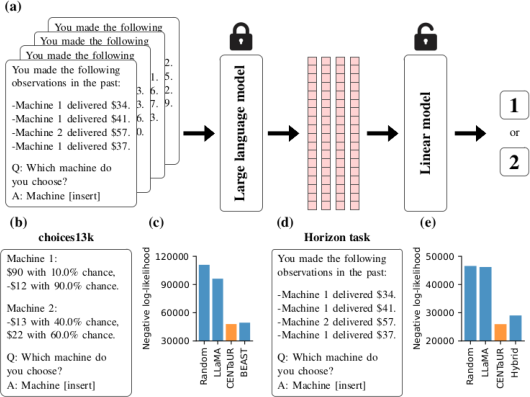

Figure 1: Illustration of our approach and main results.

Finetuning LLMs

The central premise of the paper is that finetuning an LLM, specifically LLaMA, on domain-specific behavioral data can close the gap between the model's non-human-like behavior and human cognitive processes. LLaMA, which is available in sizes of up to 65 billion parameters, was chosen because it is publicly available and gives full access to its pre-trained weights and architecture. By extracting embeddings from the LLM and fitting a linear layer on top of them, the researchers developed a model termed CENTaUR, named after the centaur for its blend of computational and human attributes.
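The embedding-plus-linear-layer idea described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes the linear layer is a logistic-regression readout predicting a binary choice, and it replaces real LLaMA embeddings with random toy vectors. The names `fit_linear_readout` and `predict_proba`, the learning rate, and the regularization strength are all hypothetical.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))


def fit_linear_readout(embeddings, choices, lr=0.5, epochs=500, l2=1e-3):
    """Fit logistic-regression weights on frozen embeddings (batch gradient descent)."""
    dim = len(embeddings[0])
    n = len(embeddings)
    weights = [0.0] * dim
    bias = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * dim
        grad_b = 0.0
        for x, y in zip(embeddings, choices):
            err = sigmoid(dot(weights, x) + bias) - y
            for j in range(dim):
                grad_w[j] += err * x[j]
            grad_b += err
        # The L2 penalty is an assumption standing in for whatever
        # regularization a real fit would use.
        weights = [w - lr * (g / n + l2 * w) for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict_proba(weights, bias, x):
    """Predicted probability of choosing option 1 for one embedding."""
    return sigmoid(dot(weights, x) + bias)


# Toy stand-in for LLM embeddings: 200 random 4-dimensional vectors whose
# choices are sampled from a known (hypothetical) linear rule.
rng = random.Random(0)
true_w = [1.5, -2.0, 0.5, 0.0]
embeddings = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(200)]
choices = [1 if rng.random() < sigmoid(dot(true_w, x)) else 0 for x in embeddings]

weights, bias = fit_linear_readout(embeddings, choices)
```

Only the readout is trained here; the embeddings stay fixed, which is what keeps the approach cheap relative to finetuning the full language model.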

The researchers studied two decision-making paradigms that are central to the psychological literature: decisions from descriptions and decisions from experience. The data came from the choices13k data set and the horizon task, respectively, and embeddings extracted from LLaMA were used to predict human choices. CENTaUR outperformed conventional cognitive models on both tasks, as measured by lower negative log-likelihoods.
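For readers unfamiliar with the metric, the negative log-likelihood of a set of binary choices can be computed as in the sketch below. The predicted probabilities are made up for illustration and are not results from the paper; the function name `negative_log_likelihood` is hypothetical.

```python
import math


def negative_log_likelihood(pred_probs, choices, eps=1e-12):
    """Sum of -log p(observed choice) over trials; lower means a better fit.

    pred_probs[i] is the model's probability of choosing option 1 on trial i,
    and choices[i] is the observed choice (1 or 0).
    """
    total = 0.0
    for p, y in zip(pred_probs, choices):
        p = min(max(p, eps), 1.0 - eps)  # guard against log(0)
        total -= math.log(p) if y == 1 else math.log(1.0 - p)
    return total


observed = [1, 0, 1, 1]

# A model that guesses at chance assigns 0.5 to every choice.
chance_nll = negative_log_likelihood([0.5] * len(observed), observed)

# Illustrative probabilities from a model that tracks the observed choices.
informed_nll = negative_log_likelihood([0.8, 0.3, 0.7, 0.9], observed)
```

A random-guessing model scores `n * log(2)` for `n` binary trials, which gives a natural reference point when comparing models.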

Model Simulations and Human-Like Characteristics

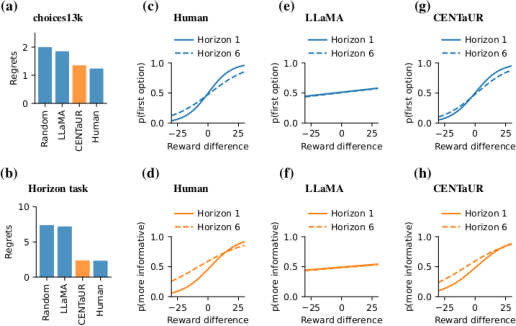

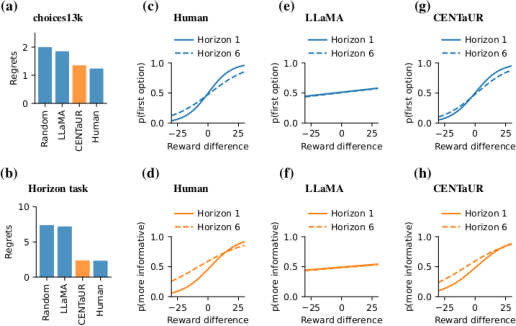

To check whether CENTaUR behaves in a human-like way, the authors ran extensive model simulations. The simulated behavior closely matched human data: for example, CENTaUR's regret scores were closer to those of human participants than the scores of the unmodified LLaMA model. An analysis of exploratory behavior further showed that CENTaUR does not follow a purely exploitative strategy but adapts its exploration in a manner comparable to human participants.

Figure 2: Model simulations showcase human-like performance in experimental tasks.
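The regret comparison mentioned above can be illustrated with a small simulation. This sketch assumes that regret on a trial is the expected value of the best option minus the expected value of the chosen option; the paper's exact definition may differ. The gambles, the choice probabilities, and the name `simulate_mean_regret` are hypothetical.

```python
import random


def expected_value(gamble):
    """Expected value of a gamble given as (probability, outcome) pairs."""
    return sum(p * x for p, x in gamble)


def simulate_mean_regret(problems, prob_choose_b, seed=0):
    """Sample one choice per problem and return the mean regret.

    problems is a list of (gamble_a, gamble_b) pairs and prob_choose_b[i]
    is the simulated agent's probability of picking gamble_b on problem i.
    """
    rng = random.Random(seed)
    total = 0.0
    for (gamble_a, gamble_b), p_b in zip(problems, prob_choose_b):
        ev_a = expected_value(gamble_a)
        ev_b = expected_value(gamble_b)
        chosen_ev = ev_b if rng.random() < p_b else ev_a
        total += max(ev_a, ev_b) - chosen_ev
    return total / len(problems)


# Two toy problems: a sure amount (A) versus a risky gamble (B).
problems = [
    ([(1.0, 3.0)], [(0.5, 10.0), (0.5, 0.0)]),  # EV: A = 3, B = 5
    ([(1.0, 4.0)], [(0.2, 10.0), (0.8, 0.0)]),  # EV: A = 4, B = 2
]

always_best = simulate_mean_regret(problems, [1.0, 0.0])
always_worst = simulate_mean_regret(problems, [0.0, 1.0])
```

An agent that always maximizes expected value has zero regret, so regret above zero measures how far simulated (or human) choices fall from that benchmark.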

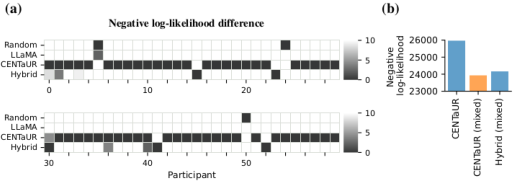

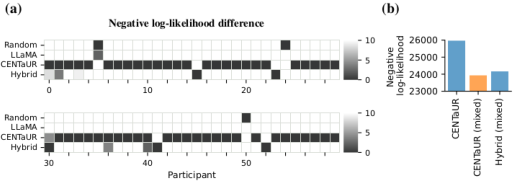

The study also explored CENTaUR's capacity to account for individual differences among participants by incorporating a random-effects model structure in its finetuning process. The results highlighted CENTaUR's versatility in accurately modeling individual behaviors without sacrificing fit quality. The approach illuminates the potential of LLM embeddings to encapsulate diverse cognitive traits on a granular participant level, reinforcing their suitability for complex decision modeling.

Figure 3: Visualization of individual differences captured by CENTaUR models.
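One simple way to picture a random-effects structure is a shared set of weights plus a participant-specific offset that is shrunk toward zero. The sketch below uses only a per-participant random intercept with an L2 penalty standing in for a Gaussian prior; this is an illustrative simplification, not the mixed-effects model fitted in the paper. All names, the toy data, and the hyperparameters are assumptions.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def fit_random_intercepts(features, choices, participants, n_participants,
                          lr=0.5, epochs=300, shrinkage=0.01):
    """Shared weights plus one shrunken intercept per participant."""
    dim = len(features[0])
    n = len(features)
    shared_w = [0.0] * dim
    offsets = [0.0] * n_participants
    for _ in range(epochs):
        grad_w = [0.0] * dim
        grad_u = [0.0] * n_participants
        for x, y, s in zip(features, choices, participants):
            logit = sum(w * xi for w, xi in zip(shared_w, x)) + offsets[s]
            err = sigmoid(logit) - y
            for j in range(dim):
                grad_w[j] += err * x[j]
            grad_u[s] += err
        shared_w = [w - lr * g / n for w, g in zip(shared_w, grad_w)]
        # The shrinkage term pulls each participant's offset toward zero.
        offsets = [u - lr * (g / n + shrinkage * u)
                   for u, g in zip(offsets, grad_u)]
    return shared_w, offsets


# Toy data: participant 0 is biased toward option 1, participant 1 against it.
rng = random.Random(1)
true_bias = [2.0, -2.0]
features, choices, participants = [], [], []
for s in range(2):
    for _ in range(100):
        x = [rng.gauss(0, 1)]
        p = sigmoid(1.0 * x[0] + true_bias[s])
        features.append(x)
        choices.append(1 if rng.random() < p else 0)
        participants.append(s)

shared_w, offsets = fit_random_intercepts(features, choices, participants, 2)
```

The shared weights capture what is common across people, while the offsets absorb stable individual tendencies. A fuller version would place such offsets on the embedding weights as well.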

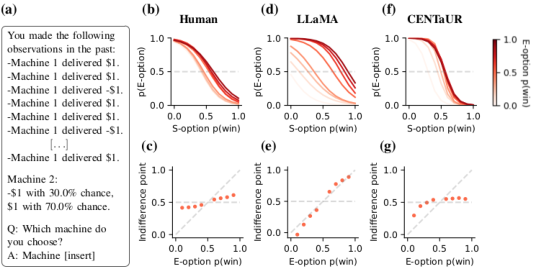

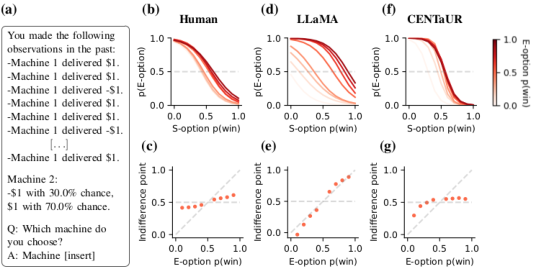

Evaluating Generalization on Hold-Out Tasks

A critical test for CENTaUR was whether its predictions generalize to a novel task outside its finetuning domain. The model was finetuned on the combined data sets and then evaluated on data from the experiential-symbolic task, where it fit the data better and predicted qualitative behavior more accurately than the comparison models. CENTaUR reproduced the human preference for symbolic options even though this task was not part of its finetuning data, which indicates that the approach can generalize.

Figure 4: Hold-out task evaluation reveals effective generalization to new tasks.

Discussion

The successful conversion of LLMs into cognitive models could have a substantial impact on research at the intersection of AI and psychology. By building on foundation models, as CENTaUR does with LLaMA, researchers can work toward domain-general models that emulate a wide range of human decision-making processes. Future work aims to extend the finetuning approach to many more psychological tasks, potentially culminating in a unified model of human cognition.

In conclusion, adapting LLMs into cognitive models opens a new direction for AI research, with possible applications in experimental design and policy development within the behavioral sciences. As these models evolve, explainability techniques may provide deeper insight into the cognitive processes they capture and support applications in real-world contexts.