Semi-supervised Multimodal Representation Learning through a Global Workspace

Abstract: Recent deep learning models can efficiently combine inputs from different modalities (e.g., images and text) and learn to align their latent representations, or to translate signals from one domain to another (as in image captioning, or text-to-image generation). However, current approaches mainly rely on brute-force supervised training over large multimodal datasets. In contrast, humans (and other animals) can learn useful multimodal representations from only sparse experience with matched cross-modal data. Here we evaluate the capabilities of a neural network architecture inspired by the cognitive notion of a "Global Workspace": a shared representation for two (or more) input modalities. Each modality is processed by a specialized system (pretrained on unimodal data, and subsequently frozen). The corresponding latent representations are then encoded to and decoded from a single shared workspace. Importantly, this architecture is amenable to self-supervised training via cycle-consistency: encoding-decoding sequences should approximate the identity function. For various pairings of vision-language modalities and across two datasets of varying complexity, we show that such an architecture can be trained to align and translate between two modalities with very little matched data (4 to 7 times less than a fully supervised approach requires). The global workspace representation can be used advantageously for downstream classification tasks and for robust transfer learning. Ablation studies reveal that both the shared workspace and the self-supervised cycle-consistency training are critical to the system's performance.
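
The cycle-consistency idea in the abstract can be illustrated with a minimal sketch. Everything here is an assumption made for illustration, not the authors' implementation: the class `Workspace`, the modality keys `"v"` and `"t"`, the plain linear encoders and decoders, and the mean-squared-error loss are all hypothetical. The inputs stand in for latent vectors produced by frozen unimodal models, and no training step is shown; the code only evaluates the losses that a training loop would minimize.

```python
import random


def linear(weights, x):
    """Apply a weight matrix (a list of rows) to the vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def mse(a, b):
    """Mean squared error between two equal-length vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) / len(a)


def random_matrix(rows, cols, rng):
    """Small random weights; a real model would learn these."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


class Workspace:
    """Hypothetical shared workspace with one encoder and decoder per modality."""

    def __init__(self, dim_v, dim_t, dim_w, seed=0):
        rng = random.Random(seed)
        # Encoders map a unimodal latent into the shared workspace.
        self.enc = {"v": random_matrix(dim_w, dim_v, rng),
                    "t": random_matrix(dim_w, dim_t, rng)}
        # Decoders map a workspace vector back to a unimodal latent.
        self.dec = {"v": random_matrix(dim_v, dim_w, rng),
                    "t": random_matrix(dim_t, dim_w, rng)}

    def encode(self, x, modality):
        return linear(self.enc[modality], x)

    def decode(self, w, modality):
        return linear(self.dec[modality], w)

    def translate(self, x, src, dst):
        """Encode from `src` into the workspace, then decode into `dst`."""
        return self.decode(self.encode(x, src), dst)


def translation_loss(ws, x, y, src, dst):
    """Supervised term: needs a matched pair (x, y)."""
    return mse(ws.translate(x, src, dst), y)


def cycle_loss(ws, x, src, via):
    """Self-supervised term: src -> via -> src should return x (no pair needed)."""
    round_trip = ws.translate(ws.translate(x, src, via), via, src)
    return mse(round_trip, x)


if __name__ == "__main__":
    ws = Workspace(dim_v=3, dim_t=4, dim_w=2)
    vision_latent = [1.0, 2.0, 3.0]      # unmatched sample: cycle loss only
    text_latent = [0.5, -0.5, 0.0, 1.0]  # used here as a matched partner
    print("cycle loss:", cycle_loss(ws, vision_latent, "v", "t"))
    print("translation loss:",
          translation_loss(ws, vision_latent, text_latent, "v", "t"))
```

In this sketch only `translation_loss` requires matched data, while `cycle_loss` can be computed on any unimodal sample, which is the property the abstract relies on for semi-supervised training.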

Explain it Like I'm 14

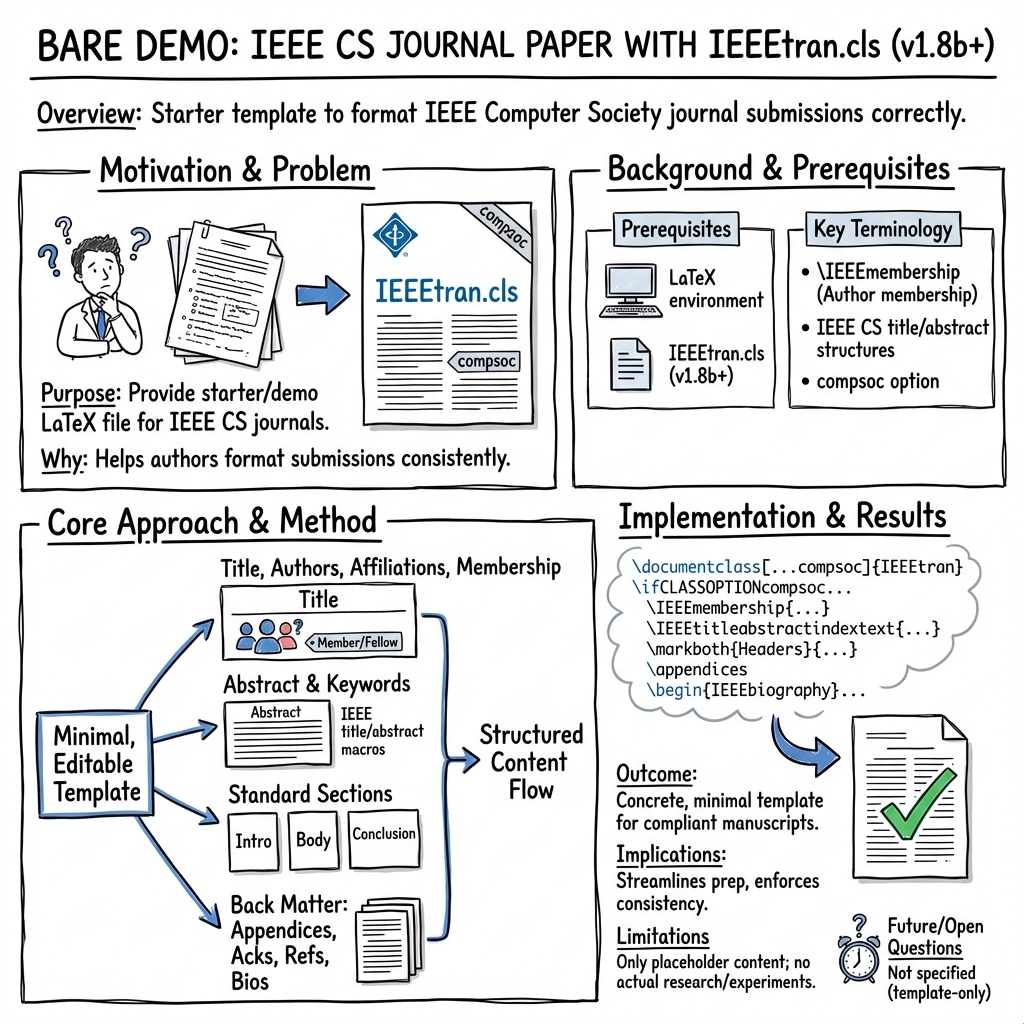

Overview

This paper is not a normal research study. Instead, it’s a “starter file” that shows how to write and format a journal article using a tool called LaTeX and a specific style called IEEEtran for the IEEE Computer Society. Think of it like a well-designed template that writers can fill in to make sure their paper looks professional and follows the rules.

What questions or goals does the paper have?

The main goals are simple:

- Show authors how to set up a LaTeX document for IEEE Computer Society journals.

- Demonstrate the typical parts of a research paper (like the title, abstract, sections, references, and author bios).

- Provide a basic example that people can copy and use as a starting point.

How did the authors do this?

Instead of doing experiments, the authors created a sample LaTeX file using the “IEEEtran” document class. In everyday terms:

- LaTeX is a typesetting system—a tool for writing papers that handles all the layout and formatting (like a smart word processor for scientists).

- A “document class” is like a set of rules or a style guide that controls how the paper looks.

- IEEE is a big organization for engineers and computer scientists. Their journals have strict formatting standards, and IEEEtran is the official style for those standards.

The paper includes example sections so you can see how to structure a real article:

- Title and author information

- Abstract (a short summary)

- Keywords

- Introduction, subsections, and subsubsections

- Conclusion

- Appendices

- Acknowledgments

- References (the bibliography)

- Author biographies

It also shows some special LaTeX commands that help with indexing, section headings, and the overall layout.

What are the main results?

There aren’t scientific findings here. The “results” are that the template:

- Works as a clean, ready-to-use starting point for an IEEE Computer Society journal paper.

- Demonstrates where each part of a paper should go.

- Makes it easier for authors to follow the required formatting without guessing.

This is important because journals often reject or delay papers that don’t follow their formatting rules. A good template saves time and reduces mistakes.

Why does this matter?

- It helps researchers focus on their ideas rather than worrying about formatting.

- It keeps all papers in the journal consistent and easy to read.

- It makes the submission process smoother, which can speed up publishing.

What is the impact?

If more authors use templates like this:

- Journals will receive well-formatted papers, making editors’ and reviewers’ jobs easier.

- Students and new researchers can learn the standard structure of scientific papers.

- Scientific communication becomes clearer and more professional, helping good ideas spread faster.

In short, this paper is a practical guide: it gives you the “recipe” for how an IEEE Computer Society journal paper should look, so you can focus on the “meal”—your research.
