- The paper presents an empirical evaluation that contrasts practitioners’ assumptions with actual test case effectiveness across eight quality hypotheses.

- It applies rigorous metrics including cyclomatic complexity and lines of code from 42 open-source repositories to assess test case performance.

- The findings challenge conventional wisdom in software testing, suggesting that intuitive quality measures do not always ensure effective bug detection.

Test Case Quality: An Empirical Study on Belief and Evidence

Introduction

Software testing is a crucial aspect of the software development lifecycle aimed at ensuring software quality by identifying defects. The paper "Test Case Quality: An Empirical Study on Belief and Evidence" (2307.06410) investigates the assumptions held by software practitioners regarding test case quality and contrasts them with empirical evidence gathered from open-source repositories.

The paper addresses an important question: what truly defines a high-quality test case? Despite previous studies proposing numerous hypotheses based on practitioners' beliefs, these hypotheses often lack empirical support. This study evaluates eight hypotheses concerning test case quality by analyzing data from prominent software repositories.

Methodology

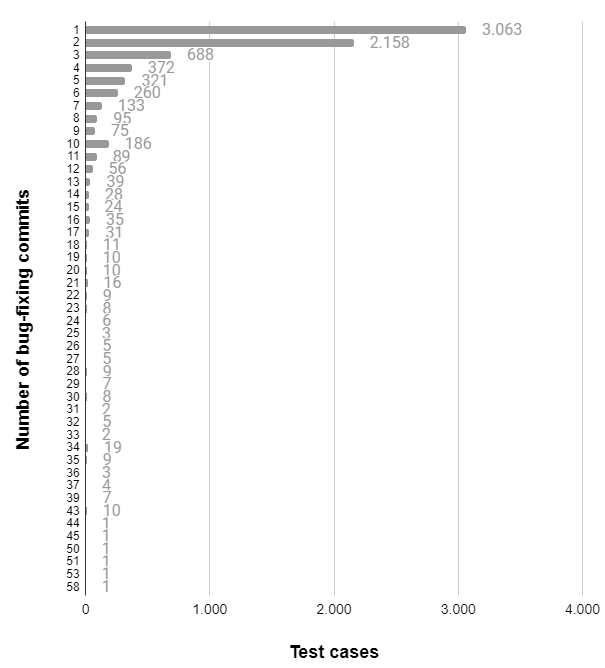

The study employs a rigorous empirical approach, analyzing data from 42 well-established open-source repositories, which are considered to implement high software engineering standards. The methodology involves examining bug-fixing commits in the software's version history as a metric for test case effectiveness. Several static and dynamic metrics are computed to quantify characteristics related to each hypothesis.

The hypotheses span various dimensions such as atomicity, coupling, exception handling, size, complexity, coverage, and readability. The paper provides detailed descriptions for each metric, explaining their relevance to test case quality and their role in validating or refuting the hypotheses.

Results

The empirical analysis yields intriguing results, challenging the widely held beliefs about test case quality. Only one hypothesis finds partial support: large test cases being difficult to understand and maintain is weakly supported by a positive correlation between cyclomatic complexity and lines of code. Most other hypotheses fail to align with the empirical data.

Implications and Threats to Validity

The results suggest that practitioners' beliefs regarding what constitutes a good test case may not lead to effective bug detection. This disconnect calls for a reevaluation of best practices in software testing and urges a critical view of the subjective guidelines often followed in the industry.

The paper acknowledges potential threats to validity, including limitations in data acquisition, project selection bias, and the challenge of fully capturing the nuances of each hypothesis through automated metrics.

Conclusions

The findings indicate a need for a paradigm shift in understanding test case quality. While traditional beliefs provide good software engineering advice, they do not guarantee improved test effectiveness. This study encourages researchers to explore new avenues for assessing test quality and highlights the importance of empirical validation of conventional wisdom in software testing.

Future research should explore alternative quality metrics and explore more nuanced relationships between test characteristics and software reliability. As software systems grow increasingly complex, refining our understanding of what makes a test case truly effective is critical for advancing software quality assurance practices.