- The paper introduces EfficientNet backbones into U-Net++ to significantly improve building extraction accuracy.

- The study uses the Massachusetts Buildings Dataset; the EfficientNet-B4 backbone gives the best results (92.23% accuracy, 88.32% IoU).

- Results underscore improved segmentation performance for urban planning, though challenges like shadows remain for future research.

Introduction

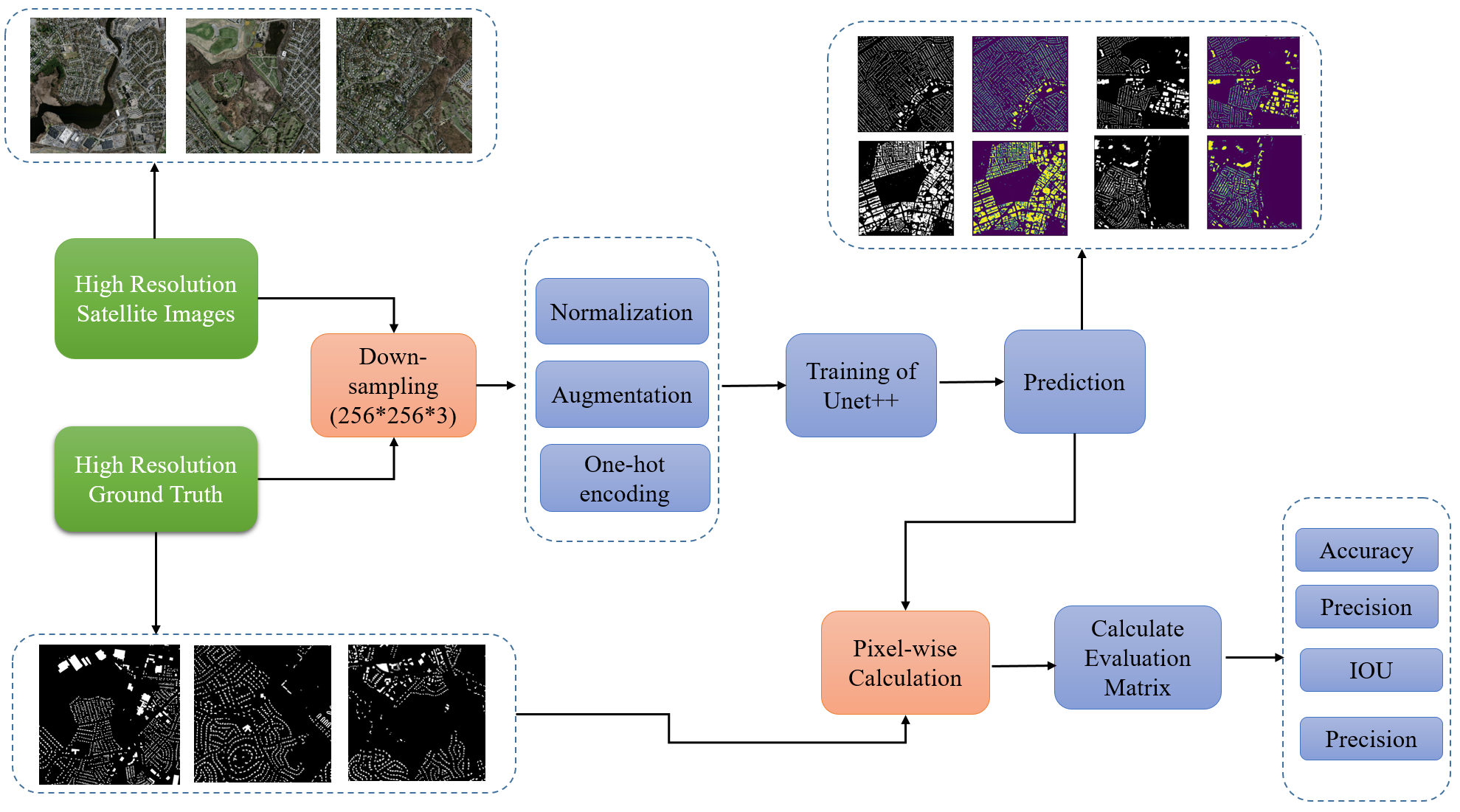

The advent of high-resolution satellite imagery has significantly enhanced our ability to perform automatic building extraction, a crucial task in remote sensing for applications such as urban planning and population density estimation. However, challenges such as noise, occlusion, and complex backgrounds remain. U-Net++, an extension of the traditional U-Net, seeks to address these issues by introducing densely nested skip-connections and deep supervision, enhancing feature extraction accuracy. In this study, EfficientNet backbones were incorporated into U-Net++ to improve building extraction efficacy and efficiency from satellite images.

Dataset

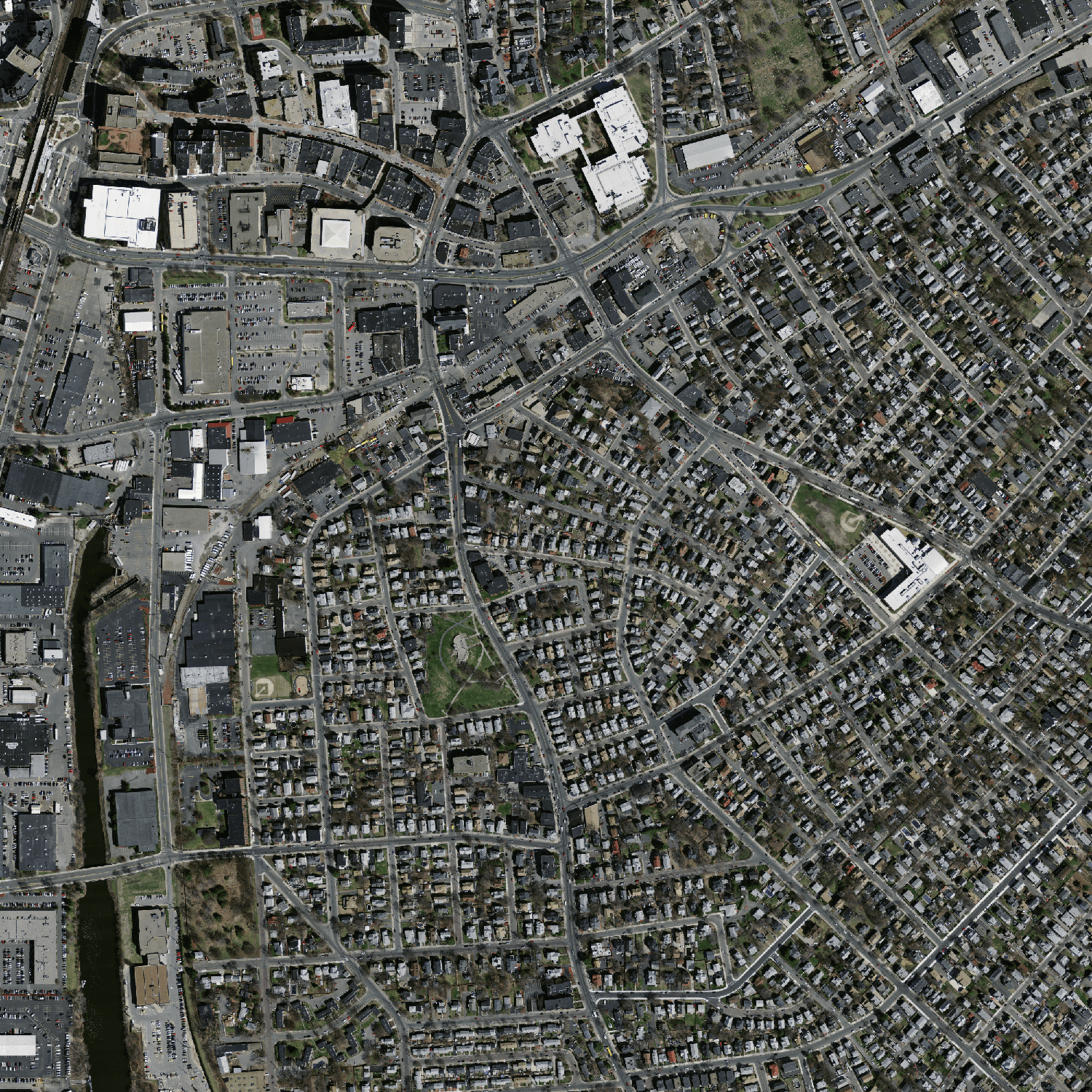

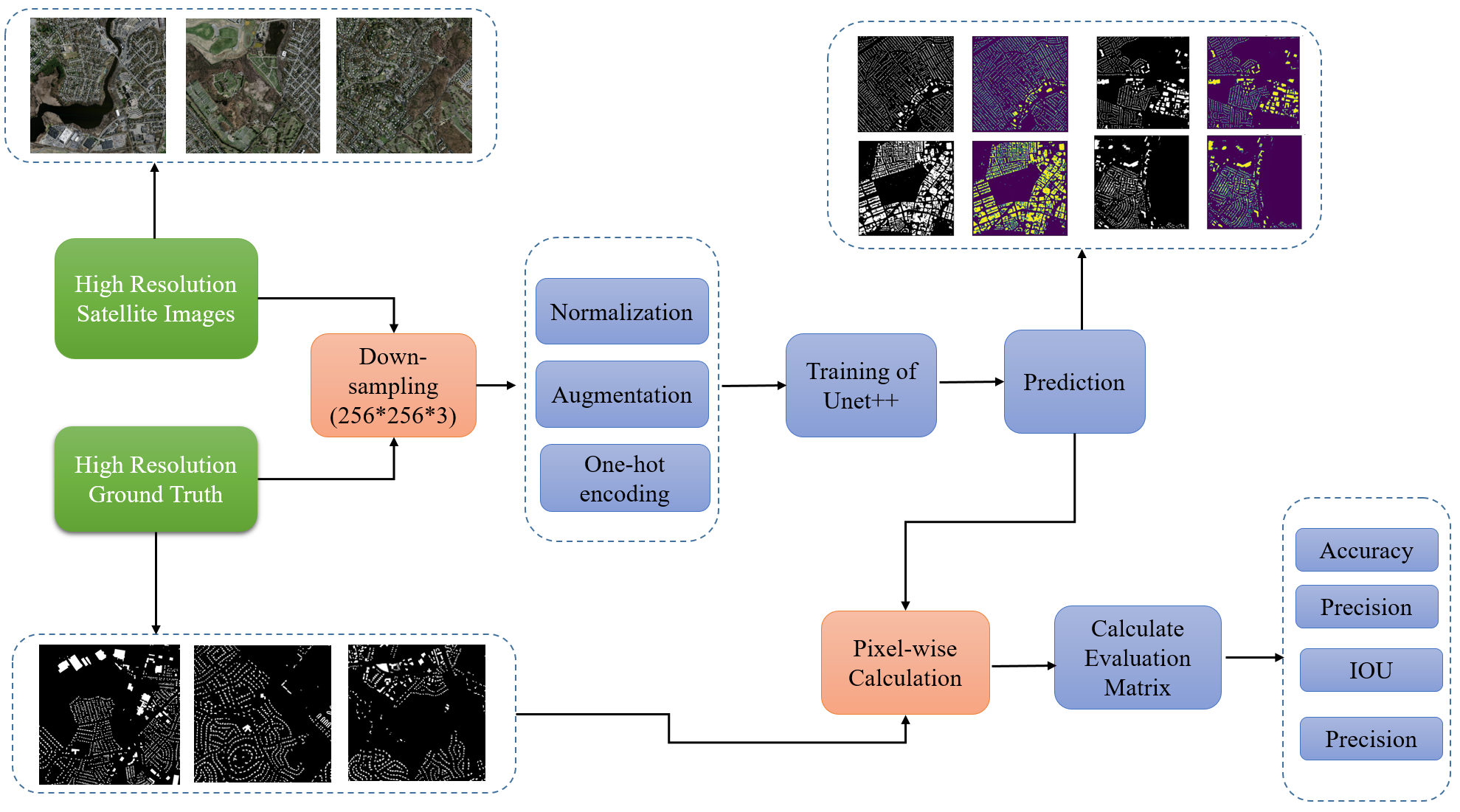

The study uses the Massachusetts Buildings Dataset, which comprises 151 aerial images of 1500×1500 pixels each, split into training, validation, and test sets. Preprocessing down-samples each image to 256×256 pixels and normalizes pixel values to the range [-1, 1].
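The preprocessing step can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the source does not say which interpolation method was used, so nearest-neighbour sampling is an assumption here, as are the 8-bit input range and the hypothetical function name `preprocess`.

```python
import numpy as np


def preprocess(image: np.ndarray, size: int = 256) -> np.ndarray:
    """Down-sample an H x W (x C) uint8 image to size x size and scale it to [-1, 1].

    Assumption: nearest-neighbour sampling; the paper's resampling method is not stated.
    """
    h, w = image.shape[:2]
    # Pick evenly spaced source rows and columns (nearest-neighbour down-sampling).
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    small = image[rows[:, None], cols]
    # Map the 8-bit range 0..255 linearly onto -1..1.
    return small.astype(np.float32) / 127.5 - 1.0


tile = np.zeros((1500, 1500, 3), dtype=np.uint8)  # stand-in for one dataset image
x = preprocess(tile)  # array of shape (256, 256, 3), dtype float32
```

The same index sampling can be applied to the target masks so that images and labels stay aligned, although masks would normally be kept as 0/1 values rather than rescaled to [-1, 1].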

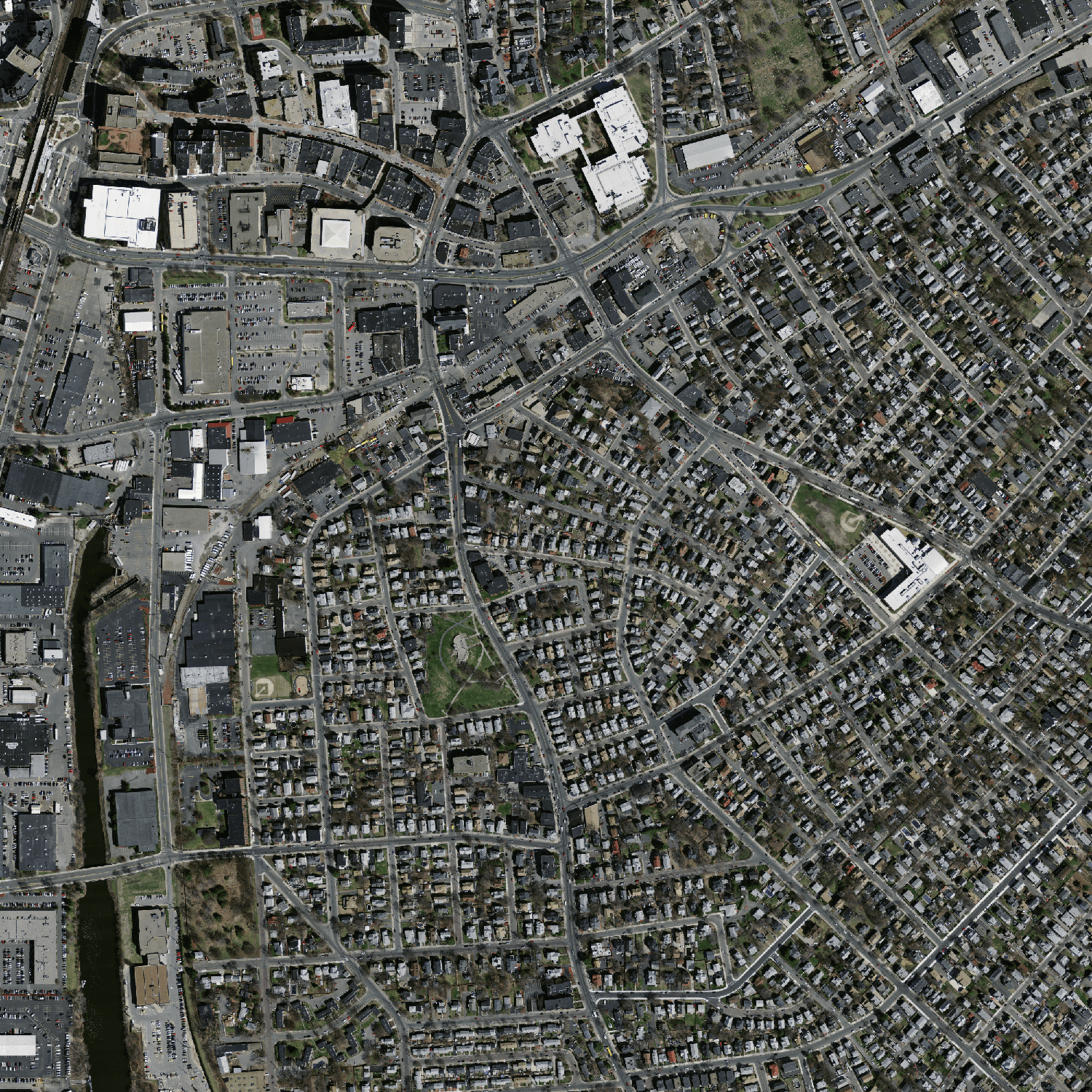

Figure 1: Example images from the dataset: (a-c) input images and (d-f) target images.

Methodology

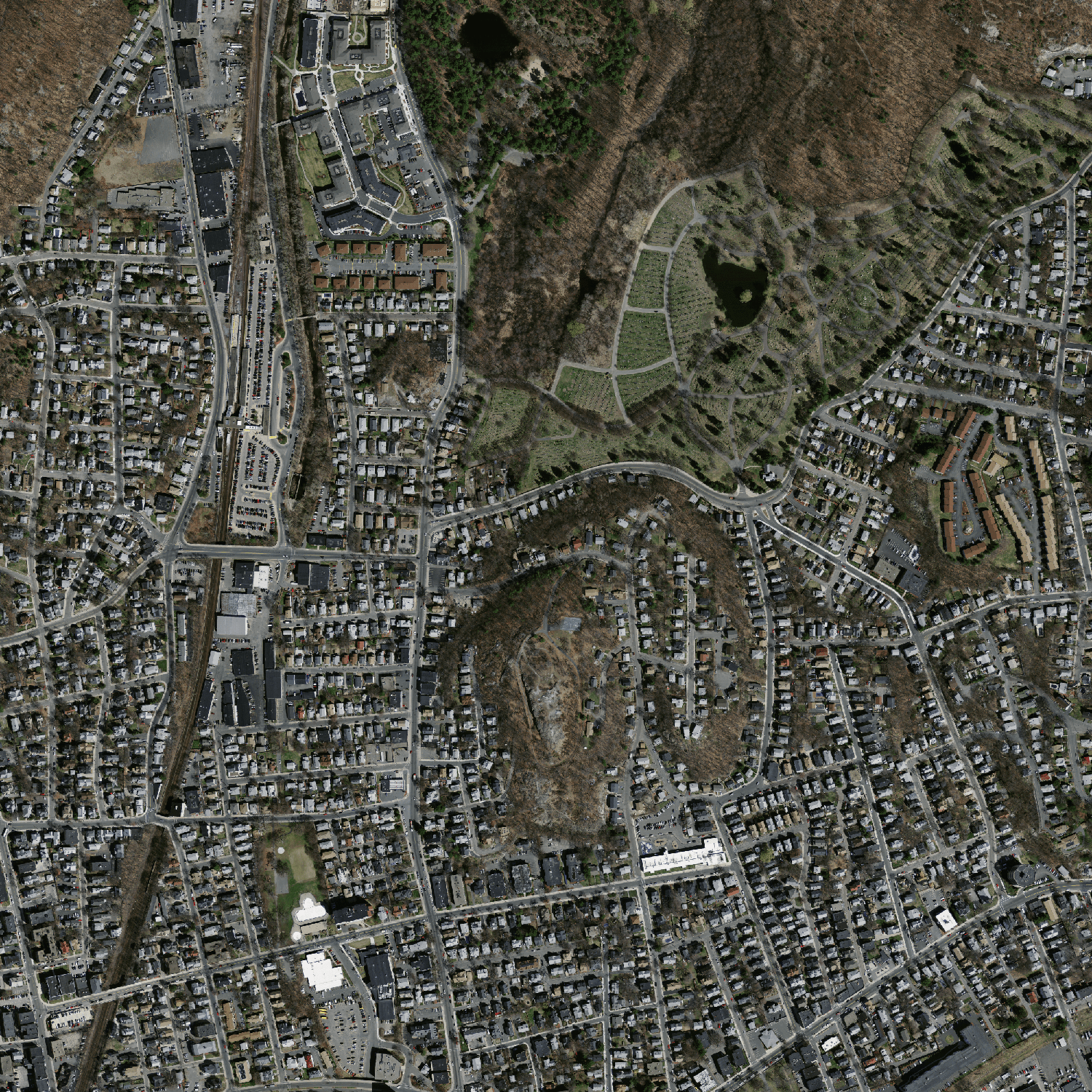

U-Net++

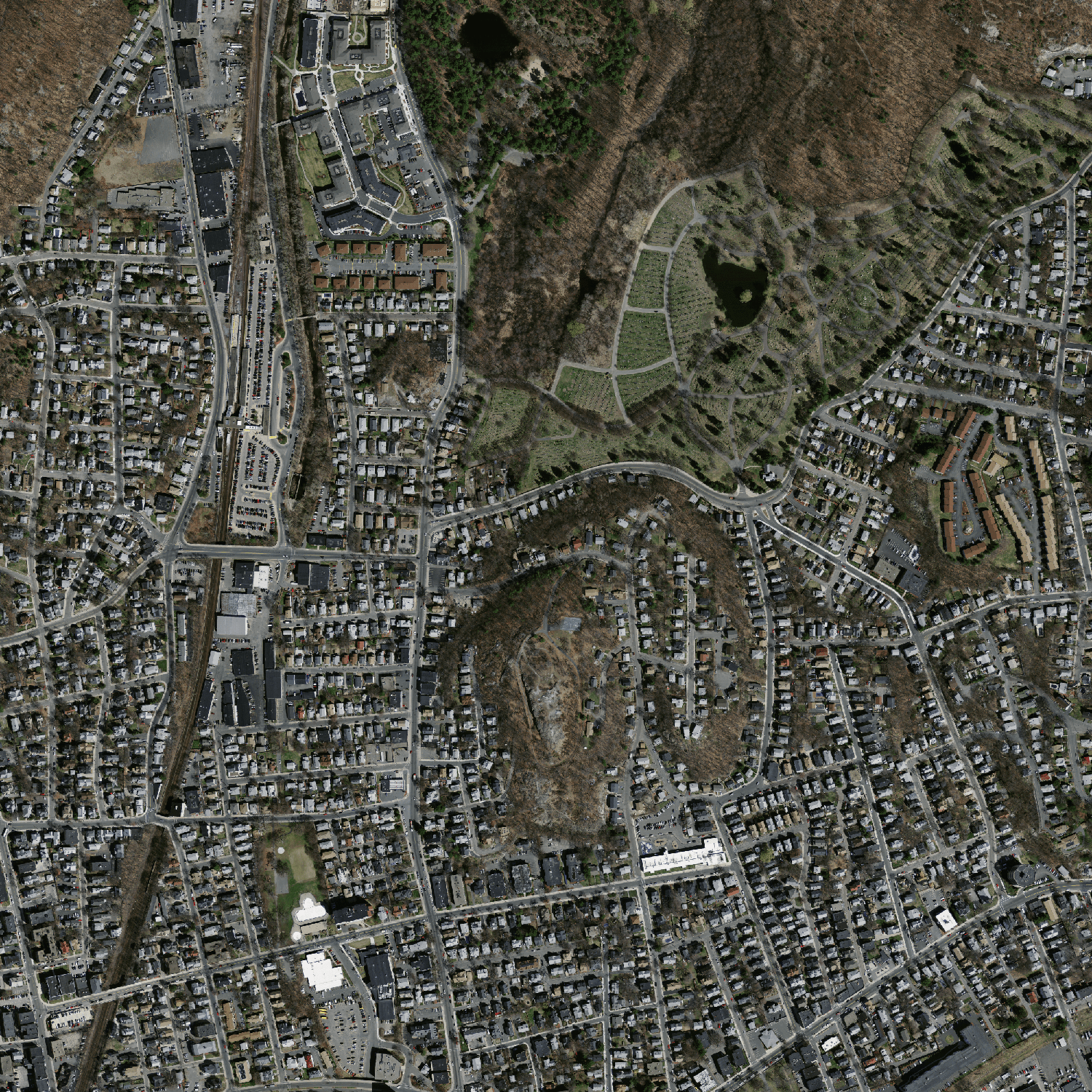

U-Net++ enhances the traditional U-Net by using redesigned skip connections to bridge the semantic gap between encoder and decoder stages, fostering better feature map integration. Its key features include densely connected encoder-decoder paths and deep supervision, enabling superior accuracy in semantic segmentation tasks.
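To make the nested skip connections concrete, the sketch below lists which feature maps feed each node in the U-Net++ grid, following the node definition in the original U-Net++ paper: a node X(i, j) at depth i and skip position j receives every earlier node on the same level plus the up-sampled node from one level deeper. The function name `node_inputs` and the string labels are illustrative only.

```python
def node_inputs(i: int, j: int) -> list[str]:
    """Return labels of the feature maps that feed node X(i, j) in a U-Net++ grid.

    i: depth level (0 = full resolution); j: position along the skip pathway
    (j = 0 is the encoder column).
    """
    if j == 0:
        # Encoder column: fed by the down-sampled node from the level above.
        return ["input image"] if i == 0 else [f"down(X({i - 1},0))"]
    # Dense skip pathway: all earlier nodes on the same level ...
    same_level = [f"X({i},{k})" for k in range(j)]
    # ... concatenated with the up-sampled node from one level deeper.
    return same_level + [f"up(X({i + 1},{j - 1}))"]


# List the intermediate and decoder nodes of a network with 5 levels (i + j <= 4).
for i in range(5):
    for j in range(1, 5 - i):
        print(f"X({i},{j}) <- {node_inputs(i, j)}")
```

For instance, `node_inputs(0, 2)` returns X(0,0), X(0,1), and the up-sampled X(1,1). A plain U-Net would connect X(0,0) directly to the final decoder node on that level. With deep supervision, a loss can be attached to each of the top-level nodes X(0, j) for j ≥ 1.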

Figure 2: U-Net++ vs. U-Net architecture.

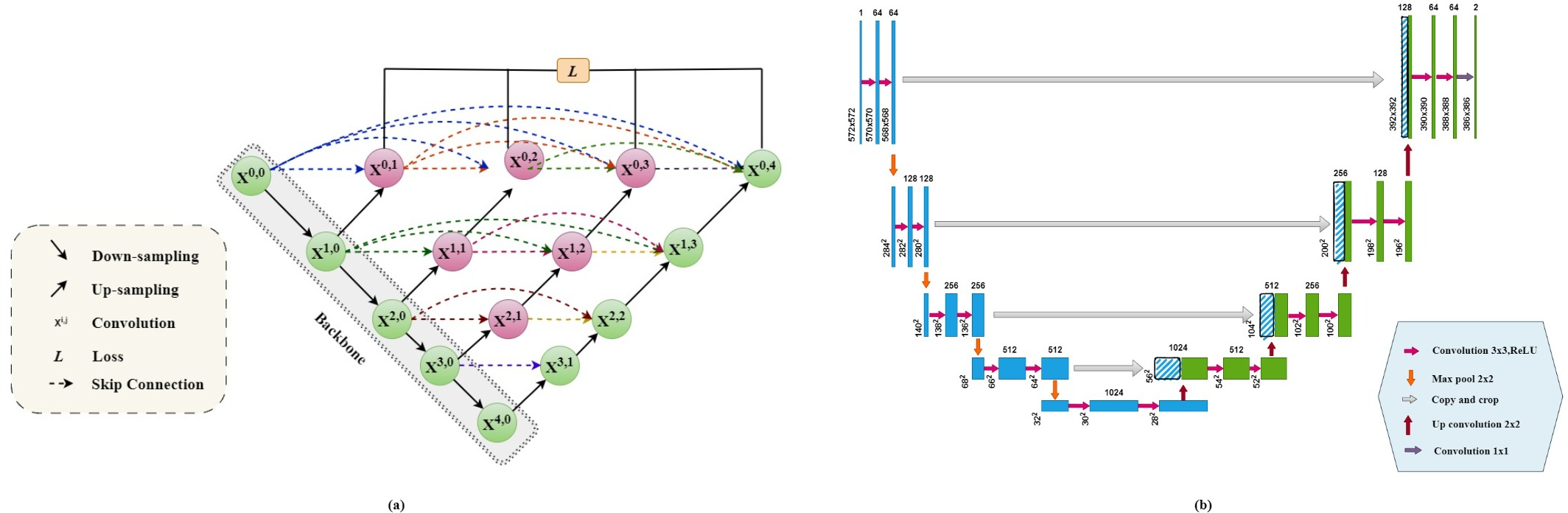

EfficientNet

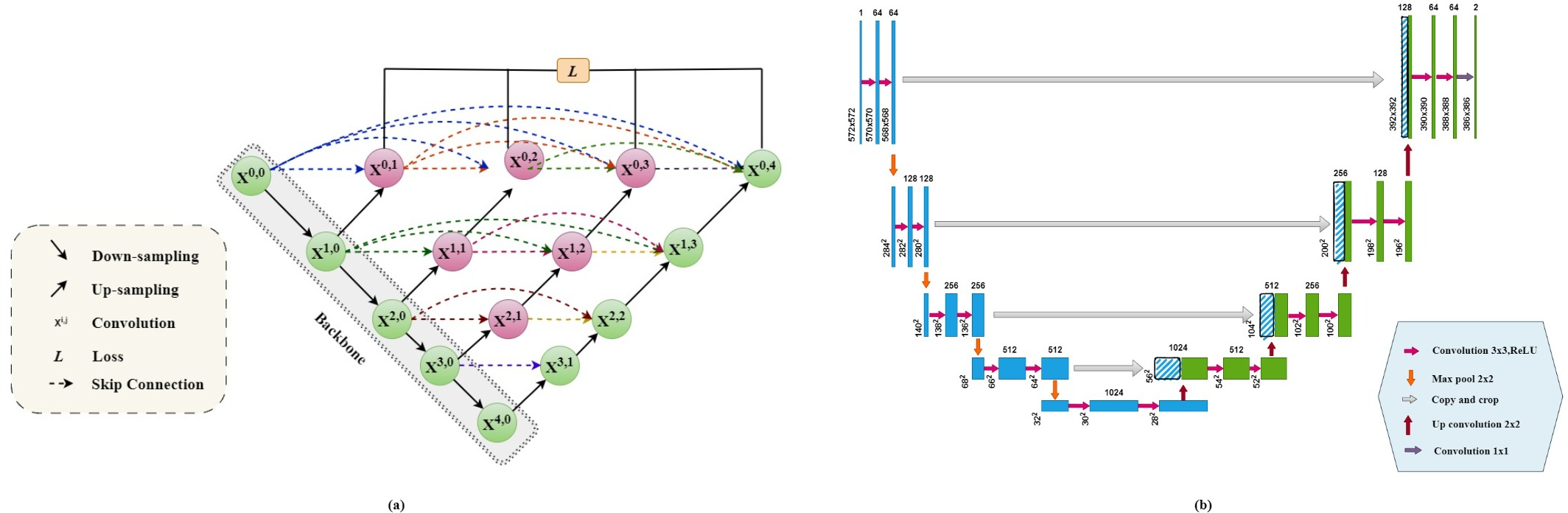

EfficientNet utilizes a compound scaling method which jointly scales depth, width, and resolution to increase accuracy and efficiency over previous CNN models like ResNet. This makes it particularly suitable as a backbone for U-Net++ in this study.
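Compound scaling can be illustrated with a few lines of arithmetic. In the EfficientNet paper, a single coefficient φ scales depth, width, and resolution as α^φ, β^φ, and γ^φ, with base values α = 1.2, β = 1.1, γ = 1.15 chosen so that α·β²·γ² ≈ 2, meaning each increment of φ roughly doubles the FLOPs. The sketch below only evaluates that formula; the function name is hypothetical, and the released B1-B7 variants use rounded coefficients that may not match these values exactly.

```python
# Base multipliers reported in the EfficientNet paper (depth, width, resolution).
ALPHA, BETA, GAMMA = 1.2, 1.1, 1.15


def compound_scale(phi: float) -> tuple[float, float, float]:
    """Return (depth, width, resolution) multipliers for compound coefficient phi."""
    return ALPHA ** phi, BETA ** phi, GAMMA ** phi


for phi in range(5):
    depth, width, resolution = compound_scale(phi)
    # FLOPs grow roughly with depth * width^2 * resolution^2.
    flops = depth * width ** 2 * resolution ** 2
    print(f"phi={phi}: depth x{depth:.2f}, width x{width:.2f}, "
          f"resolution x{resolution:.2f}, FLOPs x{flops:.2f}")
```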

Figure 3: EfficientNet model architecture.

Proposed Architecture

Using EfficientNet as the U-Net++ backbone aims to improve accuracy while keeping model complexity in check. Combining EfficientNet's compound-scaled encoder with the dense skip connections of U-Net++ is intended to improve feature extraction and robustness in segmentation tasks. Each EfficientNet-based U-Net++ variant was evaluated, and its performance was analyzed in real-world scenarios.

Figure 4: Research and Evaluation Workflow.

Result Analysis

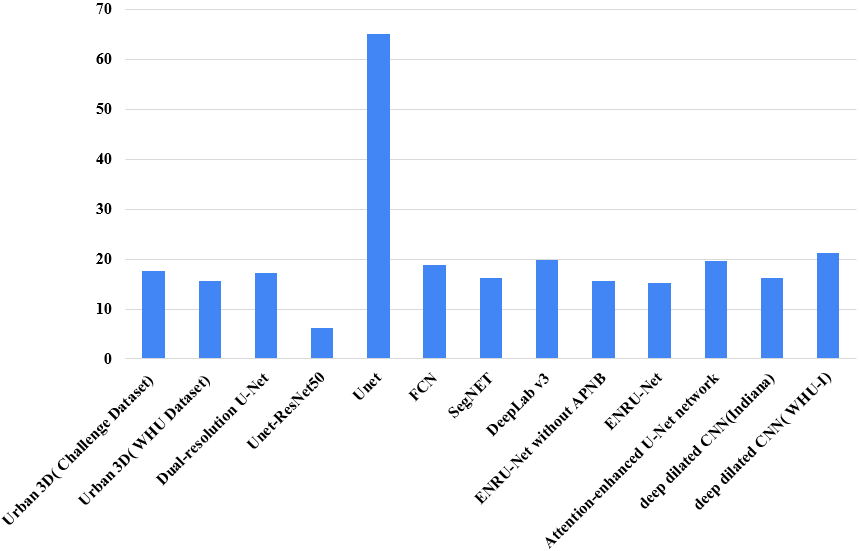

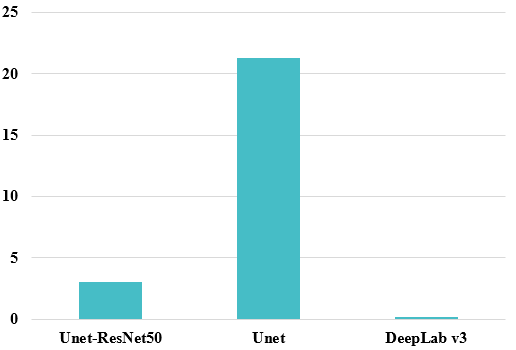

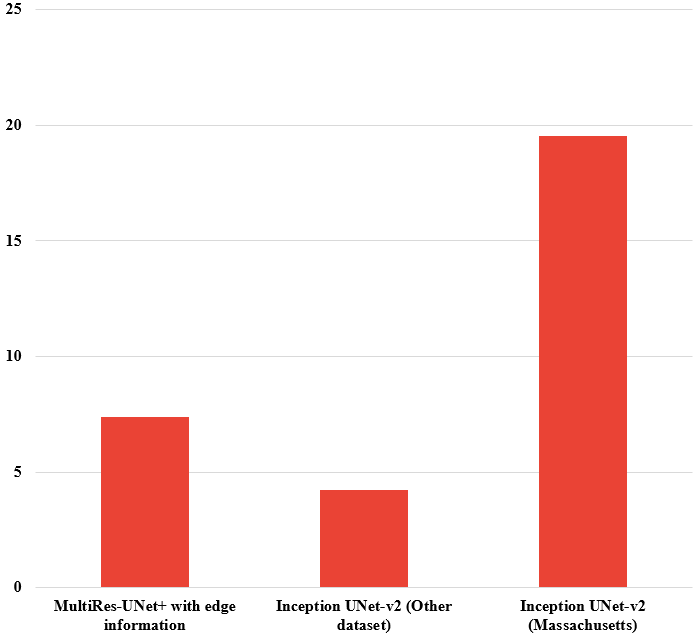

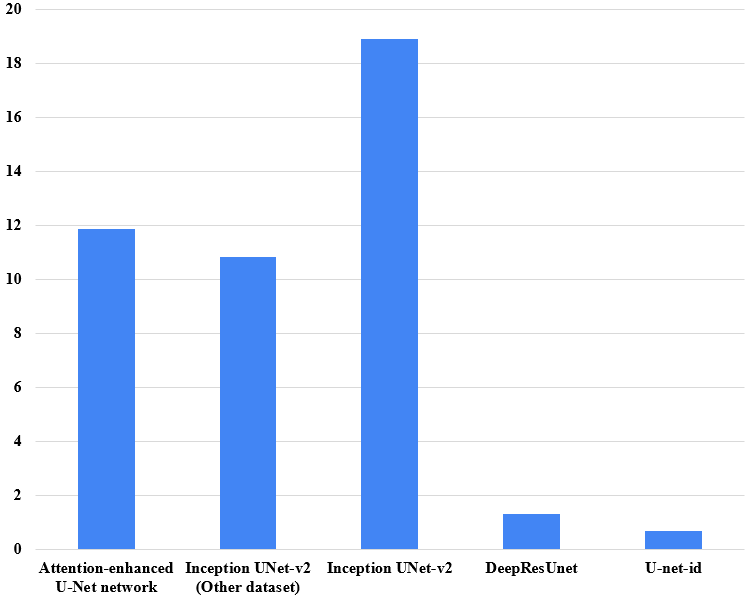

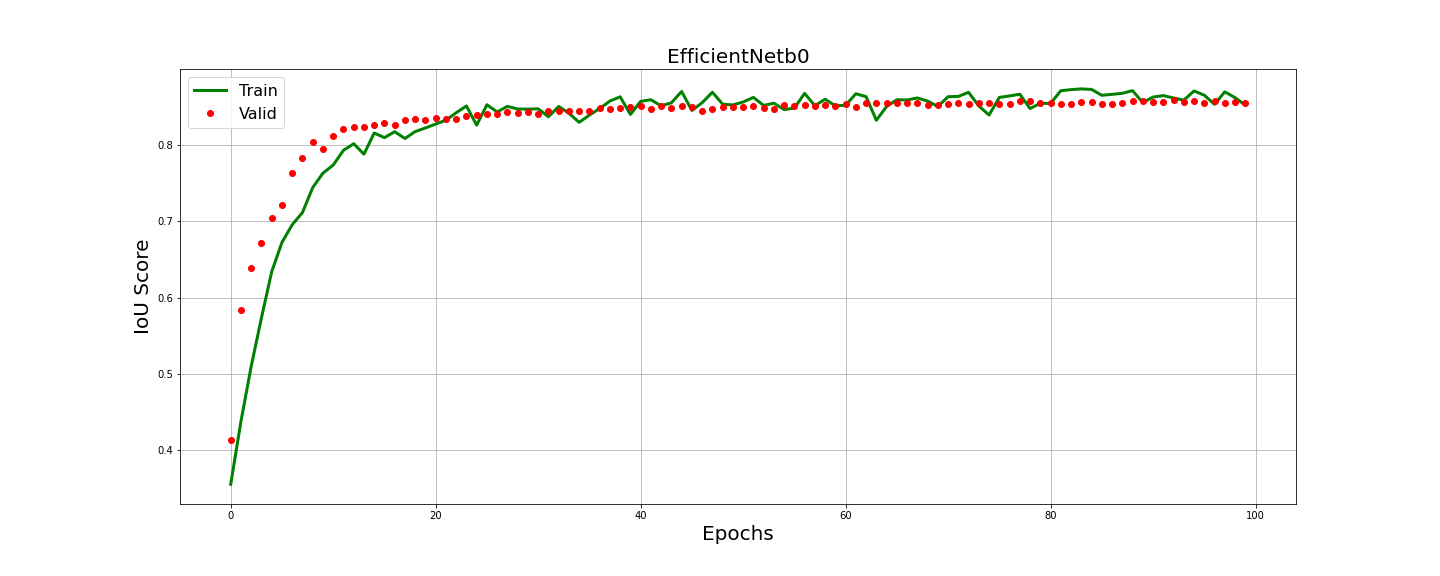

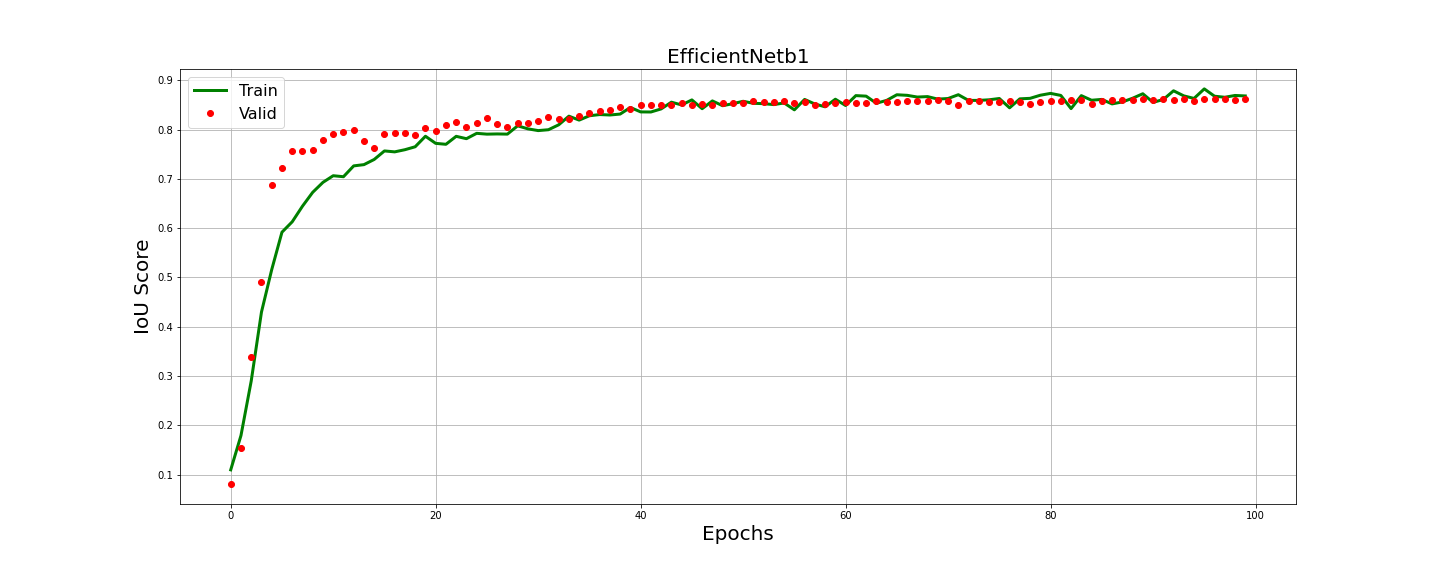

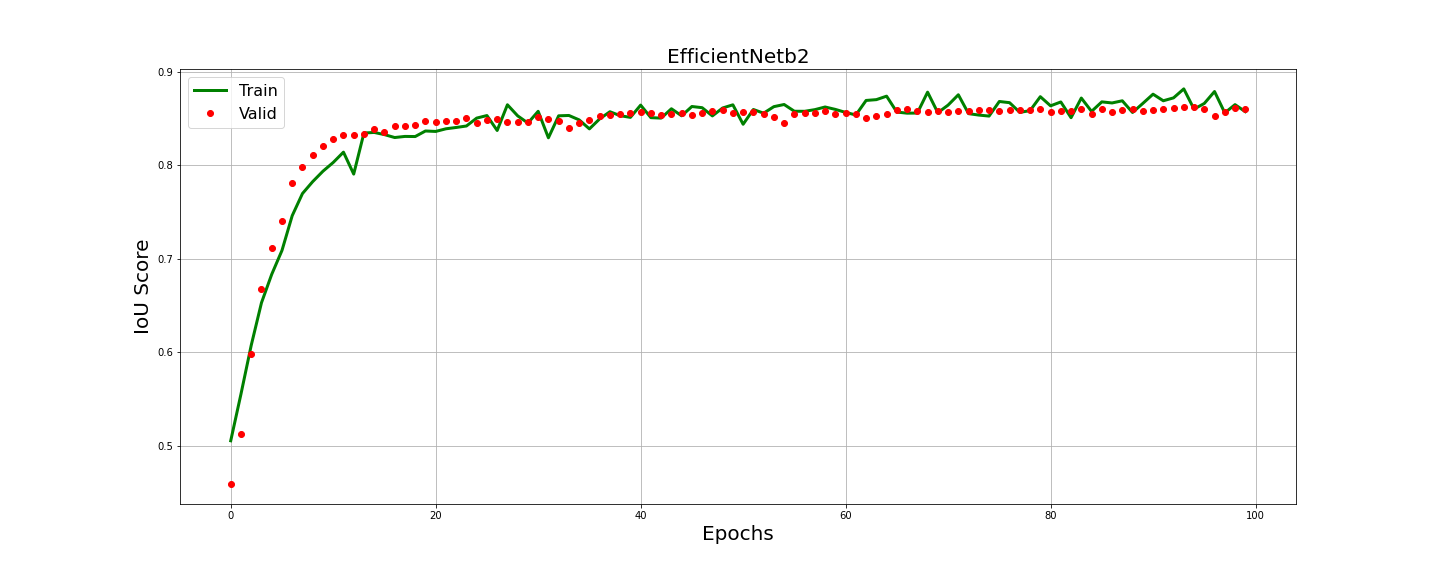

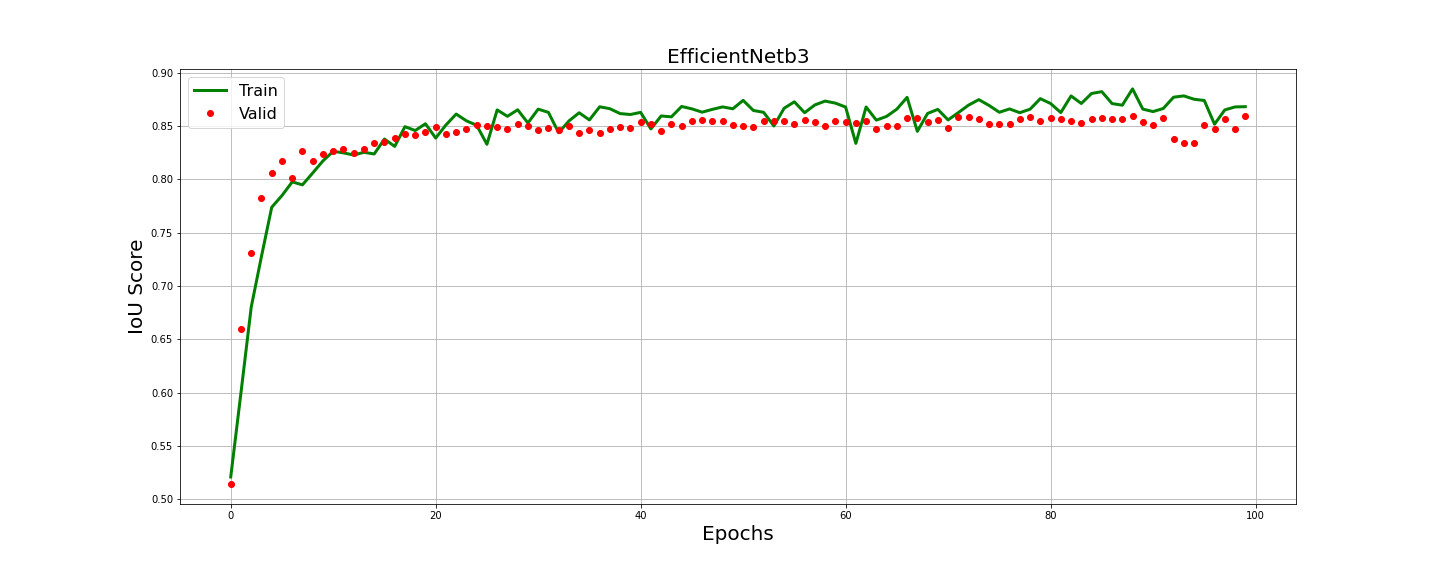

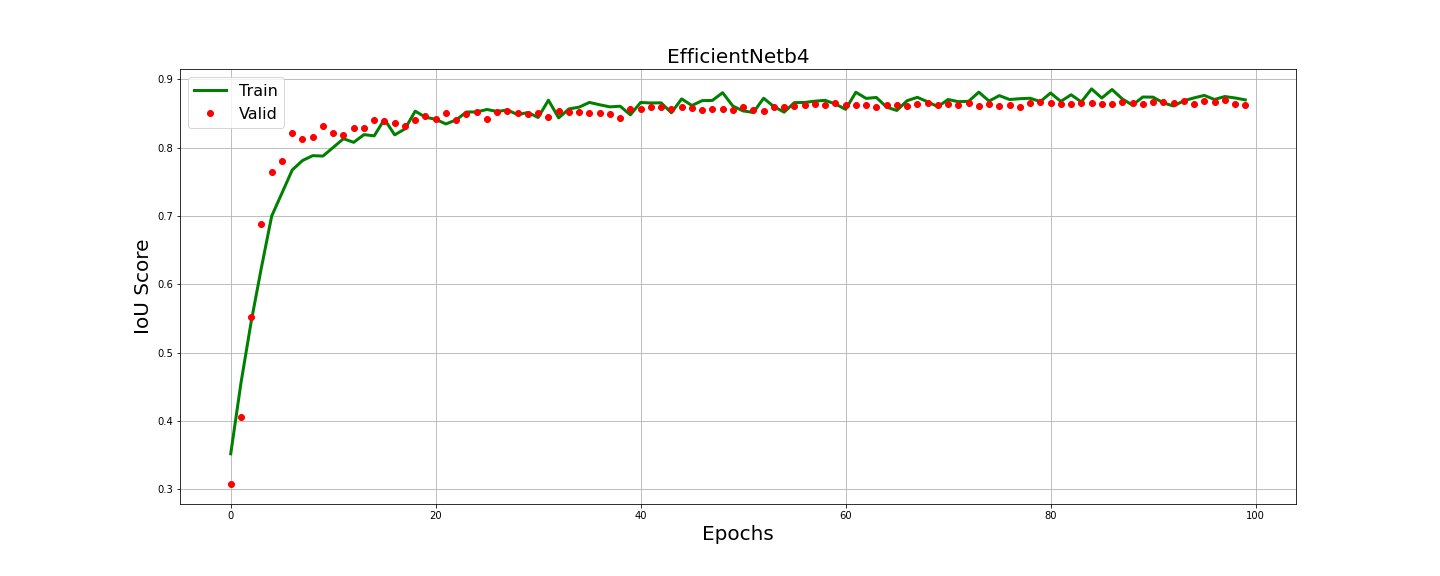

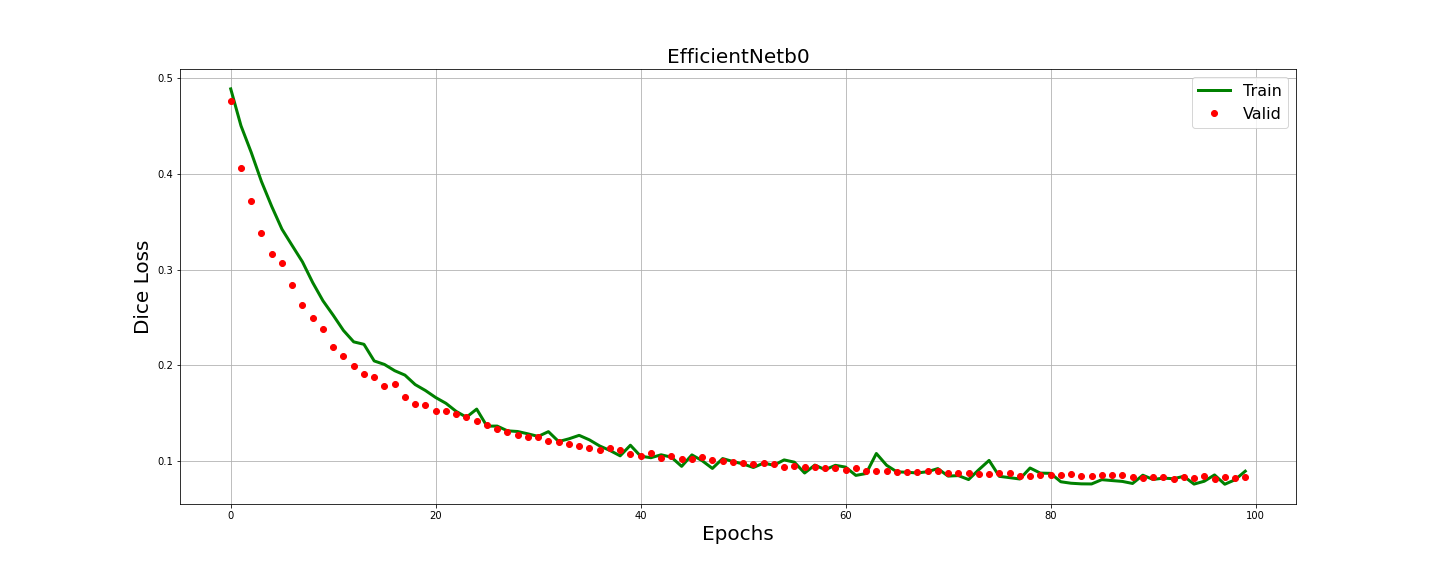

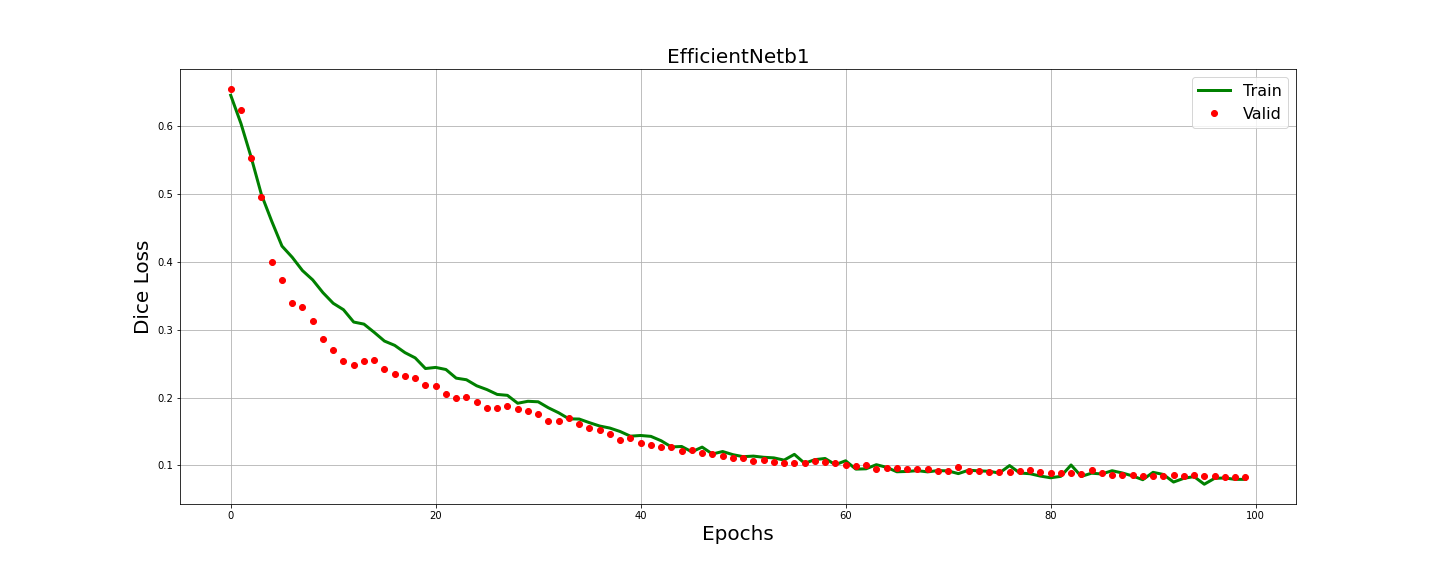

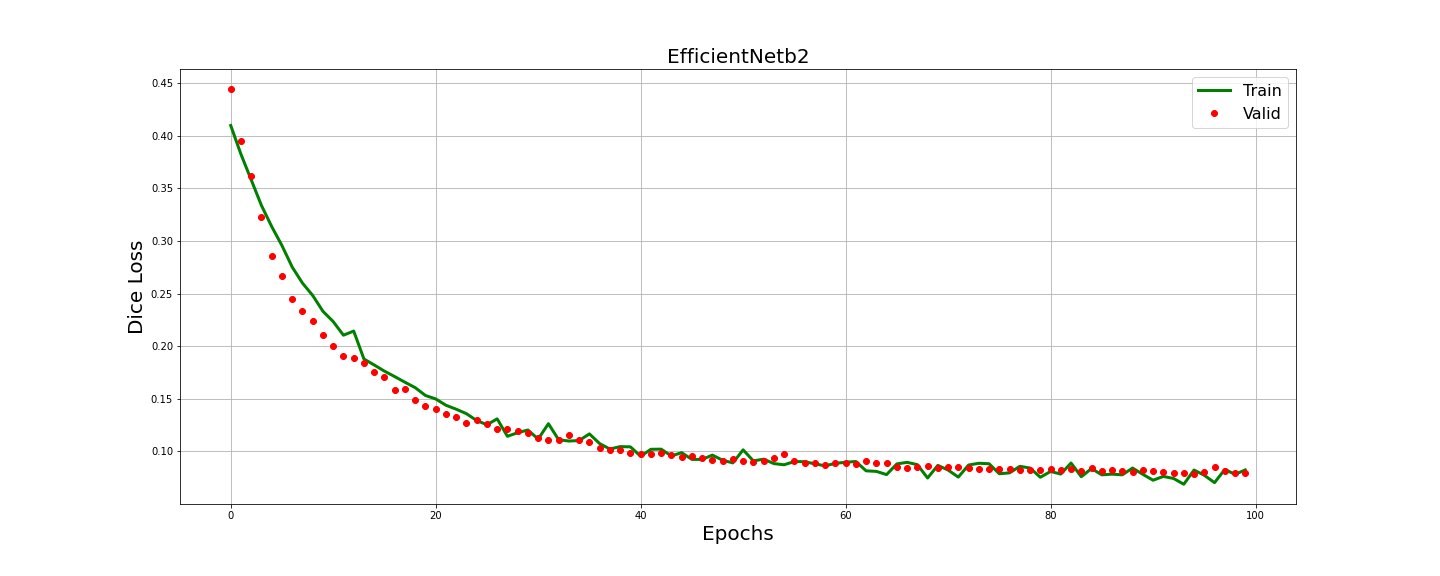

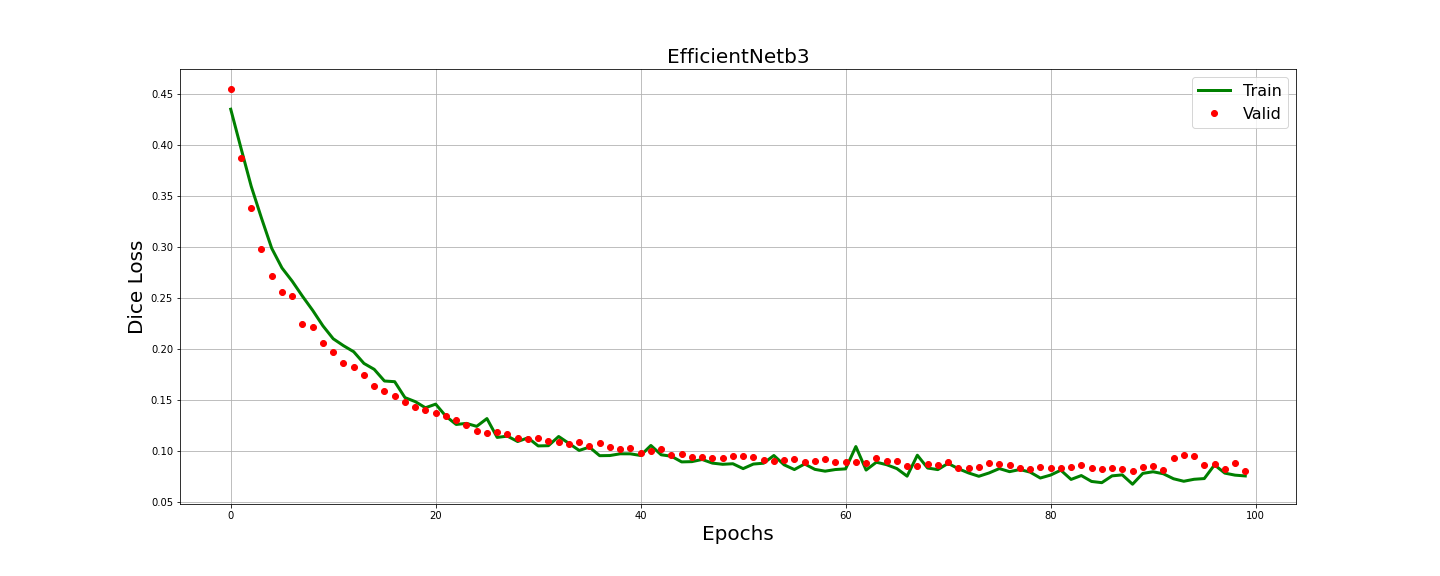

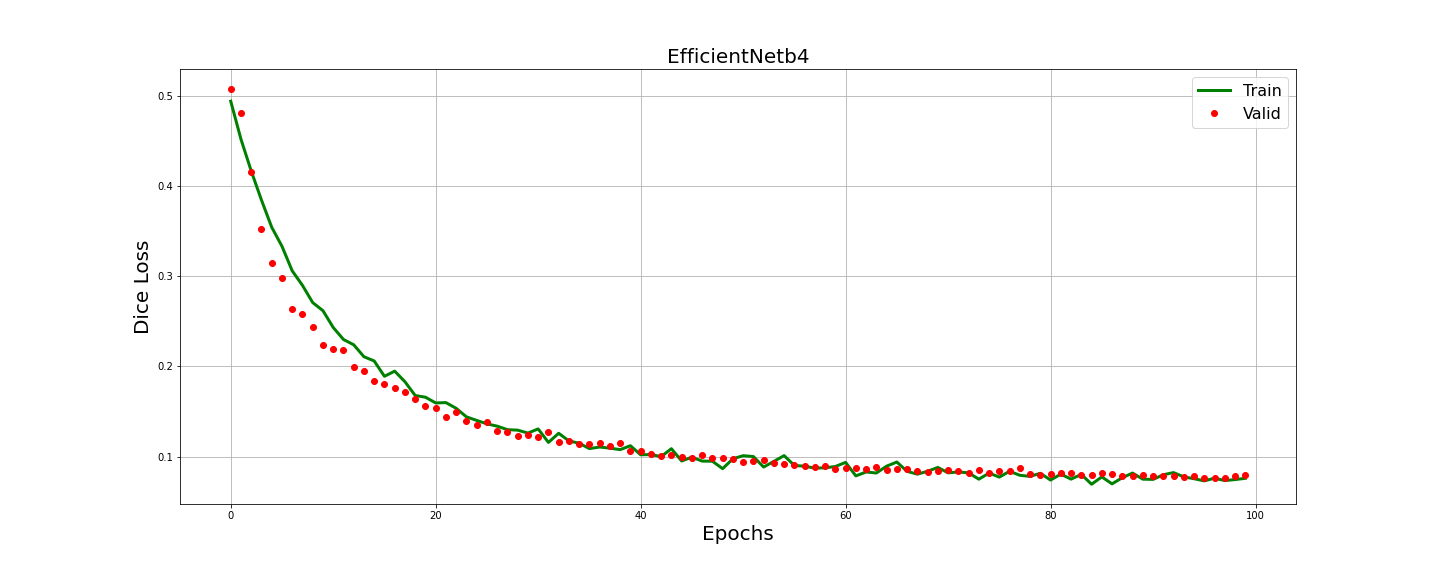

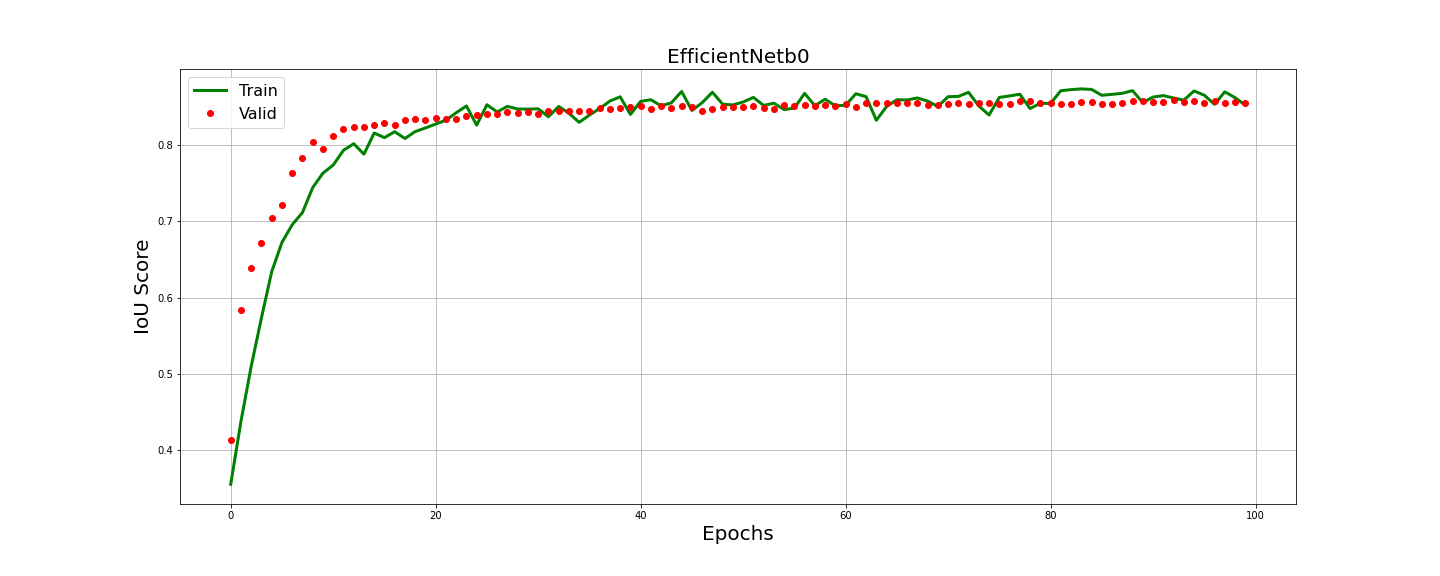

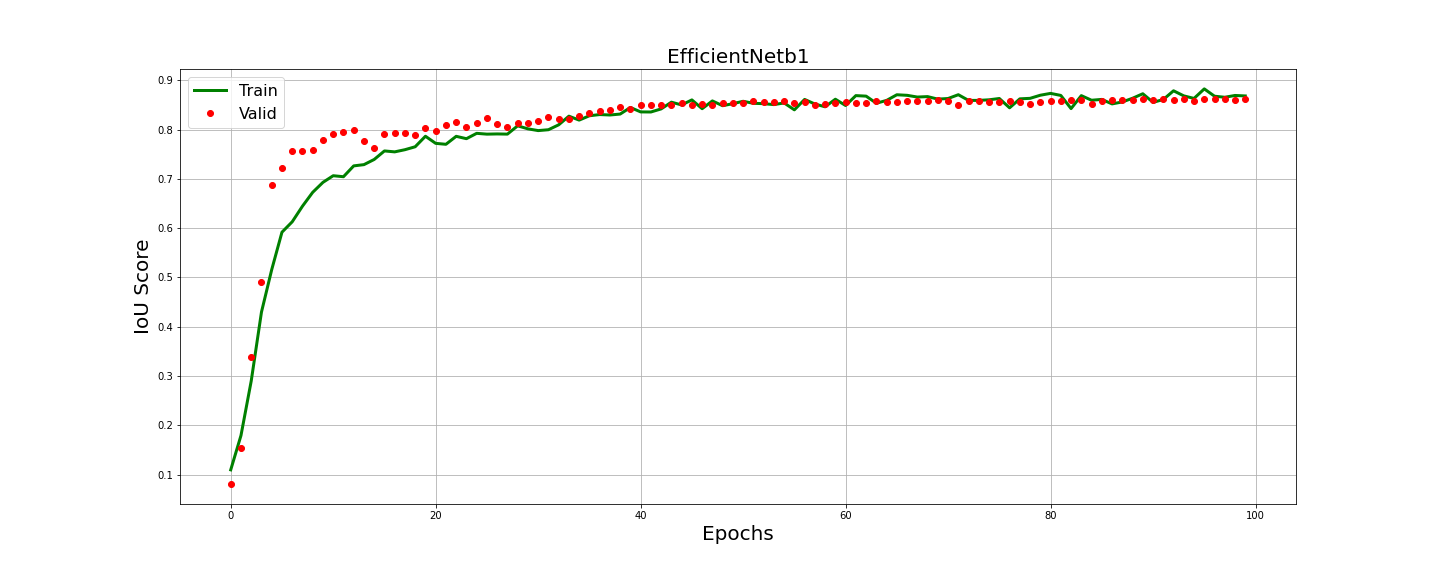

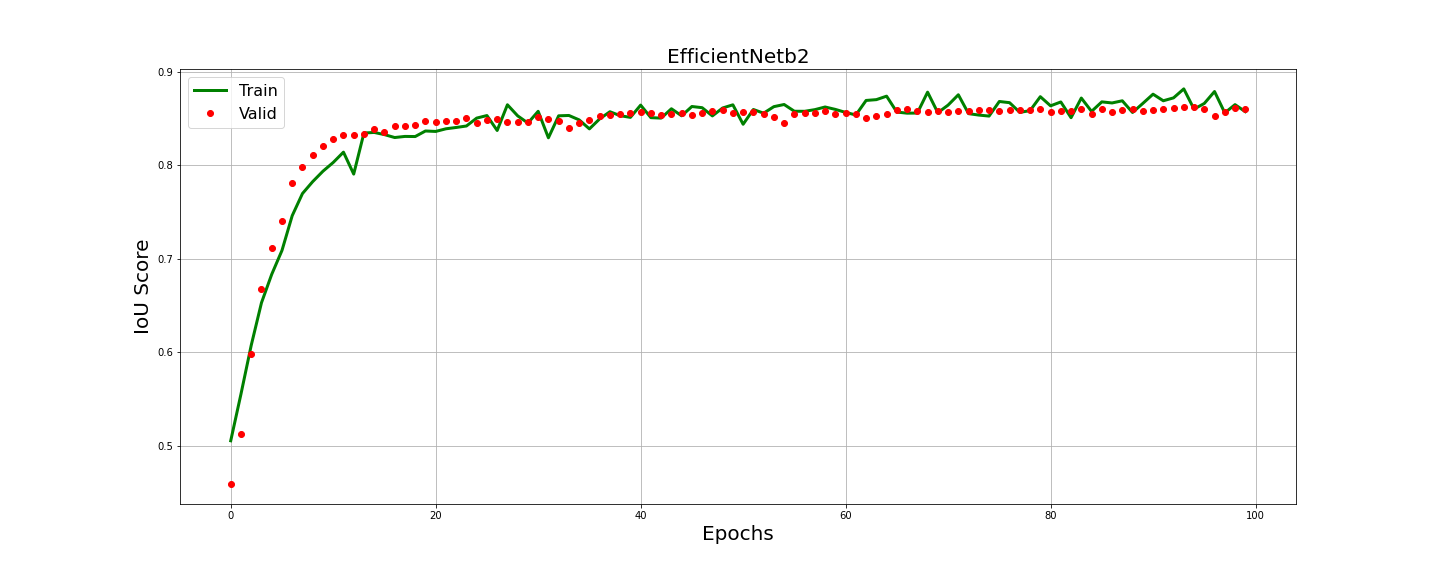

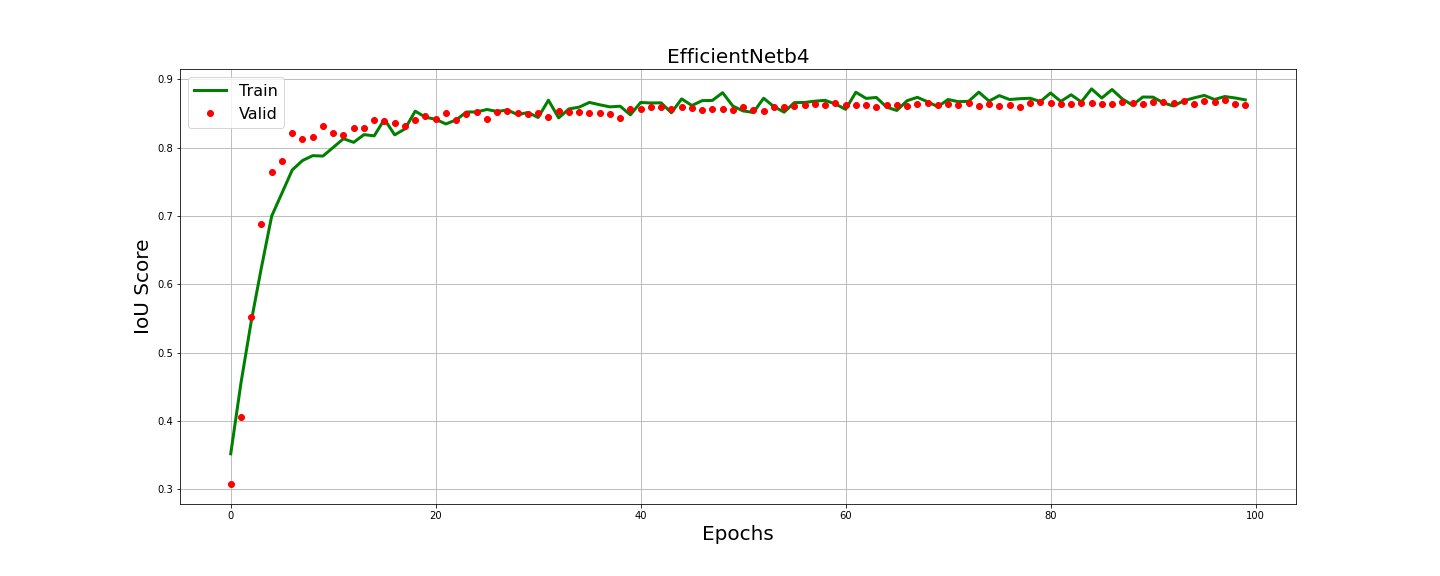

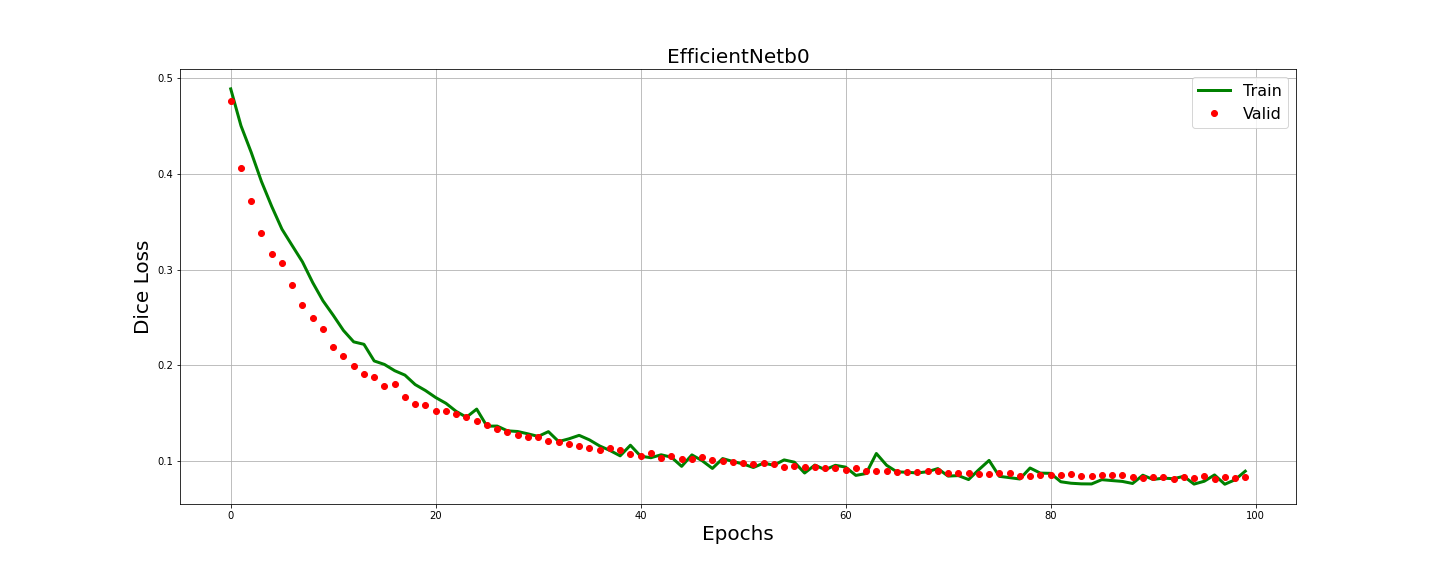

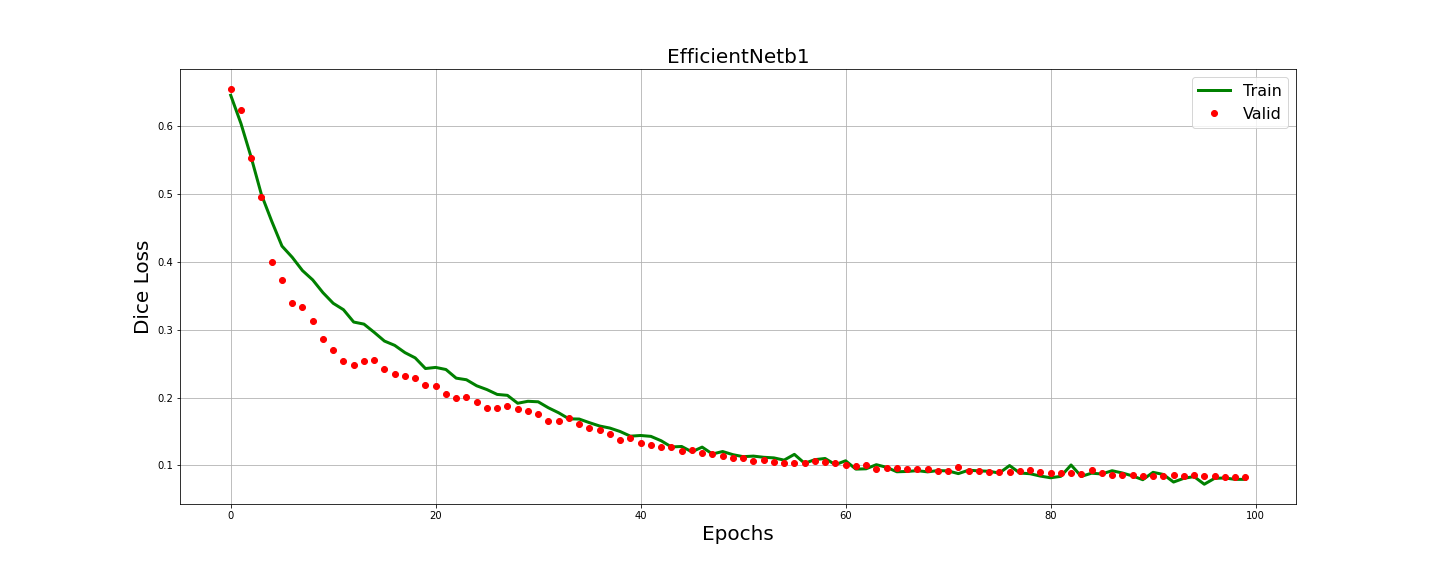

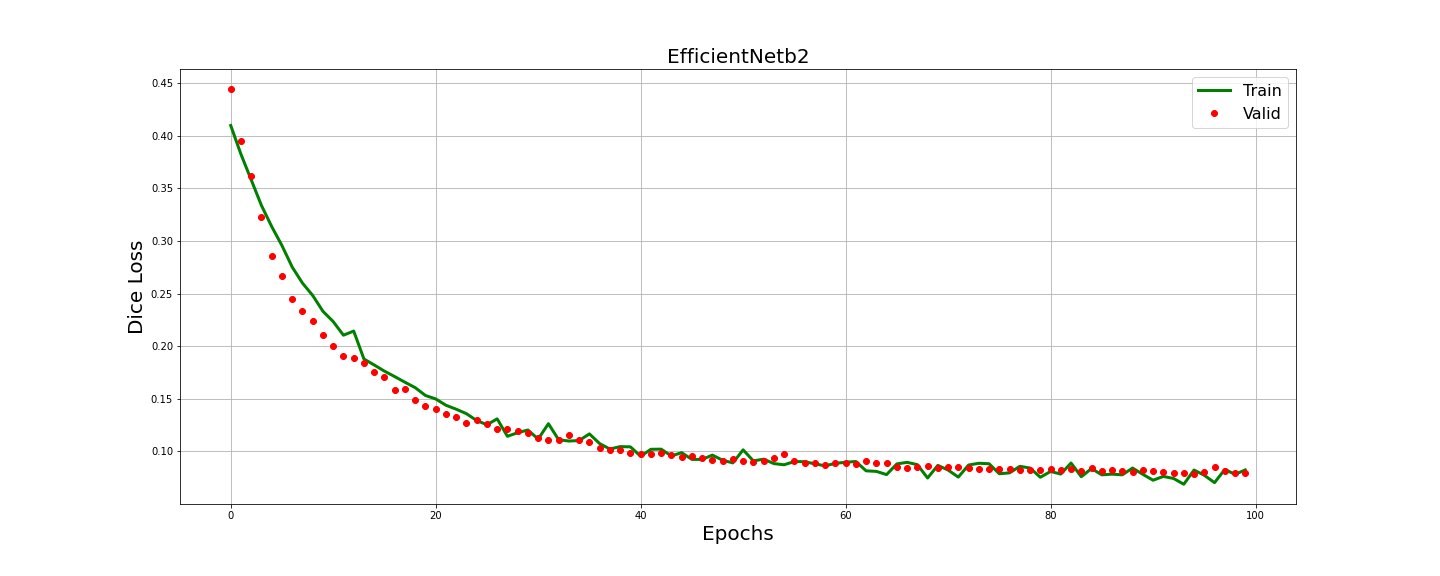

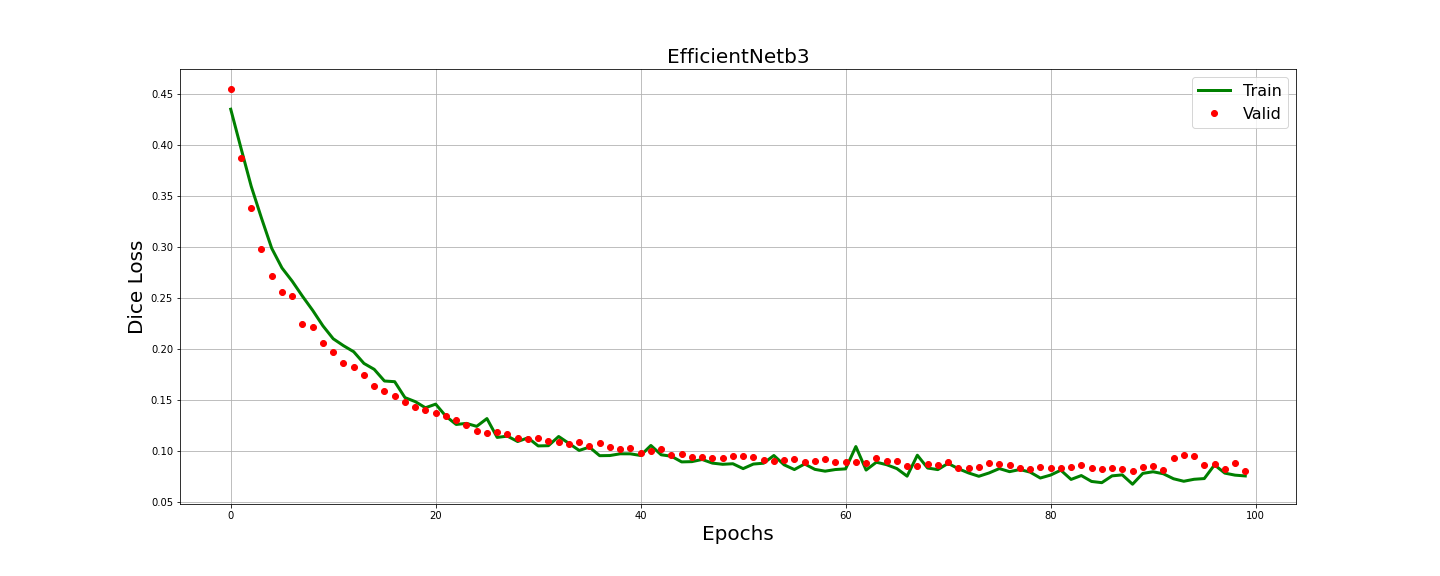

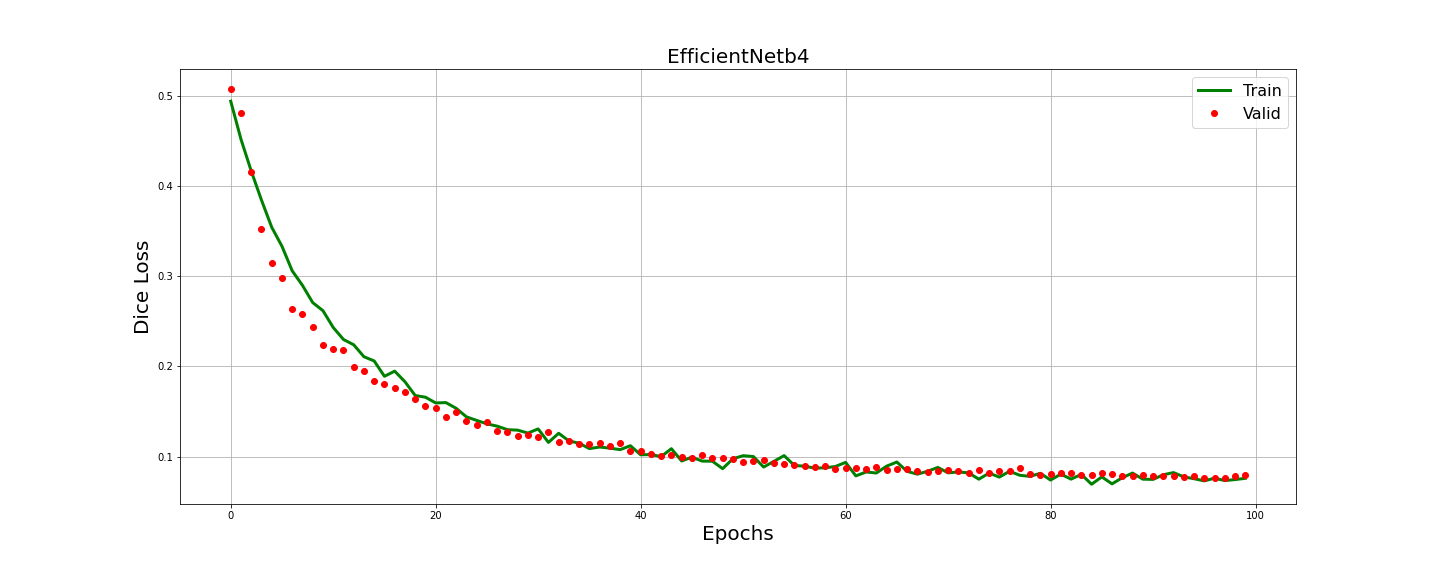

Performance was evaluated using accuracy, precision, recall, and IoU. No overfitting was observed during training, and the EfficientNet-B4-based U-Net++ achieved the best results:

- Mean Accuracy: 92.23%

- Mean IoU: 88.32%

- Mean Precision: 93.2%
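For reference, the four metrics can be computed from a binary predicted mask and its ground truth as in the sketch below. It uses the standard pixel-wise definitions; the source does not state how predictions were thresholded or how the means were averaged (per image or over all pixels), so those details, along with the function name `binary_metrics`, are assumptions.

```python
import numpy as np


def binary_metrics(pred: np.ndarray, target: np.ndarray) -> dict[str, float]:
    """Pixel-wise accuracy, precision, recall, and IoU for binary masks (1 = building)."""
    pred = pred.astype(bool)
    target = target.astype(bool)
    tp = np.sum(pred & target)    # building predicted, building present
    fp = np.sum(pred & ~target)   # building predicted, background present
    fn = np.sum(~pred & target)   # background predicted, building present
    tn = np.sum(~pred & ~target)  # background predicted, background present
    eps = 1e-7  # avoids division by zero on masks with no building pixels
    return {
        "accuracy": float((tp + tn) / (tp + tn + fp + fn)),
        "precision": float(tp / (tp + fp + eps)),
        "recall": float(tp / (tp + fn + eps)),
        "iou": float(tp / (tp + fp + fn + eps)),
    }


pred = np.array([[1, 1], [0, 0]])
target = np.array([[1, 0], [0, 0]])
scores = binary_metrics(pred, target)  # accuracy 0.75, precision ~0.5, recall ~1.0, IoU ~0.5
```

Under the per-image assumption, a mean score would be obtained by calling this function on each test image and averaging the results.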

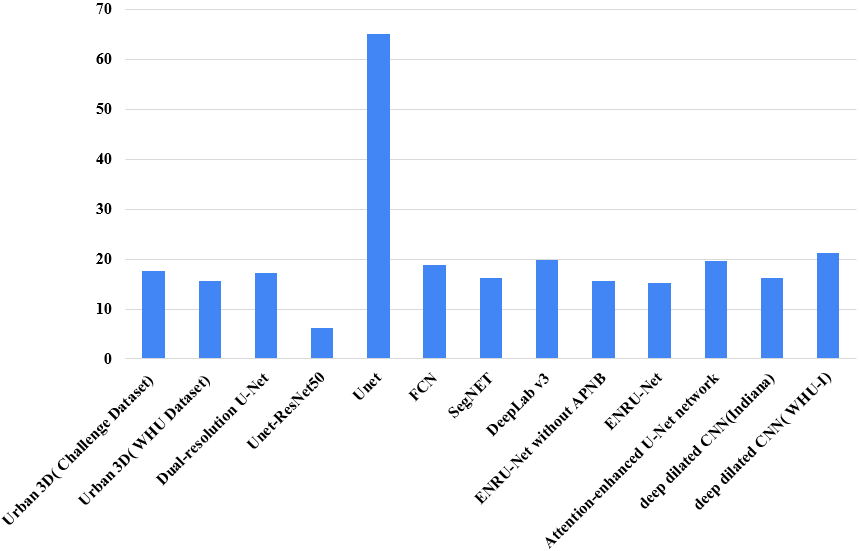

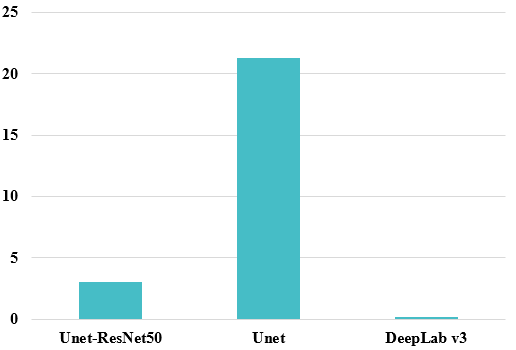

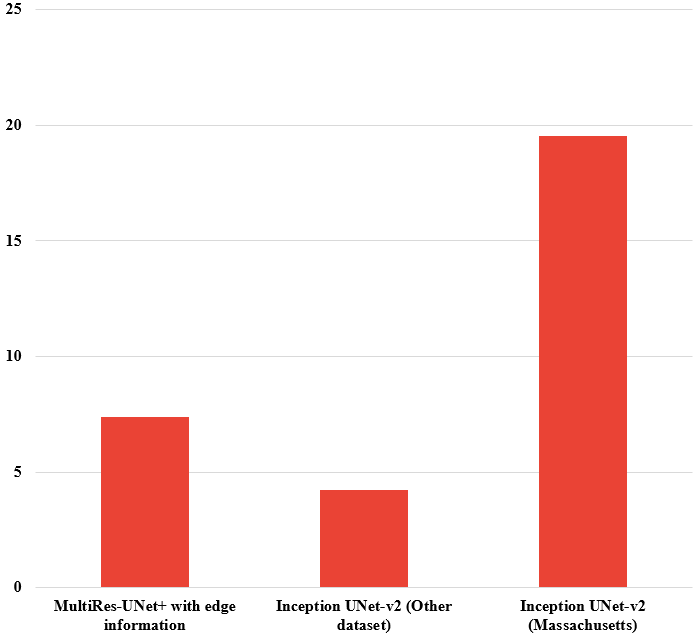

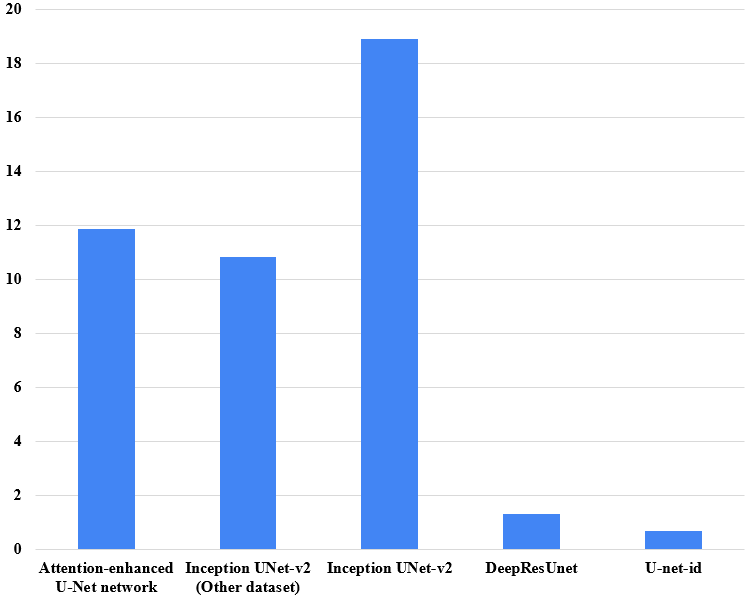

Comparisons with other architectures underscore the proposed method's effectiveness in building extraction tasks.

Figure 5: Improvement of U-Net++ (EfficientNet-B4) over other methods: (a) IoU, (b) accuracy, (c) precision, and (d) recall.

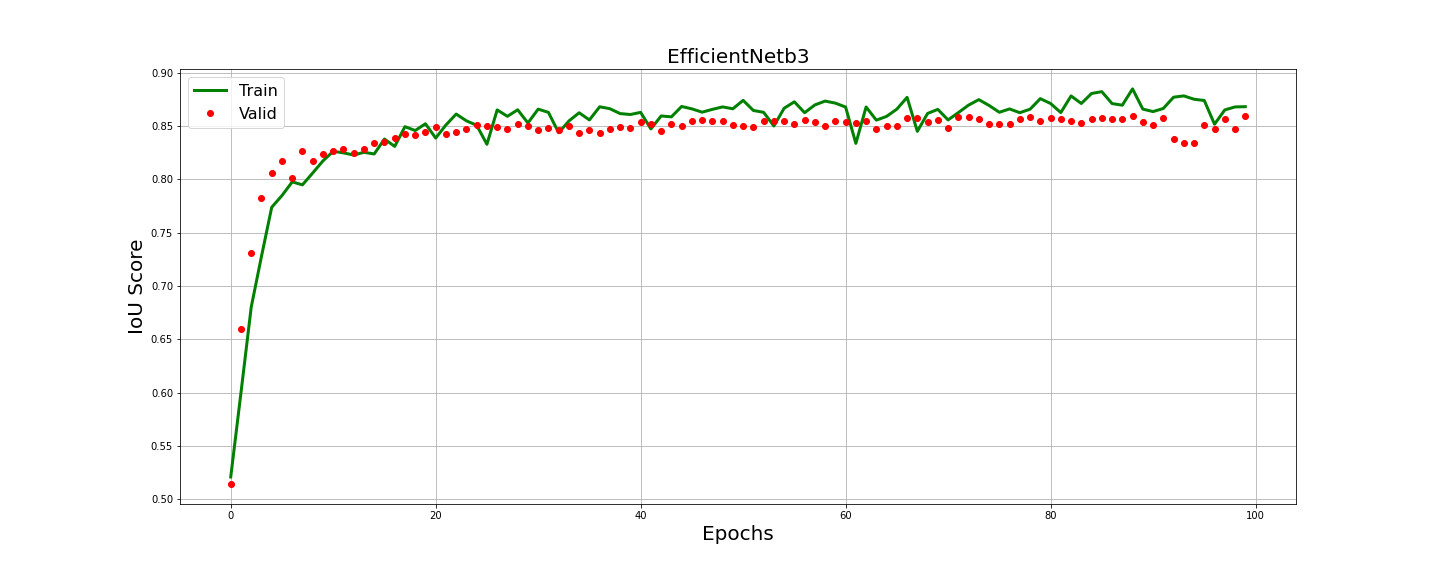

Figure 6: Training history of the IoU score.

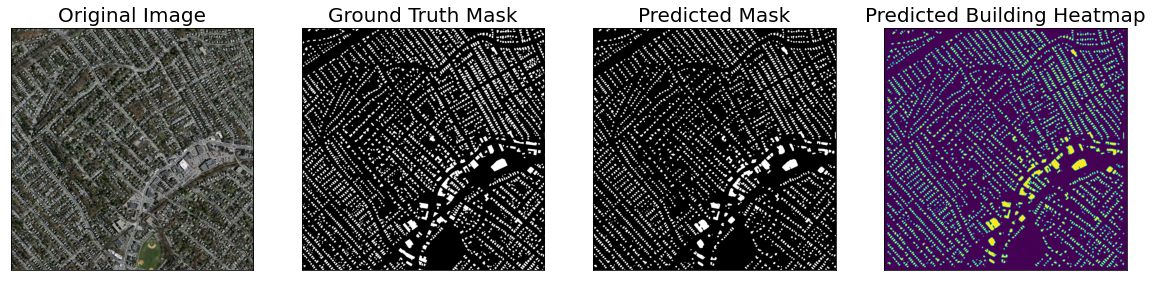

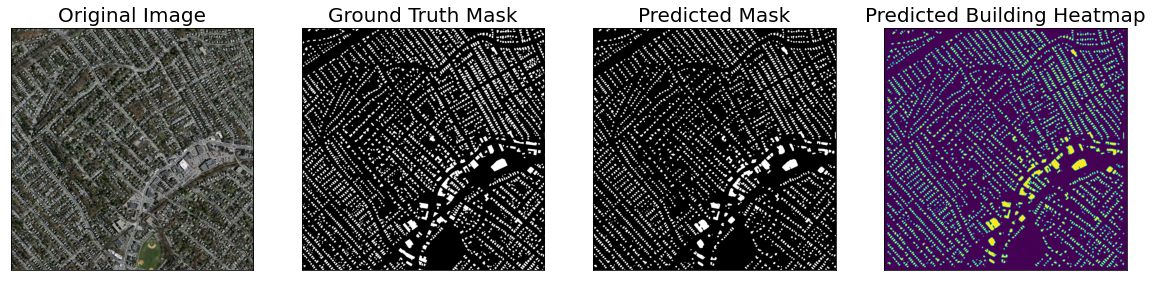

Figure 7: Example of a predicted image vs. the original ground truth for U-Net++ (EfficientNet-B4).

Conclusion

The study demonstrates that integrating EfficientNet into U-Net++ improves automatic building extraction from high-resolution satellite images. Despite the promising results, challenges such as distinguishing shadows from buildings persist. Future work could incorporate larger datasets and attention mechanisms to improve accuracy and reliability further.