- The paper introduces Meta Prompting, a framework that maps task categories to structured prompts to systematically enhance LLM reasoning.

- It details Recursive Meta Prompting (RMP) as a monad-based self-improvement loop that refines prompt generation for superior task performance.

- Experiments on Qwen-72B show that MP yields state-of-the-art results, with 46.3% PASS@1 on MATH and 83.5% on GSM8K, while using fewer tokens than few-shot prompting.

Introduction

"Meta Prompting for AI Systems" presents an advanced framework for enhancing the reasoning capabilities of LLMs through a structure-focused approach termed Meta Prompting (MP). This paper sets out a novel paradigm by shifting the focus from content-specific prompt examples to a generalized structural format using principles from category theory. The authors extend MP into Recursive Meta Prompting (RMP), enabling LLMs to autonomously generate and refine their prompts.

Meta Prompting is defined as a functorial mapping of task categories to structured prompts. By employing category theory, the authors describe MP as a formal system where tasks are seen as objects, and the process of solving them involves morphisms between these objects. This structuring equips LLMs with modular and reusable problem-solving templates, ensuring that complex reasoning flows can be systematically decomposed and reconstituted without reliance on task-specific data.
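To make the functorial reading concrete, here is a minimal Python sketch, not the authors' implementation. The `Task` and `Prompt` types, the `TEMPLATES` dictionary, and the two example task transformations are all hypothetical names introduced for illustration. The sketch maps each task to a structured prompt (the object map), lifts task transformations to prompt transformations (the morphism map), and checks that composition and identity are preserved.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Task:
    category: str   # e.g. "algebra"
    statement: str  # the concrete problem text


@dataclass(frozen=True)
class Prompt:
    task: Task
    text: str


# Hypothetical structure-only templates, one per task category (an assumption).
TEMPLATES = {
    "algebra": (
        "Problem: {statement}\n"
        "Step 1: Define the variables.\n"
        "Step 2: Set up the equations.\n"
        "Step 3: Solve and state the final answer."
    ),
}


def to_prompt(task: Task) -> Prompt:
    """Object map: send a task to its structured prompt."""
    return Prompt(task, TEMPLATES[task.category].format(statement=task.statement))


def lift(f: Callable[[Task], Task]) -> Callable[[Prompt], Prompt]:
    """Morphism map: turn a task transformation into a prompt transformation."""
    return lambda prompt: to_prompt(f(prompt.task))


def compose(g, f):
    """Ordinary function composition: apply f first, then g."""
    return lambda x: g(f(x))


# Two illustrative task-level transformations (hypothetical).
def tidy(task: Task) -> Task:
    return Task(task.category, task.statement.strip())


def add_hint(task: Task) -> Task:
    return Task(task.category, task.statement + " Give an exact answer.")


prompt = to_prompt(Task("algebra", "  Solve 2x + 3 = 11. "))

# Functor laws on this example: composition and identity are preserved.
composition_ok = lift(compose(add_hint, tidy))(prompt) == lift(add_hint)(lift(tidy)(prompt))
identity_ok = lift(lambda t: t)(prompt) == prompt
```

Because the prompt is always rebuilt from the task through the same template, changing the task and then prompting gives the same result as prompting and then applying the lifted change, which is the sense in which the templates are modular and reusable.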

A significant contribution of the paper is RMP, modeled as a monad. This framework allows LLMs to engage in self-improvement loops using prompt generation and refinement principles akin to metaprogramming. Through RMP, LLMs can independently refine their problem-solving strategies, thereby enhancing both model autonomy and efficiency in prompt engineering.
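The self-improvement loop can be sketched roughly as follows. This is an illustrative assumption about the control flow, not the paper's procedure: `recursive_meta_prompt`, `propose`, and `stub_propose` are hypothetical names, and `stub_propose` is a deterministic stand-in for what would be an LLM call in practice.

```python
from typing import Callable


def recursive_meta_prompt(
    task_description: str,
    propose: Callable[[str, str], str],
    max_rounds: int = 3,
) -> str:
    """Refine a prompt by repeatedly asking `propose` for a better version.

    `propose(task_description, current_prompt)` stands in for an LLM call.
    The loop stops early once the prompt no longer changes.
    """
    prompt = f"Solve the following task: {task_description}"
    for _ in range(max_rounds):
        improved = propose(task_description, prompt)
        if improved == prompt:
            break  # fixed point reached: no further refinement proposed
        prompt = improved
    return prompt


def stub_propose(task_description: str, current_prompt: str) -> str:
    """Deterministic stand-in for a model: adds one missing instruction per round."""
    steps = [
        "State the known quantities.",
        "Show each step, then give the final answer.",
    ]
    for step in steps:
        if step not in current_prompt:
            return current_prompt + "\n" + step
    return current_prompt


final_prompt = recursive_meta_prompt("word problems", stub_propose, max_rounds=5)
```

With the stub, the loop adds one structural instruction per round and stops at the third round, when nothing changes. A real `propose` would call a model, so a round limit such as `max_rounds` is a sensible safeguard.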

Implementation and Results

Practical Implementation

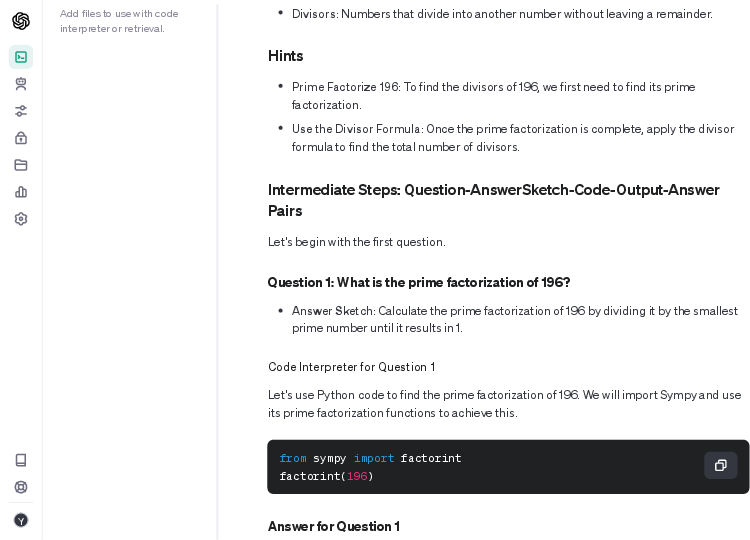

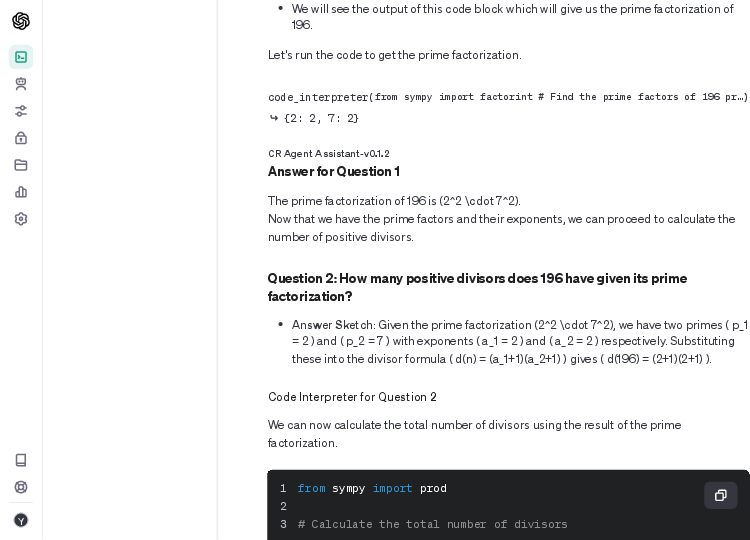

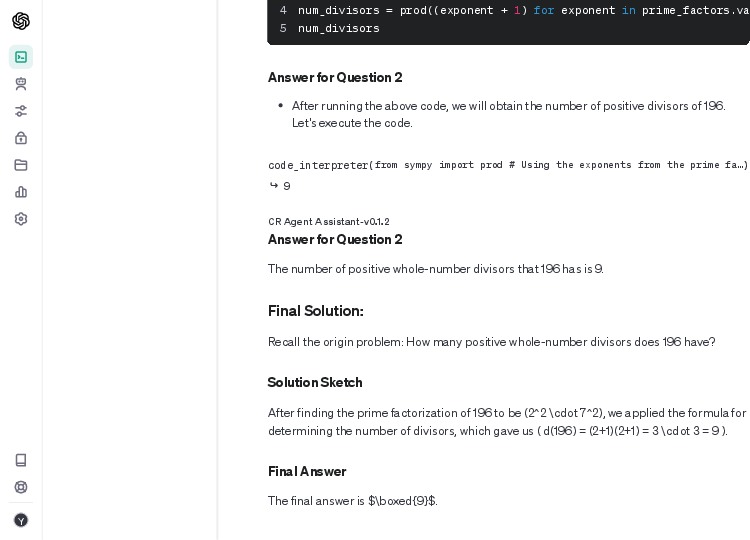

The authors demonstrate the implementation using a Qwen-72B base model, which, when equipped with a single, example-agnostic meta-prompt, achieves superior performance on mathematical benchmarks such as MATH and GSM8K. This structure-centric method facilitates significant gains in token efficiency compared to traditional few-shot prompting methodologies.
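As a rough illustration of what "example-agnostic" means, the sketch below contrasts a structure-only prompt with a few-shot prompt. The template wording, the worked examples, and the whitespace-based `rough_token_count` are all assumptions made for this sketch. They are not the paper's meta-prompt, and splitting on whitespace is only a crude proxy for a real tokenizer.

```python
from typing import List, Tuple

# Hypothetical structure-only meta-prompt: it describes the shape of a
# solution but contains no solved examples.
META_PROMPT = (
    "Problem: {problem}\n"
    "Solution:\n"
    "1. Restate the problem and define the variables.\n"
    "2. Work through the reasoning step by step.\n"
    "3. State the final answer on its own line as 'Answer: <value>'.\n"
)


def build_meta_prompt(problem: str) -> str:
    """Zero-shot prompt: fixed structure plus the problem statement."""
    return META_PROMPT.format(problem=problem)


def build_few_shot_prompt(problem: str, examples: List[Tuple[str, str]]) -> str:
    """Few-shot prompt: every worked example is prepended to the problem."""
    shots = "".join(f"Problem: {q}\nSolution: {a}\n\n" for q, a in examples)
    return shots + f"Problem: {problem}\nSolution:"


def rough_token_count(text: str) -> int:
    """Crude proxy for token count: number of whitespace-separated pieces."""
    return len(text.split())


# Illustrative worked examples (made up for this sketch).
EXAMPLES = [
    ("What is 12 + 30?", "Add the two numbers: 12 + 30 = 42. Answer: 42"),
    ("Solve x - 5 = 2.", "Add 5 to both sides: x = 7. Answer: 7"),
]

problem = "Solve 2x + 3 = 11."
meta = build_meta_prompt(problem)
one_shot = build_few_shot_prompt(problem, EXAMPLES[:1])
two_shot = build_few_shot_prompt(problem, EXAMPLES)
```

The few-shot prompt grows with every added example, while the meta-prompt's overhead stays fixed regardless of the problem. How the two compare in absolute size depends on how long the worked examples are.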

The experiments report that zero-shot MP, without fine-tuning, reaches a PASS@1 accuracy of 46.3% on MATH, a result the paper presents as surpassing state-of-the-art models such as GPT-4, and 83.5% accuracy on GSM8K. This suggests that LLMs guided by systematic structural prompts have strong problem-solving and in-context learning capabilities.
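For readers unfamiliar with the metric, PASS@1 is the fraction of problems solved correctly by a single attempt per problem. The sketch below assumes exact string matching after stripping whitespace. That is a simplification, since real evaluations of MATH usually need more careful answer normalization, and the function name and toy data are hypothetical.

```python
from typing import Callable, Sequence


def pass_at_1(
    predictions: Sequence[str],
    references: Sequence[str],
    normalize: Callable[[str], str] = str.strip,
) -> float:
    """Fraction of problems whose single predicted answer matches the reference."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("at least one problem is required")
    correct = sum(
        normalize(pred) == normalize(ref)
        for pred, ref in zip(predictions, references)
    )
    return correct / len(references)


# Toy data: three of the four answers match after whitespace is stripped.
preds = ["42", " 7", "x=3", "10"]
refs = ["42", "7", "x=2", "10"]
score = pass_at_1(preds, refs)  # 0.75
```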

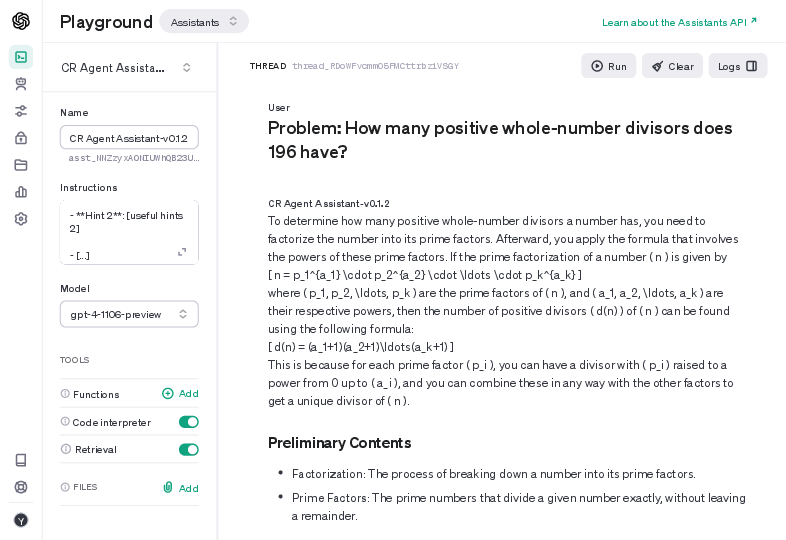

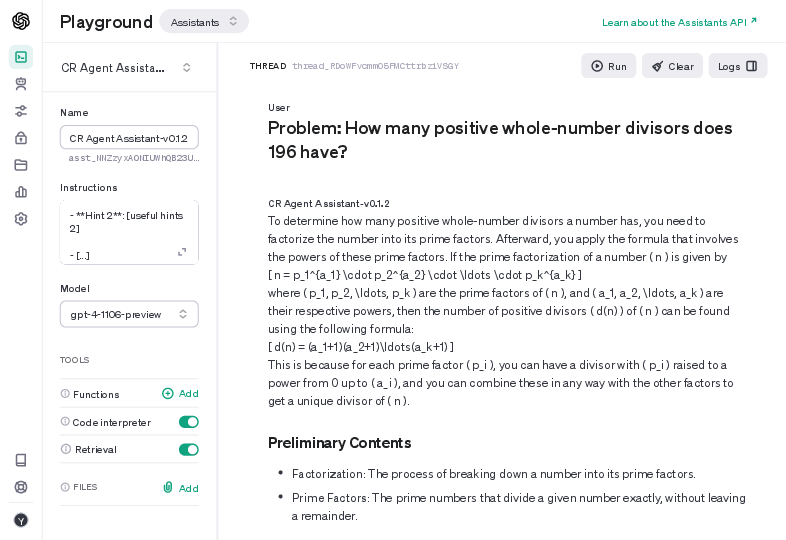

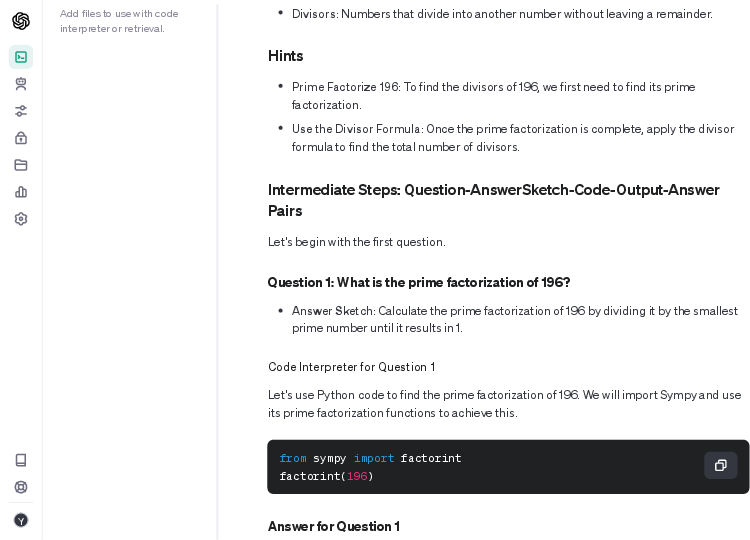

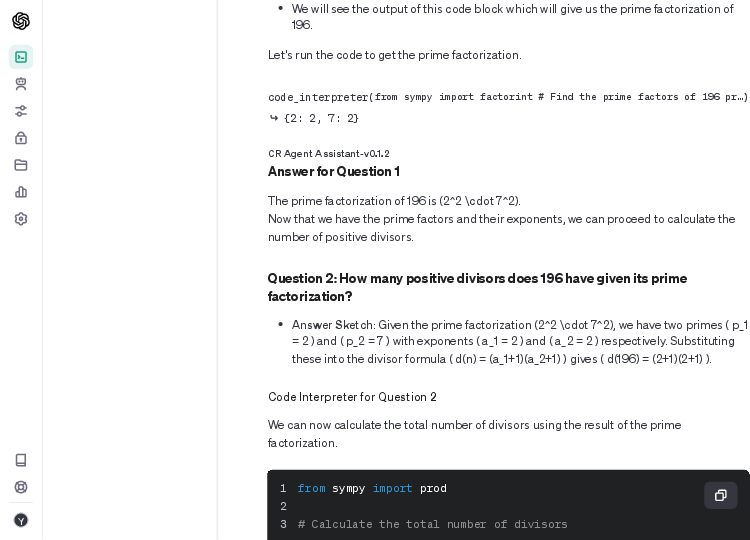

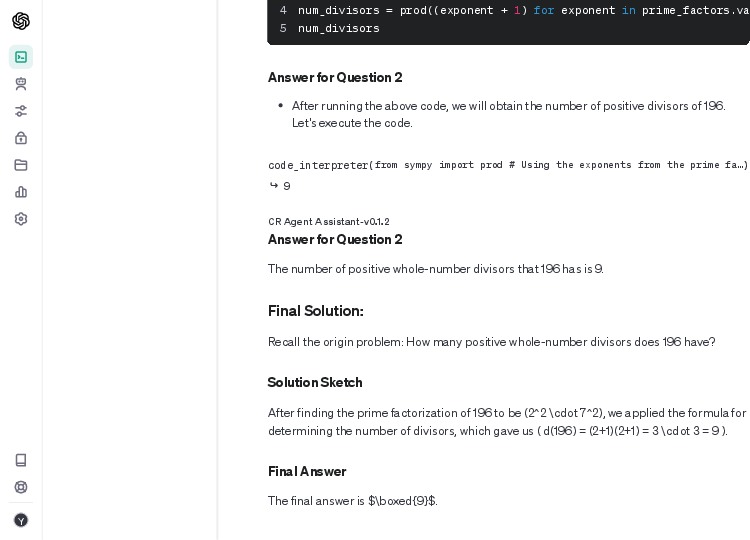

Figure 1: Experiments using the MP-CR Agent within the OpenAI Assistant to solve a MATH problem (Hendrycks et al., 2021).

Theoretical Implications

By utilizing category theory, the paper provides a formal language for reasoning about prompts, emphasizing the functorial nature of MP for task-to-prompt transformations. This not only underpins the compositionality and adaptability of prompts but also assures the systematic alignment of problem-solving strategies with high-level task structures.

Monad Structure for Self-Improvement

Modeling RMP as a monad introduces a framework for recursive prompt refinement that is both associative and stable, ensuring consistency across iterative prompt enhancements. This elevates LLMs' ability to self-optimize without manual intervention, laying the groundwork for more autonomous AI systems.
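For reference, the standard laws of a monad $(T, \eta, \mu)$ on a category are given below. The summary does not spell out the paper's own notation, so these symbols come from the textbook definition. Reading $T$ as the step from prompts to prompt-generating prompts, $\eta$ as wrapping a prompt, and $\mu$ as flattening one level of nesting is an interpretive assumption.

```latex
\begin{aligned}
\mu \circ T\mu &= \mu \circ \mu T
  && \text{(associativity)} \\
\mu \circ T\eta &= \mu \circ \eta T = \mathrm{id}_T
  && \text{(left and right unit)}
\end{aligned}
```

Under that reading, associativity says that collapsing several nested rounds of refinement gives the same prompt regardless of the order in which the levels are flattened. The unit laws say that wrapping a prompt and immediately flattening it changes nothing.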

Conclusion

"Meta Prompting for AI Systems" offers a refined approach to LLM utilization through structural and recursive prompting strategies. By prioritizing form over content, the framework enhances model reasoning, efficiency, and fairness in evaluation settings. These advancements hint at new directions in building adaptable, self-modifying AI capable of complex reasoning—a step closer to achieving greater autonomy in artificial intelligence applications. The theoretical and empirical insights provide a strong foundation for future exploration into more efficient and self-sufficient AI systems.