- The paper presents an analytical framework that decomposes the Bayesian cost into a coding term and a decoding term, and shows that optimizing the coding term amounts to maximizing the mutual information between categories and neural activity.

- It demonstrates how category learning expands neural space near decision boundaries, enhancing discrimination as evidenced by changes in neural Fisher information.

- Extensive numerical experiments on Gaussian datasets and MNIST validate the theoretical insights, offering implications for both biological systems and AI.

The paper "Information theoretic study of the neural geometry induced by category learning" (2311.15682) investigates how category learning shapes the neural representation of information in biological and artificial networks. It uses an information theoretic framework to analyze and optimize neural representations during categorization tasks.

Decomposition of Bayesian Cost

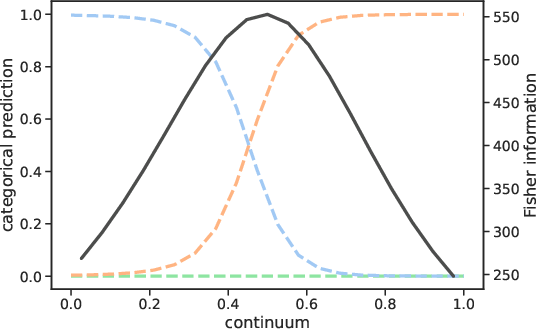

The authors begin by introducing an analytical framework for evaluating the Bayesian cost associated with category learning, decomposing the cost into distinct coding and decoding components. The coding component concerns the representation itself: it is optimized by maximizing the mutual information between the categories and the neural activities. The decoding component concerns the readout: it is optimized by minimizing the divergence between the actual and the estimated category probabilities given the neural activity. Maximizing mutual information is what shapes the representation space, and it does so by expanding the neural space around decision boundaries.
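The decomposition can be checked numerically on a toy problem. The sketch below is not the paper's code: it assumes a discrete neural state `r`, a cross-entropy (log-loss) form of the Bayesian cost, and made-up probability tables `joint` and `g`. Under those assumptions the mean cost equals H(μ) − I(μ; r) plus the average Kullback-Leibler divergence between the true posterior P(μ|r) and the decoder's estimate g(μ|r); the paper's own notation and grouping of terms may differ.

```python
import numpy as np

def decompose_cost(joint, g):
    """Split the mean log-loss into coding and decoding parts.

    joint[m, r] = P(mu=m, r)        (true joint distribution)
    g[m, r]     = g(mu=m | r)       (decoder's estimate, columns sum to 1)
    """
    p_r = joint.sum(axis=0)                     # P(r)
    p_mu = joint.sum(axis=1)                    # P(mu)
    post = joint / p_r                          # P(mu | r)
    cost = -np.sum(joint * np.log(g))           # mean cross-entropy cost
    h_mu = -np.sum(p_mu * np.log(p_mu))         # category entropy H(mu)
    h_mu_given_r = -np.sum(joint * np.log(post))
    mutual_info = h_mu - h_mu_given_r           # I(mu; r)
    decoding = np.sum(joint * np.log(post / g)) # E_r[ KL(P(.|r) || g(.|r)) ]
    return cost, h_mu, mutual_info, decoding

# Hypothetical toy numbers: 2 categories (rows), 3 neural states (columns).
joint = np.array([[0.30, 0.15, 0.05],
                  [0.05, 0.15, 0.30]])
g = np.array([[0.8, 0.5, 0.3],
              [0.2, 0.5, 0.7]])

cost, h_mu, mi, dec = decompose_cost(joint, g)
coding = h_mu - mi  # shrinks as the code carries more category information
print(f"cost={cost:.6f}  coding+decoding={coding + dec:.6f}")
```

Since H(μ) is fixed by the task, the only way to lower the coding part is to raise I(μ; r), while the decoding part vanishes exactly when `g` matches the true posterior.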

Neural Metrics and Geometry

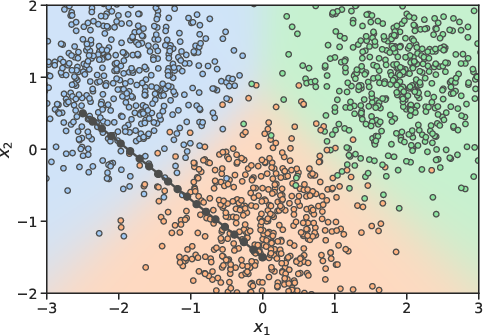

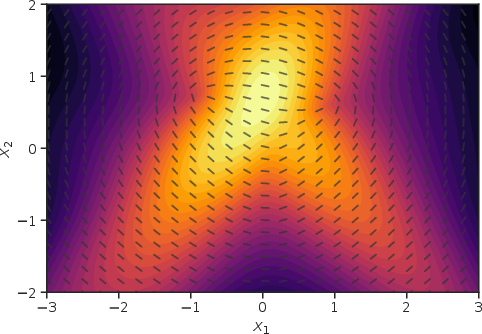

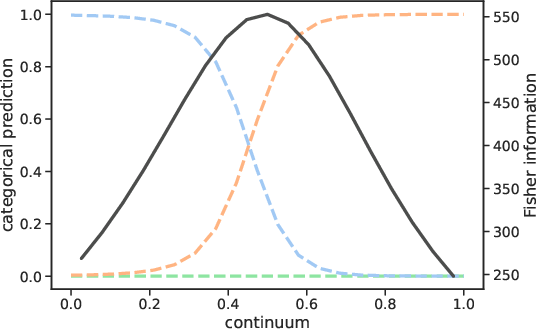

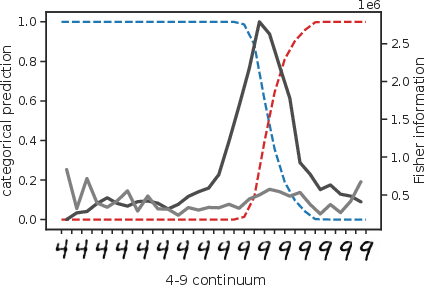

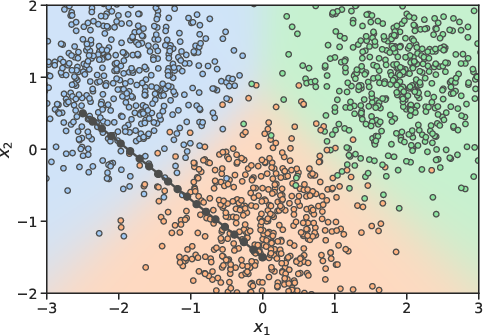

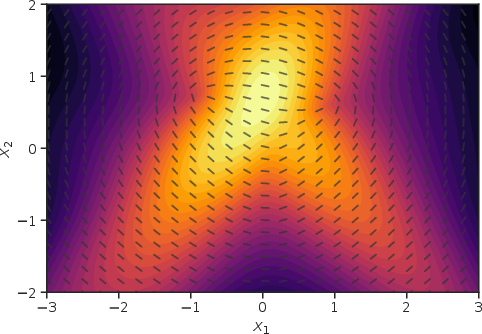

To understand how neural spaces are configured for optimal categorization, the paper examines the geometry of internal neural representations. Category learning induces a specific geometric transformation of the neural space: the representation expands near decision boundaries and contracts elsewhere. The perceptual consequence, known as categorical perception, is that inputs near a boundary are discriminated better than inputs in the interior of a category.
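A one-dimensional toy case illustrates why boundaries are special. The sketch below assumes two Gaussian categories with equal variance and equal priors (values chosen for illustration, not taken from the paper) and computes a Fisher information of the category posterior, F_cat(x) = Σ_μ P'(μ|x)² / P(μ|x), by finite differences. This quantity measures how fast the category probabilities change with the stimulus, so it is a natural candidate for where an efficient code should spend its resolution; the function names are hypothetical.

```python
import numpy as np

def posterior(x, means, std, priors):
    """P(mu | x) for 1D Gaussian categories sharing one standard deviation."""
    x = np.atleast_1d(np.asarray(x, dtype=float))[:, None]
    weights = priors * np.exp(-0.5 * ((x - means) / std) ** 2)
    return weights / weights.sum(axis=1, keepdims=True)

def category_fisher(x, means, std, priors, eps=1e-4):
    """F_cat(x) = sum_mu (dP(mu|x)/dx)^2 / P(mu|x), via central differences."""
    x = np.atleast_1d(np.asarray(x, dtype=float))
    p = posterior(x, means, std, priors)
    dp = (posterior(x + eps, means, std, priors)
          - posterior(x - eps, means, std, priors)) / (2 * eps)
    return np.sum(dp ** 2 / p, axis=1)

# Illustrative setup: categories centred at -1 and +1, boundary at x = 0.
means = np.array([-1.0, 1.0])
priors = np.array([0.5, 0.5])
xs = np.linspace(-4.0, 4.0, 161)
fisher = category_fisher(xs, means, 1.0, priors)

print("peak location:", xs[np.argmax(fisher)])
print("peak / edge ratio:", fisher.max() / fisher[0])
```

In this setup the posterior is a logistic function of x, so F_cat is largest where the two categories are equally likely and falls off quickly inside each category.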

Figure 1: Two-dimensional example with three Gaussian categories. The neural representation focuses on the category decision boundaries.

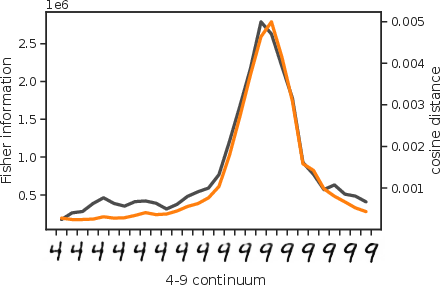

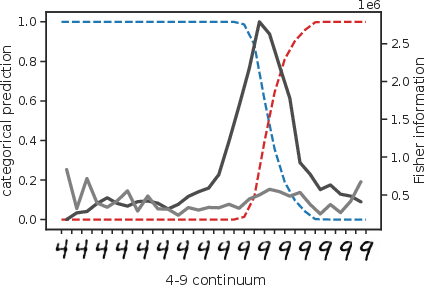

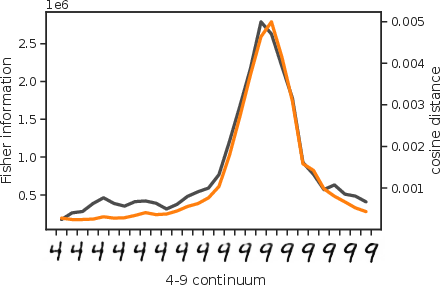

Figure 2: Categorical perception along a continuum from "4" to "9". Discrimination increases near the categorical boundary.

Numerical Experiments

The paper provides numerical illustrations using both simple two-dimensional Gaussian category datasets and a high-dimensional dataset, the MNIST handwritten digits. The results support the theoretical predictions and show how the metric of the neural space changes as learning progresses. The change is measured through the neural Fisher information, which grows in the regions that coincide with the known category boundaries. This matches the theoretical claim that the representation expands around decision boundaries and that this expansion improves discrimination there.
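One common way to obtain a neural Fisher information from a network layer is sketched below. It assumes the layer output is corrupted by independent Gaussian noise of standard deviation `noise_std`, in which case the Fisher information matrix with respect to the input is JᵀJ / noise_std², where J is the Jacobian of the layer. This noise model, the finite-difference Jacobian, and the untrained random `layer` are all assumptions made for illustration; the paper's own estimation procedure may differ.

```python
import numpy as np

def jacobian(f, x, eps=1e-5):
    """Central-difference Jacobian of f at x, shape (n_neurons, n_inputs)."""
    x = np.asarray(x, dtype=float)
    cols = []
    for i in range(x.size):
        step = np.zeros_like(x)
        step[i] = eps
        cols.append((f(x + step) - f(x - step)) / (2 * eps))
    return np.stack(cols, axis=1)

def neural_fisher(f, x, noise_std):
    """Fisher information of r = f(x) + N(0, noise_std^2 I) w.r.t. x."""
    J = jacobian(f, x)
    return J.T @ J / noise_std ** 2

# Hypothetical layer: 5 tanh units reading a 2D input, random fixed weights.
rng = np.random.default_rng(0)
W = rng.normal(size=(5, 2))
b = rng.normal(size=5)

def layer(x):
    return np.tanh(W @ x + b)

x0 = np.array([0.3, -0.2])
noise_std = 0.1
F = neural_fisher(layer, x0, noise_std)

# The quadratic form d^T F d gives the squared discriminability of a small
# displacement d around x0, so F acts as a local metric on input space.
d = np.array([1.0, 0.0])
print("Fisher matrix:\n", F)
print("discriminability along d:", d @ F @ d)
```

Evaluating `neural_fisher` on a grid of inputs before and after training would show where the learned representation stretches or compresses input space.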

Discussion on Implications

The study has implications for both theory and practical AI systems. On the theoretical side, the paper reinforces the infomax principle as an intrinsic part of neural optimization during category learning. On the practical side, the findings can inform the design of artificial neural networks, especially for tasks that require fine discrimination between categories, where optimizing mutual information may improve classification accuracy.

The research suggests several areas for further investigation: refining the models under finite sample conditions, accounting for the influence of noise within neural processing layers, and finding efficient ways to estimate the Fisher information metric. Progress on these points could deepen the understanding of neural categorization.

Conclusion

In summary, the paper analyzes category learning through an information theoretic lens and highlights the geometric transformation of the neural space that occurs as category boundaries are learned. Its two central points, mutual information optimization for efficient coding and the resulting expansion around decision boundaries, apply to both cognitive science and artificial intelligence. Future research can build on these findings to study neural encoding strategies in biological systems and in machine learning models.