Nonlinear classification of neural manifolds with contextual information

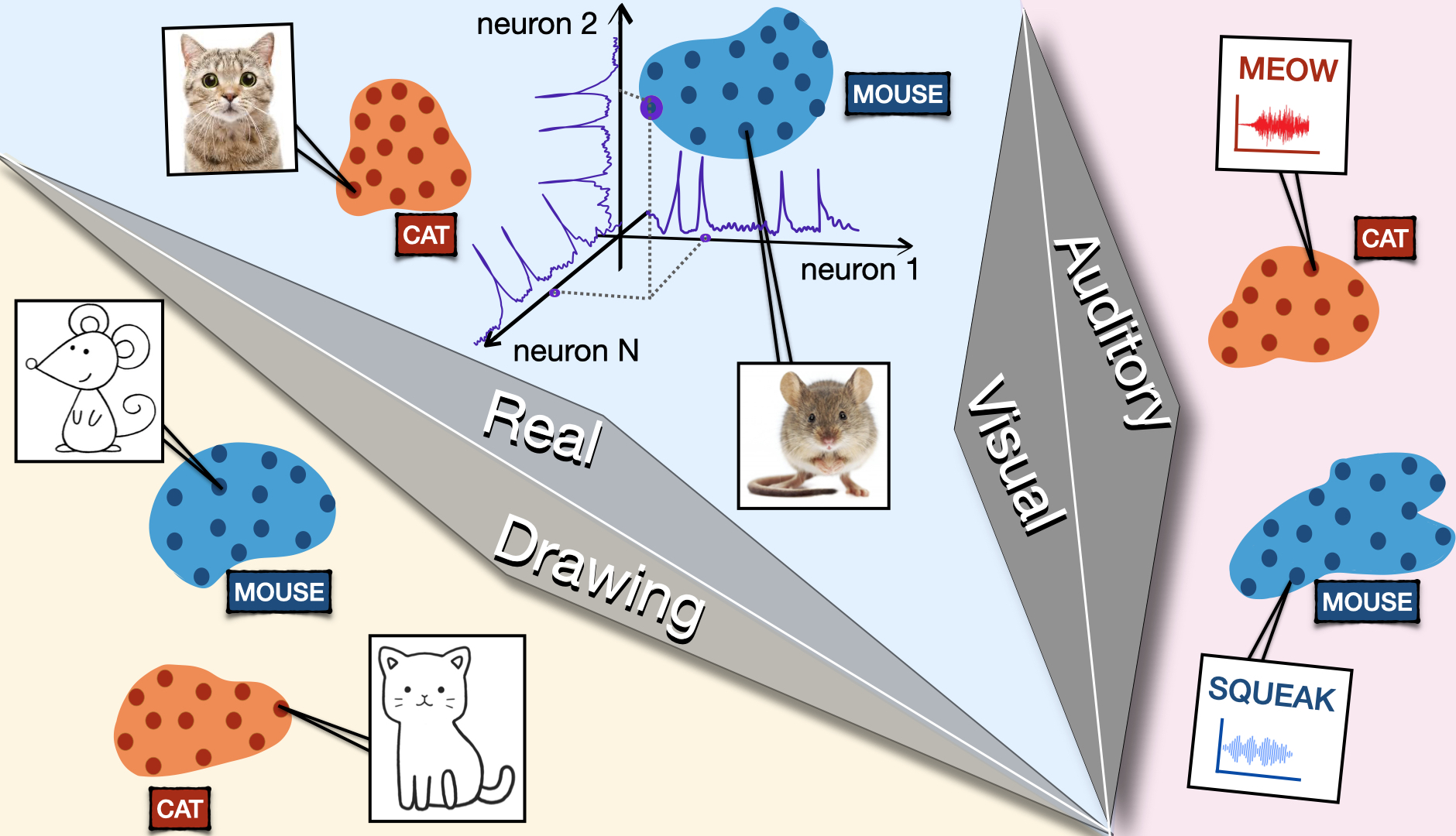

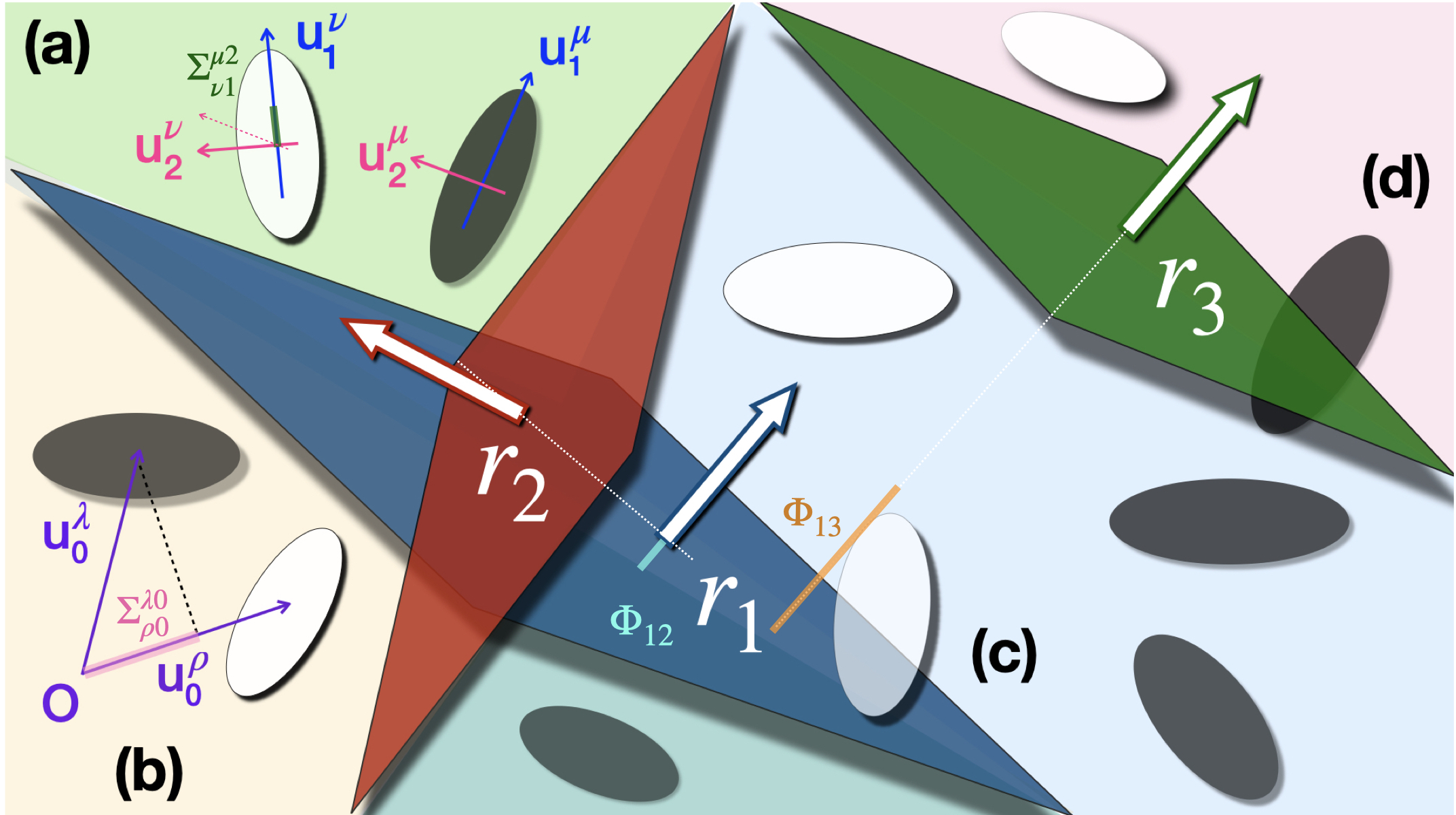

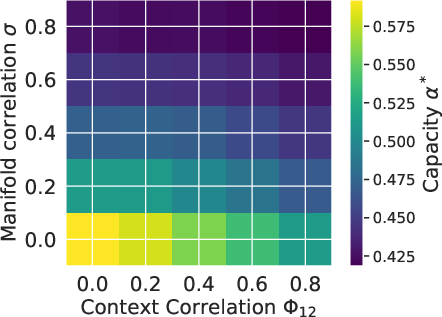

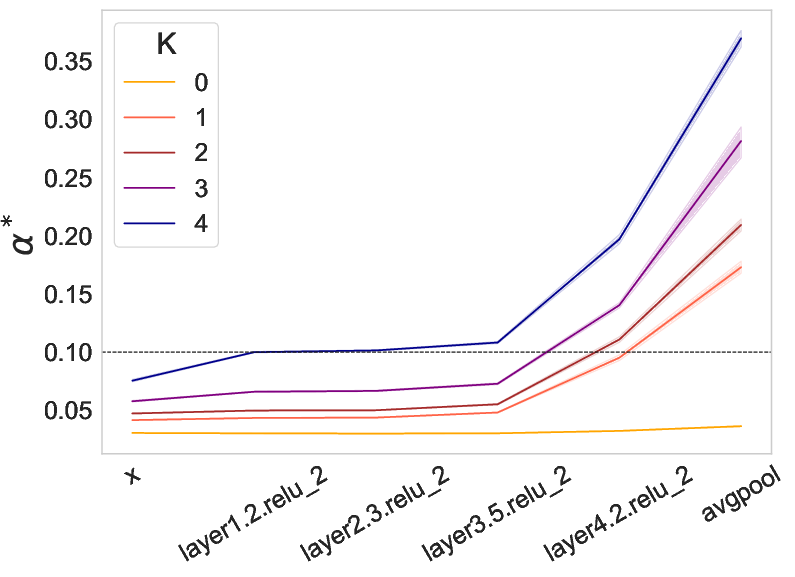

Abstract: Understanding how neural systems efficiently process information through distributed representations is a fundamental challenge at the interface of neuroscience and machine learning. Recent approaches analyze the statistical and geometrical attributes of neural representations as population-level mechanistic descriptors of task implementation. In particular, manifold capacity has emerged as a promising framework linking population geometry to the separability of neural manifolds. However, this metric has been limited to linear readouts. To address this limitation, we introduce a theoretical framework that leverages latent directions in input space, which can be related to contextual information. We derive an exact formula for the context-dependent manifold capacity that depends on manifold geometry and context correlations, and validate it on synthetic and real data. Our framework's increased expressivity captures representation reformatting in deep networks at early stages of the layer hierarchy, previously inaccessible to analysis. As context-dependent nonlinearity is ubiquitous in neural systems, our data-driven and theoretically grounded approach promises to elucidate context-dependent computation across scales, datasets, and models.
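As a rough illustration of why a context-dependent readout is more expressive than a single linear one, the sketch below uses a toy XOR problem. It is not the paper's method or its capacity formula. It assumes, for illustration only, that "context" is the sign of the projection onto one hypothetical direction in input space (`context_dir`), and that each context has its own linear readout (`readouts`). All names and values are made up for this example.

```python
import numpy as np

# Toy XOR data: four points in 2D, label = sign of the product of coordinates.
X = np.array([[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]])
y = np.sign(X[:, 0] * X[:, 1])  # [+1, -1, -1, +1]


def linear_accuracy(w, b, X, y):
    """Accuracy of a single linear readout sign(w.x + b)."""
    preds = np.where(X @ w + b >= 0, 1.0, -1.0)
    return float(np.mean(preds == y))


def gated_predict(X, context_dir, readouts):
    """Context-gated readout (illustrative assumption, not the paper's model).

    The context index is 1 if the projection onto context_dir is
    non-negative and 0 otherwise; each context uses its own weight vector.
    """
    ctx = (X @ context_dir >= 0).astype(int)   # context index per point
    W = readouts[ctx]                          # per-point weights, shape (n, d)
    scores = np.einsum("nd,nd->n", W, X)       # row-wise dot products
    return np.where(scores >= 0, 1.0, -1.0)


# Random search over single linear readouts: XOR is not linearly separable,
# so no draw can classify all four points correctly.
rng = np.random.default_rng(0)
best_linear = max(
    linear_accuracy(rng.standard_normal(2), rng.standard_normal(), X, y)
    for _ in range(2000)
)

# Hypothetical context direction and one readout per context.
context_dir = np.array([1.0, 0.0])
readouts = np.array([[0.0, -1.0],   # context 0: first coordinate negative
                     [0.0, 1.0]])   # context 1: first coordinate non-negative
gated_acc = float(np.mean(gated_predict(X, context_dir, readouts) == y))

print("best single linear readout accuracy:", best_linear)
print("context-gated readout accuracy:", gated_acc)
```

Within each context the problem becomes linearly separable, which is the intuition behind letting the readout depend on a latent direction. The quantity the abstract describes, a capacity that depends on manifold geometry and context correlations, is not computed here.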
