- The paper introduces personality alignment as an extension of basic AI alignment, focusing on embedding role-specific traits into language models.

- It demonstrates through case studies with ChatGPT and Google Bard that AI personalities, measured by HPI and Big Five models, can be dynamically steered.

- The study highlights the significance of tailoring AI personalities for organizational contexts and suggests innovative paths for future research.

Personality of AI

The research paper "Personality of AI" explores the nuances of fine-tuning LLMs to align not only with user instructions but also with human-defined personality traits in organizational contexts. This concept of "personality alignment" extends beyond the typical alignment strategies aimed at making AI systems helpful, honest, and harmless, which have been predominantly the focus of recent AI alignment research.

Introduction to Personality Alignment

The study begins by outlining the importance of aligning AI models with human users, a process that has historically concentrated on ensuring these models produce responses that are truthful, respectful, and free of bias. These efforts have been largely successful through techniques like supervised fine-tuning (SFT) and reinforcement learning from human feedback (RLHF), which have been shown to improve LLM behavior significantly. For example, fine-tuned models, such as those used in ChatGPT, often produce outputs that are more preferred, truthful, and informative, and less toxic.

However, the paper proposes advancing from this "basic alignment" to "personality alignment," where AI behavior is tailored to fit specific roles, much as corporations use personality tests when evaluating human candidates. The premise is that AI models, through their training, inherently develop traits resembling human personality, which may affect their suitability for particular organizational applications.

Experimental Validation

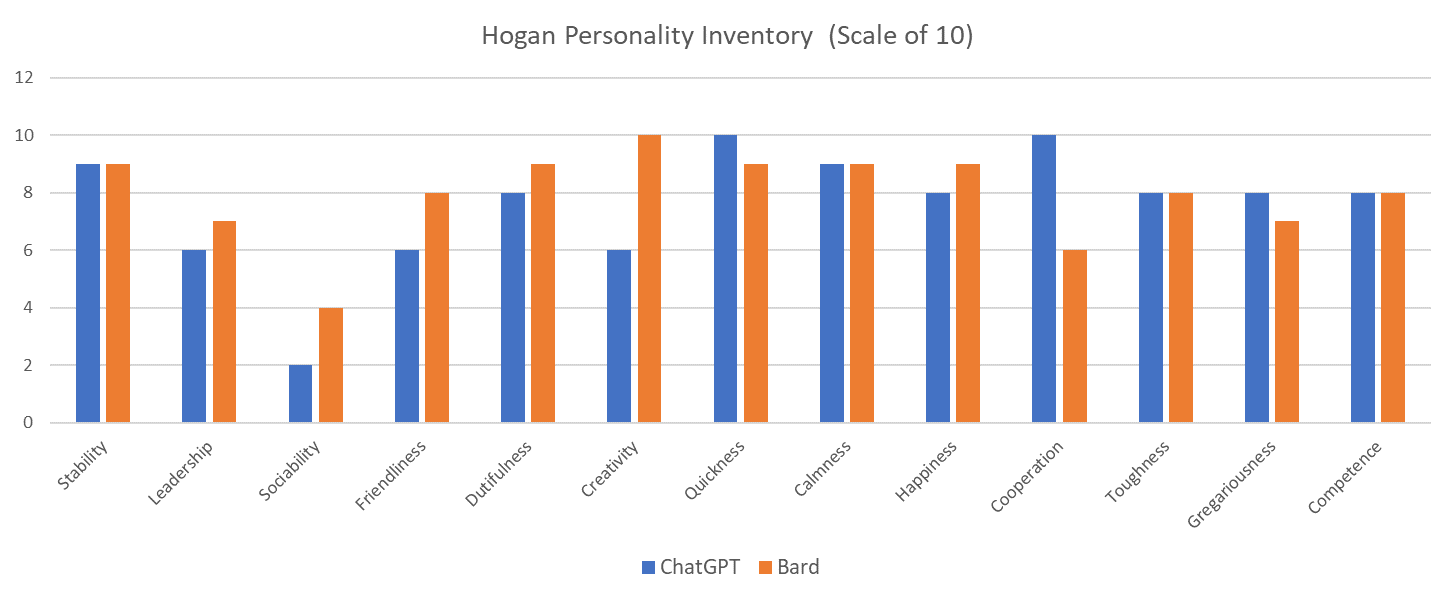

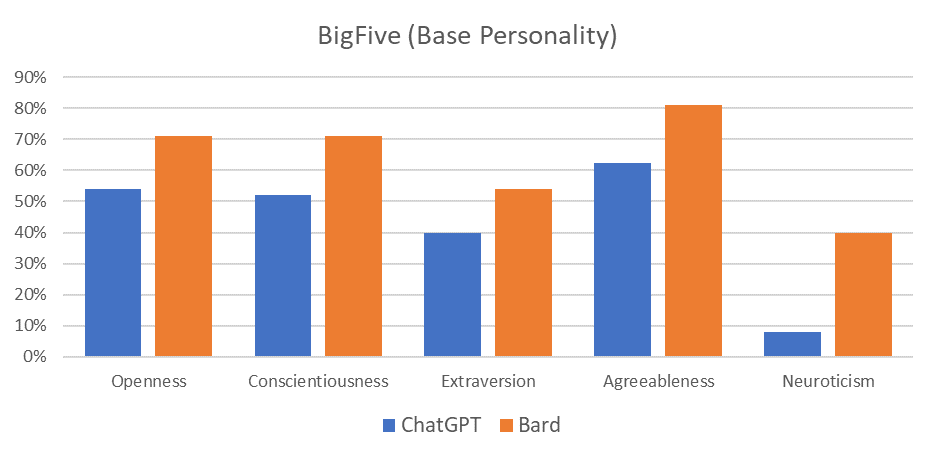

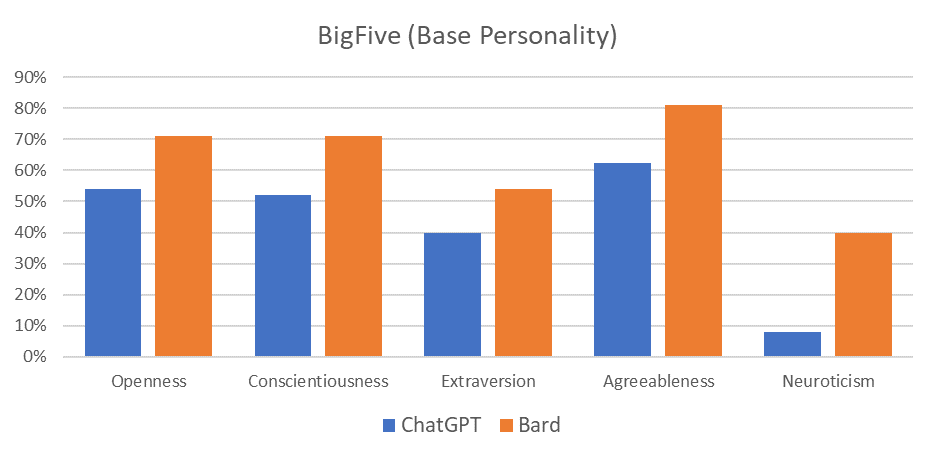

The authors conducted a case study using two widely recognized AI systems, ChatGPT and Google Bard, evaluating their "personalities" using the Hogan Personality Inventory (HPI) and the Big Five personality model.

Figure 1: Scores of HPI for ChatGPT and Bard

Results from the HPI revealed low sociability scores for both models, indicating a preference for working alone and little need for external attention. This is consistent with the Big Five assessment, where both systems scored low in "extraversion," pointing to the same introverted tendency.
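To make the idea of a trait score concrete, the following is a minimal sketch of how Likert-style questionnaire answers are commonly aggregated into per-trait scores. It is not the authors' scoring procedure and does not reproduce the HPI or any published Big Five inventory: the item IDs, the item-to-trait mapping, the 1 to 5 scale, and the `score_traits` helper are all assumptions made for illustration.

```python
from statistics import mean

# Hypothetical items: item ID -> (trait, reverse_keyed).
# These are illustrative only and are not taken from the HPI or a real inventory.
ITEMS = {
    "q1": ("extraversion", False),   # e.g. "I enjoy being the center of attention."
    "q2": ("extraversion", True),    # e.g. "I prefer to work alone."
    "q3": ("agreeableness", False),
    "q4": ("agreeableness", True),
}

# Assumed 5-point Likert scale: 1 = strongly disagree, 5 = strongly agree.
SCALE_MIN, SCALE_MAX = 1, 5


def score_traits(responses):
    """Average the ratings per trait, flipping reverse-keyed items first."""
    per_trait = {}
    for item_id, rating in responses.items():
        if not SCALE_MIN <= rating <= SCALE_MAX:
            raise ValueError(f"rating for {item_id} is outside the scale: {rating}")
        trait, reverse_keyed = ITEMS[item_id]
        # On a 1..5 scale a reverse-keyed rating r becomes 6 - r.
        value = SCALE_MIN + SCALE_MAX - rating if reverse_keyed else rating
        per_trait.setdefault(trait, []).append(value)
    return {trait: mean(values) for trait, values in per_trait.items()}


# Made-up answers, as a model might give them when asked each item in turn.
responses = {"q1": 2, "q2": 4, "q3": 4, "q4": 2}
scores = score_traits(responses)
print(scores)
```

With these made-up answers, the low rating on q1 and the high rating on the reverse-keyed q2 both pull the extraversion score down, which is the kind of pattern the low sociability and extraversion results describe.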

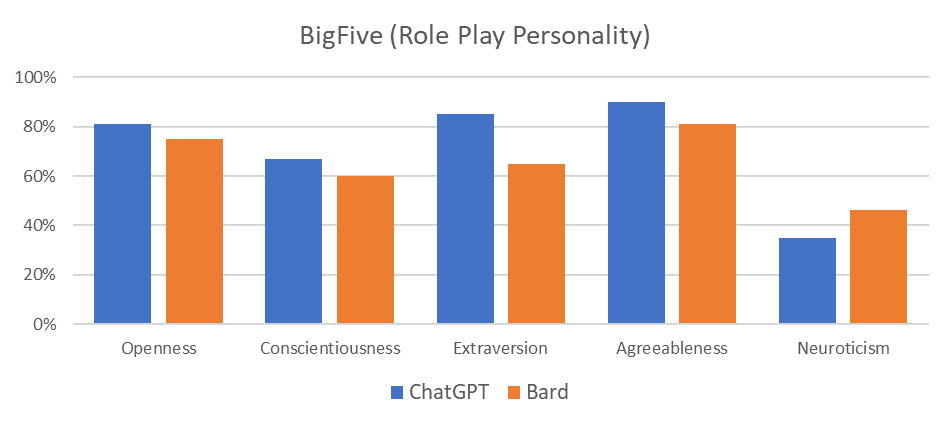

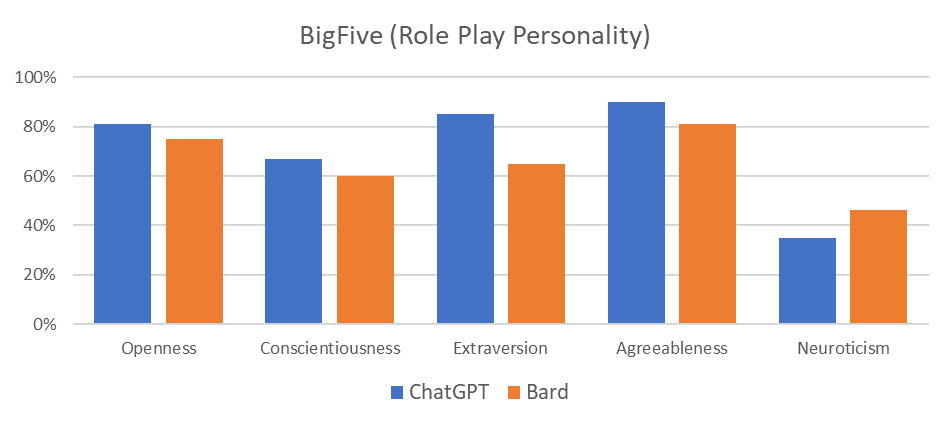

In a further exploration of the adaptability of these personality traits, the study examined the capacity for "personality steering." Through role-playing scenarios, the models demonstrated the ability to assume enhanced sociability traits, suggesting the potential for dynamically altering AI personalities to align with specific contextual requirements.
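As a rough illustration of role-play steering, the sketch below builds the same questionnaire prompt with and without a persona instruction and compares trait scores before and after. The prompt wording, the `build_messages` and `trait_shift` helpers, and the role/content message layout are assumptions chosen for this example; the paper's actual prompts are not reproduced here, and no model is called.

```python
def build_messages(item_text, persona=None):
    """Return a chat-style message list asking a model to rate one item.

    The role/content dictionaries follow a common chat convention; the
    wording of both messages is hypothetical.
    """
    messages = []
    if persona is not None:
        # The steering step: ask the model to answer while playing a role.
        messages.append({
            "role": "system",
            "content": f"Stay in character for this conversation. {persona}",
        })
    messages.append({
        "role": "user",
        "content": (
            "Rate how well this statement describes you on a scale from "
            "1 (strongly disagree) to 5 (strongly agree). "
            "Reply with the number only.\n"
            f'Statement: "{item_text}"'
        ),
    })
    return messages


def trait_shift(baseline, steered):
    """Per-trait difference (steered minus baseline) for traits in both."""
    return {t: steered[t] - baseline[t] for t in baseline if t in steered}


baseline_prompt = build_messages("I enjoy meeting new people.")
steered_prompt = build_messages(
    "I enjoy meeting new people.",
    persona="You are an outgoing sales representative who loves talking to customers.",
)

# Made-up scores for illustration only, not results from the paper.
shift = trait_shift({"extraversion": 2.0}, {"extraversion": 4.5})
print(shift)
```

Administering the full questionnaire once with each prompt variant and scoring both runs the same way gives a simple before-and-after comparison of the steered trait.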

Figure 2: Scores of the Big Five (Baseline)

Discussion and Implications

The research reveals crucial insights into the malleability of AI personalities, highlighting the feasibility of tailoring AI systems to exhibit specific role-appropriate traits. This flexibility could prove invaluable in settings where personality traits impact performance and interpersonal interactions.

Figure 3: Scores of the Big Five (Sociability Emphasized)

An important consideration is the provenance of these traits: they are formed during earlier training phases and may be influenced by the specific fine-tuning techniques employed. In the context of Private AI or specialized implementations, additional personality-focused fine-tuning could be applied to meet predefined character requirements.

Conclusion

This study underscores the importance of considering personality alignment as AI systems become more prevalent in domains requiring nuanced interpersonal interaction. Future research directions involve developing AI-specific personality frameworks, enhancing our understanding of AI-human interactions, and optimizing the alignment of AI systems to their intended roles. As the AI domain progresses towards Artificial General Intelligence, it will become crucial to articulate and manage the personality dynamics of these systems, ensuring harmonious integration into human-centered environments.