Thermodynamic Computing System for AI Applications

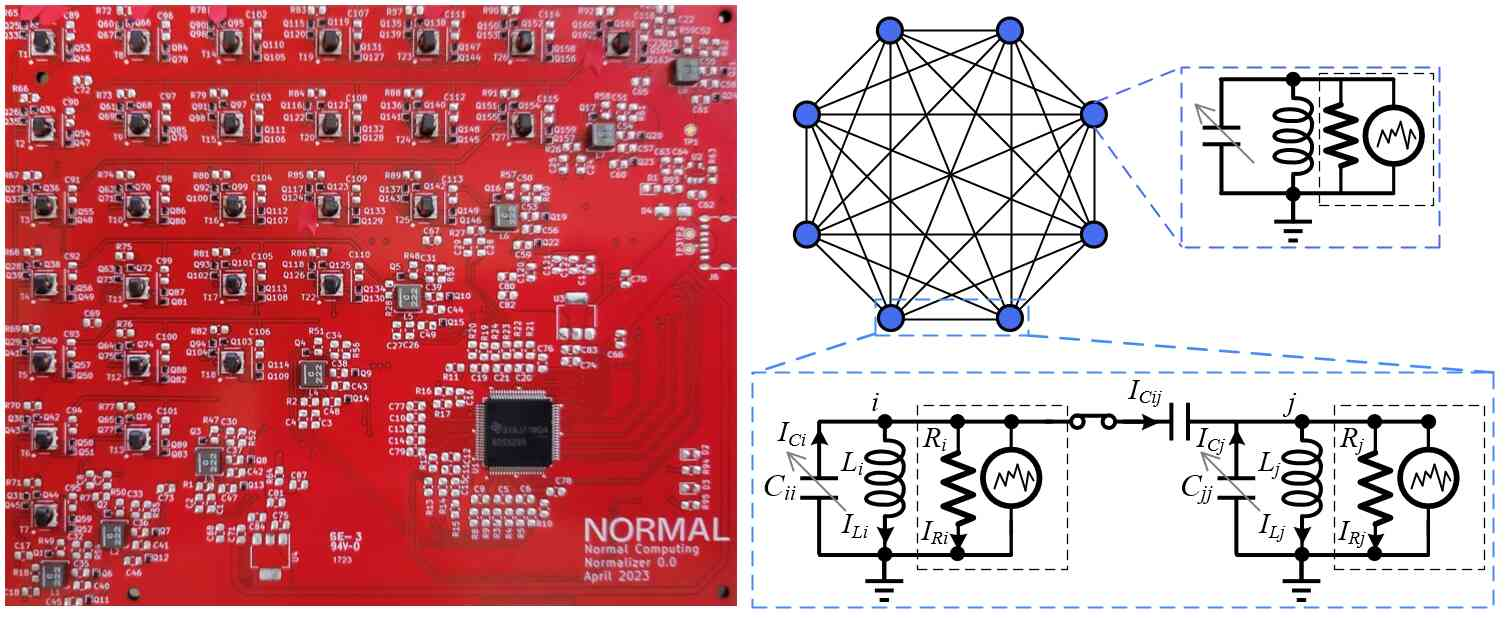

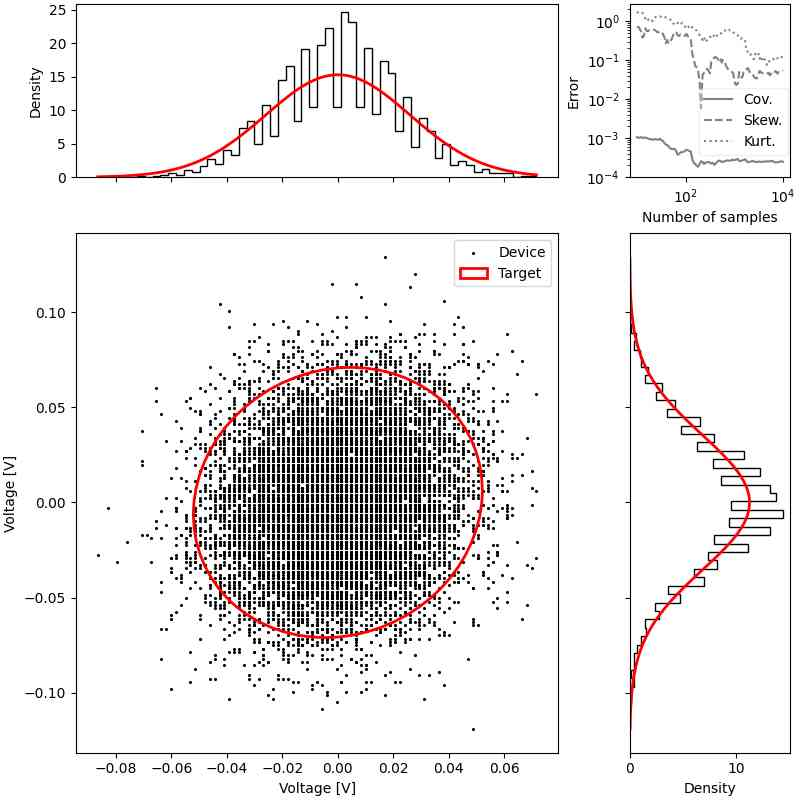

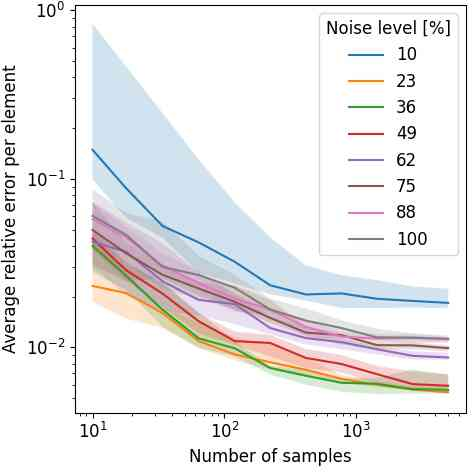

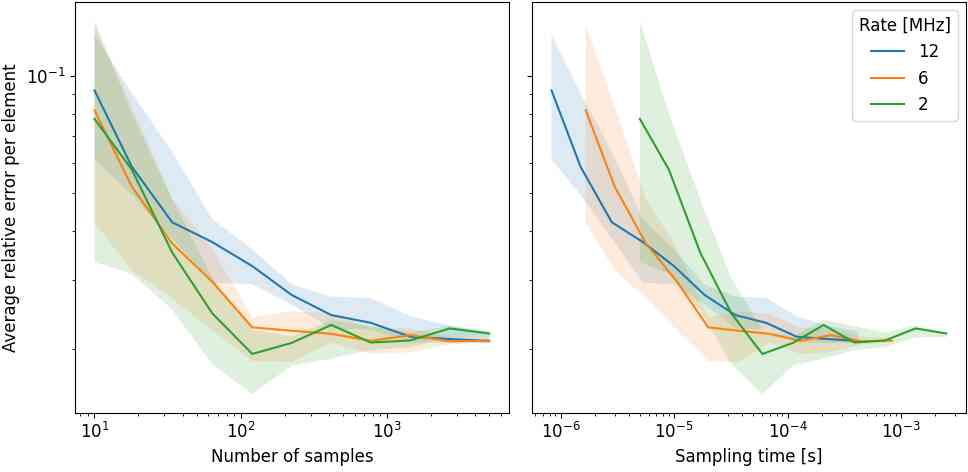

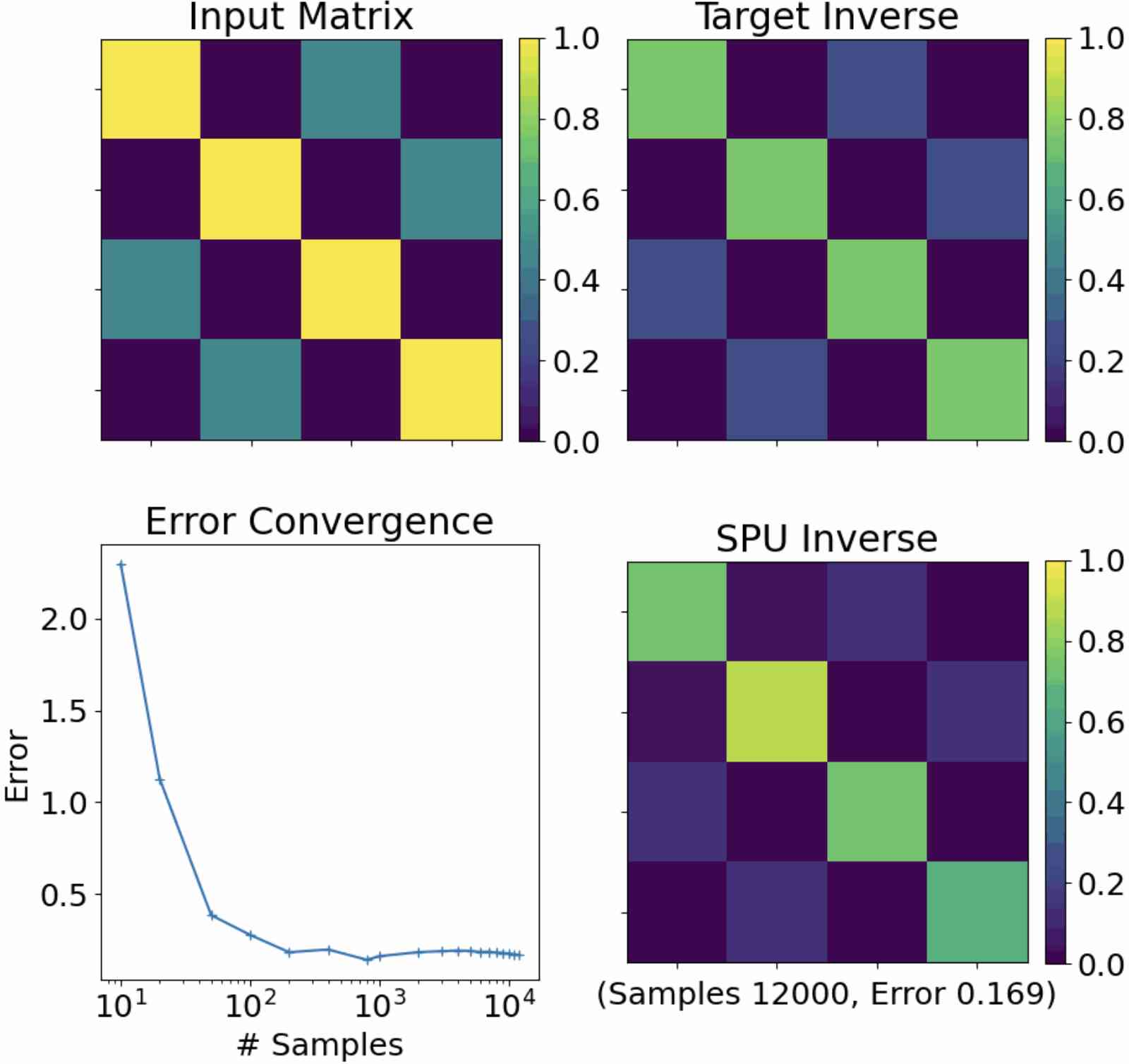

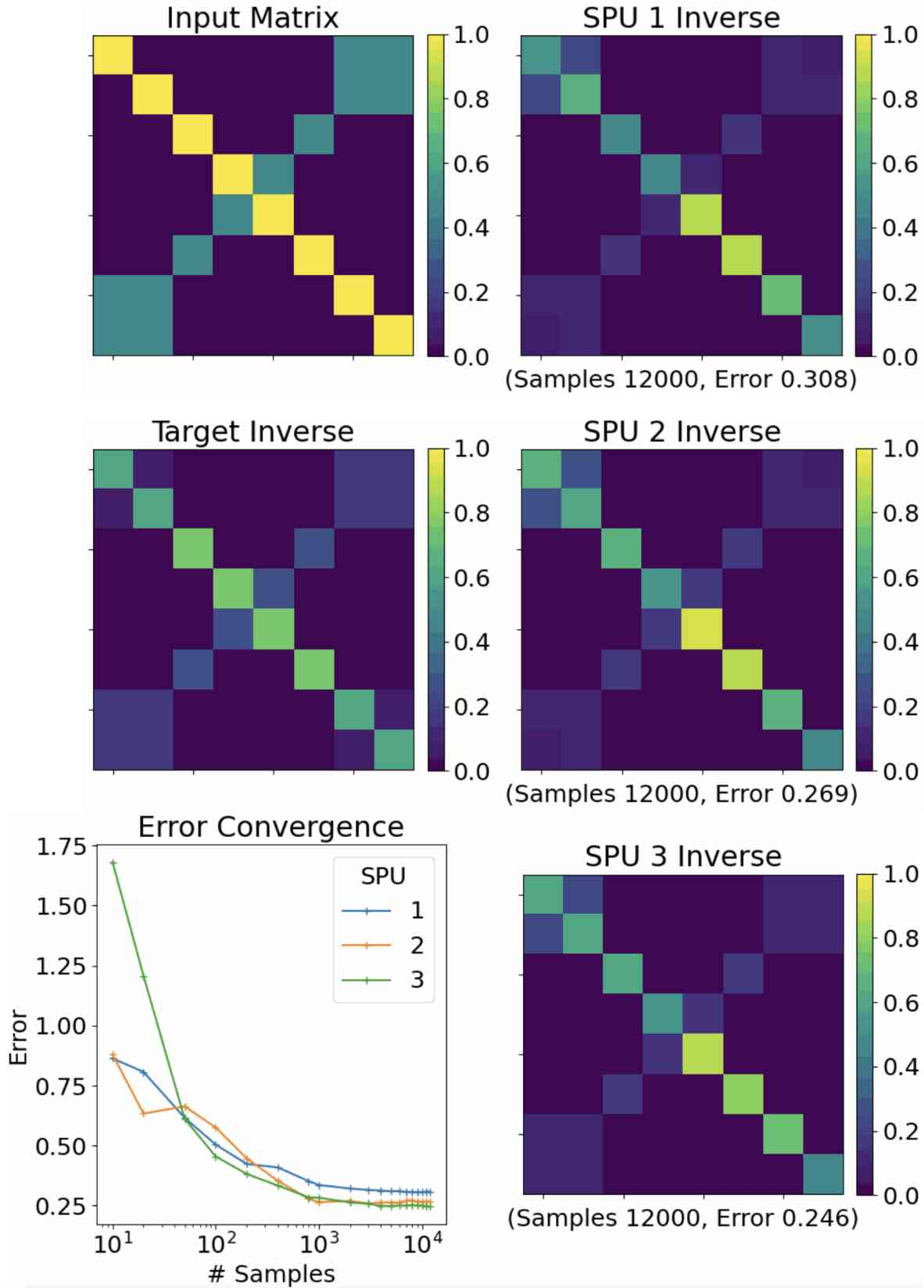

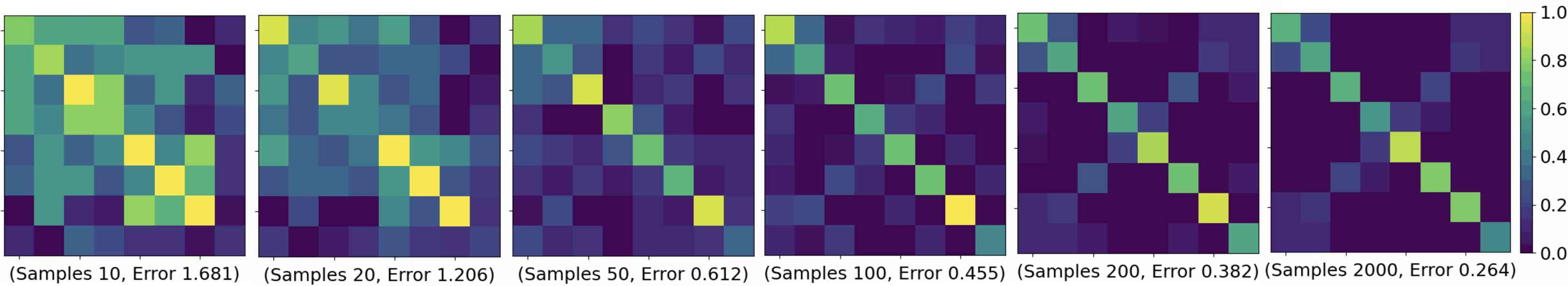

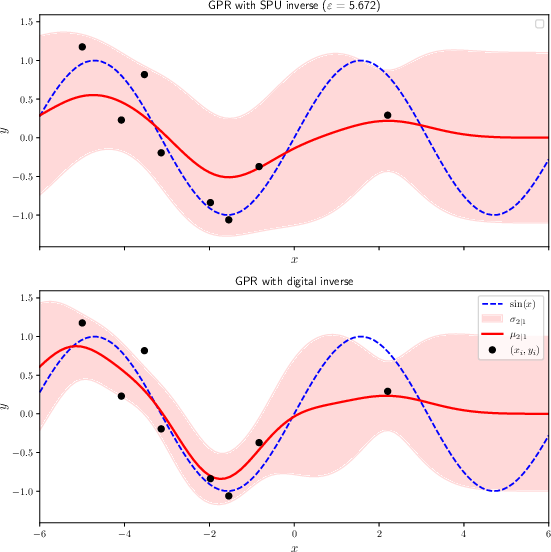

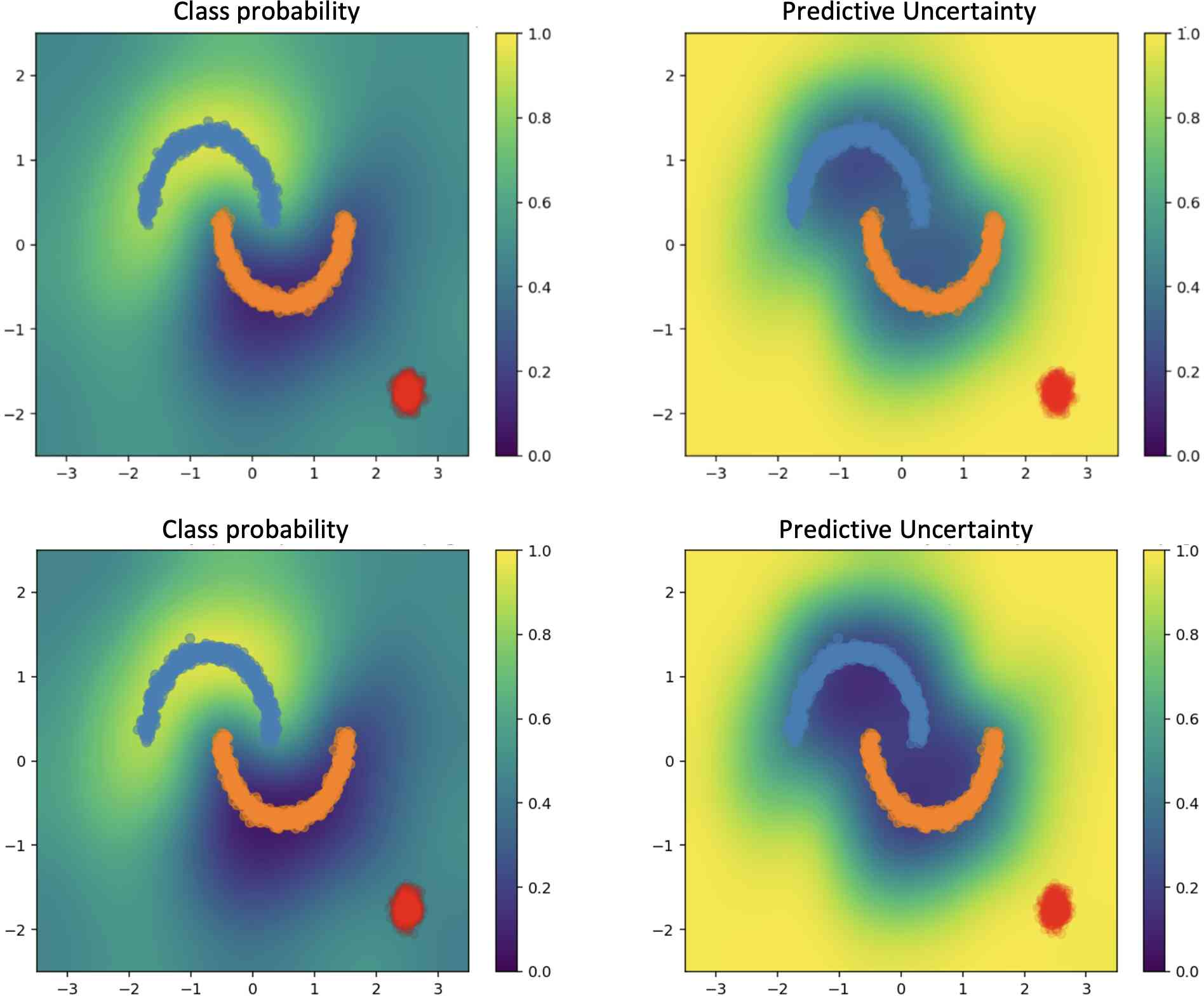

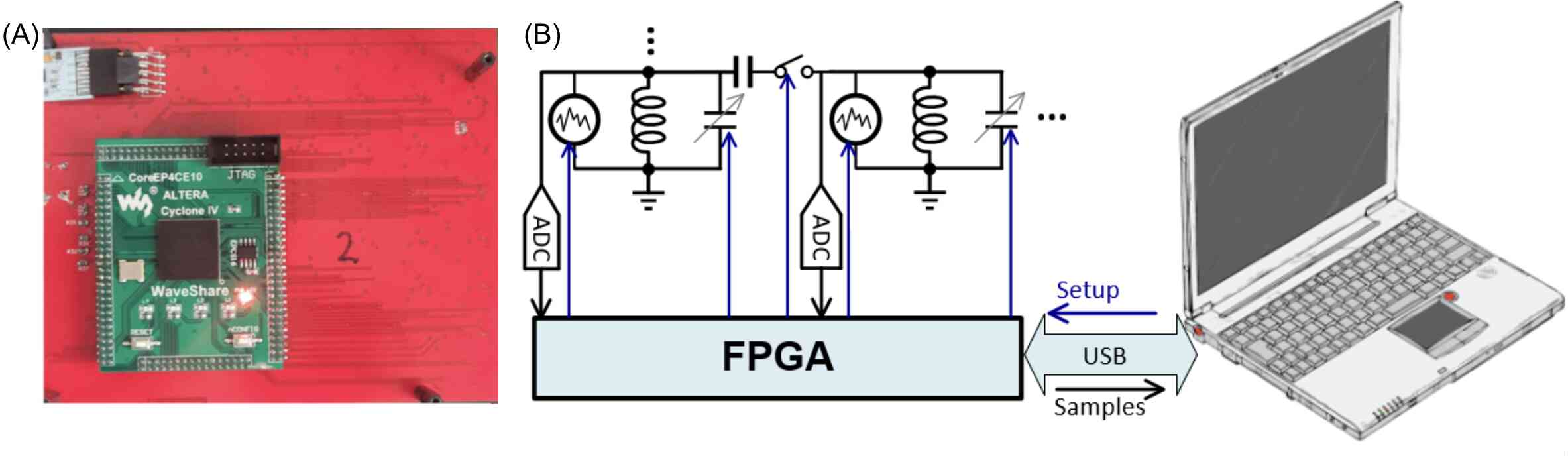

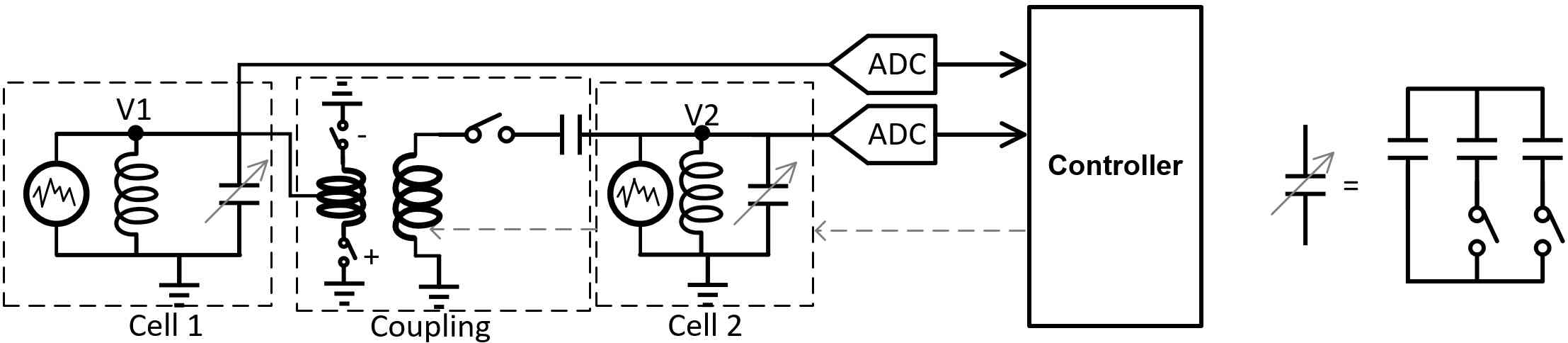

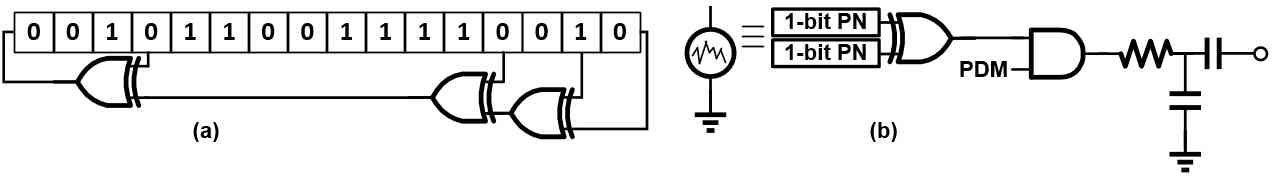

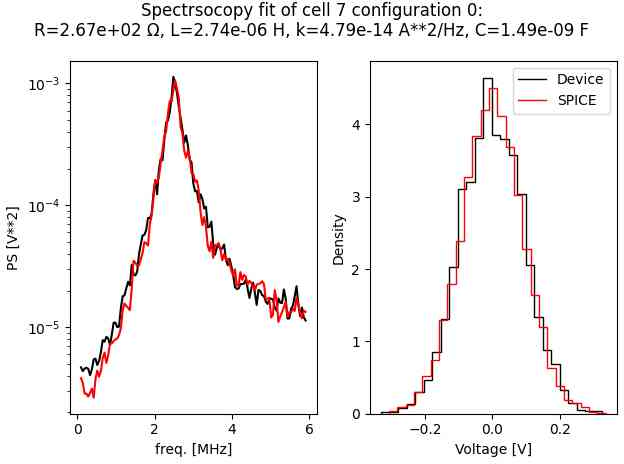

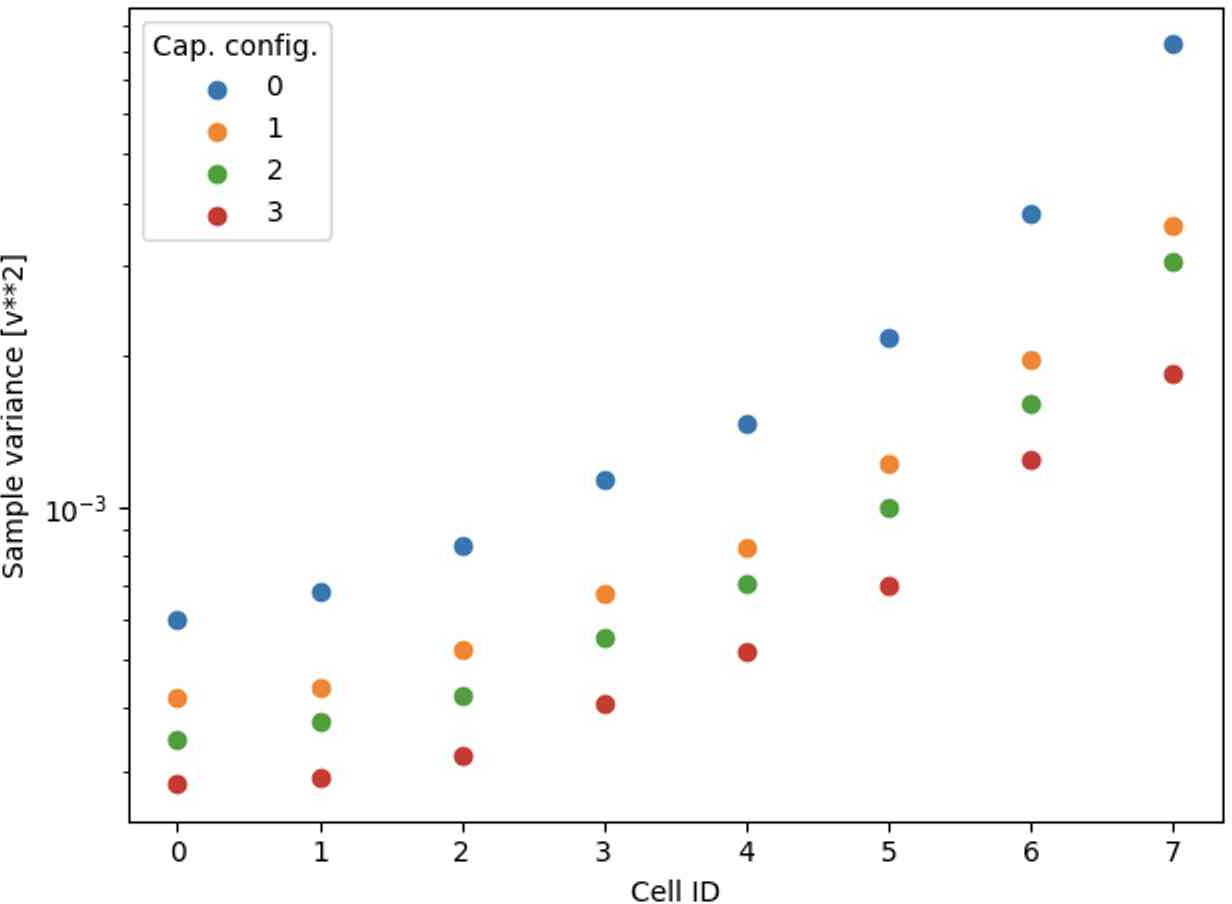

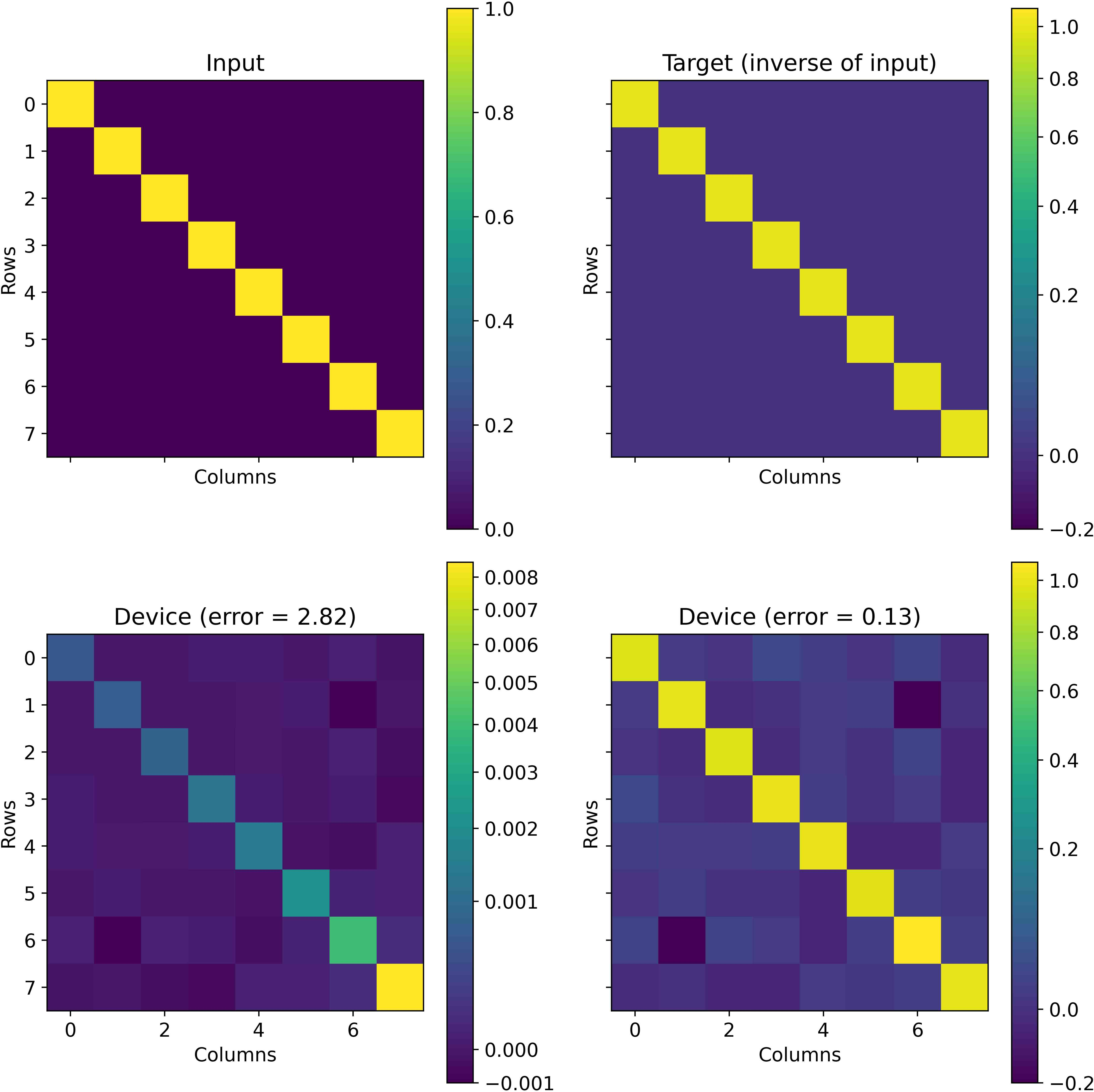

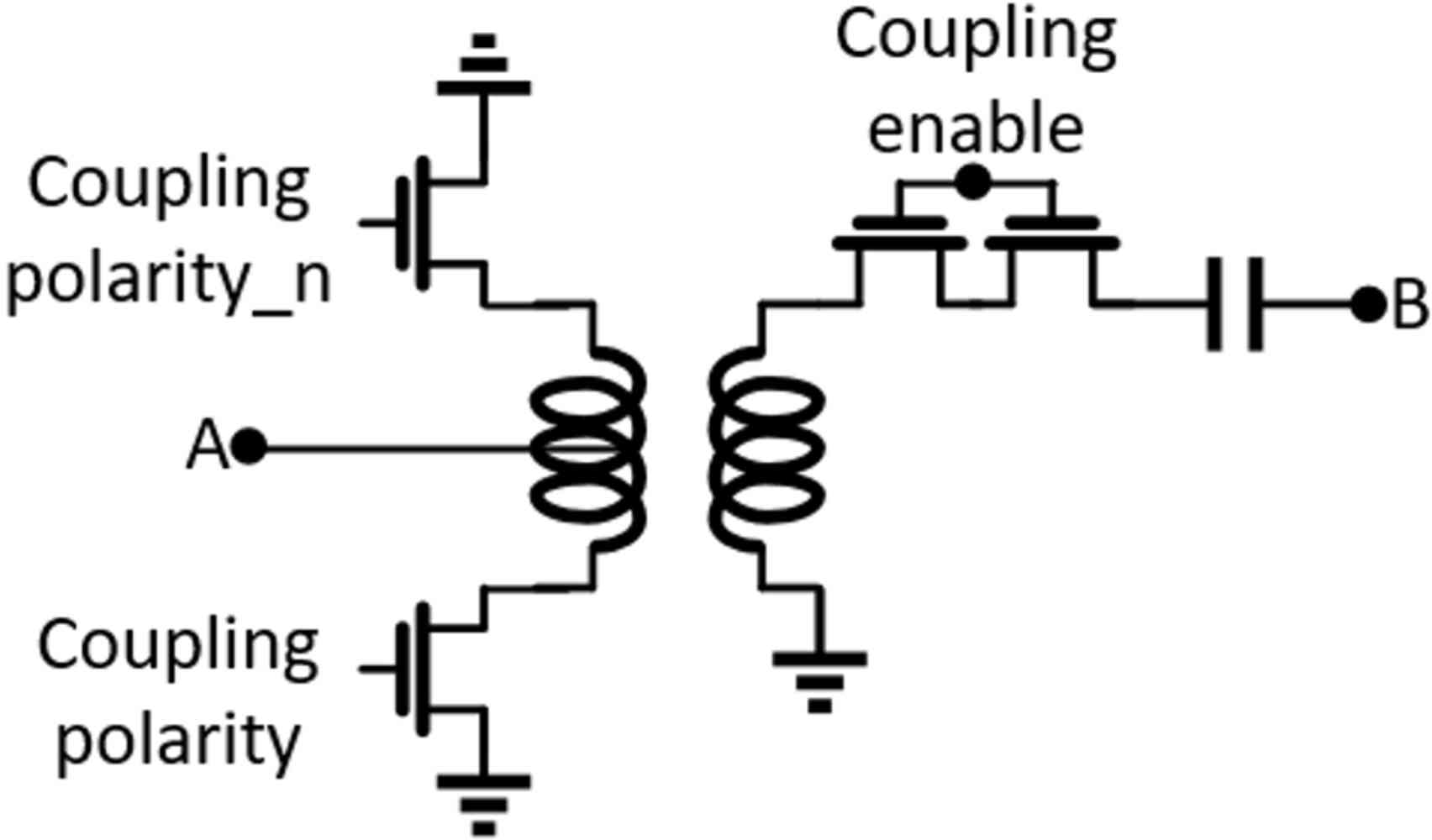

Abstract: Recent breakthroughs in AI algorithms have highlighted the need for novel computing hardware to unlock the full potential of AI. Physics-based hardware, such as thermodynamic computing, could provide a fast, low-power means of accelerating AI primitives, especially in generative and probabilistic AI. In this work, we present the first continuous-variable thermodynamic computer, which we call the stochastic processing unit (SPU). Our SPU consists of 8 unit cells, each an RLC circuit, on a printed circuit board; the unit cells are all-to-all coupled via switched capacitances. The SPU can be used for either sampling or linear algebra primitives, and we demonstrate Gaussian sampling and matrix inversion on our hardware; the latter is the first thermodynamic linear algebra experiment. We also illustrate the applicability of the SPU to uncertainty quantification for neural network classification. We envision that this hardware, once scaled up, will have a significant impact on accelerating a range of probabilistic AI applications.
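The idea behind using a noisy physical system for both Gaussian sampling and matrix inversion can be illustrated in software. The sketch below is an idealization, not the SPU's actual circuit model: it assumes first-order (overdamped) Langevin dynamics dx = -(A x - b) dt + sqrt(2/beta) dW with a symmetric positive-definite matrix A, and it ignores the inductive (second-order) dynamics and hardware non-idealities of real RLC cells. Under that assumption the stationary distribution is Gaussian with mean A^{-1} b and covariance A^{-1}/beta, so sample statistics yield a linear solve and an inverse. The helper names `simulate_langevin` and `thermodynamic_solve_and_invert`, and the step size, step count, and chain count, are hypothetical choices for illustration.

```python
import numpy as np


def simulate_langevin(A, b, beta=1.0, dt=2.5e-3, steps=4000, n_chains=10_000, seed=0):
    """Hypothetical helper (idealized model, not the SPU's circuit dynamics).

    Euler-Maruyama integration of the overdamped Langevin SDE
        dx = -(A x - b) dt + sqrt(2 / beta) dW
    for many independent chains at once. For symmetric positive-definite A the
    stationary distribution is Gaussian with mean A^{-1} b and covariance A^{-1} / beta.
    Returns the final state of every chain, shape (n_chains, d).
    """
    rng = np.random.default_rng(seed)
    d = A.shape[0]
    x = np.zeros((n_chains, d))            # every chain starts at the origin
    noise_scale = np.sqrt(2.0 * dt / beta)  # std of the Wiener increment term per step
    for _ in range(steps):
        drift = x @ A.T - b                 # row-wise A x - b for every chain
        x = x - drift * dt + noise_scale * rng.standard_normal((n_chains, d))
    return x


def thermodynamic_solve_and_invert(A, b, beta=1.0, **kwargs):
    """Hypothetical helper: estimate A^{-1} b from the sample mean and
    A^{-1} from beta times the sample covariance of equilibrated chains."""
    samples = simulate_langevin(A, b, beta=beta, **kwargs)
    x_est = samples.mean(axis=0)
    A_inv_est = beta * np.cov(samples, rowvar=False)
    return x_est, A_inv_est


if __name__ == "__main__":
    A = np.array([[2.0, 0.5, 0.0],
                  [0.5, 1.5, 0.3],
                  [0.0, 0.3, 1.0]])  # symmetric positive-definite example matrix
    b = np.array([1.0, -1.0, 0.5])
    x_est, A_inv_est = thermodynamic_solve_and_invert(A, b)
    print("estimated A^-1 b:", np.round(x_est, 3))
    print("exact     A^-1 b:", np.round(np.linalg.solve(A, b), 3))
    print("max |A^-1 error|:", np.abs(A_inv_est - np.linalg.inv(A)).max())
```

Running many independent chains in parallel, rather than time-averaging a single long trajectory, keeps the samples uncorrelated so that a modest simulated time (here about 10 time units, several relaxation times of this A) suffices. Here beta plays the role of an inverse temperature; the estimation error shrinks roughly as one over the square root of the number of chains.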
