The Distributional Reward Critic Framework for Reinforcement Learning Under Perturbed Rewards

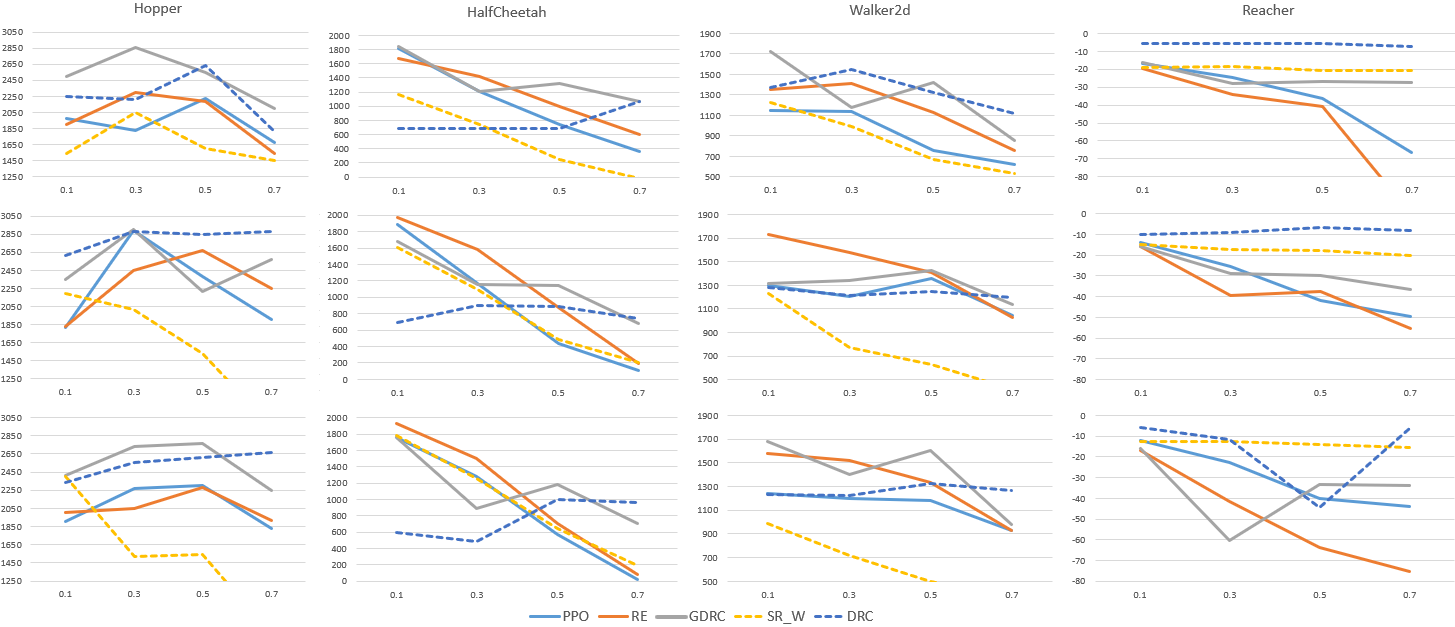

Abstract: The reward signal plays a central role in defining the desired behaviors of agents in reinforcement learning (RL). Rewards collected from realistic environments can be perturbed, corrupted, or noisy because of an adversary, sensor error, or subjective human feedback. Thus, it is important to construct agents that can learn under such rewards. Existing methodologies for this problem make strong assumptions, including that the perturbation is known in advance, that clean rewards are accessible, or that the perturbation preserves the optimal policy. We study a new, more general class of unknown perturbations and introduce a distributional reward critic framework for estimating reward distributions and perturbations during training. Our proposed methods are compatible with any RL algorithm. Despite their increased generality, we show that they achieve comparable or better rewards than existing methods in a variety of environments, including those with clean rewards. Under the challenging and generalized perturbations we study, our methods win or tie for the highest return in 44/48 tested settings (compared to 11/48 for the best baseline). Our results broaden and deepen our ability to perform RL in reward-perturbed environments.
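The abstract does not spell out how the reward distribution is estimated, so the following is only a toy illustration of the general idea, not the paper's algorithm. It assumes rewards are discretized into equal-width bins, that a perturbation replaces the true reward with a uniformly random one some fraction of the time, and that the most frequently observed bin is a reasonable estimate. The names `TabularRewardCritic`, `perturb`, and `run_demo` are hypothetical.

```python
import random
from collections import Counter, defaultdict


class TabularRewardCritic:
    """Toy critic (an assumption, not the paper's method): one histogram
    of discretized observed rewards per (state, action) pair."""

    def __init__(self, r_min, r_max, n_bins):
        self.r_min, self.r_max, self.n_bins = r_min, r_max, n_bins
        self.width = (r_max - r_min) / n_bins
        self.counts = defaultdict(Counter)

    def _bin(self, reward):
        # Map a reward to a bin index, clamping values on or past the edges.
        idx = int((reward - self.r_min) / self.width)
        return min(max(idx, 0), self.n_bins - 1)

    def update(self, state, action, observed_reward):
        self.counts[(state, action)][self._bin(observed_reward)] += 1

    def estimate(self, state, action, fallback):
        # Return the center of the most frequently observed bin,
        # or the fallback if this pair has never been seen.
        hist = self.counts.get((state, action))
        if not hist:
            return fallback
        mode_bin = hist.most_common(1)[0][0]
        return self.r_min + (mode_bin + 0.5) * self.width


def perturb(true_reward, rng, p_noise=0.4):
    # Assumed perturbation: with probability p_noise, swap in a uniform reward.
    return rng.uniform(0.0, 1.0) if rng.random() < p_noise else true_reward


def run_demo(seed=0, n_samples=1000):
    rng = random.Random(seed)
    critic = TabularRewardCritic(r_min=0.0, r_max=1.0, n_bins=4)
    for _ in range(n_samples):
        critic.update("s0", "a0", perturb(0.6, rng))
    return critic.estimate("s0", "a0", fallback=0.0)


if __name__ == "__main__":
    # The true reward 0.6 lies in the bin [0.5, 0.75), whose center is 0.625.
    print(run_demo())
```

In this sketch an agent would train on `critic.estimate(...)` instead of the raw observed reward. Under the assumed noise model, the plain average of observed rewards drifts toward 0.56 in expectation, while the most-observed bin still contains the true reward of 0.6.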

