NumeroLogic: Number Encoding for Enhanced LLMs' Numerical Reasoning

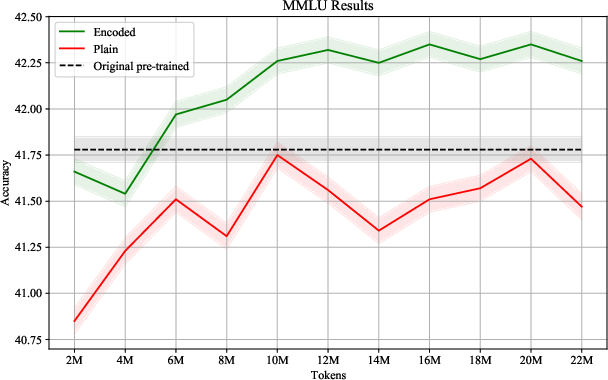

Abstract: LLMs struggle with handling numerical data and performing arithmetic operations. We hypothesize that this limitation can be partially attributed to the non-intuitive textual representation of numbers. When a causal LLM reads or generates a digit, it does not know the digit's place value (e.g., thousands vs. hundreds) until the entire number has been processed. To address this issue, we propose a simple adjustment to how numbers are represented: prefixing each number with its digit count. For instance, instead of "42", we suggest using "{2:42}". This approach, which we term NumeroLogic, offers an added advantage in number generation by serving as a Chain of Thought (CoT): because the model must produce the number of digits first, it reasons about the number's magnitude before generating the actual digits. We use arithmetic tasks to demonstrate the effectiveness of the NumeroLogic formatting. We further demonstrate NumeroLogic's applicability to general natural language modeling, where it improves language understanding performance on the MMLU benchmark.
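The formatting described in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: the function names `encode_numerologic` and `decode_numerologic` are hypothetical, and the sketch assumes only plain non-negative integers are rewritten. The abstract does not specify how decimals or signs are handled, so decimals are deliberately left untouched here.

```python
import re

# Assumption: only standalone integers are rewritten. Digit runs that are
# part of a decimal such as "3.14" are skipped, since the abstract does
# not say how those should be encoded.
_INTEGER = re.compile(r"(?<![\d.])\d+(?!\.?\d)")
_ENCODED = re.compile(r"\{(\d+):(\d+)\}")


def encode_numerologic(text: str) -> str:
    """Prefix every standalone integer with its digit count, e.g. 42 -> {2:42}."""
    return _INTEGER.sub(lambda m: f"{{{len(m.group())}:{m.group()}}}", text)


def decode_numerologic(text: str) -> str:
    """Strip the digit-count prefix again, e.g. {2:42} -> 42."""
    return _ENCODED.sub(lambda m: m.group(2), text)


if __name__ == "__main__":
    sample = "Add 7 and 1234."
    encoded = encode_numerologic(sample)
    print(encoded)
    print(decode_numerologic(encoded))
```

Applied as a text pre-processing step before tokenization and inverted on the model's output, a wrapper like this would leave the model and tokenizer unchanged. Whether that matches the paper's exact pipeline is an assumption.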

