Agent Design Pattern Catalogue: A Collection of Architectural Patterns for Foundation Model based Agents

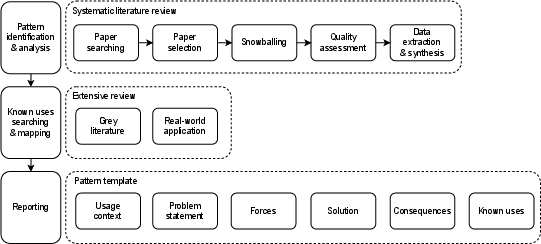

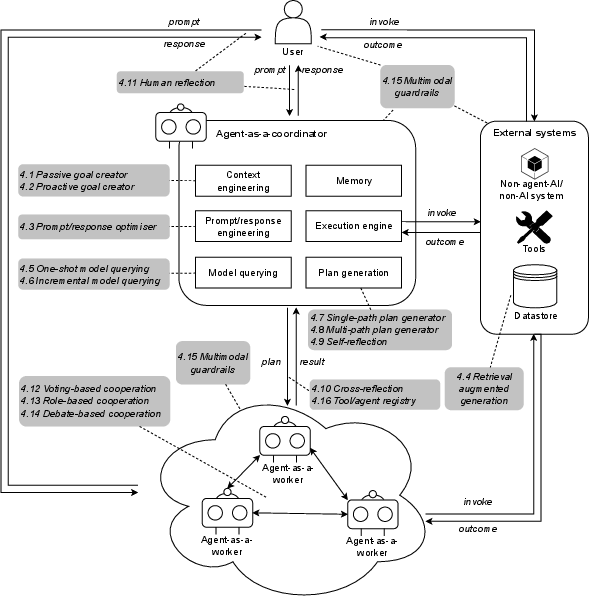

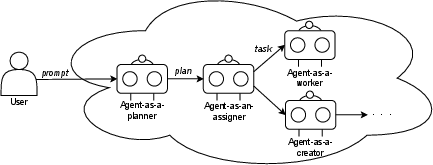

Abstract: Foundation model-enabled generative artificial intelligence facilitates the development and implementation of agents, which can leverage distinguished reasoning and language processing capabilities to take a proactive, autonomous role in pursuing users' goals. Nevertheless, there is a lack of systematic knowledge to guide practitioners in designing such agents, given the challenges of goal-seeking (including generating instrumental goals and plans), such as hallucinations inherent in foundation models, explainability of the reasoning process, and complex accountability. To address this issue, we performed a systematic literature review to understand state-of-the-art foundation model-based agents and the broader ecosystem. In this paper, we present a pattern catalogue consisting of 18 architectural patterns, with analyses of their context, forces, and trade-offs, derived from that literature review. We also propose a decision model for selecting the patterns. The proposed catalogue can provide holistic guidance for the effective use of patterns and support the architecture design of foundation model-based agents by facilitating goal-seeking and plan generation.

