- The paper demonstrates that integrating interactive teacher demonstrations and student trials significantly accelerates neural language acquisition.

- It introduces a novel reward model, conditioned on training steps, to evaluate and guide language proficiency growth.

- Results show that even smaller models benefit, achieving faster vocabulary growth compared to traditional non-interactive training methods.

Summary of "Babysit A Language Model From Scratch: Interactive Language Learning by Trials and Demonstrations" (2405.13828)

Introduction and Objective

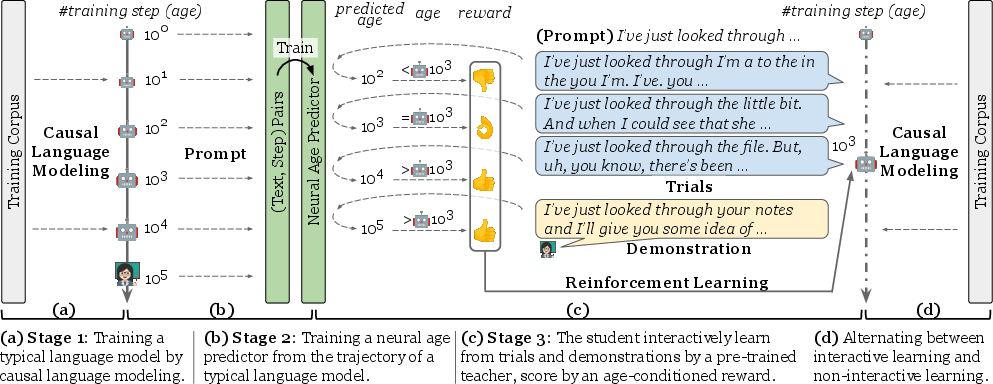

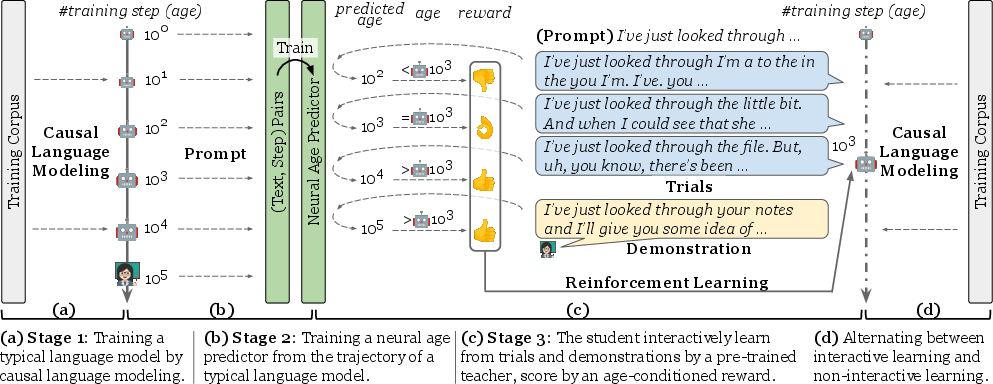

The paper introduces a trial-and-demonstration (TnD) framework that mimics the interactive nature of human language learning by incorporating corrective feedback from "caregivers." The aim is to assess whether such interactivity improves the learning efficiency of neural language models. Unlike traditional training paradigms, which are largely non-interactive, TnD relies on three components: student trials, teacher demonstrations, and a reward conditioned on language competence at different developmental stages.

Methodology: Trial-and-Demonstration (TnD) Framework

Student Trials

The student model uses the GPT-2 architecture and, as the paper's title indicates, is trained from scratch. It engages in production-based learning by generating continuations for the prompts it is given. These trials are what the framework uses to measure how interactive learning affects the efficiency of language acquisition.

Teacher Demonstrations

Pre-trained language models serve as proxies for human teachers, offering corrections in the form of natural language demonstrations. These demonstrations stand in for the communicative feedback a human caregiver would give, without requiring the recruitment of human participants.

Reward Model

A reward function conditioned on neural development, represented by the number of training steps, guides learning. Rewards come from a neural age predictor trained on a large set of (text, step) pairs; they compare the proficiency expected at the student's current stage with the proficiency its output actually shows.
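One way to picture an age-conditioned reward is as the gap between the "age" a predictor assigns to a piece of text and the student's actual training step. The sketch below is a toy illustration under stated assumptions, not the paper's reward model: `predict_age` is a hypothetical stand-in for the learned neural age predictor (here a crude type/token-ratio heuristic), and the `tanh` scaling and `max_step` value are choices made only for this example.

```python
import math


def predict_age(text: str, max_step: int = 10_000) -> float:
    """Hypothetical stand-in for a learned neural age predictor.

    Uses the type/token ratio as a crude proxy for proficiency and maps
    it to a training step. A real predictor would be a model trained on
    (text, step) pairs.
    """
    tokens = text.lower().split()
    if not tokens:
        return 0.0
    type_token_ratio = len(set(tokens)) / len(tokens)
    return type_token_ratio * max_step


def age_conditioned_reward(text: str, current_step: int, max_step: int = 10_000) -> float:
    """Positive when the text looks 'older' than the student's current step."""
    gap = predict_age(text, max_step) - current_step
    # Assumed squashing of the gap into the open interval (-1, 1).
    return math.tanh(gap / max_step)
```

Under this toy scoring, the same text earns a smaller reward as `current_step` grows, which captures the idea that output considered advanced early in training is merely expected later on.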

Figure 1: The learning by trial-and-demonstration (TnD) framework, which begins with a causal language modeling objective and culminates in interactive student and teacher exchanges scored by an age-conditioned reward function.
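The exchange described in Figure 1 can be sketched as a single iteration that collects one student trial and one teacher demonstration for the same prompt and scores both. This is a minimal sketch under assumptions: `Experience`, `tnd_iteration`, and the three callables are hypothetical names introduced here, the toy functions exist only so the example runs, and the policy update that would consume the scored experiences is deliberately omitted.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Experience:
    """One scored continuation, produced by either the student or the teacher."""
    prompt: str
    continuation: str
    reward: float
    source: str  # "trial" or "demonstration"


def tnd_iteration(
    prompt: str,
    step: int,
    student_generate: Callable[[str], str],
    teacher_generate: Callable[[str], str],
    reward_fn: Callable[[str, int], float],
) -> List[Experience]:
    """Collect one student trial and one teacher demonstration for a prompt.

    All three callables are hypothetical placeholders. The returned
    experiences would be handed to a policy-update routine, which is
    left out of this sketch.
    """
    trial = student_generate(prompt)
    demonstration = teacher_generate(prompt)
    return [
        Experience(prompt, trial, reward_fn(trial, step), "trial"),
        Experience(prompt, demonstration, reward_fn(demonstration, step), "demonstration"),
    ]


# Toy stand-ins so the sketch runs end to end.
def toy_student(prompt: str) -> str:
    return "dog dog dog"


def toy_teacher(prompt: str) -> str:
    return "chased the ball"


def toy_reward(text: str, step: int) -> float:
    # Rewards lexical variety; ignores `step` for simplicity.
    return float(len(set(text.split())))


batch = tnd_iteration("The dog", 100, toy_student, toy_teacher, toy_reward)
for exp in batch:
    print(exp.source, exp.reward)
```

Keeping generation and scoring behind plain callables makes it easy to swap in a trials-only or demonstrations-only variant by dropping one of the two experiences.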

Experimental Setup and Evaluation

Corpora and Baselines

The experiments use two datasets, BookCorpus and the BabyLM Corpus, which represent different aspects of language exposure and complexity. The full TnD model is compared against plain causal language modeling (CLM) and against variants that rely exclusively on student trials or on teacher demonstrations.

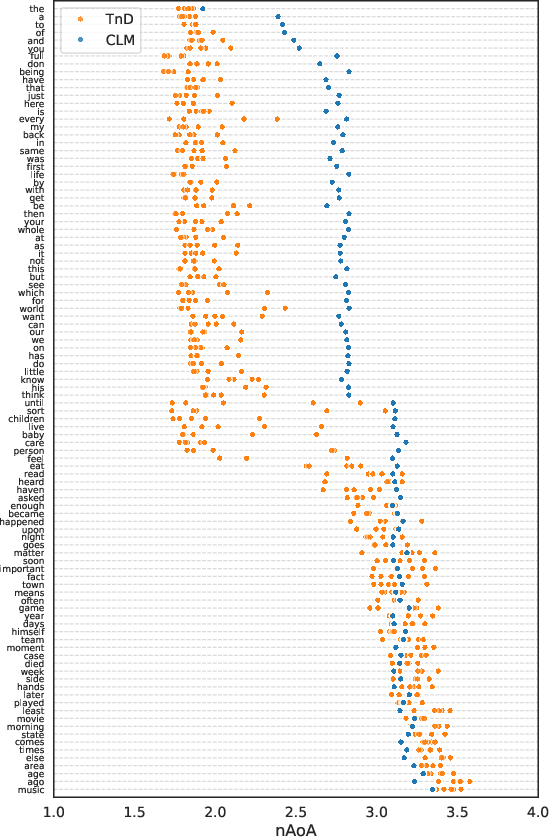

Metrics and Analysis

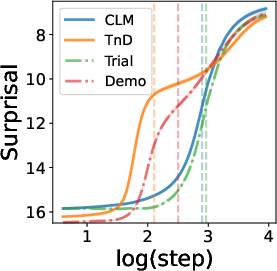

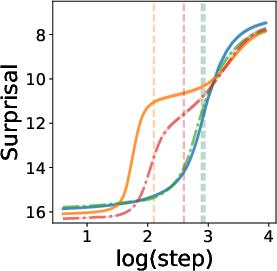

Evaluation focuses on two measures tracked over training steps: the neural age of acquisition (nAoA) of individual words and the growth of the model's effective vocabulary. Under the interactive methods, words are acquired earlier, which points to the contribution of both trials and demonstrations to early-stage learning efficiency.
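To make the nAoA idea concrete, the sketch below estimates the training step at which a word's surprisal curve first crosses a threshold. It assumes a midpoint cutoff between the highest and lowest observed surprisal with linear interpolation between checkpoints; the paper's exact definition (for example, curve fitting or a different cutoff) may differ, and the function name and parameters are hypothetical.

```python
from typing import Sequence


def neural_age_of_acquisition(
    steps: Sequence[float],
    surprisals: Sequence[float],
    cutoff: float = 0.5,
) -> float:
    """Estimate the step at which surprisal first drops to the cutoff level.

    The threshold sits `cutoff` of the way from the maximum down to the
    minimum observed surprisal (0.5 = midpoint, an assumption here).
    """
    if len(steps) != len(surprisals) or len(steps) < 2:
        raise ValueError("need two or more (step, surprisal) pairs of equal length")
    if not 0.0 < cutoff <= 1.0:
        raise ValueError("cutoff must be in (0, 1]")

    highest, lowest = max(surprisals), min(surprisals)
    threshold = highest - cutoff * (highest - lowest)

    if surprisals[0] <= threshold:
        return float(steps[0])

    for i in range(1, len(steps)):
        prev, cur = surprisals[i - 1], surprisals[i]
        if prev > threshold >= cur:
            # Linear interpolation between the two surrounding checkpoints.
            frac = (prev - threshold) / (prev - cur)
            return steps[i - 1] + frac * (steps[i] - steps[i - 1])

    raise ValueError("surprisal never reaches the threshold")
```

For instance, with checkpoints at steps 0, 100, 200, 300 and surprisals 10, 8, 4, 2, the midpoint threshold is 6 and the estimate falls halfway between steps 100 and 200. A word whose surprisal falls faster yields a smaller value, which is the sense in which a lower nAoA indicates earlier acquisition.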

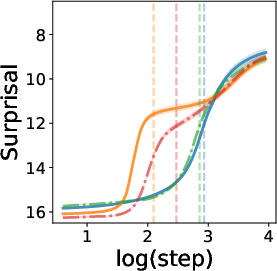

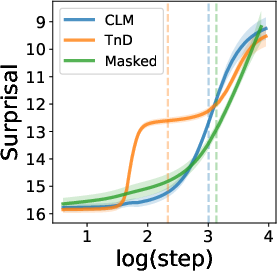

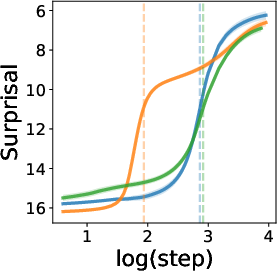

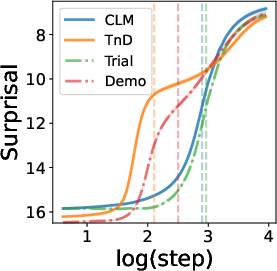

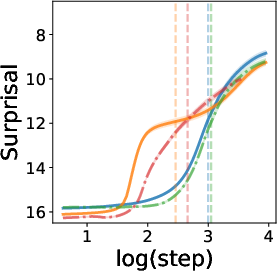

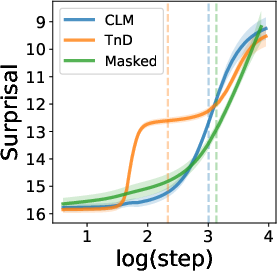

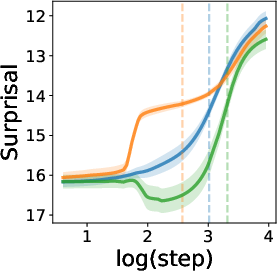

Figure 2: Learning curve of CMN words on BookCorpus.

Results: Efficiency and Feedback Contributions

Accelerated Acquisition

The TnD framework significantly hastens learning compared to non-interactive baselines, with teacher demonstrations and student trials playing crucial roles. Models exhibit faster vocabulary growth and earlier acquisition as evidenced by word surprisal and nAoA metrics.
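Word surprisal, the quantity behind these learning curves, is the negative log-probability a model assigns to a word in context. The helper below is a minimal sketch that takes probabilities as plain numbers rather than querying a model; the use of base-2 logarithms (bits) and the function names are assumptions made for illustration.

```python
import math
from statistics import mean
from typing import Iterable


def surprisal(prob: float) -> float:
    """Surprisal in bits of an event with probability `prob`."""
    if not 0.0 < prob <= 1.0:
        raise ValueError("probability must be in (0, 1]")
    return -math.log2(prob)


def mean_word_surprisal(probs: Iterable[float]) -> float:
    """Average surprisal of one word over the contexts in which it appears."""
    return mean(surprisal(p) for p in probs)
```

Evaluating `mean_word_surprisal` for a word at successive checkpoints gives the per-word learning curve: as the model assigns the word higher probability in its contexts, the curve falls.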

Smaller Models and Knowledge Distillation

Smaller models also benefit from the TnD approach: with corrective feedback, they reach early-stage performance similar to or better than that of larger CLM baselines.

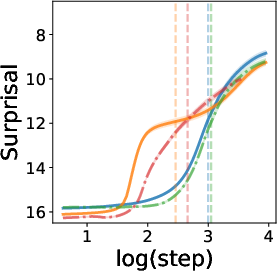

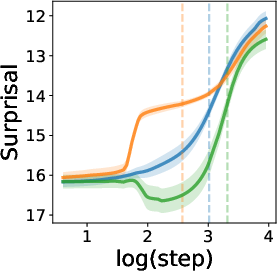

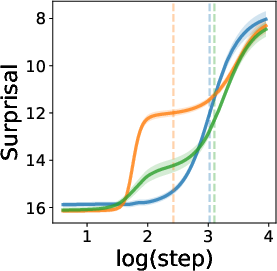

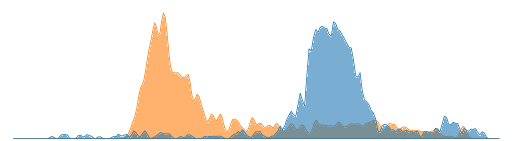

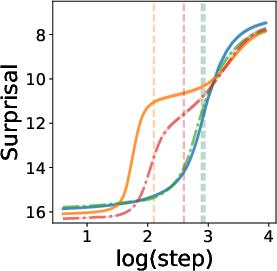

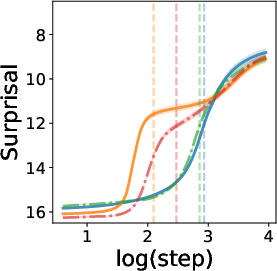

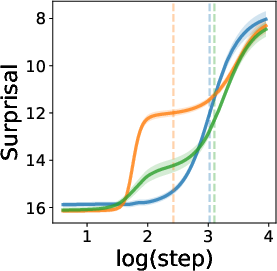

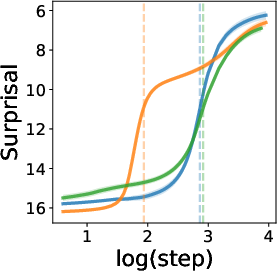

Figure 3: Influence of teacher's word preferences in CMN words on BabyLM.

Discussion: Interaction, Student Trials, and Teacher Influence

Interaction in Learning

The study underscores the potential of interactive language learning by trials and demonstrations as a viable path for improving neural language models. By distilling linguistic knowledge through interaction, student models reach proficiency faster.

Teacher's Influence

The selection of vocabulary by teacher models plays a significant role in student learning trajectories, affecting efficiency and acquisition speed. Student models learn more effectively when supported by targeted teacher demonstrations.

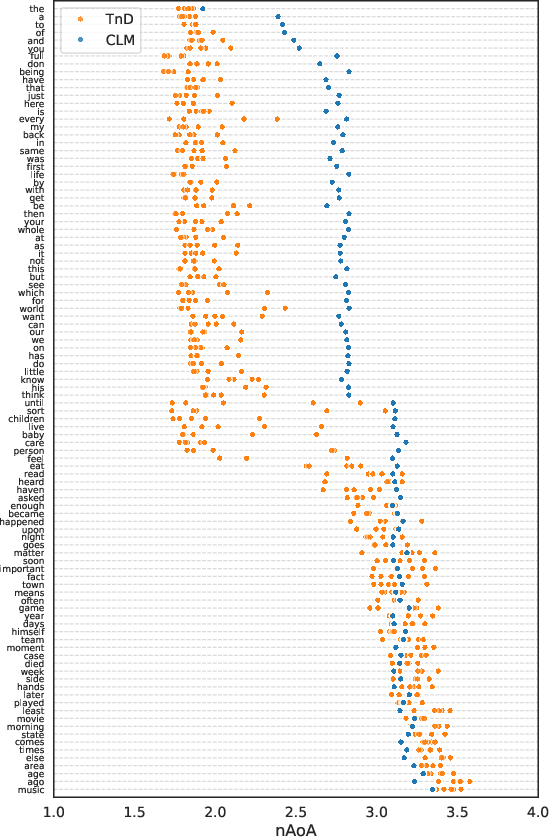

Figure 4: Ridgeline and scatter plots of words and their neural age of acquisition (nAoA) in the BabyLM Corpus.

Conclusion

The paper demonstrates the critical role of interactive feedback in neural language acquisition and shows how the TnD framework can make learning in language models more efficient. The findings suggest an alternative pathway to developing AI systems capable of efficient language understanding, and they open avenues for future research into interactive neural learning mechanisms.

Implications and Future Directions

This research contributes to a broader understanding of how interactive systems can be modeled after human learning paradigms, specifically through corrective feedback mechanisms. Potential application areas include interactive language tutoring systems and more human-like AI communication interfaces, where interactive training could make neural language models more efficient and adaptable. Future studies could explore iterative teacher-student rotations and refinements to the reward design to further improve interactive learning strategies.