- The paper introduces the LOGIN framework that integrates LLM consultations with GNN training to refine semantic features and graph structures.

- It employs uncertainty-based node selection and targeted feedback to correct misclassifications, significantly boosting GNN performance.

- Experimental results across diverse datasets validate LOGIN as a scalable and effective alternative to manually tuned GNN architectures.

LOGIN: A LLM Consulted Graph Neural Network Training Framework

The paper introduces LOGIN, a framework that integrates large language models (LLMs) with graph neural networks (GNNs) through an interactive training process. The approach, which the paper terms "LLMs-as-Consultants", uses LLMs as consultants during training to improve GNN performance across different graph types.

Introduction

Graph-structured data, which represents entities and their interactions, appears in social networks, financial systems, molecular biology, and more. Recent advances in graph machine learning, particularly GNNs, have significantly impacted tasks such as recommendation, anomaly detection, and drug discovery. Despite their effectiveness, traditional GNN architectures can struggle with heterophilic graphs, where connected nodes tend to have different labels, because their message-passing mechanisms are better suited to homophilic graphs, where connected nodes tend to share a label. Designing specific GNN variants for each graph scenario demands extensive manual optimization, which is both time-consuming and challenging.

In contrast, LLMs have demonstrated remarkable capabilities across various domains, including reasoning and knowledge representation. LOGIN leverages these capabilities by integrating LLMs into the GNN training process to improve performance across different graph types.

Methodology and Framework

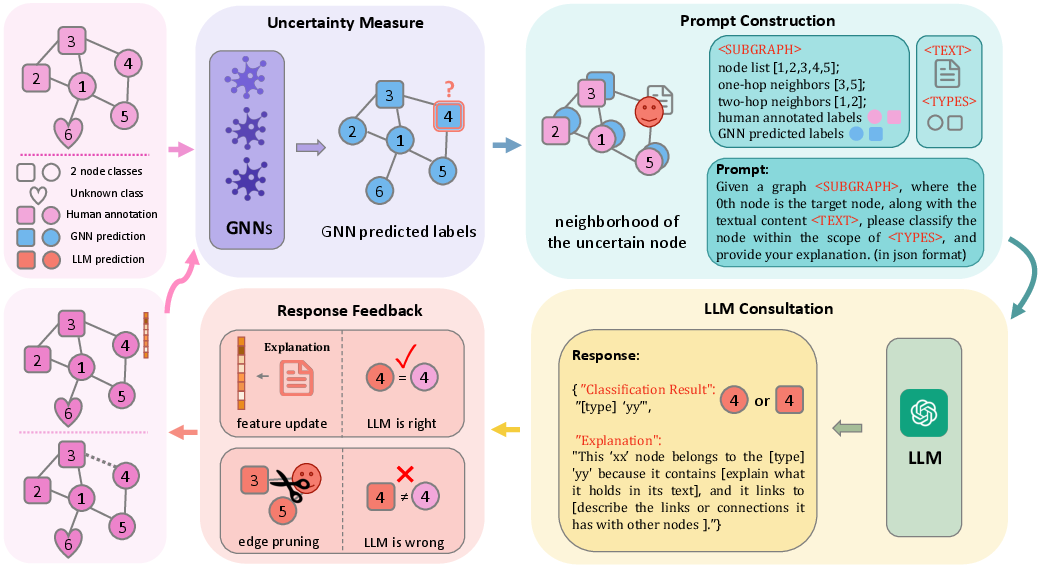

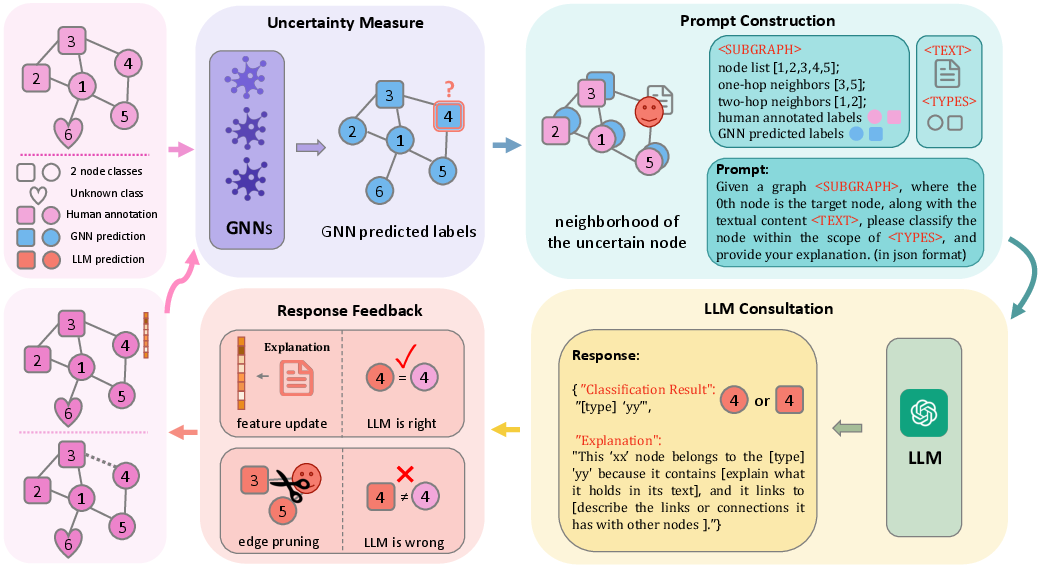

The LOGIN framework involves several key components:

- Node Selection: Uncertain nodes are identified based on the predictive variance in GNN outputs. This selection process helps to focus LLM consultation on nodes where GNNs exhibit uncertainty.

- LLM Consultation: For selected nodes, comprehensive prompts combining semantic and topological information are crafted and sent to the LLMs. These prompts include node texts and neighborhood structures to aid LLMs in prediction tasks.

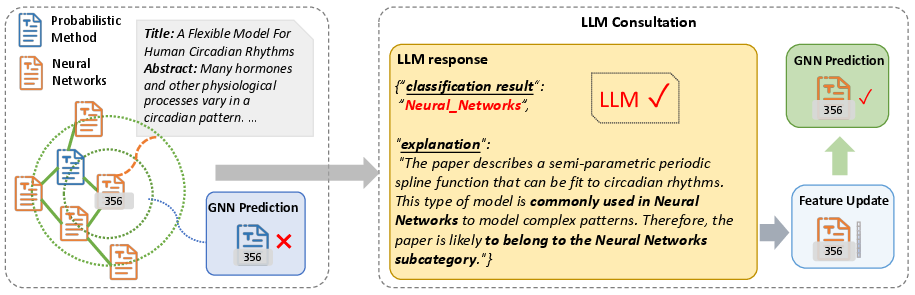

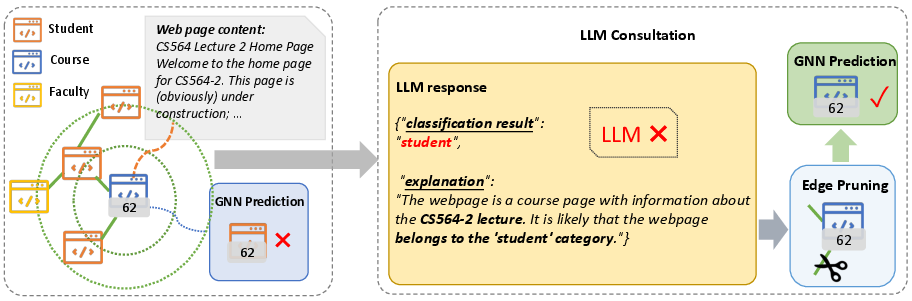

- Response Feedback: The framework uses LLM responses in two ways. When the LLM classifies a node correctly, its response is used to enhance the node's semantic features; when the LLM's prediction is incorrect, the graph structure around the node is refined by pruning potentially misleading edges.

- Retraining: The GNN is retrained using improved node features and refined graph structures, informed by LLM consultations, thereby enhancing its ability to classify nodes accurately.
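
The node selection step above can be illustrated with a minimal sketch. It assumes the GNN's uncertainty is estimated from several stochastic forward passes per node (for example, with dropout left active); the summary only says that predictive variance is used, so the sampling scheme, the function names `predictive_variance` and `select_uncertain_nodes`, and the top-k cutoff are all assumptions made for illustration.

```python
from statistics import pvariance


def predictive_variance(prob_samples):
    """Sum, over classes, of the variance of predicted probabilities.

    prob_samples is a list of T probability vectors for one node, one per
    stochastic forward pass (an assumed setup, not stated in the summary).
    """
    num_classes = len(prob_samples[0])
    return sum(
        pvariance([sample[c] for sample in prob_samples])
        for c in range(num_classes)
    )


def select_uncertain_nodes(samples_by_node, k):
    """Return the k node ids with the highest predictive variance."""
    scores = {n: predictive_variance(s) for n, s in samples_by_node.items()}
    # Sort by descending score; break ties by node id for determinism.
    ranked = sorted(scores, key=lambda n: (-scores[n], n))
    return ranked[:k]


# Toy example: three nodes, three stochastic passes, two classes.
samples = {
    0: [[0.9, 0.1], [0.9, 0.1], [0.9, 0.1]],  # stable prediction
    1: [[0.2, 0.8], [0.7, 0.3], [0.5, 0.5]],  # prediction flips between passes
    2: [[0.6, 0.4], [0.5, 0.5], [0.6, 0.4]],  # mildly unstable
}
uncertain = select_uncertain_nodes(samples, k=1)
print(uncertain)
```

In this toy input, node 1 has the most unstable predictions, so it would be the one sent to the LLM for consultation.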

Figure 1: The pipeline of LOGIN, detailing the interactive GNN training process integrated with LLM consultations.

Evaluation

LOGIN was evaluated on several datasets categorized by homophily:

- Homophilic Graphs: Cora, PubMed, Arxiv-23

- Heterophilic Graphs: Wisconsin, Texas, Cornell
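
For readers unfamiliar with the homophilic/heterophilic split, one common way to quantify it is the edge homophily ratio: the fraction of edges whose endpoints share a label. The summary does not say which measure the paper uses, so the sketch below is an assumption; the function name `edge_homophily` and the toy graph are made up for illustration.

```python
def edge_homophily(edges, labels):
    """Fraction of edges whose two endpoints carry the same label."""
    if not edges:
        raise ValueError("edge list is empty")
    same = sum(1 for u, v in edges if labels[u] == labels[v])
    return same / len(edges)


# Toy 4-node cycle: two edges join same-label nodes, two do not.
edges = [(0, 1), (1, 2), (2, 3), (3, 0)]
labels = {0: "A", 1: "A", 2: "B", 3: "B"}
ratio = edge_homophily(edges, labels)
print(ratio)
```

A ratio near 1 indicates a homophilic graph and a ratio near 0 a heterophilic one.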

Experiments demonstrated that LOGIN significantly improves the performance of classic GNN architectures, making them competitive with advanced state-of-the-art GNN models. Importantly, LOGIN achieved superior accuracy on both homophilic and heterophilic graphs, surpassing GNNs designed specifically for those settings. The results also confirmed the utility of integrating LLMs as consultants, whose emergent abilities can be leveraged to enhance graph reasoning tasks.

Case Studies

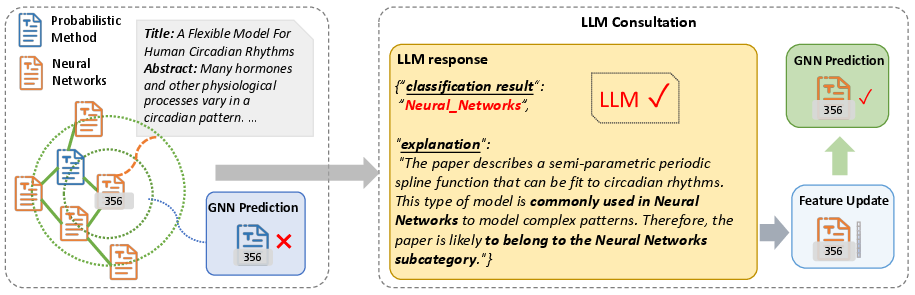

Specific nodes from the Cora and Wisconsin datasets were analyzed to illustrate LOGIN's impact. In Cora, a node initially misclassified by the GNN was classified correctly by the LLM during consultation, and the resulting semantic enhancement allowed the retrained GNN to classify it correctly.

Figure 2: Case study of node 356 in Cora, illustrating the process from misclassification to correct classification through LLM consultation.
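
The consultation behind a case like this starts from a prompt that combines the node's text with its neighborhood. The sketch below shows one way such a prompt could be assembled. The template wording, the function name `build_consultation_prompt`, the `max_neighbors` cap, and the sample texts are all hypothetical; only the ingredients (node text plus neighborhood structure) come from the description of the framework.

```python
def build_consultation_prompt(node_id, texts, neighbors, categories, max_neighbors=3):
    """Assemble a plain-text prompt from a node's text and its neighborhood.

    The wording is a hypothetical template, not the paper's actual prompt.
    """
    all_neighbors = neighbors.get(node_id, [])
    shown = all_neighbors[:max_neighbors]  # cap the prompt length
    lines = [
        "Task: classify the target node into one of: " + ", ".join(categories) + ".",
        f"Target node {node_id} text: {texts[node_id]}",
        f"The target node has {len(all_neighbors)} neighbor(s).",
    ]
    for n in shown:
        lines.append(f"Neighbor {n} text: {texts[n]}")
    lines.append("Answer with the category and a short explanation.")
    return "\n".join(lines)


# Made-up texts for a tiny citation-style graph.
texts = {
    0: "A study of policy gradients for robot control.",
    1: "Q-learning with function approximation.",
    2: "Bayesian inference for topic models.",
}
neighbors = {0: [1, 2]}
categories = ["Reinforcement Learning", "Probabilistic Methods"]
prompt = build_consultation_prompt(0, texts, neighbors, categories)
print(prompt)
```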

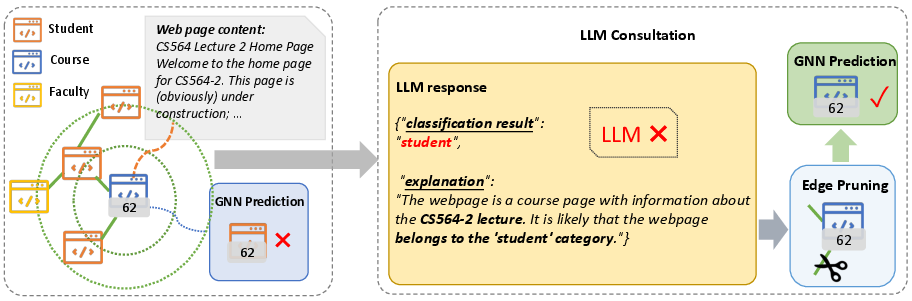

In Wisconsin, structural refinement of a misclassified node helped eliminate noisy connections, facilitating more accurate GNN predictions.

Figure 3: Case study of node 62 in Wisconsin, demonstrating structural refinement post-LLM consultation leading to correct GNN classification.
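
The structural refinement in this case study can be illustrated with a small pruning sketch. The summary states only that potentially misleading edges are pruned; the criterion used here (dropping edges between the consulted node and neighbors whose feature cosine similarity falls below a threshold) is an assumption chosen for illustration and is not claimed to be the paper's rule. The names `cosine` and `prune_edges` and the threshold value are likewise hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def prune_edges(node, edges, features, threshold=0.5):
    """Drop edges touching `node` whose endpoints have dissimilar features.

    Edges that do not involve `node` are always kept. The similarity
    criterion is an assumed stand-in for the pruning rule.
    """
    kept = []
    for u, v in edges:
        if node in (u, v) and cosine(features[u], features[v]) < threshold:
            continue  # treat as a potentially misleading edge
        kept.append((u, v))
    return kept


# Toy graph: node 0 resembles node 1 but not node 2.
features = {0: [1.0, 0.0], 1: [0.9, 0.1], 2: [0.0, 1.0], 3: [0.1, 0.9]}
edges = [(0, 1), (0, 2), (2, 3)]
refined = prune_edges(0, edges, features)
print(refined)
```

Restricting the pruning to edges around the consulted node keeps the change local, so the rest of the graph is left intact for retraining.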

Implications and Future Work

LOGIN's integration of LLMs as interactive components in the GNN training process offers a scalable solution to enhance GNN effectiveness across diverse graph types. This approach not only reduces the need for complex manual design of specialized GNNs but also opens avenues for more efficient and adaptive graph learning systems. Future research may explore deeper integration techniques, iterative consultation processes, and scalability improvements to accommodate larger graph datasets.

Conclusion

LOGIN shows how LLMs can be combined with traditional graph learning methods: LLM-guided adjustments to node features and graph structure improve classic GNNs without redesigning their architectures. The framework represents a shift towards more general methods in graph machine learning, with promising implications for broader applications.