Unsupervised Model Tree Heritage Recovery

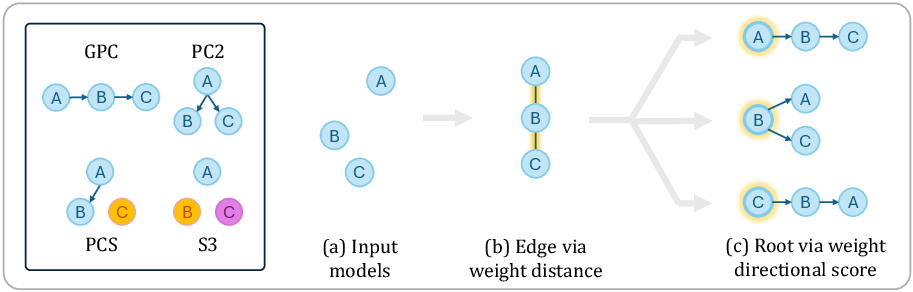

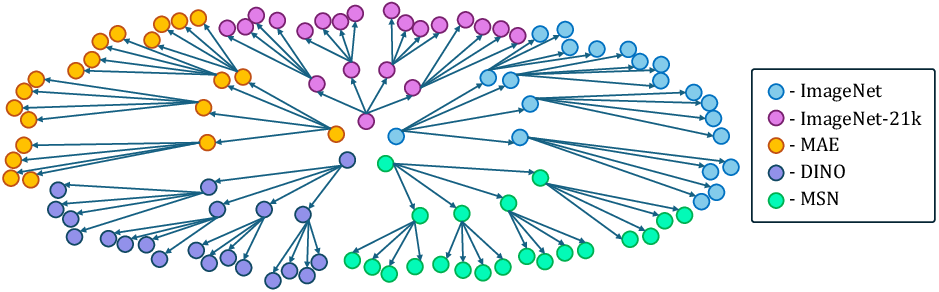

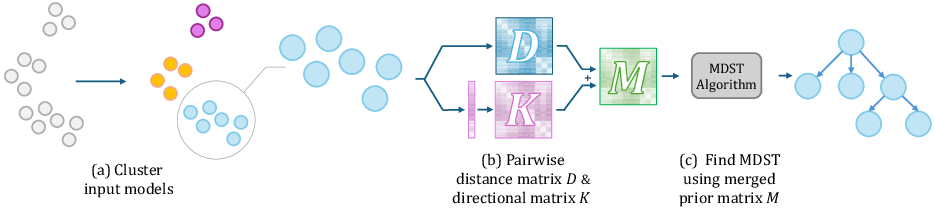

Abstract: The number of models shared online has recently skyrocketed, with over one million public models available on Hugging Face. Sharing models allows other users to build on existing models, using them as initialization for fine-tuning, which improves accuracy and saves compute and energy. However, it also raises important intellectual property issues, as fine-tuning may violate the license terms of the original model or of its training data. A Model Tree, i.e., a tree data structure rooted at a foundation model, with a directed edge from each parent model to every model directly fine-tuned from it (its children), would settle such disputes by making the model heritage explicit. Unfortunately, current models are not well documented, and most model metadata (e.g., "model cards") does not provide accurate information about heritage. In this paper, we introduce the task of Unsupervised Model Tree Heritage Recovery (Unsupervised MoTHer Recovery) for collections of neural networks. For each pair of models, this task requires: i) determining whether they are directly related, and ii) establishing the direction of the relationship. Our hypothesis is that model weights encode this information; the challenge is to decode the underlying tree structure given only the weights. We discover several properties of model weights that allow us to perform this task. Using these properties, we formulate the MoTHer Recovery task as finding a directed minimum spanning tree. In extensive experiments, we demonstrate that our method successfully reconstructs complex Model Trees.
