- The paper demonstrates that integrating XAI with the scientific method enhances transparency by aligning machine insights with empirical evidence.

- It outlines methodologies comparing the machine view with human expertise, highlighting both convergent validations and divergent findings.

- The climate science application shows transformer neural networks achieving 88.3% accuracy in heatwave predictions while uncovering unexpected atmospheric patterns.

Explain the Black Box for the Sake of Science: Revisiting the Scientific Method in the Era of Generative Artificial Intelligence

Introduction

The paper "Explain the Black Box for the Sake of Science: the Scientific Method in the Era of Generative Artificial Intelligence" explores how AI can be integrated with the traditional scientific method. Researchers increasingly rely on AI to identify patterns in datasets that are often beyond the reach of human observation. This reliance creates a need to connect human expertise with AI insights, which the authors propose to do through Explainable AI (XAI) methodologies.

Scientific Method and AI

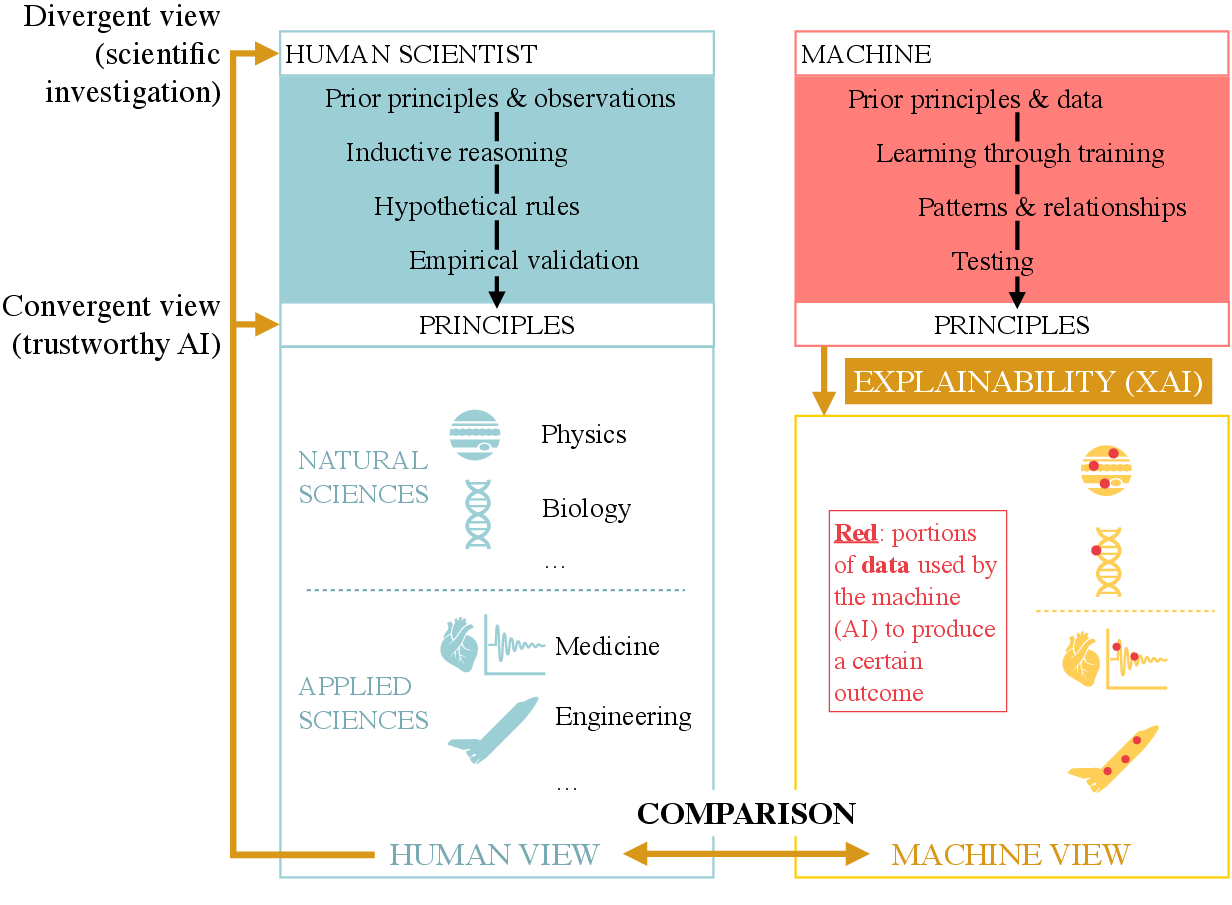

The study emphasizes how deeply the scientific method is ingrained in human exploration, stating that systematic rules derived from empirical evidence are foundational. The authors suggest that AI systems can benefit scientific discovery when their internal decision-making processes, termed the 'machine view', are made transparent and comparable to the 'human view'.

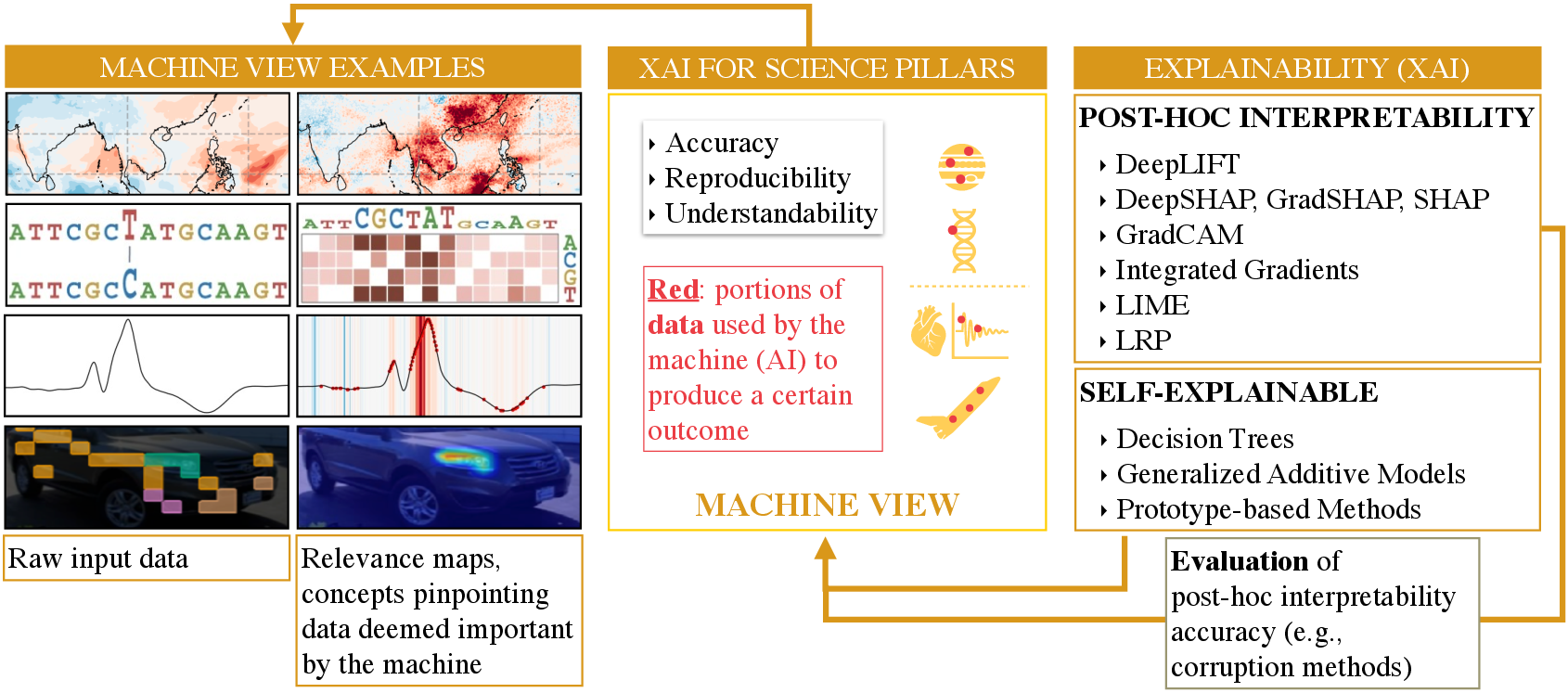

Figure 1: Comparative view of the scientific method workflow used by humans and AI, illustrating the role of explainable AI in providing outcome interpretability.

AI can discern intricate patterns and derive principles from large datasets. When interpreted correctly, its results may align with established scientific knowledge, validating existing theories, or diverge from it, potentially leading to new insights.

Explainability Through AI

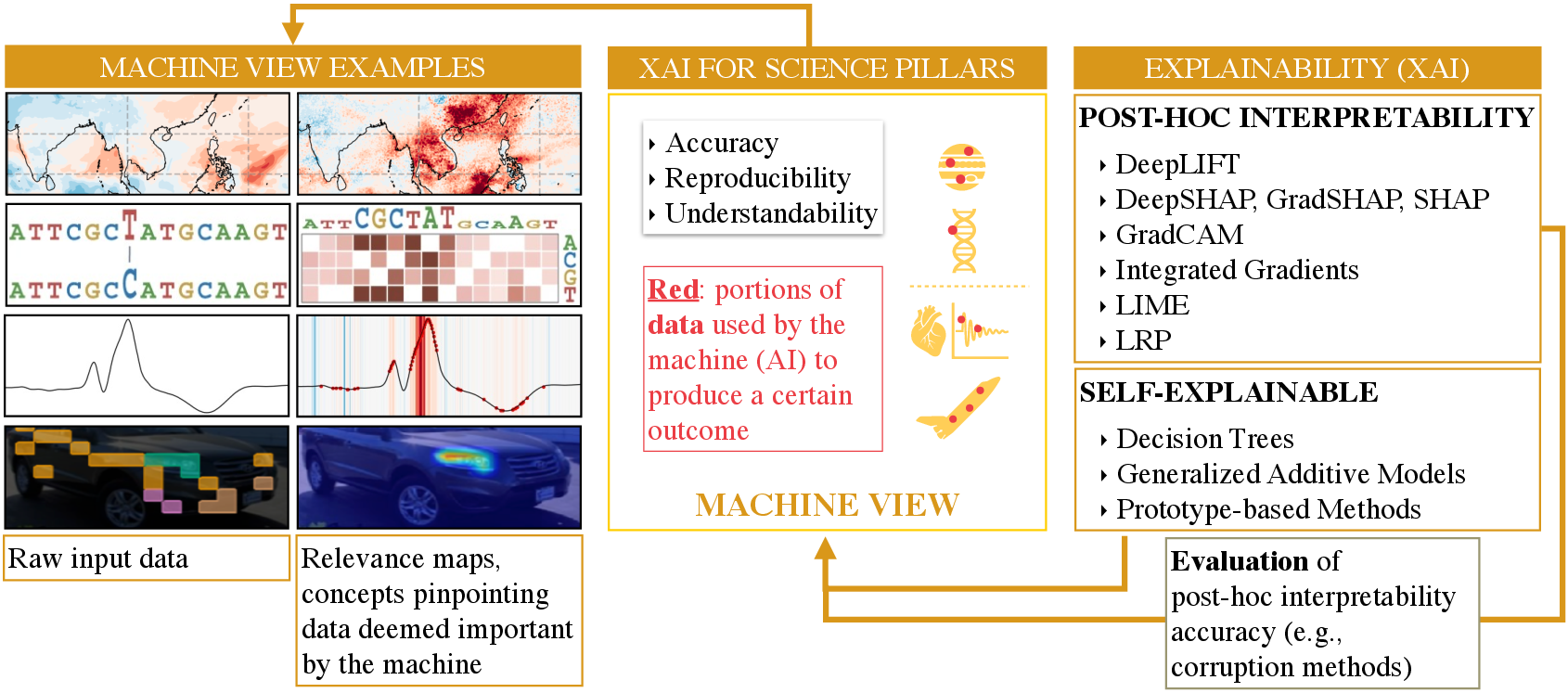

The study posits that a thorough understanding of AI predictions can be achieved by examining which parts of the input data a model relies on, effectively providing a 'machine view'. This requires XAI strategies that reveal how a model's inputs lead to specific outputs, enabling scientists to evaluate AI-driven results against human intuition and existing scientific knowledge.

Figure 2: Explainability workflow for domain experts, highlighting the interaction between machine view and human view.
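
One way to make "how a model's inputs lead to specific outputs" concrete is permutation importance: shuffle one input at a time and measure how much accuracy drops. The sketch below is a minimal illustration under stated assumptions, not the paper's method; the source does not say which attribution technique the authors used. The model `toy_model`, the two unnamed features, and the synthetic data are all hypothetical.

```python
import random


def permutation_importance(predict, X, y, n_repeats=20, seed=0):
    """Return (baseline accuracy, mean accuracy drop per input column)."""
    rng = random.Random(seed)

    def accuracy(rows):
        return sum(predict(r) == t for r, t in zip(rows, y)) / len(y)

    baseline = accuracy(X)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            # Shuffle column j only, breaking its link to the labels.
            column = [row[j] for row in X]
            rng.shuffle(column)
            shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(shuffled))
        importances.append(sum(drops) / n_repeats)
    return baseline, importances


# Hypothetical stand-in for a trained model: it looks only at feature 0.
def toy_model(row):
    return int(row[0] > 0.5)


# Synthetic data (not from the paper): the label depends on feature 0 only.
data_rng = random.Random(1)
X = [[data_rng.random(), data_rng.random()] for _ in range(200)]
y = [int(row[0] > 0.5) for row in X]

baseline, importances = permutation_importance(toy_model, X, y)
print(baseline, importances)
```

Here the resulting "machine view" is a relevance score per input. A feature the model ignores gets a score of zero, while a feature it depends on gets a large one, which gives a domain expert something specific to compare against their own expectations.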

The Role of XAI in Bridging Knowledge Gaps

Applying XAI can yield either convergent or divergent views between the machine's insights and human understanding. Convergent views reinforce the validity and trustworthiness of AI-driven results, which is critical in high-trust fields such as medicine. Divergent views prompt critical analysis and investigation, opening the way to scientific advances where traditional methods fall short.
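
The comparison between the two views can be sketched as a simple per-feature check. This is an illustrative sketch only: it assumes both views can be expressed as relevance scores in [0, 1], and the function `compare_views`, the `tolerance` threshold, and all numbers are invented for the example rather than taken from the paper.

```python
def compare_views(machine, human, tolerance=0.2):
    """Label each shared feature as 'convergent' or 'divergent'.

    machine, human: dicts mapping feature name -> relevance score in [0, 1].
    A feature is convergent when the two scores differ by at most `tolerance`.
    """
    shared = sorted(machine.keys() & human.keys())
    return {
        name: "convergent"
        if abs(machine[name] - human[name]) <= tolerance
        else "divergent"
        for name in shared
    }


# Made-up scores for illustration (not results from the paper).
machine_view = {"temperature": 0.9, "water_vapor": 0.8}
human_view = {"temperature": 0.85, "water_vapor": 0.2}

labels = compare_views(machine_view, human_view)
print(labels)
```

In practice the threshold and the scoring scale would need to be chosen by domain experts. The point of the sketch is only that convergent features support trust in the model, while divergent ones flag where further investigation is warranted.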

Practical Applications and Examples

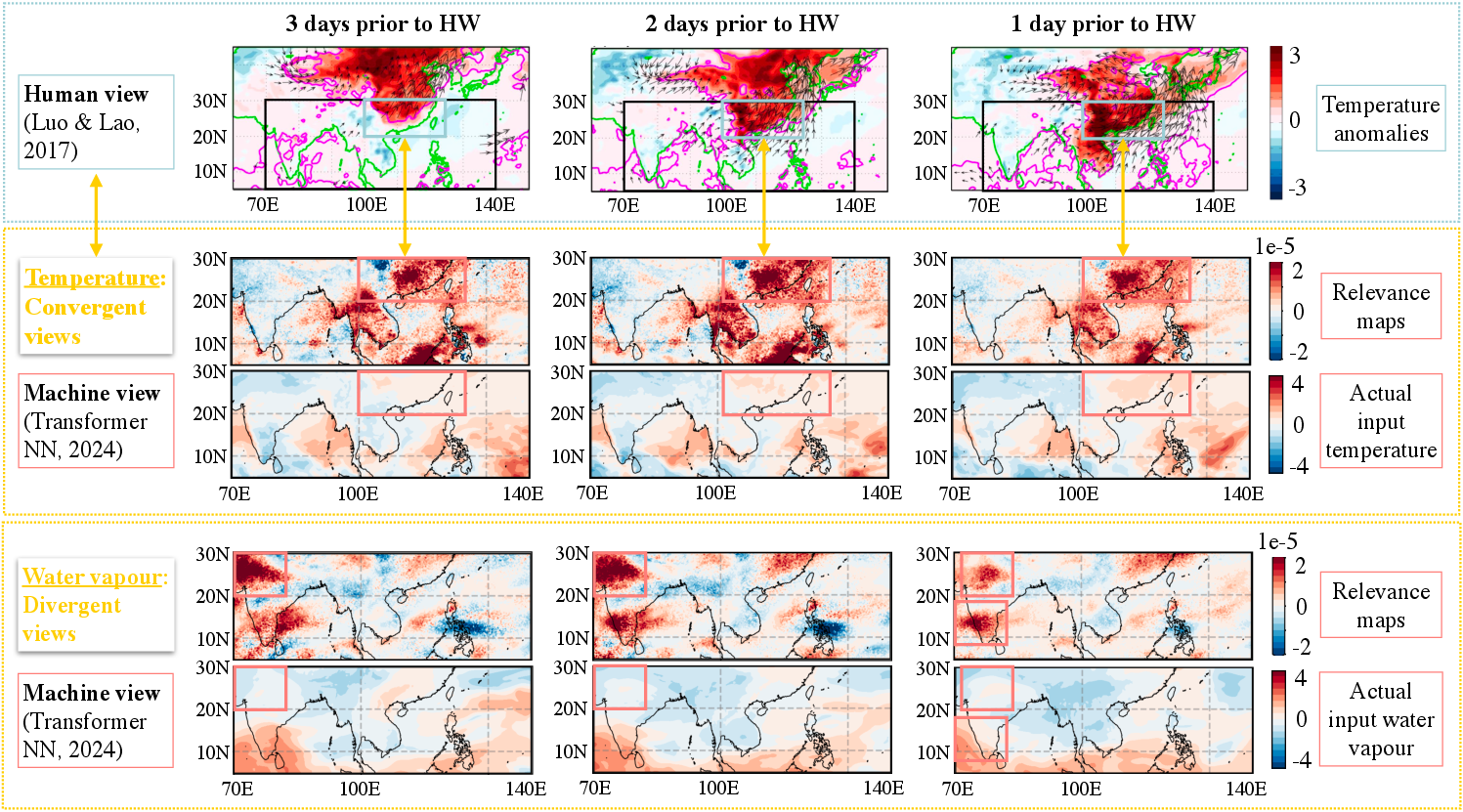

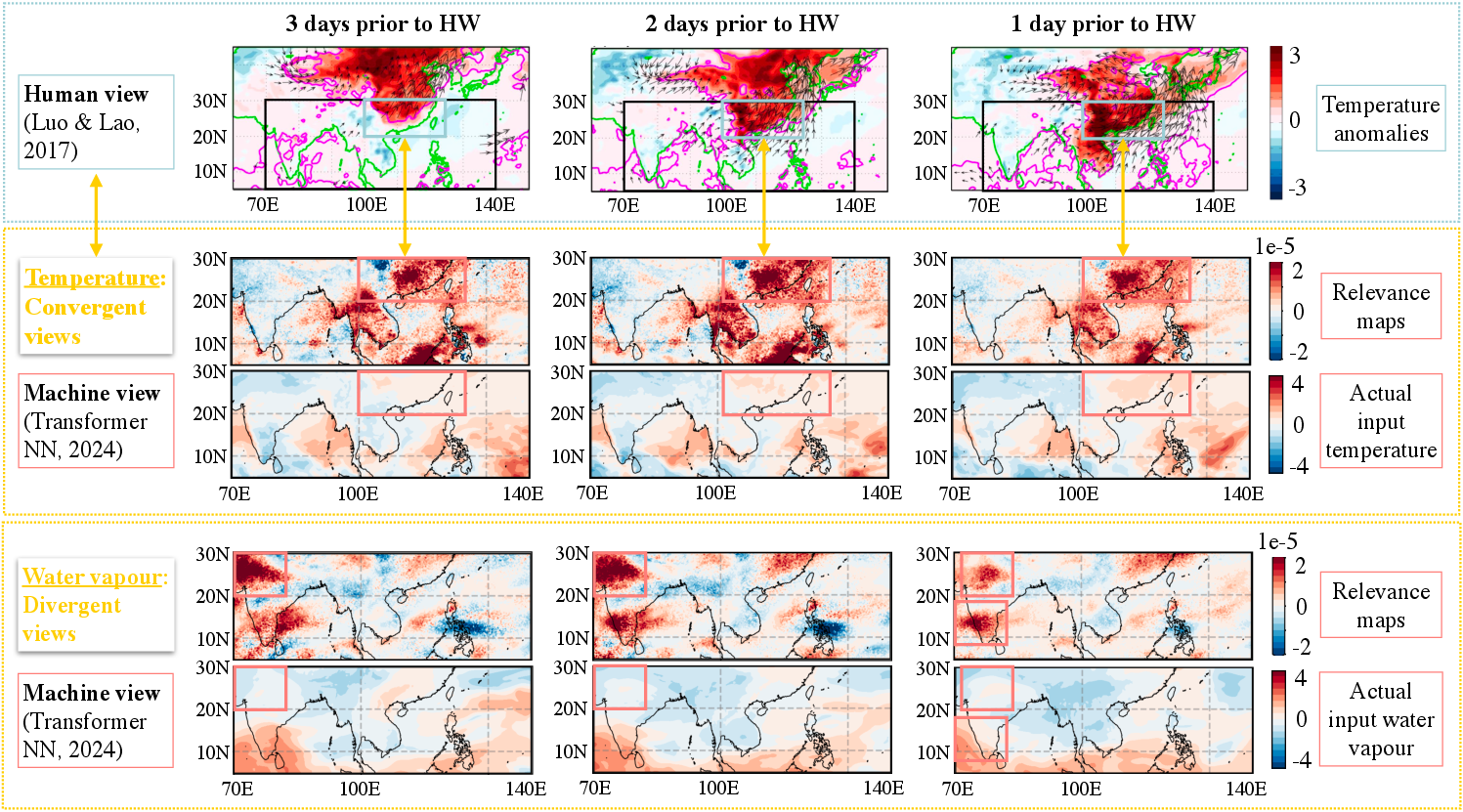

The paper illustrates the approach with a climate science example: predicting heatwaves using transformer neural networks. The model achieved an accuracy of 88.3%, and post-hoc interpretability methods revealed that the machine-derived insights on water vapor differed from human understanding, prompting further investigation.

Figure 3: Machine view and domain-expert view on heatwave prediction showcasing congruence in temperature understanding and divergence in water vapor analysis.

This example underscores XAI’s potential to uncover hidden principles, thus facilitating the refinement or expansion of current scientific knowledge.

Conclusion

Integrating XAI into the scientific process has the potential to significantly enhance the discovery of new knowledge by lending clarity and trustworthiness to AI outcomes. By comparing AI-derived insights with human expertise, XAI serves as a catalyst for both validating and challenging existing scientific paradigms. This approach helps address complex scientific questions and mitigates the risks tied to opaque AI models. Future research and applications in this area stand to benefit from a stronger synergy between human and machine understanding, paving the way for new scientific methodologies and insights.