PitVis-2023 Challenge: Workflow Recognition in videos of Endoscopic Pituitary Surgery

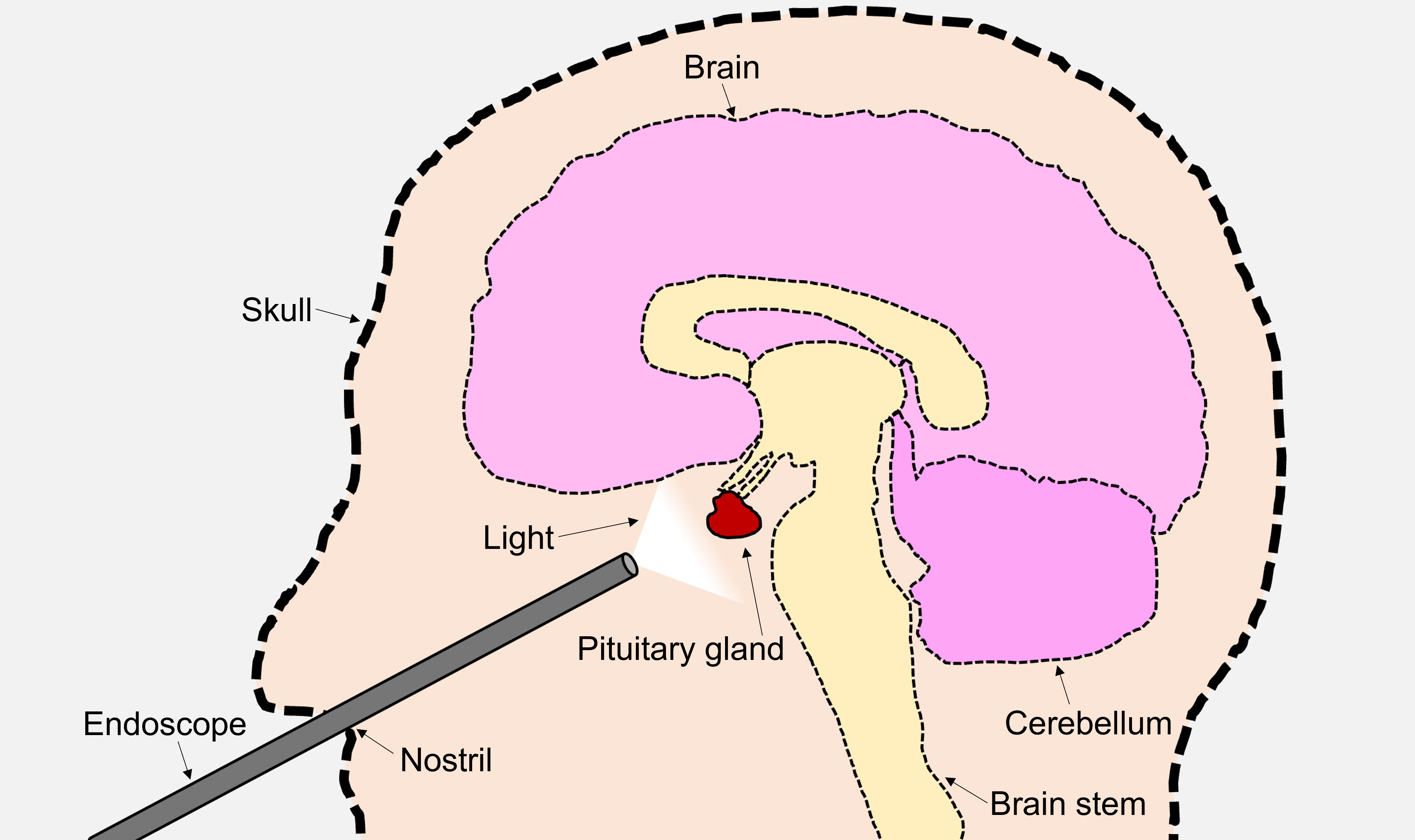

Abstract: The field of computer vision applied to videos of minimally invasive surgery is ever-growing. Workflow recognition refers to the automated recognition of various aspects of a surgery, including which surgical steps are performed and which surgical instruments are used. This information can later be used to assist clinicians when learning the surgery, during live surgery, and when writing operation notes. The Pituitary Vision (PitVis) 2023 Challenge tasks the community with step and instrument recognition in videos of endoscopic pituitary surgery. This task differs from other minimally invasive surgeries because of the smaller working space, which limits and distorts vision, and the higher frequency of instrument and step switching, which requires more precise model predictions. Participants were provided with 25 videos, with results presented at the MICCAI-2023 conference as part of the Endoscopic Vision 2023 Challenge in Vancouver, Canada, on 8 October 2023. There were 18 submissions from 9 teams across 6 countries, using a variety of deep learning models. A commonality among the top-performing models was the incorporation of spatio-temporal and multi-task methods, with macro-F1-score improvements of more than 50% and 10% over purely spatial single-task models in step and instrument recognition, respectively. The PitVis-2023 Challenge therefore demonstrates that state-of-the-art computer vision models in minimally invasive surgery are transferable to a new dataset, with surgery-specific techniques used to enhance performance, progressing the field further. Benchmark results are provided in the paper, and the dataset is publicly available at: https://doi.org/10.5522/04/26531686.


Explain it Like I'm 14

What this paper is about (in simple terms)

Doctors sometimes remove pituitary tumors using a tiny camera and tools through the nose. While they do this, the camera records a video. This paper is about teaching computers to watch those surgery videos and automatically understand two things:

- Which step of the operation is happening right now (like following steps in a recipe)

- Which surgical tools are being used (like recognizing a hammer vs. a screwdriver)

The authors ran an international challenge called PitVis-2023 to see which computer programs (AI models) could do this best. They also built and shared the first public dataset for this specific surgery to help future research.

The main questions the paper asks

- Can AI reliably recognize the step of the surgery from each video frame?

- Can AI recognize which instrument(s) are present, even when they change quickly?

- Are models that look at both space (what’s in each image) and time (how things change across frames) better than models that look at single images only?

- Does training one model to do both tasks together (steps and instruments) help it do better?

- What does a good “score” for this problem look like, and how do we measure it fairly?

How the study was done (the challenge setup)

The dataset

- The team collected real surgery videos from a major hospital in London.

- They shared 25 training videos with the challenge participants and kept 8 test videos hidden to fairly judge the results.

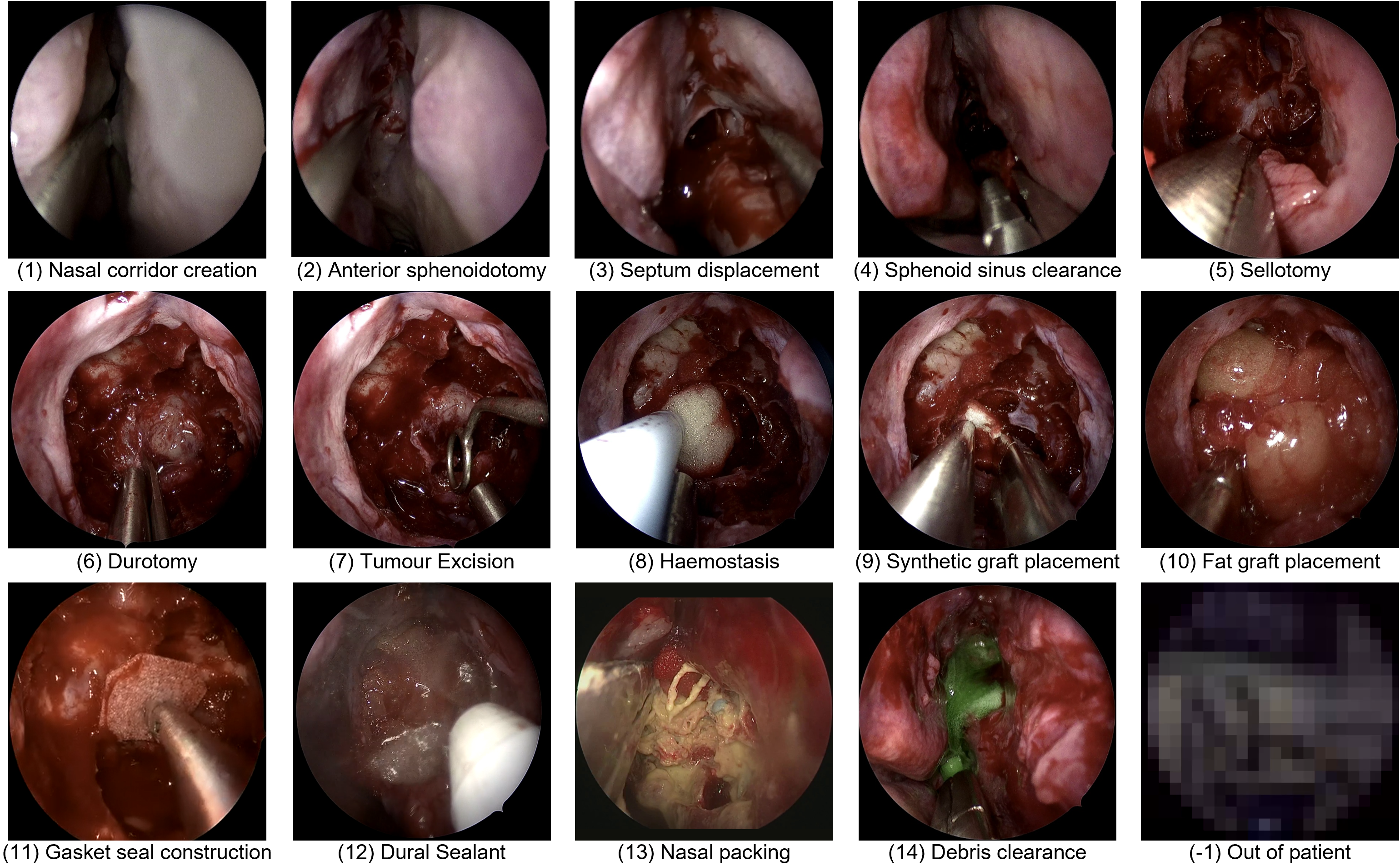

- Experts labeled every second of each video:

- The current surgical step (about a dozen steps after removing rare ones with too little data)

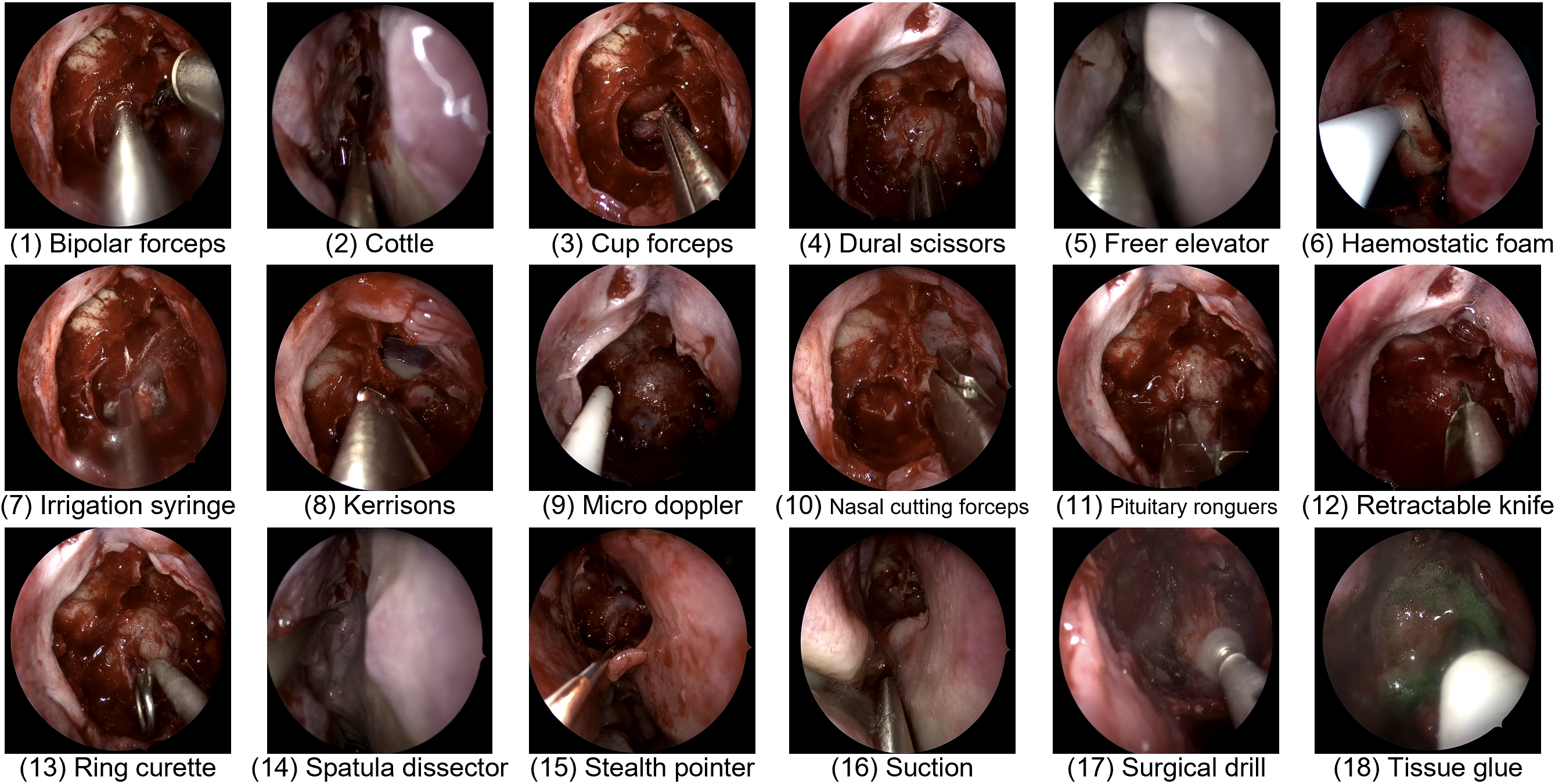

- Up to two instruments present at that moment (about 19 instruments including “no instrument”)

Think of it like giving students a set of labeled practice videos, then testing them on new videos they haven’t seen.
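The per-second labeling scheme can be pictured as one small record per second of video. The sketch below is a hypothetical representation, not the released dataset's file format: it assumes integer class ids, a 24 fps source video (the frame rate listed later in this document's assumptions), and a made-up reserved id `NO_INSTRUMENT = 0` that must appear on its own.

```python
from __future__ import annotations

from dataclasses import dataclass

FPS = 24  # assumed source frame rate; check the dataset metadata before relying on it
NO_INSTRUMENT = 0  # hypothetical class id reserved for "no instrument"


def frame_to_second(frame_index: int, fps: int = FPS) -> int:
    """Map a video frame index to the one-second annotation bin it falls in."""
    if frame_index < 0:
        raise ValueError("frame_index must be non-negative")
    return frame_index // fps


@dataclass(frozen=True)
class SecondLabel:
    """One annotated second: exactly one step and one or two instrument ids."""

    video_id: str
    second: int
    step: int
    instruments: tuple[int, ...]

    def __post_init__(self) -> None:
        if self.second < 0:
            raise ValueError("second must be non-negative")
        if not 1 <= len(self.instruments) <= 2:
            raise ValueError("each second carries one or two instrument labels")
        if len(set(self.instruments)) != len(self.instruments):
            raise ValueError("duplicate instrument id")
        if NO_INSTRUMENT in self.instruments and len(self.instruments) > 1:
            raise ValueError('"no instrument" cannot co-occur with a real instrument')


label = SecondLabel("video_01", second=frame_to_second(50), step=3, instruments=(4, 7))
print(label.second)  # 50 // 24 = 2
```

Validating the "exactly one step, at most two instruments" rule at load time catches annotation-export mistakes before they reach training or scoring.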

The tasks

Participants built AI models for:

- Task 1: Step recognition (exactly one step is happening at a time)

- Task 2: Instrument recognition (zero, one, or two tools can be present at a time)

- Task 3: Multi-task (predict both step and instruments at the same time)
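The difference between Task 1 (exactly one step) and Task 2 (zero, one, or two tools) shows up in how raw model outputs are turned into labels. A common pattern, sketched here under stated assumptions rather than taken from any team's code, is an argmax over step scores and independent per-instrument sigmoids with a threshold. The 0.5 threshold, the cap of two instruments, and the fallback to a hypothetical "no instrument" class id 0 are all assumptions.

```python
import math
from typing import List, Sequence


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def decode_step(step_logits: Sequence[float]) -> int:
    """Single-label decoding: exactly one step, the highest-scoring one."""
    return max(range(len(step_logits)), key=lambda i: step_logits[i])


def decode_instruments(
    instrument_logits: Sequence[float],
    threshold: float = 0.5,  # assumed operating point
    max_instruments: int = 2,  # task rule: at most two tools at once
    no_instrument_id: int = 0,  # hypothetical id for "no instrument"
) -> List[int]:
    """Multi-label decoding: keep up to `max_instruments` tools above `threshold`."""
    probs = [sigmoid(x) for x in instrument_logits]
    candidates = [
        i for i, p in enumerate(probs) if i != no_instrument_id and p >= threshold
    ]
    # Rank the surviving tools by confidence, then cap the count.
    candidates.sort(key=lambda i: probs[i], reverse=True)
    chosen = sorted(candidates[:max_instruments])
    return chosen if chosen else [no_instrument_id]


print(decode_step([0.1, 2.0, -1.0]))  # -> 1
print(decode_instruments([0.0, 3.0, 2.0, 1.0]))  # -> [1, 2]
print(decode_instruments([5.0, -2.0, -3.0]))  # -> [0]
```

In this sketch the "no instrument" output is only used as a fallback; a model that scores it directly could instead compare it against the real tools.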

All models had to be “online,” meaning they could only use current and past frames—not “peek into the future.” That’s realistic for helping during a live surgery.
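Because of the online constraint, any post-processing may only look backwards. One simple way to reduce flicker (rapid, unrealistic label changes) under that constraint, offered as an illustration rather than a method from the challenge, is a causal majority vote over the last few per-second predictions; the window of 3 seconds is an arbitrary assumption.

```python
from collections import Counter, deque
from typing import Deque, Hashable


class CausalMajoritySmoother:
    """Online smoother: each output depends only on the current and past predictions."""

    def __init__(self, window: int = 3) -> None:  # window size is an assumption
        if window < 1:
            raise ValueError("window must be at least 1")
        self.history: Deque[Hashable] = deque(maxlen=window)

    def update(self, prediction: Hashable) -> Hashable:
        self.history.append(prediction)
        counts = Counter(self.history)
        best = max(counts.values())
        # Break ties in favour of the most recent label among the tied ones.
        for label in reversed(self.history):
            if counts[label] == best:
                return label
        raise AssertionError("unreachable: history is never empty here")


smoother = CausalMajoritySmoother(window=3)
raw = [0, 0, 1, 0, 0, 2, 2, 2]
smoothed = [smoother.update(p) for p in raw]
print(smoothed)  # [0, 0, 0, 0, 0, 0, 2, 2]
```

The isolated `1` in the third second is suppressed, but the genuine switch to step `2` is reported one second late. That lag is the price of smoothing without access to future frames.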

How the AI models worked (in everyday language)

Most models followed a “watch a movie” approach:

- Spatial understanding: Look at each frame (like a photo) to find useful visual features (e.g., tip of a tool, blood vessels, tissue)

- Temporal understanding: Combine information across many frames (like watching a short clip) to notice actions over time (e.g., cutting vs. cleaning)

- Some models did both tasks together, sharing knowledge between recognizing steps and recognizing tools, which can help both.

You’ll see terms like:

- CNNs and Transformers: Types of neural networks that are good at understanding images and videos.

- “Spatial” models process single images.

- “Temporal” models connect frames in time, like remembering what happened a moment ago.

- Multi-task learning: One model learns steps and instruments together, so it can use clues from one to improve the other (e.g., certain tools are mostly used in certain steps).
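Multi-task learning is often implemented by summing one loss per task on top of a shared backbone. The plain-Python sketch below assumes softmax cross-entropy for the single-label step head, binary cross-entropy for each instrument output, and a hypothetical weighting factor `instrument_weight`; individual challenge teams may have combined their losses differently.

```python
import math
from typing import Sequence


def cross_entropy(logits: Sequence[float], target: int) -> float:
    """Softmax cross-entropy for one single-label example, via a stable log-sum-exp."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


def binary_cross_entropy(logits: Sequence[float], targets: Sequence[int]) -> float:
    """Mean sigmoid binary cross-entropy over independent instrument outputs."""
    total = 0.0
    for x, y in zip(logits, targets):
        # Stable form of -[y*log(sigmoid(x)) + (1-y)*log(1-sigmoid(x))].
        total += max(x, 0.0) - x * y + math.log1p(math.exp(-abs(x)))
    return total / len(logits)


def multitask_loss(
    step_logits: Sequence[float],
    step_target: int,
    instrument_logits: Sequence[float],
    instrument_targets: Sequence[int],
    instrument_weight: float = 1.0,  # hypothetical balance between the two tasks
) -> float:
    """Shared-backbone objective: step loss plus weighted instrument loss."""
    step_loss = cross_entropy(step_logits, step_target)
    instrument_loss = binary_cross_entropy(instrument_logits, instrument_targets)
    return step_loss + instrument_weight * instrument_loss


# Uninformative (all-zero) outputs give log(2) per task, so 2*log(2) in total.
print(multitask_loss([0.0, 0.0], 0, [0.0, 0.0], [1, 0]))
```

Because both heads share a backbone, gradients from the instrument loss also shape the features used for step prediction, which is one way the step-instrument correlation can be exploited.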

How performance was measured

The challenge used two ideas to judge fairness and real usefulness:

- Macro-F (an averaged F1 score): Think of it as a balanced grade that checks how well the model finds each class (step or tool), giving equal weight even to rare ones. This prevents a model from scoring well by always guessing the most common class.

- Edit score (for steps): Checks how smooth and sensible the sequence of predicted steps is over time. It punishes “flickering” (rapid, unrealistic step changes), because real surgeries don’t jump wildly between steps every second.
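Both step metrics can be written in a few lines. The sketch below follows the standard definitions: per-class F1 averaged with equal weight, and the segmental edit score, which collapses runs of identical labels into segments and compares the segment sequences with Levenshtein distance. It is an illustration, not the challenge's official evaluation code, and details such as which classes enter the average may differ there.

```python
from itertools import groupby
from typing import Hashable, List, Sequence


def macro_f1(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Unweighted mean of per-class F1 over classes seen in either sequence."""
    classes = set(y_true) | set(y_pred)
    if not classes:
        raise ValueError("empty input")
    scores = []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def segments(labels: Sequence[Hashable]) -> List[Hashable]:
    """Collapse consecutive duplicates: [a, a, b, b, a] -> [a, b, a]."""
    return [label for label, _ in groupby(labels)]


def levenshtein(a: Sequence[Hashable], b: Sequence[Hashable]) -> int:
    """Minimum insertions, deletions, and substitutions to turn a into b."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + (x != y)))
        prev = curr
    return prev[-1]


def edit_score(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Segmental edit score in [0, 100]; 100 means identical step orderings."""
    true_segs, pred_segs = segments(y_true), segments(y_pred)
    if not true_segs and not pred_segs:
        return 100.0
    dist = levenshtein(true_segs, pred_segs)
    return (1.0 - dist / max(len(true_segs), len(pred_segs))) * 100.0


truth = [0, 0, 0, 0, 1, 1, 1, 1]
flicker = [0, 1, 0, 0, 1, 0, 1, 1]  # 6 of 8 seconds correct, but jittery
print(macro_f1(truth, flicker))  # 0.75
print(edit_score(truth, flicker))  # about 33.3
print(macro_f1([0, 0, 0, 1, 1, 2], [0] * 6))  # always the common step: about 0.22
```

The flickering prediction keeps a respectable frame-wise Macro-F but a poor edit score, which is exactly the behaviour the edit score is meant to expose.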

For instruments, they used Macro-F only, because instruments can switch very quickly and multiple tools can overlap, which makes simple smoothness checks less fair.
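Because several instruments can be present in the same second, instrument Macro-F can be computed by treating each instrument as its own present/absent question across all seconds and then averaging the per-instrument F1 scores. The sketch below assumes one set of instrument names per second; the names are placeholders, and the official scoring script may handle edge cases (for example, instruments absent from a test video) differently.

```python
from typing import AbstractSet, Sequence


def multilabel_macro_f1(
    y_true: Sequence[AbstractSet[str]], y_pred: Sequence[AbstractSet[str]]
) -> float:
    """Per-instrument F1 over all seconds, averaged with equal weight per instrument."""
    if len(y_true) != len(y_pred):
        raise ValueError("sequences must cover the same seconds")
    instruments = set().union(*y_true, *y_pred)
    if not instruments:
        raise ValueError("no labels to score")
    scores = []
    for inst in sorted(instruments):
        tp = sum(inst in t and inst in p for t, p in zip(y_true, y_pred))
        fp = sum(inst not in t and inst in p for t, p in zip(y_true, y_pred))
        fn = sum(inst in t and inst not in p for t, p in zip(y_true, y_pred))
        # Every instrument here occurs at least once, so the denominator is positive.
        scores.append(2 * tp / (2 * tp + fp + fn))
    return sum(scores) / len(scores)


# Placeholder instrument names; one set of labels per second.
truth_sets = [{"suction"}, {"suction", "other_tool"}, {"no_instrument"}]
pred_sets = [{"suction"}, {"suction"}, {"no_instrument"}]
print(multilabel_macro_f1(truth_sets, pred_sets))  # about 0.667: "other_tool" scores 0
```

Missing a single short appearance of a rarely used tool costs that tool its whole F1, which illustrates why fast-switching, overlapping instruments make this task sensitive to rare classes.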

Why this problem is hard

- Small, distorted view: The camera is very close and the working space is tiny. The edges of the image can be warped, and tool shafts can block the tip.

- Frequent switching: Steps and tools can change often and not always in the same order.

- Occlusions: Blood or fog can block the camera, and the endoscope is periodically withdrawn for cleaning, so the video is not always consistent.

- Look-alikes: Some tools and steps look very similar, so motion and context matter a lot (what happened just before often tells you what’s happening now).

- Imbalance: Some tools (like suction) and “no instrument” appear a lot; others are rare. That makes training and fair scoring tricky.
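One common response to this kind of imbalance, not necessarily what the participating teams used, is to weight the training loss by inverse class frequency so that rare steps or instruments contribute more per example. The sketch uses the same formula as scikit-learn's "balanced" class-weight heuristic, n_samples / (n_classes * count), computed over the classes actually present; giving unseen classes a weight of 0 is an assumption.

```python
from collections import Counter
from typing import List, Sequence


def inverse_frequency_weights(labels: Sequence[int], num_classes: int) -> List[float]:
    """weight_c = N / (K * count_c) over the K classes present; unseen classes get 0."""
    counts = Counter(labels)
    total = len(labels)
    present = len(counts)
    weights = []
    for c in range(num_classes):
        n = counts.get(c, 0)
        weights.append(total / (present * n) if n else 0.0)  # 0.0 for unseen: assumption
    return weights


# Three seconds of the common class 0, one of rare class 1, none of class 2.
print(inverse_frequency_weights([0, 0, 0, 1], num_classes=3))
```

Such weights would typically be passed to a weighted cross-entropy; very large weights on near-empty classes can destabilize training, so clipping them may be worth considering.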

What the challenge found

- There were 18 submissions from 9 teams across 6 countries.

- The best models used both space and time (spatio-temporal methods) and often did both tasks together (multi-task learning).

- These models clearly outperformed purely spatial (image-only), single-task models:

  - Step recognition: more than 50% improvement (Macro-F)

  - Instrument recognition: more than 10% improvement (Macro-F)

- This shows that state-of-the-art video AI from other surgeries can work well here too, especially when adapted to the special challenges of pituitary surgery.

- The dataset is now public, so other researchers can build on this work.

Why this matters

If computers can reliably recognize steps and tools in real time, they can help:

- Teach: Create better training videos and automatic feedback for junior surgeons.

- Document: Auto-fill operation notes after surgery (saving time).

- Assist live: Alert the team when a new step starts or the next instrument is needed, making the operating room more efficient and safer.

Beyond that, releasing a high-quality public dataset sets a baseline for future research and encourages fair comparisons and steady progress.

What’s next

- Improve handling of quick tool changes with better time-based scoring for instruments.

- Gather more data for rare steps and instruments.

- Make models more robust across different hospitals and recording setups.

- Move toward real-time assistance in the operating room, with careful testing to ensure safety and usefulness.

In short, PitVis-2023 shows that modern AI can understand complex surgery videos when it learns both from what’s in each frame and how things change over time—and that sharing data and benchmarks helps the whole field move forward.

Practical Applications

Summary

The paper introduces the PitVis-2023 Challenge and the first publicly available, per-second annotated dataset for workflow recognition in endoscopic pituitary surgery (the endoscopic transsphenoidal approach, eTSA). It benchmarks state-of-the-art spatio-temporal and multi-task computer vision models for step and instrument recognition, reports substantial performance improvements over purely spatial single-task baselines, and outlines clinically meaningful use cases (training, documentation, intraoperative assistance). These assets and findings enable a range of practical applications in healthcare, education, software/AI, and policy.

Below are prioritized, actionable applications derived from the paper’s findings, methods, and innovations. Each item indicates sectors, potential tools/products/workflows, and key assumptions/dependencies.

Immediate Applications

These can be piloted or integrated now with available data, models, and infrastructure.

- Healthcare — Video auto-tagging and indexing in surgical video platforms

- Use case: Automatically segment eTSA videos by recognized steps and instruments to create chaptered content in hospital video management systems (e.g., Touch Surgery Ecosystem).

- Tools/products/workflows: “Workflow Recognition API” combining image or video encoders (e.g., Swin, MViT, ResNet50) with temporal decoders (LSTM/TCN/Transformer) and optional temporal smoothing; per-second metadata export; Dockerized inference; post hoc batch processing at 1 fps.

- Assumptions/dependencies: Access to de-identified videos (720p, 24 fps); domain similarity to PitVis dataset; GPU or server resources; adherence to IRB/consent; site-specific ontologies mapped to PitVis step/instrument definitions.

- Healthcare — Semi-automated operative note drafting

- Use case: Generate structured summaries of performed steps and used instruments to speed up operative note creation.

- Tools/products/workflows: “Operative Note Auto-Drafter” using step presence detection (CNN/Transformer + TSF) and instrument recognition; templated EHR insertion; clinician validation workflow.

- Assumptions/dependencies: Clinical oversight; local EHR integration; acceptance of macro-F/Edit-score as quality proxies; institution-approved note templates; handling minor annotation errors.

- Healthcare/Operations — Instrument usage analytics for supply chain and procurement

- Use case: Create dashboards showing instrument usage duration and variability across surgeons, cases, and time (e.g., suction dominance, instrument switching patterns).

- Tools/products/workflows: “Instrument Utilization Dashboard” summarizing per-second labels; procurement and inventory planning; review of instrument availability changes noted in training vs. testing distributions.

- Assumptions/dependencies: Accurate instrument classification for dominant classes (e.g., suction, no instrument); ongoing data ingestion; governance to avoid misuse in surgeon performance evaluation.

- Medical Education — Curriculum-aligned training and automated performance metrics

- Use case: Chaptered training videos aligned to eTSA steps; trainee analytics (time on task, frequency of step/instrument switching, sequence adherence) to reduce learning curve.

- Tools/products/workflows: “Training Coach” module that computes macro-F-based per-frame correctness against expert-defined sequences; Edit-score for temporal consistency; interactive feedback and replay.

- Assumptions/dependencies: Acceptance of PitVis step definitions across programs; availability of annotated videos; human-in-the-loop feedback; avoidance of over-reliance on a single metric.

- Academia/Software — Benchmarking and rapid prototyping for surgical AI

- Use case: Use PitVis dataset (DOI provided) for reproducible evaluation of new spatio-temporal/multi-task architectures and temporal metrics.

- Tools/products/workflows: “PitVis Benchmark Suite” with baseline code, Dockerized evaluation scripts, macro-F/Edit-score computation; model ablation and transfer learning studies.

- Assumptions/dependencies: Dataset license adherence; comparability of metrics; consideration of class imbalance and volatile instrument usage patterns.

- Annotation Acceleration — Pre-annotation with active learning

- Use case: Reduce manual labeling burden by pre-annotating steps/instruments and focusing human effort on corrections and edge cases.

- Tools/products/workflows: “Labeling Assistant” using current PitVis-trained models; uncertainty sampling; active learning loop; quality assurance checkpoints.

- Assumptions/dependencies: Sufficient model confidence on common classes; clear annotation guidelines; human verification; audit trails.

- Quality & Safety — Postoperative audit and case review

- Use case: Quantify workflow consistency; flag frequent, short-lived, temporally inconsistent step changes (e.g., brief haemostasis bursts) for review.

- Tools/products/workflows: “Workflow Consistency Report” using Edit-score and transition probabilities; peer review integration; morbidity/mortality meeting support.

- Assumptions/dependencies: Careful interpretation to avoid penalizing clinically appropriate deviations; calibration to local practice patterns.

- Policy/Governance — Challenge and dataset governance model replication

- Use case: Adopt BIAS guidelines, annotation verification workflows, and de-identification pipelines for future surgical AI datasets/challenges.

- Tools/products/workflows: Institutional “Surgical AI Data Governance Playbook” covering consent, blurring, fps standardization, per-second annotation protocols, public/private splits.

- Assumptions/dependencies: IRB alignment; resourcing for multi-round annotation and verification; clear publication/licensing plans; socialization across clinical and data teams.
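Several of the immediate applications above (chaptered video, operative-note drafting, instrument-usage dashboards) start from the same operation: turning per-second predictions into time segments and totals. A minimal sketch, assuming one step label and one set of instrument labels per second at 1 fps; the step and instrument names are hypothetical placeholders.

```python
from collections import Counter
from itertools import groupby
from typing import AbstractSet, Dict, Hashable, List, Sequence, Tuple


def to_segments(labels: Sequence[Hashable]) -> List[Tuple[Hashable, int, int]]:
    """Group per-second labels into (label, start_second, end_second), end exclusive."""
    result = []
    start = 0
    for label, run in groupby(labels):
        length = sum(1 for _ in run)
        result.append((label, start, start + length))
        start += length
    return result


def chapter_markers(step_labels: Sequence[str]) -> List[str]:
    """Chapter list in MM:SS form, one entry per contiguous step segment."""
    return [f"{s // 60:02d}:{s % 60:02d} {label}" for label, s, _ in to_segments(step_labels)]


def instrument_seconds(per_second: Sequence[AbstractSet[str]]) -> Dict[str, int]:
    """Total seconds each instrument label was present (1 fps labels assumed)."""
    totals: Counter = Counter()
    for present in per_second:
        totals.update(present)
    return dict(totals)


steps = ["nasal_phase"] * 61 + ["sellar_phase"] * 3  # hypothetical step names
print(chapter_markers(steps))  # ['00:00 nasal_phase', '01:01 sellar_phase']
print(instrument_seconds([{"suction"}, {"suction", "other_tool"}, {"no_instrument"}]))
```

In practice, very short segments produced by flickering predictions would likely need merging or smoothing before they appear as chapters or in a drafted note.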

Long-Term Applications

These require further research, scaling, development, and/or regulatory approval before clinical deployment.

- Healthcare — Real-time intraoperative step/instrument recognition for OR orchestration

- Use case: Live display of current step and active instruments to scrub nurses, anesthetists, and circulating staff; proactive prompts for upcoming instrument needs; improved OR efficiency.

- Tools/products/workflows: “OR Context Feed” integrated into endoscope towers; streaming inference at 24–30 fps with low latency; alerting workflows; role-specific UIs.

- Assumptions/dependencies: Robust generalization across hospitals/devices; human factors design; regulatory approval as a clinical decision support system (CDSS); cybersecurity; reliable performance in occlusions and small working spaces.

- Healthcare/EHR/Billing — End-to-end structured operative notes and coding automation

- Use case: Automatically generate structured operative notes with validated step timelines and instrument use; support coding/billing and registries.

- Tools/products/workflows: “EHR Note Generator” with clinician-in-the-loop validation; standards-compliant export (HL7/FHIR); coding rules; audit trails.

- Assumptions/dependencies: Regulatory approvals; integration with hospital EHRs; medico-legal acceptance; robust error handling and provenance tracking.

- Robotics/Automation — Context-aware assistance and camera control

- Use case: Use recognized steps/instruments to drive robotic camera positioning, stabilize the view, trigger camera-cleaning sequences when occlusions are likely, or offer context-aware tool guidance.

- Tools/products/workflows: “Context-Aware Scope Controller” combining spatio-temporal recognition with device logs; closed-loop orchestration with endoscope systems.

- Assumptions/dependencies: High-fidelity real-time performance; interoperability with device APIs; rigorous safety validation; multi-modal fusion (e.g., sensor data, audio) to disambiguate similar tools.

- Cross-Surgery Generalization — Unified workflow recognition across minimally invasive procedures

- Use case: Extend spatio-temporal multi-task recognition (steps, instruments) to other surgeries (e.g., cholecystectomy, prostatectomy), enabling standardized analytics across specialties.

- Tools/products/workflows: “General Surgical Workflow Engine” with domain adaptation, transfer learning, ontology mapping; multi-institutional datasets.

- Assumptions/dependencies: Larger, diverse public datasets; agreed ontologies; harmonized metrics beyond macro-F (especially for multi-label instruments); site-specific model calibration.

- Medical Education — Personalized, at-scale coaching and certification support

- Use case: Real-time or post hoc skill assessment that correlates workflow features with competence; adaptive training plans; certification-ready reports.

- Tools/products/workflows: “Surgical Skill Assessor” combining Edit-score, transition consistency, instrument-switching metrics, and outcome-linked analytics.

- Assumptions/dependencies: Validated links between workflow metrics and competence/outcomes; acceptance by training bodies; bias and fairness audits.

- Policy/Standards — Ontology and metric standardization for surgical workflow AI

- Use case: Establish common step and instrument taxonomies, temporal metrics (e.g., macro-F + Edit-score for steps; improved temporal metrics for multi-label instruments), and reporting standards.

- Tools/products/workflows: “Surgical Workflow Standards Consortium”; reference specifications; benchmark leaderboards with clinically grounded metrics.

- Assumptions/dependencies: Multi-stakeholder consensus (surgeons, industry, regulators); iterative metric refinement; cross-institutional validation.

- Research — Multimodal surgical context engines

- Use case: Fuse video with audio, device telemetry, instrument trackers, and vital signs to improve robustness and resolve visually ambiguous classes (e.g., Doppler probe vs. glue applicator).

- Tools/products/workflows: “Multimodal Context Engine” with spatio-temporal transformers and correlation losses across tasks; active learning for rare events; instrument segmentation extensions.

- Assumptions/dependencies: Access to synchronized multimodal data; annotation scale; privacy and security for audio/device streams; compute constraints.

- Edge Deployment — Low-latency inference on surgical towers

- Use case: Deploy compressed, quantized models to endoscope hardware for real-time inference with strict latency and reliability targets.

- Tools/products/workflows: Model compression pipelines; hardware-accelerated inference (TensorRT/ONNX Runtime); watchdog and fallback procedures.

- Assumptions/dependencies: Vendor cooperation; certification for medical devices; resilience to domain shift; robust maintenance and updates.

- Outcomes Research — Linking workflow features to patient outcomes and variability

- Use case: Use recognized step sequences and instrument patterns to study outcome variability, learning curves, and best practices; inform guidelines and policy.

- Tools/products/workflows: “Workflow–Outcome Analytics” integrating clinical outcomes with per-second labels; statistical and causal models; guideline development.

- Assumptions/dependencies: Longitudinal data; standardized outcomes; confounder control; ethics approvals; multi-center collaboration.
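The transition-based analytics mentioned in the applications above (the transition probabilities in the “Workflow Consistency Report” and the transition-consistency features of the “Surgical Skill Assessor”) can be prototyped by counting step-to-step changes. The sketch below is a hypothetical illustration: it collapses repeated per-second labels into segments, estimates transition probabilities from reference cases, and flags transitions in a new case whose estimated probability falls below an arbitrary threshold.

```python
from collections import defaultdict
from itertools import groupby
from typing import Dict, Hashable, List, Sequence, Tuple

Probs = Dict[Hashable, Dict[Hashable, float]]


def step_changes(labels: Sequence[Hashable]) -> List[Tuple[Hashable, Hashable]]:
    """Consecutive (from, to) pairs after collapsing repeated per-second labels."""
    segs = [label for label, _ in groupby(labels)]
    return list(zip(segs, segs[1:]))


def transition_probabilities(cases: Sequence[Sequence[Hashable]]) -> Probs:
    """Estimate P(next step | current step) from reference cases."""
    counts: Dict[Hashable, Dict[Hashable, int]] = defaultdict(lambda: defaultdict(int))
    for case in cases:
        for a, b in step_changes(case):
            counts[a][b] += 1
    return {
        a: {b: n / sum(row.values()) for b, n in row.items()} for a, row in counts.items()
    }


def rare_transitions(
    case: Sequence[Hashable], probs: Probs, threshold: float = 0.1  # arbitrary cutoff
) -> List[Tuple[Hashable, Hashable]]:
    """Step changes whose estimated probability is below `threshold` (unseen = 0)."""
    return [
        (a, b) for a, b in step_changes(case) if probs.get(a, {}).get(b, 0.0) < threshold
    ]


reference = [[0, 0, 1, 1, 2], [0, 1, 0, 1, 2]]
probs = transition_probabilities(reference)
print(probs[1])  # from step 1: about 2/3 on to step 2, 1/3 back to step 0
print(rare_transitions([0, 1, 0, 1, 2], probs, threshold=0.5))  # [(1, 0)]
```

A rare transition is not necessarily a wrong one; as the assumptions for the consistency report note, clinically appropriate deviations should not be penalized, so such flags are prompts for review rather than verdicts.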

Notes on Assumptions and Dependencies Across Applications

- Data and domain generalization: Performance may degrade across hospitals, devices, and time due to practice heterogeneity and instrument availability changes; requires domain adaptation and ongoing validation.

- Annotation quality: Human labeling errors are mitigated but not eliminated; human-in-the-loop review remains important for clinical deployments.

- Metric choice: Macro-F and Edit-score are appropriate starting points for steps; instrument recognition needs improved temporal metrics for multi-label volatility; clinical relevance must guide metric selection.

- Real-time constraints: Online models must meet latency targets; robustness under occlusion, distortion, and frequent instrument/step switching is critical.

- Regulatory and governance: Clinical decision support requires regulatory approval, rigorous validation, transparency (BIAS guidelines), consent and de-identification processes, and cybersecurity controls.

- Human factors and UI: Acceptance by OR teams depends on clear interfaces, minimal cognitive load, and reliable alerts; design must consider different roles and workflows.

- Integration: EHR interoperability (HL7/FHIR), device API access, and hospital IT policies are essential for end-to-end solutions.
