Comparative validation of surgical phase recognition, instrument keypoint estimation, and instrument instance segmentation in endoscopy: Results of the PhaKIR 2024 challenge

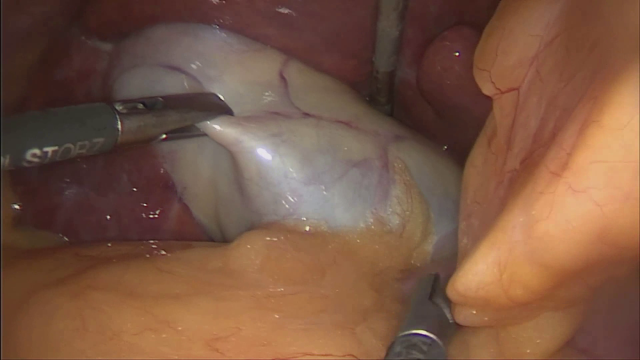

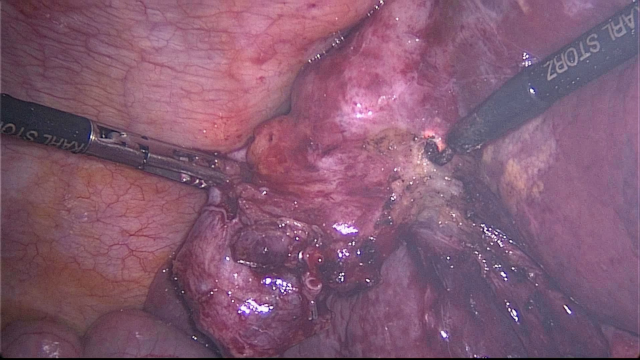

Abstract: Reliable recognition and localization of surgical instruments in endoscopic video recordings are foundational for a wide range of applications in computer- and robot-assisted minimally invasive surgery (RAMIS), including surgical training, skill assessment, and autonomous assistance. However, robust performance under real-world conditions remains a significant challenge. Incorporating surgical context, such as the current procedural phase, has emerged as a promising strategy to improve robustness and interpretability. To address these challenges, we organized the Surgical Procedure Phase, Keypoint, and Instrument Recognition (PhaKIR) sub-challenge as part of the Endoscopic Vision (EndoVis) challenge at MICCAI 2024. We introduced a novel, multi-center dataset comprising thirteen full-length laparoscopic cholecystectomy videos collected from three distinct medical institutions, with unified annotations for three interrelated tasks: surgical phase recognition, instrument keypoint estimation, and instrument instance segmentation. Unlike existing datasets, ours enables joint investigation of instrument localization and procedural context within the same data while supporting the integration of temporal information across entire procedures. We report results and findings in accordance with the BIAS guidelines for biomedical image analysis challenges. The PhaKIR sub-challenge advances the field by providing a unique benchmark for developing temporally aware, context-driven methods in RAMIS and offers a high-quality resource to support future research in surgical scene understanding.

Glossary

- 95% Hausdorff Distance (95% HD): A robust boundary-based metric measuring the 95th percentile of shortest distances between contours of predicted and ground-truth segmentations. "the 95\% Hausdorff-Distance (95\% HD, \cite{huttenlocher1993comparing}) served as the boundary-based metric."

- Balanced Accuracy (BA): The unweighted mean of per-class recall (in the binary case, the average of sensitivity and specificity), which keeps frequent classes from dominating the score under class imbalance. "Balanced Accuracy (BA,~\cite{tharwat2021classification}) served as the metric for evaluating the overall multi-class performance."

- BIAS guidelines: Reporting standards for biomedical image analysis challenges to ensure transparent and fair evaluation. "We report results and findings in accordance with the BIAS guidelines for biomedical image analysis challenges."

- bootstrapping: A resampling technique with replacement used to estimate metric stability and confidence. "we applied bootstrapping~\cite{efron1992bootstrap} with 10,000 iterations."

- Calot triangle dissection (CTD): A defined surgical phase in cholecystectomy involving dissection of Calot’s triangle. "calot triangle dissection (CTD)"

- Cholec80 dataset: A benchmark dataset of cholecystectomy videos annotated with surgical phases. "following the classification scheme introduced in the Cholec80 dataset by~\cite{twinanda2016endonet}."

- cholecystectomy: Surgical removal of the gallbladder. "thirteen full-length laparoscopic cholecystectomy videos"

- cleaning and coagulation (ClCo): A surgical phase focusing on hemostasis and cleanup. "cleaning and coagulation (ClCo)"

- clipping and cutting (ClCu): A surgical phase where structures are clipped and transected. "clipping and cutting (ClCu)"

- COCO evaluation protocol: A standardized evaluation scheme (for detection/segmentation/keypoints) computing mAP across multiple thresholds. "The mean Average Precision (mAP) is computed following the COCO evaluation protocol~\cite{lin2014microsoft}"

- Computer Vision Annotation Tool (CVAT): An annotation tool for images and videos used to create segmentation and keypoint labels. "the Computer Vision Annotation Tool (CVAT)\footnote{\url{https://www.cvat.ai/}} was used."

- Dice Similarity Coefficient (DSC): An overlap metric for segmentation accuracy ranging from 0 (no overlap) to 1 (perfect overlap). "the Dice Similarity Coefficient (DSC, \cite{dice1945measures}) was used as the multi-instance multi-class overlap metric"

- EndoVis (Endoscopic Vision Challenge): A MICCAI-affiliated challenge series focused on endoscopic image analysis. "the Endoscopic Vision (EndoVis) challenge at MICCAI 2024."

- ex-vivo: Data or procedures performed outside a living organism. "sequences featuring robotic instruments are based on ex-vivo data from animal tissue"

- F1-score: The harmonic mean of precision and recall, balancing false positives and false negatives. "the F1-score~\cite{van1979information,chinchor1992muc4} was used as the per-class performance metric"

- gallbladder dissection (GD): A surgical phase involving dissection of the gallbladder from its bed. "gallbladder dissection (GD)"

- gallbladder packaging (GP): A surgical phase where the gallbladder is bagged for extraction. "gallbladder packaging (GP)"

- gallbladder retraction (GR): A surgical phase involving retraction to expose the operative field. "gallbladder retraction (GR)"

- HeiChole Benchmark: A benchmark from the Surgical Workflow and Skill Analysis Challenge used for phase recognition research. "as part of the Surgical Workflow and Skill Analysis Challenge (HeiChole Benchmark, \cite{wagner2019comparative})"

- Hungarian Maximum Matching Algorithm: An algorithm for optimal assignment used to match predicted and ground-truth instances. "we applied the Hungarian Maximum Matching Algorithm~\cite{kuhn1955hungarian} based on the IoU between all possible prediction-ground truth pairs."

- in-vivo: Data or procedures performed within a living organism. "for in-vivo recordings involving manual instruments"

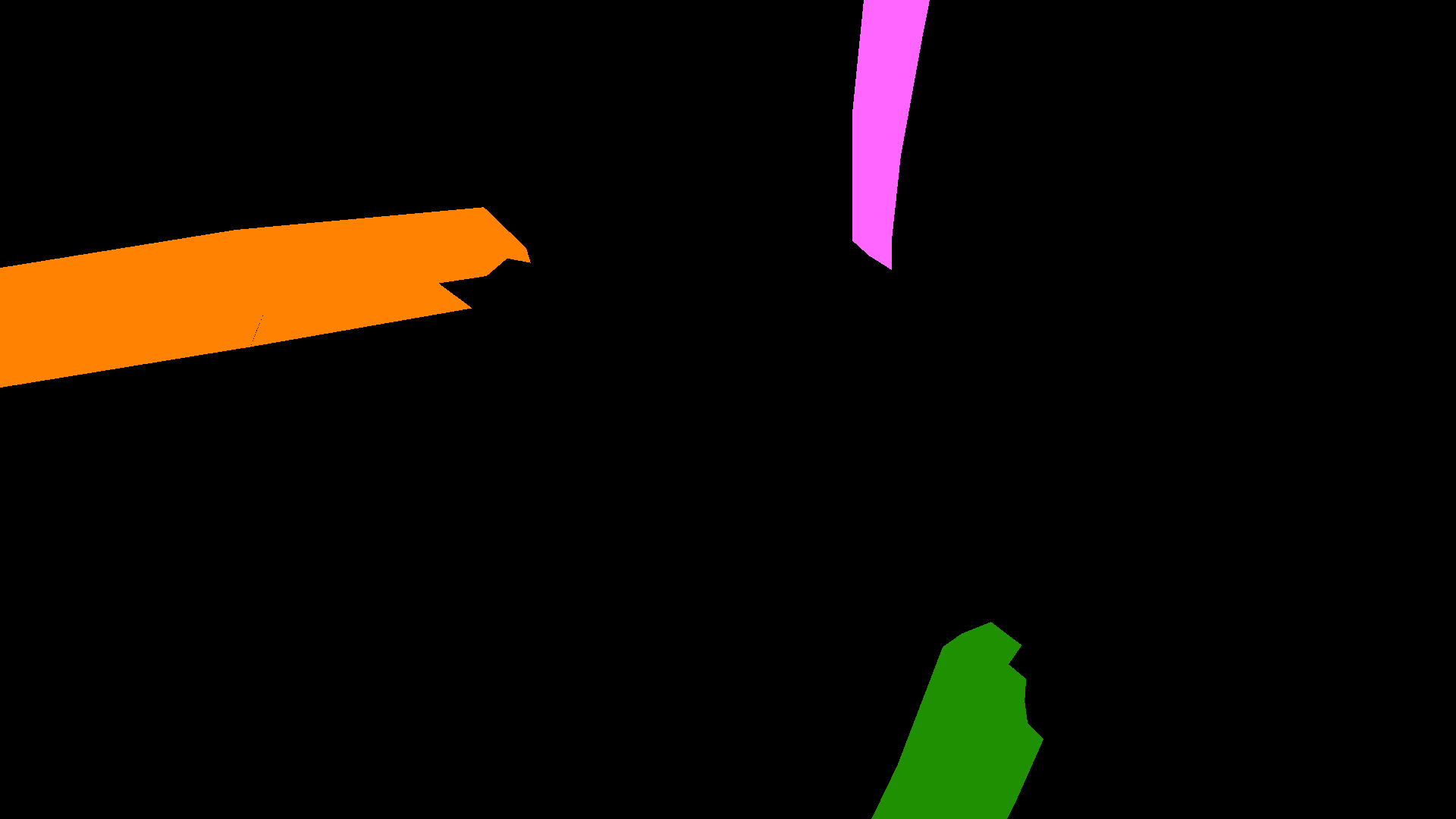

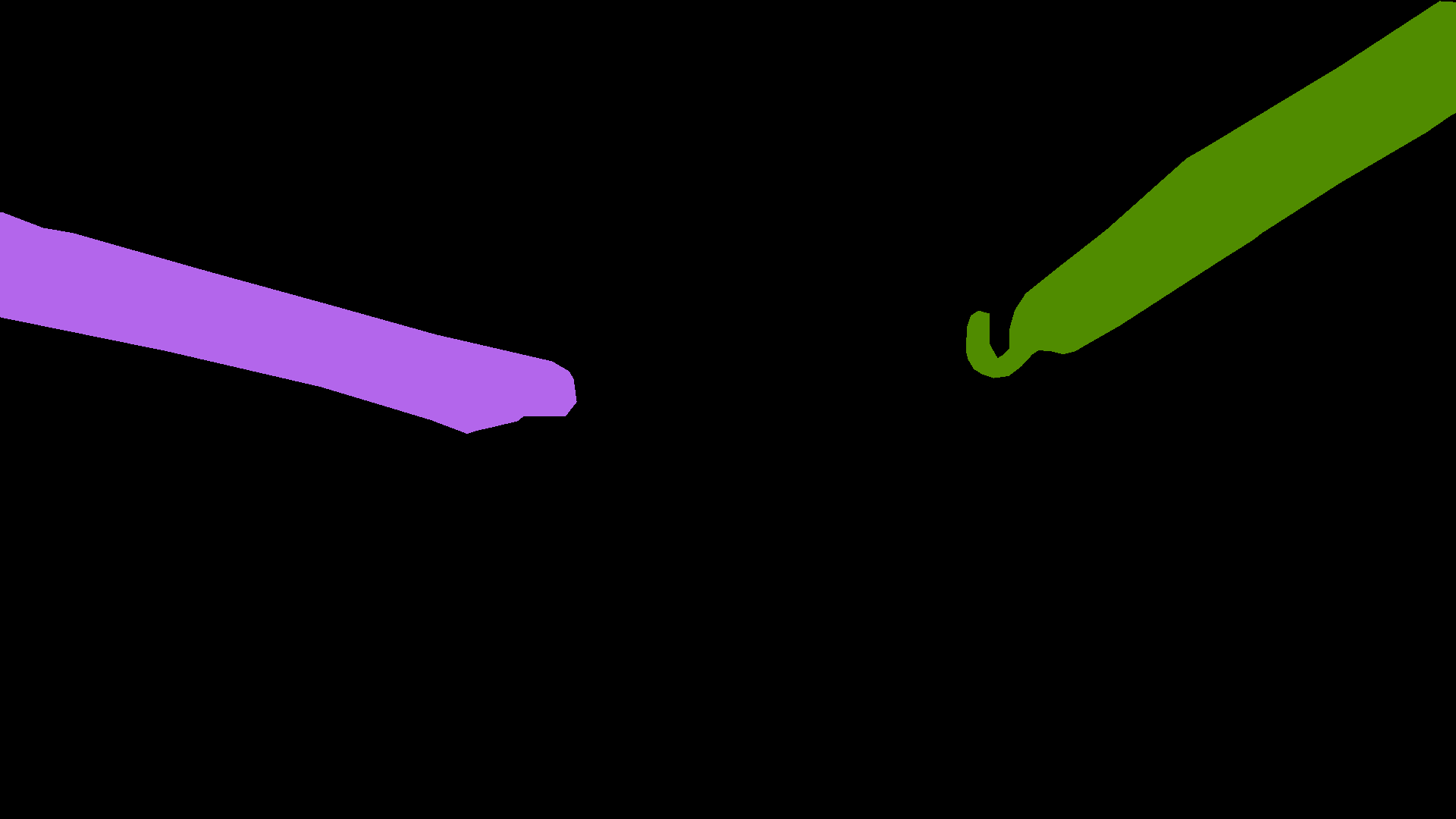

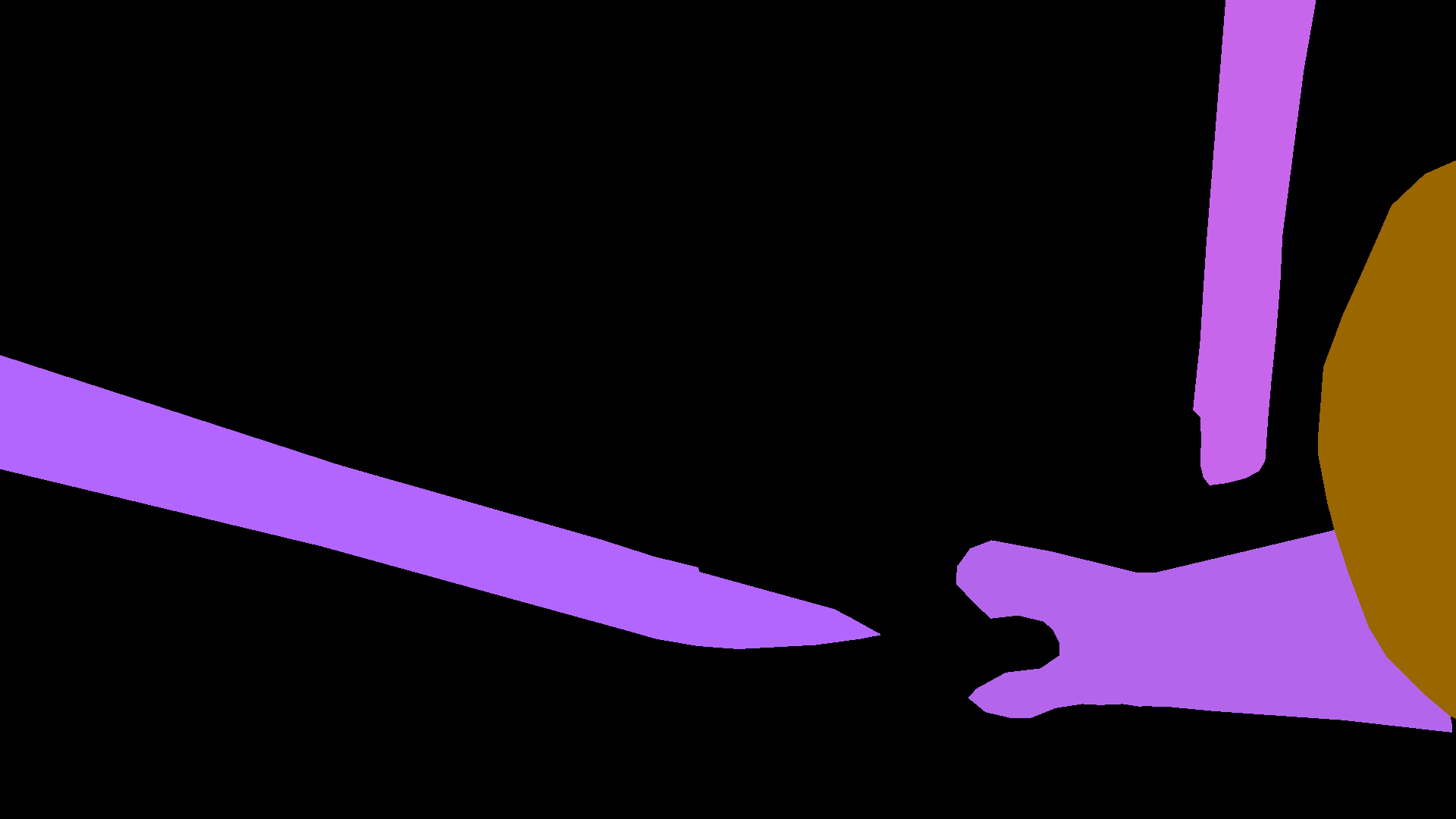

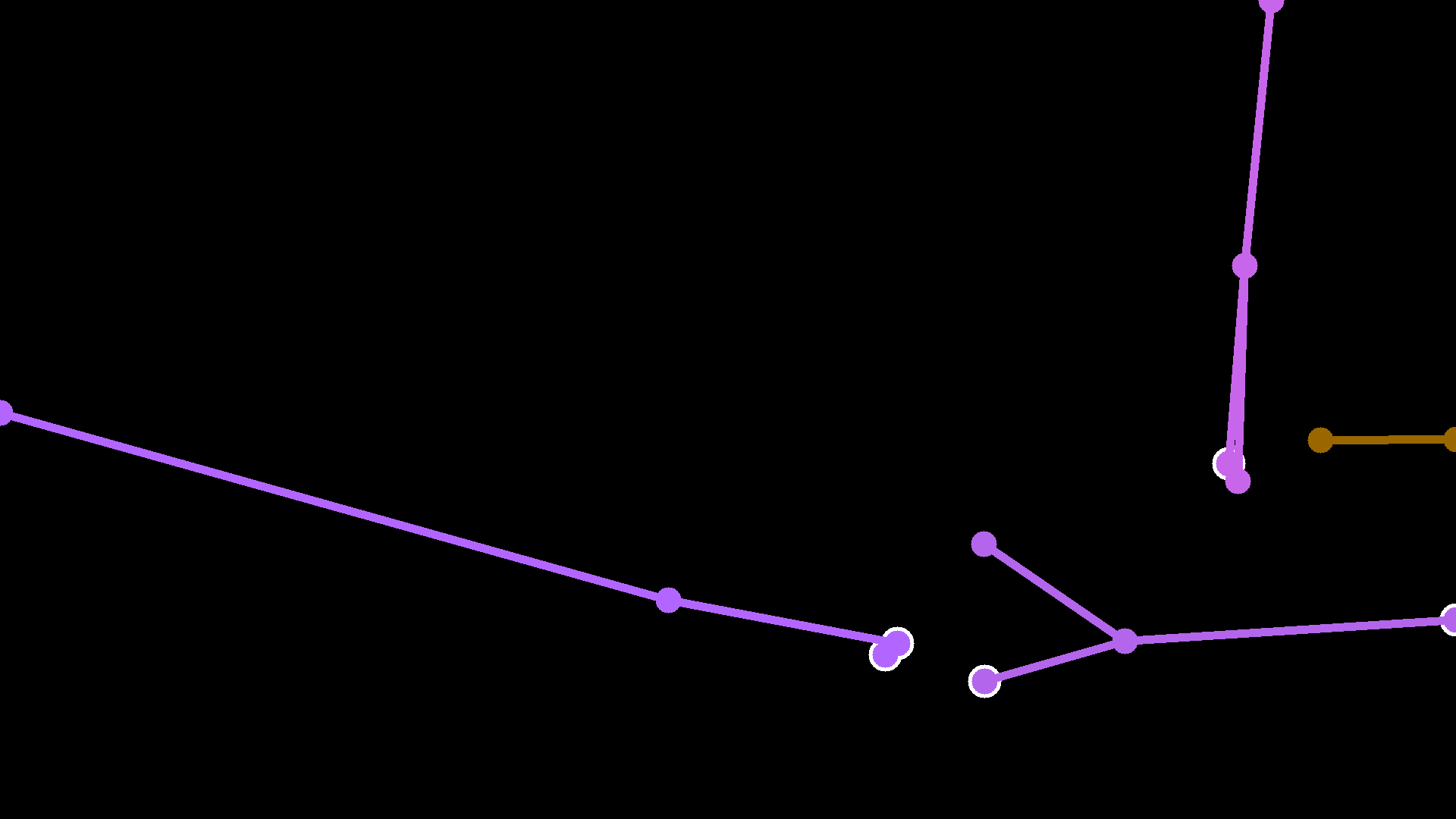

- Instrument instance segmentation: Pixel-precise multi-class, multi-instance segmentation of instruments in images. "Instrument instance segmentation: Segmentation of the contours of the surgical instruments as precisely as possible through pixel-accurate predictions and distinguishing different instrument classes and instances of the same instrument class."

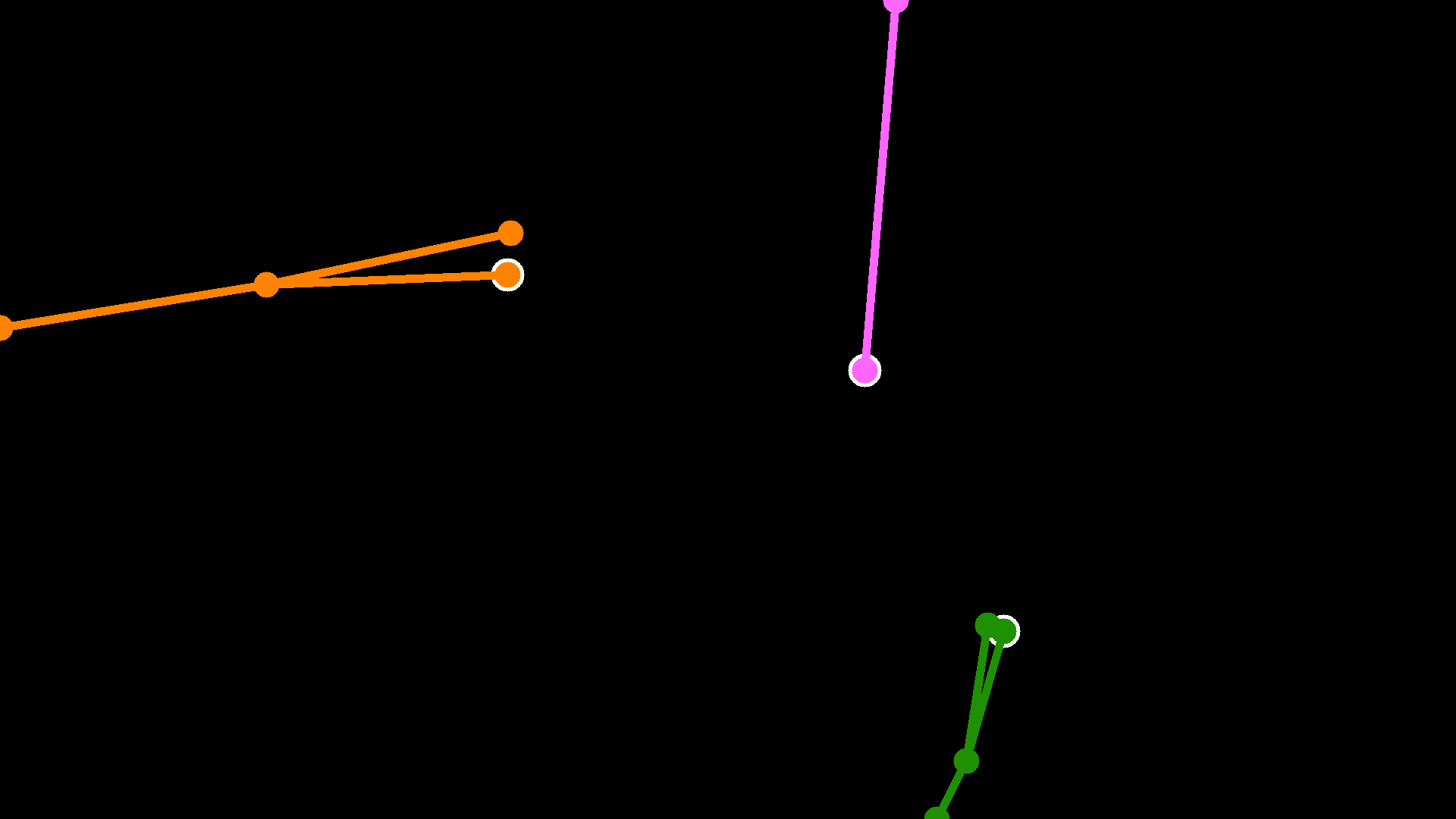

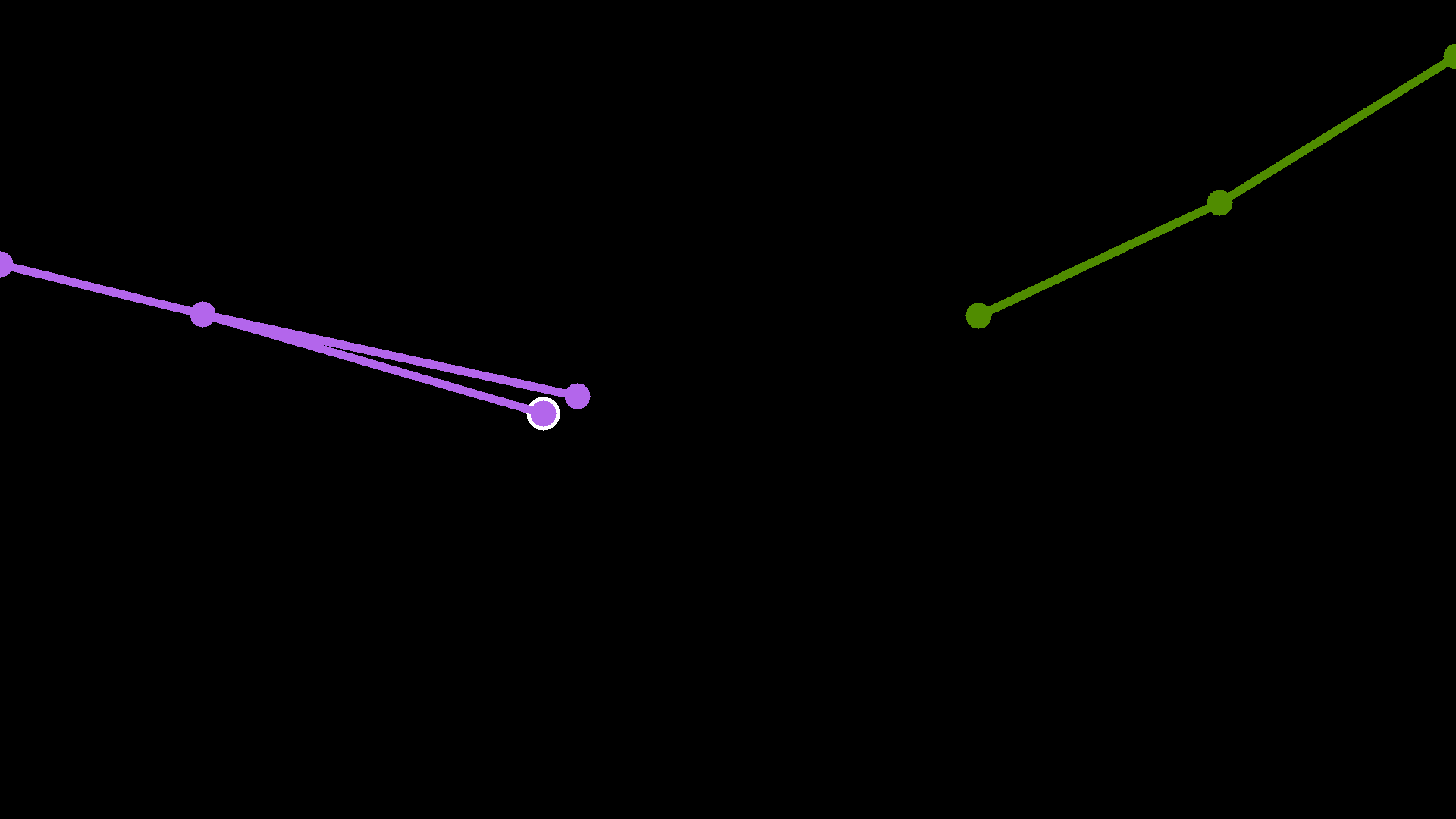

- Instrument keypoint estimation: Localizing predefined keypoints on surgical instruments, accounting for visibility and instrument type. "Instrument keypoint estimation: Location of certain keypoints on the surgical instruments as precisely as possible through pixel-accurate coordinates, and distinguishing different instrument classes and instances of the same instrument class, considering that the number of keypoints depends on the type of the individual instrument."

- Intersection-over-Union (IoU): An overlap ratio between predicted and ground-truth regions used for instance matching. "all Intersection-over-Union (IoU) thresholds :"

- mean Average Precision (mAP): The mean of average precision values across classes and thresholds, summarizing detection/segmentation performance. "The mean Average Precision (mAP) is computed following the COCO evaluation protocol~\cite{lin2014microsoft}"

- MICCAI: The Medical Image Computing and Computer Assisted Intervention conference/society. "the annual conference of the Medical Image Computing and Computer Assisted Intervention (MICCAI) society"

- Metrics Reloaded Framework: A framework of recommendations for selecting appropriate metrics in medical image analysis. "followed the recommendations of the Metrics Reloaded Framework proposed by~\cite{maier2024metrics}."

- Object Keypoint Similarity (OKS): A keypoint similarity measure that accounts for object scale and keypoint visibility. "the $\text{mAP}_{\text{OKS}}$ metric based on the Object Keypoint Similarity (OKS) following the COCO evaluation protocol~\cite{cocoKeypointEval}."

- PhaKIR: The Surgical Procedure Phase, Keypoint, and Instrument Recognition sub-challenge at EndoVis 2024. "Surgical Procedure Phase, Keypoint, and Instrument Recognition (PhaKIR) sub-challenge"

- porcine tissue: Pig tissue used in experimental datasets, often simplifying instrument recognition vs. human tissue. "operate on porcine tissue~\cite{allan2017robotic, allan2018robotic}, which significantly simplifies the recognition of surgical instruments compared to human tissue"

- preparation (P): The initial surgical phase preparing the operative field. "preparation (P)"

- RAMIS: Robot-assisted minimally invasive surgery. "robot-assisted minimally invasive surgery (RAMIS)"

- shaft-tip transition: A keypoint marking the junction between an instrument’s shaft and tip. "the shaft-tip transition, indicating the junction between the shaft and the tip,"

- Surgical phase recognition: Classification of procedural phases from endoscopic video. "Surgical phase recognition: Classification of the surgical phases of a cholecystectomy as accurately as possible."

- undefined phase: A label used for transitional frames between phases. "an eighth category -- an undefined phase -- was introduced to label transitional frames between two phases."
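The phase-recognition metrics defined in the glossary (per-class F1-score, Balanced Accuracy, and bootstrapping) can be illustrated with a minimal pure-Python sketch. The function names, the toy labels, the frame-level resampling unit, and the reduced iteration count are assumptions made for illustration; this is not the challenge's evaluation code.

```python
import random


def per_class_counts(y_true, y_pred, labels):
    """Return {label: (tp, fp, fn)} computed frame by frame."""
    counts = {}
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        counts[c] = (tp, fp, fn)
    return counts


def f1_per_class(y_true, y_pred, labels):
    """Per-class F1 = 2*TP / (2*TP + FP + FN); 0.0 when undefined."""
    scores = {}
    for c, (tp, fp, fn) in per_class_counts(y_true, y_pred, labels).items():
        denom = 2 * tp + fp + fn
        scores[c] = 2 * tp / denom if denom else 0.0
    return scores


def balanced_accuracy(y_true, y_pred, labels):
    """Unweighted mean of per-class recall over classes present in y_true."""
    recalls = []
    for tp, _fp, fn in per_class_counts(y_true, y_pred, labels).values():
        if tp + fn:  # skip classes that never occur in the ground truth
            recalls.append(tp / (tp + fn))
    return sum(recalls) / len(recalls)


def bootstrap_ci(y_true, y_pred, labels, n_iter=1000, alpha=0.05, seed=0):
    """Percentile confidence interval for BA via resampling with replacement.

    Assumption: frames are the resampling unit; n_iter is kept small here.
    """
    rng = random.Random(seed)
    n = len(y_true)
    stats = []
    for _ in range(n_iter):
        idx = [rng.randrange(n) for _ in range(n)]
        stats.append(balanced_accuracy([y_true[i] for i in idx],
                                       [y_pred[i] for i in idx], labels))
    stats.sort()
    lo = stats[int((alpha / 2) * (n_iter - 1))]
    hi = stats[int((1 - alpha / 2) * (n_iter - 1))]
    return lo, hi


# Toy example using three of the phase abbreviations from the glossary.
labels = ["P", "CTD", "GD"]
y_true = ["P", "P", "CTD", "CTD", "CTD", "GD"]
y_pred = ["P", "CTD", "CTD", "CTD", "GD", "GD"]
print(f1_per_class(y_true, y_pred, labels))
print(balanced_accuracy(y_true, y_pred, labels))
print(bootstrap_ci(y_true, y_pred, labels))
```

In this toy case the per-class recalls are 1/2, 2/3, and 1, so Balanced Accuracy is their plain average regardless of how many frames each phase contributes.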
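For the segmentation entries, the following sketch shows how IoU and the Dice Similarity Coefficient relate on a pair of masks, and how an IoU-based one-to-one matching of predicted and ground-truth instances might look. Masks are represented as sets of pixel coordinates, and the helper names are hypothetical. The matching step uses exhaustive search over permutations as a simple stand-in: it reaches the same optimum the Hungarian algorithm would, but is only practical for a handful of instances.

```python
from itertools import permutations


def rect(x0, y0, x1, y1):
    """Hypothetical helper: a rectangular mask as a set of (x, y) pixels."""
    return {(x, y) for x in range(x0, x1) for y in range(y0, y1)}


def iou(a, b):
    """Intersection-over-Union of two pixel sets."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def dice(a, b):
    """Dice Similarity Coefficient of two pixel sets."""
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 0.0


def match_instances(preds, gts):
    """One-to-one assignment maximizing total IoU (brute force, not Hungarian).

    Returns (pred_index, gt_index) pairs; pairs with zero overlap are dropped.
    """
    n = max(len(preds), len(gts))
    best_score, best_pairs = -1.0, []
    for perm in permutations(range(n)):
        # Indices beyond the shorter list act as padding and are ignored.
        pairs = [(i, j) for i, j in enumerate(perm)
                 if i < len(preds) and j < len(gts)]
        score = sum(iou(preds[i], gts[j]) for i, j in pairs)
        if score > best_score:
            best_score, best_pairs = score, pairs
    return [(i, j) for i, j in best_pairs if iou(preds[i], gts[j]) > 0]


# Two ground-truth instruments and two predictions listed in swapped order.
gts = [rect(0, 0, 4, 4), rect(10, 10, 14, 14)]
preds = [rect(10, 10, 14, 12), rect(0, 0, 4, 2)]

for i, j in match_instances(preds, gts):
    print(i, j, iou(preds[i], gts[j]), dice(preds[i], gts[j]))
```

For a single mask pair the two overlap scores are linked by DSC = 2·IoU / (1 + IoU), so they rank a pair of masks identically; they differ in how strongly partial overlap is penalized.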
