- The paper presents ChainBuddy, an AI-assisted system that automates initial LLM pipeline generation to mitigate the blank page problem in evaluation setup.

- It utilizes a multi-agent architecture based on the LangGraph framework, integrating requirement gathering, planning, task-specific, connection, and review agents.

- Usability studies show that ChainBuddy reduces cognitive workload and setup time, enabling efficient and structured experimentation with LLMs.

ChainBuddy: An AI Agent System for Generating LLM Pipelines

The paper "ChainBuddy: An AI Agent System for Generating LLM Pipelines" presents an AI-assisted system designed to address the "blank page problem" encountered by users when creating evaluation pipelines for LLMs (2409.13588). The system, integrated into the ChainForge platform, employs a conversational agent to automate the generation of initial LLM pipelines, aiding users in evaluating LLM behavior across varied tasks.

Introduction to ChainBuddy

ChainBuddy offers a structured approach to LLM pipeline creation, easing users into designing experiments and evaluations for LLMs. It adds to the growing suite of tools for LLM operations [flowiseai] and addresses common user challenges in prompt engineering and model evaluation.

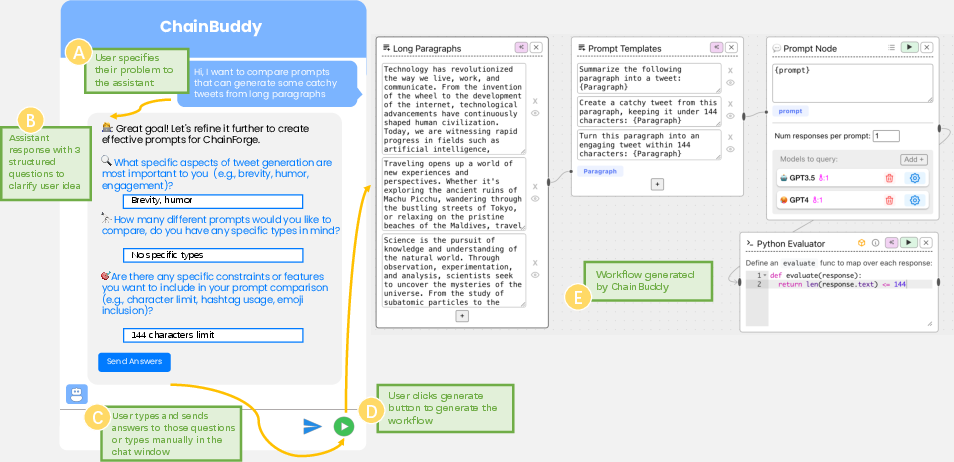

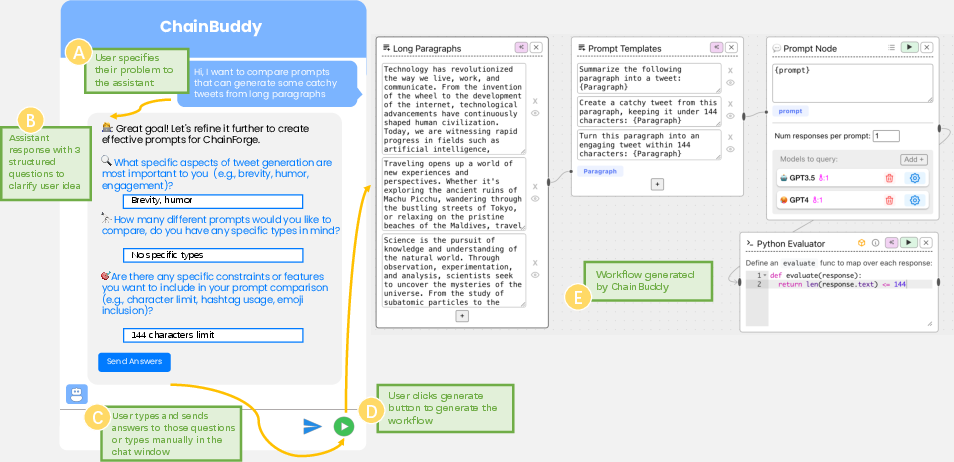

Figure 1: ChainBuddy interface and example usage. The user specifies requirements (A), and ChainBuddy replies with a requirements-gathering form (B) that the user can either fill out and send, or follow up on with an open-ended chat (C). The user presses the green button (D) to indicate that they are ready to generate a flow. After a delay of 10-20 seconds, ChainBuddy produces a starter pipeline (E). Here, the starter pipeline includes example inputs, multiple prompts to try (prompt templates), two queried models, and a Python-based code evaluator.
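To make the shape of such a starter pipeline concrete, the following is a minimal sketch in plain Python of the four ingredients named in the caption: example inputs, prompt templates, two models, and a code evaluator. It is an illustration only, not ChainForge's or ChainBuddy's actual API. The `Response` class, the `fake_model` stand-in, the model names, and the length-based check are all assumptions made for this example.

```python
from dataclasses import dataclass
from itertools import product


@dataclass
class Response:
    """Hypothetical stand-in for one model response in a flow."""
    prompt: str
    model: str
    text: str


def fill(template: str, variables: dict) -> str:
    """Fill {name} placeholders in a prompt template."""
    return template.format(**variables)


def fake_model(name: str, prompt: str) -> str:
    """Placeholder for a real LLM call; returns a canned reply."""
    return f"[{name}] reply to: {prompt}"


def evaluate(response: Response) -> bool:
    """Example code evaluator (assumed criterion): reply is under 100 characters."""
    return len(response.text) < 100


# Illustrative inputs, templates, and model names (all made up for this sketch).
inputs = [{"topic": "a delayed shipment"}, {"topic": "a meeting request"}]
templates = [
    "Write a short email about {topic}.",
    "Draft a polite, professional email regarding {topic}.",
]
models = ["model-a", "model-b"]


def run_flow(inputs, templates, models):
    """Query every model with every template filled by every input, then score."""
    results = []
    for variables, template, model in product(inputs, templates, models):
        prompt = fill(template, variables)
        response = Response(prompt, model, fake_model(model, prompt))
        results.append((response, evaluate(response)))
    return results


results = run_flow(inputs, templates, models)
```

The cross product of inputs, templates, and models is what makes a side-by-side comparison possible: each combination yields one scored response, so swapping a template or a model changes only one axis of the results.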

System Architecture

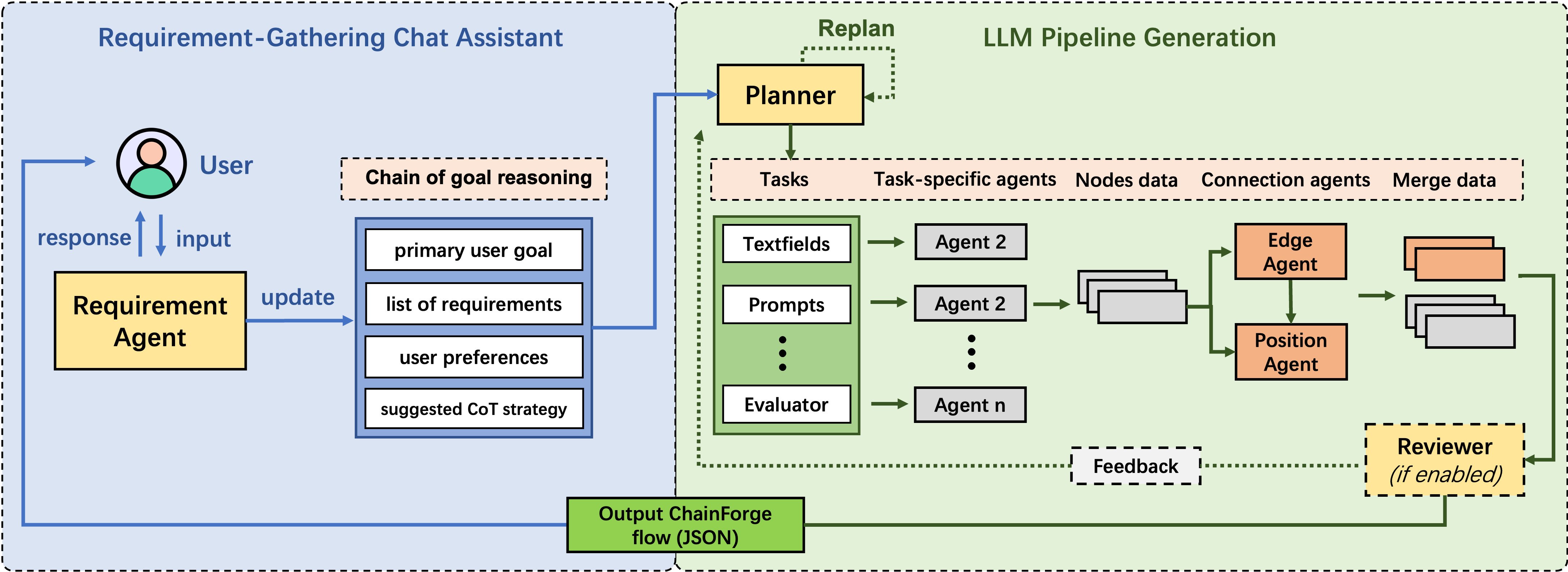

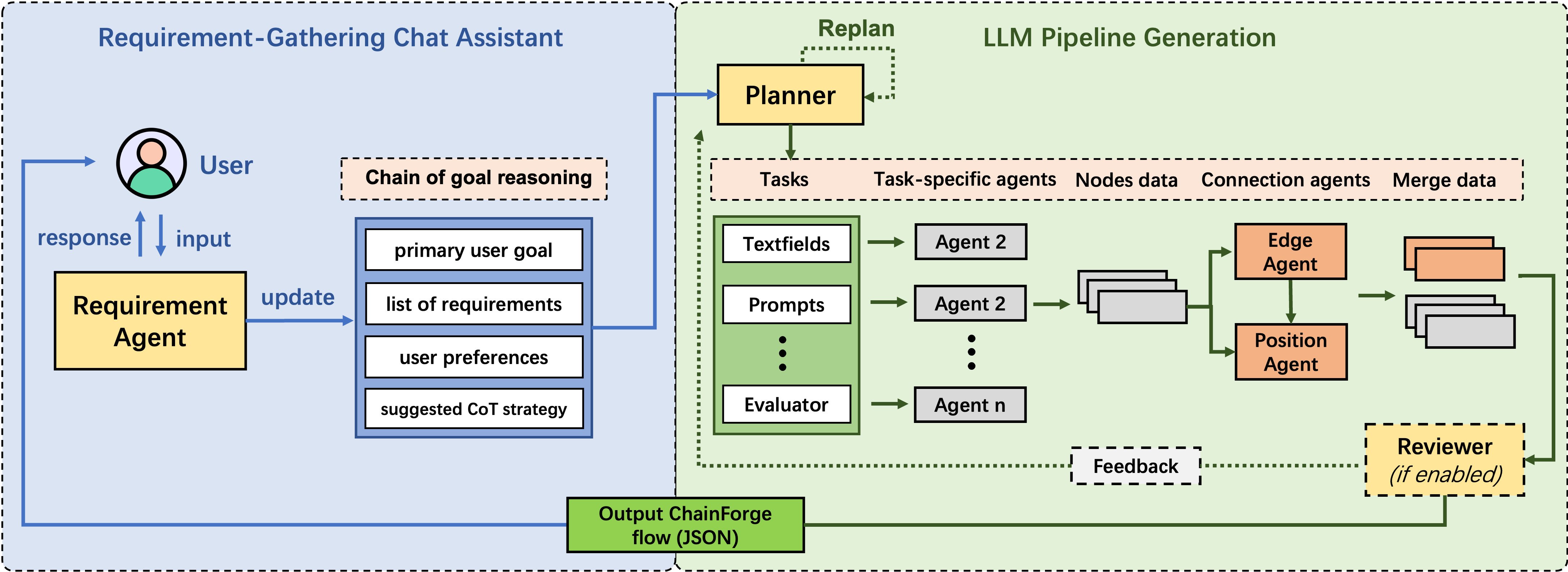

ChainBuddy's architecture is composed of several interacting agents built on the LangGraph framework, which supports multi-actor LLM applications [langgraph]. The architecture consists of:

- Requirement Gathering Chat Assistant: Utilizes a structured Q&A with the user to gather requirements, minimizing ambiguity in task specifications.

- Planner Agent: Forms a comprehensive plan for LLM pipeline structure, considering available nodes in the ChainForge environment.

- Task-Specific Agents: Handle individual responsibilities such as data input and prompt creation customized for user needs.

- Connection Agents: Organize and connect task outputs, completing the flow structure.

- Post-hoc Reviewer Agent: Ensures the final output aligns with user specifications, though it was disabled for the usability study to reduce generation time.

Figure 2: ChainBuddy system architecture. A front-end requirement agent elicits user intent and context (left). Once the user asks for a flow, the system generates a comprehensive pipeline plan and hands its tasks to dedicated agents.
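The hand-off between the agents listed above can be sketched as a sequence of functions that pass a shared state along. This is a simplified illustration in plain Python and does not use LangGraph or reproduce the authors' implementation. The `FlowState` fields, the fixed plan, and the node type names are hypothetical, and each "agent" here is an ordinary function where the real system would call an LLM.

```python
from dataclasses import dataclass, field


@dataclass
class FlowState:
    """Shared state handed from agent to agent (hypothetical structure)."""
    requirements: str
    plan: list = field(default_factory=list)
    nodes: dict = field(default_factory=dict)
    edges: list = field(default_factory=list)


def planner(state: FlowState) -> FlowState:
    """Planner agent: decide which node types the flow needs.
    A real planner would query an LLM; this plan is fixed for illustration."""
    state.plan = ["inputs", "prompt", "llm", "evaluator"]
    return state


def make_task_agent(kind: str):
    """Build a task-specific agent responsible for one node type."""
    def agent(state: FlowState) -> FlowState:
        state.nodes[kind] = {"type": kind, "for": state.requirements}
        return state
    return agent


def connector(state: FlowState) -> FlowState:
    """Connection agent: wire the planned nodes together in order."""
    state.edges = list(zip(state.plan, state.plan[1:]))
    return state


def reviewer(state: FlowState) -> FlowState:
    """Post-hoc reviewer: check that every planned node was produced."""
    missing = [kind for kind in state.plan if kind not in state.nodes]
    if missing:
        raise ValueError(f"plan steps without nodes: {missing}")
    return state


def build_flow(requirements: str, review: bool = False) -> FlowState:
    """Run planner, task agents, and connector; the review step is optional."""
    state = planner(FlowState(requirements))
    for kind in state.plan:
        state = make_task_agent(kind)(state)
    state = connector(state)
    if review:
        state = reviewer(state)
    return state


flow = build_flow("Compare prompts for summarizing tweets")
```

The `review` flag defaults to off here to mirror the study configuration described above, where the reviewer was disabled to reduce generation time.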

Evaluation and Usability Study

The usability study showed that ChainBuddy reduces users' cognitive workload and increases their confidence in creating LLM evaluation pipelines. Participants reported significantly lower mental demand and less time spent setting up evaluations than with a baseline interface lacking the assistant.

The study featured tasks such as professional email drafting and tweet summarization, and participants' experience was measured with the NASA TLX scale and system usability questions. Results showed that users performed more effectively with ChainBuddy, drawing on its ability to generate accurate and editable pipeline structures.

Figure 3: Participant responses to Likert questions for NASA TLX and system usability, grouped by condition. Significant main effects indicate reduced mental demand and improved confidence when the ChainBuddy assistant was available.

Implementation and Practical Applications

Using ChainBuddy in real-world LLM evaluation scenarios lets users set up complex prompt comparisons and automated evaluations efficiently. The system's design suits diverse applications, from standardizing code outputs and evaluating model biases to generating data processing workflows.

Configuration considerations include optimizing node generation and keeping user-specified queries flexible. With ChainBuddy's structured assistance, users can explore various LLM capabilities with less upfront effort, which makes a broader range of experiments practical.

Conclusion

ChainBuddy advances user-centered AI interaction design by easing the initial setup phase of LLM pipeline generation. By automating initial drafts and providing a framework for editing and expansion, the system lets users focus on high-level experimentation and evaluation tasks.

Future work could extend ChainBuddy's functionality, for example by supporting more complex editing capabilities and incorporating additional data sources, further broadening the scope of AI-assisted pipeline development.