- The paper introduces AgentOps, a novel framework that enables comprehensive observability of LLM agents by tracing artifacts such as goals, plans, and tools.

- The paper maps 17 existing tools and presents a taxonomy of traceable spans covering agents, reasoning, planning, workflows and tasks, evaluation, guardrails, and LLM interactions.

- The paper names prototype development and validation through real-world case studies as future work, intended to connect the taxonomy with operational AI safety practice.

Observability in Agent Operations: The AgentOps Paradigm

The paper "AgentOps: Enabling Observability of LLM Agents" argues that observability is necessary for the safe operation of LLM agents. It introduces AgentOps, a DevOps-style paradigm tailored to agents, and provides a taxonomy of the agent artifacts that should be traced throughout the agent lifecycle.

Introduction to AgentOps

LLM agents, autonomous systems powered by LLMs, interact with external environments and evolve over time, presenting significant AI safety concerns due to their nondeterministic behavior and complex operational pipelines. The paper underscores the need for observability in these systems, aiming to provide stakeholders with actionable insights to monitor, detect anomalies, and prevent failures.

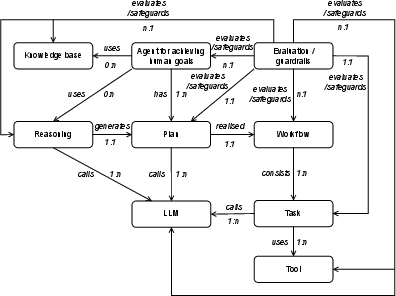

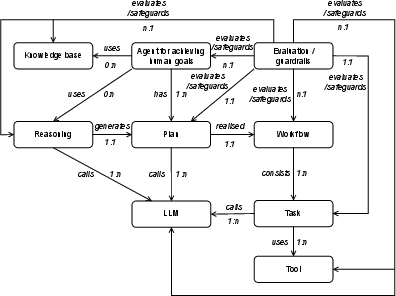

Observability in AgentOps involves systematically tracing agent artifacts and data, such as goals, plans, and tools, throughout operational pipelines (Figure 2). This approach contrasts with traditional DevOps tools, which tend to focus on LLM-specific metrics and miss agent-specific aspects of observability.
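To make the idea of tracing goals, plans, and tools concrete, the following is a minimal sketch of an in-memory tracer. It is not taken from the paper or from any existing AgentOps tool: the `Span` and `Tracer` classes, the `kind` labels, and the example attribute names are all assumptions chosen for illustration.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Any, Dict, Iterator, List, Optional


@dataclass
class Span:
    """One traced step. All field names are illustrative assumptions."""
    name: str
    kind: str  # e.g. "goal", "plan", "tool"
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    parent_id: Optional[str] = None
    attributes: Dict[str, Any] = field(default_factory=dict)
    start: float = 0.0
    end: float = 0.0


class Tracer:
    """Collects finished spans in memory and tracks nesting with a stack."""

    def __init__(self) -> None:
        self.spans: List[Span] = []
        self._stack: List[Span] = []

    @contextmanager
    def span(self, name: str, kind: str, **attributes: Any) -> Iterator[Span]:
        # The span currently on top of the stack becomes the parent.
        parent = self._stack[-1].span_id if self._stack else None
        current = Span(name=name, kind=kind, parent_id=parent,
                       attributes=dict(attributes))
        current.start = time.time()
        self._stack.append(current)
        try:
            yield current
        finally:
            # Record the span even if the traced step raised an exception.
            current.end = time.time()
            self._stack.pop()
            self.spans.append(current)


tracer = Tracer()
with tracer.span("book_trip", kind="goal", text="Book a flight to Sydney"):
    with tracer.span("draft_plan", kind="plan", steps=["search", "compare", "book"]):
        with tracer.span("flight_search", kind="tool", query="SYD") as tool:
            tool.attributes["result_count"] = 3  # stand-in for a real tool call

for s in tracer.spans:
    print(s.kind, s.name, "parent:", s.parent_id)
```

Spans are stored when they finish, so the innermost tool call is recorded first; the `parent_id` links are what let a viewer rebuild the goal, plan, and tool hierarchy afterwards.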

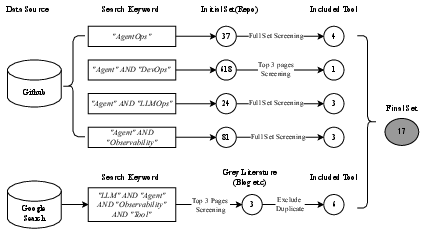

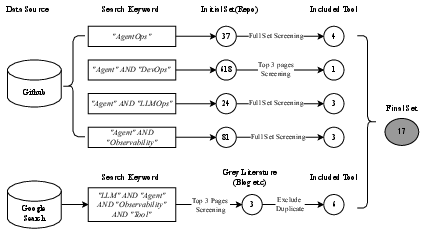

Figure 1: Search Process of AgentOps Relevant Tools.

The paper's methodology includes a systematic mapping study of existing tools related to AgentOps (Figure 1). The study identifies 17 relevant tools and characterizes each by attributes such as GitHub stars and key features. Notable tools include AgentOps, Langfuse, and Datadog, which offer functionality such as monitoring, tracing, and prompt management.

Figure 2: Entity-Relationship Model for Agent Artifacts.

Taxonomy of AgentOps

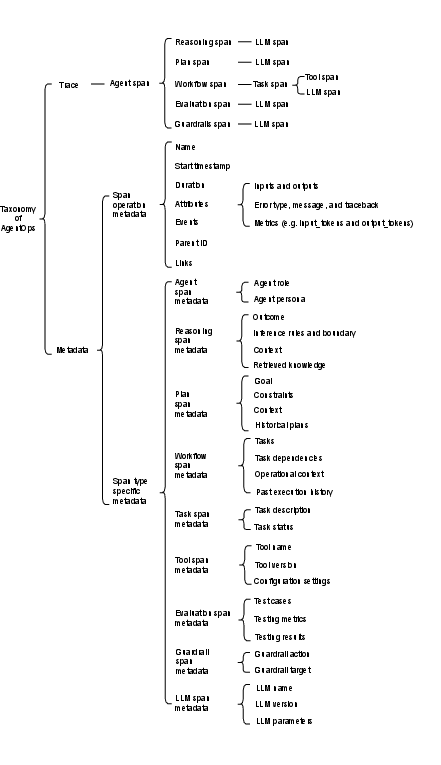

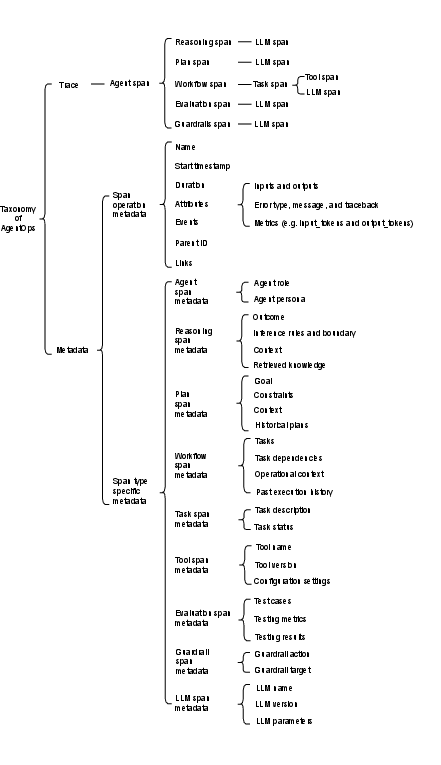

The taxonomy proposed in the paper delineates a structured approach to trace agent artifacts. It includes several layers, such as:

- Agent Spans: Define the agent's role and interaction style.

- Reasoning Spans: Capture the agent's context, retrieved knowledge, and inference rules.

- Planning Spans: Record the agent's goals, constraints, and historical plans.

- Workflow and Task Spans: Detail task execution and tool interaction within workflows.

- Evaluation Spans: Assess the agent's performance against predefined metrics.

- Guardrail Spans: Ensure AI safety by enforcing operational constraints.

- LLM Spans: Document interactions with the LLM, detailing configurations and responses.

This taxonomy provides a reference template for developers and supports a standardized approach to implementing AgentOps tools with monitoring, logging, and analytics capabilities (Figure 3).

Figure 3: Taxonomy of AgentOps.
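The span categories listed above could be represented in code roughly as follows. This is a sketch under stated assumptions rather than the paper's schema: the `SpanType` enum splits "Workflow and Task Spans" into two members for convenience, and `TaxonomySpan`, both helper functions, and every attribute key in the example trace are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Dict, List


class SpanType(Enum):
    """Span categories named in the taxonomy summary above."""
    AGENT = "agent"
    REASONING = "reasoning"
    PLANNING = "planning"
    WORKFLOW = "workflow"
    TASK = "task"
    EVALUATION = "evaluation"
    GUARDRAIL = "guardrail"
    LLM = "llm"


@dataclass
class TaxonomySpan:
    span_type: SpanType
    name: str
    # Attribute keys are illustrative, not defined by the paper.
    attributes: Dict[str, Any] = field(default_factory=dict)


def count_by_type(spans: List[TaxonomySpan]) -> Dict[str, int]:
    """Count spans per category, e.g. as input to a monitoring dashboard."""
    counts: Dict[str, int] = {}
    for span in spans:
        key = span.span_type.value
        counts[key] = counts.get(key, 0) + 1
    return counts


def triggered_guardrails(spans: List[TaxonomySpan]) -> List[str]:
    """Return the names of guardrail spans marked as triggered."""
    return [
        span.name
        for span in spans
        if span.span_type is SpanType.GUARDRAIL and span.attributes.get("triggered")
    ]


# A made-up trace for a travel-booking agent.
trace = [
    TaxonomySpan(SpanType.AGENT, "travel_agent", {"role": "booking assistant"}),
    TaxonomySpan(SpanType.REASONING, "interpret_request", {"context": "user wants a cheap fare"}),
    TaxonomySpan(SpanType.PLANNING, "draft_plan", {"goal": "book flight", "constraints": ["budget <= 500"]}),
    TaxonomySpan(SpanType.TASK, "flight_search", {"tool": "search_api"}),
    TaxonomySpan(SpanType.LLM, "rank_options", {"temperature": 0.2}),
    TaxonomySpan(SpanType.GUARDRAIL, "spend_limit", {"triggered": True}),
    TaxonomySpan(SpanType.EVALUATION, "task_success", {"score": 0.0}),
]

print(count_by_type(trace))
print(triggered_guardrails(trace))
```

Tagging each span with a category lets simple queries, such as listing triggered guardrails, run over a trace without parsing free-form logs.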

Practical Implications and Future Work

The AgentOps paradigm has the potential to improve the observability of LLM agents. It addresses AI safety by giving stakeholders a taxonomy and a shared vocabulary for tracking, analyzing, and managing agent artifacts throughout their lifecycle.

The paper suggests the development of a prototype AgentOps tool and the validation of the taxonomy through real-world case studies as future directions. These efforts will further refine the understanding and application of observability in LLM agents, bridging the gap between theoretical concepts and practical implementation.

Conclusion

"AgentOps: Enabling Observability of LLM Agents" presents a vital framework for addressing AI safety in LLM agents through enhanced observability. The comprehensive taxonomy of AgentOps outlined in the paper serves as a valuable foundation for designing and implementing effective observability solutions, ensuring that AI systems operate safely and predictably across various domains.