- The paper reports that augmenting LLMs with Dynamic Evidence Trees (DETs) and data condensation improves their analytical reasoning in intelligence analysis, mainly in how they organize evidence.

- The methodology employs dynamic memory modules and embedding-based retrieval to structure and synthesize scattered evidence into coherent narratives.

- Evaluations show augmented LLMs better organize evidence, though challenges remain in achieving deep speculative reasoning required for advanced analytical tasks.

LLM Augmentations to Support Analytical Reasoning over Multiple Documents

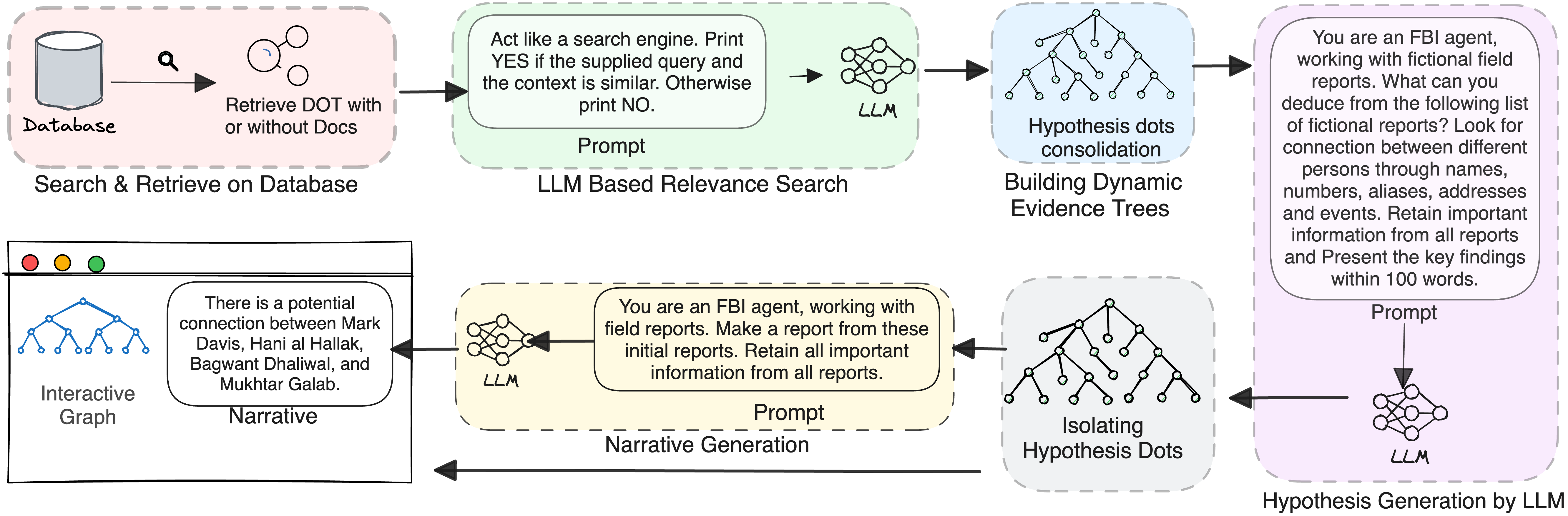

The paper "LLM Augmentations to Support Analytical Reasoning over Multiple Documents" (2411.16116) explores methods to enhance LLMs for intelligence analysis (IA), where tasks involve drawing complex connections from massive textual dossiers. The researchers propose augmenting LLMs with a structured architecture involving Dynamic Evidence Trees (DETs) and data condensation to improve reasoning capabilities, especially when connecting scattered pieces of information, or "dots", to form cohesive narratives.

Introduction to Intelligence Analysis

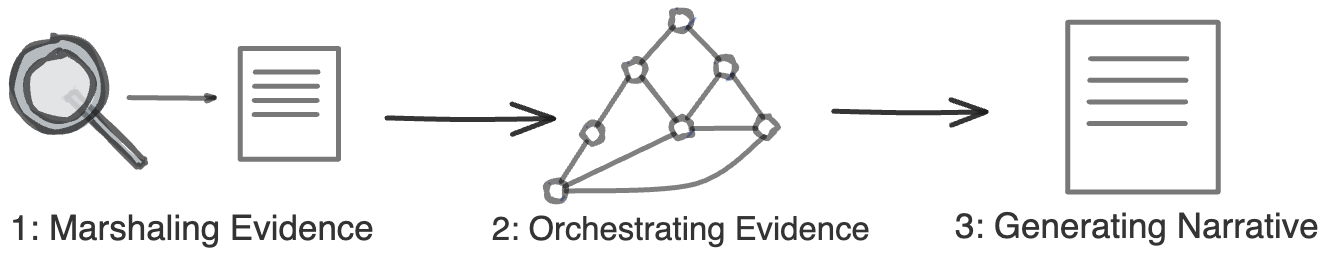

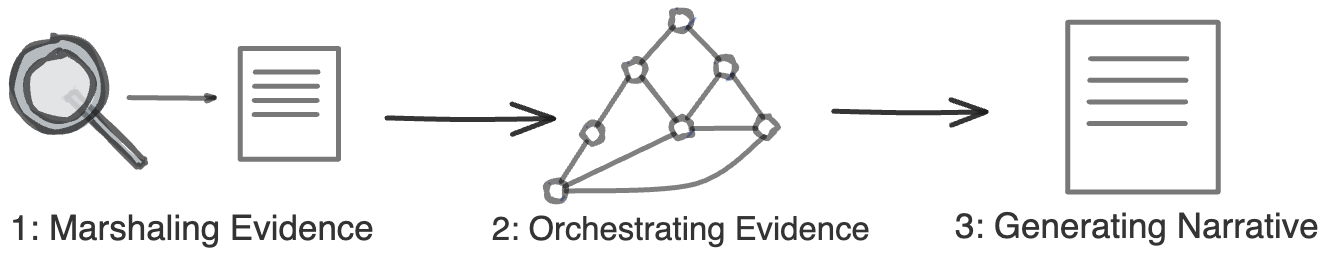

Intelligence analysis (IA) generally involves three main steps: marshaling evidence, orchestrating that evidence into persuasive arguments, and generating a narrative.

Figure 1: Three steps to intelligence analysis (IA).

IA tasks typically require drawing connections between seemingly unrelated entities and events, which demands significant time and effort from human analysts. Because LLMs have substantial generative capabilities, they are being explored as a way to automate or assist parts of this work. The paper examines whether LLMs can support this kind of reasoning and introduces augmentations to address the limitations it finds.

Methodology

The researchers structure their approach around augmenting LLMs with several components to tackle IA tasks.

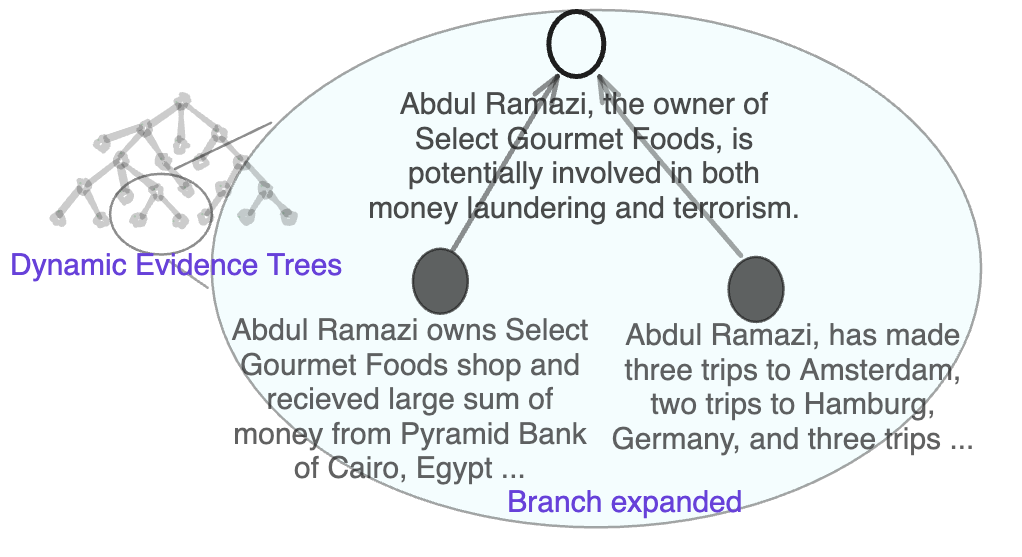

Dynamic Evidence Trees as a Memory Module

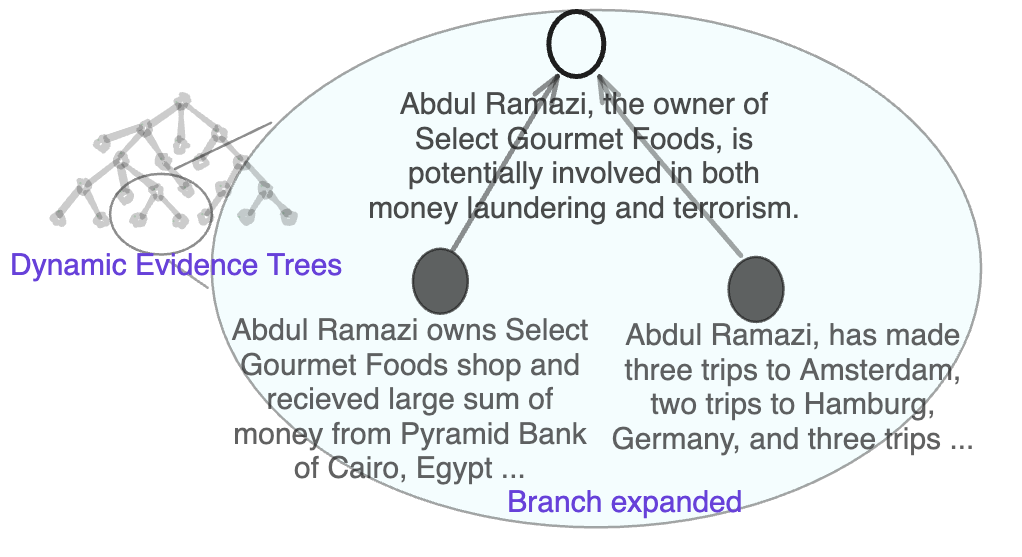

DETs are designed as a memory augmentation that compensates for two LLM limitations: a limited context window and difficulty tracking investigation threads as they evolve.

Figure 2: Regular DETs. Dots: black = document, white = hypothesis.

Each node in a DET represents an information "dot." As evidence accumulates, the tree is extended dynamically: newly parsed dots are combined with retrieved evidence to form new hypotheses, which lets the system organize and update its threads of investigation.
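To make the structure concrete, here is a minimal sketch of a tree of dots. It is an illustration rather than the paper's implementation: the names `Dot`, `merge_into_hypothesis`, and `leaves` are hypothetical, and it assumes that a hypothesis node sits above the dots it was synthesized from and that the hypothesis text is supplied by the caller (in the paper's setting an LLM would presumably write it).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Dot:
    """One node in the tree: a document dot or a synthesized hypothesis."""
    text: str
    kind: str  # "document" or "hypothesis" (assumed labels)
    children: List["Dot"] = field(default_factory=list)


def merge_into_hypothesis(dots: List[Dot], summary: str) -> Dot:
    """Create a hypothesis node whose children are the supporting dots."""
    return Dot(text=summary, kind="hypothesis", children=list(dots))


def leaves(node: Dot) -> List[Dot]:
    """Collect the childless dots that ultimately support a node."""
    if not node.children:
        return [node]
    found: List[Dot] = []
    for child in node.children:
        found.extend(leaves(child))
    return found


# Two document dots support a first hypothesis; a third dot refines it.
a = Dot("Person A wired money to Company X", "document")
b = Dot("Company X leased a warehouse", "document")
h1 = merge_into_hypothesis([a, b], "Person A may be funding the warehouse")
c = Dot("Person A visited the warehouse", "document")
h2 = merge_into_hypothesis([h1, c], "Person A likely controls the warehouse")
```

Walking from `h2` down to its leaves recovers the three document dots behind the hypothesis, which is the kind of traceability a tree-shaped memory offers over a flat list of notes.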

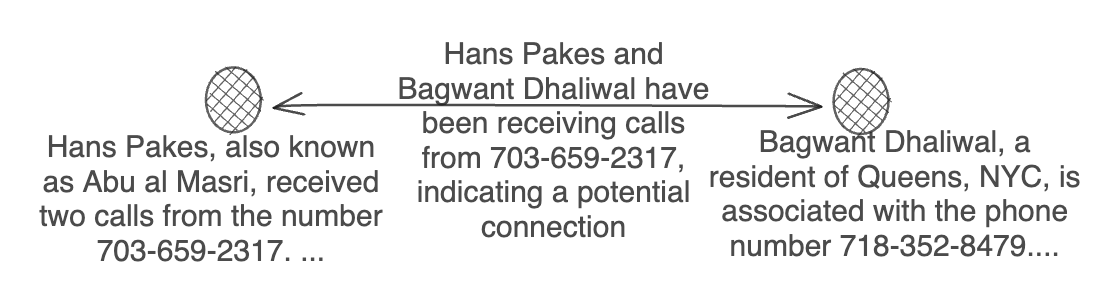

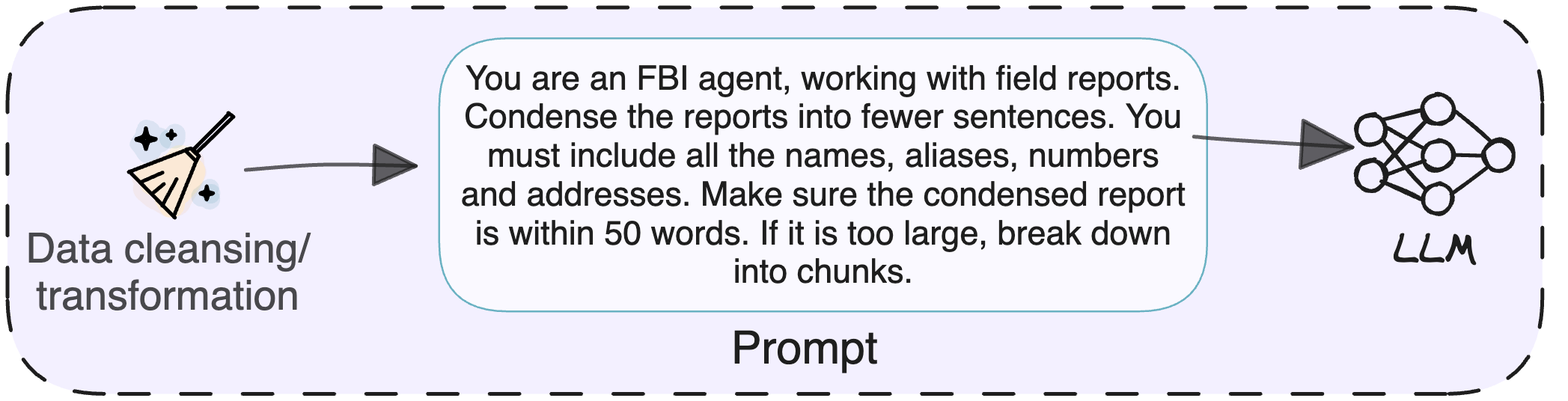

Data Condensation and Retrieval

To manage context length limitations intrinsic to LLMs, the paper proposes data condensation, where reports are distilled into compact information chunks.

Figure 3: Data condensation and dot extraction.
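The condensation step can be sketched as follows. This is a simplified illustration under stated assumptions, not the paper's procedure: `condense_report` and `first_sentence` are hypothetical names, paragraphs are assumed to be separated by blank lines, and the summarizer is passed in as a plain function so that a trivial stand-in can replace the LLM call.

```python
from typing import Callable, List


def condense_report(
    report: str,
    summarize: Callable[[str], str],
    max_chars: int = 400,
) -> List[str]:
    """Split a report into paragraphs and condense each one into a short dot."""
    dots: List[str] = []
    for paragraph in report.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue  # skip empty paragraphs
        dot = summarize(paragraph).strip()
        dots.append(dot[:max_chars])  # hard cap on dot length (assumed limit)
    return dots


def first_sentence(text: str) -> str:
    """Stand-in for an LLM summarizer: keep only the first sentence."""
    end = text.find(". ")
    return text if end == -1 else text[: end + 1]


report = "Alpha met Beta. They spoke for an hour.\n\nGamma left town."
dots = condense_report(report, first_sentence)
```

In a real pipeline `summarize` would wrap a model call; keeping it as a parameter makes the chunking logic testable without one.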

The architecture also uses LLM-augmented retrieval: an embedding-based search proposes candidate dots, and the LLM then filters those candidates using its own reasoning, so that relevant subsets of information dots are identified and connected within DETs.
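A two-stage retrieval of this kind might look like the sketch below. Everything here is an assumption made for illustration: the bag-of-words `embed` function stands in for a learned embedding model, `is_relevant` stands in for the LLM filtering step, and `top_k` is a hypothetical parameter.

```python
import math
from collections import Counter
from typing import Callable, Dict, List


def embed(text: str) -> Dict[str, int]:
    """Toy bag-of-words embedding; a real system would use a learned model."""
    return Counter(text.lower().split())


def cosine(u: Dict[str, int], v: Dict[str, int]) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(u[t] * v[t] for t in u if t in v)
    norm_u = math.sqrt(sum(x * x for x in u.values()))
    norm_v = math.sqrt(sum(x * x for x in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def retrieve(
    query: str,
    dots: List[str],
    is_relevant: Callable[[str, str], bool],
    top_k: int = 3,
) -> List[str]:
    """Rank dots by similarity to the query, then keep those the filter accepts."""
    q = embed(query)
    ranked = sorted(dots, key=lambda d: cosine(q, embed(d)), reverse=True)
    return [d for d in ranked[:top_k] if is_relevant(query, d)]
```

The similarity search is cheap and broad, while the filter is expensive and precise, which is why the filter only sees the top-ranked candidates.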

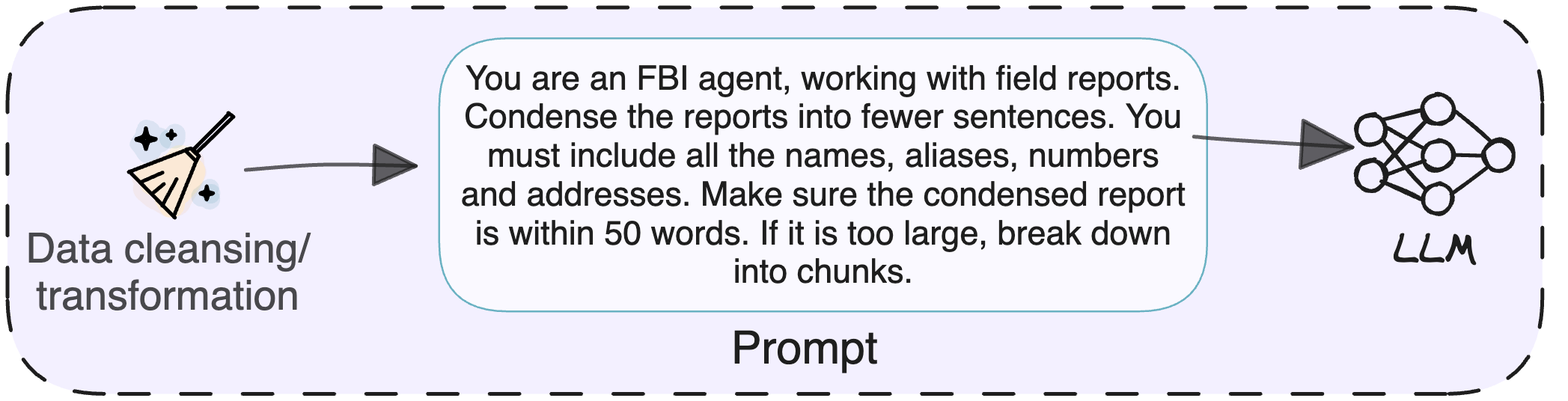

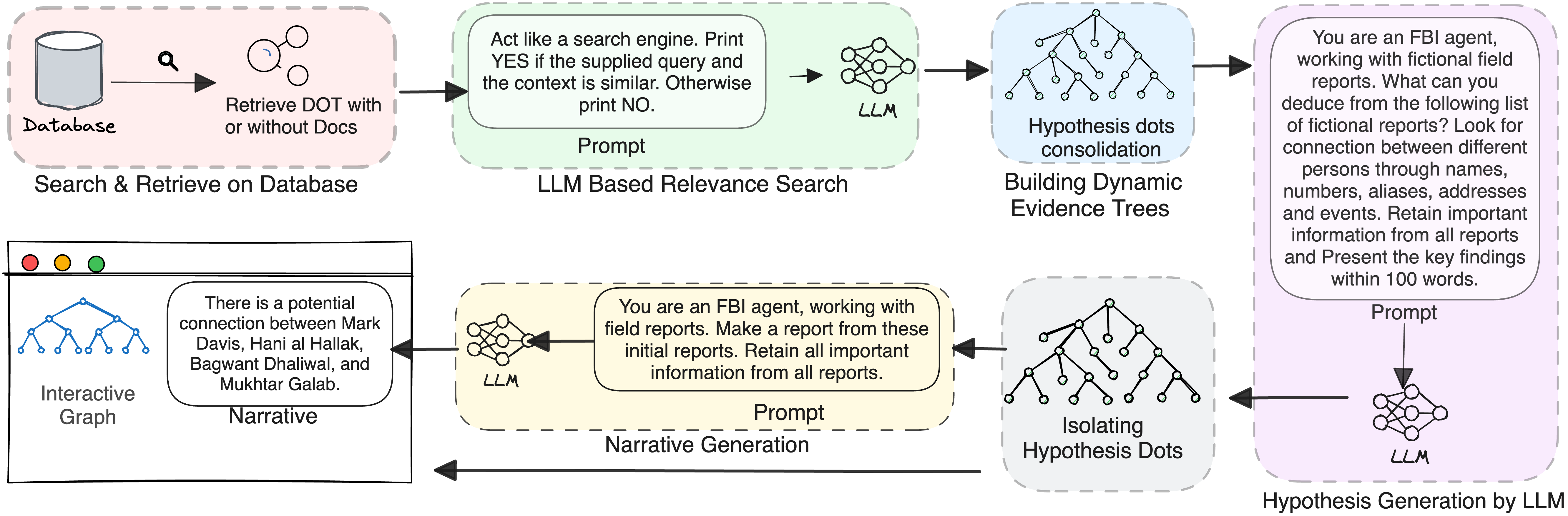

Integrated Augmented Architecture

The complete architecture integrates DETs with the retrieval and condensation modules, processing documents sequentially to build structured evidence trees capable of supporting more robust narrative generation.

Figure 4: All augmentations together with retrieve and merge.
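The sequential flow can be sketched as a single loop. This is a rough outline under assumptions, not the paper's architecture: `process_documents` is a hypothetical name, the three stages are passed in as plain functions, and the memory is a flat list rather than a tree to keep the example short.

```python
from typing import Callable, List


def process_documents(
    documents: List[str],
    condense: Callable[[str], List[str]],
    retrieve: Callable[[str, List[str]], List[str]],
    hypothesize: Callable[[str, List[str]], str],
) -> List[str]:
    """Process documents in order, growing a memory of dots and hypotheses."""
    memory: List[str] = []
    for document in documents:
        for dot in condense(document):
            # Look up earlier entries related to the new dot.
            related = retrieve(dot, memory)
            if related:
                # Merge the new dot with related evidence into a hypothesis.
                memory.append(hypothesize(dot, related))
            memory.append(dot)
    return memory
```

Because documents are handled one at a time, each stage only needs to see the new dot and the retrieved subset of memory, never the whole dossier at once.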

Evaluation

Evaluations were conducted using datasets popular in IA training and analytics competitions. The paper assesses key research questions about LLM capabilities in solving IA problems using these augmentations:

- Can LLMs solve IA problems on their own?

- Do augmentations help?

- What is the effect of different LLM parameters such as temperature and context length on performance?

Results indicate that augmented LLMs outperform unaugmented ones, particularly on large datasets, but that orchestrating evidence into convincing analytical narratives remains a significant challenge (see Figure 5 for the performance comparison).

Case Studies and Practical Insights

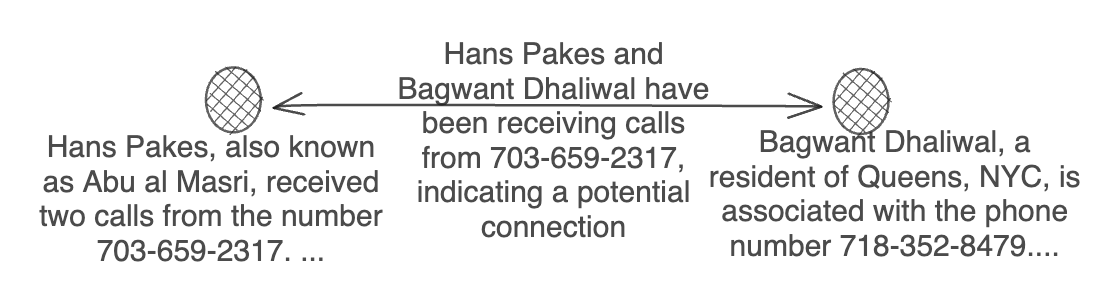

The paper highlights several use cases showing that augmented LLMs can organize related entities and events effectively but struggle with deeper analytical reasoning. The paper attributes this limitation to the nature of LLMs and to the complexity of IA tasks, which call for imaginative reasoning that current LLMs do not readily produce (see Figures 6 and 7).

Figure 6: Sketch of the solution of Crescent dataset.

Figure 7: Small use case for imaginative reasoning.

Conclusion

The research contributes to the ongoing investigation of how LLMs can support complex analytical reasoning by proposing augmentations such as DETs and data condensation. These augmentations improve the usefulness of LLMs in IA, especially for structuring and organizing extensive datasets. There is still room for improvement, particularly in the speculative reasoning that advanced intelligence analysis requires. Future work could refine these augmentations and add stronger reasoning capabilities, potentially combined with external knowledge bases, to narrow the gap in hypothesis building and narrative generation.