Towards Scientific Discovery with Generative AI: Progress, Opportunities, and Challenges

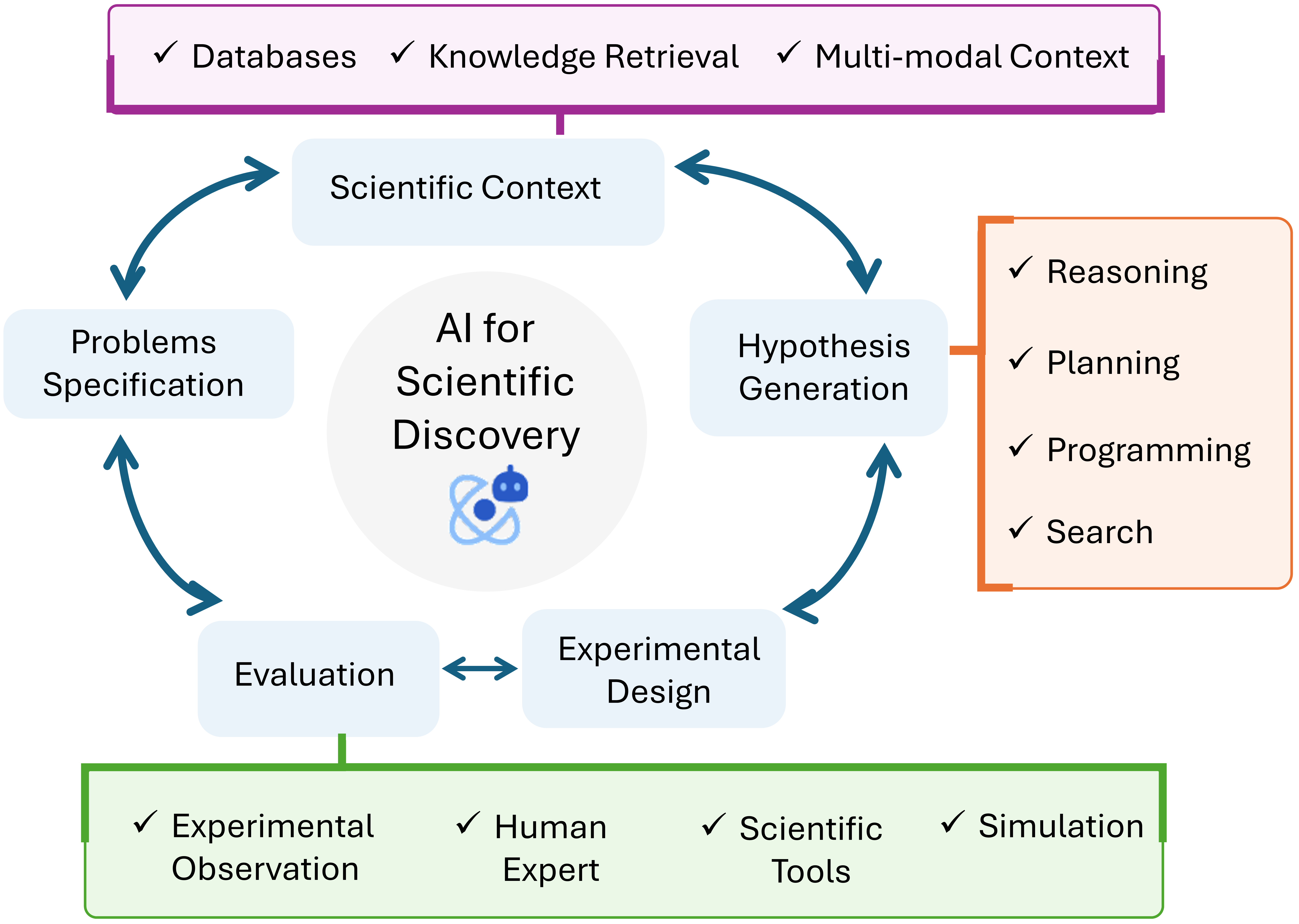

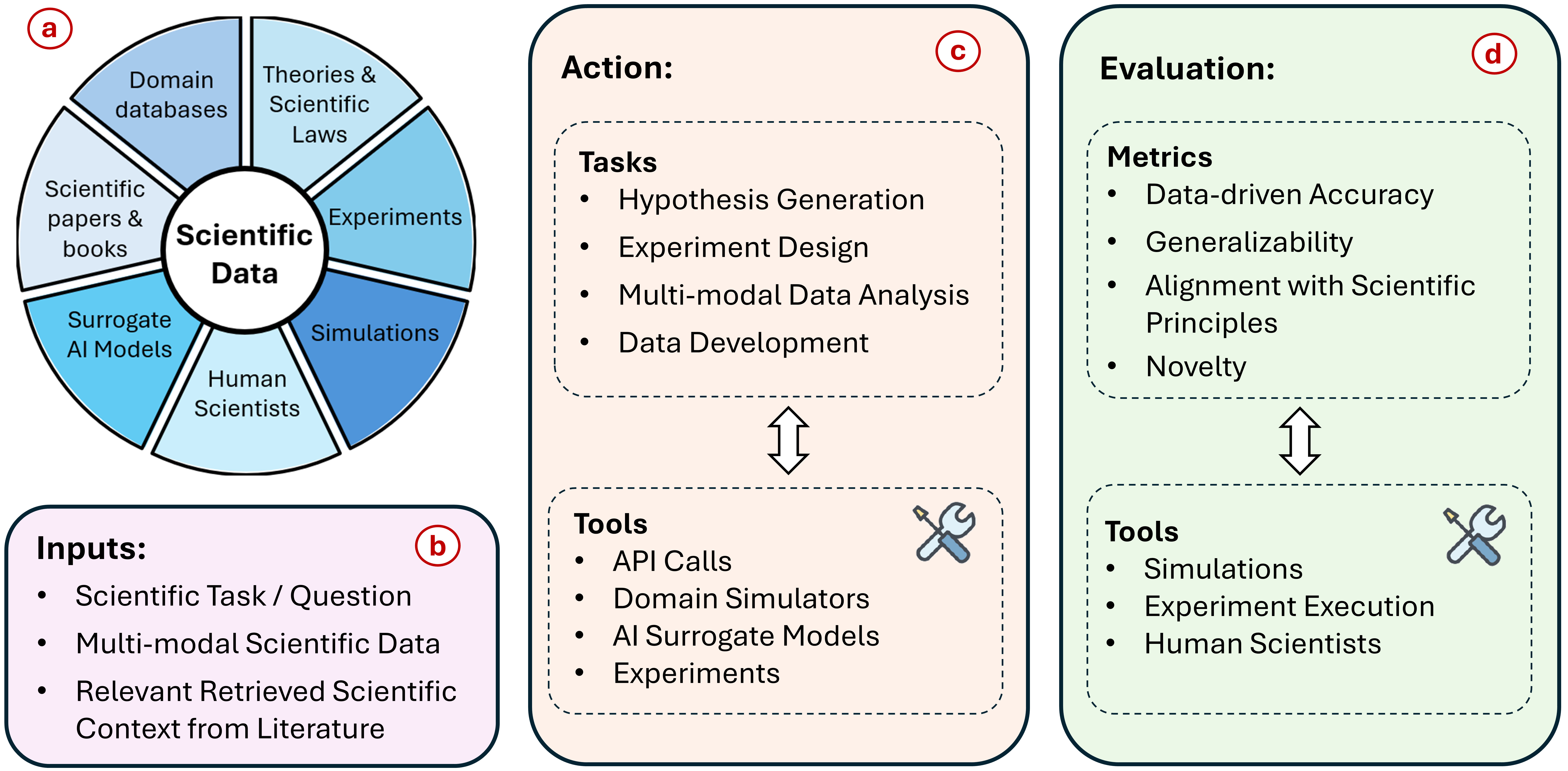

Abstract: Scientific discovery is a complex cognitive process that has driven human knowledge and technological progress for centuries. While AI has made significant advances in automating aspects of scientific reasoning, simulation, and experimentation, we still lack integrated AI systems capable of performing autonomous long-term scientific research and discovery. This paper examines the current state of AI for scientific discovery, highlighting recent progress in LLMs and other AI techniques applied to scientific tasks. We then outline key challenges and promising research directions toward developing more comprehensive AI systems for scientific discovery, including the need for science-focused AI agents, improved benchmarks and evaluation metrics, multimodal scientific representations, and unified frameworks combining reasoning, theorem proving, and data-driven modeling. Addressing these challenges could lead to transformative AI tools to accelerate progress across disciplines towards scientific discovery.

Explain it Like I'm 14

Overview

This paper is about a big idea: using generative AI (like advanced chatbots and other smart models) to help make real scientific discoveries. Scientists do many things: read papers, come up with ideas, design experiments, run tests, and build theories. AI can already help with some of these steps, but we don’t yet have AI systems that can carry out the whole research process on their own over long periods. The authors explain what AI can currently do for science, what’s missing, and how we might build better AI “science agents” that work alongside humans to speed up discovery.

Key Objectives and Questions

The paper asks simple but important questions:

- What has AI recently achieved in helping scientists, and in which areas?

- Why doesn’t AI yet function like a full, reliable “AI scientist”?

- What are the main challenges we need to solve to get there?

- What steps and research directions could lead to AI systems that reason, test, and discover like helpful lab partners?

Methods and Approach

This is a survey and roadmap paper. Instead of reporting one experiment, the authors:

- Reviewed recent research on how AI is used in science (for example, reading and summarizing papers, solving math proofs, planning experiments, discovering equations, and designing new drugs or materials).

- Organized these advances to show what’s working well and where the gaps are.

- Proposed a framework and research directions for building stronger, more complete AI systems for science.

Helpful analogies for technical terms:

- LLMs: Think of them as super-powered reading and writing assistants trained on huge amounts of text. They can answer questions, summarize, and suggest ideas.

- Theorem proving: Like showing your math work step-by-step to prove something is always true, not just true for examples.

- Experimental design: Planning how to test an idea—what to change, what to measure, and how to know if the result makes sense.

- Symbolic regression (equation discovery): Figuring out a formula that explains data points, like guessing the rule behind a graph.

- Multimodal data: Science isn’t just text; it’s pictures, graphs, tables, numbers, and code. Multimodal AI tries to understand all these “types” together.

- Latent space: A compact “map” learned by AI that makes it easier to search for good ideas (like finding the best path through a huge maze).

- Benchmarks: Standard tests used to measure how good AI is at specific tasks.
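To make the "guessing the rule behind a graph" idea from the symbolic regression analogy concrete, here is a minimal sketch in Python. It is a toy illustration, not the method of any system covered in the paper: the data, the small list of candidate formulas, and the function names (`fit_coefficient`, `discover_formula`) are all assumptions made up for this example. Real equation-discovery tools search far larger spaces of formulas.

```python
import math

# Hypothetical toy data secretly generated by the rule y = 3 * x**2.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0 * x ** 2 for x in xs]

# A tiny, hand-picked library of formula shapes (an assumption for this
# sketch). Each candidate has the form y = a * basis(x) with one unknown a.
CANDIDATES = {
    "a * x": lambda x: x,
    "a * x**2": lambda x: x ** 2,
    "a * x**3": lambda x: x ** 3,
    "a * sqrt(x)": math.sqrt,
    "a * log(x)": math.log,
}


def fit_coefficient(basis, xs, ys):
    """Return the least-squares value of a for y = a * basis(x)."""
    features = [basis(x) for x in xs]
    numerator = sum(f * y for f, y in zip(features, ys))
    denominator = sum(f * f for f in features)
    return numerator / denominator


def mean_squared_error(basis, a, xs, ys):
    """Average squared gap between the formula's predictions and the data."""
    return sum((a * basis(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def discover_formula(xs, ys):
    """Fit every candidate shape and keep the one with the smallest error."""
    best = None
    for name, basis in CANDIDATES.items():
        a = fit_coefficient(basis, xs, ys)
        err = mean_squared_error(basis, a, xs, ys)
        if best is None or err < best[2]:
            best = (name, a, err)
    return best


name, a, err = discover_formula(xs, ys)
print(f"best formula: {name} with a = {a:.3f} (error {err:.2e})")
```

On this toy data the search should pick the `a * x**2` shape with `a` close to 3, because that candidate matches how the data was generated.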

Main Findings

The authors summarize where AI has made real progress and what still needs work.

Recent progress:

- Literature analysis and brainstorming: Specialized LLMs trained on science papers can quickly find relevant research, summarize findings, answer questions, and even suggest new research ideas. This helps scientists keep up with the flood of publications.

- Theorem proving: AI combined with formal math tools can help turn informal ideas into proper proofs. This matters because strong theories need solid arguments, not just patterns in data.

- Experimental design: AI agents can propose and refine experiments in areas like physics, chemistry, and biology. This can save time and money by narrowing down promising setups before trying them in the lab.

- Data-driven discovery:

  - Drug discovery: AI can search huge spaces of possible molecules to find candidates for new medicines faster than manual methods.

  - Equation discovery: AI can find mathematical laws hidden in data, helping scientists understand underlying rules.

  - Materials discovery: AI can suggest new materials with desired properties, and guide the steps to test and make them.

Key challenges and opportunities:

- Better benchmarks and evaluation: Current tests often check whether AI can rediscover known facts or solve textbook problems. A model can pass such tests by memorizing answers it has already seen rather than by reasoning. We need tests that reward true novelty, generalizability (working in new situations), and alignment with scientific principles (not breaking known laws of physics).

- Science-focused AI agents: Instead of passive tools, we need active agents that can reason, plan, use specialized scientific tools, run simulations, check their work, and collaborate with humans like lab teammates.

- Multimodal scientific representations: Science uses text, images, graphs, numbers, code, and more. AI needs to understand and connect all these forms to reason well.

- Unifying theory and data: The best science connects data-driven patterns with solid theoretical reasoning. We need frameworks that combine neural models (great with patterns) with symbolic logic and theorem proving (great with exact reasoning), and that can handle uncertainty.
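The benchmark concern above (tests that memorization can pass) can be illustrated with a small Python sketch. Everything here is a made-up assumption for illustration, not a benchmark from the paper: the falling-object rule used as the "known law", the two pretend models, and the 1% tolerance. The point is only that scoring on situations the model has never seen separates remembering from understanding.

```python
G = 9.8  # assumed gravitational acceleration in m/s^2


def true_law(t):
    """Distance fallen after t seconds: d = 0.5 * g * t**2."""
    return 0.5 * G * t ** 2


# Times the models were "trained" on, and new times held back for testing.
seen_times = [1.0, 2.0, 3.0]
unseen_times = [4.0, 5.0, 6.0]
training_data = {t: true_law(t) for t in seen_times}


def memorizer(t):
    """Pretend model that only recalls the stored answer nearest to t."""
    nearest = min(training_data, key=lambda s: abs(s - t))
    return training_data[nearest]


# Pretend model that learned the rule d = a * t**2 by least squares.
_num = sum(t ** 2 * d for t, d in training_data.items())
_den = sum(t ** 4 for t in training_data)
LEARNED_A = _num / _den


def rule_learner(t):
    """Pretend model that applies the fitted rule to any time t."""
    return LEARNED_A * t ** 2


def score(model, times, tolerance=0.01):
    """Fraction of predictions within 1% of the true value."""
    hits = sum(
        abs(model(t) - true_law(t)) <= tolerance * true_law(t) for t in times
    )
    return hits / len(times)


for label, model in [("memorizer", memorizer), ("rule learner", rule_learner)]:
    print(label, "seen:", score(model, seen_times),
          "unseen:", score(model, unseen_times))
```

Both pretend models get a perfect score on the seen times, but only the rule learner keeps that score on the unseen ones. A test built only from already-known cases would rate the two as equally good.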

Why these results matter:

- They show that AI is already helpful in many parts of the scientific process.

- They also highlight what’s missing and how to bridge the gap toward AI that truly accelerates discovery, not just automates simple tasks.

Implications and Potential Impact

If we tackle the challenges the authors describe, we could build AI systems that:

- Work as trustworthy research partners: They would help read and organize knowledge, propose testable ideas, design careful experiments, run simulations, and interpret outcomes with scientific rigor.

- Discover faster and smarter: By navigating huge search spaces (like all possible molecules or material structures), AI could uncover options humans might miss, speeding up breakthroughs in medicine, energy, climate science, and more.

- Improve scientific quality: By testing for novelty, generalizability, and consistency with known principles, AI agents could help filter out weak ideas and strengthen good ones.

- Reduce costs and barriers: Better AI-guided design and simulation can cut down on trial-and-error in labs, making research more efficient and accessible.

Bottom line: Fully autonomous “AI scientists” may be far away, but strong, science-focused AI assistants are within reach. By building better benchmarks, smarter agents, multimodal reasoning tools, and unified theory-plus-data systems, we can create AI that truly helps push the frontiers of human knowledge.
