- The paper introduces a tensor-based semantics for Linear Temporal Logic on Finite Traces (LTLf), used to steer neural network outputs toward satisfying formal logical constraints.

- It presents a differentiable loss function derived from logical specifications and formally verified in Isabelle/HOL; training against this loss reduces constraint violations.

- The approach connects symbolic reasoning with neural optimization, and experiments on trajectory learning tasks show fewer constraint violations.

Introduction

The paper integrates formal methods with deep learning, targeting neurosymbolic learning through a formally verified approach. Using a tensor-based Linear Temporal Logic on Finite Traces (LTLf), it provides a rigorous framework for constrained training in machine learning, with correctness established by formal verification in Isabelle/HOL. The aim is a mathematical guarantee for the implementation itself, bridging the gap between symbolic reasoning and neural computation.

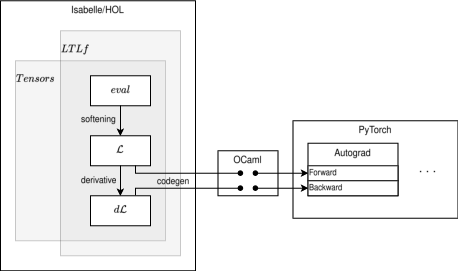

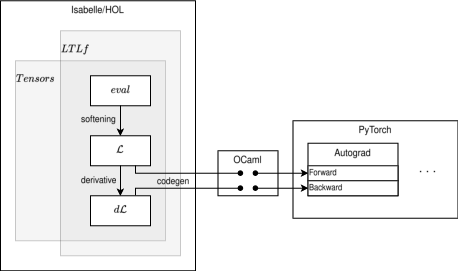

The research introduces a novel tensor-based semantics for LTLf. Finite traces are represented as tensors, the data structure commonly used in deep learning frameworks, and logical constraints are evaluated over them with a smooth semantics. Smoothness gives differentiability, which gradient-based neural learning requires. The semantics are rigorously defined to preserve standard correctness properties, and efficient code is produced through Isabelle/HOL's code generation capabilities.
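To make the idea of a smooth semantics concrete, the following is a minimal sketch and not the paper's formalization. It assumes a loss-style reading in which a value of zero means "satisfied", and it smooths the temporal operators with a log-sum-exp soft maximum controlled by a hypothetical temperature parameter `gamma`. Plain Python lists stand in for tensors, and every name here (`soft_max`, `less_than`, `always`, `eventually`) is illustrative.

```python
import math

def soft_max(values, gamma):
    """Smooth approximation of max(values); tends to the true max as gamma -> 0."""
    m = max(values)  # subtract the max for numerical stability
    return m + gamma * math.log(sum(math.exp((v - m) / gamma) for v in values))

def soft_min(values, gamma):
    """Smooth approximation of min(values), obtained by negating soft_max."""
    return -soft_max([-v for v in values], gamma)

def less_than(trace, dim, bound):
    """Per-step violation of 'state[dim] < bound': zero wherever the step satisfies it."""
    return [max(state[dim] - bound, 0.0) for state in trace]

def always(violations, gamma):
    """'Always phi': the worst step dominates, so take a smooth max over time."""
    return soft_max(violations, gamma)

def eventually(violations, gamma):
    """'Eventually phi': the best step suffices, so take a smooth min over time."""
    return soft_min(violations, gamma)

# A three-step trace of (x, y) coordinates, in the spirit of Figure 1.
trace = [(0.0, 0.0), (1.0, 0.5), (2.0, 1.5)]
y_below_one = less_than(trace, dim=1, bound=1.0)

print(always(y_below_one, gamma=0.01))      # large: the last step has y >= 1
print(eventually(y_below_one, gamma=0.01))  # near zero: some step has y < 1
```

With this particular smoothing, the values only approximate the hard max and min, with an error on the order of `gamma * log(n)` for a trace of length `n`, so a satisfied constraint yields a value close to zero rather than exactly zero.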

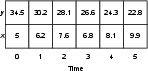

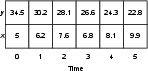

Figure 1: An example trace capturing the x and y coordinates of an agent.

The core contribution is the formalization of a differentiable loss function that is sound with respect to the LTLf semantics. Neural network outputs are interpreted as traces, constraints over those traces are expressed in LTLf, and tensor operations evaluate the constraints across whole batches of traces at once. This links high-level logical specifications to low-level tensor computations, and because the executable code is generated automatically from the verified definitions, the implementation respects the proven logical properties.
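As a rough illustration of evaluating a constraint over a batch of traces, the sketch below computes a smooth loss for a hypothetical "always stay outside a circular region" constraint and averages it over the batch. This is an assumption-laden toy, not the verified loss from the paper: the function names, the circular region, the log-sum-exp smoothing, and the temperature `gamma` are all chosen here for illustration, and nested Python lists stand in for a batched tensor.

```python
import math

def soft_max(values, gamma):
    """Smooth approximation of max(values); tends to the true max as gamma -> 0."""
    m = max(values)
    return m + gamma * math.log(sum(math.exp((v - m) / gamma) for v in values))

def avoid_loss(trace, centre, radius, gamma):
    """Smooth loss for 'always outside the circle' on one trace of (x, y) points.

    The per-step violation is how far the point lies inside the circle,
    and the smooth max over time picks out the worst step.
    """
    violations = [max(radius - math.dist(p, centre), 0.0) for p in trace]
    return soft_max(violations, gamma)

def batch_loss(batch, centre, radius, gamma):
    """Mean constraint loss over a batch of traces."""
    return sum(avoid_loss(t, centre, radius, gamma) for t in batch) / len(batch)

# Two hypothetical network outputs: one trace cuts through the region, one avoids it.
through = [(-2.0, 0.0), (0.0, 0.0), (2.0, 0.0)]
around = [(-2.0, 2.0), (0.0, 2.0), (2.0, 2.0)]

print(avoid_loss(through, (0.0, 0.0), 1.0, gamma=0.01))  # close to the radius
print(avoid_loss(around, (0.0, 0.0), 1.0, gamma=0.01))   # close to zero
print(batch_loss([through, around], (0.0, 0.0), 1.0, gamma=0.01))
```

In a tensor framework the same computation would be expressed as batched operations so that automatic differentiation can propagate gradients from the loss back to the network parameters.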

Experimental Validation

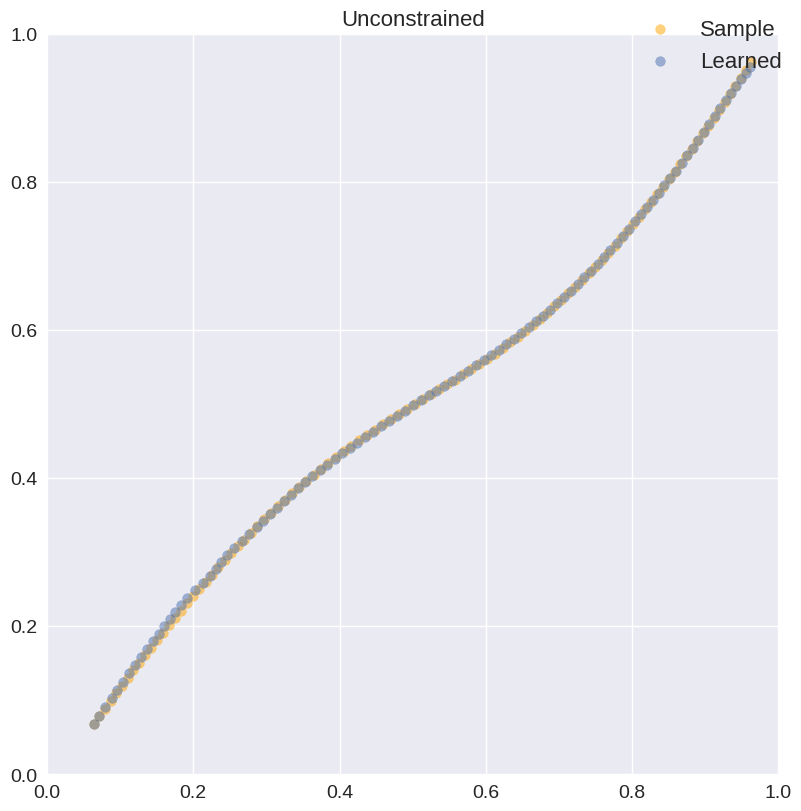

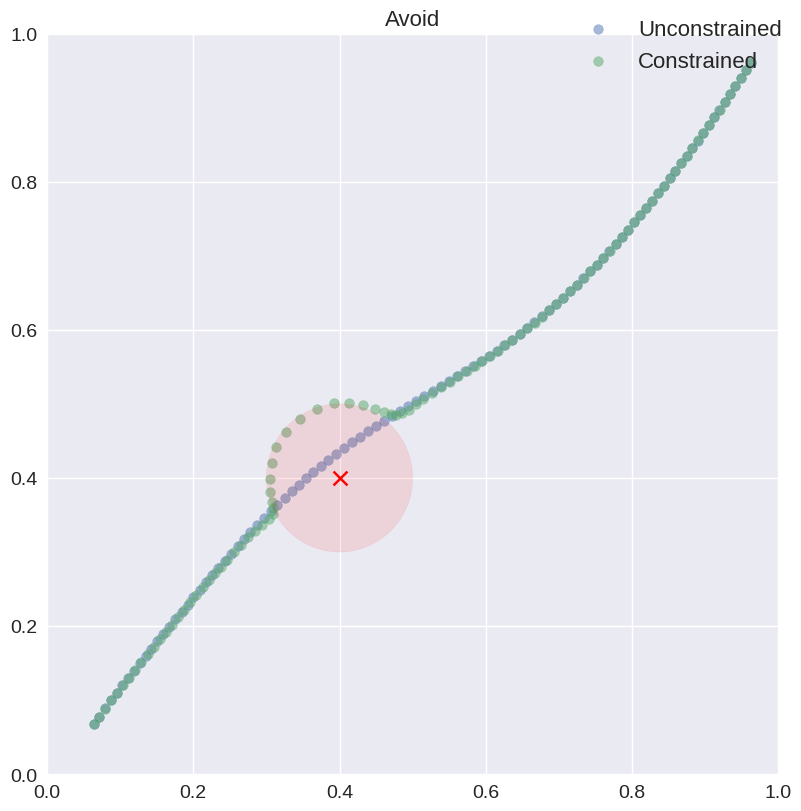

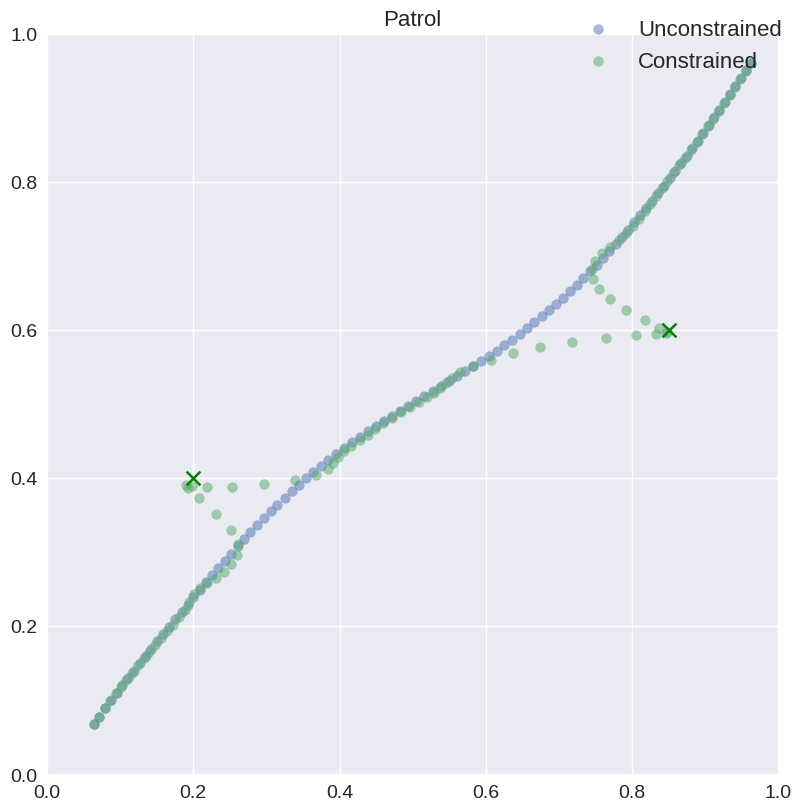

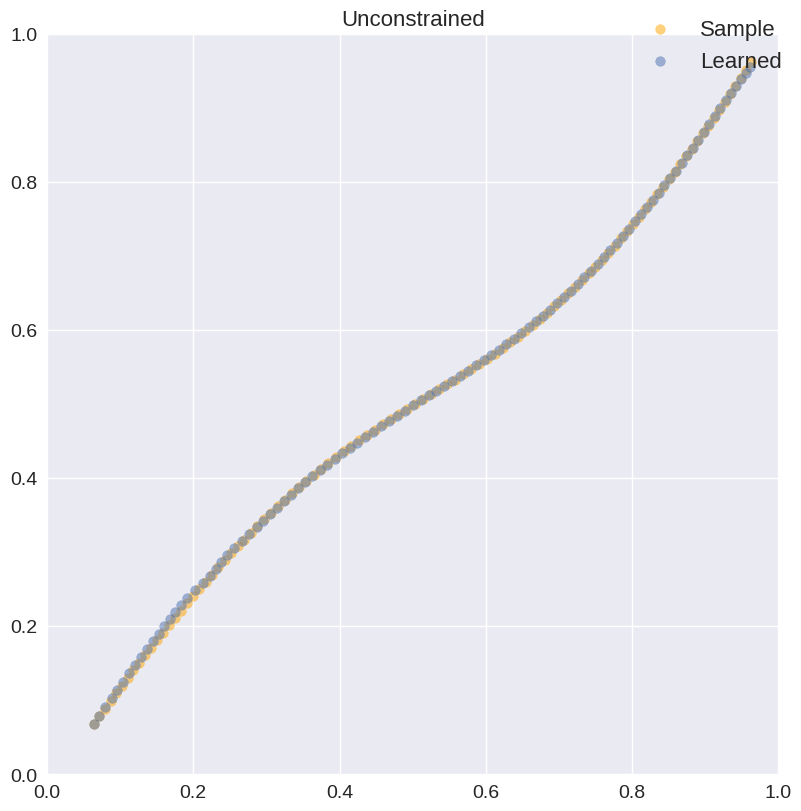

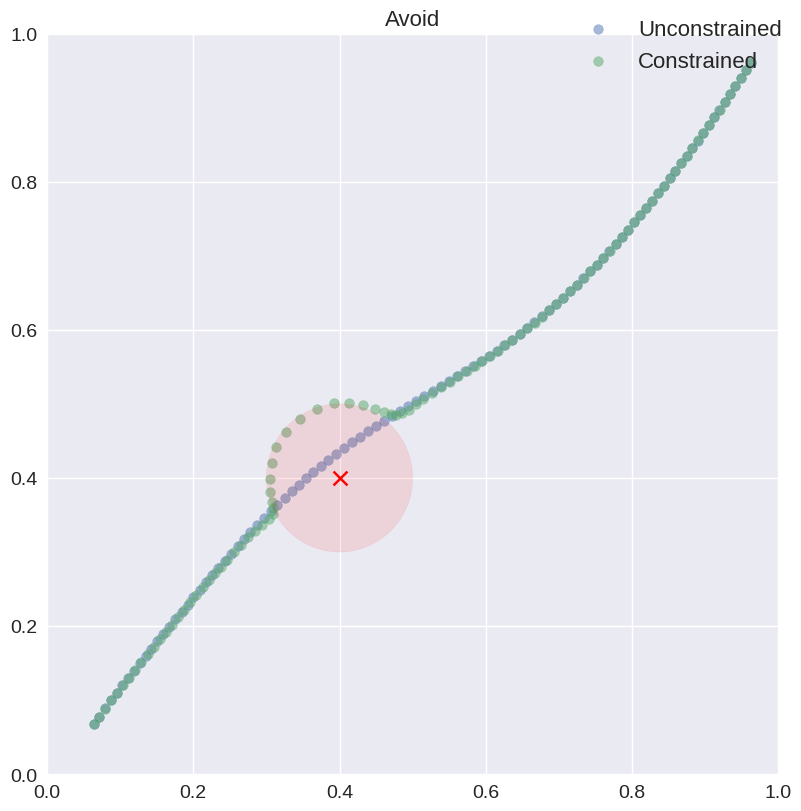

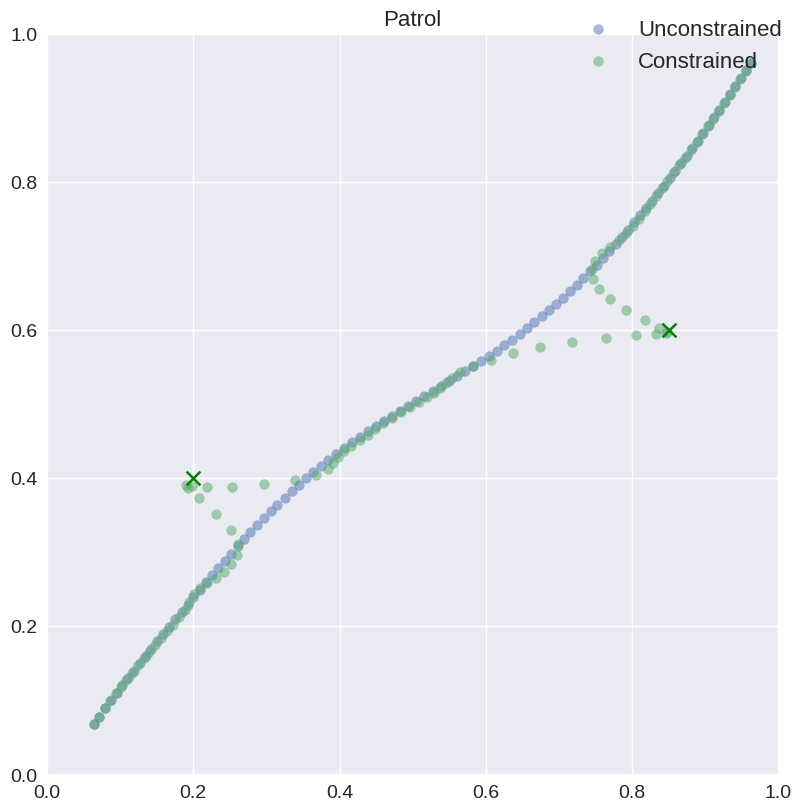

The framework is evaluated on several trajectory learning tasks in which logical constraints are combined with neural optimization. The experiments show that the neurosymbolic approach reduces constraint violations, which matters in tasks such as motion planning where precision and reliability are required.
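The mechanism by which a constraint loss reduces violations during optimization can be seen in a small toy, which is not one of the paper's experiments and does not reproduce its results. It assumes a three-waypoint trajectory, a demonstration that passes through a circular region, an imitation term (squared distance to the demonstration), and a smooth "always avoid" term with equal weight. Gradients are approximated by central finite differences purely to keep the sketch dependency-free; all names and constants are hypothetical.

```python
import math

def soft_max(values, gamma):
    """Smooth approximation of max(values); tends to the true max as gamma -> 0."""
    m = max(values)
    return m + gamma * math.log(sum(math.exp((v - m) / gamma) for v in values))

def violation(point, centre, radius):
    """How far a point lies inside the circle (zero if outside)."""
    return max(radius - math.dist(point, centre), 0.0)

def total_loss(flat, demo, centre, radius, gamma, weight):
    """Imitation loss plus weighted smooth 'always avoid' loss on flat [x0, y0, x1, y1, ...]."""
    points = [(flat[i], flat[i + 1]) for i in range(0, len(flat), 2)]
    imitation = sum((a - b) ** 2 for a, b in zip(flat, demo))
    constraint = soft_max([violation(p, centre, radius) for p in points], gamma)
    return imitation + weight * constraint

def numeric_grad(f, xs, h=1e-5):
    """Central finite-difference gradient; a stand-in for automatic differentiation."""
    grad = []
    for i in range(len(xs)):
        up = xs[:i] + [xs[i] + h] + xs[i + 1:]
        down = xs[:i] + [xs[i] - h] + xs[i + 1:]
        grad.append((f(up) - f(down)) / (2 * h))
    return grad

demo = [-2.0, 0.2, 0.0, 0.2, 2.0, 0.2]  # demonstration crossing the region
centre, radius = (0.0, 0.0), 1.0

def loss(xs):
    return total_loss(xs, demo, centre, radius, gamma=0.01, weight=1.0)

params = list(demo)  # start from the demonstration itself
for _ in range(100):
    grad = numeric_grad(loss, params)
    params = [p - 0.1 * g for p, g in zip(params, grad)]

before = violation((demo[2], demo[3]), centre, radius)
after = violation((params[2], params[3]), centre, radius)
print(before, after)
```

In this toy the middle waypoint is pushed away from the centre of the region until the imitation and constraint gradients balance, so its violation shrinks but does not vanish; a larger constraint weight would trade imitation accuracy for stricter avoidance.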

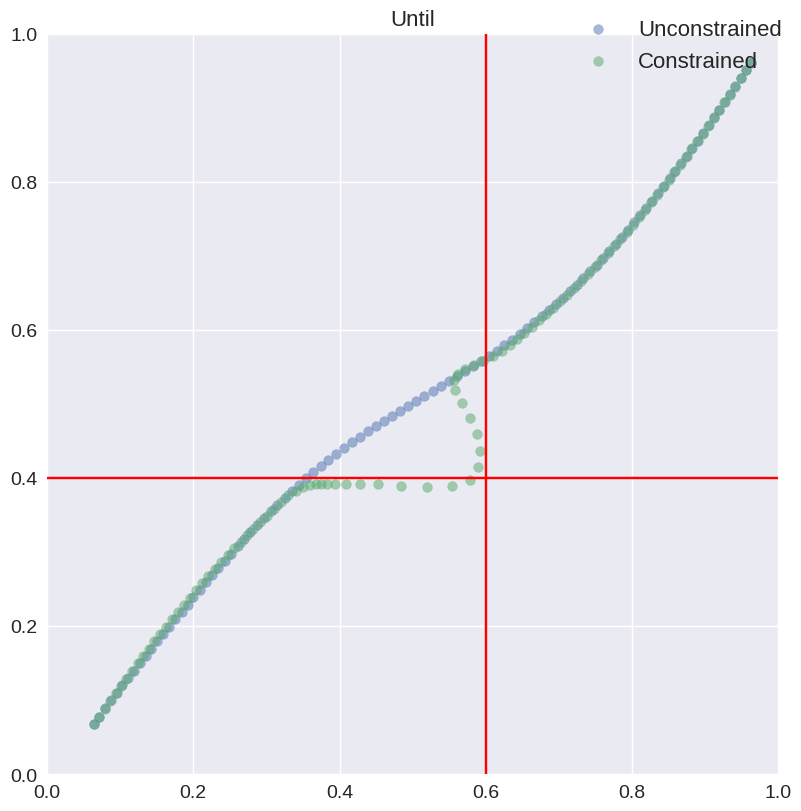

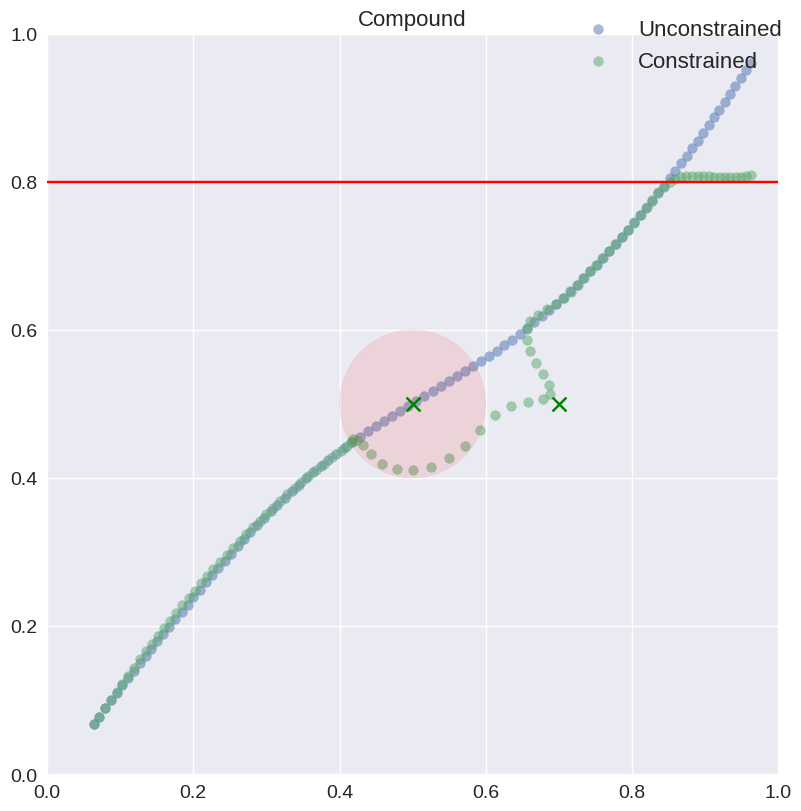

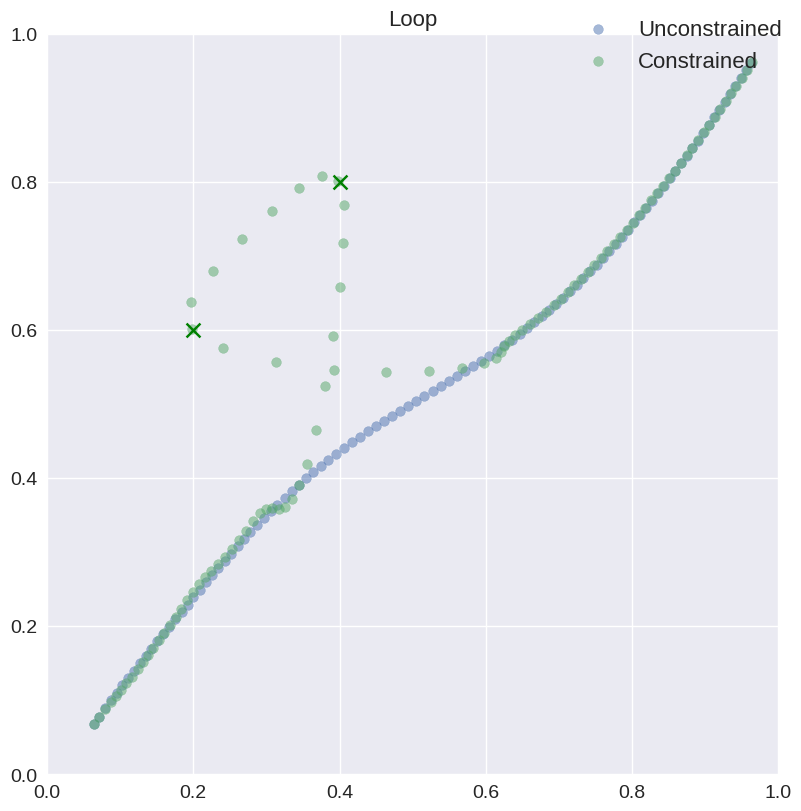

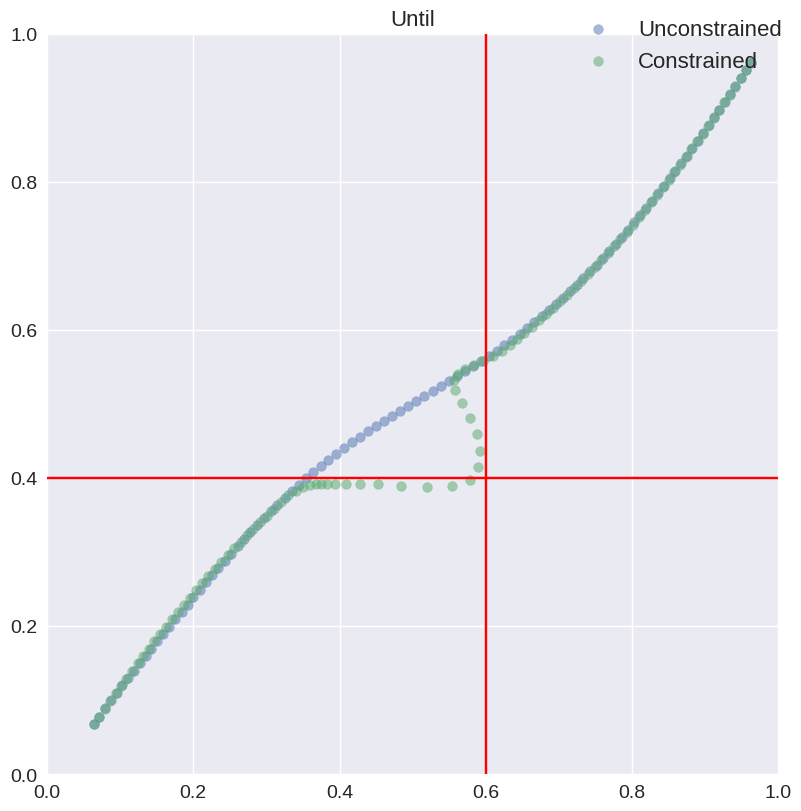

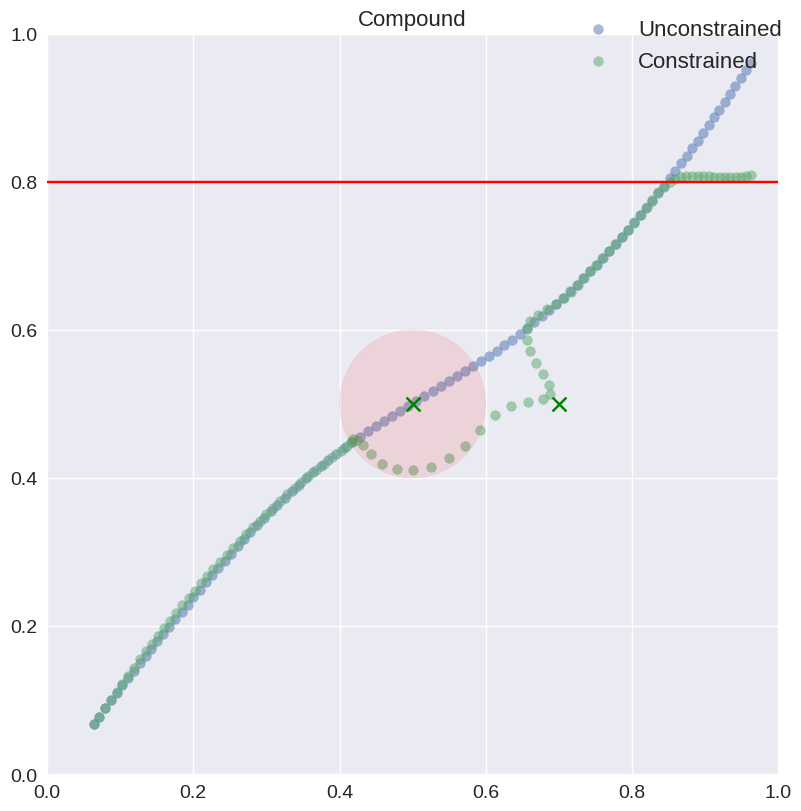

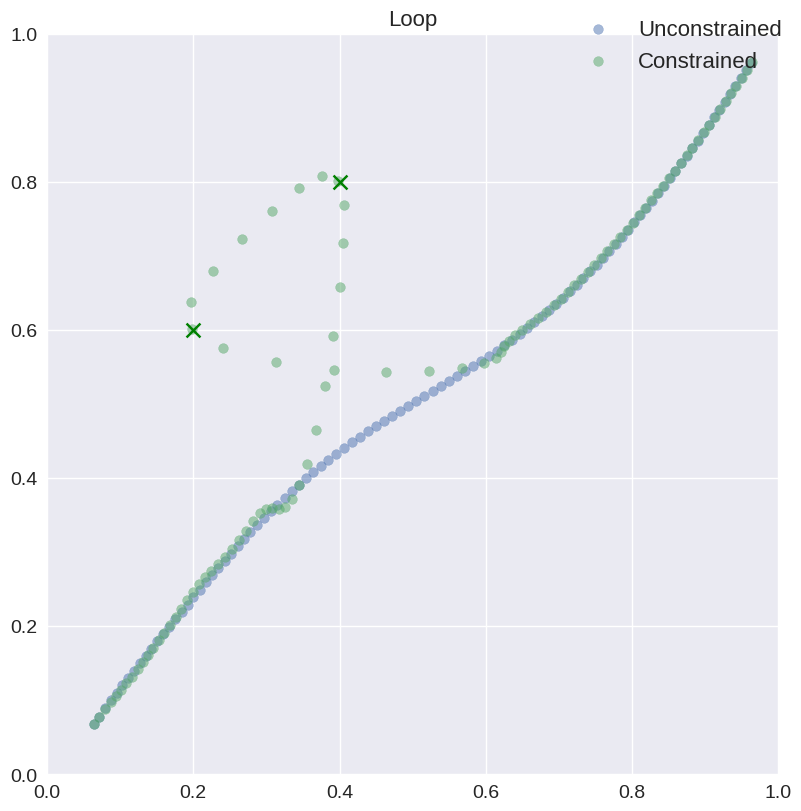

Figure 2: Trajectories for the Unconstrained, Avoid, Patrol, Until, Compound, and Loop experiments.

Integration with PyTorch

Central to the framework is the Isabelle-PyTorch pipeline, which uses Isabelle/HOL's code generation to connect the verified definitions to optimized PyTorch applications. The OCaml code generated from Isabelle/HOL is the bridge between the formal specification and practical deployment in deep learning environments, so the code that runs during training is the code that was proved correct.

Figure 3: Isabelle-PyTorch neurosymbolic pipeline.

Conclusions

The paper sets out a systematic methodology for embedding logical constraints in learning systems under formal guarantees of correctness and soundness. Proposed future directions include extending the methodology to broader logical frameworks and examining how the choice of domain representation affects the effectiveness of constraints.

The work demonstrates a method for formally verifying logical constraints and integrating them into neural training, offering a viable path toward trustworthy AI systems that combine mathematical guarantees with efficient learning.