- The paper introduces LTLZinc, a framework that integrates LTL with MiniZinc to benchmark continual and neuro-symbolic temporal reasoning systems.

- The methodology combines perceptual and symbolic domains with temporal specifications and constraints to build sequential and incremental tasks from datasets such as MNIST and CIFAR-100.

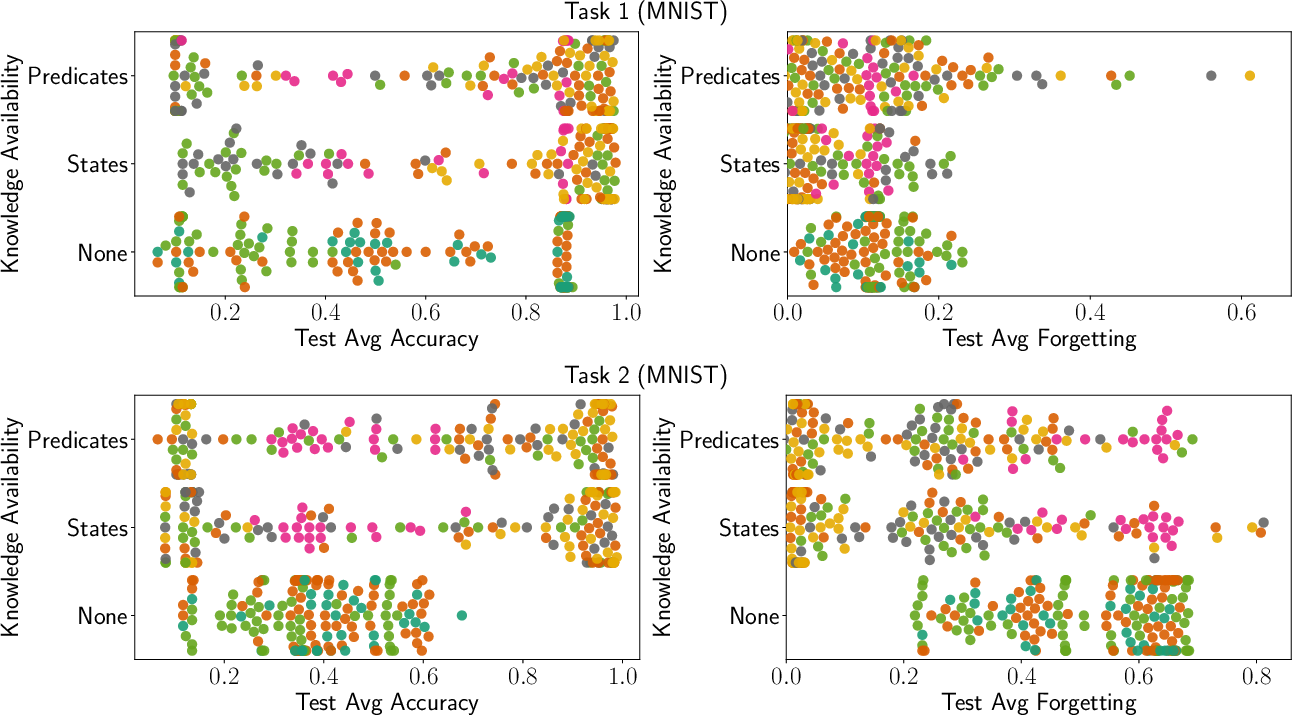

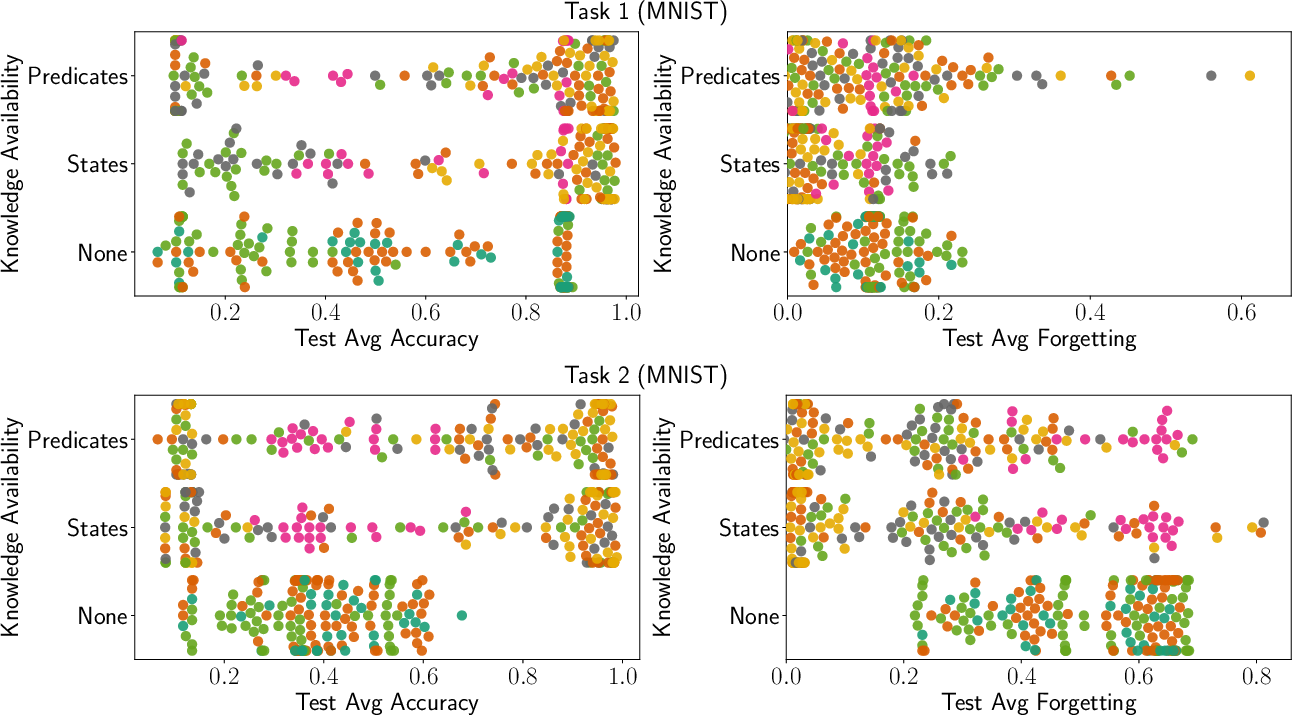

- Experiments demonstrate improved average accuracy and reduced forgetting, underscoring the benefits of combining neural architectures with symbolic reasoning in dynamic learning environments.

Introduction

"LTLZinc: a Benchmarking Framework for Continual Learning and Neuro-Symbolic Temporal Reasoning" (2507.17482) introduces a formal framework for sequential and relational learning, with a focus on integrating neuro-symbolic systems and continual learning paradigms. The paper presents LTLZinc, a tool for generating datasets that test how well such systems handle dynamic scenarios specified in temporal logic.

Framework Overview

Core Components

LTLZinc combines Linear Temporal Logic (LTL) with MiniZinc, a constraint modelling language for satisfaction and optimization problems. The framework's foundational entities include:

- Perceptual Domains (X): These represent input domains such as image data from datasets like MNIST, which LTLZinc can leverage in experiments.

- Symbolic Domains (Y): Corresponding symbolic labels associated with the perceptual domains that the neuro-symbolic systems must learn to infer.

- Constraints (C): Predicates that are constructed over symbolic domains, establishing logical or arithmetic relationships between them.

- Temporal Specifications (F): Detailed LTL formulas expressing temporal relationships to guide the sequence-learning tasks.
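To make the four entities concrete, the following is a minimal Python sketch of how a task specification could be represented. It is not LTLZinc's actual input format: the `TaskSpec` class, its field names, and the `evaluate_constraints` helper are hypothetical, and the temporal formula is kept as an opaque string rather than parsed.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Assignment = Dict[str, int]


@dataclass
class TaskSpec:
    """Hypothetical container for the four entities (X, Y, C, F)."""
    # Perceptual domains X: variable name -> dataset the images are drawn from
    perceptual_domains: Dict[str, str]
    # Symbolic domains Y: variable name -> labels the variable can take
    symbolic_domains: Dict[str, List[int]]
    # Constraints C: constraint name -> predicate over a symbolic assignment
    constraints: Dict[str, Callable[[Assignment], bool]]
    # Temporal specification F: kept as plain text in this sketch
    formula: str


def evaluate_constraints(spec: TaskSpec, assignment: Assignment) -> Dict[str, bool]:
    """Return the truth value of every constraint for one time step."""
    return {name: pred(assignment) for name, pred in spec.constraints.items()}


spec = TaskSpec(
    perceptual_domains={"X": "MNIST", "Y": "MNIST", "Z": "MNIST"},
    symbolic_domains={v: list(range(10)) for v in ("X", "Y", "Z")},
    constraints={"p": lambda a: a["X"] < a["Y"] + a["Z"]},
    formula="G p",  # "p holds at every step", written informally
)

truth = evaluate_constraints(spec, {"X": 3, "Y": 1, "Z": 4})
```

Here `truth` maps each constraint name to a Boolean for one time step; a temporal specification would then be checked over the sequence of such truth assignments.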

Problem Definition

Two primary modalities for tasks emerge within LTLZinc:

- Sequential Mode: Provides sequences governed by temporal logic constraints, requiring agents to process each sequence as a whole rather than as temporally independent samples.

- Incremental Mode: Presents experiences as ordered data episodes with constraints, focusing on experience replay and the progression of learning across episodes typical of continual learning environments.

Evaluation and Tasks

Sequential Tasks

Six sequence classification problems explore the capabilities of neuro-symbolic systems under tight temporal and logical constraints. The tasks vary in complexity, ranging from arithmetic reasoning and propositional constraints to logic-driven sequence evaluation, and use data from MNIST and Fashion-MNIST. The datasets feature annotated sequence labels, automaton state traces, and transition-specific constraints.

Example Task: One task requires that a symbolic constraint over tuples, such as X < Y + Z, always holds during specific temporal segments of a sequence, which exercises short-term memory and arithmetic comparison.
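As a rough illustration of an "always" requirement over a temporal segment, the sketch below checks the constraint X < Y + Z on every step of a finite trace, or on a slice of it. The function names and the example trace are assumptions made for illustration; the benchmark itself works from images, whereas this sketch starts from already-known symbolic values.

```python
from typing import Callable, Dict, List, Optional

Assignment = Dict[str, int]


def always(pred: Callable[[Assignment], bool],
           trace: List[Assignment],
           start: int = 0,
           end: Optional[int] = None) -> bool:
    """True if `pred` holds at every step of trace[start:end]."""
    return all(pred(step) for step in trace[start:end])


def less_than_sum(a: Assignment) -> bool:
    """The arithmetic constraint X < Y + Z on one time step."""
    return a["X"] < a["Y"] + a["Z"]


# Hypothetical trace of symbolic values, one dictionary per time step
trace = [
    {"X": 1, "Y": 2, "Z": 0},  # 1 < 2 holds
    {"X": 4, "Y": 3, "Z": 2},  # 4 < 5 holds
    {"X": 7, "Y": 3, "Z": 3},  # 7 < 6 fails
]

holds_everywhere = always(less_than_sum, trace)
holds_on_prefix = always(less_than_sum, trace, 0, 2)
```

The constraint fails on the full trace because of the last step, but holds on the first two steps, which is the kind of segment-level distinction a sequence classifier has to capture.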

Figure 1: Average accuracy and average forgetting for class-continual experiments on MNIST, grouped by knowledge available to each strategy.

Incremental Tasks

Class-continual learning experiments were designed to evaluate model performance in settings where the data distribution evolves gradually. Tasks included rare class exposure and cyclic class reemergence, which require iterative learning akin to real-world data evolution. The datasets for these tasks were instantiated with MNIST for simple perceptual inputs and with CIFAR-100 for increased complexity.

Example Task: A class-incremental task presents classes that become rarer as time progresses, emphasizing retention over time. In addition, the cyclic reappearance of certain classes challenges standard forgetting and recovery mechanisms.
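The two exposure patterns mentioned above can be pictured with a small schedule generator. This is only an illustrative sketch and not the generator used by LTLZinc: the geometric decay, the period, and the function names are all assumptions chosen to show what "rarer over time" and "cyclic" could look like across episodes.

```python
from typing import List


def rarefying_counts(initial: int, decay: float, episodes: int) -> List[int]:
    """Samples of one class per episode, shrinking geometrically (assumed pattern)."""
    return [int(initial * decay ** t) for t in range(episodes)]


def cyclic_presence(period: int, episodes: int, offset: int = 0) -> List[bool]:
    """Whether a class appears in each episode, repeating every `period` episodes."""
    return [(t - offset) % period == 0 for t in range(episodes)]


rare = rarefying_counts(initial=100, decay=0.5, episodes=4)
cyclic = cyclic_presence(period=3, episodes=6)
```

With these illustrative parameters, the rare class drops from 100 samples to 12 within four episodes, while the cyclic class is present only in episodes 0 and 3.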

Experimentation and Implementation

Neural-Symbolic Pipeline

The evaluation pipeline has a modular architecture (Figure 2) comprising image classification, logic-based constraint checking, next-state prediction, and sequence classification. Several implementations of each module were explored, such as Scallop and ProbLog for constraint checking and a GRU for next-state prediction.
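The four stages can be sketched end to end in plain Python. This is a purely symbolic stand-in under stated assumptions, not the paper's implementation: the classifier is a stub that reads a stored label instead of running a neural network, the constraint is an ordinary function rather than a Scallop or ProbLog program, and next-state prediction uses a hand-written transition table instead of a GRU. All names are hypothetical.

```python
from typing import Dict, List, Tuple

Symbols = Dict[str, int]


def stub_classifier(image: dict) -> int:
    """Stage 1 stand-in for a neural image classifier: reads a stored label."""
    return image["label"]


def constraint_p(symbols: Symbols) -> bool:
    """Stage 2: check the constraint X < Y + Z on the predicted symbols."""
    return symbols["X"] < symbols["Y"] + symbols["Z"]


# Stage 3: automaton for "p holds at every step".
# State 0 is accepting; state 1 is a rejecting sink.
TRANSITIONS = {(0, True): 0, (0, False): 1, (1, True): 1, (1, False): 1}
ACCEPTING = {0}


def run_pipeline(sequence: List[Dict[str, dict]],
                 initial_state: int = 0) -> Tuple[bool, List[int]]:
    """Run all stages over a sequence; return (label, state trace)."""
    state = initial_state
    states = [state]
    for images in sequence:
        symbols = {name: stub_classifier(img) for name, img in images.items()}
        truth = constraint_p(symbols)
        state = TRANSITIONS[(state, truth)]
        states.append(state)
    # Stage 4: sequence classification from the final automaton state
    return state in ACCEPTING, states


def step(x: int, y: int, z: int) -> Dict[str, dict]:
    """Build one time step of fake 'images' carrying their labels."""
    return {"X": {"label": x}, "Y": {"label": y}, "Z": {"label": z}}


good = [step(1, 2, 0), step(4, 3, 2)]
bad = [step(1, 2, 0), step(7, 3, 3), step(0, 1, 1)]

good_label, good_states = run_pipeline(good)
bad_label, bad_states = run_pipeline(bad)
```

Keeping each stage behind its own function mirrors the modularity described above: any stage could in principle be swapped for a learned component without changing the others.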

Evaluation used metrics such as average accuracy and average forgetting, the latter capturing how well learned information persists. The experiments showed distinct advantages for symbolic approaches, and for approaches that integrate symbolic and neural components, over purely neural models in balancing temporal reasoning with adaptability in a continual learning setup.
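For reference, the sketch below computes average accuracy and average forgetting using definitions that are common in the continual learning literature; the paper's exact formulas may differ. It assumes a hypothetical matrix `acc` where `acc[i][j]` is the accuracy on task `j` measured after training on task `i`, and the numbers are made up for illustration.

```python
from typing import List


def average_accuracy(acc: List[List[float]]) -> float:
    """Mean accuracy over all tasks after training on the last task."""
    final = acc[-1]
    return sum(final) / len(final)


def average_forgetting(acc: List[List[float]]) -> float:
    """Mean drop from each earlier task's best past accuracy to its final accuracy."""
    num_tasks = len(acc)
    if num_tasks < 2:
        return 0.0
    drops = []
    for j in range(num_tasks - 1):
        # Best accuracy on task j at any point before the final training stage
        best = max(acc[i][j] for i in range(j, num_tasks - 1))
        drops.append(best - acc[-1][j])
    return sum(drops) / len(drops)


# Illustrative values only: rows are training stages, columns are tasks
acc = [
    [0.9, 0.0, 0.0],
    [0.7, 0.8, 0.0],
    [0.6, 0.5, 0.9],
]

avg_acc = average_accuracy(acc)
avg_forget = average_forgetting(acc)
```

In this toy matrix both earlier tasks lose about 0.3 accuracy by the end of training, so the average forgetting is about 0.3, while the final average accuracy is about 0.67.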

Future Directions

The researchers advocate expanding LTLZinc's tasks to cover a broader array of real-world scenarios and pursuing deeper integration through neuro-symbolic architectures capable of more general deductive reasoning in temporal contexts.

In conclusion, LTLZinc provides a structured roadmap for bridging the gap between symbolic reasoning and neural networks across temporal dimensions, promoting advances in artificial intelligence research tailored to dynamic, real-world complexities.