Do We Need to Verify Step by Step? Rethinking Process Supervision from a Theoretical Perspective

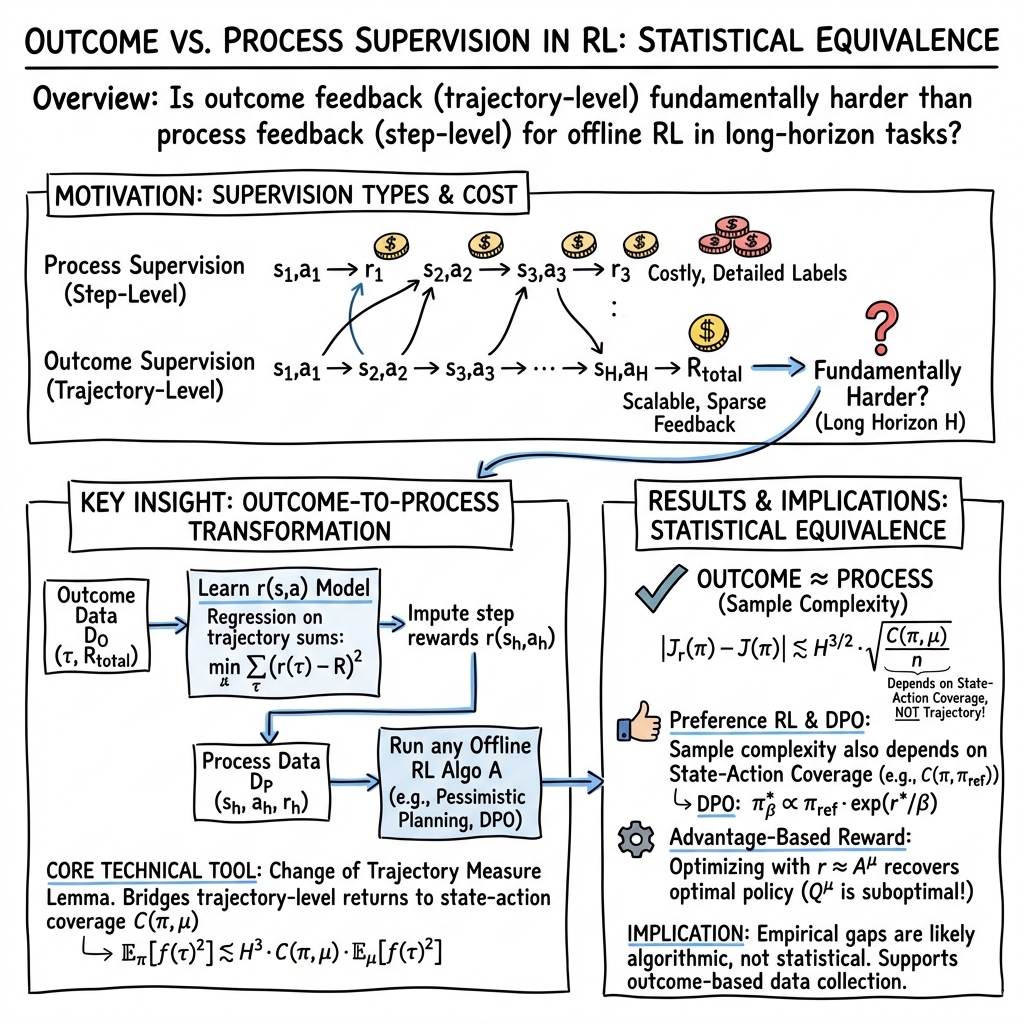

Abstract: As LLMs have evolved, it has become crucial to distinguish between process supervision and outcome supervision -- two key reinforcement learning approaches to complex reasoning tasks. While process supervision offers intuitive advantages for long-term credit assignment, the precise relationship between these paradigms has remained an open question. Conventional wisdom suggests that outcome supervision is fundamentally more challenging due to the trajectory-level coverage problem, leading to significant investment in collecting fine-grained process supervision data. In this paper, we take steps towards resolving this debate. Our main theorem shows that, under standard data coverage assumptions, reinforcement learning through outcome supervision is no more statistically difficult than through process supervision, up to polynomial factors in horizon. At the core of this result lies the novel Change of Trajectory Measure Lemma -- a technical tool that bridges return-based trajectory measure and step-level distribution shift. Furthermore, for settings with access to a verifier or a rollout capability, we prove that any policy's advantage function can serve as an optimal process reward model, providing a direct connection between outcome and process supervision. These findings suggest that the empirically observed performance gap -- if any -- between outcome and process supervision likely stems from algorithmic limitations rather than inherent statistical difficulties, potentially transforming how we approach data collection and algorithm design for reinforcement learning.

Explain it Like I'm 14

What this paper is about

Imagine teaching a robot or a smart assistant to solve a long puzzle. You can teach it in two ways:

- Grade every step it takes (“process supervision”).

- Only grade the final answer (“outcome supervision”).

Many people think grading every step is much better, because it tells the learner exactly where it went wrong. This paper asks: do we really need to check every step, or can checking just the final result be good enough?

The authors show, with math, that when the training data covers individual steps well, learning from final results can be just as statistically effective as learning from step-by-step feedback. They also explain when and how we can turn final-result feedback into useful step-like signals, and why one natural choice of step signal (the Q-function) can mislead while another (the advantage function) does not.

What questions the researchers asked

- Is outcome supervision (only seeing the final score) fundamentally harder than process supervision (seeing a score for each step)?

- Can we convert “final score” data into “per-step” scores in a reliable way?

- In settings where we can try out parts of a solution (do “rollouts” or use a verifier), what kind of step-based signal should we learn: a Q-function or an advantage function?

- For training from preferences (when you only know which of two solutions is better), do we really need very strong coverage of whole solution paths, or is step-by-step coverage enough?

How they studied it (in simple terms)

Think of learning as walking through a maze:

- A “trajectory” is the whole path you took.

- A “state-action” is one step in the maze (where you are, plus which direction you move).

- “Horizon” is how many steps a path through the maze takes.

- “Coverage” means: does your training data include enough examples of the states and actions your new policy will encounter?

Two kinds of coverage:

- Trajectory coverage (whole paths): very strict, because the number of possible paths grows exponentially with the horizon, so the data would need to cover exponentially many of them.

- State-action coverage (single steps): more manageable, because you only need to cover individual steps well, not every possible entire path.
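To make the two kinds of coverage concrete, here is a deliberately simple sketch under hypothetical assumptions: there is one state per step (so where you are never depends on earlier moves), the data-collecting policy picks among `num_actions` moves uniformly at random, and the new policy always picks move 0. Coverage is measured as the worst-case ratio between how likely the new policy and the data policy are to produce something. The function name `coverage_coefficients` is illustrative, not from the paper.

```python
from fractions import Fraction
from itertools import product


def coverage_coefficients(num_actions, horizon):
    """Worst-case coverage ratios in a toy task with one state per step.

    Hypothetical setup: the data policy mu picks each move uniformly at
    random; the new policy pi always picks move 0.
    """
    mu_move = Fraction(1, num_actions)  # mu's probability of any one move

    # Trajectory coverage: max over whole paths of pi(path) / mu(path).
    traj_ratio = Fraction(0)
    for path in product(range(num_actions), repeat=horizon):
        pi_path = Fraction(1) if all(a == 0 for a in path) else Fraction(0)
        mu_path = mu_move ** horizon
        traj_ratio = max(traj_ratio, pi_path / mu_path)

    # State-action coverage: max over single steps of pi(move) / mu(move).
    step_ratio = Fraction(0)
    for _ in range(horizon):
        for a in range(num_actions):
            pi_move = Fraction(1) if a == 0 else Fraction(0)
            step_ratio = max(step_ratio, pi_move / mu_move)

    return traj_ratio, step_ratio


traj_ratio, step_ratio = coverage_coefficients(num_actions=2, horizon=10)
print(traj_ratio, step_ratio)  # prints: 1024 2
```

With two moves and ten steps, the path-level ratio is 1024 (only one path in 1024 is the one the new policy takes), while the per-step ratio stays at 2. In real tasks the state usually depends on earlier moves, so step coverage is not always this small; the toy only shows why counting whole paths explodes.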

Key ideas and methods:

- Change of Trajectory Measure Lemma: The authors prove a new result showing that, if your data has good coverage of individual steps, you can safely reason about whole paths without paying an exponential penalty. In other words, you can relate “how things look along full paths” to “how well individual steps are covered” at only a polynomial (reasonable) cost in the number of steps (the horizon).

- Turning total scores into per-step scores: They show you can learn a per-step reward model by fitting it so that when you add up the predicted step rewards along a path, it matches the observed total score. Then you can plug this into any standard “offline RL” algorithm that expects per-step rewards.

- Preferences (like “A is better than B”): They analyze methods that learn from pairwise comparisons and show you can still get good guarantees using step coverage, not full-path coverage.

- Rollouts and verifiers: If you can start from a point mid-path and simulate to the end (a “rollout”) or check correctness with a verifier, they show that using the “advantage function” as your step reward is theoretically sound. The advantage tells you how much better a move is compared to your usual behavior at that point. They also show that using a Q-function as the step reward can be misleading.
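A tiny worked example can show why the advantage works as a step reward while the Q-function can mislead. This is a toy check of the idea, not the paper's proof, and the setup is hypothetical: two steps, deterministic moves, a final score revealed only at the end, and a reference policy `mu` that picks moves uniformly at random. Under deterministic dynamics, the advantages along any path telescope: they add up to the path's final score minus the reference policy's starting value, which is a constant, so ranking paths by summed advantages is the same as ranking them by true score. Summed Q-values have no such property.

```python
from fractions import Fraction
from itertools import product

ACTIONS = (0, 1)

# Hypothetical 2-step task with deterministic dynamics: the first move a1
# takes the agent to state s1 = a1, and the final score R[(s1, a2)] is only
# revealed after the second move a2 (an outcome-only reward).
R = {
    (0, 0): Fraction(1), (0, 1): Fraction(0),
    (1, 0): Fraction(9, 10), (1, 1): Fraction(9, 10),
}


def v2(s1):
    """V^mu at step 2: average final score from state s1 under uniform mu."""
    return sum(R[(s1, a)] for a in ACTIONS) / len(ACTIONS)


def q1(a1):
    """Q^mu at step 1: moving to state a1 earns 0 now, then mu takes over."""
    return v2(a1)


V1 = sum(q1(a) for a in ACTIONS) / len(ACTIONS)  # V^mu at the start


def true_score(a1, a2):
    return R[(a1, a2)]


def q_reward_total(a1, a2):
    """Sum of Q^mu along the path (Q^mu at step 2 is just the final score)."""
    return q1(a1) + R[(a1, a2)]


def advantage_reward_total(a1, a2):
    """Sum of A^mu = Q^mu - V^mu along the path."""
    return (q1(a1) - V1) + (R[(a1, a2)] - v2(a1))


paths = list(product(ACTIONS, ACTIONS))
best_by_score = max(paths, key=lambda p: true_score(*p))
best_by_q = max(paths, key=lambda p: q_reward_total(*p))
best_by_advantage = max(paths, key=lambda p: advantage_reward_total(*p))

# Telescoping: summed advantages equal the true score minus the constant V1.
assert all(advantage_reward_total(*p) == true_score(*p) - V1 for p in paths)
```

Here the best path by true score is `(0, 0)` with score 1, and the advantage reward picks it too. Summing Q-values instead favors moving to state 1 (true score 0.9), because state 1 looks good on average under `mu` and that bonus is counted on top of the final score. With random (stochastic) dynamics the same identity holds in expectation rather than path by path.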

Analogy: If the total score is like the grade on a full essay, they show how to build a fair “grading rubric” for each paragraph (each step) so that the sum of paragraph grades matches the essay grade. Their lemma proves you can do this robustly if your examples cover the kinds of paragraphs (steps) you’ll write in the future.
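The fitting step can be sketched as ordinary least squares, under hypothetical assumptions: a small tabular task where each step is a (step, state, action) triple, a hidden per-step reward table, and noisy total scores as the only labels. A path's total score is a linear function of how often each triple appears, so fitting per-step rewards whose sum matches the total is a linear regression. The sizes, noise level, and names are illustrative; the paper's procedure may use richer model classes.

```python
import numpy as np

rng = np.random.default_rng(0)

H, S, A = 4, 3, 2  # hypothetical horizon, states per step, actions per step
D = H * S * A      # one unknown reward per (step, state, action) triple


def features(traj):
    """Count each (step, state, action) triple; a path's total is features @ r."""
    phi = np.zeros(D)
    for h, (s, a) in enumerate(traj):
        phi[(h * S + s) * A + a] += 1.0
    return phi


def random_traj():
    """A random path: one (state, action) pair per step."""
    return [(rng.integers(S), rng.integers(A)) for _ in range(H)]


true_r = rng.uniform(0.0, 1.0, size=D)  # hidden per-step rewards

# Outcome supervision: the learner only sees a noisy total score per path.
n = 400
Phi = np.stack([features(random_traj()) for _ in range(n)])
scores = Phi @ true_r + rng.normal(0.0, 0.05, size=n)

# Fit per-step rewards on the first half so that their sums match the scores.
half = n // 2
r_hat, *_ = np.linalg.lstsq(Phi[:half], scores[:half], rcond=None)

# Check on the held-out half: do summed predicted rewards match true totals?
holdout_mse = np.mean((Phi[half:] @ r_hat - Phi[half:] @ true_r) ** 2)
```

The check compares predicted totals rather than individual step rewards, because many different step-reward tables give the same total on every path: adding a constant to every reward at one step and subtracting it at another leaves every sum unchanged. The held-out half plays the role of the data split used to avoid overfitting.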

Main findings and why they matter

Here are the main takeaways, in plain language:

- Outcome supervision can be “as good as” process supervision: If your data has good coverage of the steps you care about, then learning from only final scores is no more statistically difficult than learning from step-by-step scores, up to reasonable (polynomial) factors in the number of steps (the horizon).

- A new mathematical tool to bridge steps and full paths: Their Change of Trajectory Measure Lemma shows you can control path-level errors using only step-level coverage. This avoids scary, exponential blow-ups that happen when you require coverage of every full path.

- You can convert final-score data into step-by-step rewards: Fit a per-step reward model so that its sum matches the total score, split your data to avoid overfitting, then use any standard offline RL method. Theoretical bounds show this works under step-coverage assumptions.

- For learning from preferences (e.g., “solution A is better than B”): They show sample complexity depends on step coverage (not full-path coverage). This tightens guarantees for methods like DPO (Direct Preference Optimization) in settings where step coverage makes sense (e.g., deterministic dynamics).

- With rollouts or a verifier, prefer the advantage function over the Q-function for step rewards: Using the advantage as the step reward leads to the same optimal behavior as the original task, while using Q as the step reward can fail in some cases.
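For the preference bullet above, a common way to learn from comparisons is the Bradley-Terry model: the chance that one path beats another is a sigmoid of the difference in their total rewards. The sketch below assumes that model, tabular (step, state, action) features, per-step rewards summed along each path, and plain gradient ascent; these are illustrative choices, not the paper's algorithm. DPO, for comparison, skips the explicit reward model and optimizes the policy directly from the same kind of comparisons.

```python
import numpy as np

rng = np.random.default_rng(1)

H, S, A = 4, 3, 2  # hypothetical horizon, states per step, actions per step
D = H * S * A      # one feature per (step, state, action) triple


def features(traj):
    """Count how often each (step, state, action) triple appears in a path."""
    phi = np.zeros(D)
    for h, (s, a) in enumerate(traj):
        phi[(h * S + s) * A + a] += 1.0
    return phi


def random_traj():
    """A random path: one (state, action) pair per step."""
    return [(rng.integers(S), rng.integers(A)) for _ in range(H)]


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


true_r = rng.normal(0.0, 1.0, size=D)  # hidden per-step rewards

# Each row is features(first path) - features(second path); under
# Bradley-Terry, P(first preferred) = sigmoid(difference in total reward).
n_pairs = 2000
X = np.stack([features(random_traj()) - features(random_traj())
              for _ in range(n_pairs)])
y = (rng.uniform(size=n_pairs) < sigmoid(X @ true_r)).astype(float)

# Fit per-step rewards by maximum likelihood on the first half of the pairs.
X_train, y_train = X[: n_pairs // 2], y[: n_pairs // 2]
X_test = X[n_pairs // 2 :]
r_hat = np.zeros(D)
for _ in range(2000):
    grad = X_train.T @ (y_train - sigmoid(X_train @ r_hat)) / len(y_train)
    r_hat += 0.5 * grad  # gradient ascent on the log-likelihood

# Held-out check: how often does the learned reward rank a pair
# the same way as the hidden true reward?
agreement = np.mean(np.sign(X_test @ r_hat) == np.sign(X_test @ true_r))
```

Only per-step features enter the model, which mirrors the intuition that good coverage of individual steps, rather than of every whole path, is what the fit relies on.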

Why it matters:

- Collecting step-by-step human feedback is expensive and slow. If outcome-only feedback can be just as good, we can save time and resources.

- This suggests that differences seen in practice may be due more to current algorithms than to the kind of feedback (process vs. outcome) we collect.

What this could mean going forward

- Cheaper data collection: Teams may lean more on outcome or preference feedback (easier to gather) without losing theoretical efficiency, provided they ensure good step coverage in their data.

- Better algorithms: Since the gap seems algorithmic rather than fundamental, improving methods that learn from outcome feedback might close performance gaps with step-by-step supervision.

- Smarter use of simulators/verifiers: When you can do rollouts or verification, train step rewards from the advantage function to stay aligned with the true goal.

- Broader impact on training LLMs and robots: For long reasoning chains or long-horizon tasks, this work supports strategies that reduce the need for tedious step-by-step labels.

Simple caveat: These guarantees rely on reasonable “coverage” of the steps you’ll need later and come with polynomial factors in the number of steps. In practice, you still need enough varied data and solid algorithms to make this work well.
