- The paper presents G-Safeguard, which leverages graph neural networks to detect adversarial attacks in multi-agent systems via anomaly detection on utterance graphs.

- It achieves performance recovery improvements of over 40% under prompt injection scenarios and cuts attack success rates by up to 39.23%.

- The study highlights scalability and topological transferability, enabling secure interactions in complex LLM-based multi-agent environments.

G-Safeguard: A Topology-Guided Security Lens and Treatment on LLM-based Multi-agent Systems

Introduction

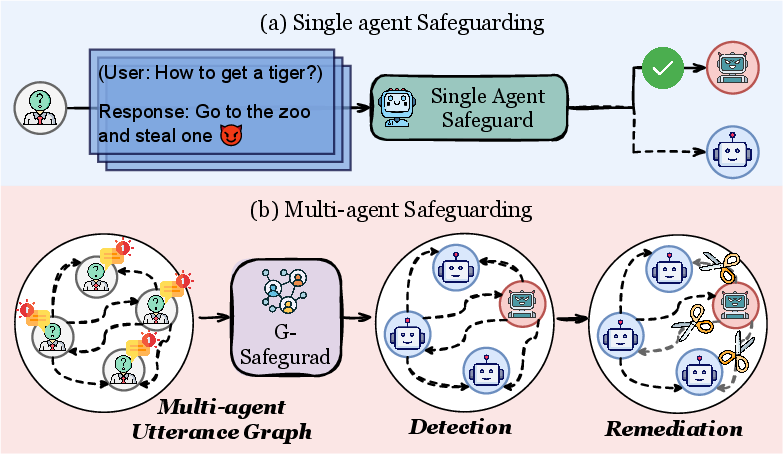

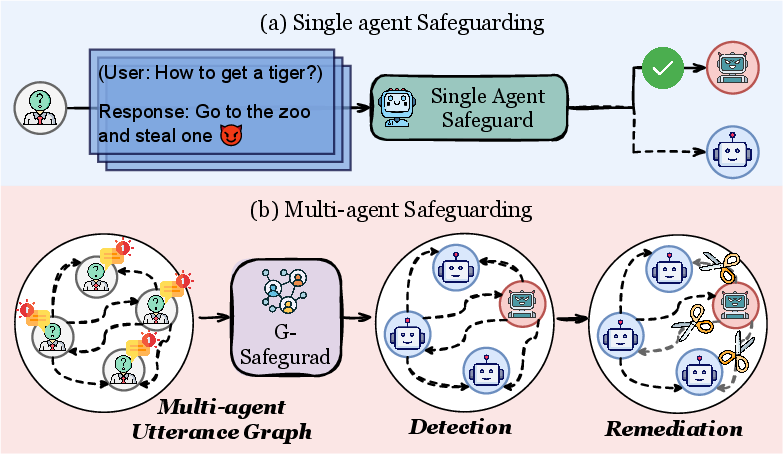

The paper "G-Safeguard: A Topology-Guided Security Lens and Treatment on LLM-based Multi-agent Systems" (2502.11127) explores a novel approach to securing LLM-based multi-agent systems (MAS). These systems are increasingly integrated into critical applications, making their vulnerability to adversarial attacks a significant concern. The proposed method, G-Safeguard, employs graph neural networks (GNNs) to detect anomalies in multi-agent utterance graphs and apply topological interventions for attack remediation.

Methodology

Multi-agent Utterance Graph Construction: The method constructs multi-agent utterance graphs capturing interaction dynamics and topological relationships among agents. Each agent is represented by a node, and interactions are encoded as edges within this graph structure. This setup enables the systematic monitoring of communications to identify potential attack vectors.

Graph-based Attack Detection: The core of G-Safeguard's detection mechanism is an edge-featured GNN that models anomaly detection as a node classification problem. By iteratively updating node representations, the system identifies high-risk agents whose outputs suggest exposure to adversarial influences.

Intervention and Remediation: Using recognized adjacency relationships, G-Safeguard performs edge pruning to sever potentially harmful communication pathways. This strategic intervention is crucial in mitigating the risk of misinformation and malicious behavior propagation throughout the MAS network.

Figure 1: The paradigm comparison between single agent safeguard and multi-agent safeguard.

Experimental Results

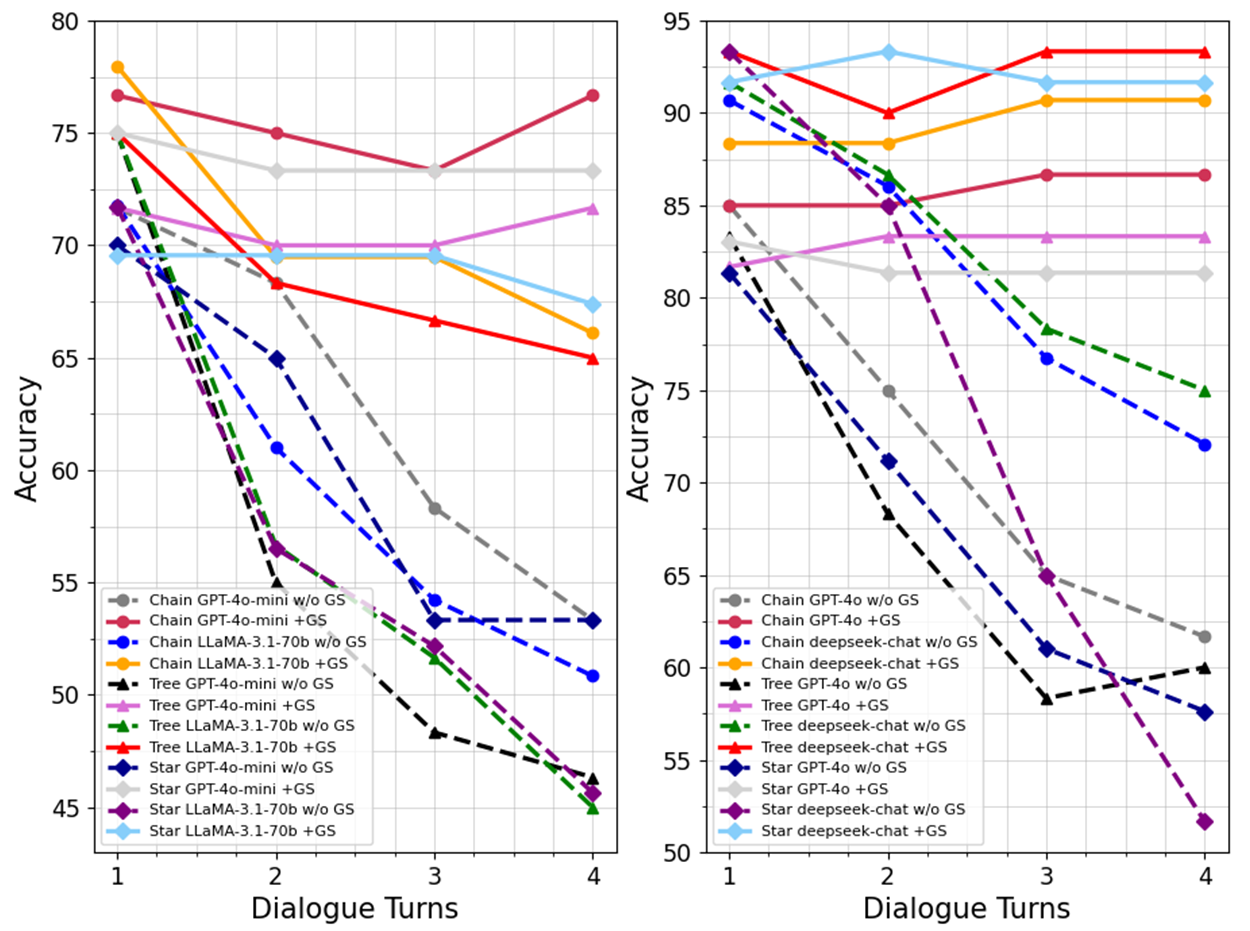

The experiments demonstrate substantial efficacy in defending against various attack strategies. The results showcase the ability of G-Safeguard to recover over 40% of the performance under prompt injection scenarios, independently validating its robustness across diverse topologies and LLM backbones. Key findings include:

- Performance Recovery: G-Safeguard successfully reduces attack success rates by 10% to 39.23% across different configurations, underscoring its adaptive capability against dynamic threat vectors.

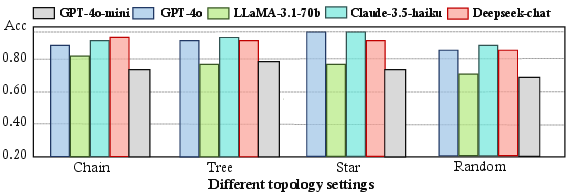

- Topological and Inductive Transferability: The method exhibits remarkable scalability, maintaining its effectiveness even when applied to larger-scale MAS without the need for extensive retraining.

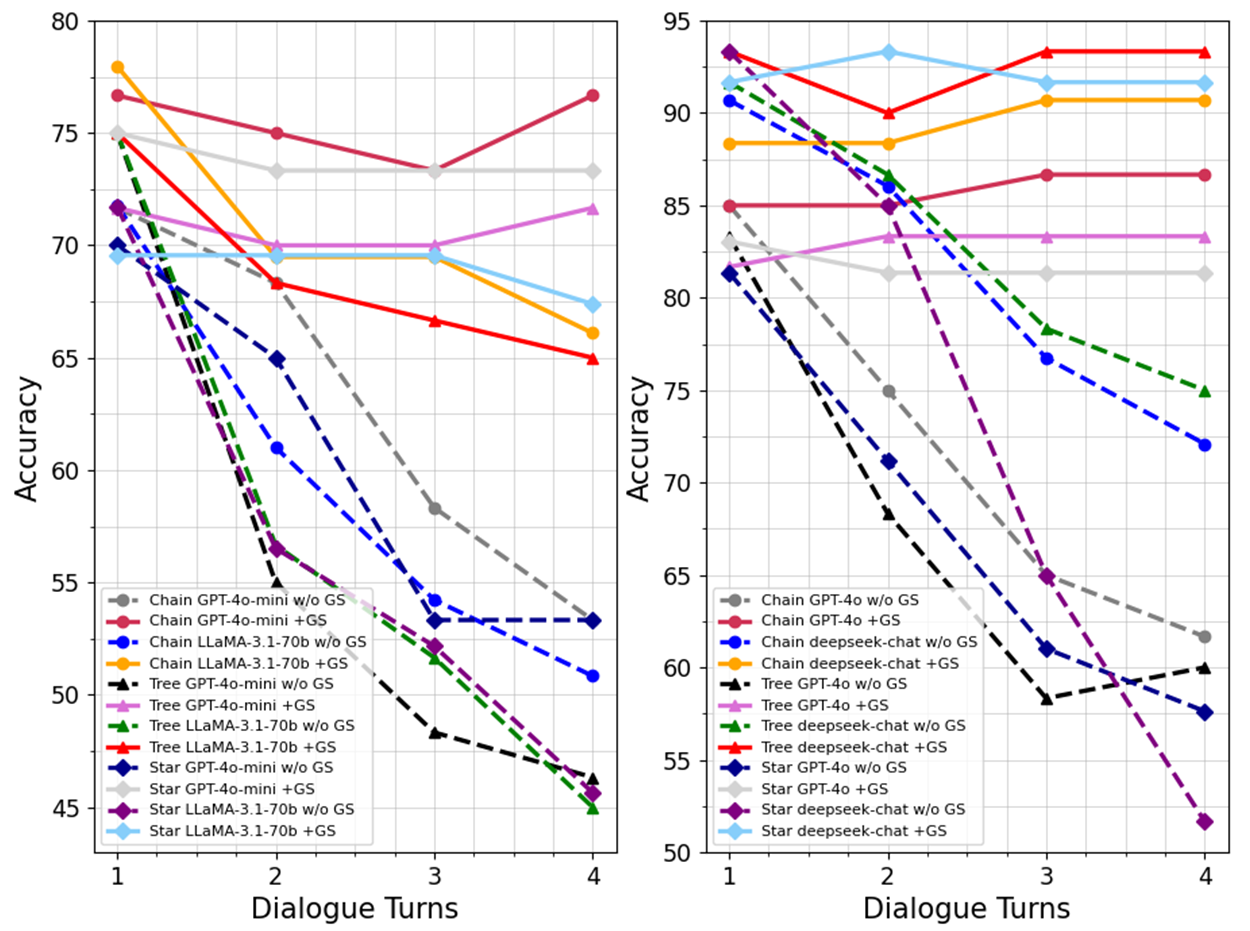

Figure 2: The overall performance of MAS on the CSQA (left) and MMLU (right) datasets after each turn of dialogue. We use majority voting as the strategy to select the final answer.

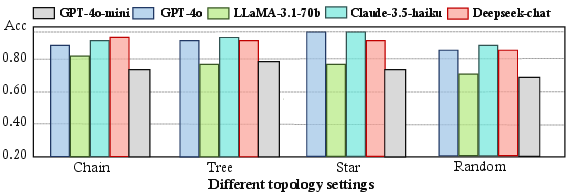

Figure 3: The recognition accuracy of for MAS with different topological structures composed of various LLMs on PoisonRAG dataset.

Implications and Future Work

The findings from this paper have several implications for the security and design of MAS. By integrating topology-guided interventions and anomaly detection directly into MAS workflows, developers can enhance system resilience without compromising agent autonomy or flexibility. The deployment of G-Safeguard can facilitate safer interactions within environments characterized by complex, multi-agent collaboration.

Future directions may involve refining the edge-featured GNN models to capture more granular communication patterns and extending the framework to anticipate unseen attack patterns preemptively. Additionally, exploring hybrid models that leverage both learning-based and rule-based strategies might deepen the MAS defense architecture robustness.

Conclusion

"G-Safeguard: A Topology-Guided Security Lens and Treatment on LLM-based Multi-agent Systems" (2502.11127) presents a pioneering approach for enhancing the security of LLM-based MAS. Through its graph-centric anomaly detection and strategic topology interventions, it offers a scalable, adaptable solution to the growing challenge of securing multi-agent interactions. This methodology not only enriches the current discourse on MAS security but also sets a foundational precedent for further research in harnessing topological insights for systemic protection.