- The paper introduces the GUARDIAN framework, which uses temporal graph modeling to detect hallucination amplification and error injection in LLM multi-agent collaborations.

- The model's encoder-decoder architecture, combined with BERT embeddings and a graph abstraction mechanism based on Information Bottleneck theory, improves accuracy by up to 15.4% over baselines in hallucination-amplification scenarios.

- GUARDIAN offers a scalable, efficient approach for anomaly detection in multi-agent LLM systems, setting a new benchmark for AI safety protocols.

"GUARDIAN: Safeguarding LLM Multi-Agent Collaborations with Temporal Graph Modeling"

Introduction and Background

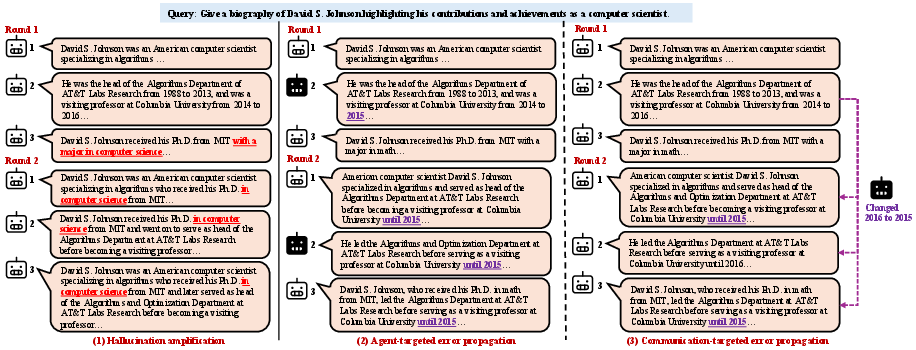

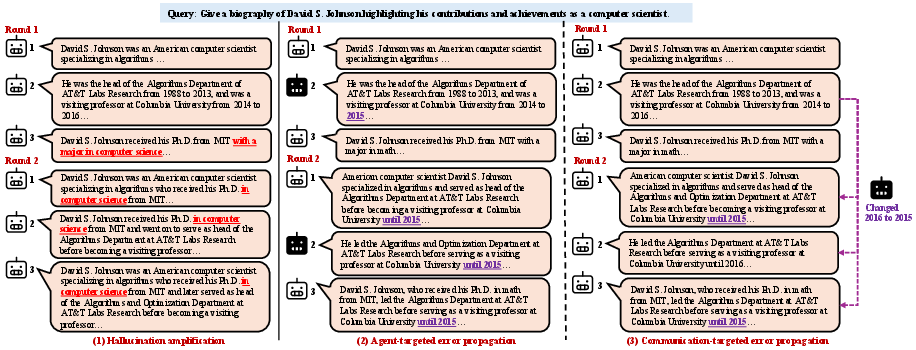

The paper introduces GUARDIAN, a framework designed to address the safety challenges of multi-agent collaboration in LLM-based systems. LLMs are increasingly used for tasks that require complex, multi-turn dialogue, such as question answering and problem-solving, and collaboration among multiple LLM agents under protocols such as Agent2Agent (A2A) has become a focal point. These systems promise to enhance AI capabilities but introduce significant safety concerns. The critical issues identified are hallucination amplification, where non-factual information propagates among agents, and error injection and propagation, where malicious actors inject errors that then spread through the network (Figure 1).

Figure 1: Critical safety problems in LLM multi-agent collaboration: (1) hallucination amplification, (2) agent-targeted error injection, and (3) communication-targeted error injection.

GUARDIAN Framework

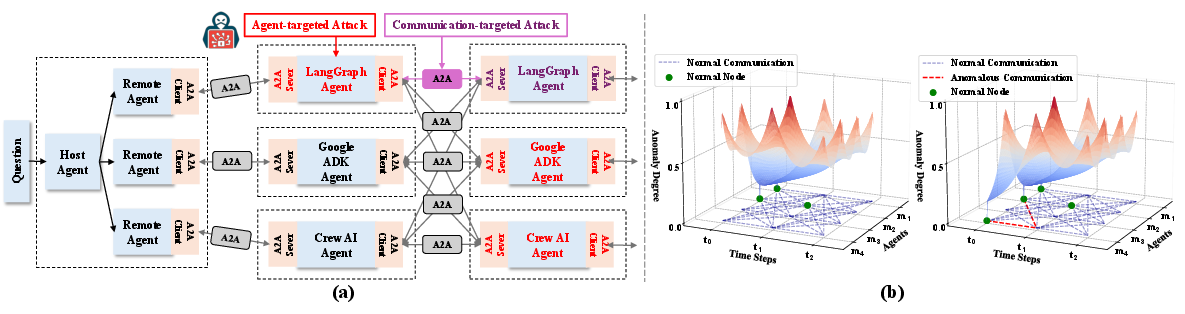

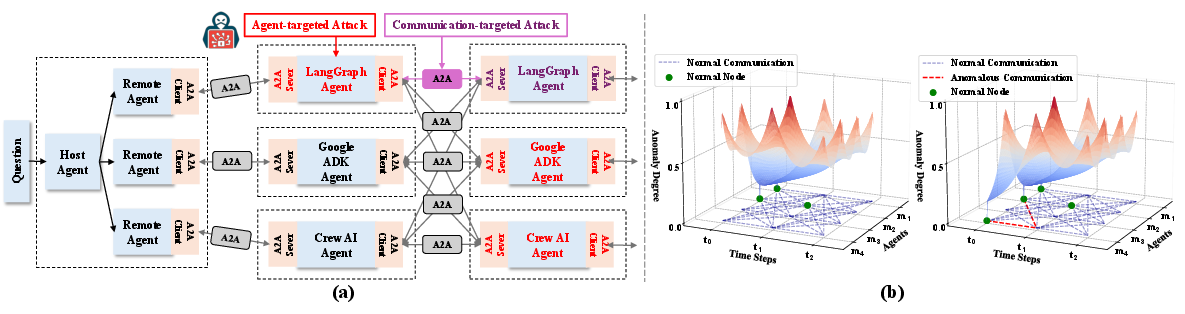

GUARDIAN models the collaboration process as a discrete-time temporal attributed graph, effectively capturing the dynamics of hallucination and error propagation. This model employs an unsupervised encoder-decoder architecture with an incremental training paradigm. It reconstructs node attributes and graph structures from latent embeddings to precisely identify anomalous nodes and edges. A central component is the graph abstraction mechanism founded on the Information Bottleneck Theory, allowing the compression of temporal interaction graphs while preserving essential patterns.
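The reconstruction-based detection described above can be illustrated with a small sketch. It assumes the encoder-decoder has already produced reconstructed node attributes and edge probabilities; the function names, the Euclidean and `1 - p` scoring rules, and the fixed threshold are illustrative assumptions, not the paper's actual scoring method.

```python
import math

def node_scores(features, reconstructed):
    """Per-node attribute error: Euclidean distance to the reconstruction."""
    return [math.dist(x, x_hat) for x, x_hat in zip(features, reconstructed)]

def edge_scores(adjacency, edge_probs):
    """Per-edge structural error: an observed edge the decoder finds unlikely scores high."""
    scores = {}
    for i, row in enumerate(adjacency):
        for j, present in enumerate(row):
            if present:
                scores[(i, j)] = 1.0 - edge_probs[i][j]
    return scores

def flag(scores, threshold):
    """Return the keys (node indices or edge pairs) whose score exceeds the threshold."""
    items = scores.items() if isinstance(scores, dict) else enumerate(scores)
    return sorted(key for key, score in items if score > threshold)

# Toy data (hypothetical): three agents with 2-d attributes and directed communications.
features = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
reconstructed = [[0.95, 0.05], [0.9, 0.1], [0.8, 0.2]]  # agent 2 is poorly reconstructed
adjacency = [[0, 1, 1], [1, 0, 0], [0, 1, 0]]
edge_probs = [[0.0, 0.9, 0.2], [0.8, 0.0, 0.1], [0.1, 0.7, 0.0]]  # edge 0->2 is unexpected

anomalous_nodes = flag(node_scores(features, reconstructed), threshold=0.5)
anomalous_edges = flag(edge_scores(adjacency, edge_probs), threshold=0.5)
print(anomalous_nodes, anomalous_edges)
```

Scoring nodes and edges separately mirrors the distinction between attribute-level and structural anomalies; in practice the threshold would be tuned or derived from the score distribution rather than fixed by hand.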

The framework is structured as follows: nodes in the graph represent LLM agents, and edges denote communications between them. BERT embeddings transform agent responses into node features. GUARDIAN distinguishes itself through its encoder-decoder architecture, which captures the relationships between attribute and structural patterns and so supports detection at both levels. This dual-level detection is crucial for differentiating attribute-level anomalies from structural inconsistencies.
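As a rough illustration of this graph structure, the sketch below stores one snapshot per collaboration round, with agents as nodes and messages as directed edges. The class names and `add_round` method are hypothetical, and `toy_embed` is a hashed bag-of-words stand-in used only to keep the example dependency-free; the paper uses BERT embeddings for node features.

```python
import hashlib
from dataclasses import dataclass, field

def toy_embed(text, dim=8):
    """Hypothetical stand-in for a BERT embedding: hashed bag-of-words counts."""
    vec = [0.0] * dim
    for token in text.lower().split():
        idx = int(hashlib.md5(token.encode()).hexdigest(), 16) % dim
        vec[idx] += 1.0
    return vec

@dataclass
class Snapshot:
    step: int
    features: dict = field(default_factory=dict)  # agent id -> node feature vector
    edges: set = field(default_factory=set)       # (sender, receiver) pairs

@dataclass
class TemporalGraph:
    snapshots: list = field(default_factory=list)

    def add_round(self, responses, messages):
        """Append one discrete time step built from agent responses and messages."""
        snap = Snapshot(step=len(self.snapshots))
        for agent, text in responses.items():
            snap.features[agent] = toy_embed(text)
        snap.edges.update(messages)
        self.snapshots.append(snap)
        return snap

graph = TemporalGraph()
graph.add_round({"a": "the answer is 12", "b": "I get 12 as well"}, [("a", "b")])
graph.add_round({"a": "still 12", "b": "actually it is 15"}, [("a", "b"), ("b", "a")])
print(len(graph.snapshots), sorted(graph.snapshots[1].edges))
```

Keeping each round as its own snapshot preserves the discrete-time ordering, so a downstream model can compare how an agent's features and connections change from one step to the next.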

Results and Analysis

The extensive experimental results demonstrate GUARDIAN's effectiveness in safeguarding LLM collaborations. The model achieves state-of-the-art accuracy, significantly improving upon existing baselines in scenarios of hallucination amplification and error injection (Figure 2).

Figure 2: Examples of safety issues and propagation dynamics in multi-agent collaboration under A2A protocol.

In hallucination-amplification scenarios, GUARDIAN improves accuracy by up to 15.4% over baselines on tasks such as mathematical reasoning. The encoder-decoder mechanism helps identify discrepancies, while the Information Bottleneck-based graph abstraction (GIB) mechanism balances compression of the interaction graph against preservation of the patterns needed for anomaly detection.
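For context, the trade-off behind an Information Bottleneck mechanism is usually written in the following generic form. This is the textbook objective, shown as an assumption about the kind of balance involved; the exact loss GUARDIAN optimizes is not given in this summary. Here $X$ stands for the input (the temporal interaction graph), $Z$ for its compressed representation, $Y$ for the information that must be preserved, and $\beta > 0$ for the trade-off weight:

$$
\min_{Z} \; I(X; Z) \;-\; \beta \, I(Z; Y)
$$

The first term penalizes retaining information about the input, which drives compression, while the second rewards keeping what is relevant to $Y$. A larger $\beta$ favors preservation over compression.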

Error injection and propagation tests further demonstrate GUARDIAN's defensive capabilities, with improvements ranging from 3.6% to 8.6% over baselines. These results are attributed to the framework's ability to track and visualize the dynamics of the interaction graph, which allows anomalies to be detected early and the system to be adjusted in response.

Implications and Future Work

The work has both theoretical and practical implications. Theoretically, it demonstrates that temporal graph modeling can mitigate safety risks in LLM-based systems without requiring changes to the underlying model architecture. Practically, GUARDIAN provides a scalable, resource-efficient approach that is adaptable to various LLM architectures and offers transparency and robustness in dynamic agent interactions.

Future research avenues include exploring the scalability of GUARDIAN across larger, more complex agent networks and investigating its application in other domains where multi-agent AI systems are pivotal. Broadening the range of anomaly types it can detect and integrating it with more diverse LLM architectures would further extend its applicability.

Conclusion

GUARDIAN addresses safety challenges in LLM multi-agent collaborations through temporal graph modeling. By detecting and mitigating hallucination amplification and error propagation, it balances security with efficiency and sets a benchmark for future developments in AI safety protocols.