- The paper introduces a model that applies Hebbian learning to hierarchically tokenize contiguous word features, emulating natural language acquisition.

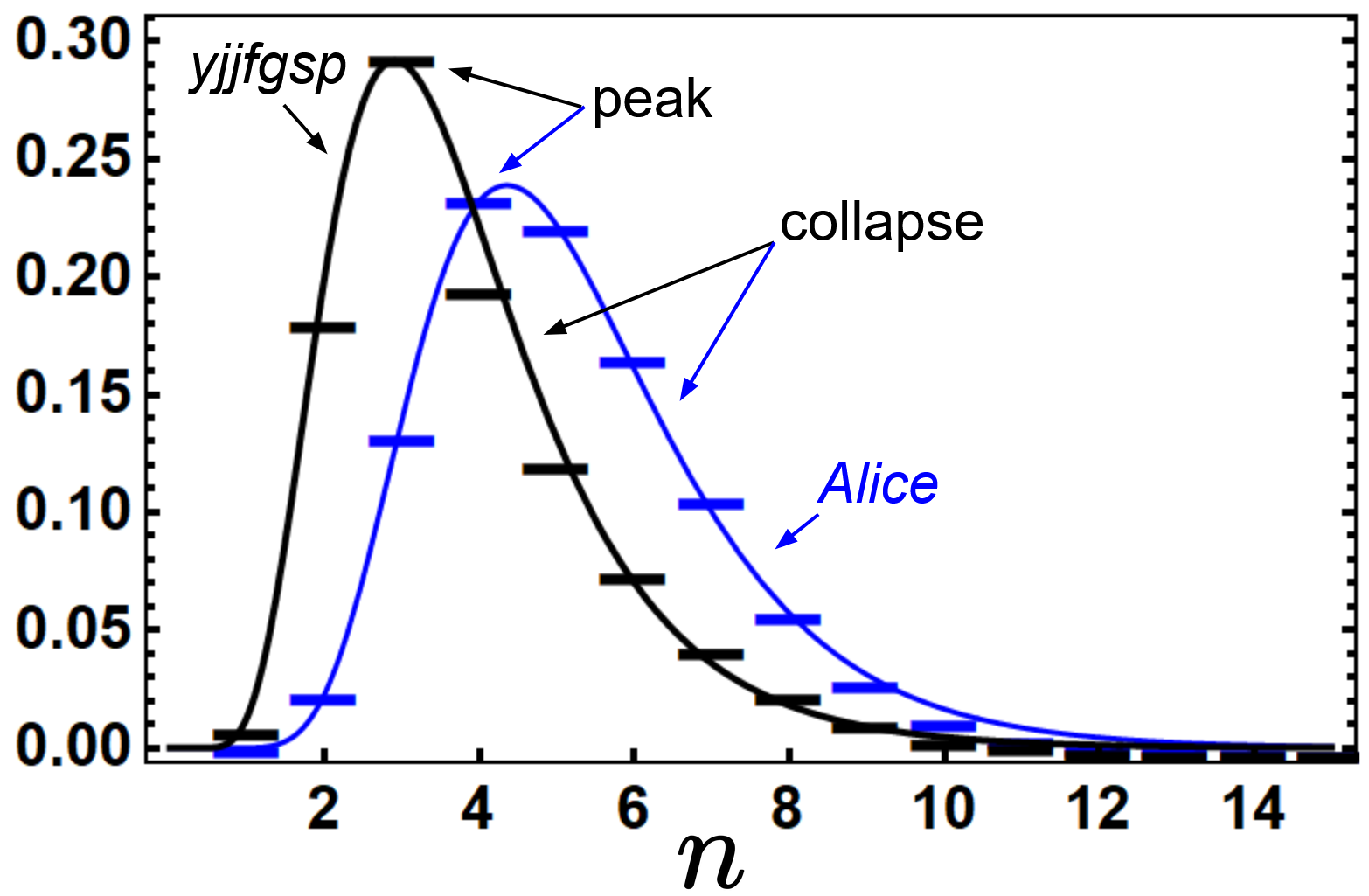

- It demonstrates that unsupervised learning on both real and random strings can yield n-gram distributions resembling the log-normal distributions found in natural languages.

- The approach leverages auxiliary neurons and replay mechanisms to manage scaling issues and reveal how neural morphology may shape language structure.

Detailed Summary of "Hebbian Learning the Local Structure of Language" (2503.02057)

Introduction to Language Acquisition and Learning Models

The paper presents the foundations of an effective model of human language learning, inspired by the local and unsupervised nature of Hebbian learning. It is motivated by cases such as the spontaneous development of Nicaraguan Sign Language (NSL), in which a new language emerged without any pre-existing template. Unlike data-intensive LLMs, which depend on vast datasets and computational power, this research asks how language can be acquired naturally, without predefined data or supervision.

Hebbian Learning Framework and Hierarchical Tokenization

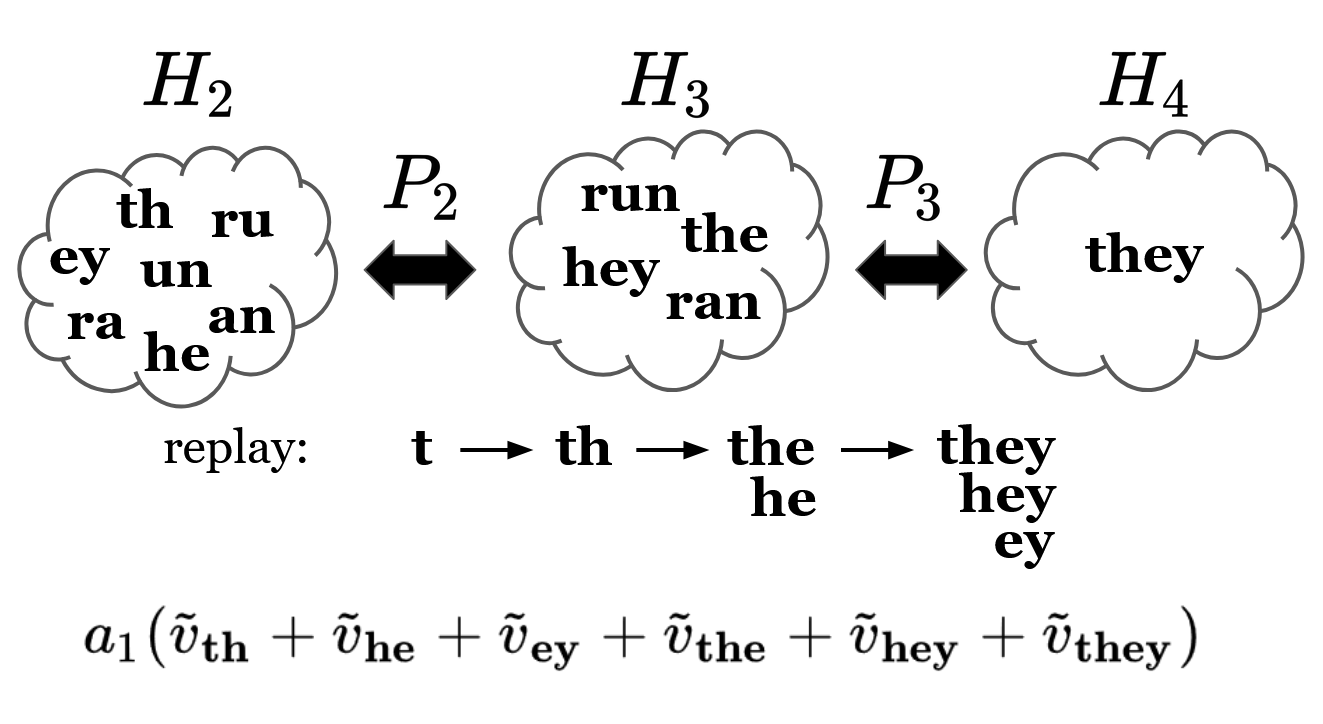

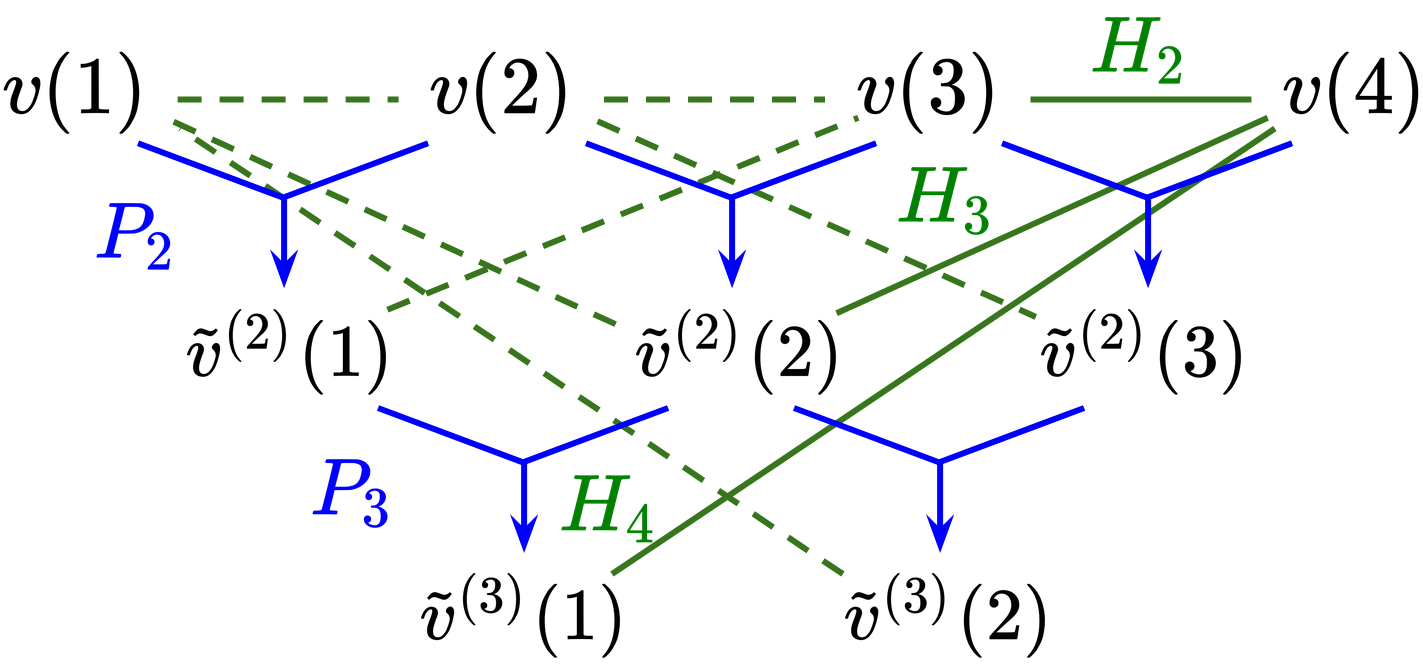

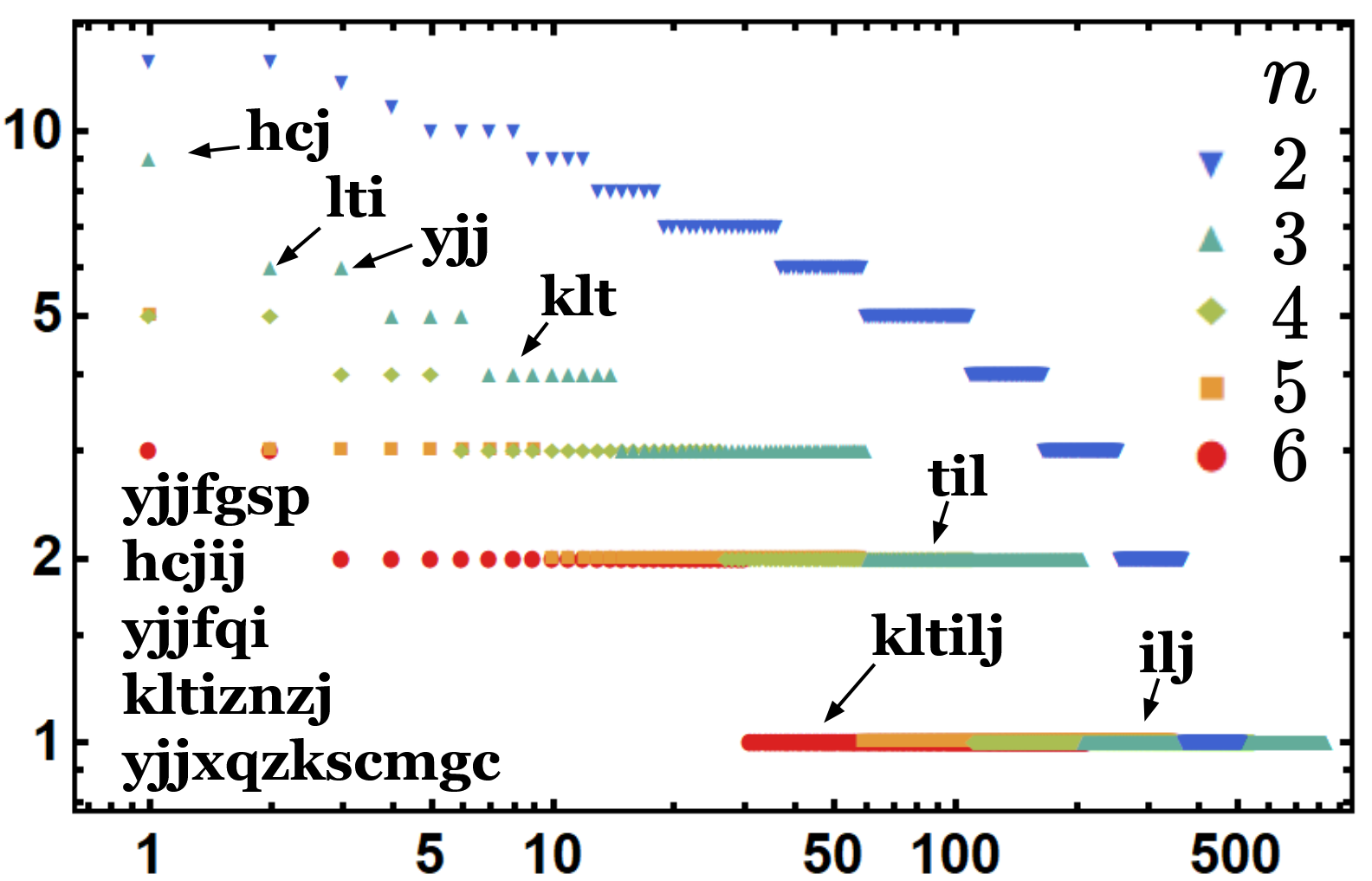

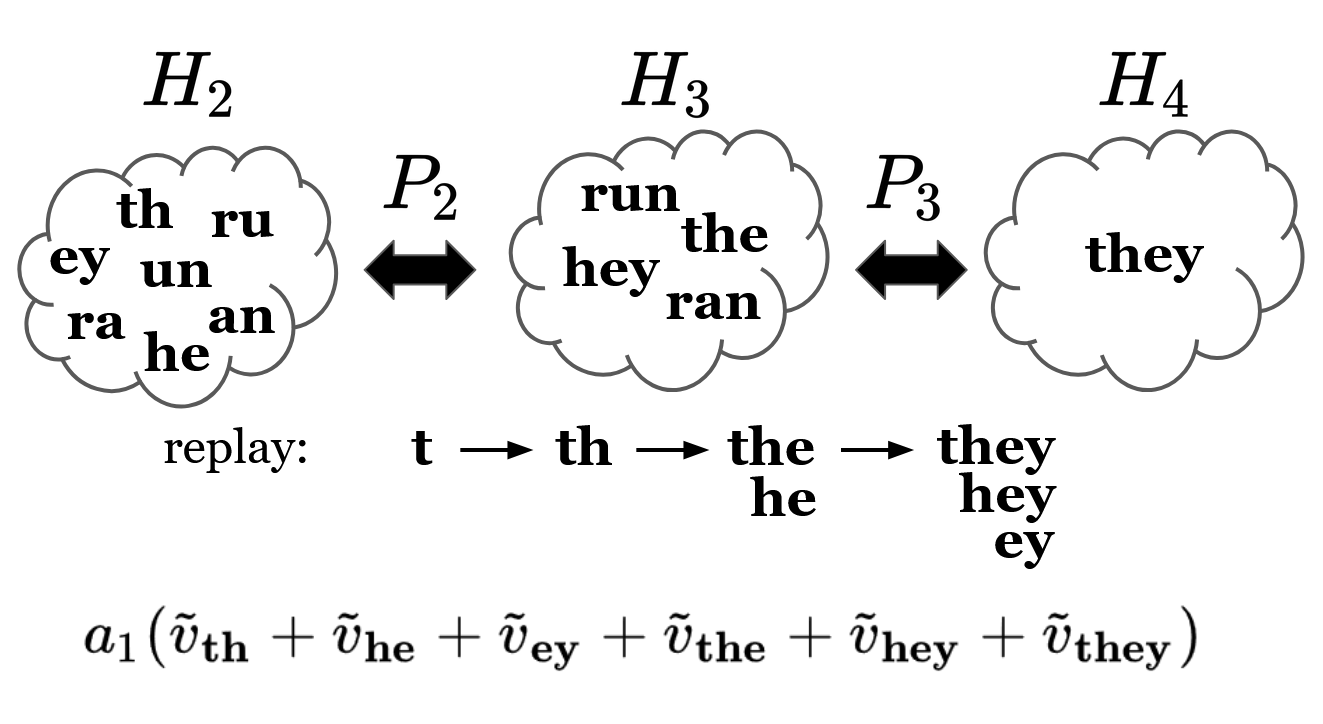

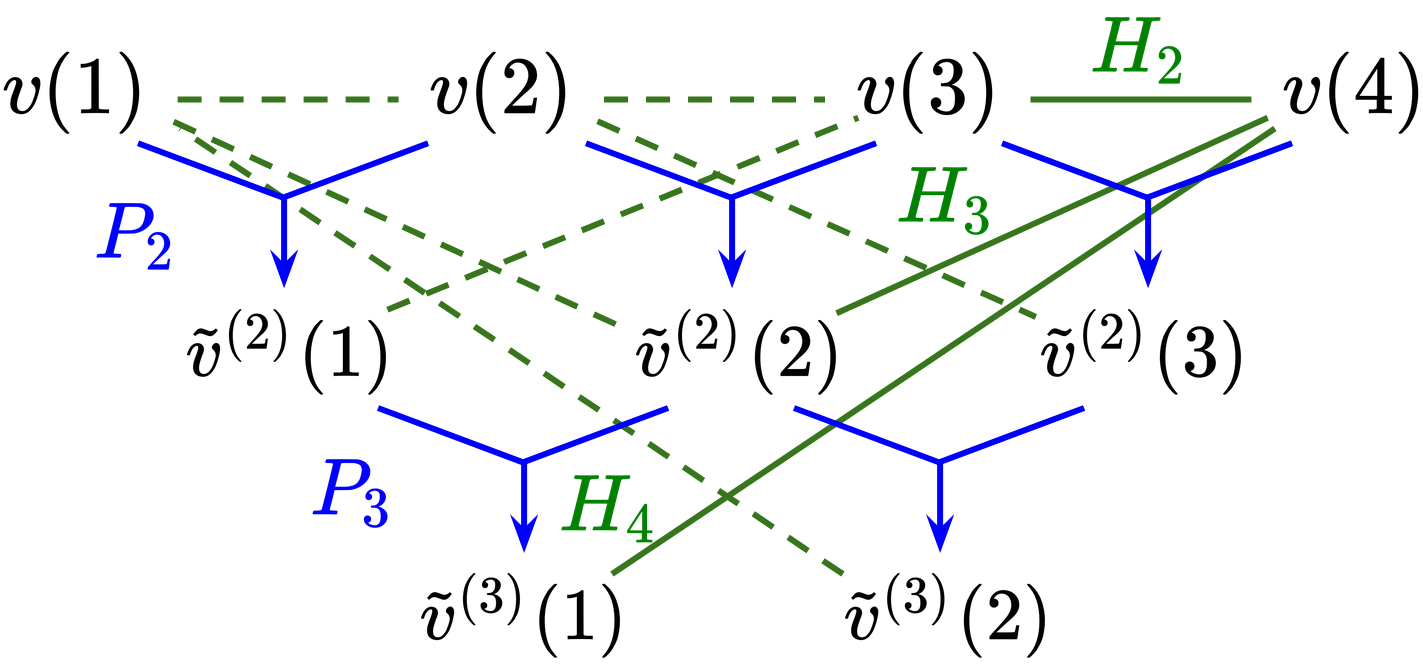

The paper builds its language learning framework on Hebbian learning, which relies only on local interactions at the level of individual neurons. It proposes a hierarchy in which neurons learn to tokenize contiguous word structures from text data. The result resembles byte-pair encoding (BPE): compound features at each level of the hierarchy are composed only of simpler structures learned at earlier levels.

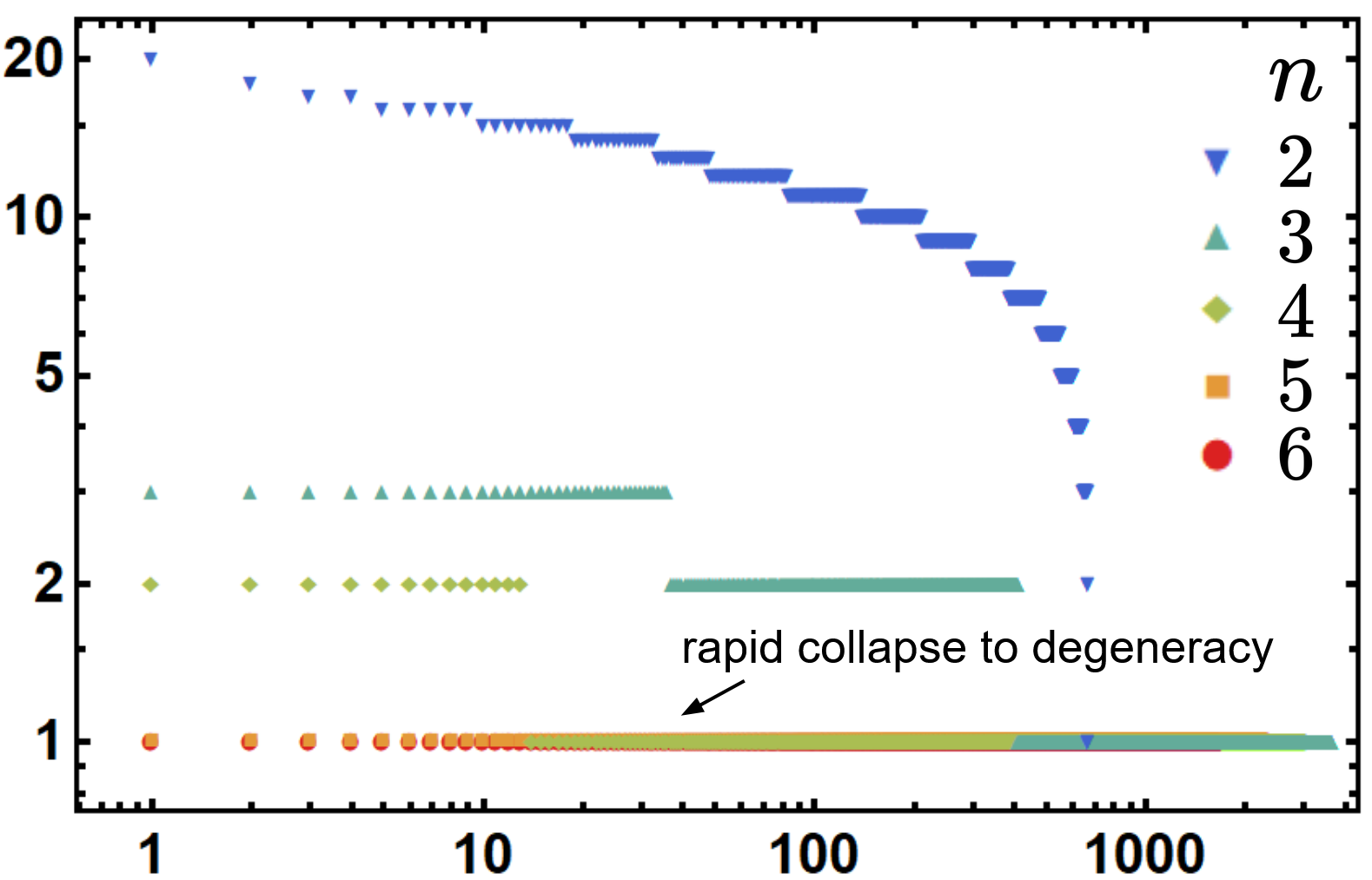

Figure 1: A hierarchy is defined by a sequence of Hamiltonians related by projectors. The features at each level of the hierarchy are learned tokens (ṽ) representing n-grams.
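The BPE-like property described above, where each level's compound tokens are built only from tokens learned at the previous level, can be illustrated with a minimal sketch. This is not the paper's Hamiltonian formulation: the function `learn_hierarchy`, the `threshold` parameter, and the use of a raw co-occurrence count as a stand-in for a Hebbian weight are all assumptions made for illustration.

```python
from collections import Counter


def learn_hierarchy(text, levels=3, threshold=2):
    """Greedy, BPE-like sketch (hypothetical, not the paper's algorithm).

    At each level, a weight is accumulated for every adjacent token pair
    (a Hebbian-style co-occurrence count), and pairs whose weight reaches
    `threshold` are merged into a compound token.
    """
    tokens = list(text)                      # level 0: single characters
    vocab_per_level = [sorted(set(tokens))]
    for _ in range(levels):
        # "Fire together, wire together": count adjacent co-occurrences.
        weights = Counter(zip(tokens, tokens[1:]))
        strong = {pair for pair, w in weights.items() if w >= threshold}
        if not strong:
            break                            # nothing left to merge
        merged, new_tokens, i = [], set(), 0
        while i < len(tokens):
            # Merge left to right; each token joins at most one pair.
            if i + 1 < len(tokens) and (tokens[i], tokens[i + 1]) in strong:
                compound = tokens[i] + tokens[i + 1]
                merged.append(compound)
                new_tokens.add(compound)
                i += 2
            else:
                merged.append(tokens[i])
                i += 1
        tokens = merged
        vocab_per_level.append(sorted(new_tokens))
    return tokens, vocab_per_level


tokens, vocab = learn_hierarchy("abababab")
print(tokens)  # ['abab', 'abab']
print(vocab)   # [['a', 'b'], ['ab'], ['abab']]
```

Because every compound is a concatenation of two tokens from the level below, any learned token can be decomposed back into the base alphabet, which is the property the summary attributes to the hierarchy.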

Analysis of Language Morphology via Random Hierarchical Learning

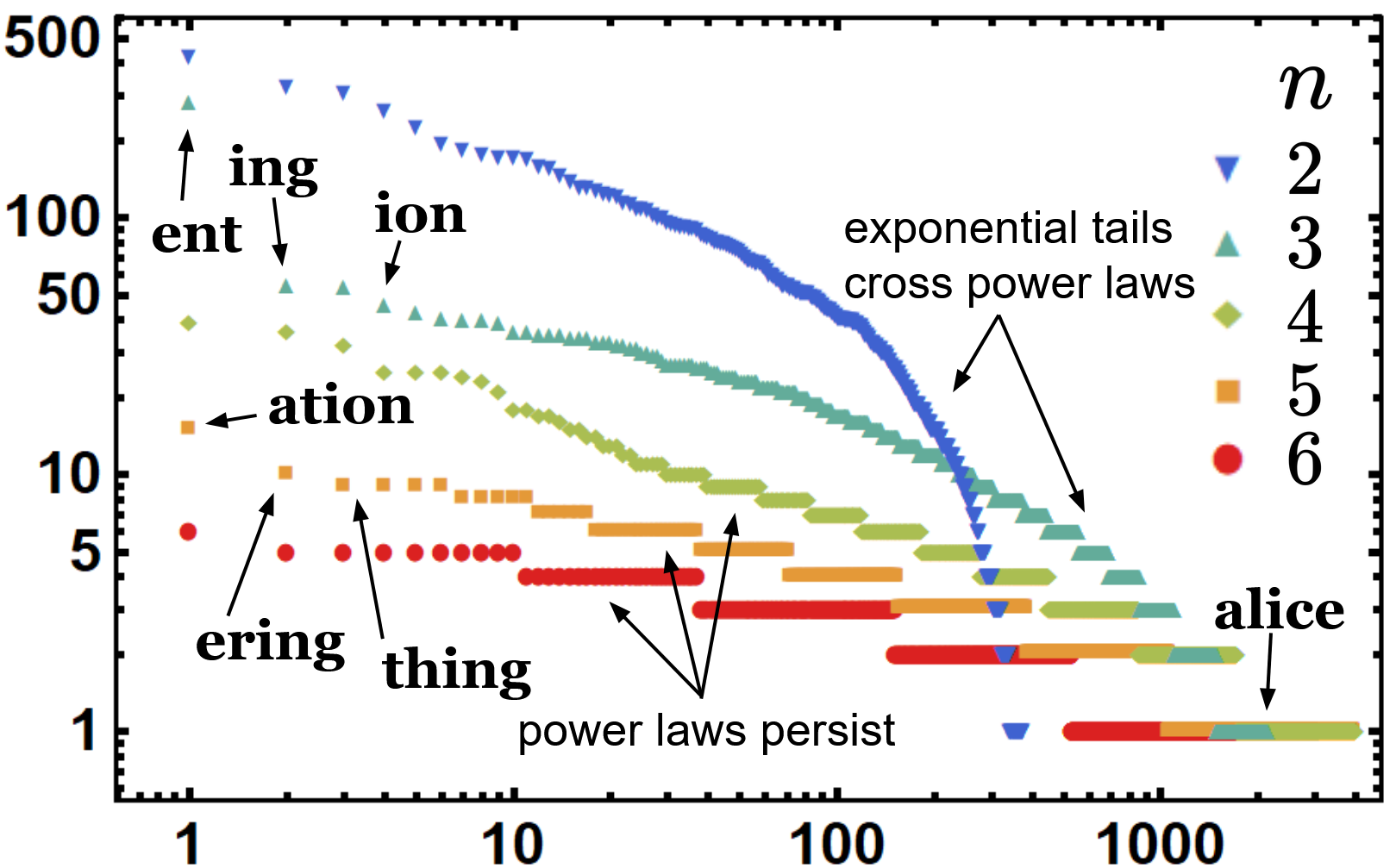

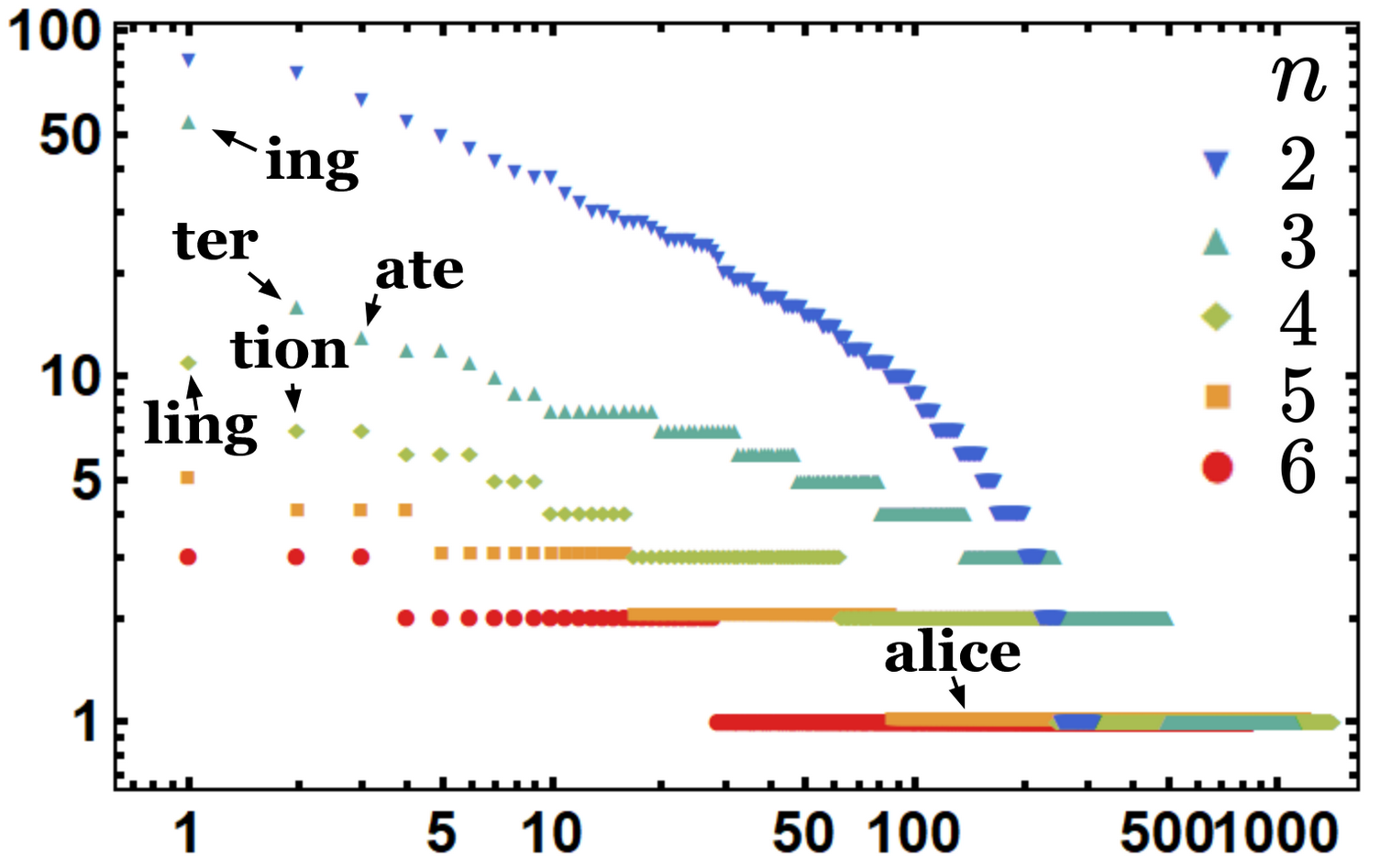

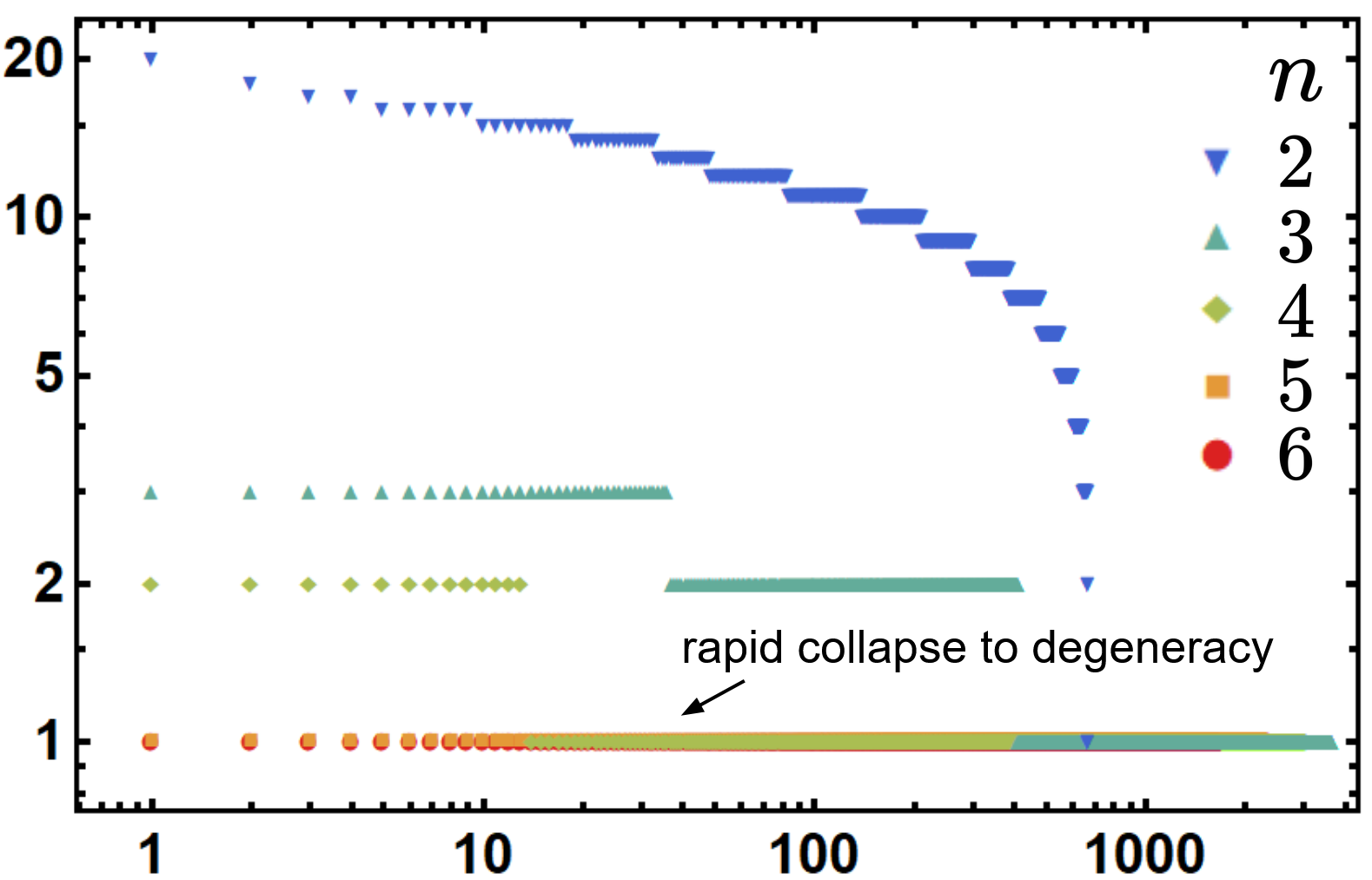

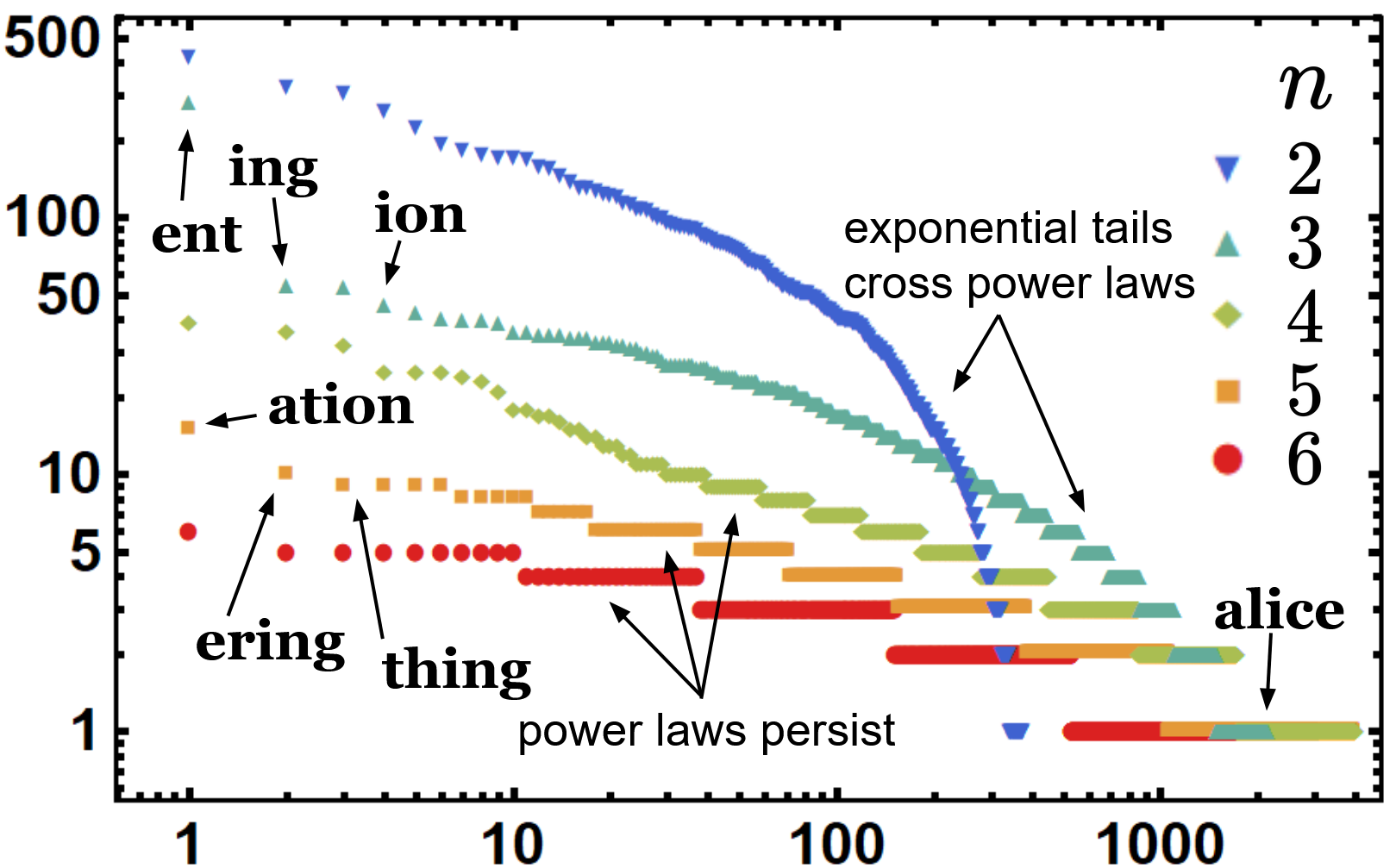

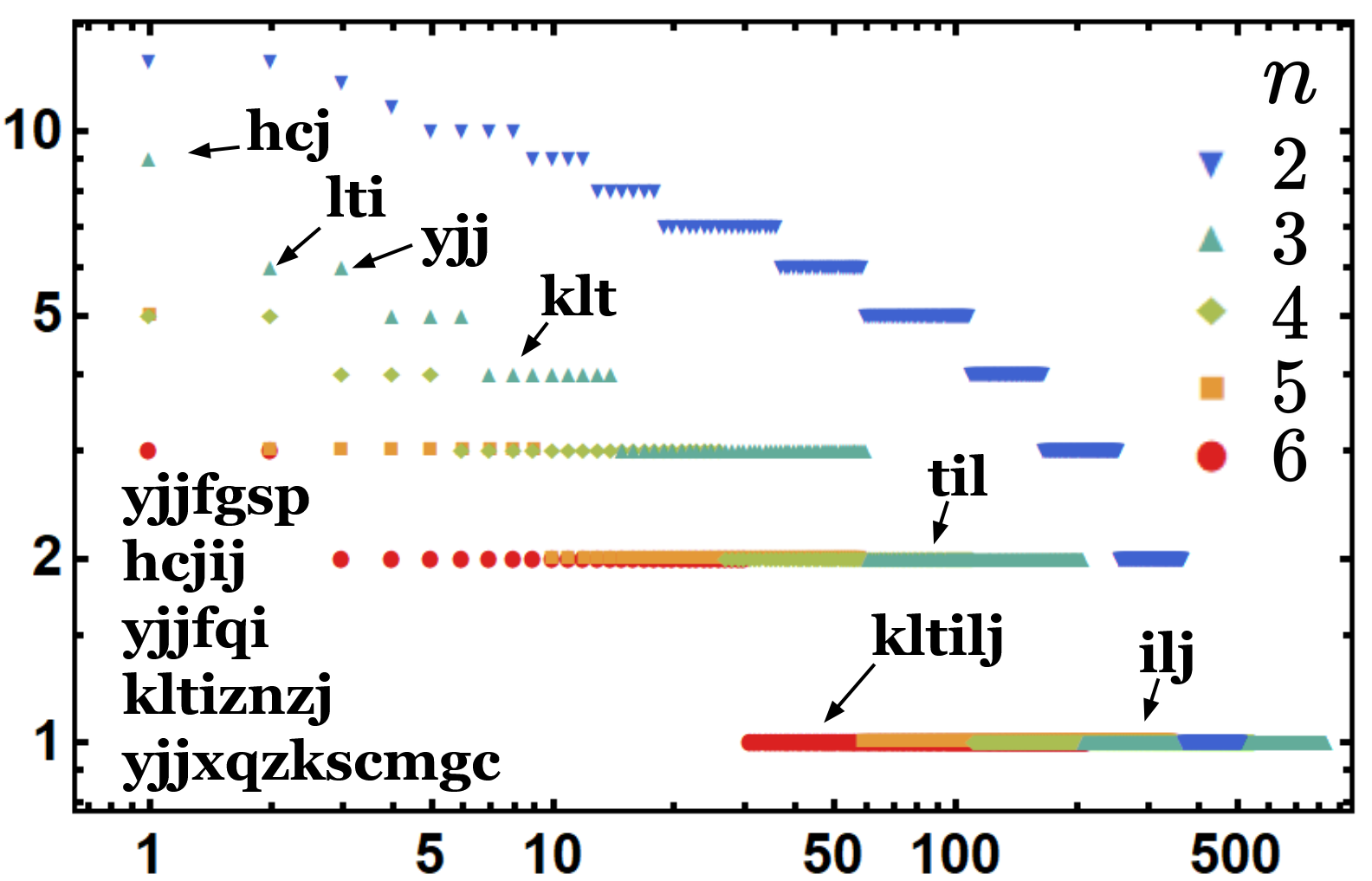

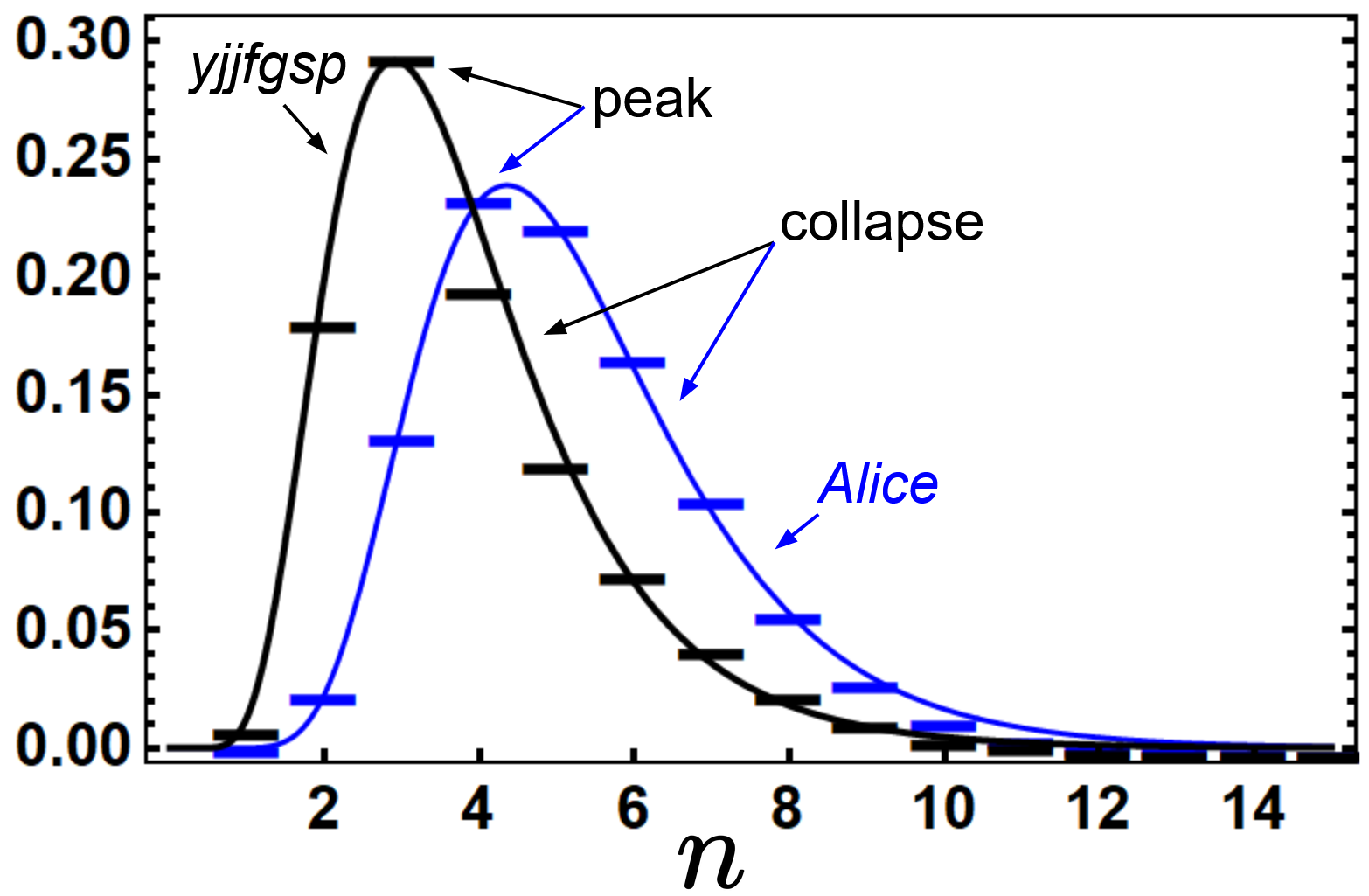

The hierarchical model exploits redundancy, which enables resource-efficient parallel learning and tokenization, and it predicts features of natural language morphology. Trained on uniform random strings, it learns semantics-free patterns that generate a random language: morphologically complex yet semantically void. Such random languages reproduce the log-normal distribution patterns observed in natural languages. The hierarchy is naturally limited by constraints on memory and processing, which prevents natural languages from evolving indefinitely long words.

Figure 2: Frequency distribution of n-grams for real-world language data.
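A quantity is log-normally distributed when its logarithm is normally distributed, so one simple way to inspect an n-gram frequency distribution is to look at the mean and standard deviation of the log counts. The sketch below only shows how such statistics could be measured on a uniform random string. It does not reproduce the paper's random language, which is generated by the trained hierarchy, and the helper names `ngram_counts` and `log_count_stats` are hypothetical.

```python
import math
import random
from collections import Counter


def ngram_counts(text, n):
    """Count every contiguous n-gram in `text`."""
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def log_count_stats(counts):
    """Mean and (population) standard deviation of the log counts.

    If the counts were log-normally distributed, these two numbers
    would be the parameters mu and sigma of that distribution.
    """
    logs = [math.log(c) for c in counts.values()]
    mean = sum(logs) / len(logs)
    var = sum((x - mean) ** 2 for x in logs) / len(logs)
    return mean, math.sqrt(var)


# Assumption: a 27-symbol alphabet and a fixed seed, chosen for illustration.
rng = random.Random(0)
alphabet = "abcdefghijklmnopqrstuvwxyz "
random_text = "".join(rng.choice(alphabet) for _ in range(20000))

for n in (1, 2, 3):
    counts = ngram_counts(random_text, n)
    mu, sigma = log_count_stats(counts)
    print(f"n={n}: distinct={len(counts)}, mu={mu:.2f}, sigma={sigma:.2f}")
```

The same two functions can be applied to a real text to compare its log-count statistics with those of the random string.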

Scaling Considerations and Constraints of the Model

The model's scalability is limited by a bottleneck: n-gram features become more complex at higher levels of the hierarchy. The paper addresses this with auxiliary neurons and replay mechanisms, which let neurons learn embeddings that reduce the complexity that must be retained while preserving previously learned tokenizations. These embeddings speed up learning and recognition, enabling compression and parallelized inference.

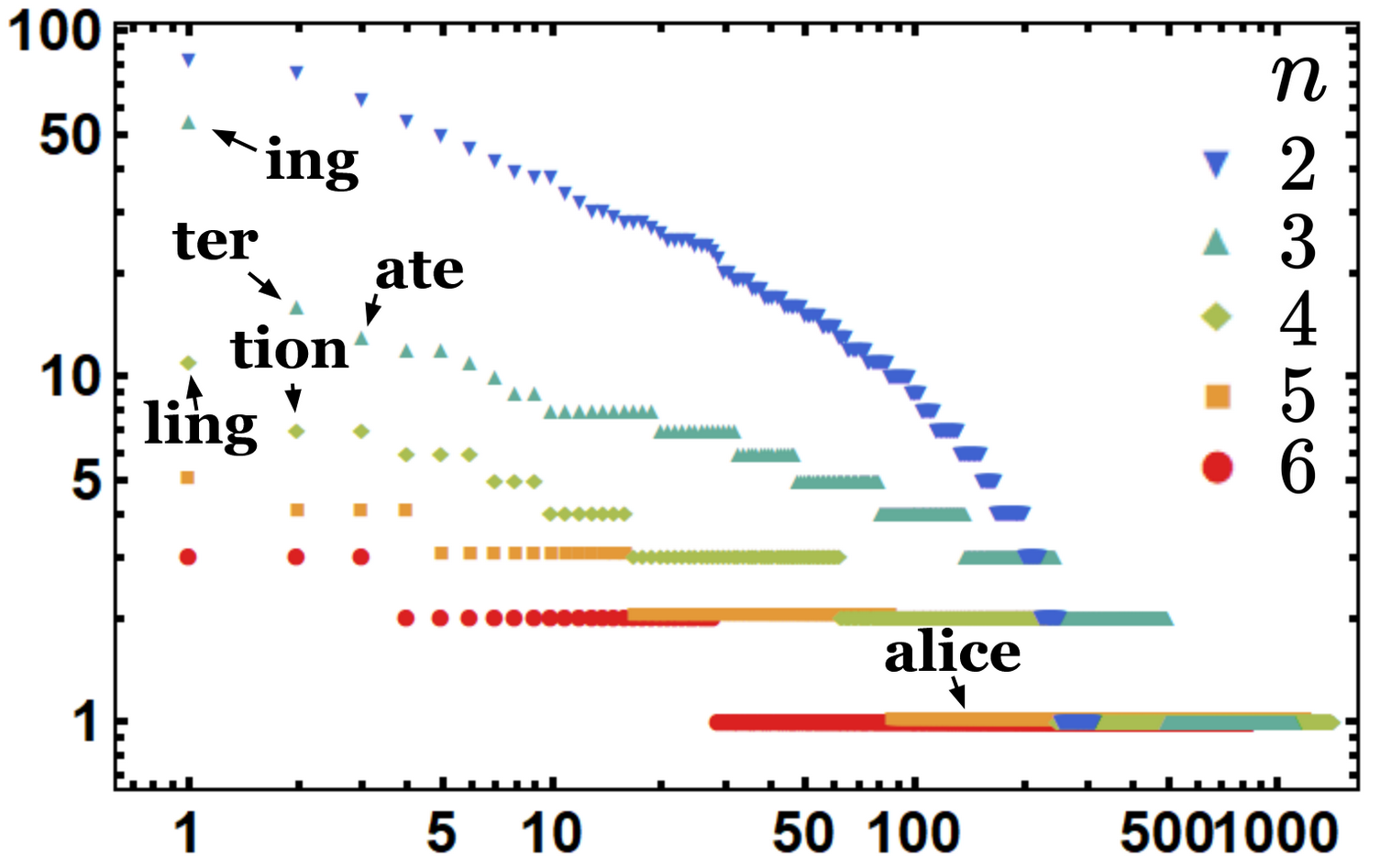

Figure 3: Comparison of the normalized n-gram distributions of Alice in Wonderland and a random language.

Implications for Neural Morphology and Language Processing

The findings suggest that language’s local structure is fundamentally linked to inherent neural coding constraints. This implies that morphology is not just a linguistic characteristic but potentially an organizing principle in neural architecture and language processing. The existence of a structured neural code suggests that the rules governing language morphology also shape perception and memory.

Conclusion

The research provides a theoretical model that can explain the microscopic origins of language structure. It challenges data-driven paradigms by offering an unsupervised framework inherently suited to exploiting the local and hierarchical morphology of language. By deriving language structure from the smallest neural interactions, the model lays a foundation for work in artificial intelligence and cognitive science that mirrors the stages of human language learning.

In summary, "Hebbian Learning the Local Structure of Language" offers a biologically plausible approach to understanding language acquisition, emphasizing a shift towards models capable of unsupervised and localized learning. This work has significant implications for future AI systems that aim to process natural language in more human-like ways.