- The paper demonstrates that token embeddings share similar global geometric orientations across large language model families via pairwise cosine similarity and Pearson correlation.

- It applies Locally Linear Embeddings and intrinsic dimension techniques to reveal detailed local structure and semantic coherence within token clusters.

- The study introduces the Emb2Emb method, illustrating the feasibility of transferring steering vectors across different models to achieve consistent behavior.

Shared Global and Local Geometry of LLM Embeddings: An Academic Summary

The paper "Shared Global and Local Geometry of LLM Embeddings" (2503.21073) investigates how similar the token embeddings of different LLMs are, in both their global and their local geometry. The authors examine how consistently these embeddings are structured across model architectures and sizes, and what that consistency makes possible, such as transferring steering vectors from one model to another.

Introduction

Framework and Objective

Token embeddings in LLMs map input tokens into a high-dimensional space and serve as the foundational representation that the network transforms to produce outputs. Previous research indicates that these models often converge to similar representations due to shared training datasets and architectural principles. This work carries out two main analyses: global geometry, which concerns the overall orientation and distribution of the embeddings, and local geometry, which concerns finer structure such as neighborhood relationships and intrinsic dimensionality.

Theoretical Background

Token embeddings are the initial representations that successive layers of a network refine. Techniques such as Locally Linear Embeddings (LLE) and intrinsic dimension estimation provide tools for exploring their local geometric properties. The paper uses these methods to examine how consistently token embeddings are structured across model families such as GPT2, Llama3, and Gemma2.

Global Geometry Analysis

Methodological Approach

To assess global similarities, the study computes pairwise cosine similarity matrices from sampled token embeddings across different models within the same family. Pearson correlation coefficients are then used to quantify the similarity in orientation of token embeddings across models of varying sizes and configurations.
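The comparison described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' code: the function names, the toy random matrices, and the choice to correlate only the upper triangle of each similarity matrix are assumptions made for the example. Real use would replace the toy matrices with embedding rows for the same sampled tokens from two models.

```python
import numpy as np

def cosine_similarity_matrix(emb):
    """Pairwise cosine similarities between the rows of emb (n_tokens, dim)."""
    norms = np.linalg.norm(emb, axis=1, keepdims=True)
    unit = emb / np.clip(norms, 1e-12, None)
    return unit @ unit.T

def global_similarity(emb_a, emb_b):
    """Pearson correlation between the pairwise cosine similarities of two models.

    Rows of emb_a and emb_b must refer to the same tokens in the same order.
    The embedding dimensions may differ, because only the (n_tokens, n_tokens)
    similarity matrices are compared.
    """
    sim_a = cosine_similarity_matrix(emb_a)
    sim_b = cosine_similarity_matrix(emb_b)
    upper = np.triu_indices(sim_a.shape[0], k=1)  # skip diagonal and duplicates
    return float(np.corrcoef(sim_a[upper], sim_b[upper])[0, 1])

# Toy stand-ins for two models (hypothetical data, not real embeddings).
rng = np.random.default_rng(0)
emb_small = rng.normal(size=(200, 16))

# Isometric embedding of the same geometry into a larger space (16 -> 32 dims).
basis, _ = np.linalg.qr(rng.normal(size=(32, 16)))  # orthonormal columns
emb_large = emb_small @ basis.T

# An unrelated embedding table with no shared structure.
emb_unrelated = rng.normal(size=(200, 32))

score_same_geometry = global_similarity(emb_small, emb_large)
score_unrelated = global_similarity(emb_small, emb_unrelated)
print(f"same geometry: {score_same_geometry:.3f}")
print(f"unrelated:     {score_unrelated:.3f}")
```

Because the score depends only on relative orientations between tokens, it is unaffected by rotations of the embedding space and does not require the two models to share an embedding dimension.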

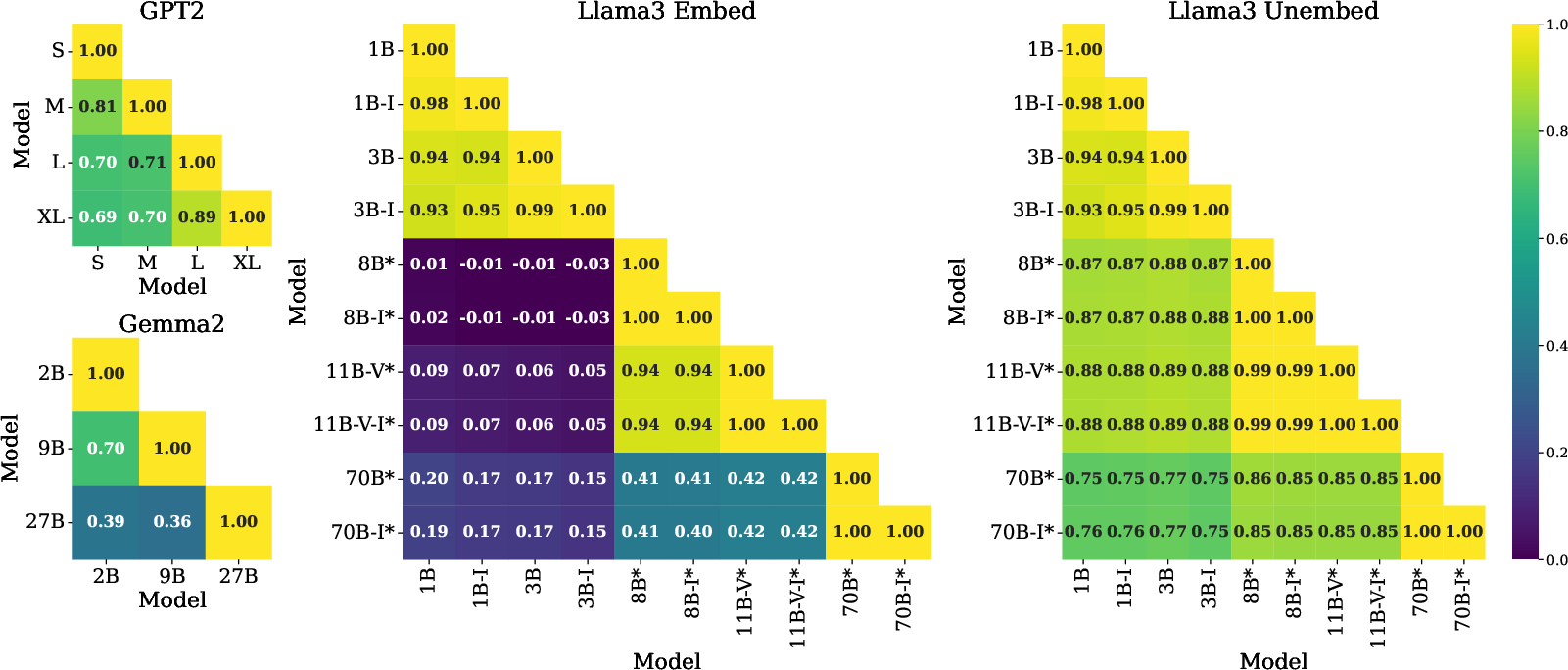

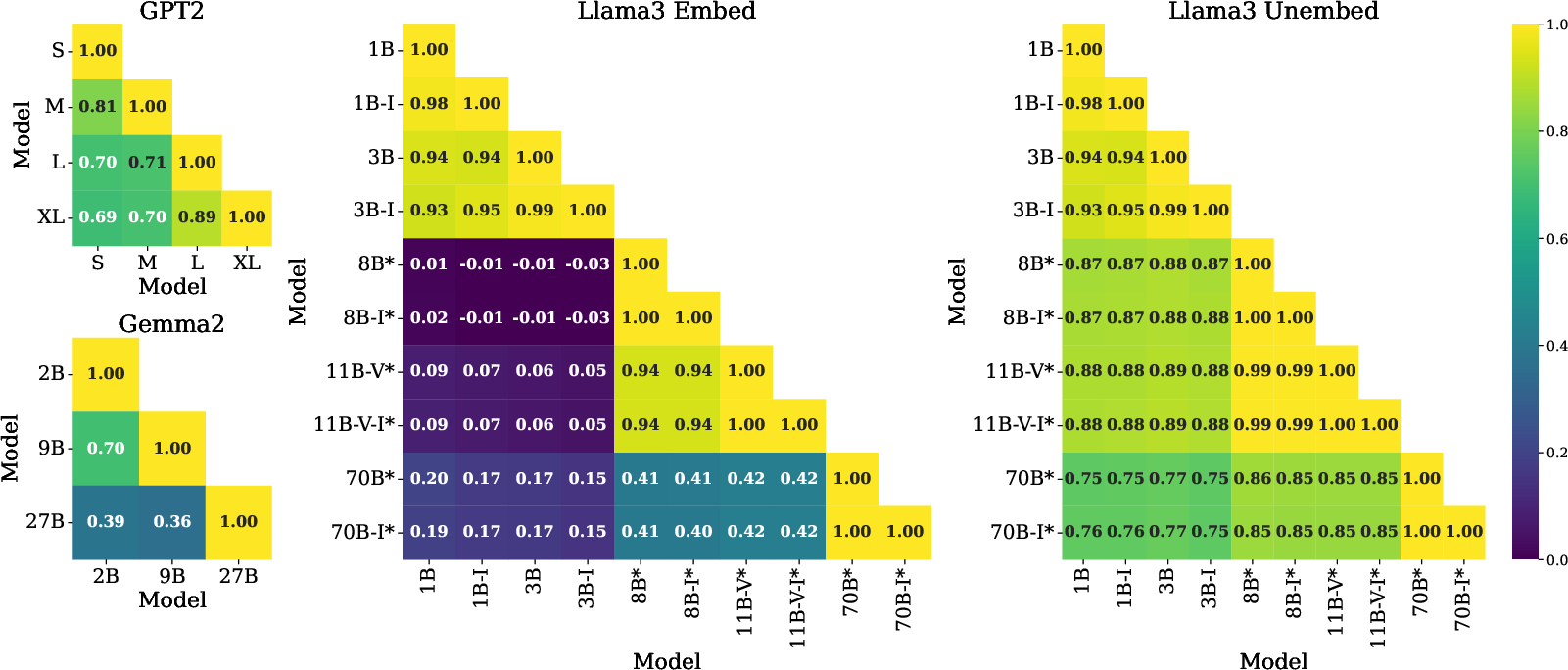

Figure 1: LLMs share similar relative orientations as denoted by high Pearson correlation scores across embedding matrices.

Observations and Results

- Token embeddings within the same family show high correlation scores, affirming similar geometric orientations even when models differ in embedding dimensions.

- Instruction-tuned models maintain high alignment with their base versions, indicating that instruction tuning does not disrupt global embedding geometry.

- For models with "untied" embeddings, where the input embedding and output unembedding matrices are separate, significant correlation persists in the unembedding space, suggesting a convergence of feature representations by the final layer.

Local Geometry Analysis

Locally Linear Embeddings (LLE)

LLE approximates each embedding vector as a weighted sum of its nearest neighbors. The resulting weights capture local structural properties, which can then be compared across models.
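A minimal sketch of the LLE reconstruction step follows, using the standard constrained least-squares formulation in which the weights for each token sum to one. The helper name `lle_weight_matrix`, the Euclidean neighbor search, the regularization constant, and the toy data are assumptions for illustration; the paper's exact neighbor metric and settings may differ.

```python
import numpy as np

def lle_weight_matrix(emb, k=5, reg=1e-3):
    """Return an (n, n) matrix whose row i holds the LLE weights of token i.

    Row i is zero except at the k nearest neighbors of token i, where it
    stores the weights that best reconstruct emb[i] as a weighted sum of
    those neighbors, subject to the weights summing to one.
    """
    n = emb.shape[0]
    weights = np.zeros((n, n))
    for i in range(n):
        dists = np.linalg.norm(emb - emb[i], axis=1)
        dists[i] = np.inf                      # a token is not its own neighbor
        neighbors = np.argsort(dists)[:k]
        offsets = emb[neighbors] - emb[i]      # (k, dim)
        gram = offsets @ offsets.T             # local Gram matrix
        gram += (reg * np.trace(gram) + 1e-12) * np.eye(k)  # stabilize the solve
        w = np.linalg.solve(gram, np.ones(k))
        weights[i, neighbors] = w / w.sum()    # enforce sum-to-one constraint
    return weights

# Toy check (hypothetical data): the same points expressed in a larger space
# via an isometry should yield the same neighbors and the same weights.
rng = np.random.default_rng(0)
emb_a = rng.normal(size=(300, 8))
basis, _ = np.linalg.qr(rng.normal(size=(16, 8)))  # orthonormal columns
emb_b = emb_a @ basis.T                            # 8 -> 16 dims, distances kept

weights_a = lle_weight_matrix(emb_a, k=5)
weights_b = lle_weight_matrix(emb_b, k=5)
max_difference = float(np.abs(weights_a - weights_b).max())
print(f"largest weight difference: {max_difference:.2e}")
```

Storing the weights as rows over the full token set makes them directly comparable between models that share a vocabulary, even when their embedding dimensions differ.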

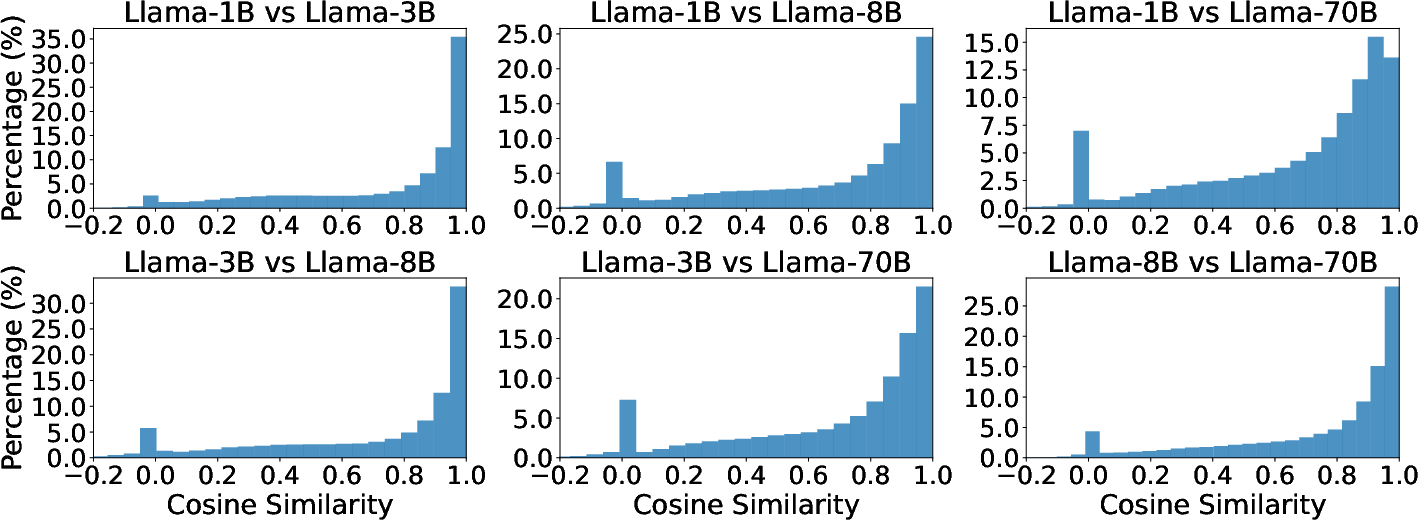

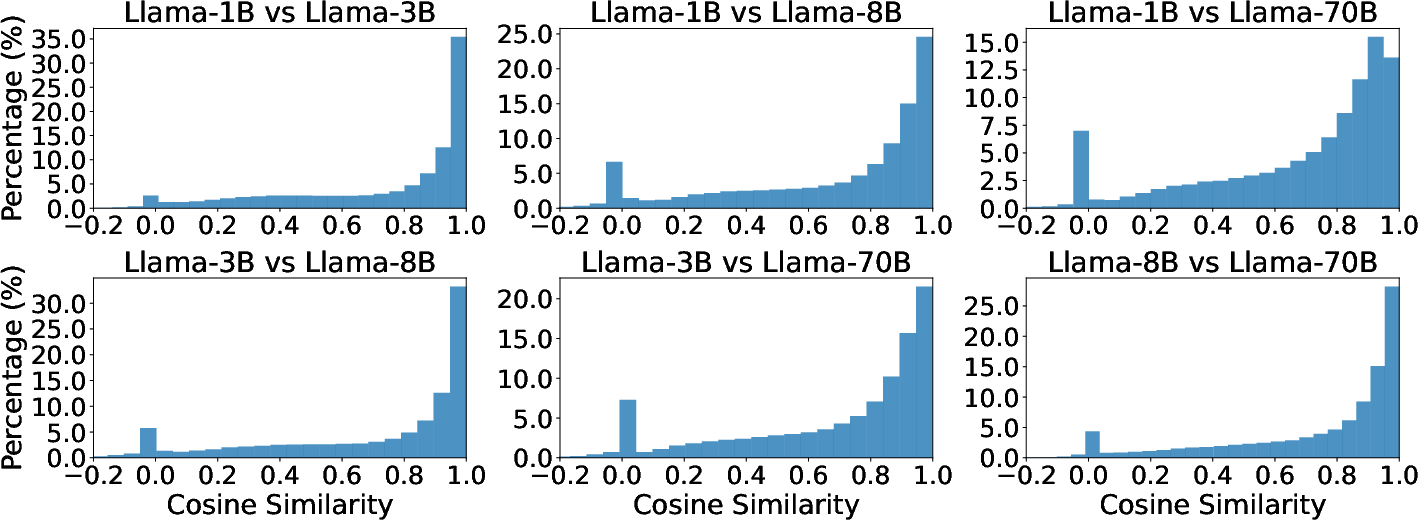

Figure 2: Visualization of LLE weight similarities showing consistent local geometry across Llama3 unembeddings.

Intrinsic Dimension Calculation

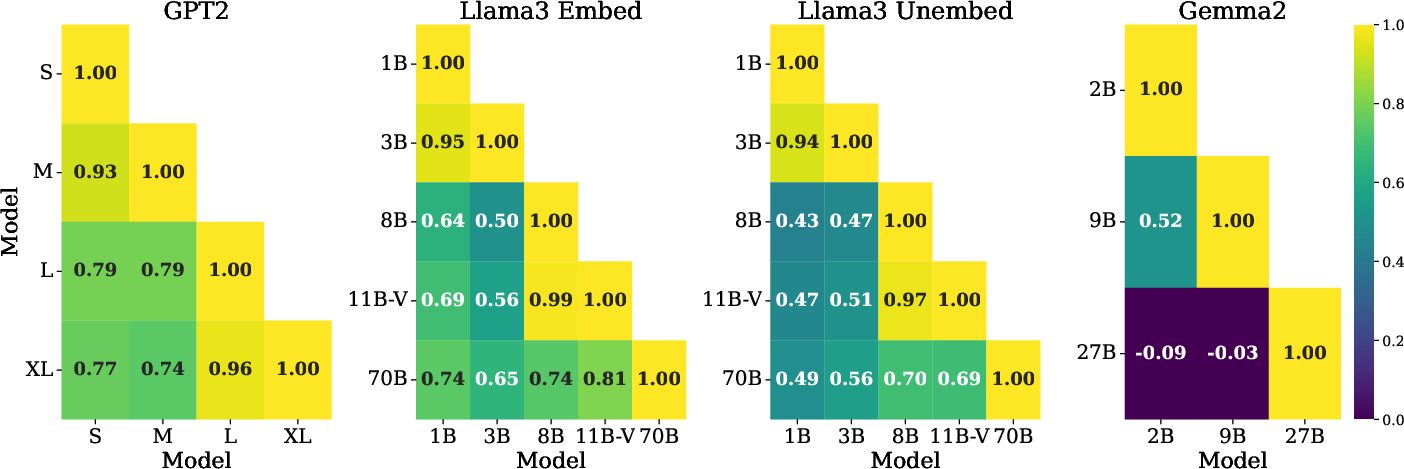

Intrinsic dimension measures capture the complexity and manifold characteristics of the embedding spaces, revealing that tokens with lower intrinsic dimensions tend to form semantically coherent clusters.
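One common way to estimate a local intrinsic dimension is to run PCA on a token's nearest neighbors and count how many principal components are needed to explain most of the variance. The sketch below takes that approach as an assumption; the summary does not state which estimator the paper uses, and the function name, neighborhood size, and 95% threshold are illustrative choices.

```python
import numpy as np

def local_intrinsic_dimension(emb, index, k=20, variance_threshold=0.95):
    """Estimate the intrinsic dimension around emb[index] with local PCA.

    Returns the number of principal components of the token's neighborhood
    (the token plus its k nearest neighbors) needed to reach the given
    fraction of explained variance.
    """
    dists = np.linalg.norm(emb - emb[index], axis=1)
    neighborhood = emb[np.argsort(dists)[: k + 1]]   # includes the token itself
    centered = neighborhood - neighborhood.mean(axis=0)
    singular_values = np.linalg.svd(centered, compute_uv=False)
    explained = singular_values ** 2
    cumulative = np.cumsum(explained) / explained.sum()
    return int(np.searchsorted(cumulative, variance_threshold) + 1)

# Toy data (hypothetical): points on a 3-dimensional subspace of a 32-dim
# space versus points that fill all 32 dimensions.
rng = np.random.default_rng(0)
latent = rng.normal(size=(500, 3))
emb_low = latent @ rng.normal(size=(3, 32))
emb_high = rng.normal(size=(500, 32))

dim_low = np.mean([local_intrinsic_dimension(emb_low, i) for i in range(10)])
dim_high = np.mean([local_intrinsic_dimension(emb_high, i) for i in range(10)])
print(f"subspace data:  {dim_low:.1f}")
print(f"full-rank data: {dim_high:.1f}")
```

The estimate is per token, so it can be averaged over a vocabulary sample to compare models or inspected individually to find low-dimensional neighborhoods.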

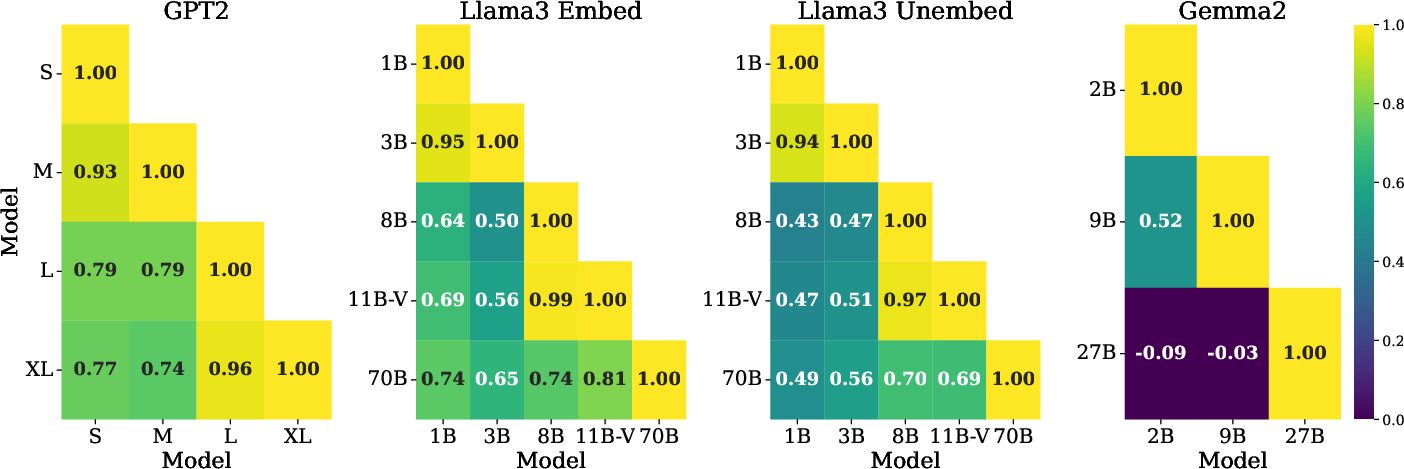

Figure 3: Similar intrinsic dimensions across various LLMs, denoting shared local geometric properties.

Empirical Insights

- Tokens with smaller intrinsic dimensions form clusters with high semantic coherence, verified through correlation with a Semantic Coherence Score (SCS) derived from ConceptNet relations.

- These findings suggest that low intrinsic dimension is a useful signal for locating semantic structure within LLM embeddings.

Emb2Emb: Transferring Steering Vectors

Conceptual Overview

Emb2Emb uses the shared geometry to support interpretability applications such as transferring steering vectors between models. Steering vectors, which are used to control model outputs, can be mapped from one model to another with a linear transformation learned between the two models' embedding spaces.
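The idea of a learned linear mapping can be sketched as an ordinary least-squares fit between two embedding tables whose rows are aligned by token. This is an illustration under stated assumptions, not the authors' implementation: the function names, the synthetic "models", and the absence of any rescaling of the transferred vector are choices made for the example.

```python
import numpy as np

def fit_linear_map(source_emb, target_emb):
    """Least-squares matrix A such that source_emb @ A approximates target_emb.

    source_emb: (n_tokens, d_source), target_emb: (n_tokens, d_target),
    with row i of both tables belonging to the same token.
    """
    linear_map, *_ = np.linalg.lstsq(source_emb, target_emb, rcond=None)
    return linear_map  # shape (d_source, d_target)

def transfer_steering_vector(vector, linear_map):
    """Map a steering vector from the source space into the target space."""
    return vector @ linear_map

def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

# Synthetic stand-ins (hypothetical): the target embeddings are a noisy
# linear image of the source embeddings, with a different dimension.
rng = np.random.default_rng(0)
source_emb = rng.normal(size=(500, 16))
true_map = rng.normal(size=(16, 24))
target_emb = source_emb @ true_map + 0.01 * rng.normal(size=(500, 24))

linear_map = fit_linear_map(source_emb, target_emb)

steering_source = rng.normal(size=16)           # vector found in the source model
steering_target = transfer_steering_vector(steering_source, linear_map)
agreement = cosine(steering_target, steering_source @ true_map)
print(f"cosine with ideal transfer: {agreement:.4f}")
```

In practice the transferred vector would be added to the target model's activations and judged by the behavior it induces, which this toy check does not cover.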

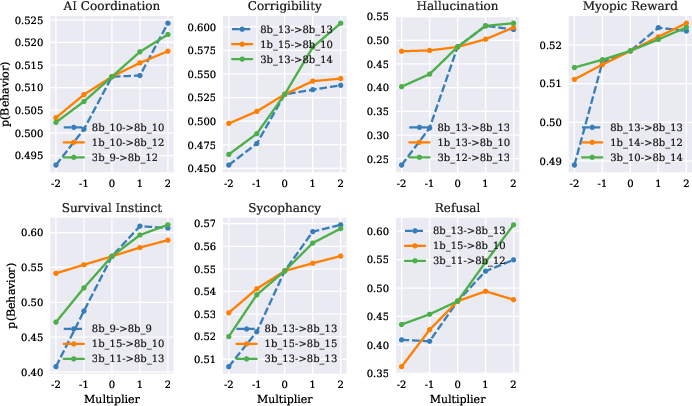

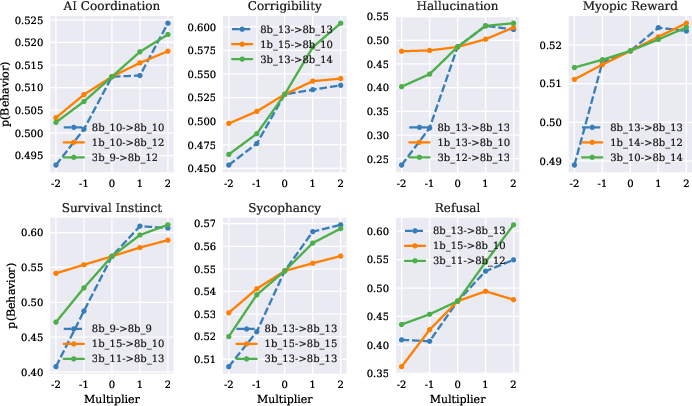

Figure 4: Transfer of steering vectors showing consistent control of behavior across Llama3 models despite different dimensions.

Practical Implications

Experiments across the Llama3 and GPT2 families show that steering vectors can be transferred without losing their intended behavioral effect, which opens new avenues for model control.

Conclusion

The study provides evidence of both global and local geometric similarities in token embeddings across LLMs, paving the way for model interoperability and control strategies such as Emb2Emb. Future research may explore the implications of embedding similarities for model training efficiency, domain adaptation, and data-driven task fine-tuning. Together, these results position embedding geometry as a practical foundation for developing and applying language models.