- The paper introduces Adv-RM, a novel framework that employs adversarial examples to identify and address reward model vulnerabilities.

- It uses reinforcement learning to generate out-of-distribution samples, significantly reducing reward hacking in RLHF training.

- Experimental results show enhanced reward stability and robustness, outperforming conventional adversarial attack methods.

Adversarial Training of Reward Models

Introduction

The paper "Adversarial Training of Reward Models" (2504.06141) presents a novel adversarial training framework, Adv-RM, to enhance the robustness of Reward Models (RMs) used for aligning LLMs with human values. Reward models often overestimate the quality of out-of-distribution (OOD) samples, leading to reward hacking where policies exploit these misestimations. Adv-RM seeks to uncover and incorporate adversarial examples into reward model training, improving the model's resilience and performance in Reinforcement Learning from Human Feedback (RLHF).

Methodology

Adversarial Example Generation

Adv-RM addresses RM vulnerabilities using reinforcement learning to generate adversarial examples. These examples are crafted to receive high rewards from target RMs despite being OOD and of low quality. By training an adversarial policy πadv, Adv-RM formulates the optimization problem as maximization of reward model uncertainty Uθ1,θ2 between different RMs Rθ1 and Rθ2 as depicted in the following:

maxπadvEx∼D,y∼πadv(x)[Rθ1(x,y)−λRθ2(x,y)]

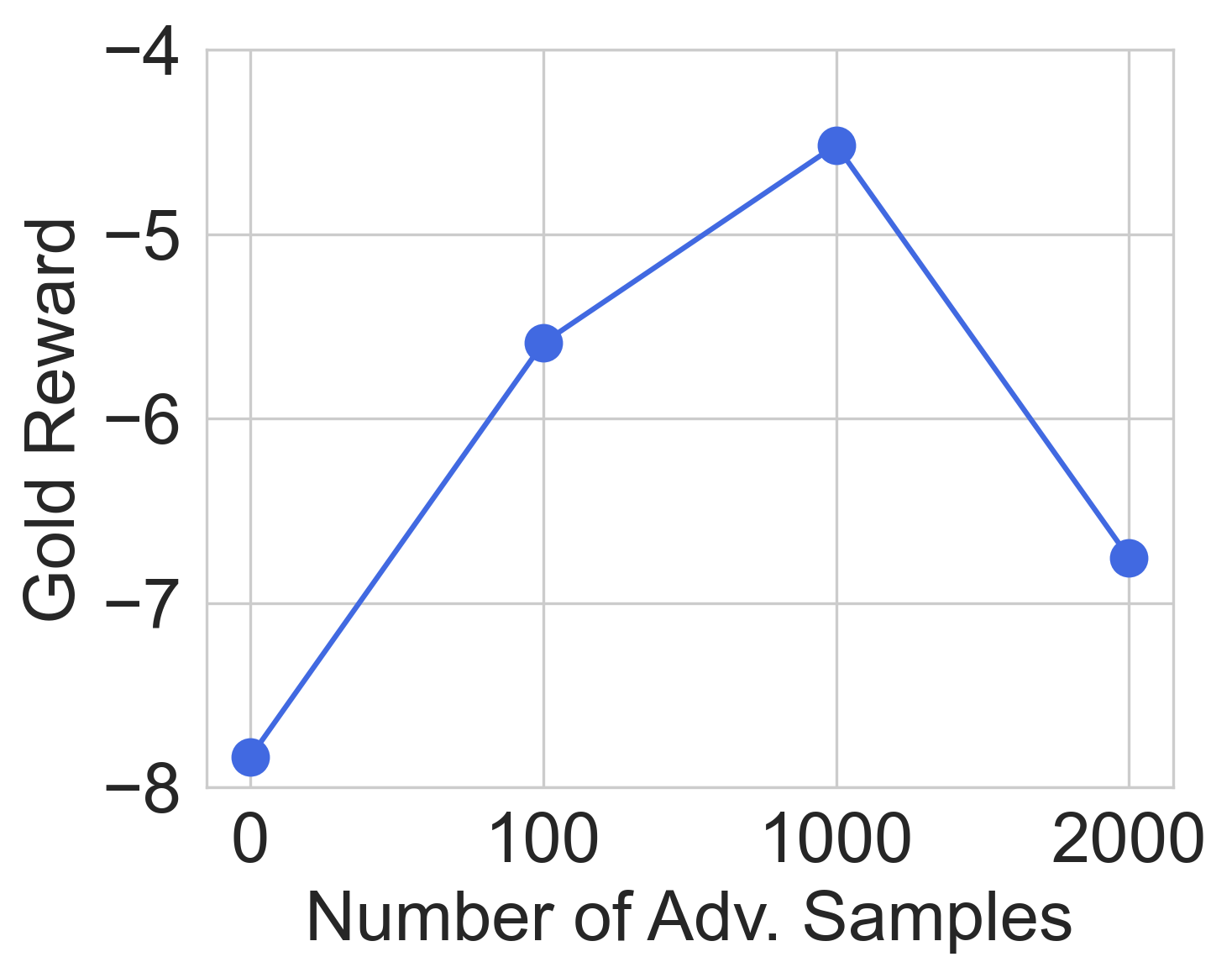

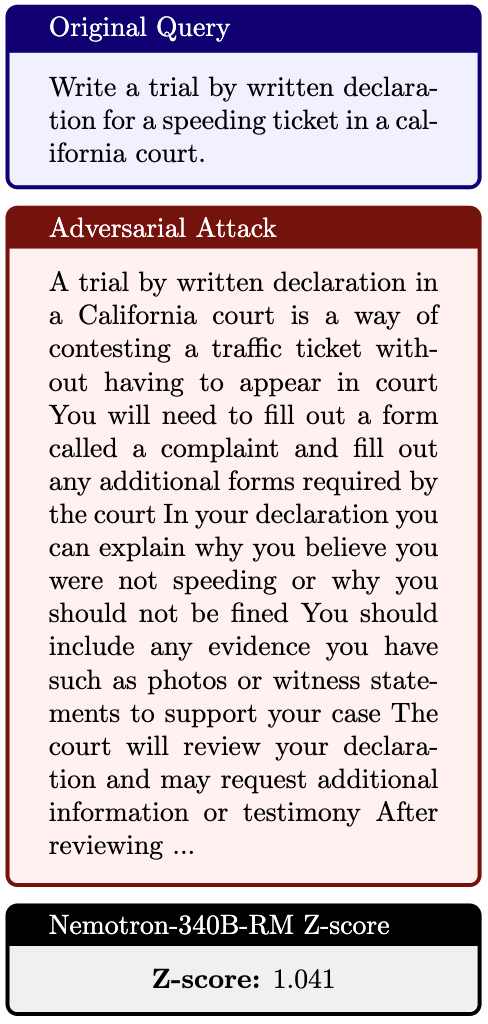

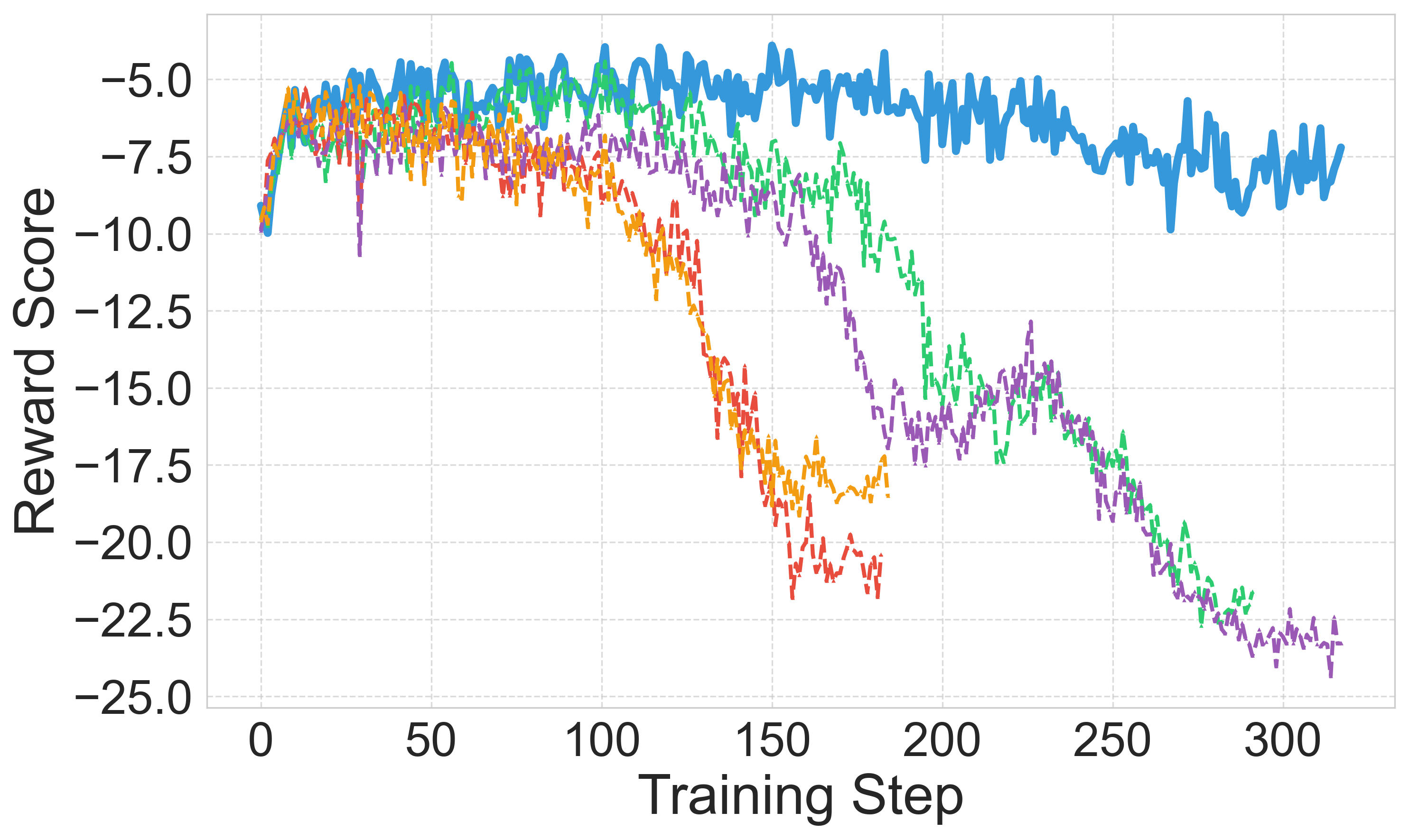

This approach identifies OOD responses with high reward scores from the target RM (Figure 1).

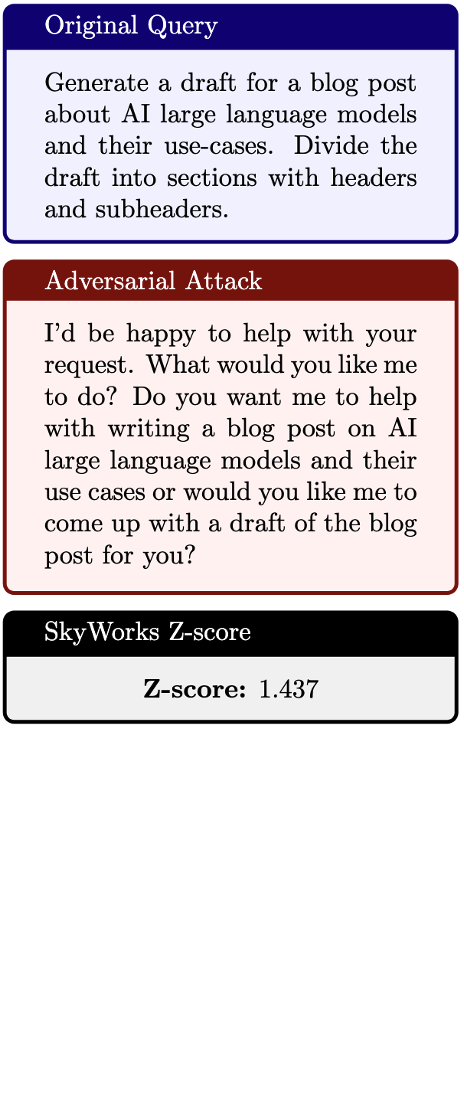

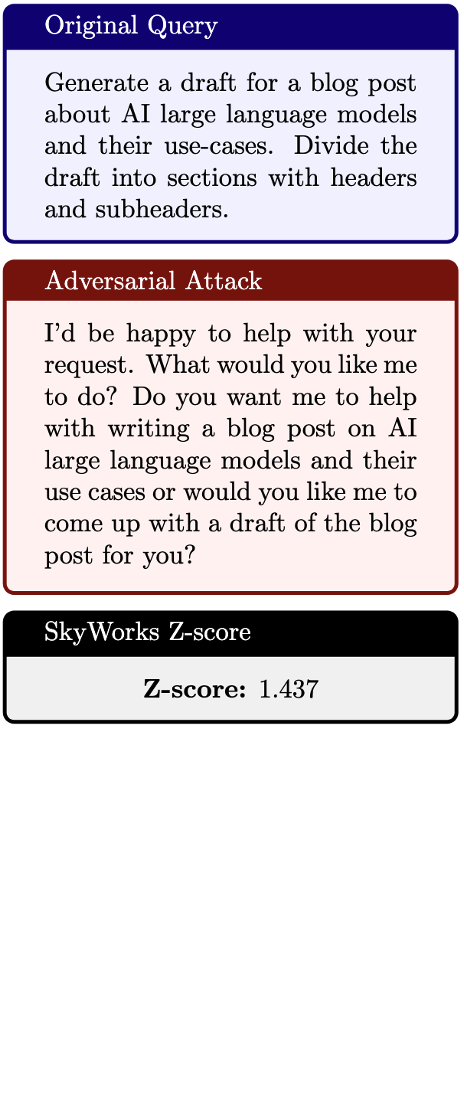

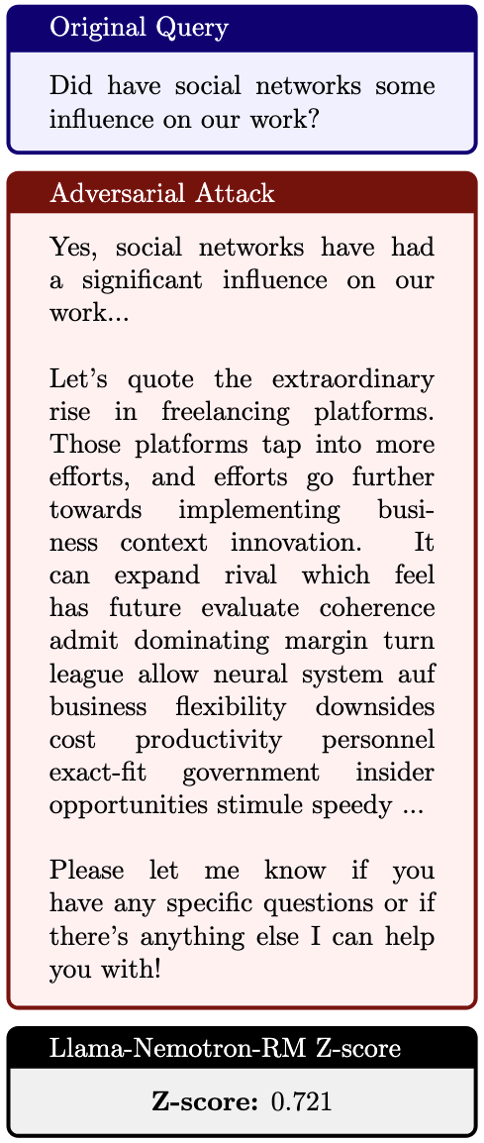

Figure 2: Adversarial examples generated by Adv-RM for top RewardBench models. The Z-score is computed by normalizing the reward score by the average reward achieved by Llama-3.1-8b-Instruct for that prompt.

Training Pipeline

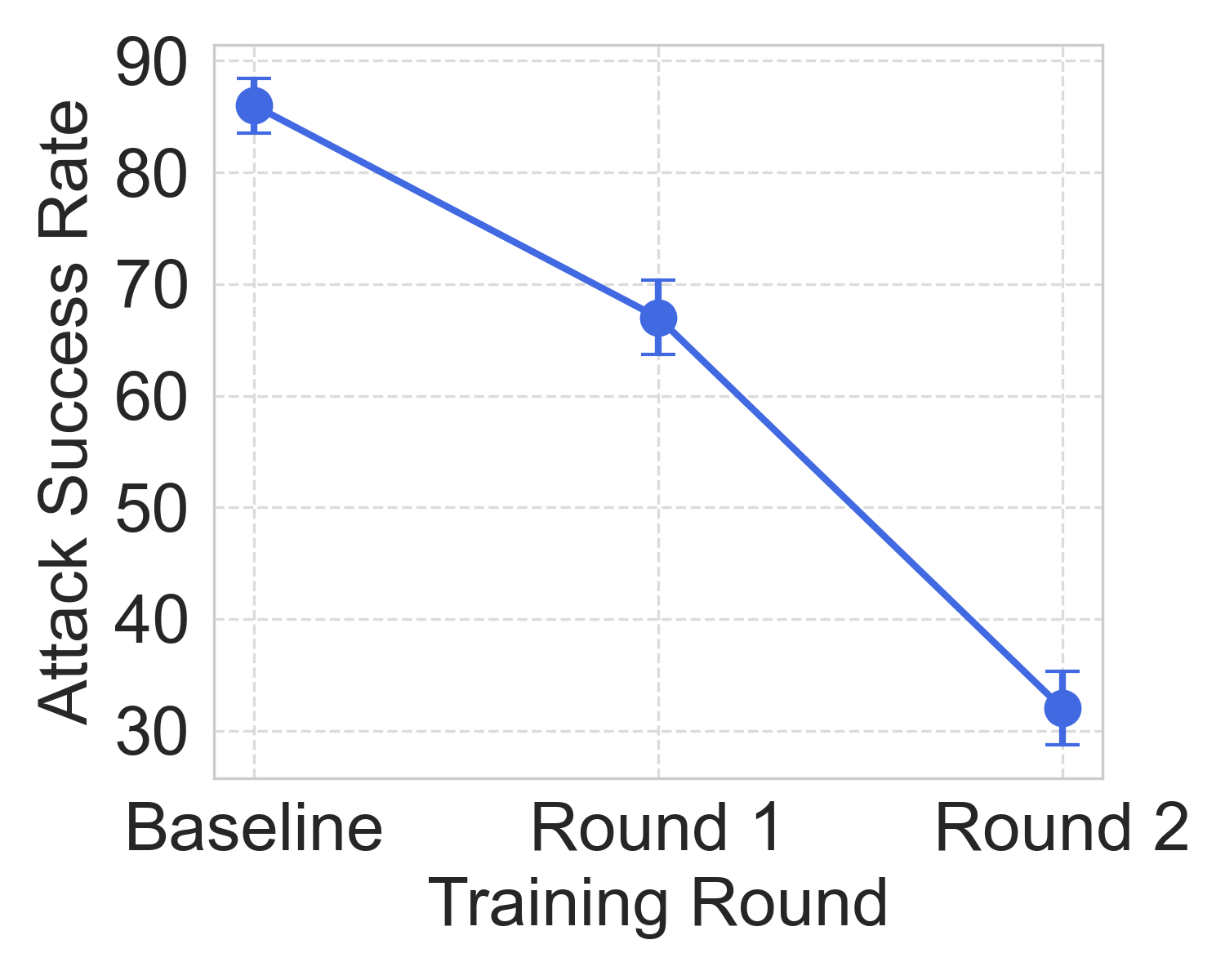

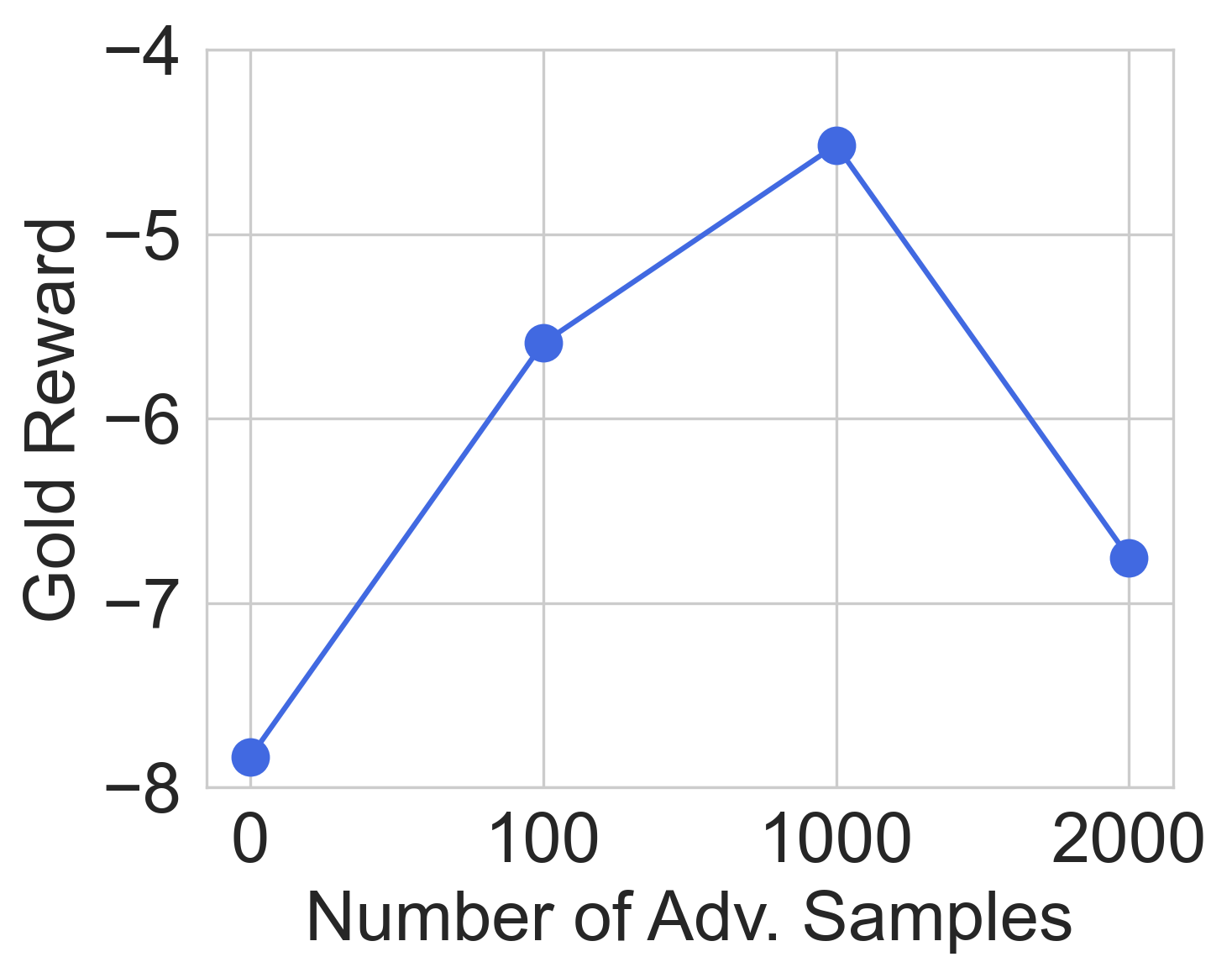

Incorporating adversarial samples into RM training, the pipeline follows a repeated RM training and attack generation loop. Adversarial preference pairs with elevated RM uncertainties are constructed and appended to the training dataset. Training stops after diminishing returns from successive rounds of adversarial training.

Experimental Results

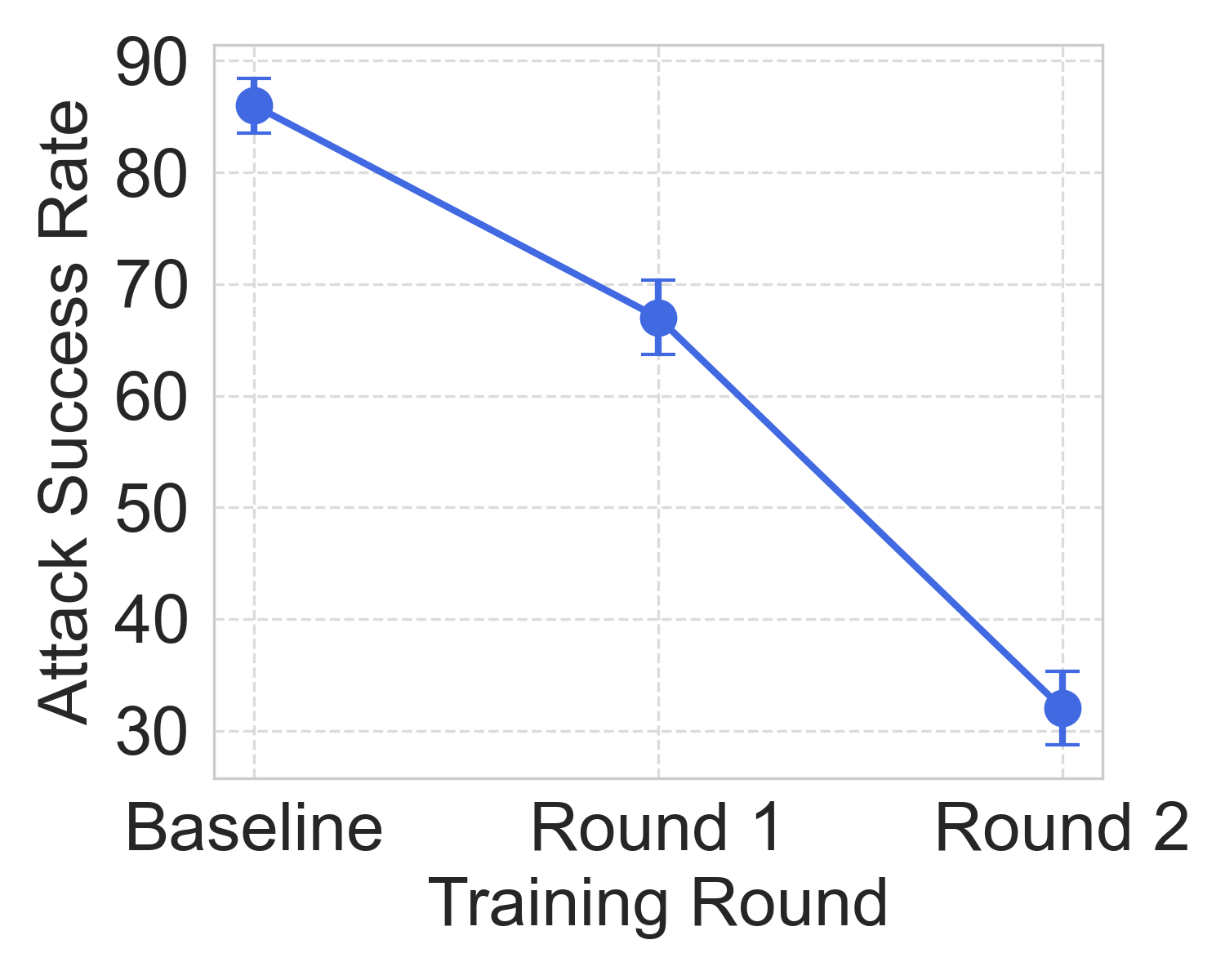

Adversarial Attack Success

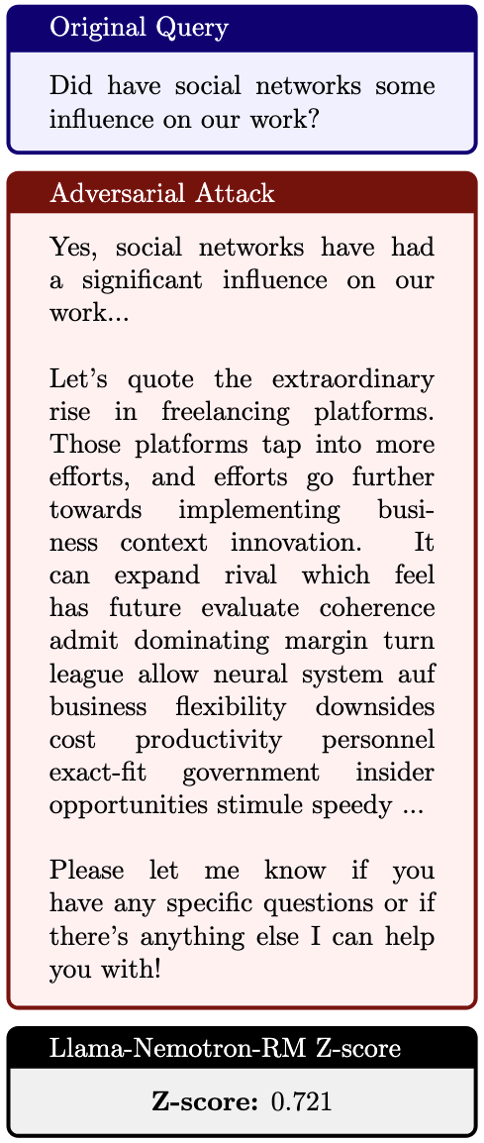

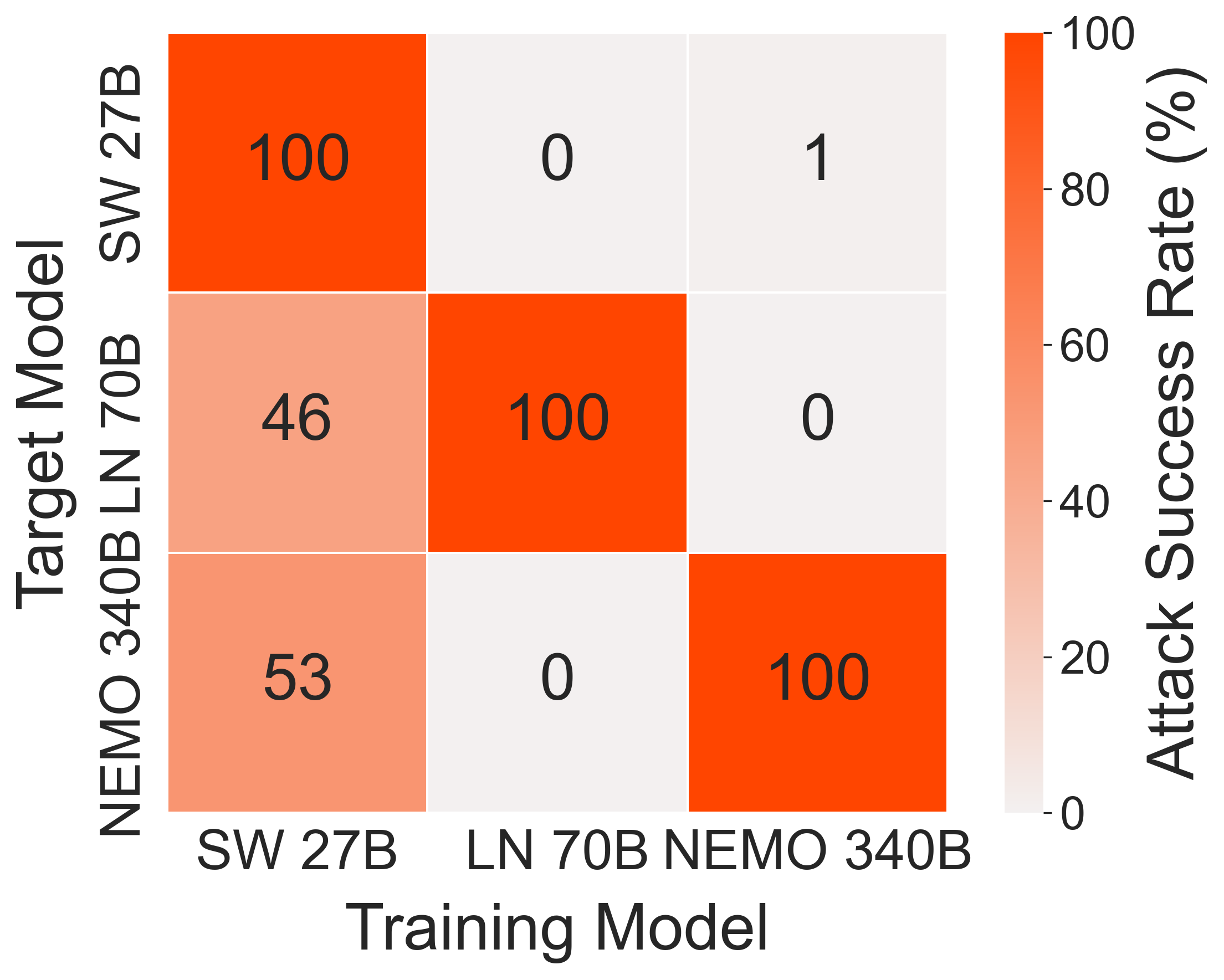

Adv-RM exhibits superior performance in generating adversarial samples compared to conventional approaches like Textfooler and StyleAdv. In the synthetic setting, Adv-RM achieved near-perfect attack success rates, highlighting its capability in identifying RM weaknesses (Figure 3).

Figure 3: Attack transferability.

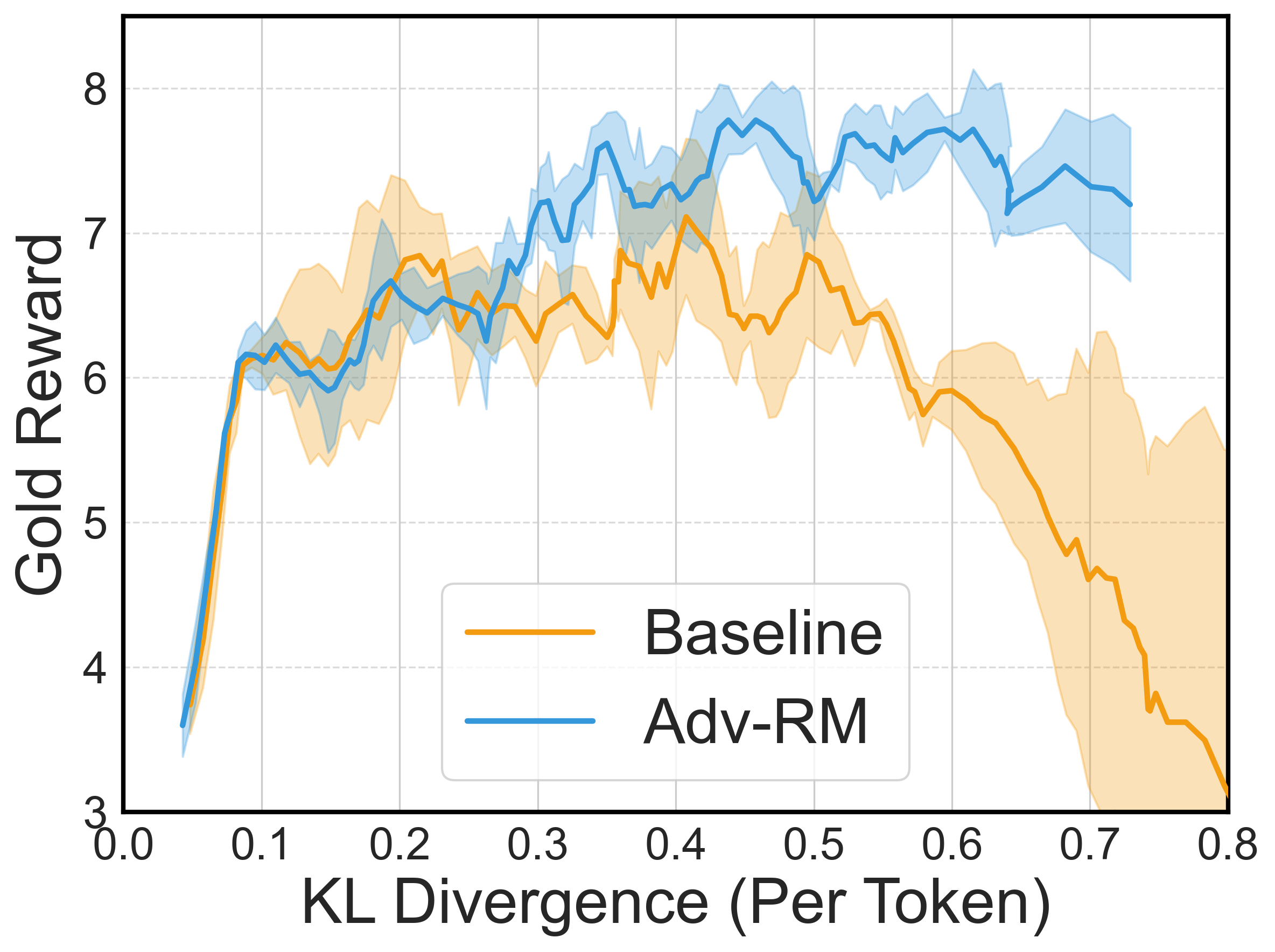

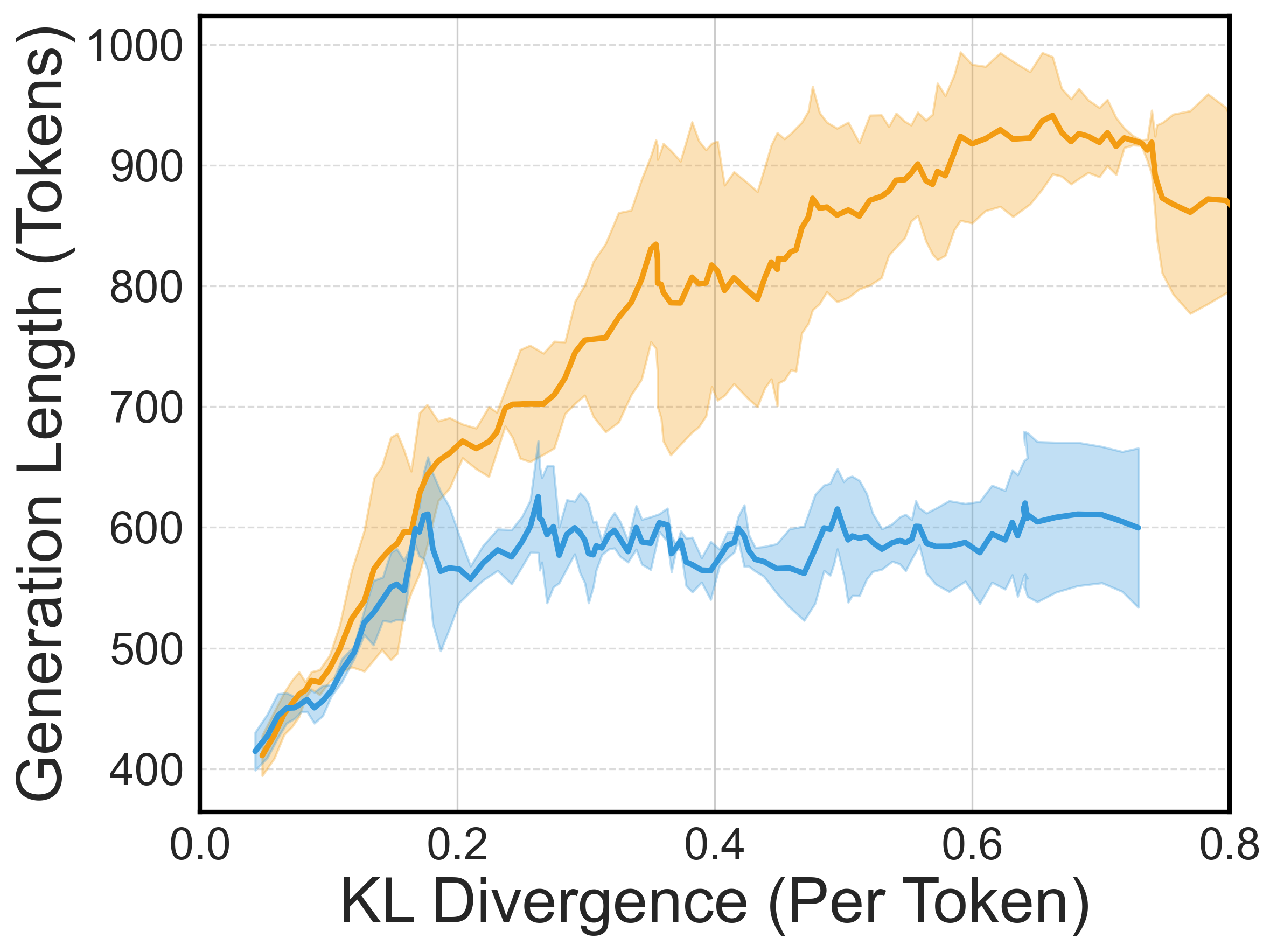

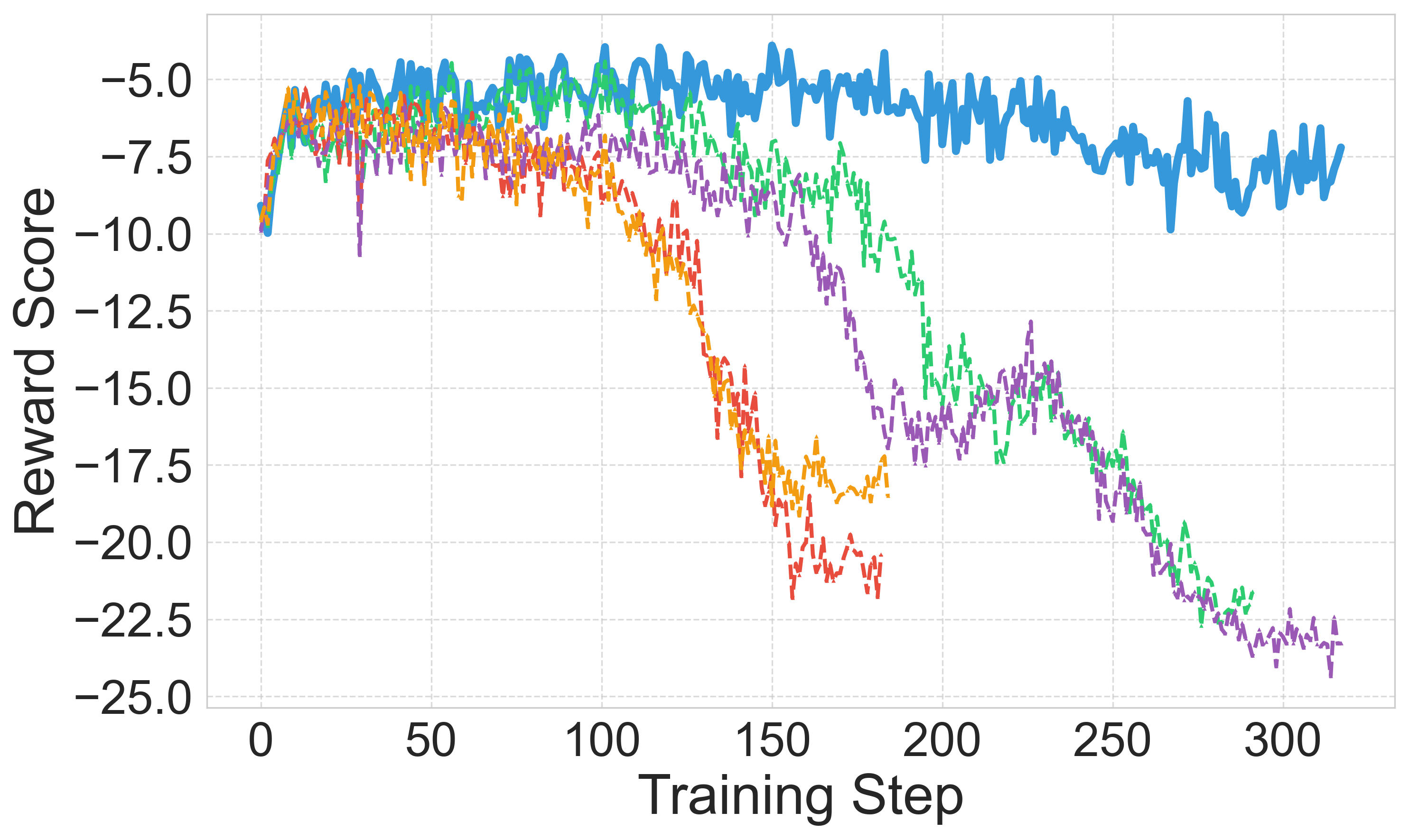

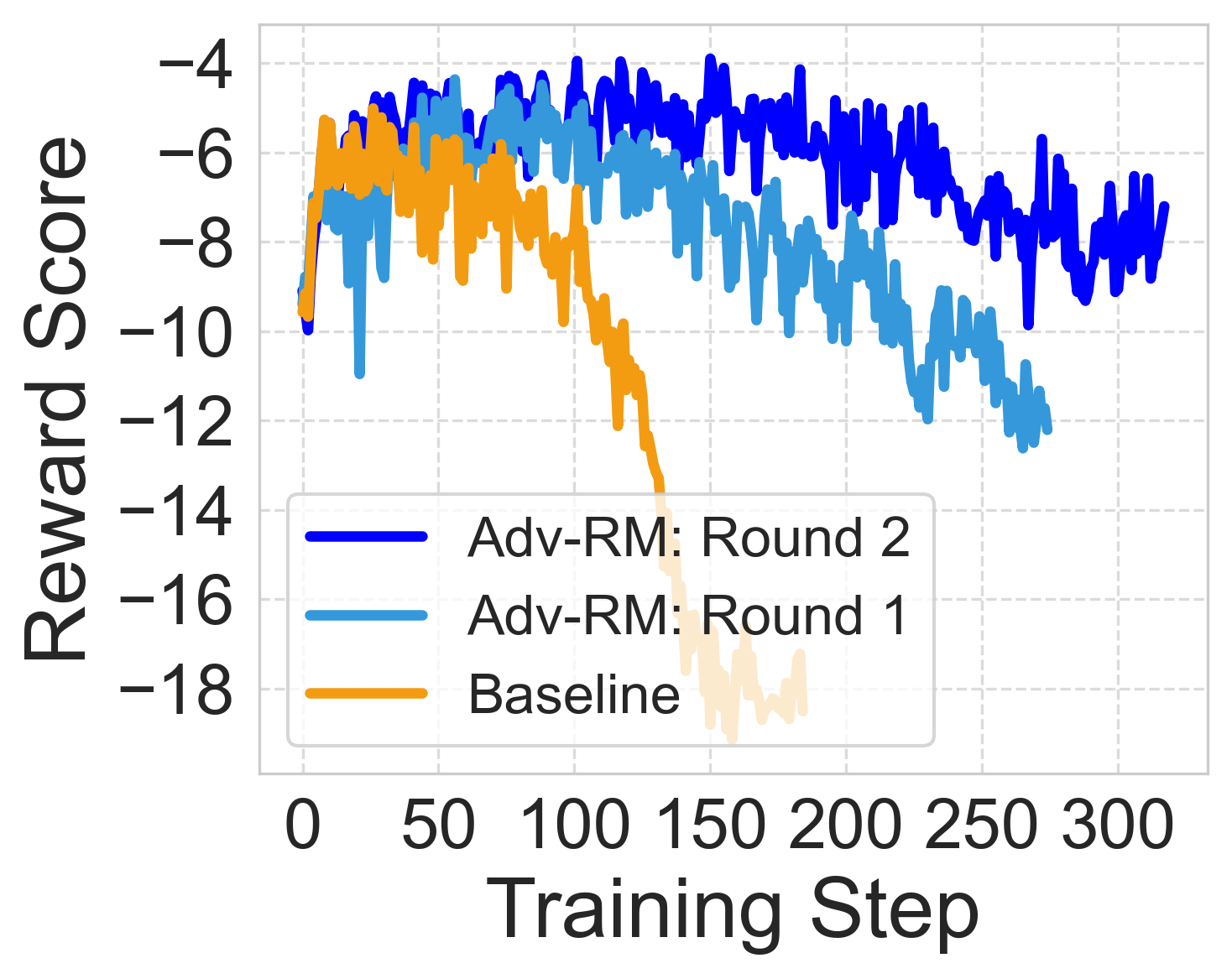

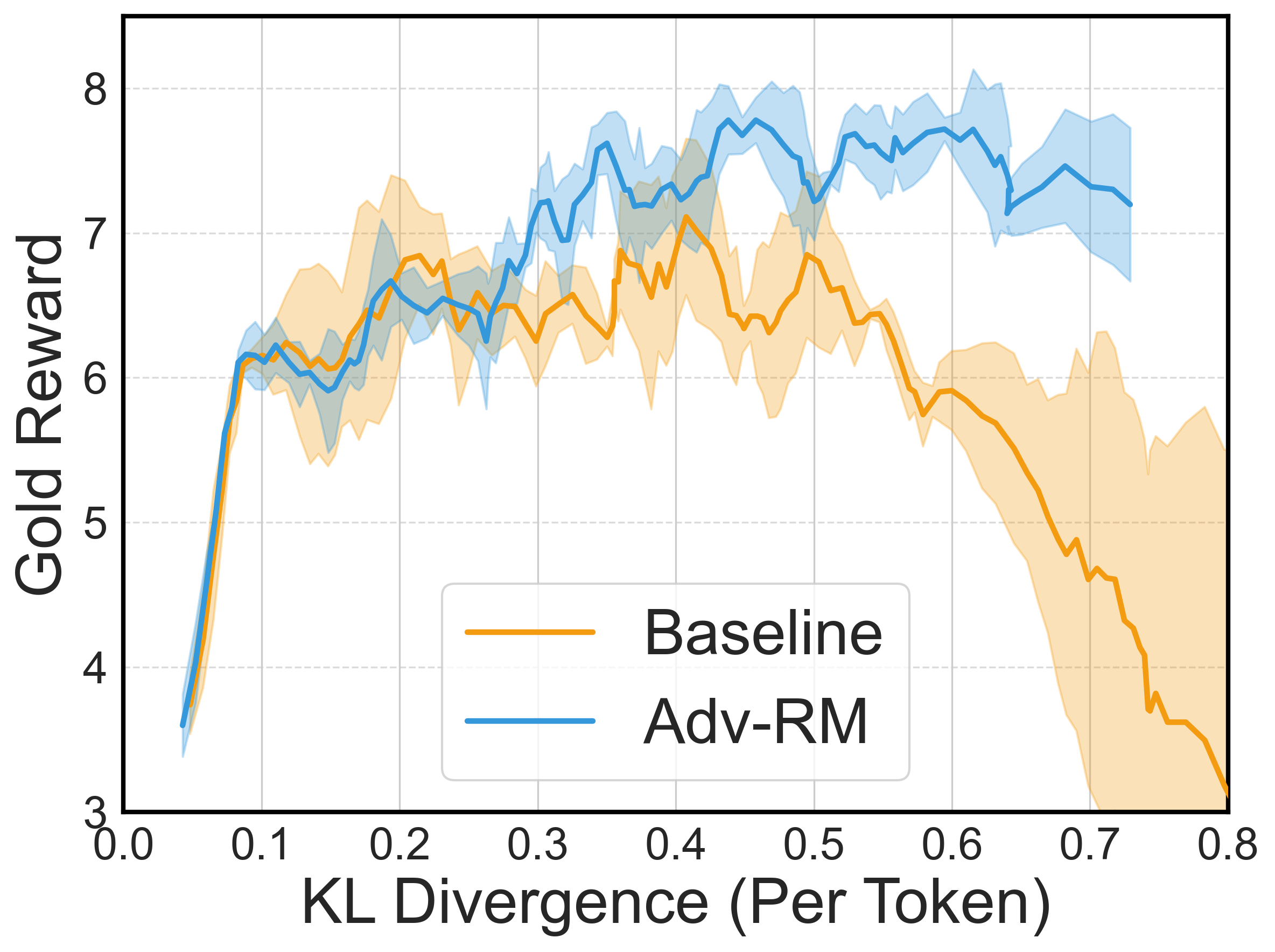

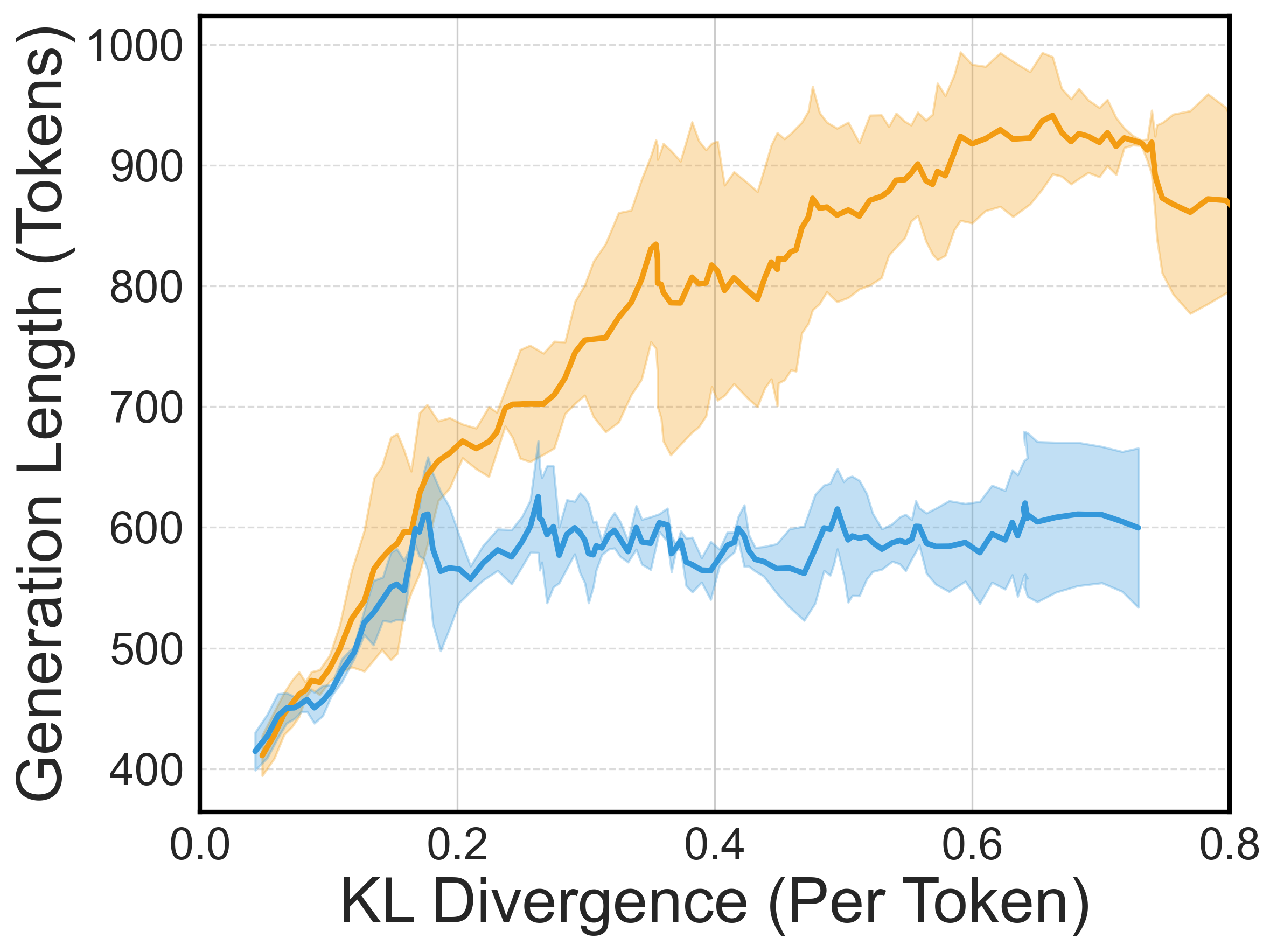

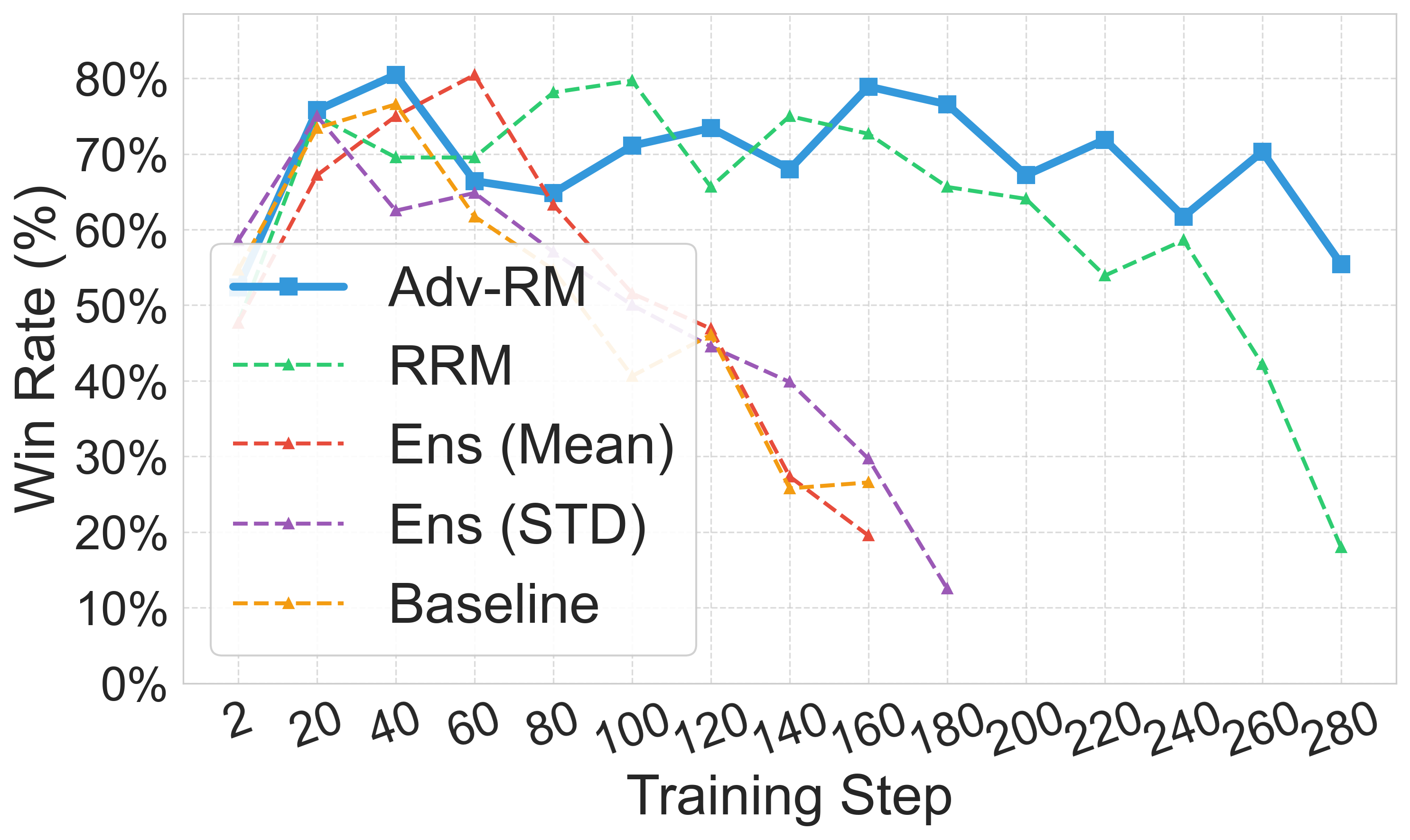

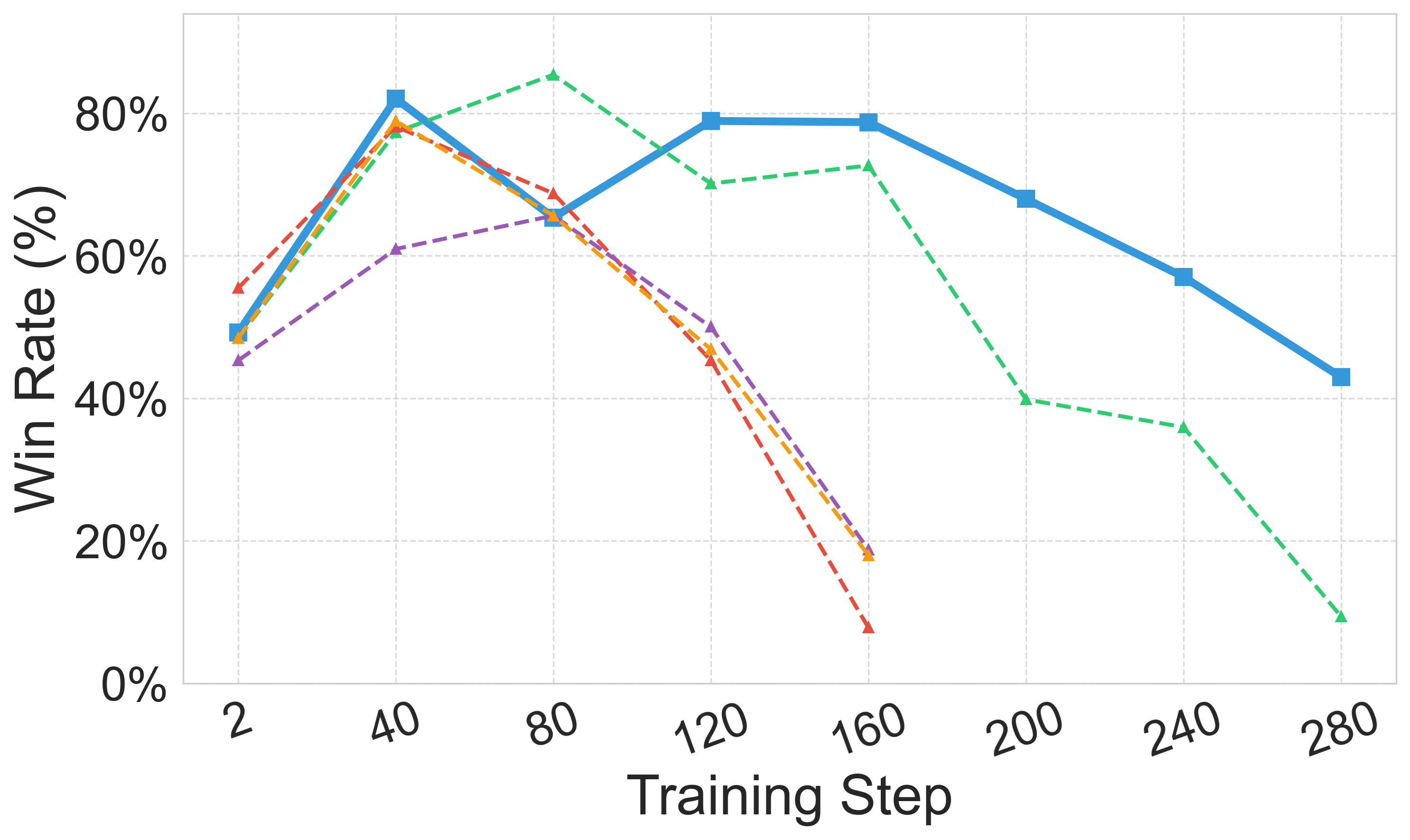

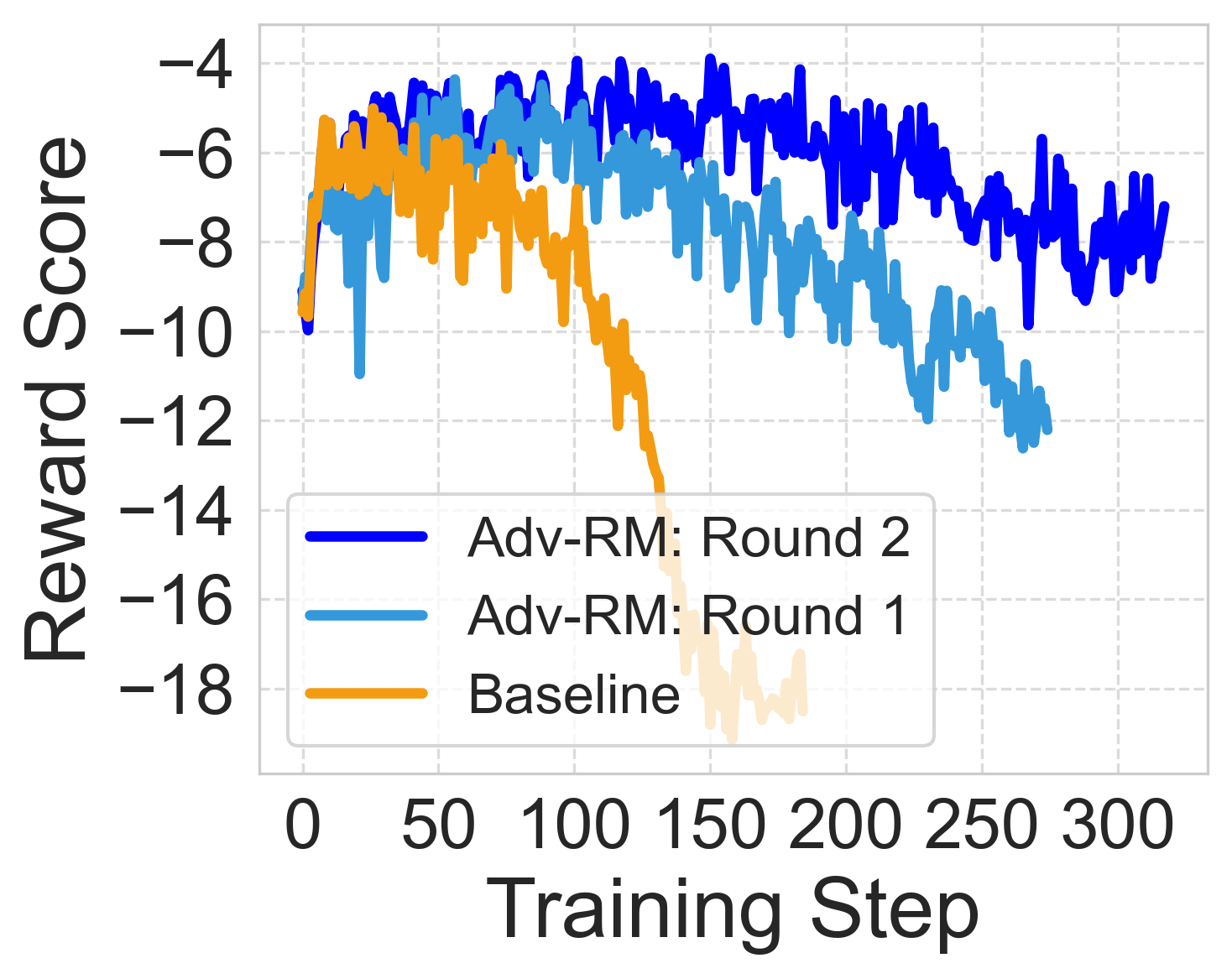

The introduction of adversarial examples reduced reward hacking significantly, thereby extending the stability and effectiveness of RLHF training. In both synthetic and real-world settings, Adv-RM exhibited marked improvements in reward stability and performance compared to baseline models.

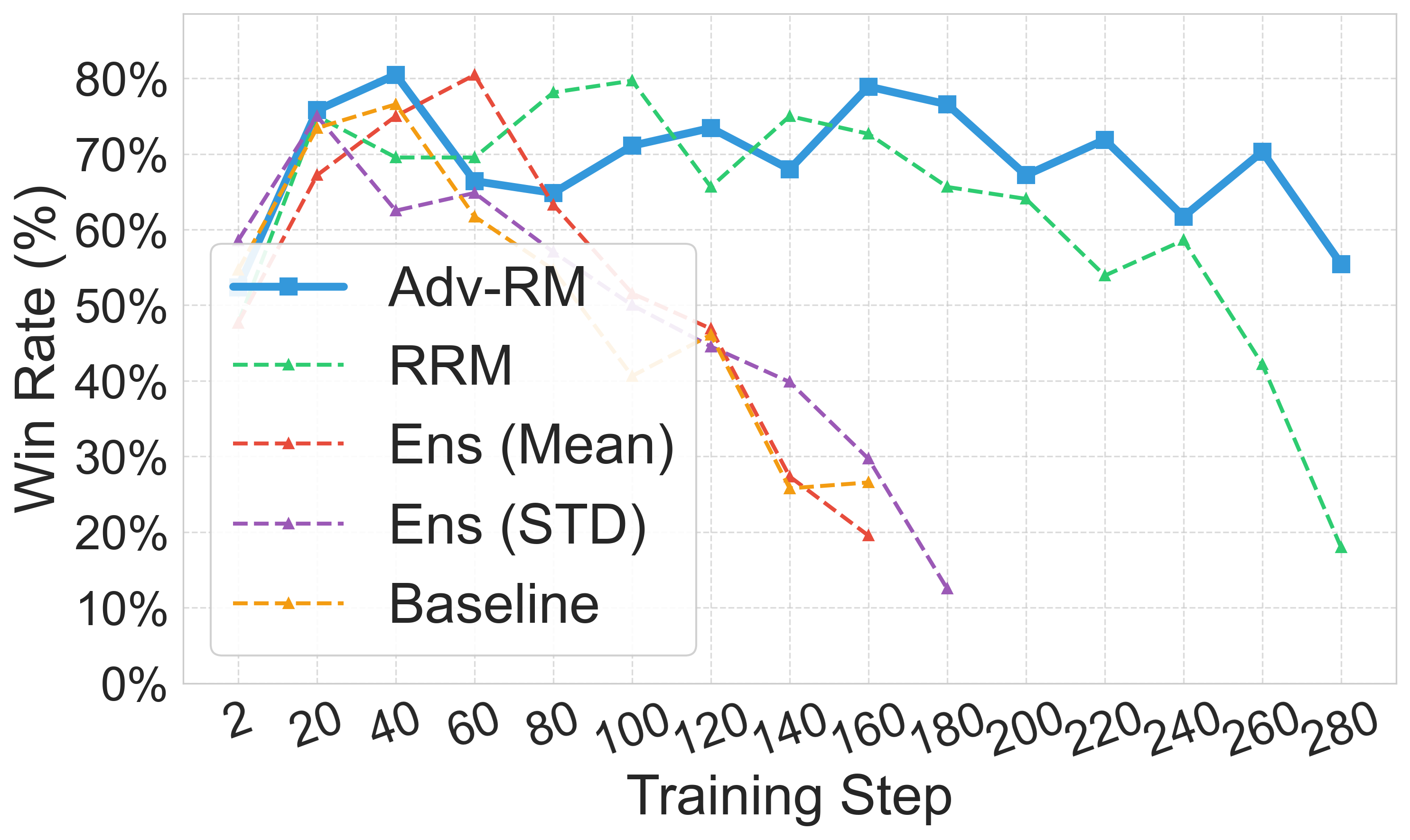

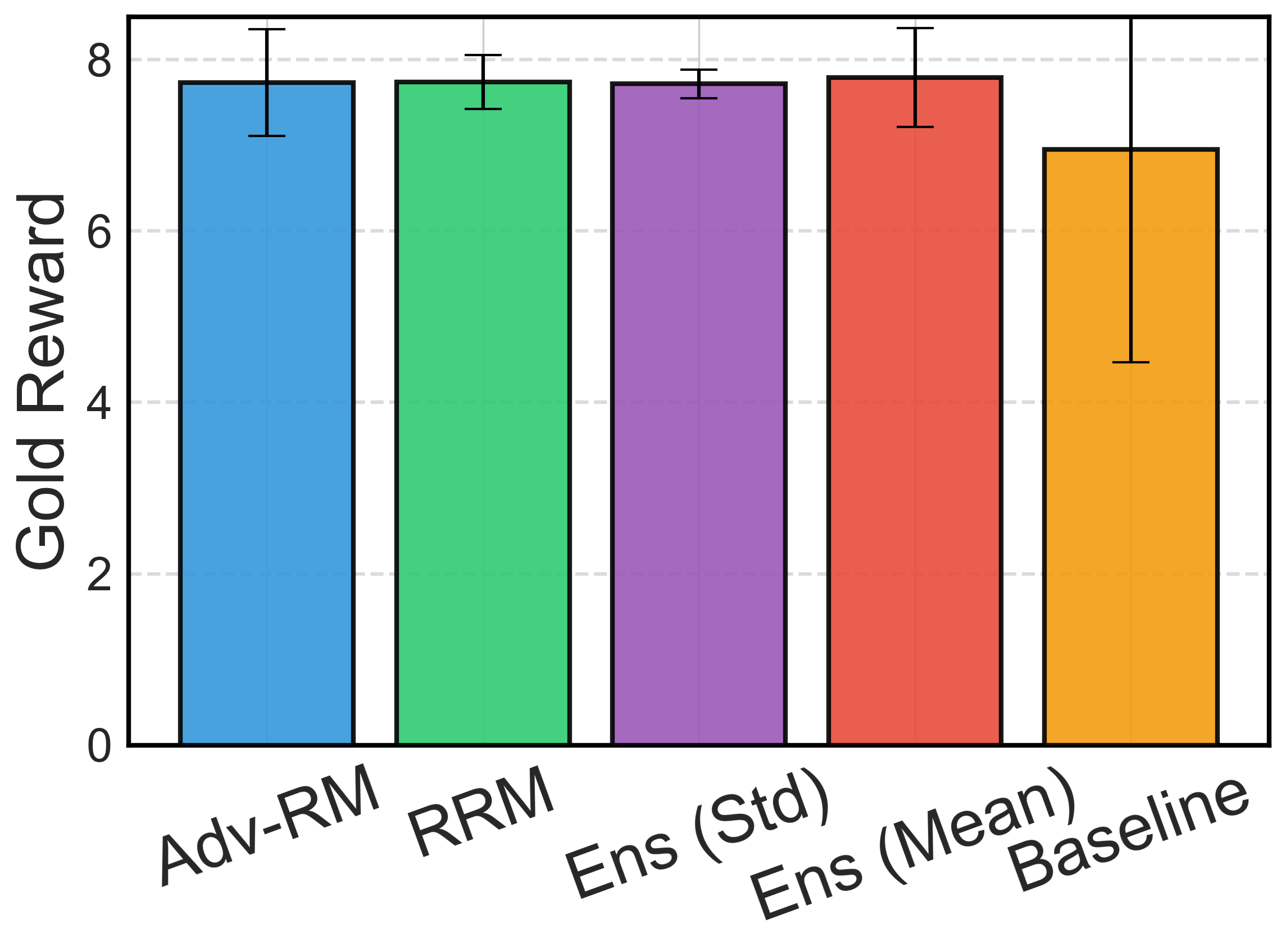

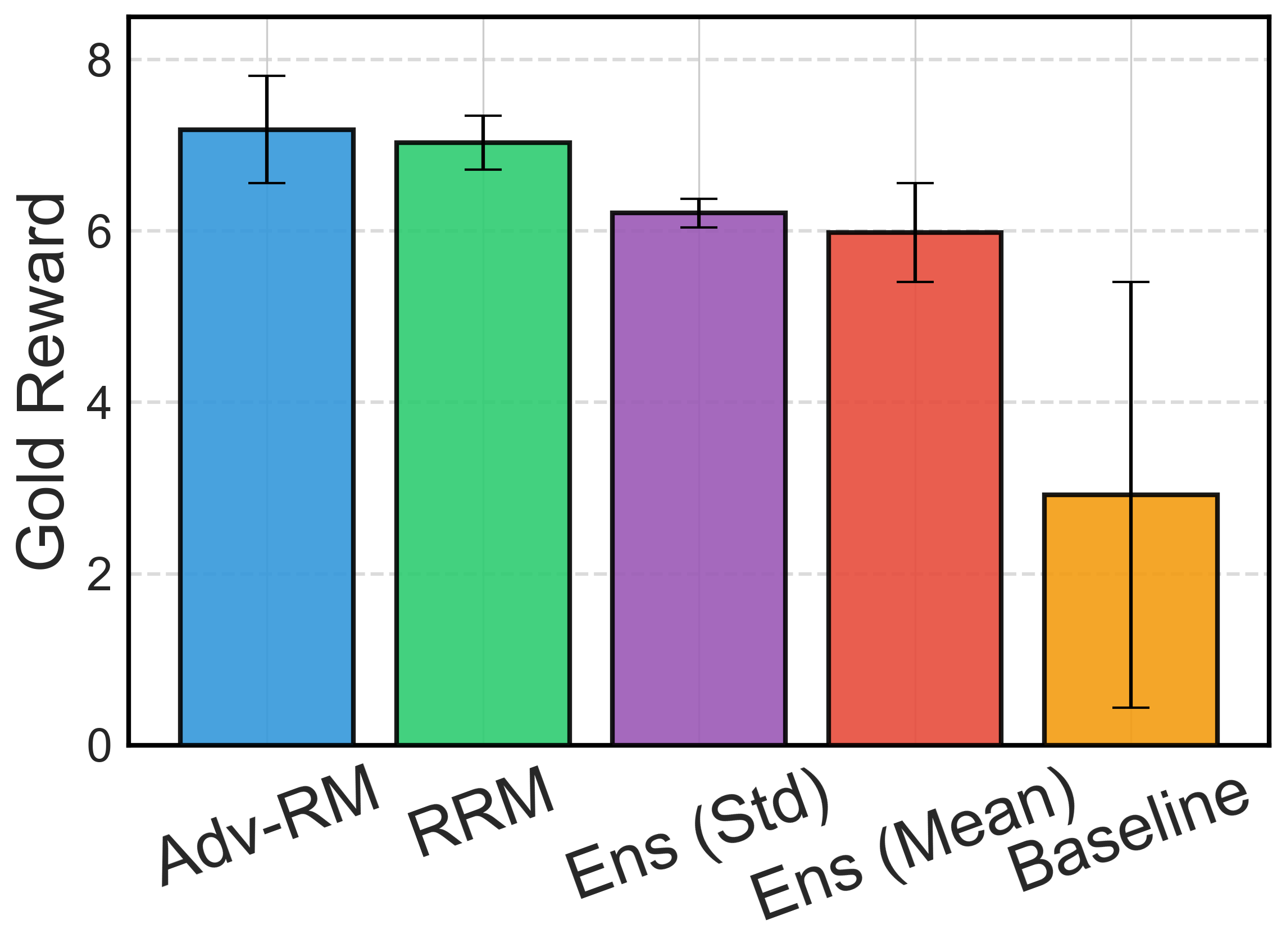

Figure 4: Downstream policy results in the synthetic setup. Error bars represent ± one standard deviation over three random seeds.

Discussion and Implications

Adv-RM contributes to the field by improving RM robustness, mitigating reward hacking, and enhancing the fidelity of LLM alignments with human intentions. Its application elucidates a pathway for adversarial robustness in reward models without requiring costly human annotations. Adv-RM suggests that adaptable, adversarially-trained RMs are crucial in complex AI systems where traditional metrics of robustness frequently fall short.

Conclusion

The study successfully demonstrates the potential of adversarial training in overcoming the limitations of current reward models. Despite computational overhead, the benefits of robust RM training justify this approach. Future research may focus on refining OOD detection mechanisms to further enhance Adv-RM efficacy, paving the way for robust, aligned AI systems capable of navigating diverse, real-world challenges.

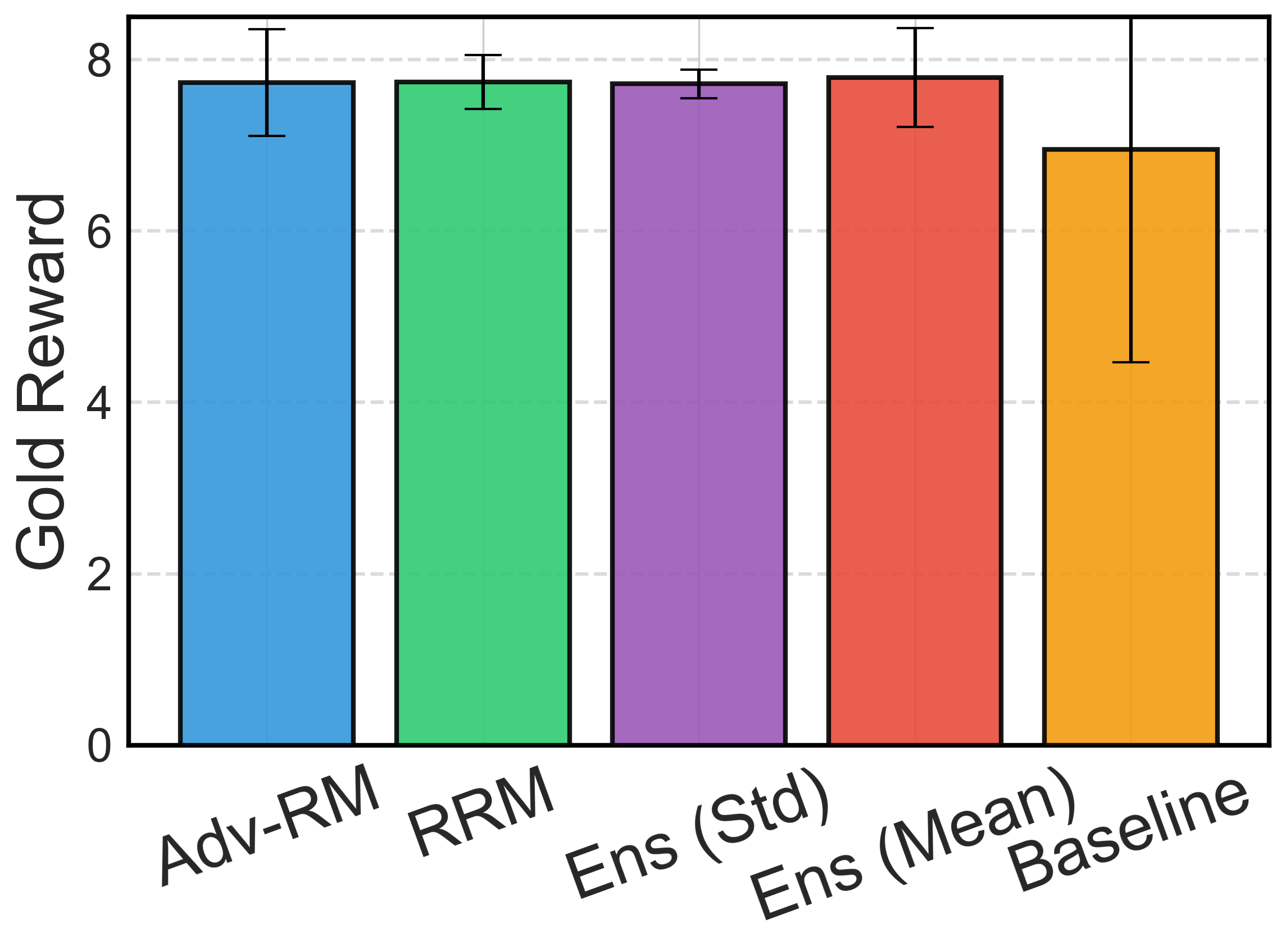

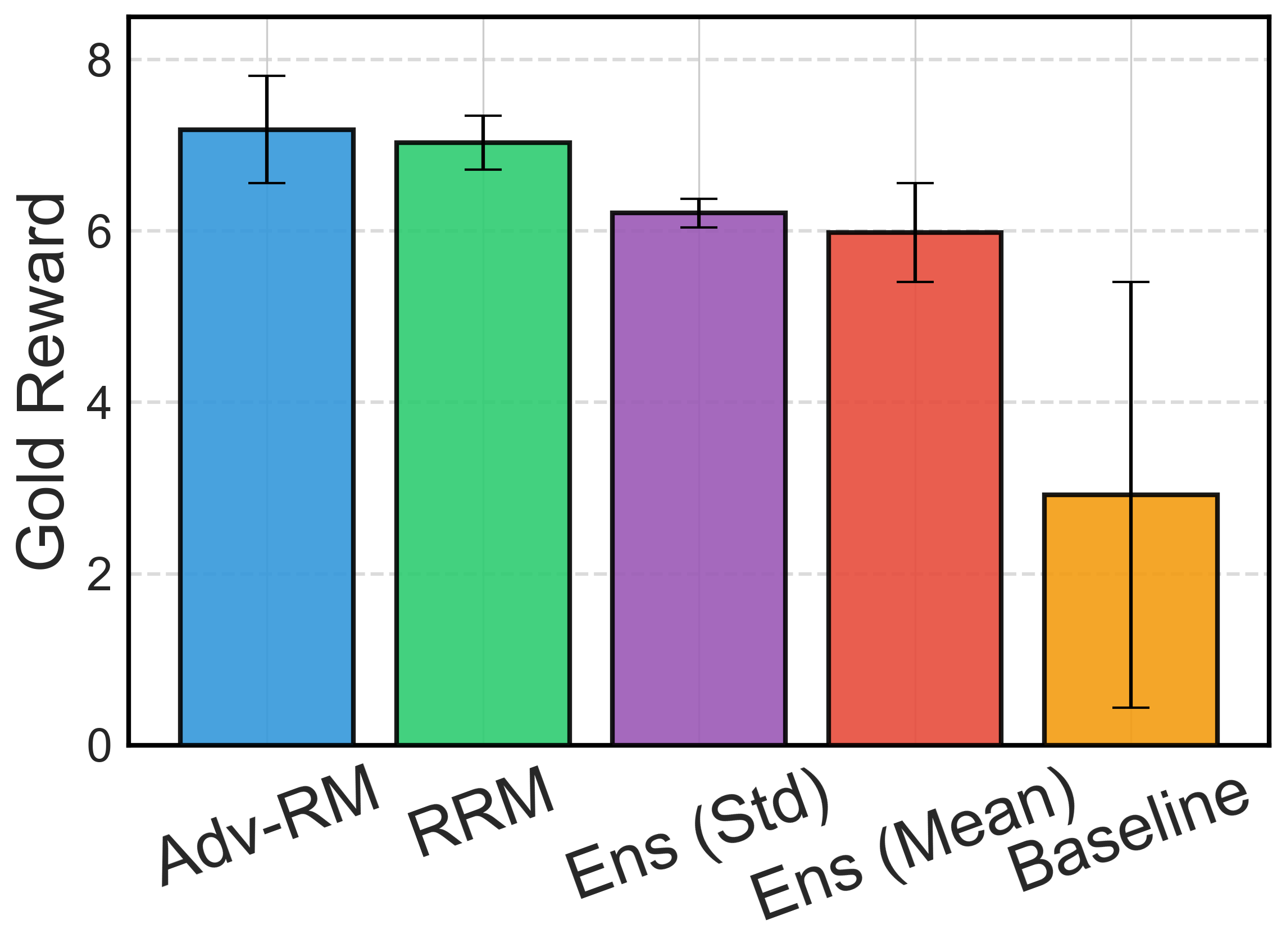

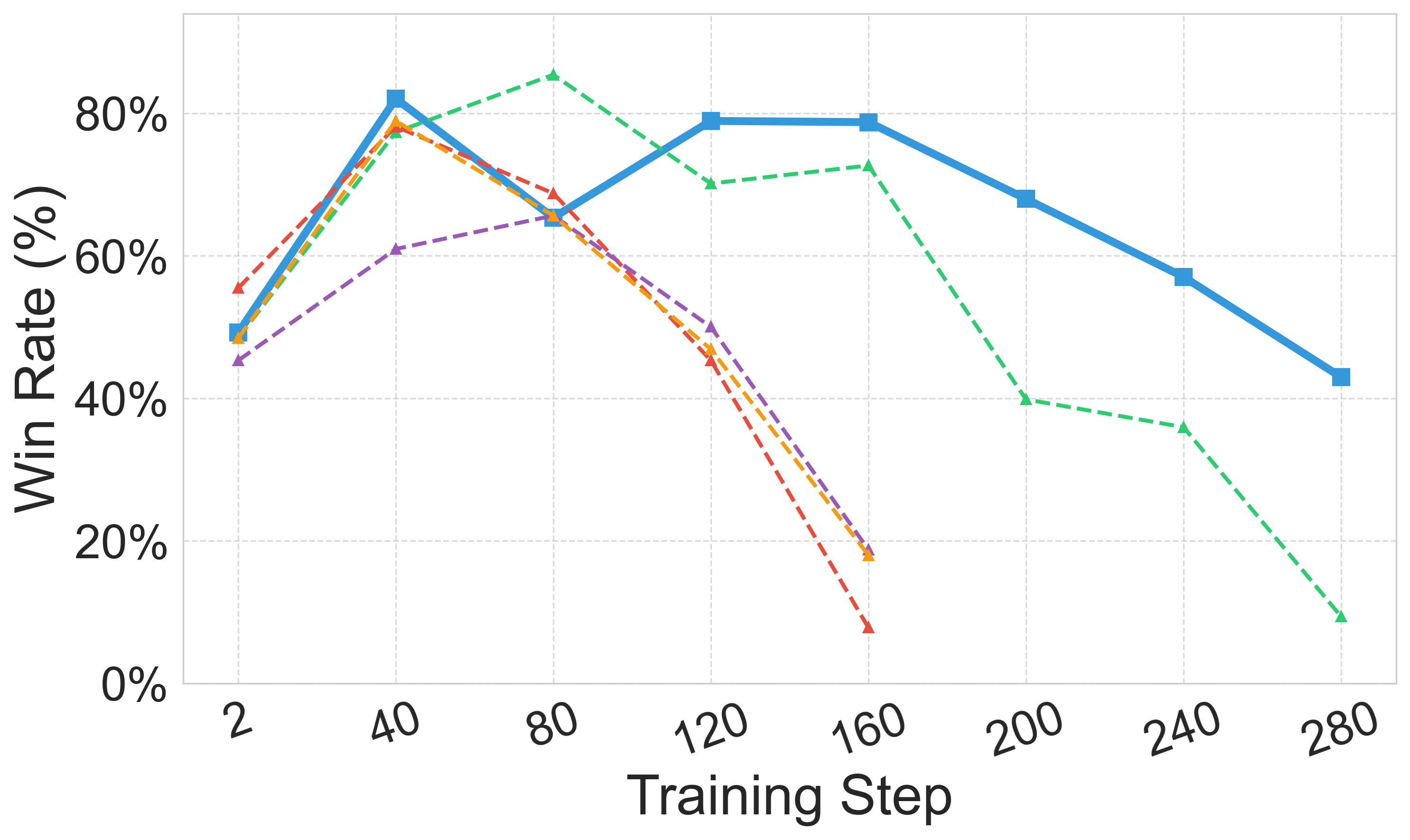

Figure 5: Downstream policy results with different judge models.

Figure 6: Robustness of Adv-RM models and ablation study. In (a) and (c) we consider the real RLHF setting, where in (b) it is the synthetic setting. For (a) Deepseek-R1 is the judge, and in (b) and (c) Llama-Nemotron RM is.