Boosting LLM Serving through Spatial-Temporal GPU Resource Sharing

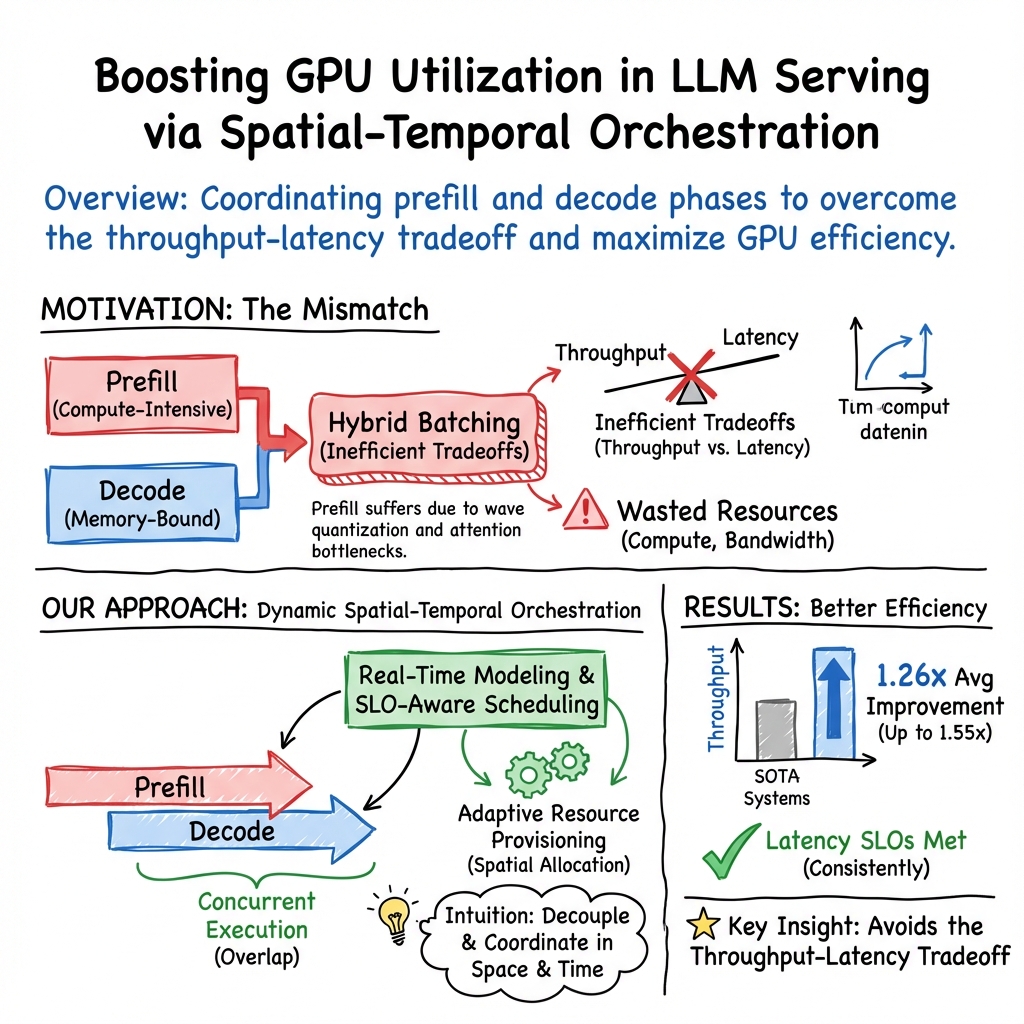

Abstract: Modern LLM serving systems suffer from poor GPU utilization because of a fundamental mismatch between the compute-intensive prefill phase and the memory-bound decode phase. Current practice tries to address this by combining the two phases in hybrid batches, but this forces an inefficient tradeoff that sacrifices either throughput or latency and leaves substantial GPU resources underutilized. We identify two root causes: 1) the prefill phase suffers from suboptimal compute utilization due to wave quantization and attention bottlenecks; and 2) hybrid batches disproportionately prioritize latency over throughput, wasting compute and memory bandwidth. To mitigate these issues, we present Bullet, a novel spatial-temporal orchestration system that eliminates these inefficiencies through precise phase coordination. Bullet executes the prefill and decode phases concurrently while dynamically provisioning GPU resources using real-time performance modeling. By integrating SLO-aware scheduling with adaptive resource allocation, Bullet maximizes utilization without compromising latency targets. Experimental evaluations on real-world workloads show that Bullet delivers 1.26x higher throughput on average (up to 1.55x) than state-of-the-art systems while consistently meeting latency constraints.

Explain it Like I'm 14

Simple Explanation of “Bullet: Boosting GPU Utilization for LLM Serving via Dynamic Spatial-Temporal Orchestration”

What is this paper about?

This paper introduces Bullet, a system that helps computers answer lots of AI questions (like chatbots do) faster and more efficiently. It focuses on making better use of the powerful chips called GPUs when serving large language models (LLMs), such as those behind popular AI assistants.

What problem are they trying to solve?

When an AI model answers a question, it usually goes through two steps:

- Prefill: The model reads and processes the whole user prompt. This step is heavy on math and uses lots of GPU “muscle.”

- Decode: The model then writes the answer one token (a word or piece of a word) at a time. For every new token it must re-read the model's weights and its stored notes about earlier tokens (the KV cache). That makes this step limited more by memory speed than by raw math power.
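Here is a rough back-of-the-envelope sketch, not taken from the paper, that makes "heavy on math" versus "limited by memory speed" concrete. It estimates arithmetic intensity (math operations per byte moved) for one matrix multiplication. It then compares that value with the point where a GPU stops waiting on memory. Several things are assumptions: the peak numbers are roughly A100-class figures, and the layer size is hypothetical. The sketch also ignores the KV-cache reads that make decode even more memory-heavy.

```python
# Assumed, roughly A100-class peak numbers (illustrative, not measurements).
PEAK_FLOPS = 312e12       # FP16 tensor-core operations per second
PEAK_BANDWIDTH = 2.0e12   # memory bytes per second
BYTES_PER_ELEM = 2        # FP16 values


def linear_intensity(n_tokens: int, d_model: int) -> float:
    """FLOPs per byte for one [n_tokens x d] @ [d x d] projection."""
    flops = 2 * n_tokens * d_model * d_model
    # Read the activations and the weights, write the outputs (KV cache ignored).
    bytes_moved = BYTES_PER_ELEM * (2 * n_tokens * d_model + d_model * d_model)
    return flops / bytes_moved


def bound(n_tokens: int, d_model: int = 4096) -> str:
    """Classify the projection using a simple roofline-style comparison."""
    ridge = PEAK_FLOPS / PEAK_BANDWIDTH  # FLOPs/byte where the two limits meet
    if linear_intensity(n_tokens, d_model) >= ridge:
        return "compute-bound"
    return "memory-bound"


# Prefill: a 2048-token prompt processed in one pass.
print("prefill:", bound(2048))
# Decode: one new token for each of 8 requests.
print("decode:", bound(8))
```

Prefill multiplies many tokens against the same weights, so each byte fetched feeds far more math. Decode fetches the same weights to produce only a handful of tokens.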

These two steps stress the GPU in very different ways. Current systems often mix these steps together in one batch to keep the GPU busy, but that creates a bad tradeoff:

- If they try to respond quickly (low latency), they don’t handle as many requests per second (lower throughput).

- If they try to handle many requests (high throughput), individual responses might slow down (higher latency).

Either way, a lot of the GPU’s power is still left unused.
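The tradeoff shows up in a toy cost model of one common hybrid-batch design. This design is assumed here and is not necessarily the one the paper studies. Each iteration has a token budget: the decode tokens go in first, and a slice of a prompt's prefill fills the rest. All timing constants below are made up for illustration, and real GPU costs are not linear.

```python
from typing import Tuple

# Toy cost model (assumed): every iteration pays a fixed cost plus a per-token cost.
FIXED_MS = 10.0      # hypothetical per-iteration overhead
PER_TOKEN_MS = 0.02  # hypothetical marginal cost of one more token in the batch


def hybrid_iteration(token_budget: int, n_decode: int) -> Tuple[float, float]:
    """Return (iteration latency in ms, tokens processed per second).

    Decode requests contribute one token each; a prefill chunk fills the
    remaining budget (assumes there is always enough prompt left to fill it).
    """
    tokens = max(token_budget, n_decode)
    latency_ms = FIXED_MS + PER_TOKEN_MS * tokens
    throughput = tokens / (latency_ms / 1000.0)
    return latency_ms, throughput


for budget in (256, 1024, 4096):
    lat, tput = hybrid_iteration(budget, n_decode=64)
    print(f"budget={budget:5d}  latency={lat:6.2f} ms  throughput={tput:8.0f} tok/s")
```

Bigger budgets push more tokens through per second. The cost is that every decode request in the batch must wait for the whole, longer iteration before its next token appears.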

What are the key questions the paper asks?

- Why are GPUs underused when serving LLMs, even with smart batching?

- Can we run the prefill and decode steps at the same time without slowing responses?

- How do we split and schedule GPU resources so we meet response-time promises while serving more users?

How does Bullet work?

Think of the GPU as a big, shared kitchen:

- Prefill is like a chef doing intense cooking (a lot of heavy work on the stove).

- Decode is like a chef who keeps running to the pantry for ingredients (lots of waiting on memory).

Instead of putting both chefs in the same line where they get in each other’s way, Bullet:

- Runs prefill and decode concurrently, but in a carefully organized way.

- Splits the GPU “by space and time” (spatial-temporal orchestration):

  - Spatial: Give different parts of the GPU to different tasks.

  - Temporal: Schedule tasks at different moments so they don’t trip over each other.

- Uses a real-time performance model to decide how much GPU each step should get right now. It’s like a traffic controller that watches current conditions and adjusts lanes and lights on the fly.

- Is “SLO-aware.” An SLO (Service Level Objective) is a promise like “your reply will arrive within X milliseconds.” Bullet plans and schedules work to meet these time promises while still pushing for high throughput.
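Here is a minimal sketch of the "how much GPU should each step get" decision, under several assumptions. The GPU is split by streaming multiprocessor (SM) count. Each phase's latency follows a hypothetical `fixed_ms + work_ms / sms` model. TPOT (time per output token) and TTFT (time to first token) targets stand in for the SLOs. `PhaseModel` and `partition_sms` are illustrative names, not Bullet's actual code or performance model.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

TOTAL_SMS = 108  # assumed GPU size (an A100, for example, has 108 SMs)


@dataclass
class PhaseModel:
    """Hypothetical latency model for one phase: t(sms) = fixed_ms + work_ms / sms."""
    fixed_ms: float  # cost that does not shrink with more SMs
    work_ms: float   # parallel work, expressed as its time on a single SM

    def predict(self, sms: int) -> float:
        return self.fixed_ms + self.work_ms / sms


def partition_sms(decode: PhaseModel, prefill: PhaseModel,
                  tpot_slo_ms: float, ttft_slo_ms: float) -> Optional[Tuple[int, int]]:
    """Give decode the fewest SMs that meet its SLO; prefill gets the rest.

    Returns (decode_sms, prefill_sms), or None if no split meets both SLOs.
    """
    for decode_sms in range(1, TOTAL_SMS):
        if decode.predict(decode_sms) <= tpot_slo_ms:
            prefill_sms = TOTAL_SMS - decode_sms
            if prefill.predict(prefill_sms) <= ttft_slo_ms:
                return decode_sms, prefill_sms
            # More SMs for decode would only leave fewer (slower) SMs for prefill.
            return None
    return None


split = partition_sms(PhaseModel(fixed_ms=5, work_ms=600),
                      PhaseModel(fixed_ms=20, work_ms=20_000),
                      tpot_slo_ms=30, ttft_slo_ms=400)
print(split)  # → (24, 84)
```

In a real-time setting, the model parameters would be refreshed from live measurements and the split recomputed as load changes. Giving decode just enough SMs to meet its target leaves the rest for prefill throughput.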

Along the way, the authors point out two root causes of waste:

- Prefill sometimes can’t use all GPU compute due to “wave quantization” and “attention bottlenecks.”

  - Wave quantization (simple view): The GPU splits math work into fixed-size chunks and runs them in rounds (“waves”), each of which can keep all of its processors busy. If the number of chunks doesn’t divide evenly, the last round runs only partly full and the idle processors are wasted. It is like a bus that must make a whole extra trip for just a few leftover passengers.

  - Attention bottlenecks: The attention mechanism must look up a lot of information, which can slow things down (like repeatedly checking a big notebook while writing).

- Hybrid batches (mixing prefill and decode in one batch) often lean too much toward keeping latency low, leaving compute units or memory bandwidth unused.
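Wave quantization can be shown with a little arithmetic. The snippet below rests on simplifying assumptions: a hypothetical matrix multiplication split into 128x128 output tiles, one tile per SM at a time, equal-cost tiles, and a 108-SM GPU. Real kernels and schedulers are more complex. The point it illustrates is that a few extra prompt tokens can add a mostly empty final wave.

```python
import math

NUM_SMS = 108  # assumed GPU size
TILE = 128     # hypothetical square output-tile edge


def wave_efficiency(num_tiles: int, num_sms: int = NUM_SMS) -> float:
    """Fraction of SM slots doing useful work when tiles run in waves.

    Assumes one tile per SM at a time and equal-cost tiles (a simplification).
    """
    waves = math.ceil(num_tiles / num_sms)
    return num_tiles / (waves * num_sms)


def gemm_tiles(m: int, n: int, tile: int = TILE) -> int:
    """Number of output tiles for an m x n result matrix."""
    return math.ceil(m / tile) * math.ceil(n / tile)


for prompt_tokens in (1024, 1280, 1408):
    tiles = gemm_tiles(prompt_tokens, 4096)  # 4096 output columns, hypothetical
    print(prompt_tokens, tiles, f"{wave_efficiency(tiles):.0%}")
```

Under these assumptions, 1280 tokens (320 tiles) fill three waves almost perfectly. Adding 128 more tokens (352 tiles) spills 28 tiles into a fourth wave that is mostly idle.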

Bullet’s approach avoids these pitfalls by coordinating the two phases precisely and by adjusting resource splits dynamically using live measurements and predictions.

What did they find?

- Bullet improved the number of requests served per second (throughput) by an average of 1.26× and up to 1.55× compared to leading systems.

- It still met latency targets (responses stayed within the promised time). In short: more users served, same snappy feel.

Why is this important?

- Better GPU use means lower costs for running AI at scale (fewer machines needed for the same workload).

- Users get fast replies even when many people are using the service at once.

- This helps AI services be more reliable, greener (less wasted power), and more affordable.

What’s the bigger impact?

Bullet shows that:

- Treating the prefill and decode steps differently, and coordinating them carefully, can unlock a lot of hidden performance.

- Real-time, data-driven scheduling on GPUs can deliver both speed (low latency) and productivity (high throughput) at the same time. This idea could influence future AI serving systems, making LLMs more efficient and widely accessible.
