- The paper introduces TNO, a neural operator that overcomes limitations in temporal extrapolation and error accumulation in time-dependent PDEs.

- It employs a dual-branch structure combining DeepONet advances, U-Net encoding with adaptive pooling, and temporal bundling for efficient spatiotemporal modeling.

- Benchmarked on weather, climate, and CO2 sequestration, TNO achieves robust resolution invariance, long-term accuracy, and generalization across multiphysics scenarios.

Temporal Neural Operator for Time-Dependent Physical Phenomena

Introduction

The paper "Temporal Neural Operator for Modeling Time-Dependent Physical Phenomena" (2504.20249) presents the Temporal Neural Operator (TNO), a neural operator architecture tailored to efficiently model spatiotemporal dynamics governed by time-dependent PDEs. The TNO synthesizes architectural and training advances from DeepONet, Fourier Neural Operator, and spatiotemporal processing frameworks. It targets three limitations of existing operator learning methods when they are deployed on real-world, high-dimensional, and noisy scientific datasets: unreliable temporal extrapolation, error accumulation over long rollouts, and a lack of resolution invariance.

Methodology: The Temporal Neural Operator Framework

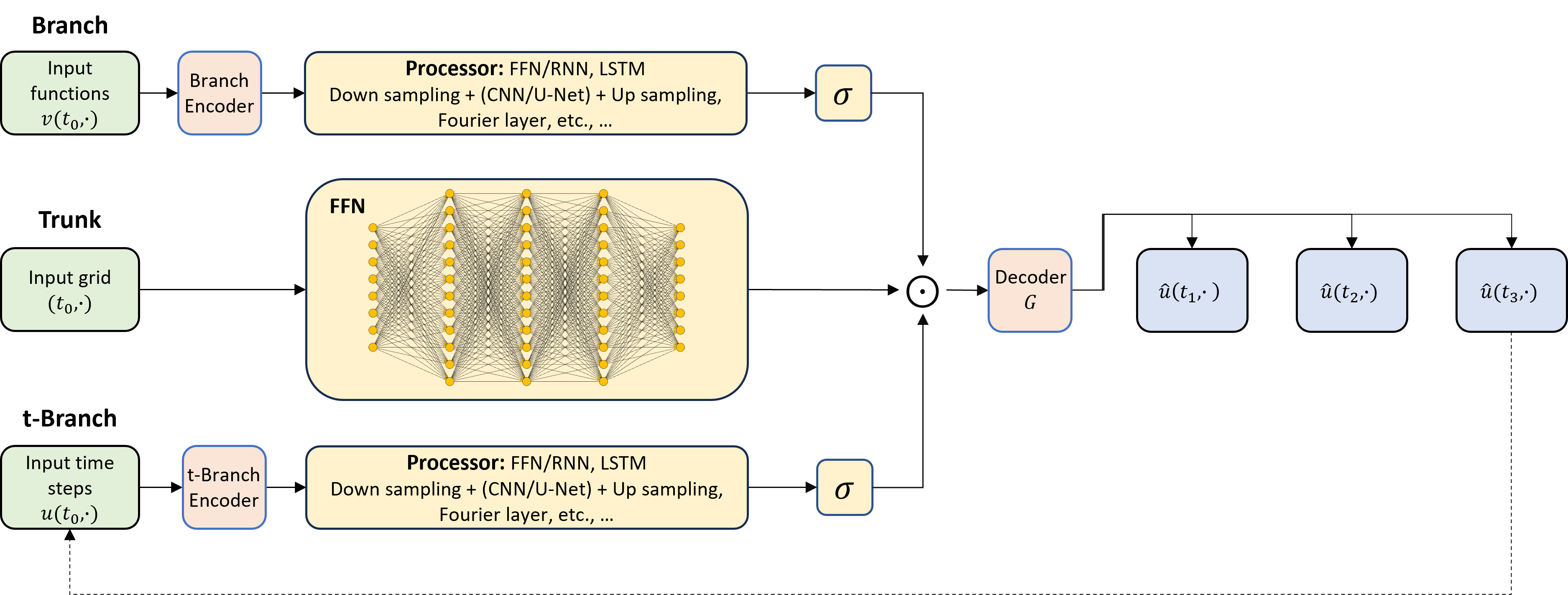

The TNO augments the classical DeepONet operator learning pipeline with a dedicated temporal branch (t-branch), architectural features for efficient high-dimensional input handling, and novel training regimes that mitigate temporal error accumulation.

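The TNO combines the following components:

- A branch network, as in DeepONet, that encodes the input fields.
- A temporal branch (t-branch) dedicated to the time dimension.
- U-Net encoders with adaptive pooling for handling high-dimensional gridded inputs.
- A coordinate-based trunk network.
- A Hadamard (element-wise) product that merges the sub-network outputs into the prediction.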
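To make the branch, t-branch, and trunk composition concrete, here is a minimal NumPy sketch. It is not the paper's implementation: the tiny random MLPs stand in for the trained sub-networks (including the U-Net encoders), and the layer sizes, the latent width `P`, the choice of time offsets as t-branch input, and the name `tno_like_forward` are all assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def init_mlp(sizes):
    """Random weights for a tiny MLP; stands in for any trained sub-network."""
    return [rng.normal(0.0, 0.5, (a, b)) for a, b in zip(sizes[:-1], sizes[1:])]

def mlp(x, weights):
    """tanh hidden layers followed by a linear output layer."""
    for w in weights[:-1]:
        x = np.tanh(x @ w)
    return x @ weights[-1]

P = 16  # shared latent width (assumed)
K = 4   # number of future steps produced per forward pass

branch_w = init_mlp([32, 64, P])    # input-state features -> latent
t_branch_w = init_mlp([1, 64, P])   # one time offset -> latent (hypothetical input)
trunk_w = init_mlp([2, 64, P])      # (x, y) query coordinate -> latent

def tno_like_forward(u_feat, t_offsets, coords):
    b = mlp(u_feat, branch_w)                 # (P,)
    tb = mlp(t_offsets[:, None], t_branch_w)  # (K, P)
    tr = mlp(coords, trunk_w)                 # (N, P)
    # Hadamard product of the three latents, summed over the latent axis.
    return np.einsum("p,kp,np->nk", b, tb, tr)  # (N, K)

def grid(n):
    """All (x, y) points of an n-by-n grid on the unit square."""
    axis = np.linspace(0.0, 1.0, n)
    xx, yy = np.meshgrid(axis, axis, indexing="ij")
    return np.stack([xx.ravel(), yy.ravel()], axis=-1)  # (n*n, 2)

u_feat = rng.normal(size=32)
t_offsets = np.arange(1, K + 1, dtype=float)
coarse = tno_like_forward(u_feat, t_offsets, grid(8))   # shape (64, K)
fine = tno_like_forward(u_feat, t_offsets, grid(20))    # shape (400, K)
```

Because the trunk is evaluated per coordinate, the same weights can be queried on either grid. This is one way a coordinate-based trunk can support inference at a resolution other than the training one.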

Key training strategies include:

- Temporal Bundling (K>1): Enables prediction of multiple future steps per forward pass, improving efficiency and stabilizing rollouts.

- Autoregressive Conditioning and Teacher Forcing: Supports both Markov and memory-based system evolution, with training optionally stabilized by supplying ground truth at intermediate rollout steps.
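The two strategies above can be sketched as a single rollout loop. This is an illustrative sketch under stated assumptions, not the paper's training code: `rollout`, `toy_step`, and the exponential-decay dynamics are hypothetical, and the model is assumed to map a history of `L` states to the next `K` states.

```python
import numpy as np

def rollout(step_fn, history, n_steps, K, truth=None):
    """Autoregressive rollout in bundles of K steps.

    step_fn(history, K) maps a history of shape (L, ...) to K future states.
    If `truth` (shape (n_steps, ...)) is given, ground truth rather than the
    model's own output is fed back as history (teacher forcing).
    """
    L = history.shape[0]
    preds, produced = [], 0
    while produced < n_steps:
        bundle = step_fn(history, K)  # K states from one forward pass
        preds.append(bundle)
        source = bundle if truth is None else truth[produced:produced + K]
        # Keep only the most recent L states as the next input history.
        history = np.concatenate([history, source], axis=0)[-L:]
        produced += K
    return np.concatenate(preds, axis=0)[:n_steps]

call_log = []  # one entry per forward pass

def toy_step(history, K, decay=0.9):
    """Stand-in model: exact exponential decay of the latest state."""
    call_log.append(K)
    return np.stack([history[-1] * decay ** (k + 1) for k in range(K)])

u0 = np.ones((1, 3))                             # history length L = 1
bundled = rollout(toy_step, u0, n_steps=8, K=4)  # 2 forward passes
single = rollout(toy_step, u0, n_steps=8, K=1)   # 8 forward passes
```

With `K=4` the loop covers eight steps in two forward passes instead of eight, so predictions are fed back into the model fewer times. That is the mechanism by which bundling can limit error accumulation in a model that is not exact.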

Benchmarking: Weather and Climate Forecasting Applications

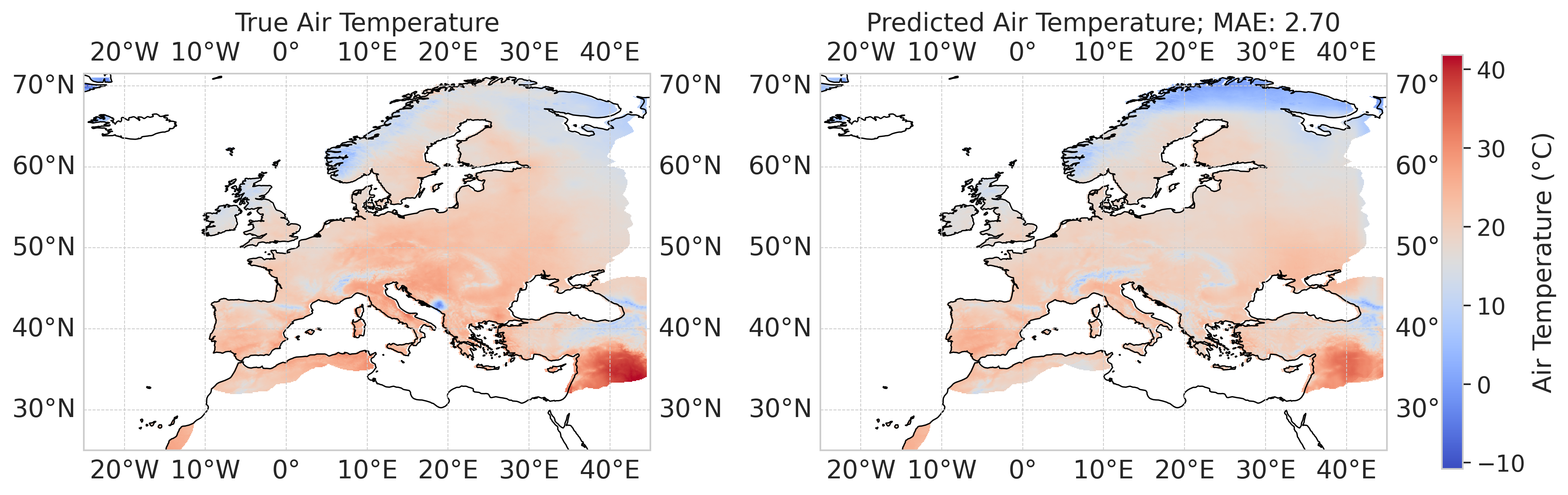

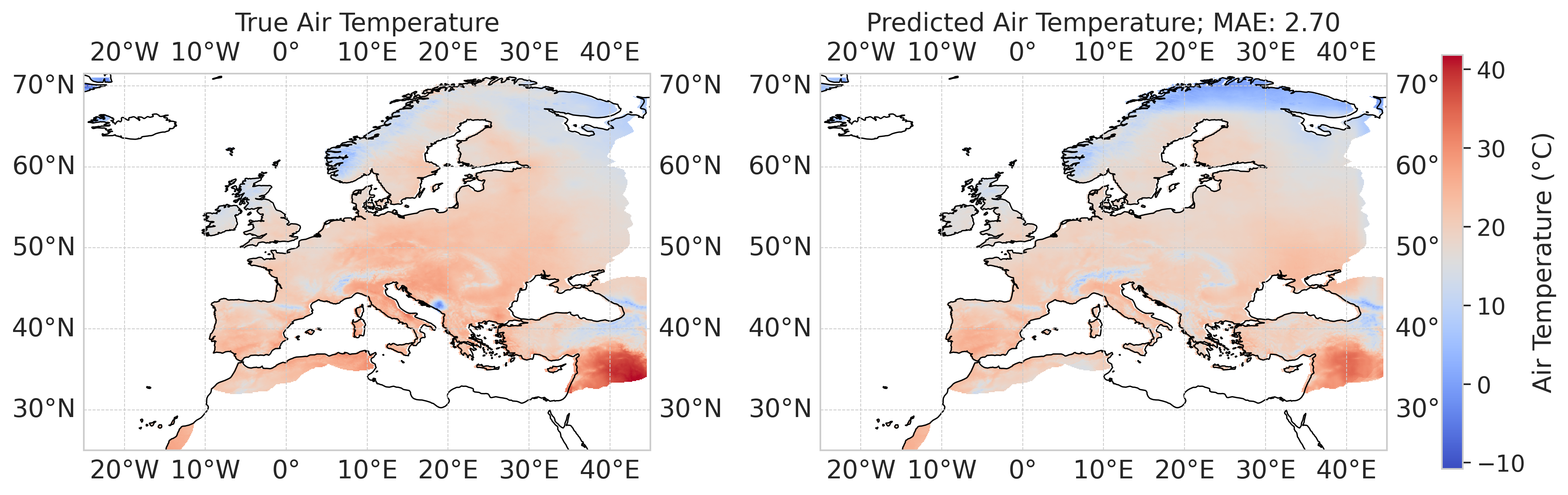

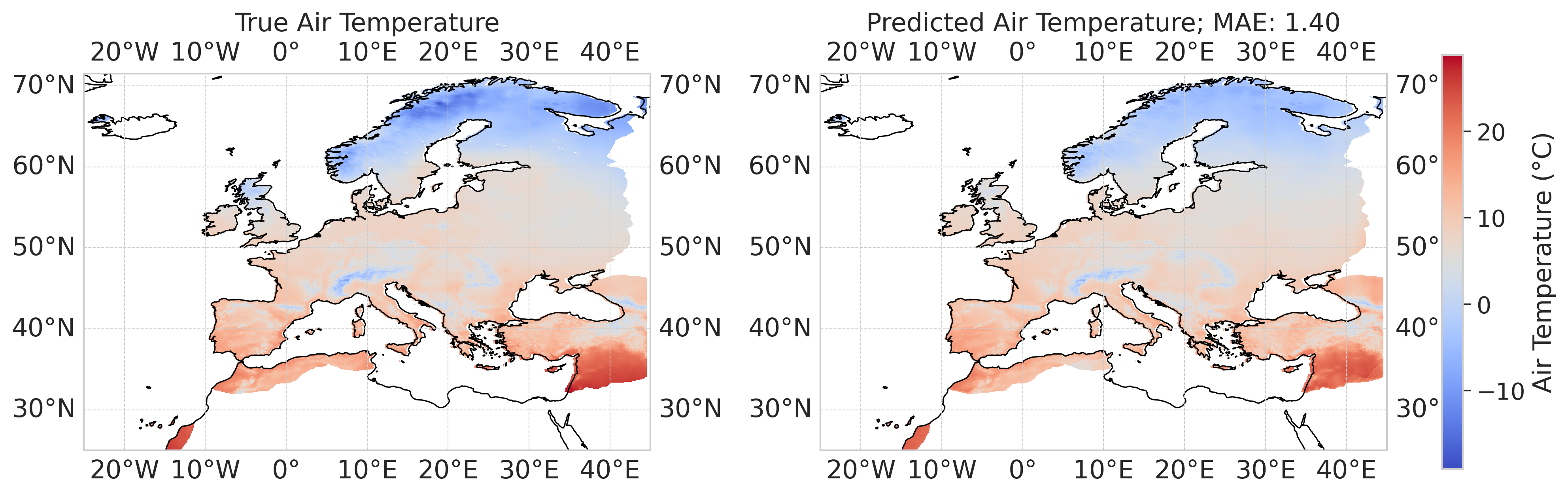

European Regional Air Temperature Forecast

TNO is evaluated on the E-OBS observational climate dataset, addressing noisy, incomplete, and high-resolution (0.1°, 0.25°) weather data with strong spatial heterogeneity. Noteworthy results:

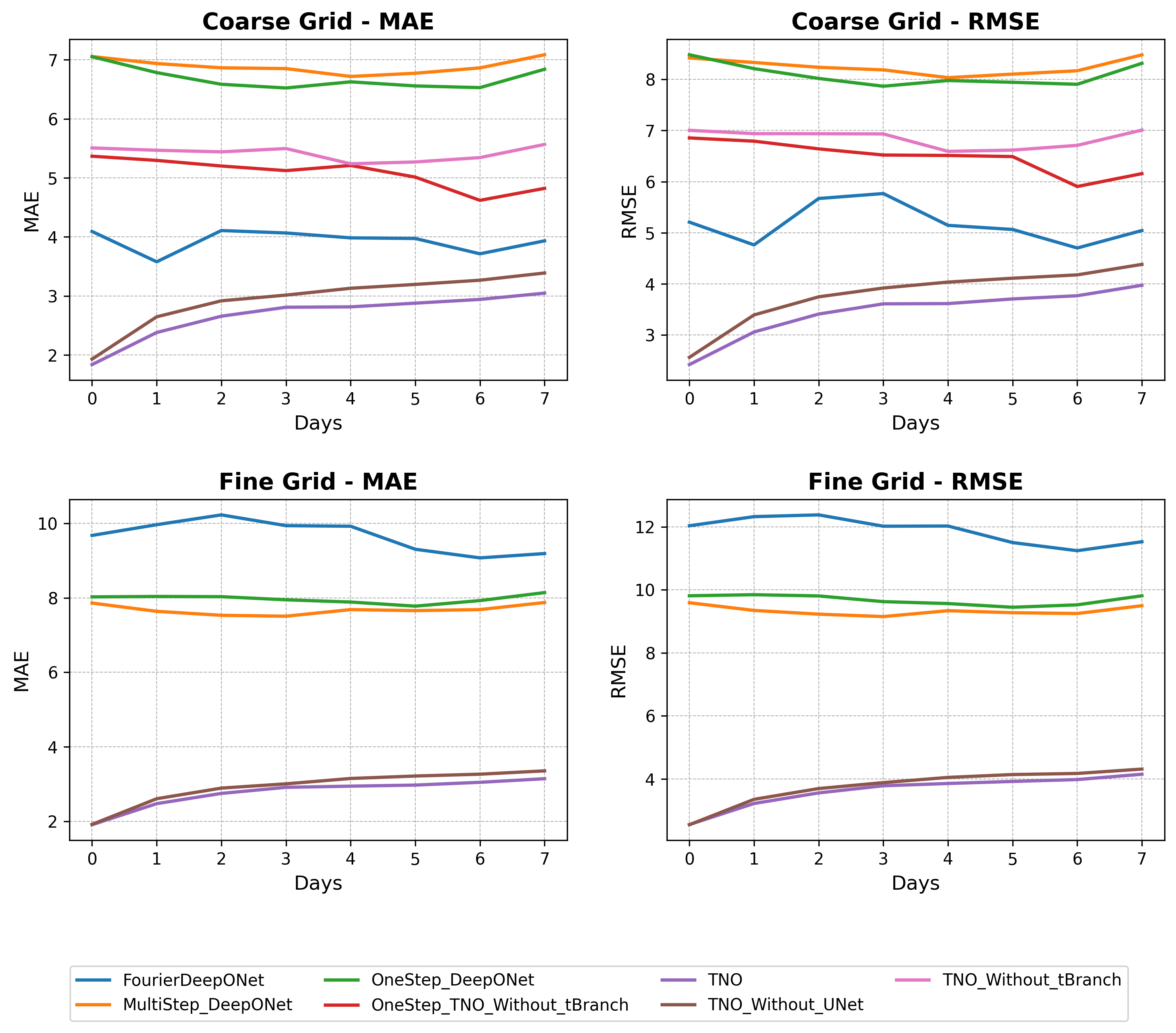

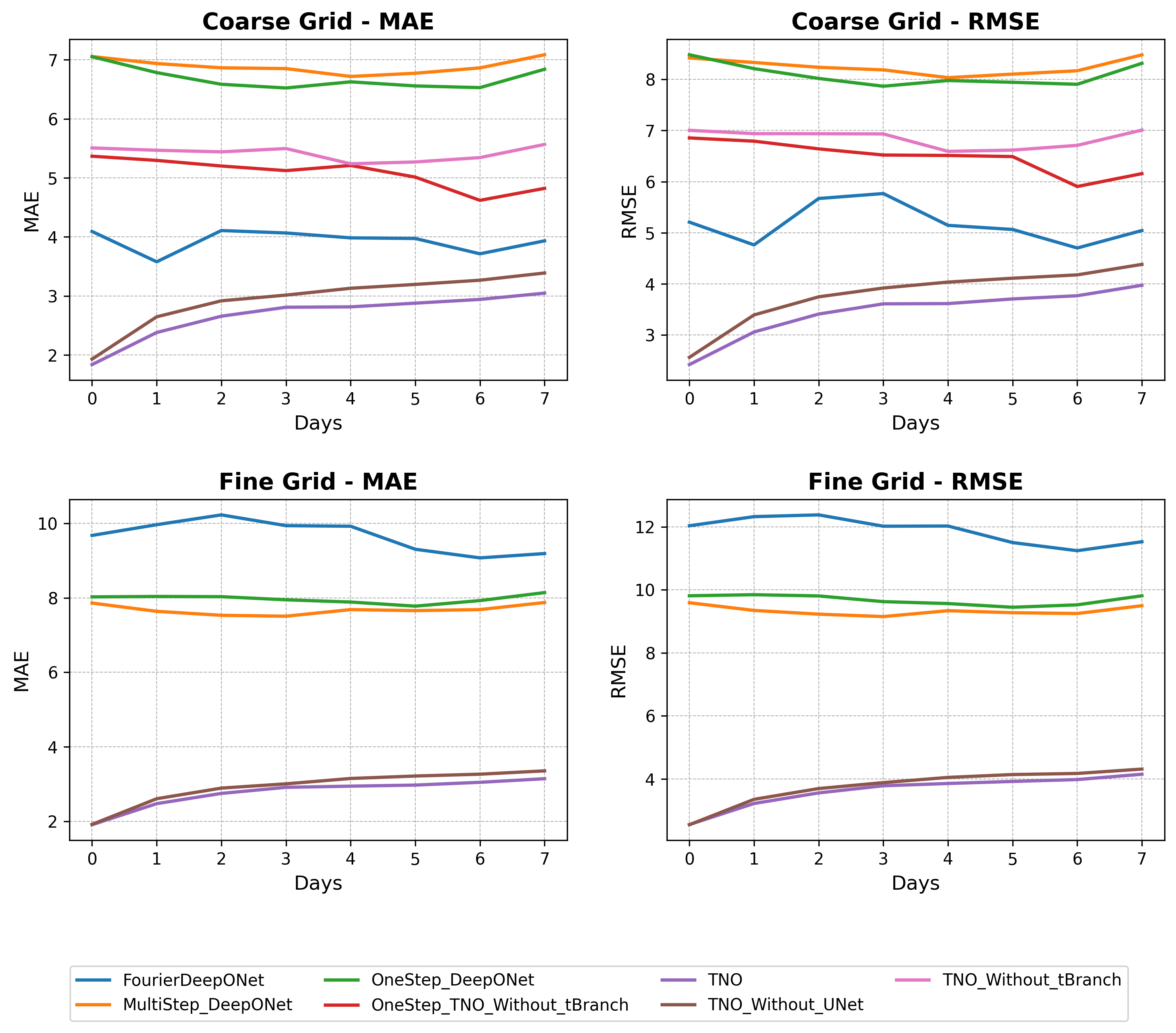

- Multi-step forecasting with limited history (L=1): TNO achieves an MAE of 2.68 °C on blind test data (0.25° grid) and 2.83 °C on a higher-resolution 0.1° grid without retraining, demonstrating robust resolution invariance.

- Error Accumulation: Examination of multi-step rollouts shows negligible error drift, confirming the stabilization protocol's efficacy in long-term predictions.

- Ablation Analysis: Removal of the t-branch or U-Net severely degrades performance and resolution invariance, quantifying the contributions of each architectural component.
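For reference, the MAE and RMSE figures quoted in this section can be computed as below. The masking of missing cells is an assumption for illustration: it supposes that gaps in the observational grid are stored as NaN, which the summary does not state. The function name `masked_mae_rmse` is hypothetical.

```python
import numpy as np

def masked_mae_rmse(pred, target):
    """MAE and RMSE over the grid cells where the target is observed."""
    valid = ~np.isnan(target)          # assumed convention: NaN = no observation
    err = pred[valid] - target[valid]
    mae = np.mean(np.abs(err))
    rmse = np.sqrt(np.mean(err ** 2))
    return mae, rmse

# Tiny made-up example: one of four cells has no observation.
target = np.array([[1.0, np.nan], [3.0, 4.0]])
pred = np.array([[2.0, 0.0], [3.0, 2.0]])
mae, rmse = masked_mae_rmse(pred, target)  # errors used: 1, 0, -2
```

RMSE weights large errors more heavily than MAE, so reporting both gives a rough sense of whether the error is spread evenly or concentrated in a few cells.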

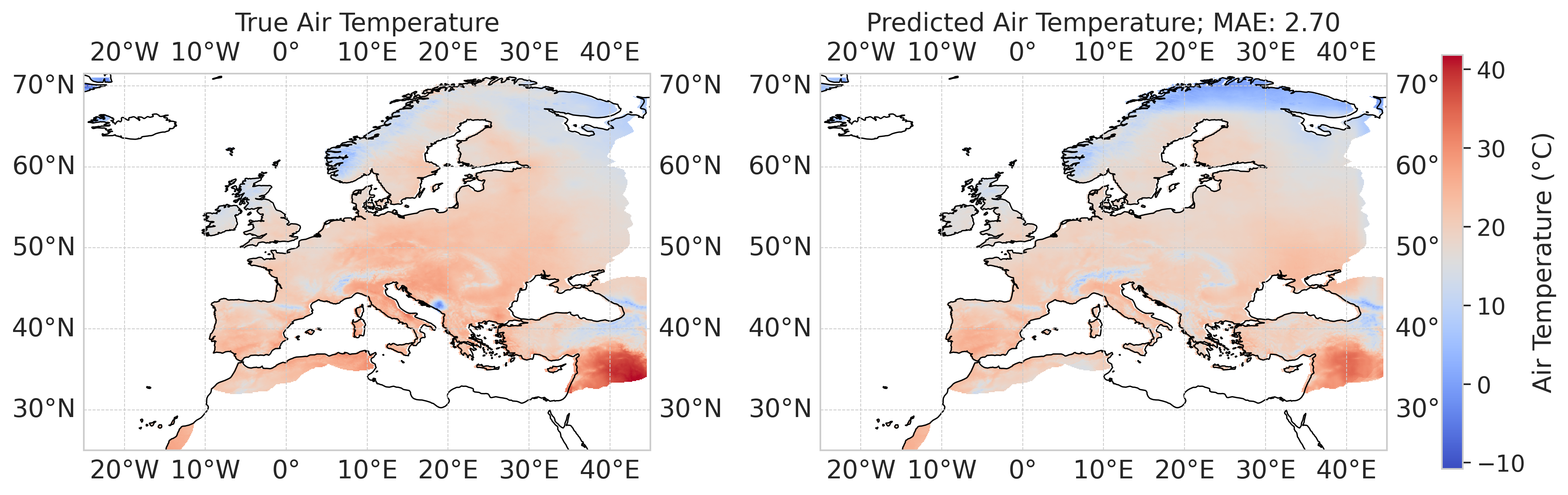

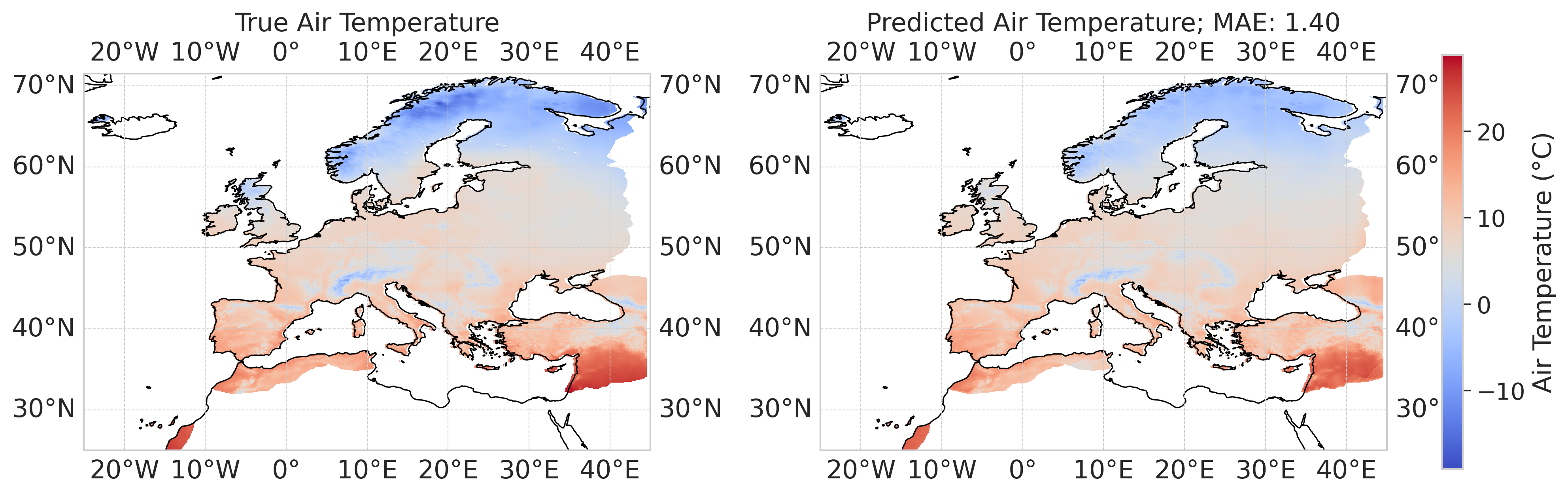

Figure 2: TNO-predicted air temperature field for 24/06/2023 (0.25° grid) demonstrates high-fidelity spatial structure and low error versus ground truth.

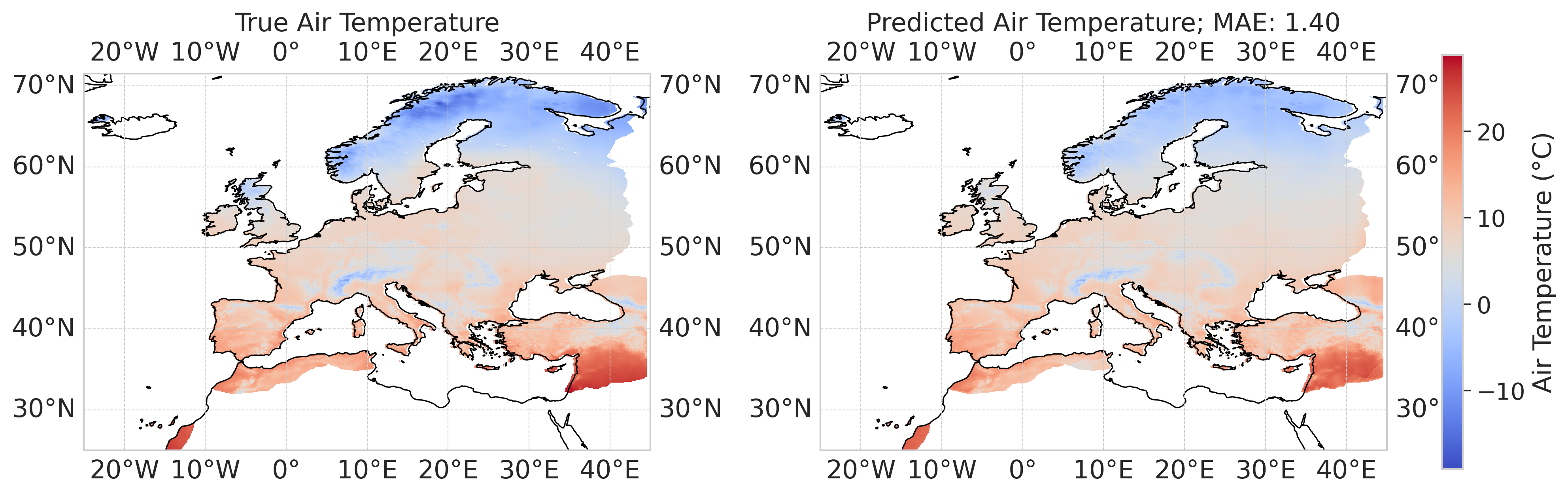

Figure 3: TNO-predicted air temperature field (12/11/2023, 0.25° grid); similar accuracy is observed for higher-resolution predictions.

Figure 4: MAE and RMSE benchmarks on coarse (top) and fine (bottom) grids for TNO and ablated models; "Multistep" indicates use of temporal bundling (K=4).

Global Climate Modeling

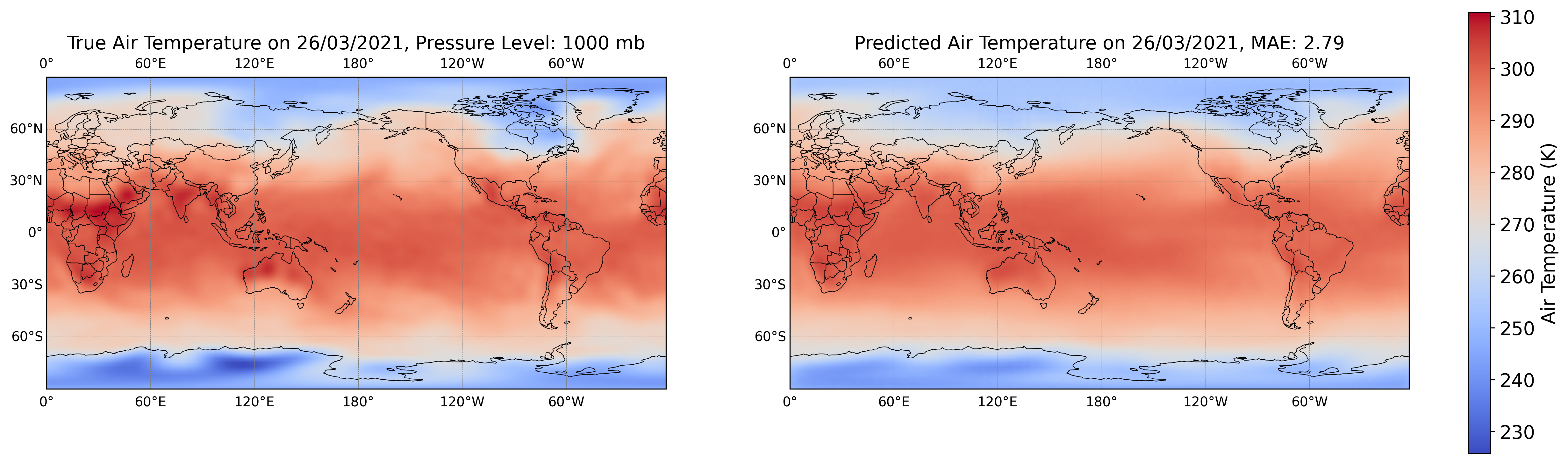

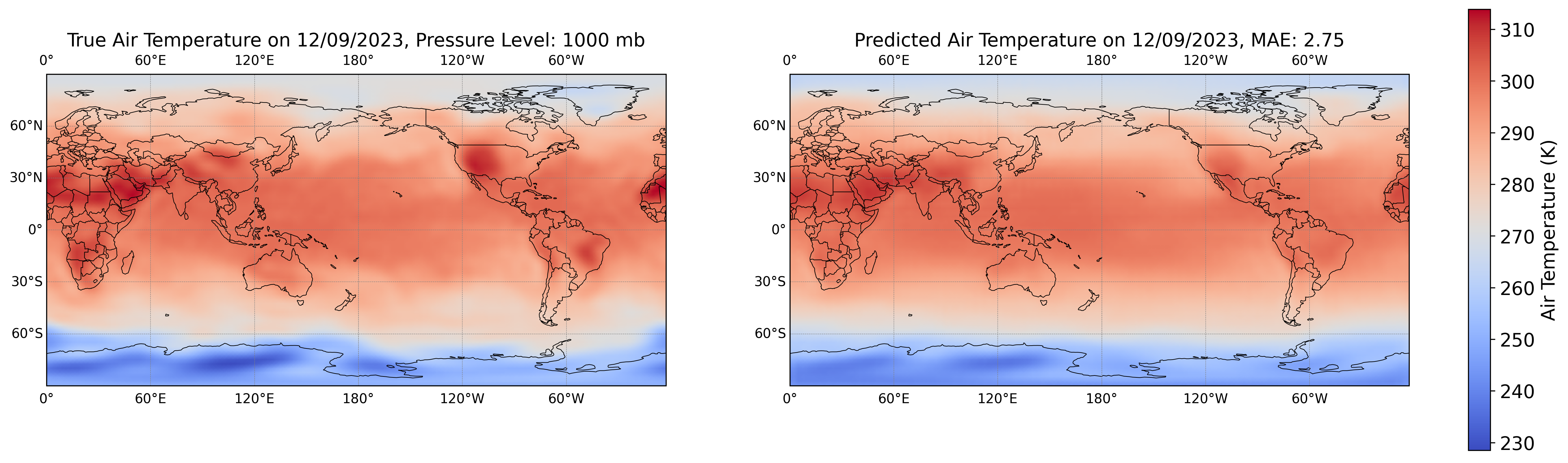

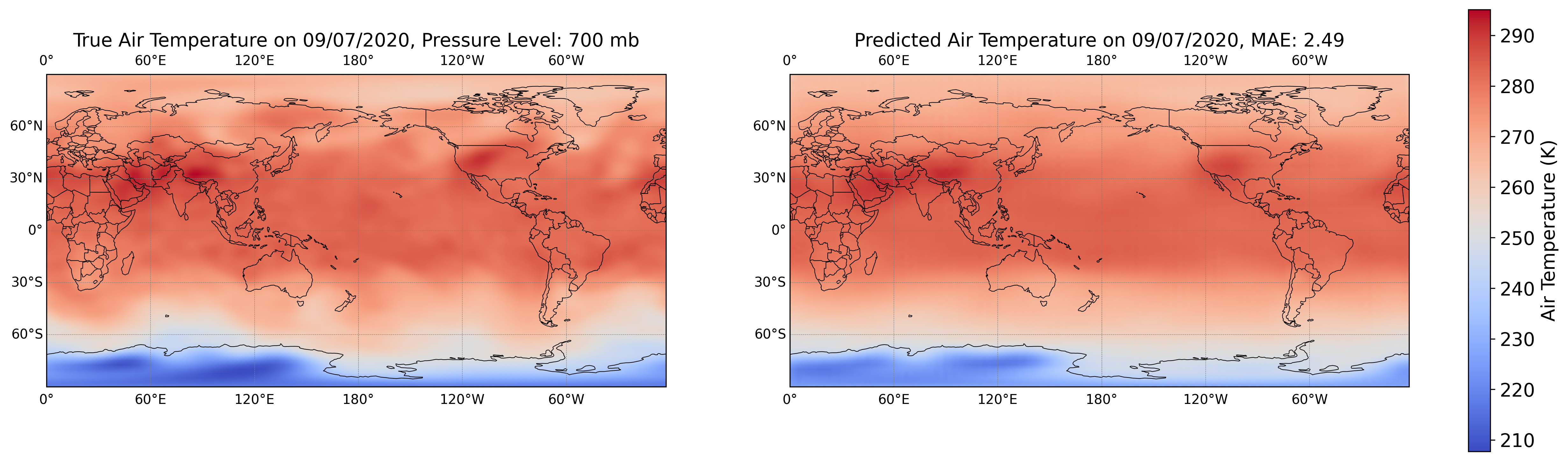

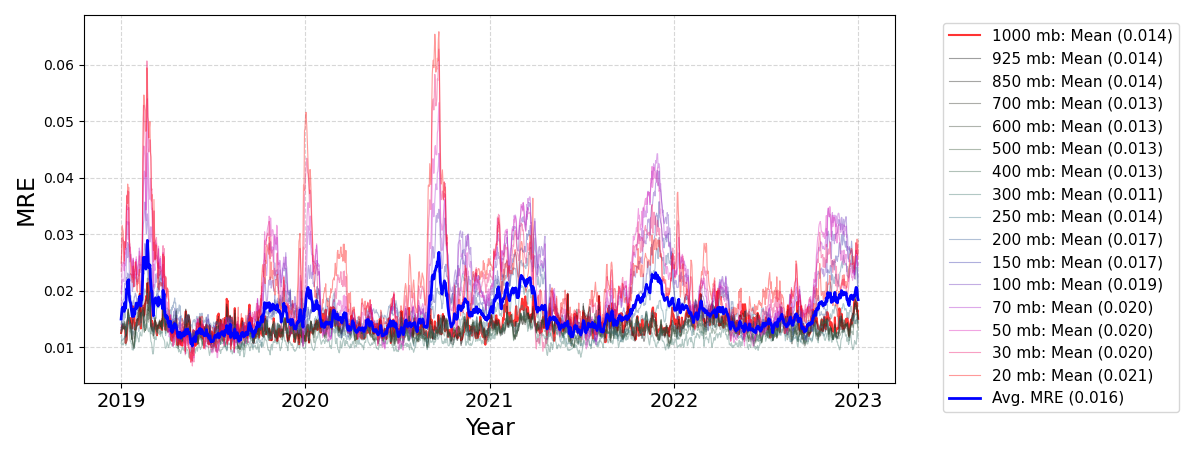

The TNO is applied to NCEP/NCAR Reanalysis data for global air temperature spanning 16 atmospheric pressure levels. The framework collapses the 3D spatiotemporal field into 2D slices with conditioning, exploiting efficient 2D convolutional architectures:

- Temporal Extrapolation: TNO accurately forecasts air temperatures up to five years beyond the training period, achieving a mean relative L2 error of 0.016 across all pressure levels.

- Vertical Interpolation & Extrapolation: The model generalizes to atmospheric levels entirely excluded during training.

- Single Unified Model: Unlike Ditto and other foundation models that require separate models or massive multi-level parameterization, TNO covers all levels using a single lightweight network.
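The slicing idea and the relative L2 metric above can be sketched as follows. How the paper encodes the conditioning is not described in this summary, so appending the normalized pressure level as an extra constant channel is an assumption, as are the names `slices_with_level` and `relative_l2`, the grid size, and the placeholder pressure values.

```python
import numpy as np

def slices_with_level(field, levels):
    """Turn a (n_levels, H, W) field into per-level 2D samples.

    Returns shape (n_levels, 2, H, W): channel 0 is the 2D slice, channel 1 is
    a constant map holding the normalized level (assumed conditioning scheme).
    """
    norm = (levels - levels.min()) / (levels.max() - levels.min())
    cond = np.broadcast_to(norm[:, None, None], field.shape)
    return np.stack([field, cond], axis=1)

def relative_l2(pred, target):
    """||pred - target||_2 / ||target||_2 over all entries."""
    return np.linalg.norm(pred - target) / np.linalg.norm(target)

rng = np.random.default_rng(0)
levels = np.linspace(1000.0, 10.0, 16)            # placeholder values in mb
field = 250.0 + rng.normal(size=(16, 12, 24))     # synthetic temperatures in K
samples = slices_with_level(field, levels)        # shape (16, 2, 12, 24)
err = relative_l2(1.01 * field, field)            # a uniform 1% error gives 0.01
```

Treating the level as an input rather than as a separate output head is what would let one 2D network be queried at levels it never saw during training.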

Figure 5: Example global air temperature field (26/03/2021, 1000 mb) predicted by TNO, showcasing strong qualitative agreement.

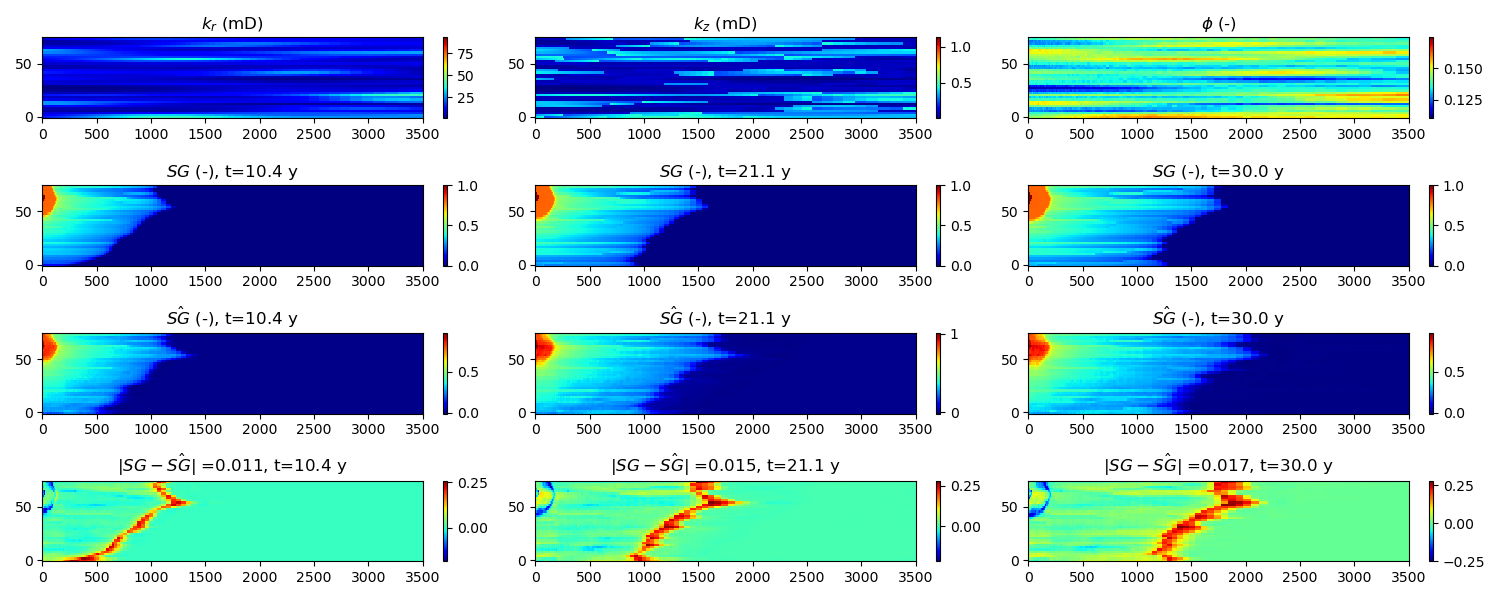

Operator Generalization: Geologic Carbon Sequestration

TNO is assessed on the high-dimensional, multiphysics task of CO2 plume migration and pressure evolution for geological storage, requiring learning coupled elliptic-hyperbolic PDE dynamics and robust generalization to unseen geology and well configurations.

Discussion: Theoretical and Practical Implications

The TNO framework advances operator learning by offering:

- Superior Temporal Extrapolation: Through its explicit modeling of spatiotemporal operator structure and temporal bundling, TNO achieves accurate, stable long rollouts not previously demonstrated by neural operator architectures.

- Resolution Invariance: TNO decouples training and inference resolutions, enabled by adaptive pooling/U-Net and coordinate-based trunking. This is indispensable for scientific deployment scenarios with evolving sensor networks or regridding requirements.

- Unified, Low-Memory Models: A single TNO model handles multiresolution, multi-physics, and multivariable cases, often with fewer parameters and lower memory use than dedicated specialized models.

These results position TNO as a state-of-the-art solution for time-dependent PDE operator learning across spatiotemporal domains, with practical utility in real-time forecasting and as a replacement for scientific simulation.
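The resolution-invariance point can be illustrated with adaptive average pooling, which maps a grid of any size to a fixed-size feature map. This is a plain NumPy sketch of the general technique, not the paper's encoder. The bin rule (floor for the start index, ceiling for the end index) is one common convention, and the function name and test fields are hypothetical.

```python
import numpy as np

def adaptive_avg_pool2d(x, out_h, out_w):
    """Average-pool a 2D array of any size down to (out_h, out_w)."""
    H, W = x.shape
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        r0 = (i * H) // out_h             # floor(i * H / out_h)
        r1 = -(-((i + 1) * H) // out_h)   # ceil((i + 1) * H / out_h)
        for j in range(out_w):
            c0 = (j * W) // out_w
            c1 = -(-((j + 1) * W) // out_w)
            out[i, j] = x[r0:r1, c0:c1].mean()
    return out

def ramp(n):
    """The field f(x, y) = x + y sampled on an n-by-n grid of the unit square."""
    axis = np.linspace(0.0, 1.0, n)
    return axis[:, None] + axis[None, :]

coarse = adaptive_avg_pool2d(ramp(40), 8, 8)   # 40x40 input  -> 8x8
fine = adaptive_avg_pool2d(ramp(100), 8, 8)    # 100x100 input -> 8x8
```

Both inputs produce an 8x8 summary of the same underlying field, so any layers placed after the pooling step see the same input shape regardless of the grid the data arrived on.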

Conclusion

The Temporal Neural Operator robustly addresses core challenges in operator learning for time-dependent PDEs: temporal extrapolation, error stability, generalization to novel parameterizations, and resolution invariance. Architecturally, the introduction of a temporal branch, U-Net encoders with adaptive pooling, and Hadamard product synthesis yields significant gains over DeepONet, FNO, and their variants. Extensive benchmarking on weather forecasting, climate modeling, and multiphysics geoscience demonstrates TNO's flexibility, accuracy, and practical deployment capabilities. Future directions include scaling TNO architectures for even higher-dimensional coupled systems, integration with active data assimilation workflows, and further exploration of theoretical generalization bounds for operator learning in non-Markovian and multi-scale physical settings.