- The paper introduces an autoregressive framework that generates high-fidelity 3D garments from single images using structured parametric representations.

- The paper employs a masked autoregressive model with diffusion-based denoising and classifier-free guidance to enhance garment synthesis quality.

- The paper reports superior Chamfer Distance and Point-to-Surface results compared with prior methods, and produces simulation-ready, diverse garment outputs.

GarmentX: Autoregressive Parametric Representations for High-Fidelity 3D Garment Generation

Introduction to GarmentX

GarmentX is a framework for generating high-fidelity, wearable 3D garments from a single input image. Traditional garment reconstruction methods are limited by constraints that lead to physically implausible outputs; GarmentX instead uses a structured parametric representation. This representation is compatible with GarmentCode, so garment shape and style can be modified intuitively while the resulting models remain structurally valid and physically realistic. An autoregressive model provides a structured generation process over these parameters, which improves garment synthesis quality.

Data Construction and Representation

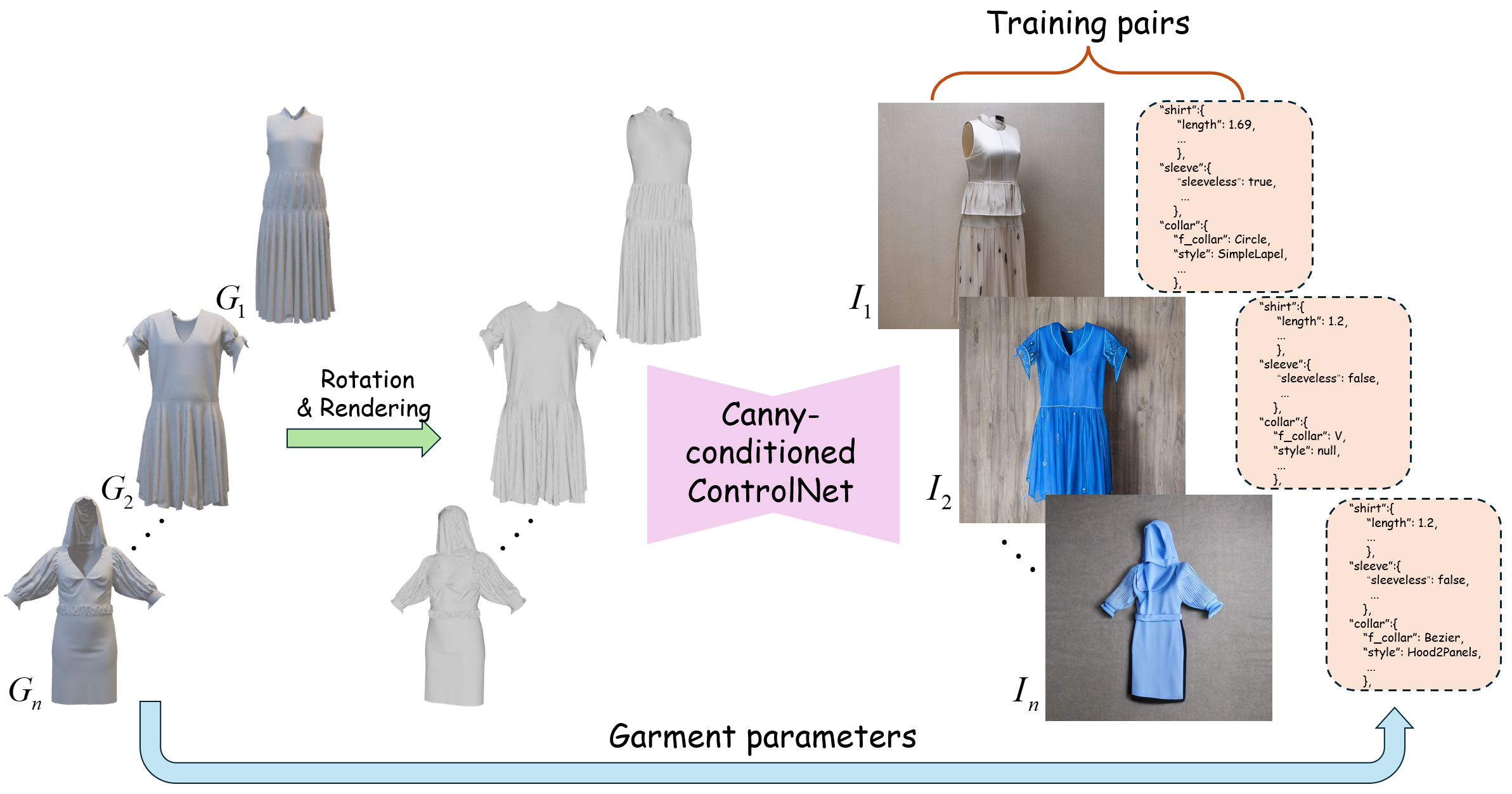

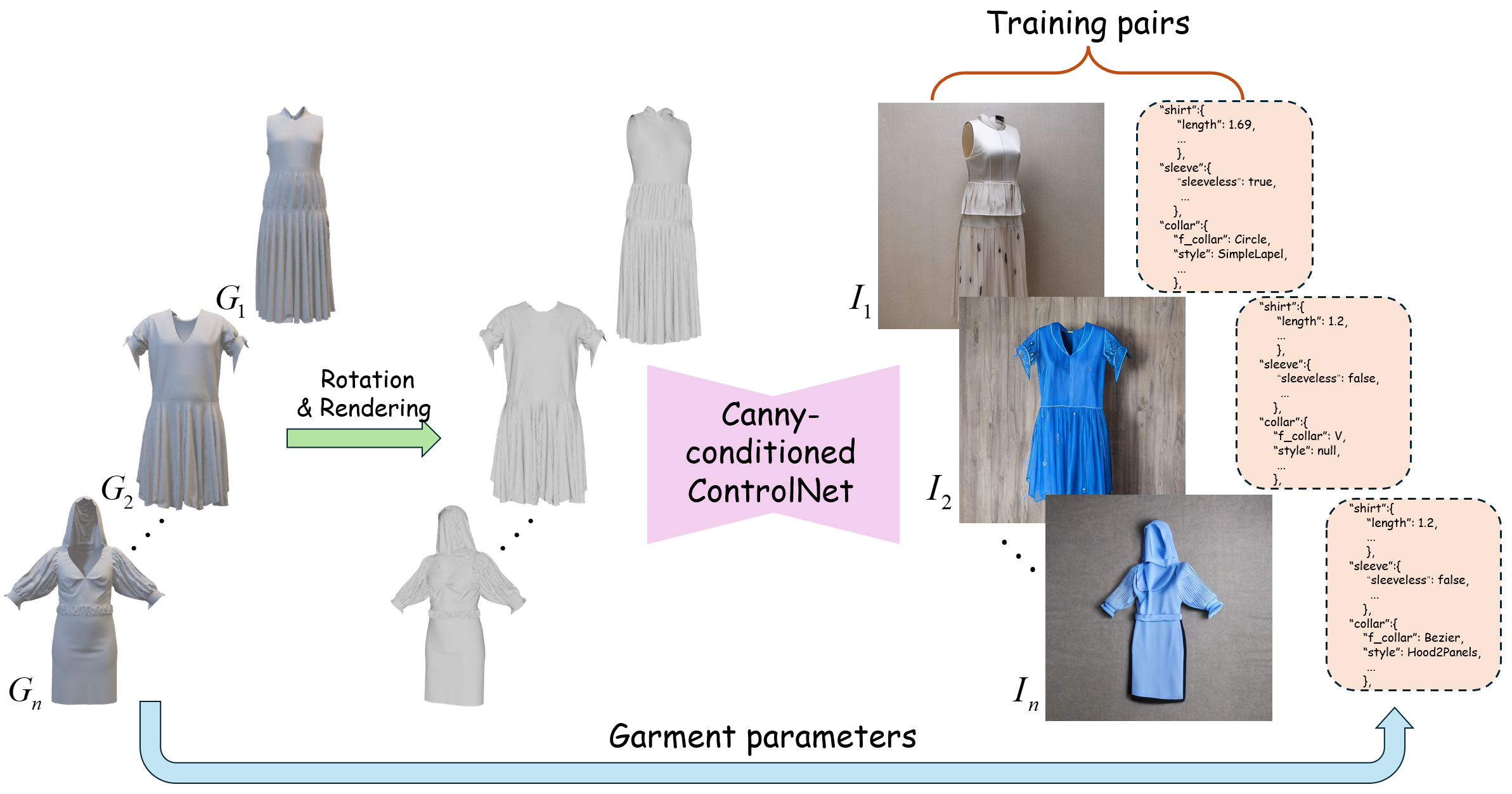

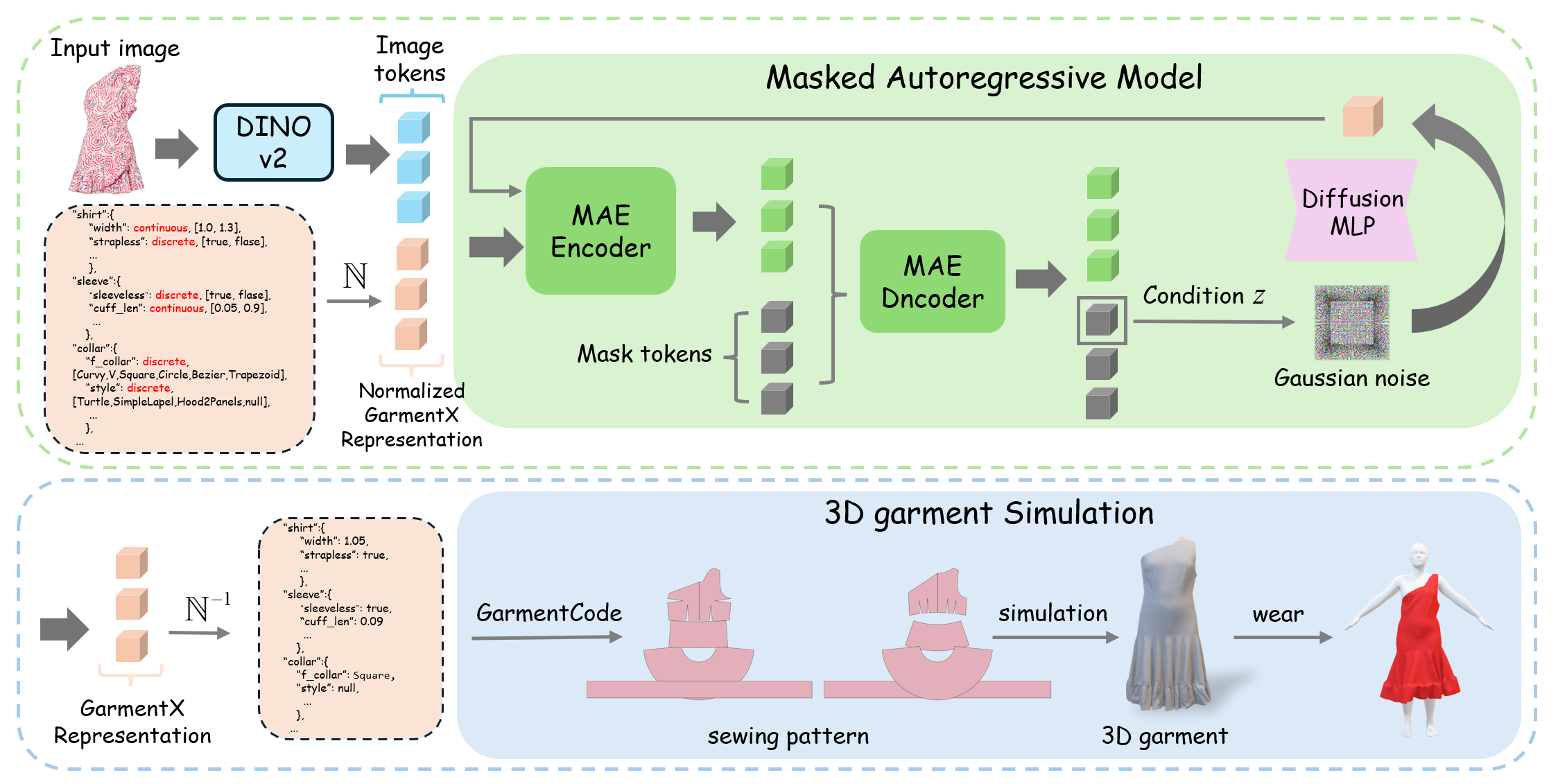

Central to GarmentX are its dataset construction and its parametric representation. The GarmentX dataset contains 378,682 garment parameter-image pairs, synthesized by an automatic pipeline that uses ControlNet together with Blender for high-quality image rendering (Figure 1). This process keeps the garment parameters and the photorealistic images consistent with each other, which supports robust model training. Each garment is represented by a parameter vector p that encodes attributes such as neckline type and sleeve shape. Both continuous and discrete parameters are normalized so that they can be handled uniformly, and each entry gives direct control over a garment feature.

Figure 1: Automatic Data Construction Pipeline. We construct garment parameters-image pairs using ControlNet and Blender.
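The normalization of mixed continuous and discrete parameters described above can be sketched as follows. This is a minimal illustration, not the paper's implementation: the schema entries (`neckline_type`, `sleeve_length_cm`), their ranges and choices, and the `[0, 1]` target range are all assumptions made for the example and do not reflect the actual GarmentCode parameter set.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence, Union


@dataclass(frozen=True)
class ContinuousParam:
    name: str
    low: float
    high: float


@dataclass(frozen=True)
class DiscreteParam:
    name: str
    choices: Sequence[str]


ParamSpec = Union[ContinuousParam, DiscreteParam]

# Hypothetical schema: names, ranges, and choices are illustrative only.
SCHEMA: List[ParamSpec] = [
    DiscreteParam("neckline_type", ("round", "v_neck", "square")),
    ContinuousParam("sleeve_length_cm", 0.0, 60.0),
]


def encode(spec: ParamSpec, value) -> float:
    """Map one raw parameter value to [0, 1]."""
    if isinstance(spec, ContinuousParam):
        return (float(value) - spec.low) / (spec.high - spec.low)
    index = list(spec.choices).index(value)
    # A single-choice parameter always maps to 0.0.
    return index / max(len(spec.choices) - 1, 1)


def decode(spec: ParamSpec, x: float):
    """Map a normalized value back to a raw parameter value."""
    x = min(max(x, 0.0), 1.0)  # clamp a model output into the valid range
    if isinstance(spec, ContinuousParam):
        return spec.low + x * (spec.high - spec.low)
    # Snap to the nearest discrete choice.
    index = round(x * (len(spec.choices) - 1))
    return spec.choices[index]


def encode_garment(garment: Dict[str, object]) -> List[float]:
    """Build the parameter vector p for one garment."""
    return [encode(spec, garment[spec.name]) for spec in SCHEMA]


def decode_garment(p: Sequence[float]) -> Dict[str, object]:
    """Recover editable garment attributes from a parameter vector p."""
    return {spec.name: decode(spec, x) for spec, x in zip(SCHEMA, p)}
```

Because decoding clamps and snaps every entry, any vector the generator emits maps back to a valid garment description, which is one plausible reading of why a parametric representation helps structural validity.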

Architecture Overview

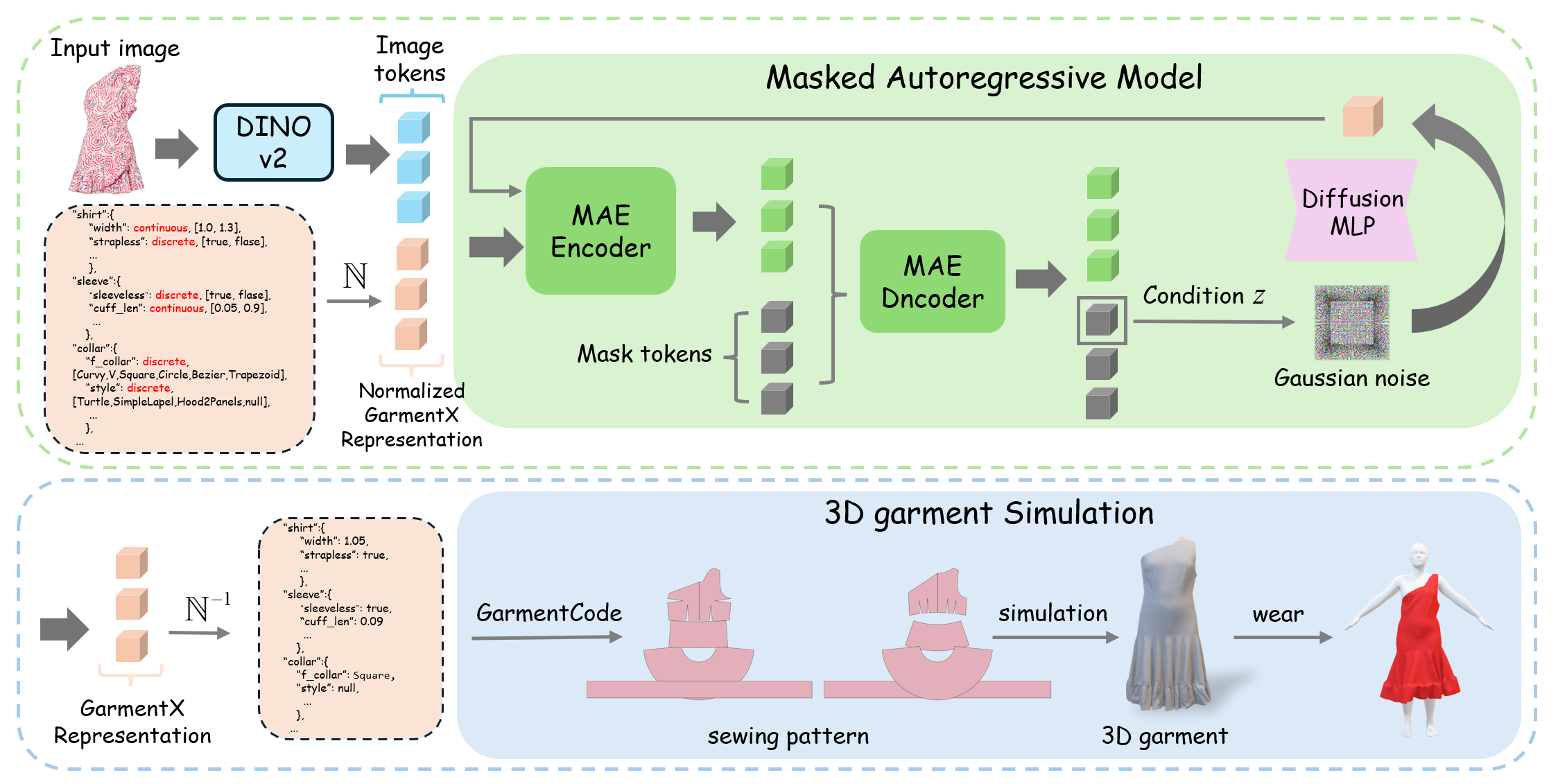

The GarmentX architecture is a masked autoregressive model that decodes input image features into structured garment parameters. As illustrated in Figure 2, the pipeline consists of an MAE encoder-decoder followed by a diffusion MLP. DINOv2 extracts features from the input image, and classifier-free guidance (CFG) controls how strongly generation follows the image condition. The diffusion component applies MLP-based denoising to produce the garment parameters, yielding simulation-ready 3D garment outputs.

Figure 2: Overview of GarmentX. Taking a single image as input, GarmentX trains a masked autoregressive generation model to efficiently generate 3D garments.
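To make the masked autoregressive idea concrete, the sketch below shows a random-order, multi-step unmasking loop of the kind commonly used by masked autoregressive models: all parameter tokens start masked, and each step reveals a batch of them conditioned on what is already known. The cosine schedule, the step count, and the `sample_token` callback are assumptions for illustration; in GarmentX the callback's role would be played by the MAE encoder-decoder plus diffusion MLP, whose details this summary does not specify.

```python
import math
from typing import Callable, List

import numpy as np


def cosine_unmask_counts(num_tokens: int, num_steps: int) -> List[int]:
    """Number of tokens to reveal per step; the masked count decays along a cosine."""
    assert 1 <= num_steps <= num_tokens
    counts = []
    masked = num_tokens
    for step in range(1, num_steps + 1):
        if step == num_steps:
            target = 0  # everything must be revealed by the last step
        else:
            target = math.floor(num_tokens * math.cos(math.pi / 2 * step / num_steps))
        # Reveal at least one token per step while any remain.
        target = max(min(target, masked - 1), 0)
        counts.append(masked - target)
        masked = target
    return counts


def generate(
    num_tokens: int,
    num_steps: int,
    sample_token: Callable[[np.ndarray, np.ndarray, int], float],
    rng: np.random.Generator,
):
    """Fill all tokens in a random order over num_steps unmasking steps."""
    tokens = np.zeros(num_tokens)
    known = np.zeros(num_tokens, dtype=bool)
    order = rng.permutation(num_tokens)
    start = 0
    for count in cosine_unmask_counts(num_tokens, num_steps):
        chosen = order[start:start + count]
        # Tokens revealed in the same step only see tokens from earlier steps.
        context = tokens.copy()
        new_values = [sample_token(context, known.copy(), int(i)) for i in chosen]
        tokens[chosen] = new_values
        known[chosen] = True
        start += count
    return tokens, known


def placeholder_sampler(context: np.ndarray, known: np.ndarray, index: int) -> float:
    """Stand-in for the learned model: returns the token position as its value."""
    return float(index)
```

Calling `generate(8, 4, placeholder_sampler, np.random.default_rng(0))` fills all eight positions in four steps. Revealing few tokens early and many late is a common choice because later predictions have more context to condition on.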

Experimental Evaluation

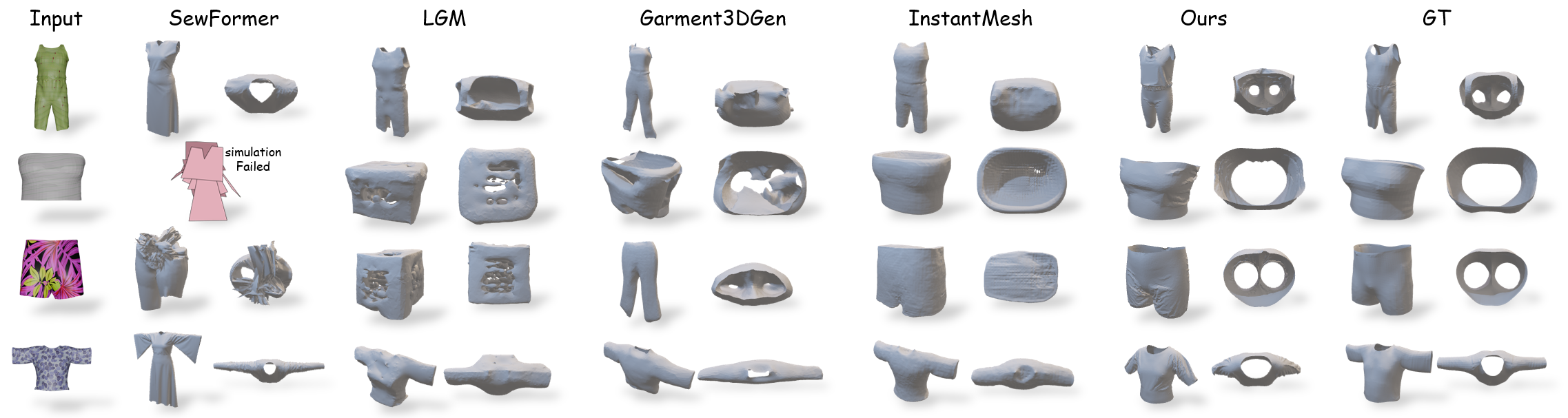

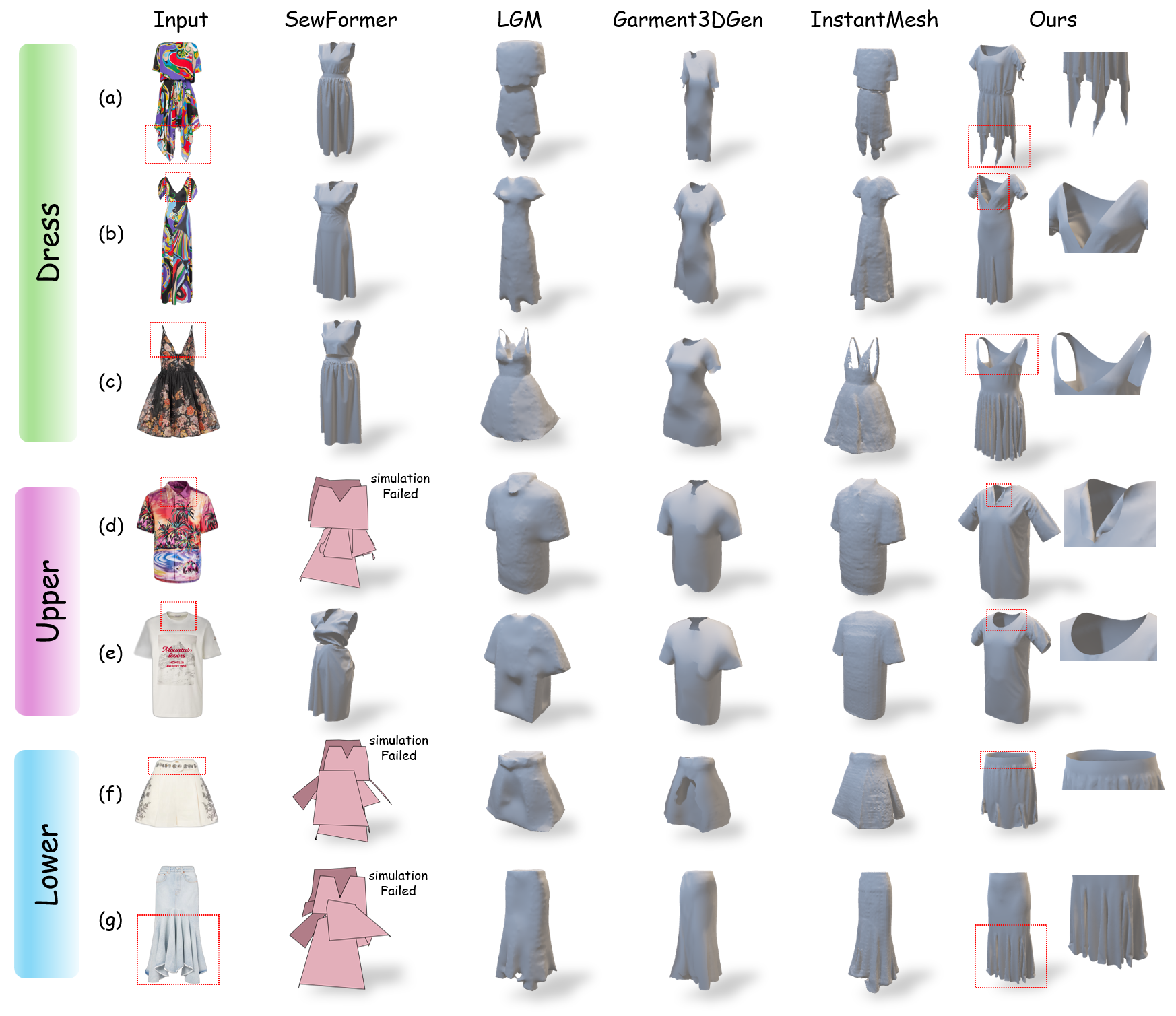

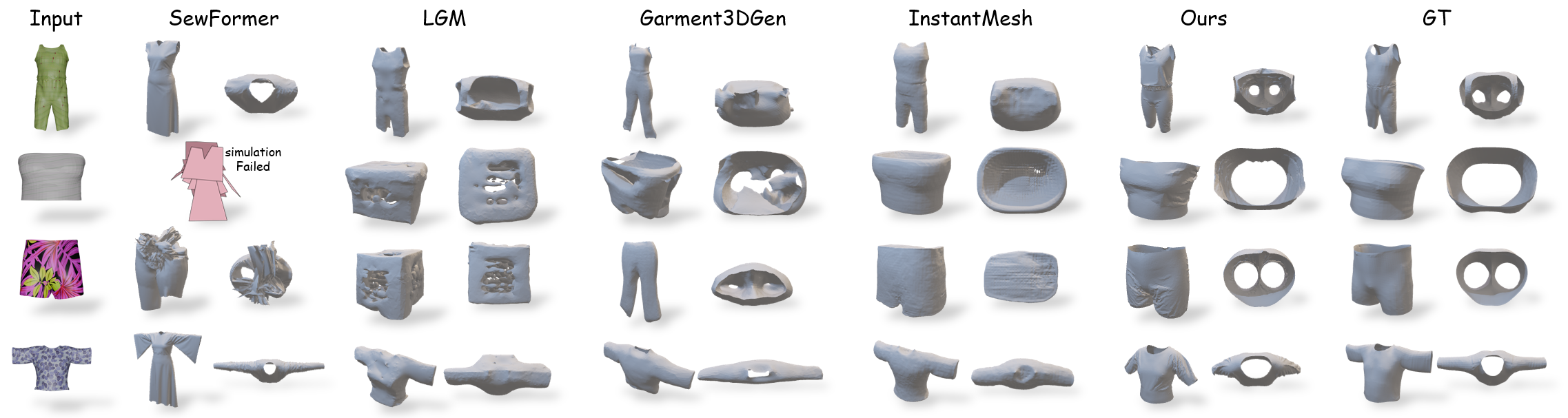

Quantitative assessments on the CLOTH3D dataset show that GarmentX achieves superior Chamfer Distance (CD) and Point-to-Surface (P2S) scores, indicating high geometric fidelity and close alignment with the input image (Figure 3). In addition, its zero simulation failure rate is evidence of structural reliability and readiness for downstream applications, in contrast to prior pattern prediction methods.

Figure 3: Qualitative comparisons on CLOTH3D dataset. GarmentX produces wearable, open-structure, and complete 3D garments.
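For readers unfamiliar with the Chamfer Distance metric mentioned above, here is a minimal NumPy sketch of one common symmetric definition: the mean squared nearest-neighbor distance from each point set to the other, summed over both directions. Conventions vary (squared versus unsquared distances, sum versus mean), and this summary does not state which variant the paper uses, so treat the exact formula as an assumption.

```python
import numpy as np


def chamfer_distance(a: np.ndarray, b: np.ndarray) -> float:
    """Symmetric Chamfer Distance between point sets a (N, 3) and b (M, 3).

    Uses squared Euclidean distances and averages over each set.
    Brute force: builds the full (N, M) distance matrix.
    """
    diff = a[:, None, :] - b[None, :, :]   # (N, M, 3) pairwise differences
    d2 = np.sum(diff * diff, axis=-1)      # (N, M) squared distances
    a_to_b = d2.min(axis=1).mean()         # each point in a to its nearest in b
    b_to_a = d2.min(axis=0).mean()         # each point in b to its nearest in a
    return float(a_to_b + b_to_a)


# Two points, and the same two points shifted by one unit along z.
generated = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
reference = generated + np.array([0.0, 0.0, 1.0])
print(chamfer_distance(generated, reference))  # 2.0
```

The brute-force matrix is fine for a few thousand sampled points; larger point clouds would typically use a spatial index such as a k-d tree instead.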

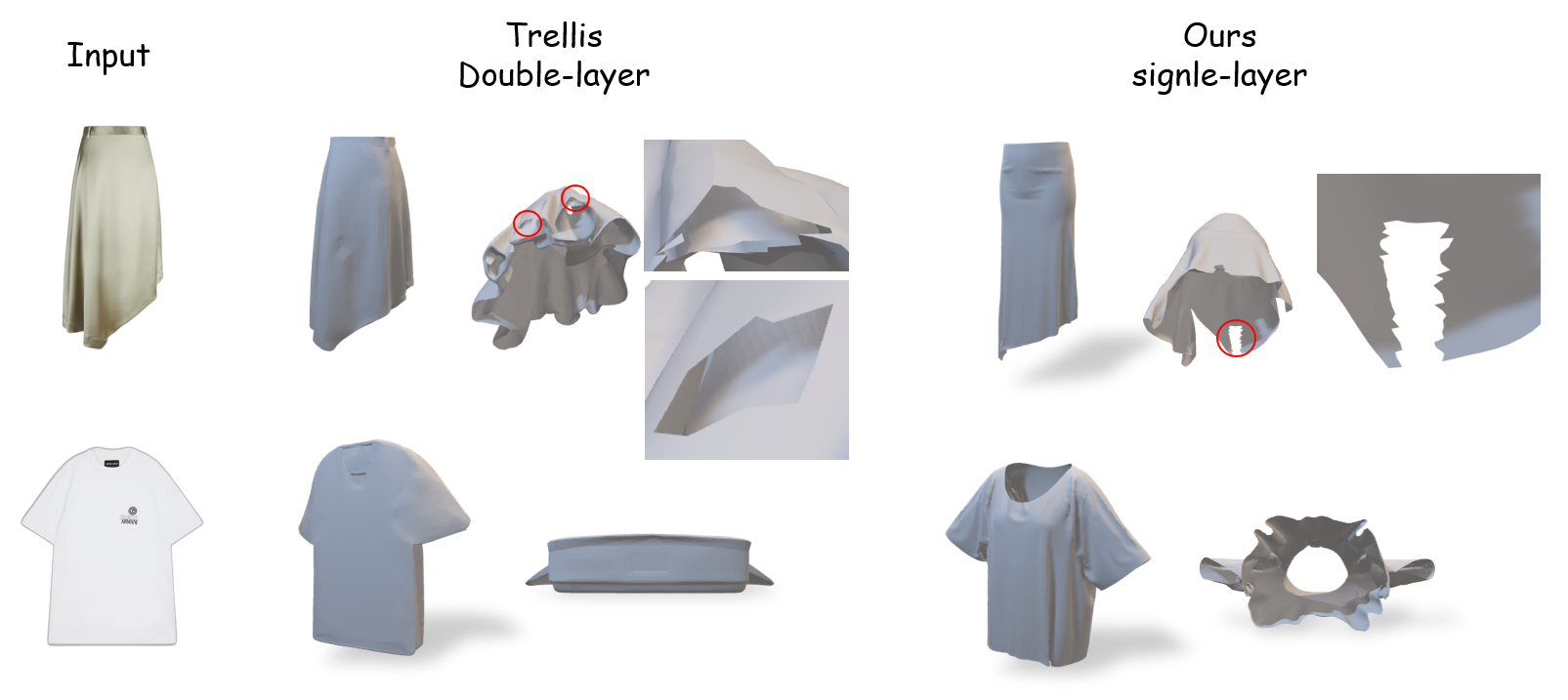

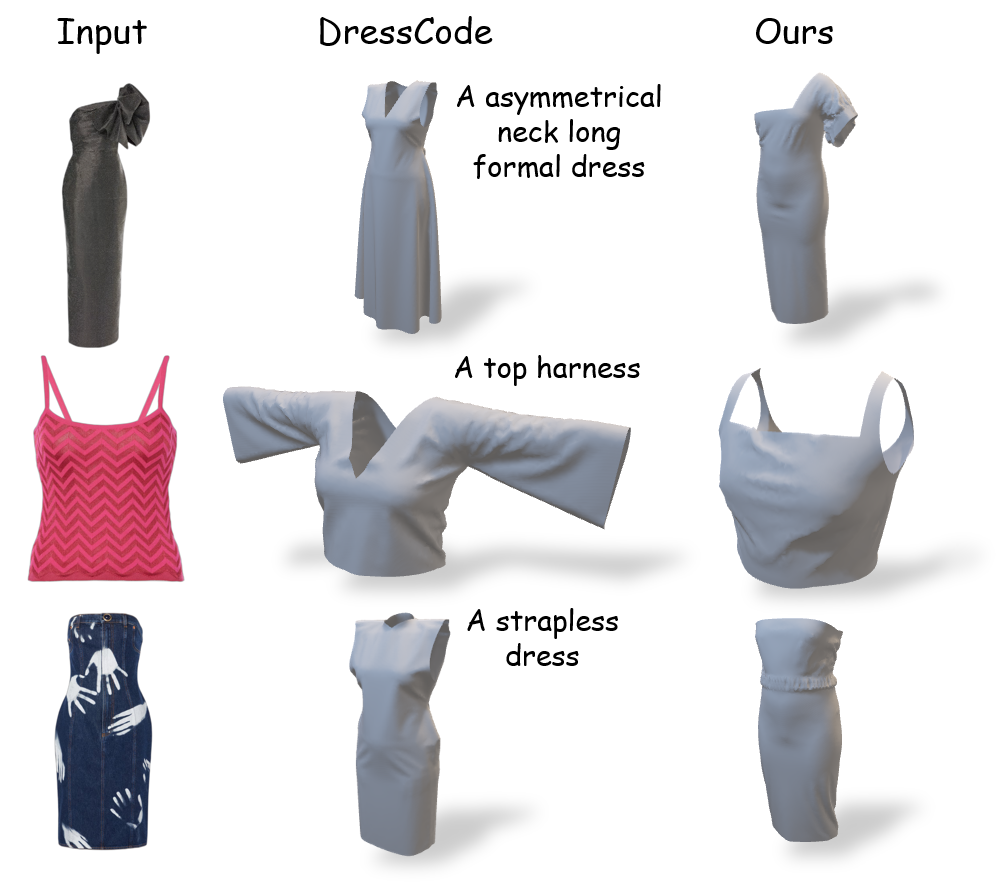

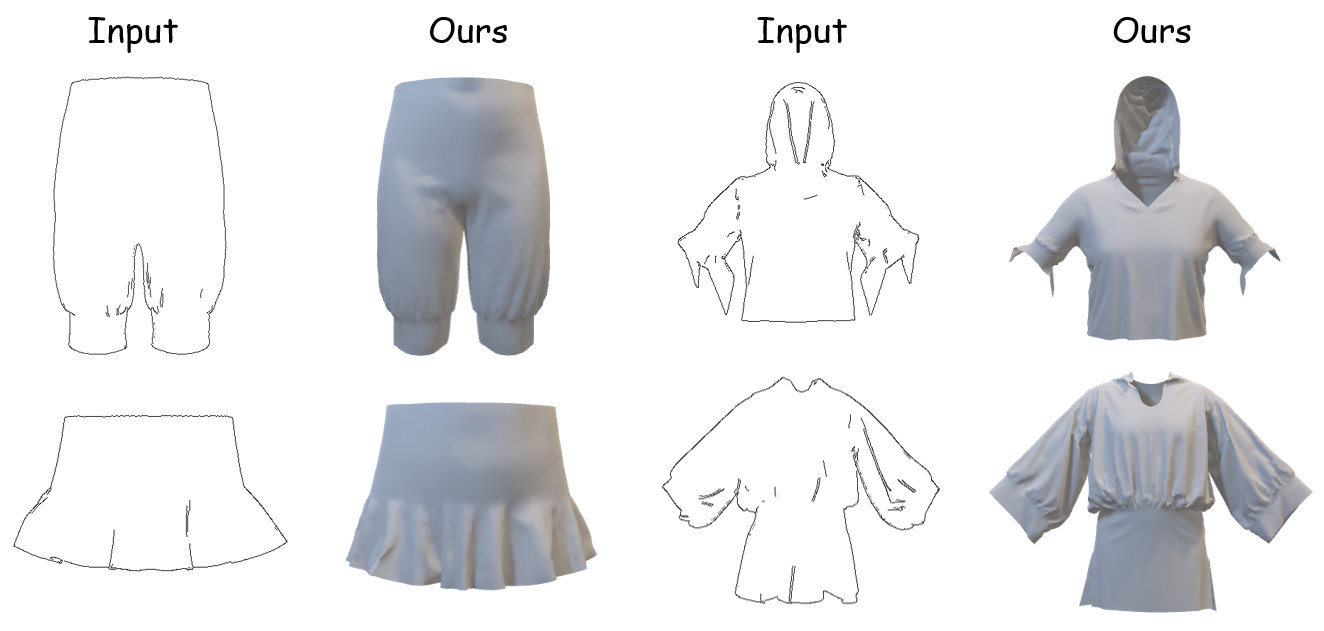

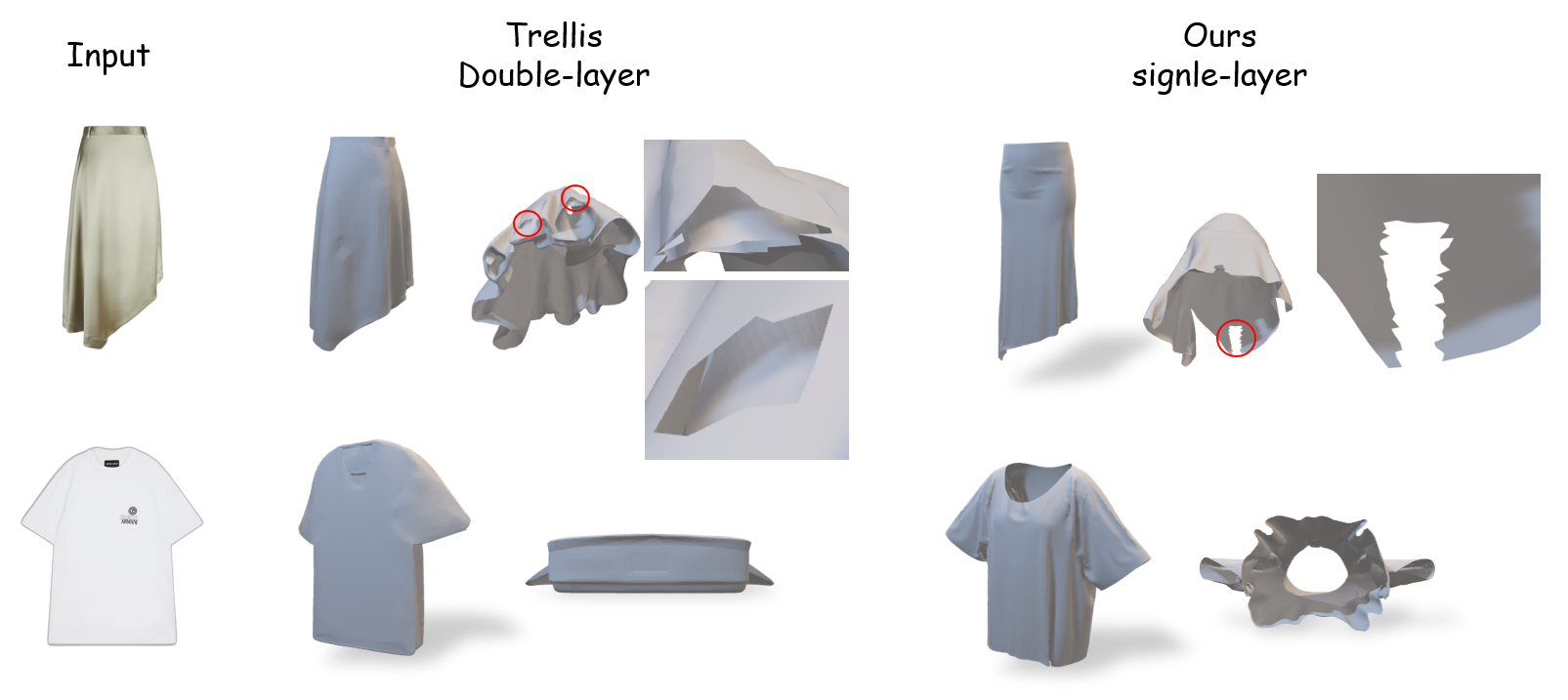

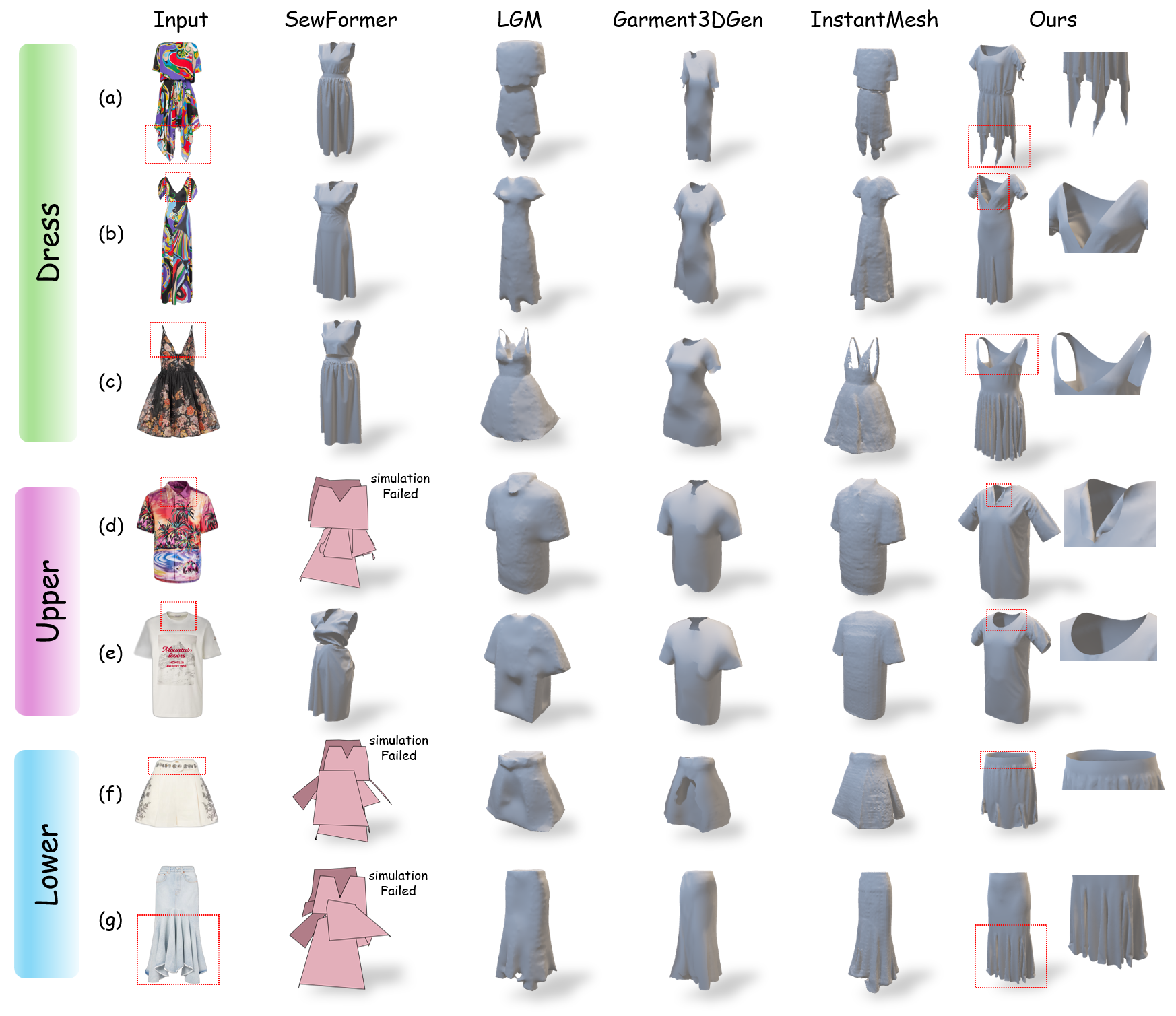

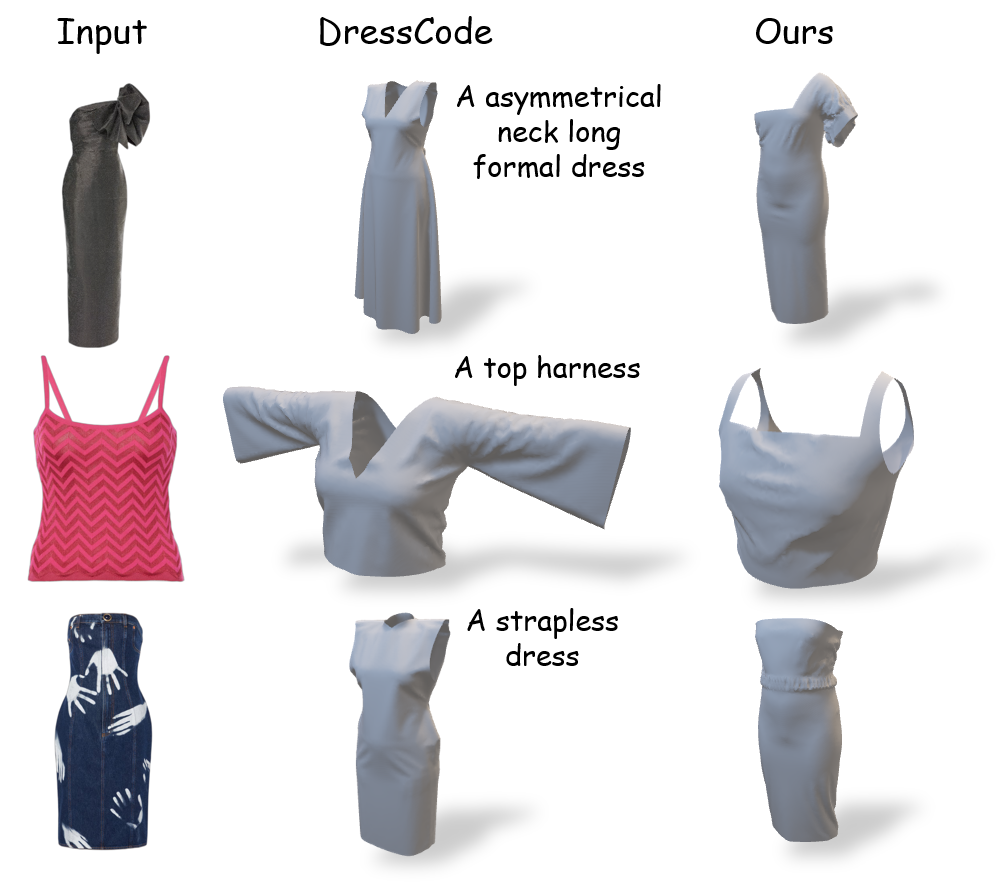

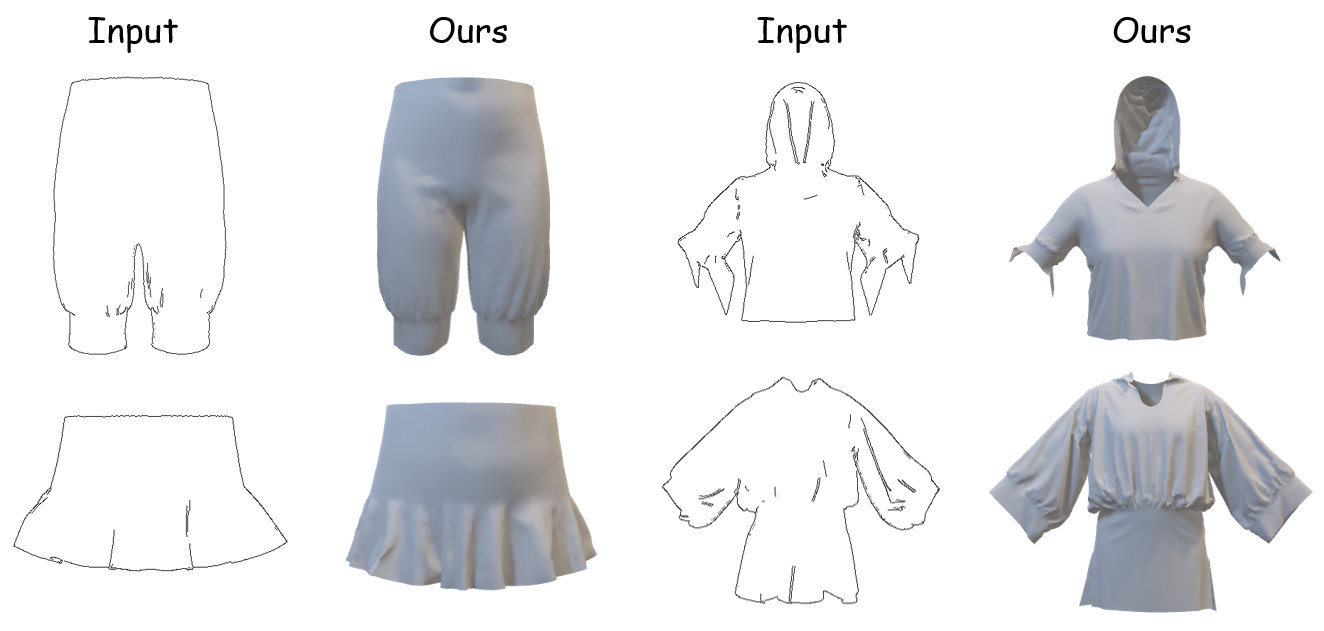

Further comparisons on datasets like CVDD and CVPR show that GarmentX generates diverse, complex garments while preserving intricate details (Figures 5, 6). Comparisons with competing methods such as DressCode and Trellis highlight its superior generalization, including on uncommon garment styles (Figures 4, 6, 7).

Figure 4: Qualitative comparisons with Trellis. GarmentX generates single-layer, open-structure 3D garments.

Figure 5: Qualitative comparisons on CVDD dataset. GarmentX produces diverse, complex, and detailed 3D garments that match the input images well.

Figure 6: Qualitative comparisons with DressCode. GarmentX exhibits better generalization, enabling the creation of a variety of uncommon garments.

Figure 7: Sketch-conditional inputs. Note that GarmentX has not been trained on sketch images.

Ablative Insights and Model Refinements

Ablation studies show that the autoregressive model outperforms alternatives such as DiT, supporting its benefits in distribution modeling and computational efficiency. Ablations over data construction methods and the CFG scale tune the model's balance between quality and conditioning strength, with ControlNet-based data construction performing best (Figure 8 and the accompanying table analysis).

Figure 8: Qualitative ablation of different data construction methods. ControlNet was selected for its optimal balance between speed and quality.
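The role of the CFG scale in trading off quality against conditioning strength can be illustrated with the standard classifier-free guidance combination rule. The sketch below uses the formulation in which a scale of 1 recovers the purely conditional prediction; some implementations write the same idea with `1 + w` instead. The drop probability and scale values are illustrative assumptions, not the settings reported in the paper.

```python
import numpy as np


def maybe_drop_condition(cond, null_cond, drop_prob: float, rng: np.random.Generator):
    """Training-time step: randomly replace the image condition with a null one,
    so a single network learns both conditional and unconditional prediction."""
    return null_cond if rng.random() < drop_prob else cond


def cfg_combine(eps_cond: np.ndarray, eps_uncond: np.ndarray, scale: float) -> np.ndarray:
    """Sampling-time step: extrapolate from the unconditional prediction
    toward the conditional one.

    scale = 0 -> unconditional, scale = 1 -> conditional,
    scale > 1 -> stronger adherence to the condition.
    """
    return eps_uncond + scale * (eps_cond - eps_uncond)


# Toy denoiser outputs for a 4-dimensional parameter token.
eps_uncond = np.zeros(4)
eps_cond = np.ones(4)
for scale in (0.0, 1.0, 3.0):
    print(scale, cfg_combine(eps_cond, eps_uncond, scale))
```

Larger scales push samples toward the input image at the cost of diversity, which is why the scale is usually chosen by ablation rather than fixed in advance.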

Conclusion

GarmentX advances 3D garment generation, achieving high synthesis quality and garment diversity through its structured parametric framework. Training on the GarmentX dataset supports robust learning and practical applicability. Future development will focus on extending the framework to clothed human images, which addresses current preprocessing requirements, and on further improving the versatility and accuracy of AI-based garment design systems.