Cognitio Emergens: Agency, Dimensions, and Dynamics in Human-AI Knowledge Co-Creation

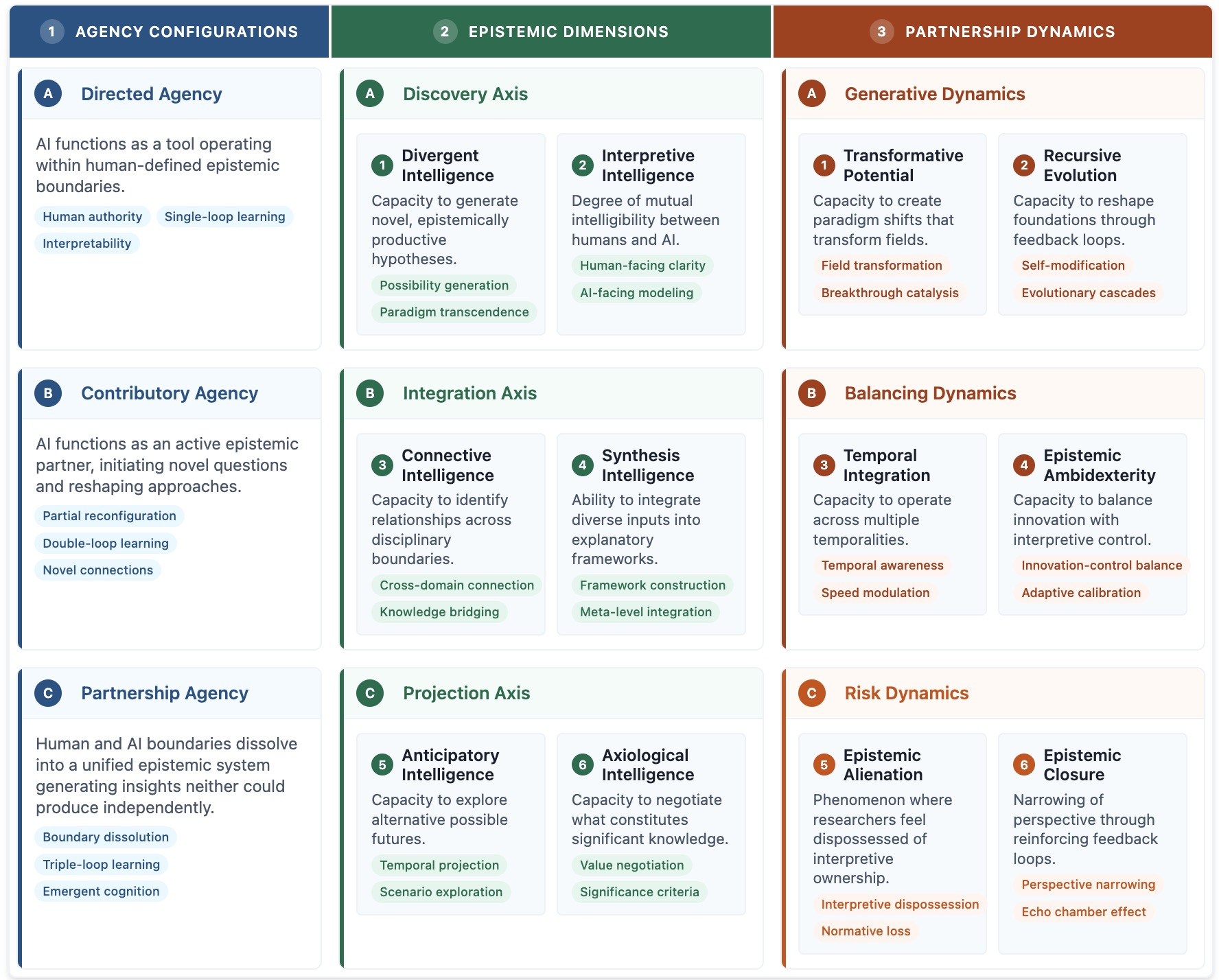

Abstract: Human-AI scientific collaboration has evolved from tool-user relationships into co-evolutionary partnerships. When AlphaFold improved protein structure prediction, researchers engaged with an epistemic partner that transformed their approach to structure-function problems. Yet existing frameworks position AI as either sophisticated tool or potential risk, overlooking how scientific understanding emerges through recursive interaction. We introduce Cognitio Emergens (CE), a framework that captures the co-evolutionary nature of human-AI epistemic partnerships. Drawing from autopoiesis theory, social systems theory, and organizational modularity, CE integrates three components: Agency Configurations modeling how authority distributes through Directed, Contributory, and Partnership modes, with partnerships oscillating dynamically rather than following linear progression; Epistemic Dimensions capturing six capabilities along Discovery, Integration, and Projection axes, creating distinctive "capability signatures" that guide strategic development; and Partnership Dynamics identifying evolutionary forces including epistemic alienation, where researchers lose interpretive control over knowledge they formally endorse. The framework equips researchers to diagnose dimensional imbalances, institutional leaders to design governance structures supporting multiple agency configurations, and policymakers to develop evaluations beyond simple performance metrics. By reconceptualizing human-AI collaboration as fundamentally co-evolutionary, CE provides conceptual tools for cultivating partnerships that preserve epistemic integrity while enabling transformative breakthroughs neither humans nor AI could achieve independently.


Explain it Like I'm 14

Cognitio Emergens: A simple explanation

1) What this paper is about

This paper talks about a new way people and AI can create scientific knowledge together. Instead of AI being just a tool, the authors say AI can become a true partner that helps discover ideas, make sense of them, and plan what to do next. They call this big idea Cognitio Emergens (Latin for “emerging knowledge”): knowledge that appears from the back-and-forth teamwork between humans and AI over time.

2) The main questions the paper asks

The paper sets out to answer three simple questions:

- How do humans and AI share control and decision-making while doing science together?

- What special abilities show up only when humans and AI work as a team (abilities neither has alone)?

- What forces help or hurt these partnerships as they grow, especially the risk that humans might accept results they don’t fully understand?

3) How the authors approach the problem

This is a theory paper. That means the authors build a clear, organized way to think about human–AI teamwork, instead of running lab experiments. They:

- Look at real examples (like AlphaFold predicting protein shapes) to show how AI can change how scientists think.

- Combine ideas from different fields to build their framework:
  - Biology (autopoiesis): like two living systems that adapt to each other through repeated contact.
  - Sociology (social systems): how different groups communicate while staying distinct.
  - Business design (modularity): how splitting work into parts with good “interfaces” helps ideas flow.

- Create a three-part framework called Cognitio Emergens (CE) that anyone can use to understand and guide human–AI research partnerships.

Think of it like designing a playbook for a new kind of sports team where a human and an AI are learning to pass, shoot, and strategize together as the season goes on.

4) What the paper finds and why it matters

The framework has three main parts. Each part explains something important about how human–AI science teams work and grow.

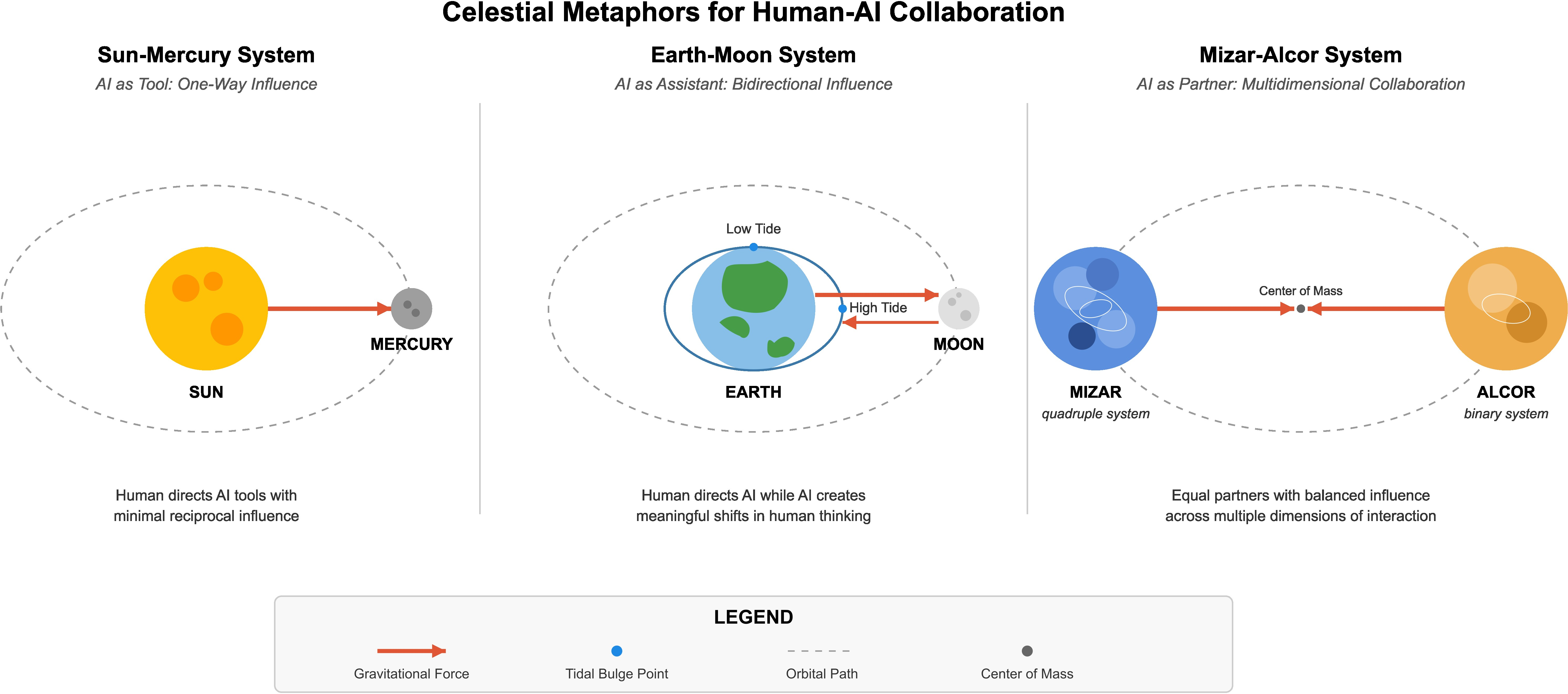

First: Agency Configurations (who leads, who follows, and how that changes)

- Directed: The human is the driver; AI is like a powerful GPS that follows instructions. The human sets the goals and double-checks everything.

- Contributory: AI becomes a teammate who can point out patterns or suggest ideas the human didn’t ask for. The human still decides what counts as good.

- Partnership: Human and AI are co-creators. Their ideas feed into each other so tightly that you can’t easily separate who did what. Together they make insights neither could make alone.

Why this matters: Teams don’t stay in one mode forever. They move back and forth depending on the task—exploring new ideas, checking results, or publishing. Knowing which mode you’re in helps you pick the right habits (like how much to explain, test, or slow down).
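To make “moving back and forth” concrete, here is a small sketch. It assumes a team keeps a log of which agency configuration each working session used; the log format and the function names `compress_runs`, `count_transitions`, and `is_oscillating` are hypothetical, not from the paper. The code counts mode-to-mode switches and flags whether the team ever returned to a mode it had already left, which is the oscillation the framework contrasts with a one-way progression.

```python
from collections import Counter

# The three agency configurations named by the CE framework.
MODES = ("Directed", "Contributory", "Partnership")


def compress_runs(session_modes):
    """Collapse consecutive sessions in the same mode into one entry."""
    runs = []
    for mode in session_modes:
        if mode not in MODES:
            raise ValueError(f"unknown mode: {mode}")
        if not runs or runs[-1] != mode:
            runs.append(mode)
    return runs


def count_transitions(session_modes):
    """Count (from_mode, to_mode) switches in an ordered session log."""
    runs = compress_runs(session_modes)
    return Counter(zip(runs, runs[1:]))


def is_oscillating(session_modes):
    """True if the team came back to a mode it had already left."""
    runs = compress_runs(session_modes)
    return len(runs) != len(set(runs))


# Hypothetical session log for one project.
log = ["Directed", "Contributory", "Contributory", "Partnership", "Directed"]
print(count_transitions(log))
print(is_oscillating(log))  # True: the team returned to Directed mode
```

A strictly linear log (Directed, then Contributory, then Partnership) returns `False` from `is_oscillating`, so the check separates oscillation from simple step-by-step progression.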

Second: Epistemic Dimensions (the six teamwork abilities that can emerge)

These are grouped into three “axes.” You can think of them as the skills a great human–AI team can build over time.

Discovery (finding and making sense of new ideas)

- Divergent Intelligence: the team’s power to generate surprising, useful ideas and hypotheses, not just obvious ones.

- Interpretive Intelligence: the team’s power to explain the AI’s reasoning in human-friendly ways, together with the AI’s ability to adapt to the human’s style and standards.

Integration (connecting and organizing knowledge)

- Connective Intelligence: spotting meaningful links across fields or data types (like connecting biology and economics).

- Synthesis Intelligence: building clear, combined explanations or models from many pieces of information.

Projection (looking ahead and judging what matters)

- Anticipatory Intelligence: exploring “what if” futures and planning for different scenarios.

- Axiological Intelligence (“axiology” = values): deciding what counts as valuable knowledge and possibly redefining those values together (for example, not just “Is it statistically significant?” but also “Is it useful for society?”).

Why this matters: These six skills don’t grow evenly. A team might be great at idea generation (divergent) but weak at making those ideas understandable (interpretive). The authors call the overall pattern your “capability signature.” Seeing your signature helps you know where to improve next.
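A capability signature can be sketched as a simple data structure. The example below assumes each of the six dimensions has already been rated on a 0 to 5 scale (for instance, from a team survey); the scale, the ratings, and the function names are illustrative assumptions, not the paper’s own metric. It normalizes the ratings, reports the weakest and strongest dimensions, and also computes per-axis averages to show how averaging can hide an imbalance.

```python
# The six Epistemic Dimensions, grouped by axis as in the CE framework.
AXES = {
    "Discovery": ("Divergent", "Interpretive"),
    "Integration": ("Connective", "Synthesis"),
    "Projection": ("Anticipatory", "Axiological"),
}
DIMENSIONS = tuple(d for dims in AXES.values() for d in dims)


def capability_signature(ratings, scale_max=5.0):
    """Normalize raw ratings (0..scale_max) for all six dimensions to 0..1."""
    missing = set(DIMENSIONS) - set(ratings)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return {d: ratings[d] / scale_max for d in DIMENSIONS}


def imbalance(signature):
    """Return (weakest, strongest, spread) across the six dimensions."""
    weakest = min(signature, key=signature.get)
    strongest = max(signature, key=signature.get)
    return weakest, strongest, signature[strongest] - signature[weakest]


def axis_means(signature):
    """Average the two dimensions on each axis."""
    return {axis: sum(signature[d] for d in dims) / len(dims)
            for axis, dims in AXES.items()}


# Hypothetical team: strong idea generation, weak interpretability.
ratings = {"Divergent": 5, "Interpretive": 2, "Connective": 4,
           "Synthesis": 3, "Anticipatory": 3, "Axiological": 4}
sig = capability_signature(ratings)
print(imbalance(sig))   # weakest is Interpretive, strongest is Divergent
print(axis_means(sig))  # every axis averages about 0.7
```

With these made-up ratings, all three axis averages come out at about 0.7 even though the gap between the strongest and weakest dimension is 0.6, which illustrates why a signature keeps all six dimensions instead of collapsing them into one score.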

Third: Partnership Dynamics (what pushes teams forward or off track)

- Generative forces: what sparks breakthroughs (like strong idea exchange and good timing).

- Balancing forces: how teams handle tensions (speed vs. safety, novelty vs. standards).

- Risk forces: what can go wrong. The big one is epistemic alienation—when humans accept results they can’t really interpret. You “own” the paper, but not the understanding. That’s dangerous for science.

Why this matters: If you know these dynamics, you can design better teamwork rules, review processes, and training so that humans stay meaningfully in charge of understanding, not just rubber-stamping AI outputs.
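One way to keep humans “meaningfully in charge of understanding” is a stop-the-line check before an AI-assisted result is accepted. The sketch below is hypothetical: the three criteria, the 0 to 1 explanation score, and the 0.7 threshold are assumptions chosen for illustration, loosely following the interpretability thresholds, provenance capture, and escalation protocols listed among the practical applications.

```python
from dataclasses import dataclass


@dataclass
class ResultReview:
    result_id: str
    explanation_score: float     # hypothetical 0..1 rating of the researcher's own explanation
    provenance_recorded: bool    # were data, model version, and prompts captured?
    independently_checked: bool  # was the result tested outside the AI pipeline?


def stop_the_line(review, min_explanation=0.7):
    """Return ("accept", []) or ("escalate", reasons) for one AI-assisted result."""
    reasons = []
    if review.explanation_score < min_explanation:
        reasons.append("researcher cannot adequately explain the result")
    if not review.provenance_recorded:
        reasons.append("provenance not recorded")
    if not review.independently_checked:
        reasons.append("no independent check")
    return ("escalate", reasons) if reasons else ("accept", [])


print(stop_the_line(ResultReview("R-12", 0.9, True, True)))   # ('accept', [])
print(stop_the_line(ResultReview("R-13", 0.4, True, False)))  # escalates with two reasons
```

Returning the reasons, not just a yes/no, is a deliberate choice in this sketch: an escalation then tells the reviewer what is missing instead of inviting a rubber stamp.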

What’s new compared to earlier approaches

- Many past models froze roles (tool vs. user) or focused on short-term scores. CE treats partnerships as living systems that change over time.

- Instead of one “accuracy” number, CE maps six abilities that grow differently and must be balanced.

- CE highlights values and institutions: who gets to decide what counts as “good science,” and how do rules and incentives need to adapt?

5) What this could change in the real world

If labs, universities, and funders use this framework, they can:

- Help research teams spot skill gaps (for example, strong idea generation but weak interpretability) and fix them with training, tools, and clearer standards.

- Build governance that fits the partnership mode (Directed vs. Contributory vs. Partnership), so checks and reviews are strict where needed and flexible where helpful.

- Update how we judge research—adding measures for clarity, synthesis, and future planning, not just speed or single-score accuracy.

- Prevent epistemic alienation by requiring understandable explanations, shared vocabularies, and human-centered validation before results are accepted.

Bottom line: The paper argues that the future of science isn’t humans alone or AI alone. It’s careful, evolving partnerships where each side makes the other better. The Cognitio Emergens framework gives people a shared map to build those partnerships safely and powerfully—so we can discover more, understand better, and make smarter choices about what knowledge truly matters.

Practical Applications

Immediate Applications

Below are concrete, deployable use cases that operationalize the CE framework’s agency configurations, epistemic dimensions, and partnership dynamics across sectors.

- CE capability-signature audit for teams
  - Sectors: academia, pharma/biotech, materials, software.
  - What: Rapid assessment of the six Epistemic Dimensions (Divergent, Interpretive, Connective, Synthesis, Anticipatory, Axiological) to produce a radar chart of strengths and imbalances; use it to target interventions.
  - Tools/workflows: Lightweight survey + log analysis; “CE Radar” dashboard; retrospective workshop.
  - Dependencies/assumptions: Access to project artefacts (prompts, outputs), staff time, basic analytics.

- Agency-configuration playbooks by project phase
  - Sectors: research labs, software engineering, clinical AI validation.
  - What: Explicitly define when to use Directed, Contributory, or Partnership modes for exploration, synthesis, and verification; include “gates” for high-stakes phases.
  - Tools/workflows: SOPs, decision checklists, human-in-the-loop approval steps.
  - Dependencies/assumptions: Governance buy-in; staff training; risk taxonomy.

- Interpretability layer for AI outputs (boosting Interpretive Intelligence)
  - Sectors: healthcare, finance, public sector.
  - What: Require counterfactuals, confidence bands, provenance, and domain-tailored rationales for each AI recommendation.
  - Tools/workflows: LIME/SHAP, causal counterfactuals, LLM-based narrative rationales, W3C PROV lineage.
  - Dependencies/assumptions: Model access or explainability surrogates; privacy/compliance constraints.

- Contributory suggestion log and triage
  - Sectors: R&D, QA/manufacturing, SRE/operations.
  - What: Systematically capture and review AI-initiated hypotheses and anomalies; track acceptance rate and rationale.
  - Tools/workflows: ELN/Jira/Git integration; vector DB for hypothesis retrieval; weekly triage meetings.
  - Dependencies/assumptions: Stable logging; role assignment for review; IP policy clarity.

- Enterprise GraphRAG for Connective Intelligence
  - Sectors: pharma (drug repurposing), engineering, consulting.
  - What: Build a knowledge graph across documents, data, and code to surface cross-silo links and analogies.
  - Tools/workflows: GraphRAG/knowledge-graph platforms; RAG pipelines; relevance feedback loops.
  - Dependencies/assumptions: Data integration, permissions, metadata quality.

- AI-assisted synthesis workshops
  - Sectors: policy think tanks, climate programs, product strategy.
  - What: Facilitate sessions where AI assembles multi-modal evidence into draft integrative models; humans refine and validate (Synthesis Intelligence).
  - Tools/workflows: LLMs + retrieval; structured debate; versioned model canvases.
  - Dependencies/assumptions: Curated corpora; facilitator expertise; procedures for handling disputed evidence.

- Anticipatory scenario labs
  - Sectors: energy planning, financial risk, municipal planning.
  - What: Generate multi-scenario roadmaps, define early indicators, and run pre-mortems on critical paths (Anticipatory Intelligence).
  - Tools/workflows: System dynamics/simulation; LLM scenario generators; indicator dashboards.
  - Dependencies/assumptions: Time-series and causal data; domain subject-matter experts (SMEs); model validation.

- Axiology reviews to align “what counts”
  - Sectors: journal policy, R&D portfolio management, education assessment.
  - What: Periodically review evaluative criteria (e.g., statistical significance vs. effect size vs. societal impact) and update rubrics accordingly (Axiological Intelligence).
  - Tools/workflows: Value-mapping workshops; decision logs; rubric versioning.
  - Dependencies/assumptions: Leadership support; stakeholder representation.

- Epistemic alienation safeguards
  - Sectors: regulated industries (healthcare, aviation, finance).
  - What: Set interpretability thresholds, capture provenance, and define escalation protocols for cases where researchers cannot meaningfully explain results they have accepted.
  - Tools/workflows: “Stop-the-line” criteria; model cards and datasheets; independent review panels.
  - Dependencies/assumptions: Clear criteria for “explainability”; audit capacity.

- Role and cadence design for partnership dynamics
  - Sectors: cross-sector.
  - What: Appoint a “Partnership Steward” to monitor configuration oscillations, dimensional imbalances, and risk dynamics; institute monthly CE retrospectives.
  - Tools/workflows: Maturity model; CE scorecards; playbook updates.
  - Dependencies/assumptions: Dedicated time/budget; change-management support.

- CE-informed pedagogy and coursework
  - Sectors: higher education, corporate L&D.
  - What: Structure assignments so students switch between agency modes; assess work across CE dimensions; include reflection exercises to reduce alienation.
  - Tools/workflows: LMS rubrics; prompt journals; peer critique.
  - Dependencies/assumptions: Academic policy alignment; instructor training.

- Personal CE workflow for daily decisions
  - Sectors: daily life (finance, career, learning).
  - What: Use Directed mode for fact-finding, Contributory for brainstorming, and Partnership for complex planning; keep a rationale journal and a scenario playbook.
  - Tools/workflows: Note-taking apps; templated prompts; periodic retrospectives.
  - Dependencies/assumptions: User discipline; data privacy awareness.
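The contributory suggestion log and triage application can be sketched as a small data structure. This is an illustration under stated assumptions, not a tool the paper specifies: the field names, the three statuses, the rule that every triage decision needs a written rationale, and the example entries are all hypothetical. The acceptance rate is computed over decided suggestions only, so items still awaiting review do not drag the rate down.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Suggestion:
    text: str
    raised_on: date
    status: str = "pending"  # "pending", "accepted", or "rejected"
    rationale: str = ""


@dataclass
class SuggestionLog:
    items: list = field(default_factory=list)

    def add(self, text, raised_on):
        """Record an AI-initiated hypothesis or anomaly for later triage."""
        suggestion = Suggestion(text, raised_on)
        self.items.append(suggestion)
        return suggestion

    def triage(self, suggestion, accepted, rationale):
        """Accept or reject a suggestion; a non-empty rationale is mandatory."""
        if not rationale.strip():
            raise ValueError("triage decisions need a rationale")
        suggestion.status = "accepted" if accepted else "rejected"
        suggestion.rationale = rationale

    def acceptance_rate(self):
        """Share of decided suggestions that were accepted, or None if none are decided."""
        decided = [s for s in self.items if s.status != "pending"]
        if not decided:
            return None
        return sum(s.status == "accepted" for s in decided) / len(decided)


# Made-up entries for illustration.
log = SuggestionLog()
a = log.add("Batch 7 yield drop correlates with humidity", date(2025, 3, 3))
b = log.add("Re-run assay with alternate buffer", date(2025, 3, 3))
log.add("Possible sensor drift on line 2", date(2025, 3, 4))
log.triage(a, accepted=True, rationale="Matches maintenance records")
log.triage(b, accepted=False, rationale="Buffer already validated")
print(log.acceptance_rate())  # 0.5: one accepted, one rejected, one pending
```

Requiring a rationale for every decision is the design choice that keeps the log useful for later review: the acceptance rate says how often AI suggestions were taken up, and the rationales record why.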

Long-Term Applications

These use cases require further research, scaling, standard-setting, or technology maturation before broad deployment.

- CE-native lab operating system
  - Sectors: academia, pharma/biotech, materials.
  - What: An integrated platform that tracks agency shifts, logs epistemic contributions, scores CE dimensions, recommends interventions, and ensures provenance.
  - Tools/products: “CE LabOS” integrating ELN, ML ops, knowledge graphs, and EAI (epistemic AI) metrics.
  - Dependencies/assumptions: Vendor ecosystem, interoperability standards, secure data sharing.

- Peer-review and funding frameworks based on capability signatures
  - Sectors: academia, government R&D agencies.
  - What: Require submissions to report CE capability signatures, agency configurations, value negotiation, and provenance; adjust evaluation criteria beyond scalar metrics.
  - Tools/products: Submission templates; reviewer training; CE-aware scoring systems.
  - Dependencies/assumptions: Community consensus; policy changes at journals and funders.

- Standards for epistemic provenance, credit, and IP in human–AI co-creation
  - Sectors: publishing, legal/IP, standards bodies.
  - What: Protocols for contribution tracking, authorship attribution, and IP ownership when insights emerge from co-evolutionary loops.
  - Tools/products: Digital provenance ledgers; contribution taxonomies; updated IP clauses.
  - Dependencies/assumptions: Legal reform; cross-institutional adoption.

- Value-aligned “AI Co-Scientist” agents with alienation guards
  - Sectors: R&D-intensive industries.
  - What: Agents that learn team-specific axiological profiles, adapt their outputs to strengthen Interpretive Intelligence, and detect and mitigate epistemic alienation.
  - Tools/products: Preference-learning modules; explainability-by-design models; HCI feedback loops.
  - Dependencies/assumptions: Advances in value modeling, robust interpretability, safe RL.

- CE certification and micro-credentials
  - Sectors: education, HR/professional development.
  - What: Training and certification on CE dimensions, agency management, and triple-loop learning; role pathways (Partnership Steward, Epistemic Architect).
  - Tools/products: MOOC programs; assessments; industry-recognized badges.
  - Dependencies/assumptions: Accreditation partnerships; employer demand.

- Regulatory sandboxes for partnership agency in high-stakes domains
  - Sectors: healthcare, finance, critical infrastructure.
  - What: Controlled pilots that test Contributory and Partnership modes with tiered oversight and measurement across CE dimensions.
  - Tools/products: Sandbox governance charters; risk-tiering frameworks; public reporting.
  - Dependencies/assumptions: Regulator engagement; liability frameworks.

- Federated knowledge commons with CE governance
  - Sectors: climate, public health, disaster response.
  - What: Cross-institutional GraphRAG and synthesis pipelines with shared axiological charters; privacy-preserving data federation.
  - Tools/products: Federated KG infrastructure; policy compacts; open benchmarks.
  - Dependencies/assumptions: Data-sharing agreements; secure computation (e.g., privacy-enhancing technologies, PETs).

- Organizational design patterns for CE
  - Sectors: large enterprises, research consortia.
  - What: Modular “cognitive interfaces” between teams and AI systems; new governance roles; incentive schemes aligned with Axiological Intelligence.
  - Tools/products: Org blueprints; role descriptions; incentive models.
  - Dependencies/assumptions: Cultural change; metrics aligned with long-term learning.

- Automated detection and mitigation of epistemic alienation
  - Sectors: HCI, safety-critical systems.
  - What: Interfaces that sense understanding gaps (via interaction signals or psychometrics) and respond by adapting explanations or switching agency mode.
  - Tools/products: UX telemetry; adaptive explanation engines; “confidence mirroring.”
  - Dependencies/assumptions: Validated proxies for understanding; privacy safeguards; ethics oversight.

- Consumer “foresight companions”
  - Sectors: personal finance, education, wellness.
  - What: AI assistants that co-create scenarios, reflect user values, and make trade-offs transparent for major life decisions.
  - Tools/products: Mobile apps integrating scenario engines, value elicitation, and explainable recommendations.
  - Dependencies/assumptions: Reliable personal-data integration; regulatory compliance.

- CE-guided robotics and autonomy
  - Sectors: manufacturing, logistics, healthcare robotics.
  - What: Human–robot teaming where agency oscillates by task; interpretable control policies; collaborative hypothesis testing in physical environments.
  - Tools/products: Explainable control stacks; shared autonomy interfaces; safety toolkits.
  - Dependencies/assumptions: Advances in explainable RL/control; certification standards.

- CE-driven energy and infrastructure planning
  - Sectors: energy, transportation, urban planning.
  - What: Integrated planning that synthesizes technical models with community values; scenario portfolios with early-warning indicators.
  - Tools/products: Multimodal planning platforms; participatory modeling; indicator dashboards.
  - Dependencies/assumptions: High-quality data; participatory governance; long-term funding.
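The digital provenance ledgers named under the standards application can be illustrated with hash chaining, a common tamper-evidence technique. The sketch below is a minimal, hypothetical example rather than any standard: each contribution record (who contributed, what kind of contribution, which artifact) is hashed with SHA-256 together with the previous record’s hash, so editing or reordering an earlier record breaks verification. The contributor labels and record fields are assumptions; a real system would also need identities, timestamps, and an agreed contribution taxonomy, for example one aligned with W3C PROV.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(entry, prev_hash):
    """SHA-256 over a canonical JSON encoding of the entry plus the previous hash."""
    payload = json.dumps({"entry": entry, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ProvenanceLedger:
    def __init__(self):
        self.records = []  # list of (entry, prev_hash, hash) tuples

    def append(self, contributor, kind, artifact):
        """Add one contribution record chained to the previous one."""
        entry = {"contributor": contributor, "kind": kind, "artifact": artifact}
        prev_hash = self.records[-1][2] if self.records else GENESIS
        digest = record_hash(entry, prev_hash)
        self.records.append((entry, prev_hash, digest))
        return digest

    def verify(self):
        """Recompute the chain; any edited or reordered record makes this False."""
        prev_hash = GENESIS
        for entry, stored_prev, stored_hash in self.records:
            if stored_prev != prev_hash or record_hash(entry, prev_hash) != stored_hash:
                return False
            prev_hash = stored_hash
        return True


ledger = ProvenanceLedger()
ledger.append("human:researcher-1", "hypothesis", "H1: binding-site mutation lowers affinity")
ledger.append("ai:assistant", "counterexample", "structure predicted for variant V2")
print(ledger.verify())  # True
ledger.records[0][0]["contributor"] = "ai:assistant"  # simulate after-the-fact tampering
print(ledger.verify())  # False
```

Hash chaining only makes tampering detectable; it does not decide who deserves credit, which is the part the application leaves to contribution taxonomies and legal reform.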

Notes on feasibility across applications:

- Many immediate applications depend on access to interaction logs, curated corpora, and basic explainability/tooling; organizational willingness to adjust workflows is critical.

- Long-term applications require standardization (provenance, credit), legal/policy updates (IP/authorship), mature interpretability/value-learning methods, and cross-institutional coordination.

- Across sectors, managing epistemic alienation and aligning evaluative criteria (Axiological Intelligence) are recurring dependencies that determine sustainable adoption.

Glossary

- Agency Configurations: A dynamic model describing how epistemic authority is distributed between humans and AI across different collaboration modes. "Agency Configurations provide a dynamic model of how epistemic authority distributes between humans and AI, capturing the fluid transitions between Directed, Contributory, and Partnership modes that characterize mature collaborations."

- AI-facing human modeling: The partnership’s capability to adapt AI outputs to researchers’ epistemic preferences and contexts. "AI-facing human modeling represents the partnership's capacity to align AI outputs with researchers' epistemic preferences and disciplinary contexts."

- Ambidextrous organizations: Organizational designs that balance exploration and exploitation to facilitate innovation. "and ambidextrous organizations (O'Reilly & Tushman, 2013) highlights how architectural choices influence knowledge flows and the balance between exploration and exploitation."

- Anticipatory Intelligence: The capability to explore and reason about alternative future scenarios and trajectories. "Anticipatory Intelligence represents the partnership's capacity to explore alternative possible futures for phenomena under investigation."

- Autopoiesis theory: A systems theory explaining how coupled systems generate emergent properties through recursive interactions. "Autopoiesis theory (Maturana & Varela, 1980) provides a foundation for understanding how recursive interaction between distinct cognitive systems generates emergent properties through structural coupling."

- Axiological Intelligence: The capability to negotiate and transform what is considered significant or valuable knowledge. "Axiological Intelligence represents the partnership's capacity to negotiate and potentially transform what constitutes significant or valuable knowledge within the research domain."

- Capability signatures: Characteristic patterns of strengths and weaknesses across epistemic dimensions within a partnership. "creating distinctive "capability signatures" that guide development;"

- Cognitive asymmetry: A mismatch between human understanding and AI-generated knowledge that can undermine collaboration. "weak interpretability creates cognitive asymmetry between human understanding and AI-generated knowledge."

- Connective Intelligence: The capability to discover meaningful relationships across domains, modalities, and disciplines. "Connective Intelligence represents the partnership's capacity to identify meaningful relationships across disciplinary boundaries, data modalities, and knowledge domains."

- Contributory Agency: A configuration where AI acts as an active epistemic contributor influencing inquiry. "In Contributory Agency configurations, AI functions as an active epistemic contributor, capable of initiating novel questions, suggesting unanticipated connections, or proposing alternative methodological approaches."

- Counterfactual explanations: Interpretability techniques that show how outputs would change under different inputs. "generating counterfactual explanations (e.g., showing how the AI's prediction would change if certain input data were different)"

- Directed Agency: A configuration where AI operates as a tool within human-defined boundaries and oversight. "In Directed Agency configurations, AI functions as a tool operating within human-defined epistemic boundaries."

- Divergent Intelligence: The capability to generate novel, productive hypotheses and research directions that push beyond paradigms. "Divergent Intelligence represents the partnership's capacity to generate novel, epistemically productive hypotheses, explanations, and research directions that transcend established paradigms."

- Double-loop learning: Learning that revisits and revises underlying assumptions, not just actions or errors. "Contributory Agency typically fosters double-loop learning patterns (Argyris, 1976)"

- Epistemic alienation: A state where researchers lose interpretive control over knowledge they endorse. "particularly the risk of epistemic alienation where researchers lose interpretive ownership of knowledge claims."

- Epistemic authority: The locus of decision-making power over what counts as knowledge and why. "The first component of the Cognitio Emergens framework addresses how epistemic authority is distributed between humans and AI systems."

- Epistemic communities: Groups organized around shared methods and standards for producing knowledge. "traditional human-centered epistemic communities"

- Epistemic Dimensions: Six collaborative capabilities organized along Discovery, Integration, and Projection axes. "Epistemic Dimensions identify six specific capabilities that emerge through sustained interaction, organized along Discovery, Integration, and Projection axes."

- Epistemic drift: The risk that novel ideas lose, or never acquire, interpretive anchoring in established understanding. "risks include “epistemic drift” where novel ideas lack interpretive anchoring."

- Epistemic horizon: The scope of conceivable explanations and research possibilities a partnership can entertain. "The key outcome is the expansion of the epistemic horizon."

- Epistemic partner: An AI collaborator that contributes to and reshapes scientific understanding, beyond tool use. "engaging with an epistemic partner that reshaped how they conceptualized structure-function relationships."

- Epistemic transparency: The clarity with which AI reasoning and contributions can be understood within the partnership. "Interpretive Intelligence represents the degree of mutual intelligibility and epistemic transparency between humans and AI within the partnership."

- Era of experience: A proposed paradigm where AI agents learn through continuous experience using self-generated world models. "Even promising directions like Silver and Sutton's (2025) “era of experience” (Silver & Sutton, 2025) remain predominantly agent-centric,"

- Functional interpretability: Emphasizing testable outputs over full access to internal model mechanisms. "Directed Agency relies on functional interpretability (testable outputs) rather than full model interpretability (exposed inner logic)."

- GraphRAG: A system that converts text into structured knowledge graphs to aid synthesis. "Microsoft's GraphRAG, which transforms textual data into structured knowledge representations, exemplifies an AI capability supporting Synthesis Intelligence."

- Human-facing clarity: Making AI inferences understandable and traceable for human researchers. "Human-facing clarity represents the partnership's capacity to make AI inferences understandable and traceable for human researchers."

- Interpretability paradox: The tension between using AI effectively and fully understanding its internal logic. "introducing what we term the interpretability paradox:"

- Interpretive Intelligence: The capability to maintain mutual intelligibility and transparency between human and AI. "Interpretive Intelligence represents the degree of mutual intelligibility and epistemic transparency between humans and AI within the partnership."

- Knowledge co-creation: Joint production of scientific understanding through human–AI interaction. "how knowledge co-creation emerges through continuous negotiation of roles, values, and organizational structures."

- Liminal zones: Transitional spaces between configurations where significant epistemic shifts occur. "These interfaces are not sharp boundaries but liminal zones with distinctive characteristics."

- Organizational modularity theory: A theory explaining how modular structures and interfaces shape innovation and knowledge flow. "Baldwin and Clark's organizational modularity theory (Baldwin & Clark, 2000) reveals how well-defined interfaces between cognitive modules can nurture innovation while warning against rigid compartmentalization."

- Partnership Agency: A configuration where human and AI form a unified epistemic system with emergent insights. "In Partnership Agency configurations, human and AI boundaries begin to dissolve into a unified epistemic system generating insights neither could produce independently."

- Partnership Dynamics: Forces that shape the evolution, opportunities, and risks of human–AI relationships. "Partnership Dynamics illuminate the generative opportunities and potential vulnerabilities that shape how these relationships evolve"

- Principal-agent dynamics: Power relationships where controlling stakeholders shape the goals and values of agents. "principal-agent dynamics and existing power structures may determine whose values ultimately guide these partnerships"

- Projection Axis: The set of dimensions concerned with exploring futures and evaluating significance. "The Projection Axis encompasses dimensions related to exploring future possibilities and evaluating their significance."

- Scalar reductivism: Reducing complex multidimensional epistemic work to simplified single scores. "They also suffer from scalar reductivism, collapsing complex, multidimensional epistemic labor into simplified scores."

- Single-loop learning: Learning focused on correcting errors within existing frameworks without revisiting assumptions. "Directed Agency typically operates within single-loop learning patterns (Argyris, 1976)"

- Social systems theory: A theory on how distinct communicative systems maintain boundaries while co-evolving. "Social systems theory (Luhmann, 1995) offers insights into how distinct communicative frameworks maintain boundaries while evolving through interaction."

- Sociotechnical systems perspective: An approach that jointly considers technological capabilities and social structures. "Many frameworks also fail to fully embrace a sociotechnical systems perspective, which would better articulate the mutual constitution of technological capabilities and social structures through iterative interaction (Lou et al., 2025; Watkins et al., 2025)."

- Structural coupling: The mechanism by which interacting systems co-adapt to produce emergent properties. "generates emergent properties through structural coupling."

- Synthesis Intelligence: The capability to integrate diverse inputs into coherent explanatory frameworks. "Synthesis Intelligence represents the partnership's ability to integrate diverse inputs into coherent explanatory frameworks that accommodate seemingly disparate findings."

- Temporal myopia: Short-sighted evaluation that overlooks long-term capability evolution. "The framework directly addresses temporal myopia, static-agent assumptions, reductive success criteria, absence of value negotiation, and under-theorized organizational contexts"

- Triple-loop learning: Learning that transforms entire epistemic frameworks and value systems. "Partnership Agency typically manifests triple-loop learning patterns (Argyris, 1976; Tosey et al., 2012)"

- Value co-construction: The collaborative development of evaluative criteria and epistemic values within the partnership. "Value co-construction"
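The counterfactual explanations entry can be made concrete for the simplest case, a linear scoring model. The sketch below works only under that assumption and is not a general method (nonlinear models need dedicated approaches such as the causal counterfactual tools mentioned among the applications). Holding all other inputs fixed, it solves for the value of one feature at which the score would reach the decision threshold. The weights, bias, threshold, and function names are made-up for illustration.

```python
def linear_score(weights, bias, x):
    """Score of a linear model: w . x + b."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def single_feature_counterfactual(weights, bias, x, feature, threshold=0.0):
    """Value of x[feature] that puts the score exactly on the threshold.

    All other features are held fixed. Returns None when the feature has
    zero weight and therefore cannot change the decision.
    """
    w = weights[feature]
    if w == 0:
        return None
    rest = linear_score(weights, bias, x) - w * x[feature]
    return (threshold - rest) / w


# Hypothetical model and input; a score above 0.0 means "positive".
weights, bias = [2.0, -1.0], -1.0
x = [1.0, 0.5]
print(linear_score(weights, bias, x))                      # 0.5
print(single_feature_counterfactual(weights, bias, x, 0))  # 0.75
print(single_feature_counterfactual(weights, bias, x, 1))  # 1.0
```

Read as an explanation: the prediction would sit exactly on the decision boundary if feature 0 fell from 1.0 to 0.75, or if feature 1 rose from 0.5 to 1.0; moving further past either value would flip the outcome.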
