- The paper introduces General User Models that consolidate computer use data into confidence-weighted user propositions.

- It employs a four-module pipeline—Propose, Retrieve, Revise, and Audit—to generate, update, and safeguard user inferences.

- Evaluation shows robust accuracy (up to 100% for high-confidence propositions) and effective privacy auditing in real-world deployments.

Creating General User Models from Computer Use: An Expert Analysis

Motivation and Problem Statement

The paper addresses a longstanding challenge in HCI and AI: constructing user models that are both general and actionable across diverse contexts, rather than being siloed within specific applications or domains. Existing user models are typically narrow, focusing on isolated preferences (e.g., music, shopping) or limited to single-application telemetry. This fragmentation precludes the realization of context-aware, proactive, and adaptive systems as envisioned in foundational HCI work. The authors propose General User Models (GUMs), which learn from unstructured, multimodal observations of user behavior to generate confidence-weighted, natural language propositions about the user's knowledge, beliefs, and preferences.

GUM Architecture and Abstractions

GUMs are instantiated as collections of confidence-weighted propositions, each representing an inference about the user derived from raw observations (e.g., screenshots, notifications, file system events). The architecture comprises four primary modules:

- Propose: Converts unstructured observations into candidate propositions with associated confidence scores and rationales.

- Retrieve: Indexes and retrieves contextually relevant propositions using a two-stage BM25 + LLM reranking pipeline, incorporating recency and diversity via MMR.

- Revise: Refines and updates existing propositions in light of new evidence, supporting both reinforcement and contradiction.

- Audit: Enforces privacy by leveraging contextual integrity, using the GUM itself to filter observations that violate user-specific privacy norms.

This pipeline enables continuous, online learning of user context, supporting both stable (long-term) and transient (moment-to-moment) inferences.

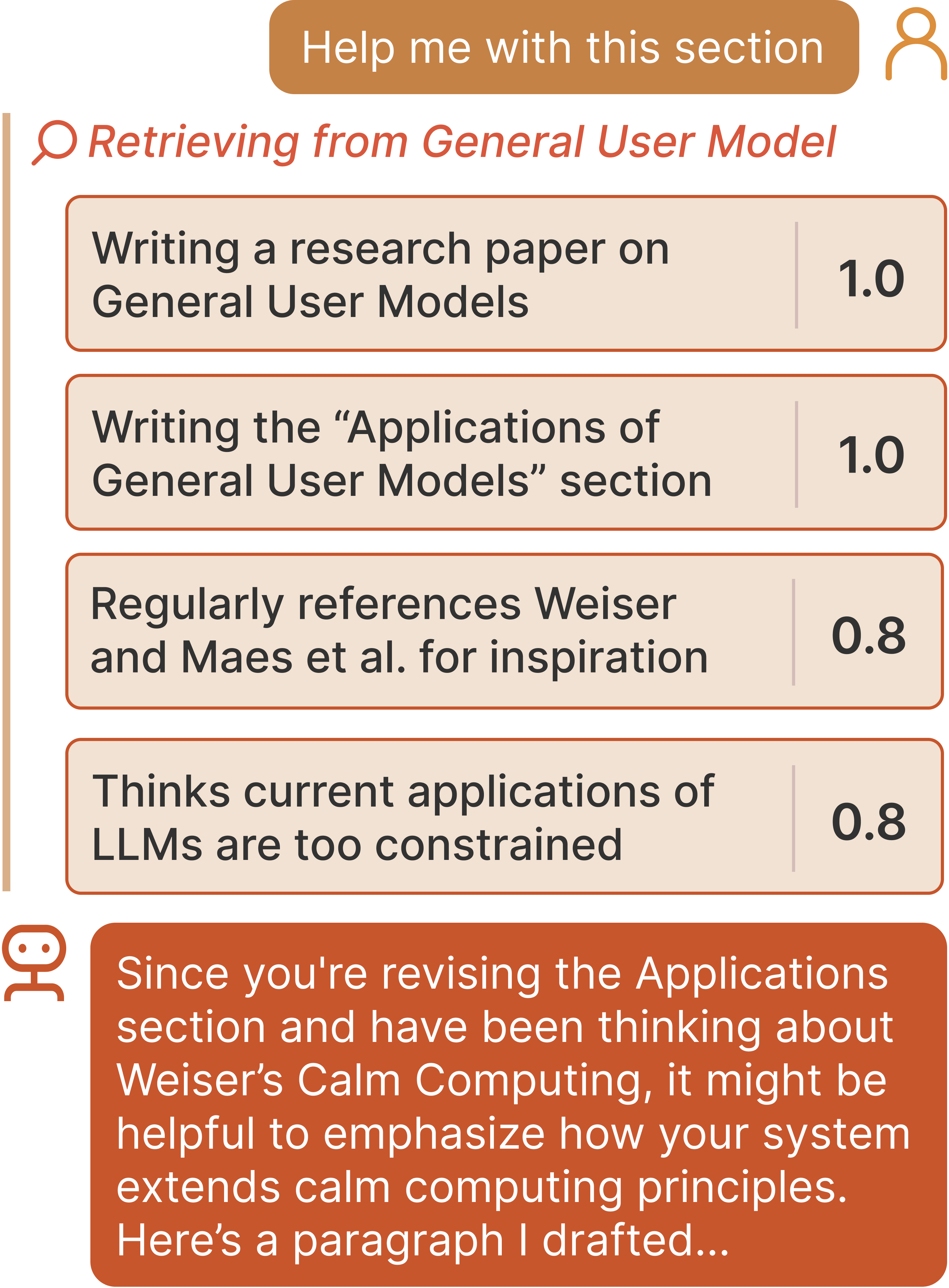

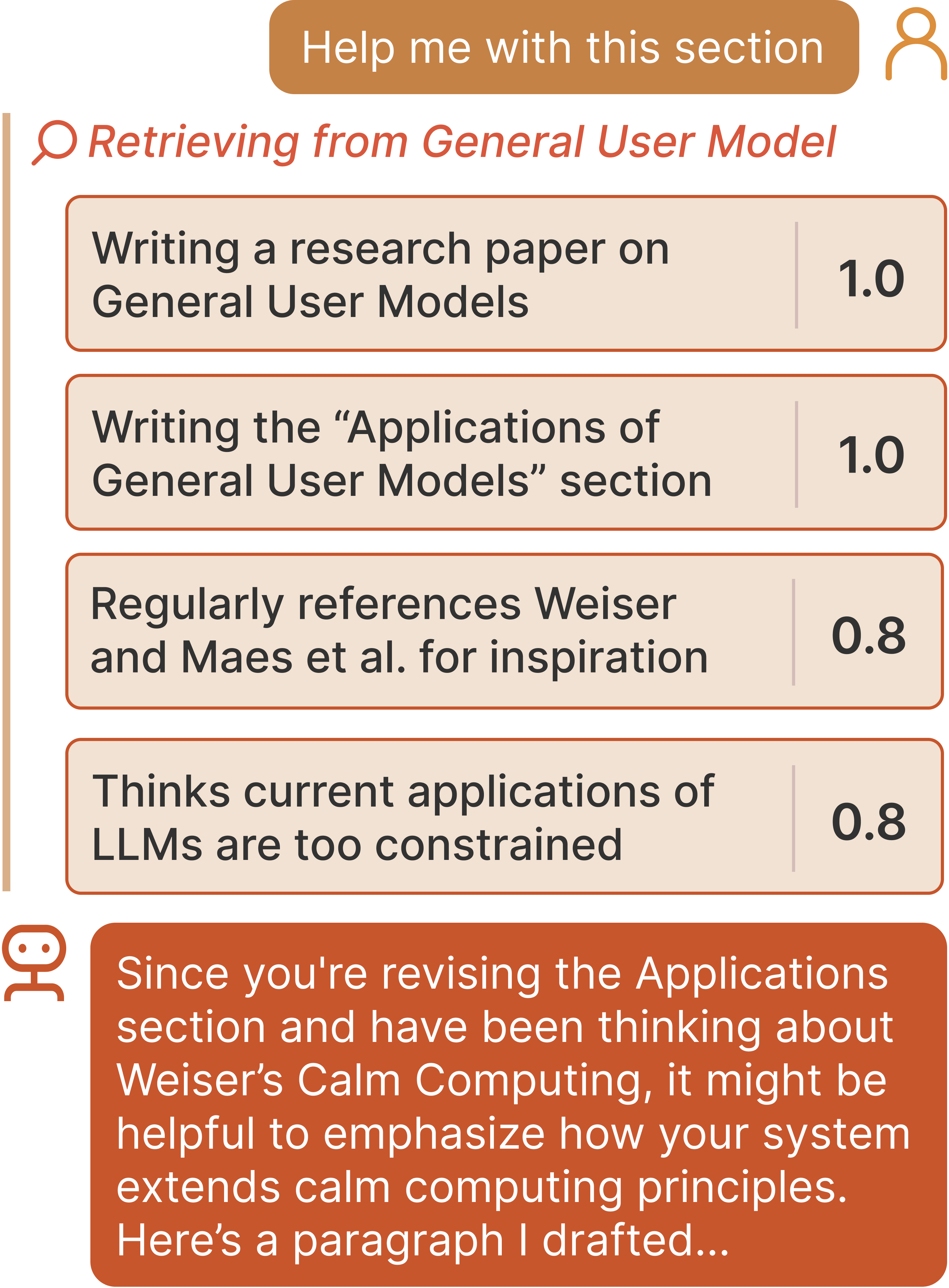

Figure 1: General User Models ground user queries in broader context by marshalling relevant prior interactions and observations, enabling highly contextualized responses.

Implementation Details

Observations and Propositions

Observations are any data that can be tokenized and processed by a VLM or LLM, including screenshots, text, and sensor data. Each observation is timestamped and source-tagged. Propositions are generated via prompted LLM inference, with explicit rationales and confidence scores (1-10, normalized to [0,1]). Decay scores are also generated to model proposition staleness, inspired by cognitive architectures.

Model Choices and Engineering

- Vision-LLM: Qwen 2.5 VL is used for screen transcription and multimodal understanding.

- LLM: Llama 3.3 70B is used for proposition generation, revision, and reranking.

- Retrieval: BM25 for initial candidate selection, followed by LLM-based reranking for semantic precision.

- Privacy: All inference is performed locally or on secure, user-controlled infrastructure; the Audit module uses the GUM to answer contextual integrity questions and block sensitive data.

API and Extensibility

GUM is implemented as a Python package, pip-installable, and exposes an interface for subscribing to observation streams, querying propositions, and managing proposition metadata. Observers can be extended to new modalities (audio, health data, etc.) with minimal engineering overhead.

Applications and System Integration

LLM Prompt Augmentation

GUMs can be used to augment LLM prompts with rich, user-specific context, closing the grounding gap that plagues current LLM-based assistants. For example, underspecified queries ("help me with this section") are automatically contextualized with relevant prior work, collaborators' feedback, and recent activities, yielding more relevant and actionable model outputs.

Context-Aware and Proactive Systems

GUMs enable a new class of applications that can query user context across all observed modalities. Examples include:

- Notification Filtering: OS-level notification managers can suppress irrelevant alerts and surface only those aligned with the user's current focus and priorities.

- Personalized Interface Agents: Agents can anticipate user needs and automate tasks (e.g., travel planning, document organization) without explicit user input.

- Proactive Assistants (Gumbo): The Gumbo system demonstrates end-to-end integration, ingesting screenshots to build a GUM, generating and ranking suggestions, and autonomously executing low-risk actions.

(Figure 2)

Figure 2: Gumbo assistant surfaces and executes suggestions based on the user's GUM, with interfaces for suggestion review and proposition management.

Evaluation

Technical Evaluation: Accuracy and Calibration

A controlled study with N=18 participants using email data demonstrates that GUMs generate accurate and well-calibrated propositions. Highly confident propositions (confidence=1.0) achieve 100% accuracy, and overall accuracy is 76.15% across all propositions. The full GUM architecture (with both Retrieve and Revise) outperforms ablations in user preference rankings and calibration (Brier score 0.17±0.03), with underconfidence being the dominant error mode—an advantageous property for user trust.

(Figure 3)

Figure 3: The GUM pipeline processes unstructured inputs, audits for privacy, constructs and revises propositions, and retrieves contextually relevant information.

Privacy Audit

The Audit module, evaluated on the same participant set, effectively blocks most privacy-violating propositions, with only 7/180 flagged as violations. Participants rated the contextual integrity responses highly (μ=6.06 on a 1-7 Likert scale). However, the system is susceptible to adversarial manipulation (e.g., spam emails injecting false propositions), highlighting the need for robust adversarial filtering.

End-to-End Evaluation: Gumbo Deployment

A longitudinal deployment with N=5 participants using Gumbo over 5 days confirms that GUMs remain accurate and calibrated in real-world, multimodal settings (accuracy 0.79±0.07, Brier 0.28±0.04). Participants found Gumbo's suggestions both immediately useful and capable of surfacing needs they had not explicitly articulated. Notably, 25% of suggestions were rated as strong or excellent, and two participants requested continued use post-study.

Limitations and Trade-offs

- Privacy vs. Utility: The more data GUM ingests, the more powerful its inferences, but this amplifies privacy risks. The Audit module mitigates but does not eliminate these risks.

- Generalization: GUMs are limited by the scope of observed modalities; activities outside the computer or on unobserved devices are not captured.

- Model Limitations: Current LLMs and VLMs are prone to hallucinations, misattributions, and context forgetting. The retrieval and revision pipeline compensates for these weaknesses, but future end-to-end models may subsume these steps.

- Resource Requirements: Running GUMs with large models (e.g., Llama 3.3 70B, Qwen 2.5 VL) requires significant compute (80GB H100 GPUs in the study), though quantization and model distillation are feasible paths for on-device deployment.

- Adversarial Manipulation: GUMs are vulnerable to prompt injection and adversarial data, necessitating further research on robust filtering and user-in-the-loop validation.

Implications and Future Directions

GUMs represent a significant step toward unified, cross-application user modeling, enabling both classic and novel HCI applications. The architecture is modular and extensible, supporting integration with future, more efficient multimodal models and additional sensor modalities. Key research directions include:

- On-device, privacy-preserving inference: Model distillation and quantization to enable local deployment without cloud dependencies.

- Adversarial robustness: Developing techniques for detecting and mitigating manipulation via malicious observations.

- Ethical safeguards: Ensuring user agency, transparency, and auditability in proposition generation and suggestion execution.

- Long-term adaptation: Investigating mechanisms for lifelong learning, user-driven proposition editing, and dynamic privacy norm modeling.

Conclusion

The GUM framework operationalizes a general, multimodal, and privacy-aware approach to user modeling, moving beyond application-specific silos. Empirical results demonstrate that GUMs can synthesize accurate, calibrated, and actionable inferences from unstructured user behavior, supporting both reactive and proactive system behaviors. While challenges remain in privacy, robustness, and resource efficiency, GUMs provide a foundation for the next generation of context-aware, adaptive, and user-aligned interactive systems.