- The paper finds that RL-trained VLMs achieve superior compositional reasoning compared to SFT, emphasizing explicit visual grounding.

- The study introduces ComPABench, a diagnostic framework evaluating cross-modal compositional generalization across visual and textual tasks.

- Enhanced techniques like RL-Ground significantly improve out-of-distribution performance by integrating unimodal reasoning into multimodal tasks.

Unveiling the Compositional Ability Gap in Vision-Language Reasoning Model

Introduction

Vision-language models (VLMs) are designed to integrate visual perception with language reasoning. Reinforcement learning (RL) strategies have proven effective at strengthening reasoning in large language models (LLMs), but whether they extend to compositional reasoning across modalities in VLMs remains underexplored. The paper investigates compositional generalization in VLMs through a systematic probing study built on ComPABench, a diagnostic framework that tests whether unimodal reasoning skills can be integrated into cross-modal, compositional tasks.

Methodology

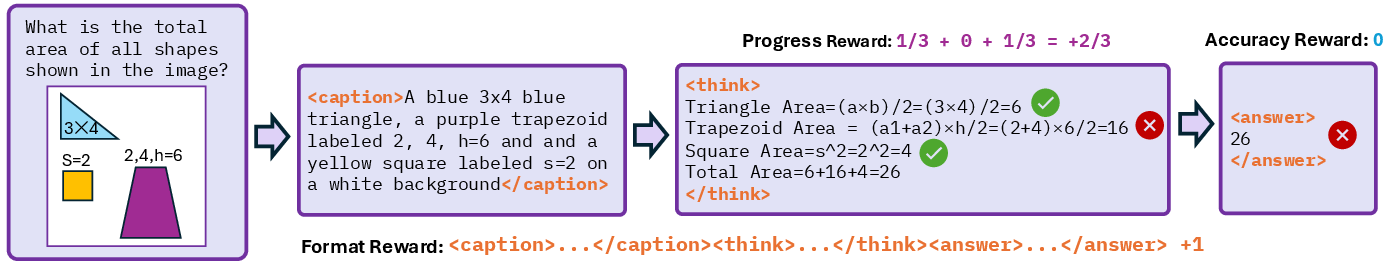

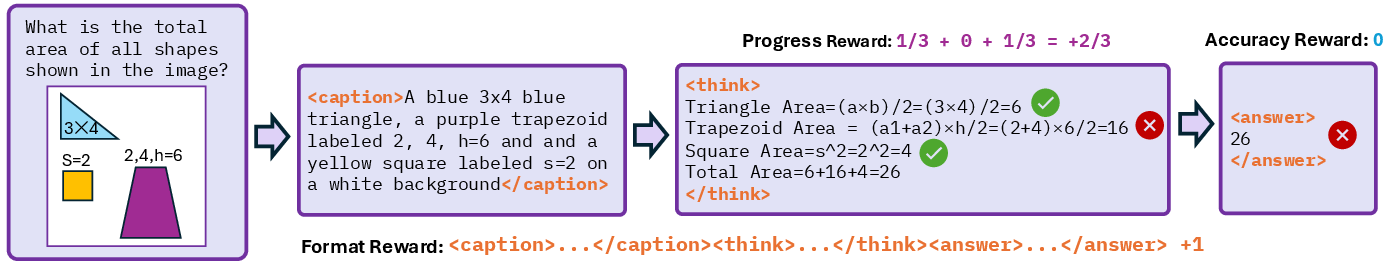

The authors present three core findings: (1) RL-trained VLMs exhibit better compositional generalization than those trained with supervised fine-tuning (SFT); (2) existing VLMs, despite performing well on isolated tasks, struggle with compositional generalization in cross-modal scenarios; and (3) having the model describe the visual content explicitly before reasoning, and rewarding progressive grounding, significantly enhances compositional reasoning. Together, these findings point to two critical components for improving compositionality in VLMs: visual-to-text alignment and accurate visual grounding.
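To make finding (3) concrete, the sketch below shows one way a reward could combine a describe-before-reasoning format check with partial credit for intermediate grounding. It is an illustration only, not the paper's reward: the tag names (`<describe>`, `<think>`, `<answer>`), the weights, and the function name `rl_ground_style_reward` are all assumptions introduced here.

```python
import re

# Hypothetical output layout: description first, then reasoning, then answer.
_PATTERN = re.compile(
    r"<describe>(.*?)</describe>\s*<think>(.*?)</think>\s*<answer>(.*?)</answer>",
    re.DOTALL,
)


def rl_ground_style_reward(output, gold_answer, gold_steps,
                           w_format=0.2, w_progress=0.3, w_answer=0.5):
    """Toy reward: format + progress + final answer (weights are assumed)."""
    match = _PATTERN.search(output)
    if match is None:
        # The required describe -> think -> answer order is missing.
        return 0.0
    description, reasoning, answer = (part.strip() for part in match.groups())

    # Format term: the model wrote a non-empty visual description first.
    format_score = 1.0 if description else 0.0

    # Progress term: fraction of known intermediate values that show up
    # as whole tokens in the reasoning (a crude stand-in for grounding).
    hits = sum(
        1 for step in gold_steps
        if re.search(rf"\b{re.escape(step)}\b", reasoning)
    )
    progress_score = hits / len(gold_steps) if gold_steps else 0.0

    # Answer term: exact string match on the final answer.
    answer_score = 1.0 if answer == gold_answer else 0.0

    return (w_format * format_score
            + w_progress * progress_score
            + w_answer * answer_score)
```

The progress term gives credit for correct intermediate results even when the final answer is wrong, which is the general idea behind rewarding progressive grounding; a real implementation would need a more robust matcher than token lookup.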

Experimental Framework

Benchmark Overview

ComPABench evaluates three dimensions of compositional generalization: cross-modal composition, cross-task reasoning, and out-of-distribution (OOD) variant handling, each with multimodal and unimodal variants. The framework systematically probes VLM capabilities under novel task objectives and distribution shifts. Specific tasks include calculating shape areas, identifying grid positions, and solving composite challenges that require integrating skills across modalities.
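As a rough illustration of what composing skills means here, the toy example below defines two isolated skills (computing a shape's area and locating a label in a grid) and a composite task that needs both. The data layout and the function names `shape_area`, `grid_position`, and `area_at` are hypothetical and are not ComPABench's actual format or task definitions.

```python
import math


def shape_area(shape):
    """Isolated skill 1: compute the area of a single described shape."""
    kind = shape["kind"]
    if kind == "rectangle":
        return shape["width"] * shape["height"]
    if kind == "triangle":
        return 0.5 * shape["base"] * shape["height"]
    if kind == "circle":
        return math.pi * shape["radius"] ** 2
    raise ValueError(f"unknown shape kind: {kind}")


def grid_position(grid, label):
    """Isolated skill 2: find the (row, col) of a labelled cell."""
    for r, row in enumerate(grid):
        for c, cell in enumerate(row):
            if cell == label:
                return (r, c)
    raise KeyError(label)


def area_at(grid, shapes, row, col):
    """Composite task: identify the shape at a cell, then compute its area."""
    label = grid[row][col]
    if label is None:
        raise ValueError("empty cell")
    return shape_area(shapes[label])


# Example instance (made up for illustration).
grid = [["A", None],
        [None, "B"]]
shapes = {
    "A": {"kind": "rectangle", "width": 3, "height": 4},
    "B": {"kind": "triangle", "base": 6, "height": 2},
}
```

A model can answer `shape_area` and `grid_position` style questions correctly in isolation and still fail the `area_at` style question, which is the kind of gap a compositional benchmark is meant to expose.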

Training Paradigms

The paper formalizes three training paradigms: SFT, RL with Group Relative Policy Optimization (GRPO), and SFT-initialized RL. SFT trains on paired input-output data to align model outputs with the target distribution. RL uses GRPO, which optimizes the policy with token-level advantages derived from reward signals, where each sampled response is scored relative to the other responses in its group.
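The group-relative advantage at the heart of GRPO can be sketched in a few lines. This is a minimal illustration of the standard formulation (reward minus group mean, divided by group standard deviation, then shared by every token of that response), not the paper's training code; the function names, the use of the population standard deviation, and the `eps` stabilizer are assumptions made here.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each response's reward against its own sampling group.

    `rewards` holds one scalar reward per response sampled for the
    same prompt. `eps` avoids division by zero when all rewards match.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption of this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]


def token_level_advantages(rewards, response_lengths):
    """Broadcast each response's advantage to all of its tokens."""
    advantages = group_relative_advantages(rewards)
    return [[a] * n for a, n in zip(advantages, response_lengths)]
```

For example, with rewards `[1.0, 0.0, 0.0, 1.0]` the two correct responses receive advantages close to +1 and the two incorrect ones close to -1. When every response in a group earns the same reward, all advantages are zero and that group contributes no learning signal.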

Figure 1: Illustration of RL-Ground framework.

Results

Cross-Modality Generalization

RL-trained models show only limited compositional generalization from text to multimodal tasks. Initializing RL with pure-text priors improves performance, but the results indicate that pure-text training alone does not ensure effective transfer to visual reasoning.

RL-trained models exhibit better compositional reasoning than SFT-trained models, particularly on pure-text tasks. Adding RL-Ground, which combines visual-to-text alignment with progress rewards, enables VLMs to achieve better compositional reasoning in multimodal settings.

Out-of-Distribution Generalization

Under OOD conditions, RL-trained models generalize robustly and significantly outperform SFT-trained models. RL-Ground effectively closes the compositional ability gap, yielding strong performance on both individual and compositional tasks in OOD scenarios.

Conclusion

The paper provides a detailed evaluation of compositional generalization capabilities in VLMs, highlighting the limitations of current training strategies through the ComPABench framework. While RL improves compositional reasoning in VLMs, multimodal compositionality remains challenging, necessitating structured prompting and grounded supervision techniques. The proposed RL-Ground strategy underscores the importance of visual grounding for enhancing multimodal reasoning capabilities in VLMs across diverse task distributions.