MCP-Zero: Proactive Toolchain Construction for LLM Agents from Scratch

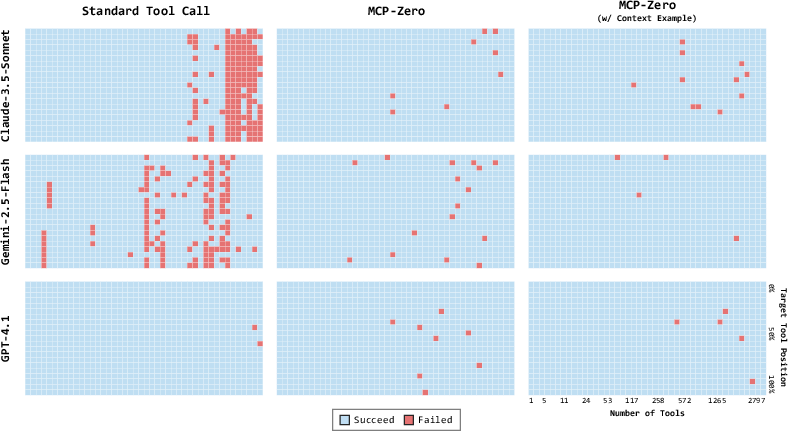

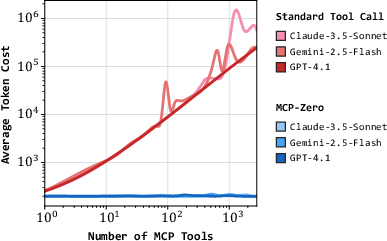

Abstract: Function-calling has enabled LLMs to act as tool-using agents, but injecting thousands of tool schemas into the prompt is costly and error-prone. We introduce MCP-Zero, a proactive agent framework that lets the LLM itself decide when and which external tools to retrieve, thereby assembling a task-specific toolchain from scratch. The framework is built upon three components: (1) Proactive Tool Request, where the model emits a structured `<tool_assistant>` block that explicitly specifies the desired server and task; (2) Hierarchical Vector Routing, a coarse-to-fine retrieval algorithm that first selects candidate servers and then ranks tools within each server based on semantic similarity; (3) Iterative Proactive Invocation, enabling multi-round, cross-domain toolchain construction with minimal context overhead, and allowing the model to iteratively revise its request when the returned tools are insufficient. To evaluate our approach, we also compile MCP-tools, a retrieval dataset comprising 308 MCP servers and 2,797 tools extracted from the official Model-Context-Protocol repository and normalized into a unified JSON schema. Experiments show that MCP-Zero (i) effectively addresses the context overhead problem of existing methods and accurately selects the correct tool from a pool of nearly 3,000 candidates (248.1k tokens); (ii) reduces token consumption by 98% on APIBank while maintaining high accuracy; and (iii) supports multi-turn tool invocation with consistent accuracy across rounds. The code and dataset will be released soon.

Explain it Like I'm 14

What is this paper about?

This paper is about teaching AI assistants (large language models, or LLMs) to be more independent when using tools. Instead of dumping a huge list of possible tools into the AI’s prompt and asking it to pick one, the authors propose “MCP‑Zero,” a system that lets the AI actively ask for the exact tool it needs, when it needs it. This makes the AI faster, more accurate, and better at handling complex, multi-step tasks.

“MCP” stands for Model Context Protocol, a standard way for AIs to connect to many external tools (like file systems, web services, databases). The problem is: the more tools you add, the longer and heavier the AI’s prompt becomes. MCP‑Zero fixes this by letting the AI discover tools on demand, instead of loading everything up front.

What questions were the researchers trying to answer?

In simple terms, the paper asks:

- Can an AI figure out what it’s missing and ask for the right tools by itself, instead of being given a giant, confusing toolkit?

- Can it quickly find the best tool out of thousands by searching smartly whenever a new need comes up, not just once at the start?

- Will this approach still work well in long, multi-turn conversations as tasks change and grow?

How did they do it?

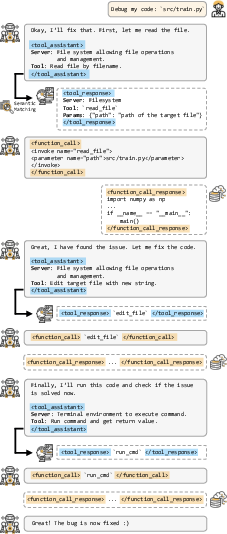

Think of a big “tool mall” with 2,797 tools across 308 “stores” (servers). You don’t want to carry every instruction manual around. Instead, you ask for what you need when you hit a roadblock, and use a smart directory to find the right store and tool. MCP‑Zero does exactly that, using three main ideas:

1) Active Tool Requests

When the AI realizes it needs help (like “I need to read a file” or “I need to run a command”), it writes a short, structured request that says:

- which “store” (server) it wants

- what kind of operation (tool) it needs

This is like the AI saying: “I need access to the File System server, and a tool to read files.” Because the AI describes its need clearly, it’s easier to match that request to the right tool documentation.

Example:

    <tool_assistant>
    server: filesystem
    tool: read_file at path src/train.py
    </tool_assistant>
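To make the request format concrete, here is a minimal sketch of how a host program might pull such a request out of the model's reply. It assumes the block always lists `server:` before `tool:`, as in the example request; the names `ToolRequest` and `parse_tool_request` are made up for illustration and are not taken from the paper.

```python
import re
from typing import NamedTuple, Optional


class ToolRequest(NamedTuple):
    server: str  # which MCP server the model asked for
    tool: str    # free-text description of the operation it needs


# Assumed layout, copied from the example request: "server: ..." then "tool: ...".
_BLOCK = re.compile(r"<tool_assistant>(.*?)</tool_assistant>", re.DOTALL)
_FIELDS = re.compile(r"server:\s*(?P<server>.*?)\s*tool:\s*(?P<tool>.*)", re.DOTALL)


def parse_tool_request(model_output: str) -> Optional[ToolRequest]:
    """Return the first tool request in the model output, or None if there is none."""
    block = _BLOCK.search(model_output)
    if block is None:
        return None
    fields = _FIELDS.search(block.group(1))
    if fields is None:
        return None
    # Collapse internal whitespace so a multi-line description becomes one line.
    tool = " ".join(fields["tool"].split())
    return ToolRequest(server=fields["server"].strip(), tool=tool)


reply = """I should look at the training script first.
<tool_assistant>
server: filesystem
tool: read_file at path src/train.py
</tool_assistant>"""
request = parse_tool_request(reply)
```

Because the request is plain text with two labelled fields, each field can then be matched separately against server and tool descriptions.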

2) Hierarchical Semantic Routing (a two-step smart search)

Instead of searching all 2,797 tools at once, MCP‑Zero first:

- narrows down the right servers (like picking the right store in the mall)

- then ranks tools within those servers to find the best match

“Semantic” means it uses meaning, not exact words, so “read code” can match “open file” if they’re related. This two-step search is faster and more precise, like checking the floor directory to pick the right shop, then finding the right shelf in that shop.
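The two-step search can be sketched in a few lines. This is only an illustration under stated assumptions: the three-number vectors below are hand-made stand-ins for real embeddings, the function name `route` and the server and tool names are hypothetical, and ranking purely by cosine similarity is a simplification rather than the paper's exact scoring rule.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def route(server_query: Vector, tool_query: Vector,
          server_vecs: Dict[str, Vector],
          tool_vecs: Dict[str, Dict[str, Vector]],
          top_servers: int = 2, top_tools: int = 1) -> List[Tuple[str, str, float]]:
    # Step 1: keep only the servers closest to the requested server.
    servers = sorted(server_vecs,
                     key=lambda s: cosine(server_query, server_vecs[s]),
                     reverse=True)[:top_servers]
    # Step 2: rank tools, but only those inside the servers that survived step 1.
    candidates = [(s, t, cosine(tool_query, vec))
                  for s in servers for t, vec in tool_vecs[s].items()]
    candidates.sort(key=lambda c: c[2], reverse=True)
    return candidates[:top_tools]


# Toy embeddings (made up): axis 0 ~ "files", axis 1 ~ "code hosting", axis 2 ~ "weather".
SERVER_VECS = {"filesystem": [1.0, 0.0, 0.0], "github": [0.6, 0.8, 0.0], "weather": [0.0, 0.0, 1.0]}
TOOL_VECS = {
    "filesystem": {"read_file": [1.0, 0.0, 0.0], "write_file": [0.8, 0.6, 0.0]},
    "github": {"create_issue": [0.0, 1.0, 0.0]},
    "weather": {"get_forecast": [0.0, 0.0, 1.0]},
}
query = [0.9, 0.1, 0.0]  # stands in for the embedding of "read code"
best = route(query, query, SERVER_VECS, TOOL_VECS)
```

With these toy vectors the weather server is dropped in step 1, so its tools are never compared at all. That pruning is where the speed-up comes from.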

3) Iterative Capability Extension

The AI doesn’t have to decide everything upfront. As the task unfolds, it can request new tools when needed, and build a multi-step chain:

- read a file → edit code → run a command to test → maybe search the web, etc.

If a tool turns out not to be enough, the AI refines its request and tries again. This makes the system naturally fault-tolerant and adaptable.
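The request, retry, and extend cycle can be sketched as a small loop. Everything here is a stand-in: `next_request` plays the role of the model deciding what it needs next, `retrieve` plays the role of the tool search, `refine` plays the role of the model rewording a failed request, and the tool names in the toy registry are hypothetical.

```python
from typing import Callable, Dict, List, Optional


def build_toolchain(next_request: Callable[[List[str]], Optional[str]],
                    retrieve: Callable[[str], Optional[str]],
                    refine: Callable[[str], str],
                    max_refinements: int = 2,
                    max_rounds: int = 10) -> List[str]:
    """Grow a toolchain one request at a time, rewording requests that find nothing."""
    chain: List[str] = []
    for _ in range(max_rounds):
        request = next_request(chain)  # decided now, based on progress so far
        if request is None:            # nothing more is needed: the task is done
            break
        tool = retrieve(request)
        for _ in range(max_refinements):
            if tool is not None:
                break
            request = refine(request)  # reword the request and search again
            tool = retrieve(request)
        if tool is None:               # still nothing suitable: stop early
            break
        chain.append(tool)
    return chain


# Toy stand-ins (all names are made up for this example).
REGISTRY: Dict[str, str] = {
    "read a file": "filesystem.read_file",
    "edit code": "filesystem.edit_file",
    "run a command": "shell.run_command",
}
NEEDS = ["read a file", "edit code", "execute the tests"]
REWORDINGS = {"execute the tests": "run a command"}


def next_request(chain: List[str]) -> Optional[str]:
    return NEEDS[len(chain)] if len(chain) < len(NEEDS) else None


chain = build_toolchain(next_request, REGISTRY.get, lambda r: REWORDINGS.get(r, r))
```

In this toy run the third request finds nothing at first, gets reworded once, and then succeeds, so the chain still ends up with three tools.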

Supporting dataset: MCP‑tools

To test MCP‑Zero properly, the authors built a dataset called MCP‑tools:

- 308 MCP servers and 2,797 tools collected from the official MCP repository

- Cleaned and summarized to improve matching

- Pre-computed “embeddings” (numerical meaning representations) so searches are fast
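As a rough picture of what a pre-computed entry might look like, the sketch below stores each server and tool next to its embedding and writes the result out as JSON. The field names and the `toy_embed` function are assumptions made for illustration; the actual MCP‑tools schema and embedding model are not described here.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Callable, List


@dataclass
class ToolEntry:
    name: str
    description: str
    embedding: List[float] = field(default_factory=list)


@dataclass
class ServerEntry:
    name: str
    summary: str
    embedding: List[float] = field(default_factory=list)
    tools: List[ToolEntry] = field(default_factory=list)


def precompute(servers: List[ServerEntry], embed: Callable[[str], List[float]]) -> str:
    """Embed every summary and description once, then serialize everything to JSON."""
    for server in servers:
        server.embedding = embed(server.summary)
        for tool in server.tools:
            tool.embedding = embed(tool.description)
    return json.dumps([asdict(s) for s in servers])


def toy_embed(text: str) -> List[float]:
    # Placeholder only: a real system would call an embedding model here.
    return [float(len(text)), float(text.count(" "))]


servers = [ServerEntry("filesystem", "Read and write local files",
                       tools=[ToolEntry("read_file", "Read a file from disk")])]
index_json = precompute(servers, toy_embed)
```

Doing this work once, ahead of time, means a later search only has to embed the short request and compare it against stored vectors.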

Experiments they ran

- Needle-in-a-haystack: Can the AI find the right tool when it’s hidden among thousands?

- APIBank: A standard benchmark for tool calling, with single-turn and multi-turn conversations.

What did they find, and why does it matter?

Here are the main results:

- Accurate selection at scale: MCP‑Zero can pick the right tool from nearly 3,000 choices while keeping prompts short.

- Huge cost savings: Up to 98% fewer “tokens” (the tiny chunks of text AIs read), especially on APIBank tests, while keeping high accuracy. Fewer tokens means lower compute cost and faster responses.

- Strong performance in multi-turn chats: It stays accurate even when the conversation gets longer and the tool ecosystem gets larger.

- Better than “retrieve once” methods: Systems that pick tools only from the initial user query often miss later needs. MCP‑Zero’s iterative requests fix that.

In short: letting the AI actively ask for tools makes it smarter, cheaper, and more reliable.

What’s the impact of this research?

If we want truly autonomous AI agents, they need to do more than passively choose from a pre-loaded menu. They should notice their limits and actively get the tools they need—just like a good problem-solver does.

MCP‑Zero shows a practical way to:

- Reduce prompt bloat in large tool ecosystems

- Scale to thousands of tools without slowing down

- Build flexible, cross-domain toolchains on the fly

- Cut costs while keeping or improving accuracy

This could help developers build more capable AI assistants for coding, data work, research, and beyond. Future directions include combining MCP‑Zero with systems that can create brand-new tools when none exist, and turning MCP‑Zero into a “meta-server” that other agents can call to discover tools efficiently.
