- The paper introduces a novel framework that dynamically manages LLM short-term memory to optimize tool calling in multi-turn conversations.

- The paper evaluates three modes (Autonomous Agent, Workflow, and Hybrid) that trade off tool removal against task performance, testing 13 LLMs on 5,000 tool contexts.

- The paper demonstrates that adaptive memory management enhances task accuracy and efficiency, offering potential for integration with long-term memory frameworks.

Overview

"MemTool: Optimizing Short-Term Memory Management for Dynamic Tool Calling in LLM Agent Multi-Turn Conversations" addresses how LLM agents manage short-term memory for tools during multi-turn conversations. Conventional LLMs can interact with external tools, but a fixed context window limits how many tools and how much conversation history they can hold at once. MemTool proposes a framework for dynamic memory management that adds and removes tools from the context as the conversation progresses. It offers three modes of operation: Autonomous Agent Mode, Workflow Mode, and Hybrid Mode.

Methodology

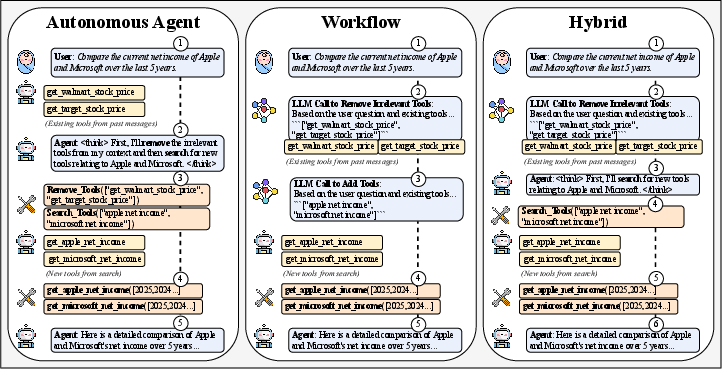

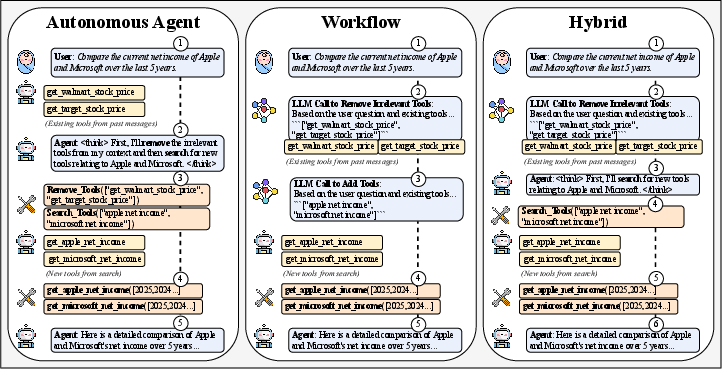

Figure 1: Architecture of MemTool's three modes and an end-to-end walkthrough of user-assistant interaction across multi-turn chats.

- Autonomous Agent Mode: This mode allows an LLM to autonomously manipulate its toolset, equipping and discarding tools as needed without external control. The agent uses function calls to manage the tool context, executing tasks with retrieved tools and adjusting the toolset based on task requirements.

```python
def autonomous_agent_mode(LLM, query, previous_tools, context, vector_db):
    # Prune previous context for efficiency
    context = prune_context(context) + query
    tools = previous_tools | {SearchTools, RemoveTools}
    while True:
        response = LLM(context, tools)
        if response.type == "tool_call":
            # Manage tools based on agent's decision
            if response.tool == RemoveTools:
                tools = manage_tools(response, tools)
            elif response.tool == SearchTools:
                tools = search_new_tools(response, vector_db, tools)
        else:
            return response.content
```

- Workflow Mode: This mode follows a pre-defined operational flow where tools are pruned and searched based on a fixed strategy, minimizing the reliance on LLM reasoning capabilities for tool management. It systematically processes user queries, ensuring the toolset remains optimized for performance.

- Hybrid Mode: Combining elements of both previous modes, Hybrid Mode uses a structured workflow for pruning tools, while still allowing the agent some freedom to search for and incorporate new tools as needed.
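
The Workflow and Hybrid modes described above can be sketched in the same spirit as the Autonomous Agent listing. This is a minimal illustration under stated assumptions, not the paper's implementation: the callables `llm_prune`, `llm_keywords`, `llm_answer`, `llm_agent`, and `search_tools`, the `tool_limit` and `max_steps` parameters, and the `("search", keyword)` / `("answer", text)` action format are all hypothetical stand-ins for LLM calls and vector search.

```python
from typing import Callable, Iterable, Set, Tuple

# Hypothetical stand-ins: each callable wraps an LLM call or a vector search.
PruneFn = Callable[[str, Set[str]], Iterable[str]]    # (query, tools) -> tools to drop
KeywordFn = Callable[[str], Iterable[str]]            # query -> search keywords
SearchFn = Callable[[str], Iterable[str]]             # keyword -> matching tool names
AnswerFn = Callable[[str, Set[str]], str]             # (query, tools) -> final answer
AgentFn = Callable[[str, Set[str]], Tuple[str, str]]  # -> ("search", kw) or ("answer", text)


def _add_tools(tools: Set[str], names: Iterable[str], tool_limit: int) -> None:
    """Add retrieved tools in place, never exceeding the tool limit."""
    for name in names:
        if len(tools) >= tool_limit:
            break
        tools.add(name)


def workflow_mode(query: str, tools: Iterable[str], llm_prune: PruneFn,
                  llm_keywords: KeywordFn, search_tools: SearchFn,
                  llm_answer: AnswerFn, tool_limit: int = 128) -> Tuple[str, Set[str]]:
    """Fixed pipeline: prune, then search, then answer.

    The answering call never edits the toolset itself.
    """
    current = set(tools)
    current -= set(llm_prune(query, current))          # step 1: drop stale tools
    for keyword in llm_keywords(query):                # step 2: retrieve new tools
        _add_tools(current, search_tools(keyword), tool_limit)
    return llm_answer(query, current), current         # step 3: answer with the toolset


def hybrid_mode(query: str, tools: Iterable[str], llm_prune: PruneFn,
                llm_agent: AgentFn, search_tools: SearchFn,
                tool_limit: int = 128, max_steps: int = 8) -> Tuple[str, Set[str]]:
    """Prune with a fixed step, then let the agent decide when to search."""
    current = set(tools)
    current -= set(llm_prune(query, current))          # structured pruning, as in Workflow
    for _ in range(max_steps):
        action, payload = llm_agent(query, current)
        if action == "search":                         # agent-driven tool retrieval
            _add_tools(current, search_tools(payload), tool_limit)
        else:                                          # any other action ends the turn
            return payload, current
    raise RuntimeError("agent did not produce an answer within max_steps")
```

The two functions differ only in who decides when to search: the pipeline does in `workflow_mode`, and the agent does in `hybrid_mode`. Pruning is a fixed step in both, which matches the description of Hybrid Mode as a structured workflow for removal combined with agent freedom for addition.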

Evaluation

The paper evaluates MemTool across 13 different LLMs using the ScaleMCP benchmark, which includes 5,000 tool contexts. Performance is assessed based on tool-removal efficiency, tool correctness, and task completion accuracy over 100 multi-turn conversations.
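
To make the tool-removal metric concrete, the sketch below shows one plausible way to track it. It assumes that removal efficiency is the fraction of added tools that are later removed over a conversation; the paper's exact definition may differ (for example, by averaging over a window of turns). The function names and the per-turn count inputs are hypothetical.

```python
from typing import List, Sequence


def tool_count_trace(added: Sequence[int], removed: Sequence[int],
                     initial: int = 0) -> List[int]:
    """Return the number of tools in context after each turn."""
    if len(added) != len(removed):
        raise ValueError("added and removed must have one entry per turn")
    counts = []
    current = initial
    for n_added, n_removed in zip(added, removed):
        current += n_added - n_removed
        counts.append(current)
    return counts


def removal_ratio(added: Sequence[int], removed: Sequence[int]) -> float:
    """Fraction of added tools that were removed (assumed definition)."""
    total_added = sum(added)
    return sum(removed) / total_added if total_added else 0.0


# Example: three turns with 3, 2, and 4 tools added and 0, 3, and 2 removed.
trace = tool_count_trace([3, 2, 4], [0, 3, 2])
ratio = removal_ratio([3, 2, 4], [0, 3, 2])
```

A trace that keeps growing across turns indicates that the model is not removing tools it no longer needs, while a flat or bounded trace indicates effective short-term memory management.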

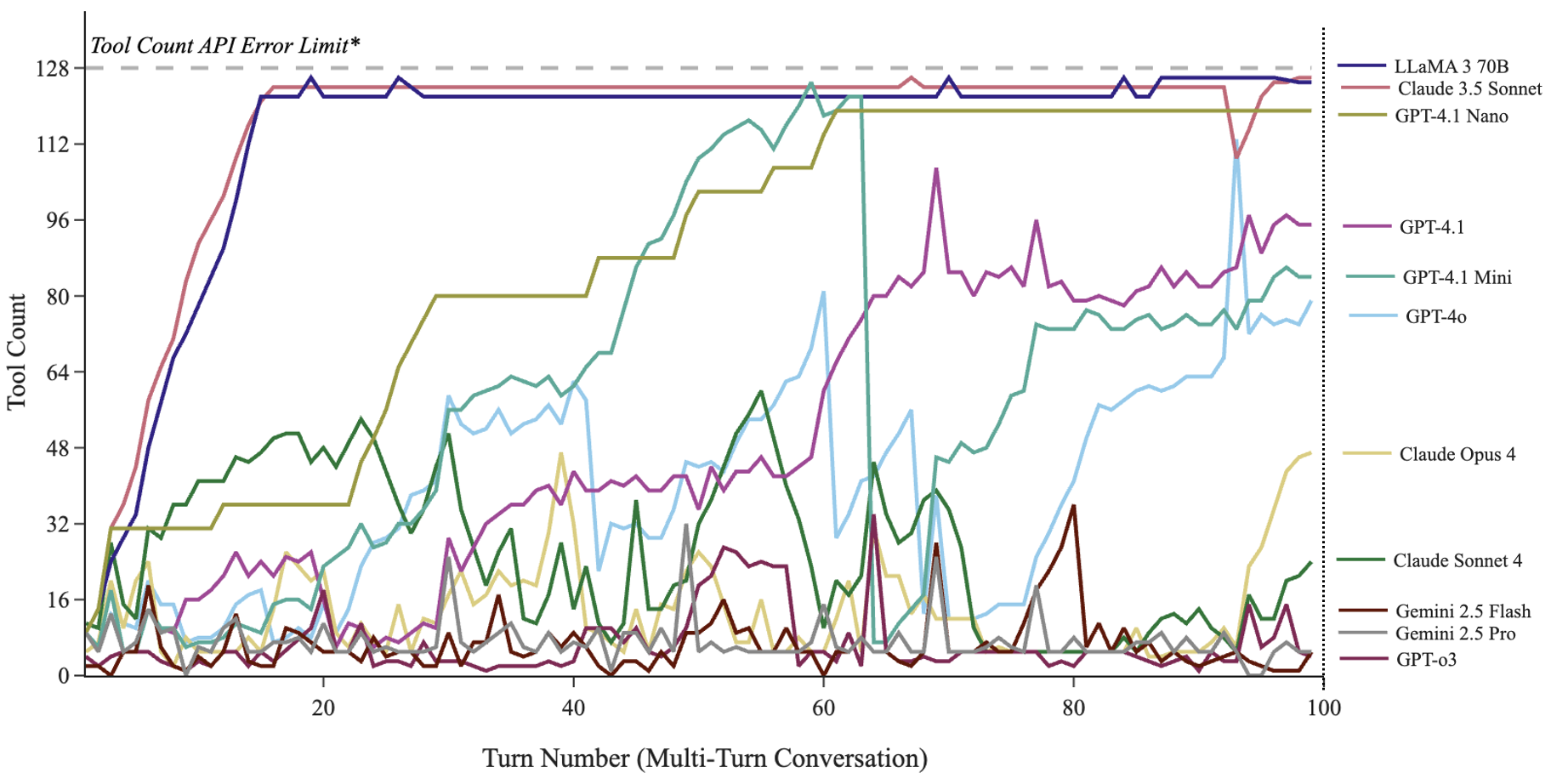

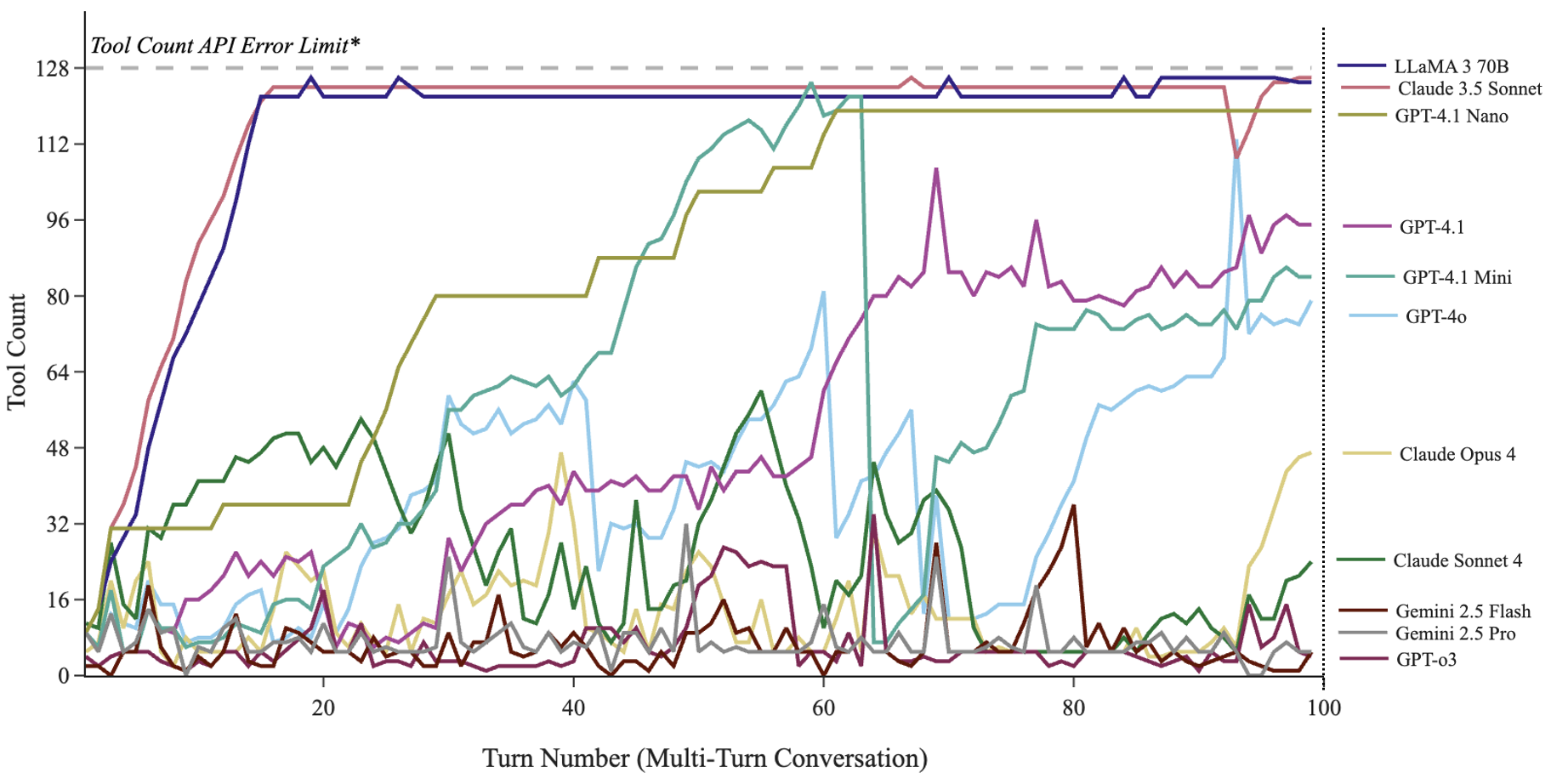

Figure 2: MemTool Autonomous Agent Mode: Tool Count across 100 multi-turn queries for various LLMs.

- Autonomous Agent Mode: Some LLMs, such as GPT-o3 and Gemini 2.5 Pro, achieved high tool-removal efficiency (90-94%) while still completing tasks in dynamic settings. Smaller models, however, struggled to remove tools effectively.

- Workflow Mode: This mode consistently managed tool removal effectively across all tested LLMs. It demonstrated a strong balance of maintaining tool relevance while achieving high task completion rates.

- Hybrid Mode: This mode matched Workflow Mode's tool-removal efficiency while retaining the Autonomous Agent Mode's flexibility to add tools, achieving high task completion rates with moderate tool-management complexity.

Implications and Future Directions

MemTool is most relevant to real-world LLM deployments in which agents must adapt to complex environments over extended sessions. The hybrid strategies it proposes could also extend to long-term memory frameworks, improving how systems handle more persistent user interactions.

Future work will focus on the integration of MemTool with long-term memory frameworks to create more robust and versatile LLM agents, capable of maintaining a coherent understanding and interaction model over extended user interactions. Additionally, exploring fine-tuning mechanisms or human feedback loops might improve the autonomous decision-making capabilities of LLMs in tool management.

Conclusion

MemTool introduces a novel approach to short-term memory management in LLMs, providing three architectures to improve dynamic tool handling across multi-turn interactions. By offering modes that provide varying levels of control and autonomy, MemTool demonstrates its potential to enhance the efficiency and effectiveness of LLMs in dynamic and complex conversational environments.