- The paper proposes a statistical physics framework to model language model reasoning as a continuous-time stochastic system with latent regime switches.

- It demonstrates that sentence-level dynamics and multimodal residuals reveal distinct reasoning phases, validated across eight models on seven benchmarks.

- The switching linear dynamical system (SLDS) framework achieves a predictive R² of approximately 0.68 and shows generalizability in capturing reasoning dynamics across various Transformer architectures.

A Statistical Physics of LLM Reasoning

The paper "A Statistical Physics of LLM Reasoning" addresses the emergent reasoning behavior of Transformer language models (LMs). It proposes a statistical physics framework that models an LM's reasoning as a stochastic dynamical system with latent regime switching. This approach is designed to accommodate the distinct reasoning phases and potential misalignments that LMs can exhibit during reasoning tasks. The paper also reports empirical results from eight models on seven benchmarks, highlighting the framework's utility for predicting critical transitions and inference-time failures.

Continuous-Time Dynamical Systems Framework

Transformers' reasoning is modeled as a continuous-time stochastic process, with sentence-level hidden states evolving according to a stochastic differential equation (SDE). The formulation adopts concepts from statistical physics, incorporating drift and diffusion terms to account for systematic and stochastic dynamics:

dh(t) = μ(h(t), Z(t)) dt + B(h(t), Z(t)) dW(t),

where μ is the drift term, B is the diffusion term, W(t) is a Wiener process, and Z(t) is the latent regime that determines which reasoning state is active. Full high-dimensional analysis is not feasible given the size of LMs; hence, the dynamics are studied on a lower-dimensional manifold, where a rank-40 projection captures approximately 50% of the variance.
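To make the SDE above concrete, here is a minimal Euler-Maruyama sketch in Python. It is a toy illustration rather than the paper's implementation: the state is a scalar instead of a hidden-state vector, and the function name `simulate_switching_sde`, the switching rule, the rates, and the drift and diffusion functions are all hypothetical choices made for this example.

```python
import math
import random


def simulate_switching_sde(h0, drifts, diffusions, switch_rates, dt, n_steps, seed=0):
    """Euler-Maruyama simulation of a scalar regime-switching SDE.

    drifts[z](h) stands in for mu(h, Z) and diffusions[z](h) for B(h, Z).
    switch_rates[z] is an assumed rate of leaving regime z; the next regime
    is drawn uniformly from the others (an illustrative choice).
    """
    rng = random.Random(seed)
    n_regimes = len(drifts)
    h, z = h0, 0
    path, regimes = [h], [z]
    for _ in range(n_steps):
        # Leave the current regime with probability of roughly rate * dt.
        if rng.random() < switch_rates[z] * dt:
            z = rng.choice([k for k in range(n_regimes) if k != z])
        # Brownian increment dW ~ N(0, dt).
        dW = rng.gauss(0.0, math.sqrt(dt))
        h = h + drifts[z](h) * dt + diffusions[z](h) * dW
        path.append(h)
        regimes.append(z)
    return path, regimes


# Toy regimes: regime 0 relaxes toward +1 with low noise,
# regime 1 relaxes toward -1 with higher noise.
drifts = [lambda h: 2.0 * (1.0 - h), lambda h: 2.0 * (-1.0 - h)]
diffusions = [lambda h: 0.1, lambda h: 0.3]
path, regimes = simulate_switching_sde(
    0.0, drifts, diffusions, switch_rates=[0.5, 0.5], dt=0.01, n_steps=1000
)
print(len(path), sorted(set(regimes)))
```

Each step adds a deterministic drift contribution scaled by `dt` and a random contribution scaled by the Brownian increment, while the regime index selects which drift and diffusion functions apply.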

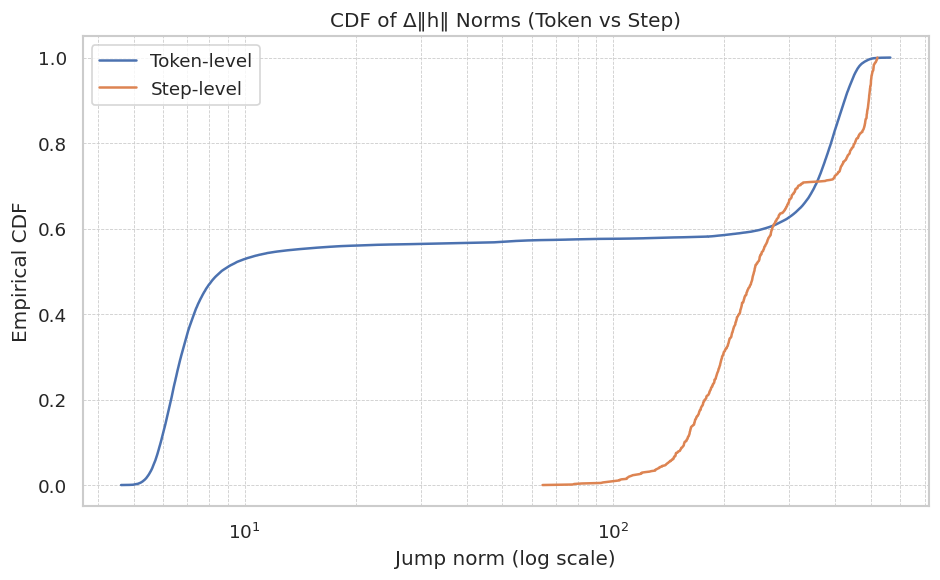

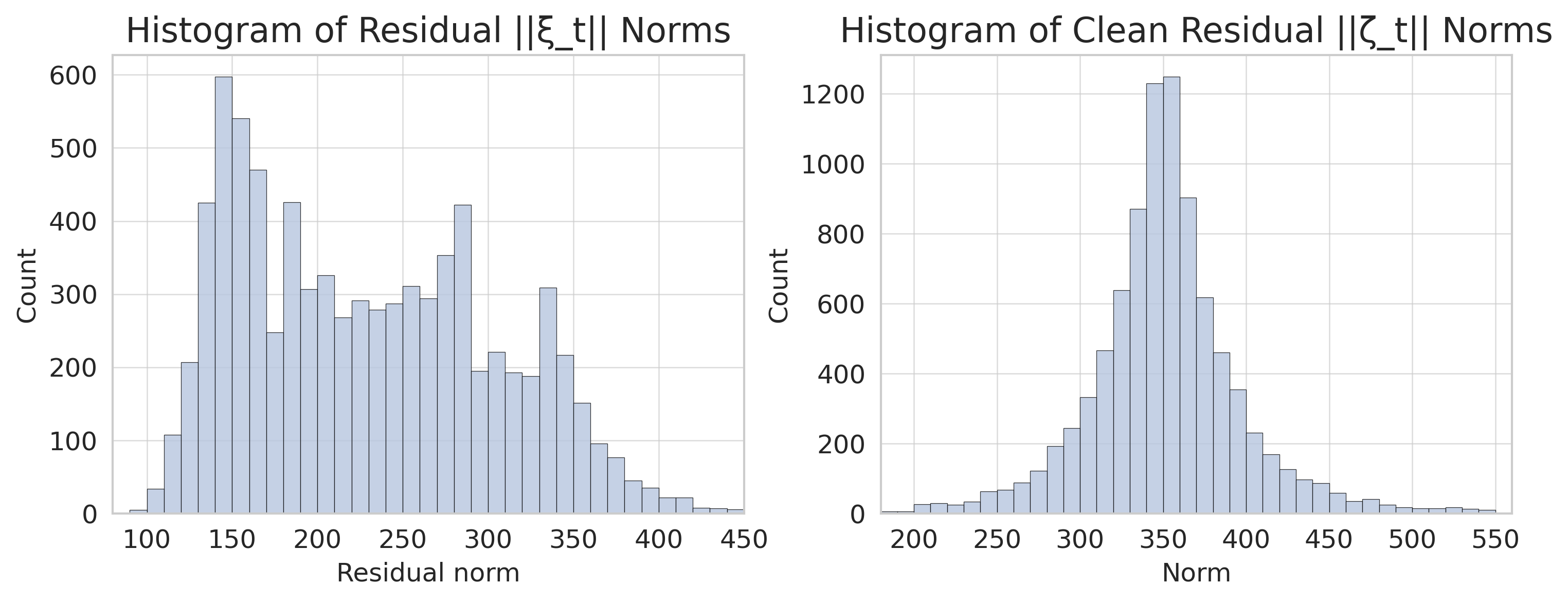

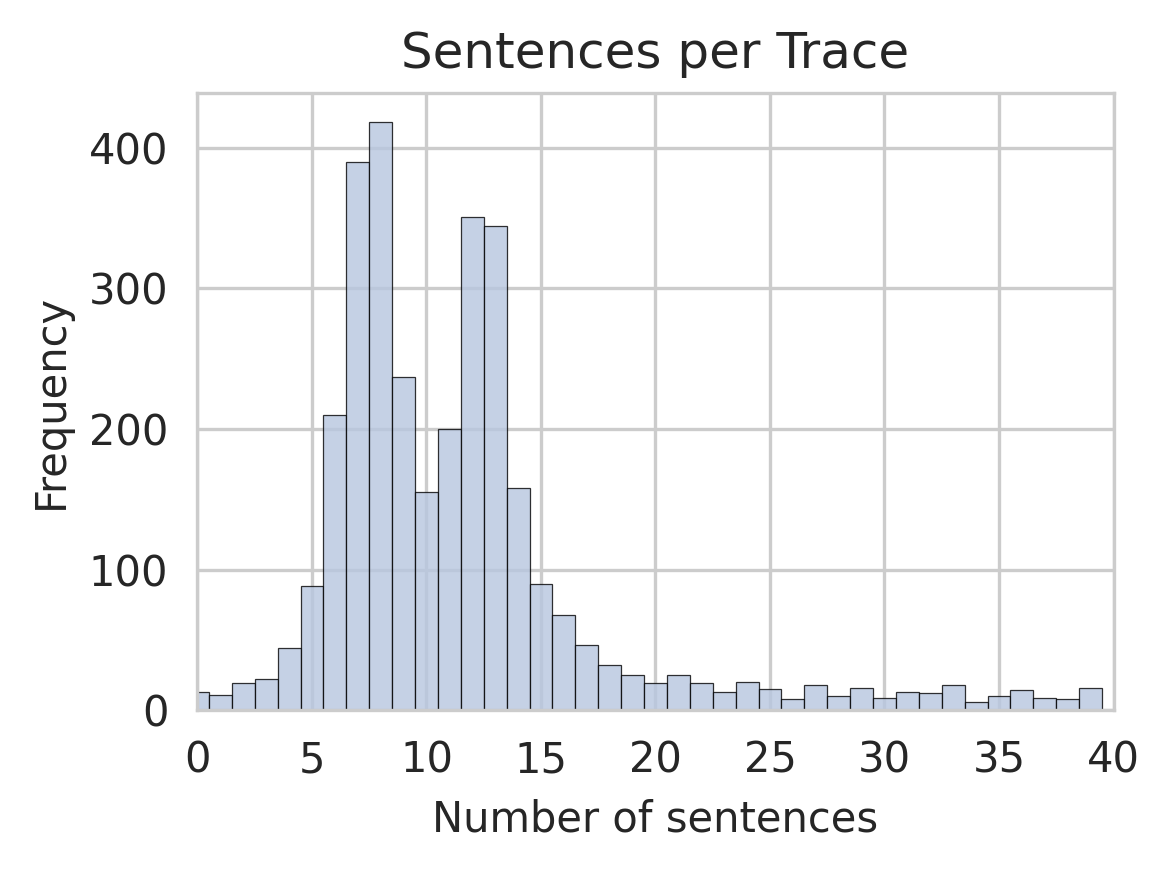

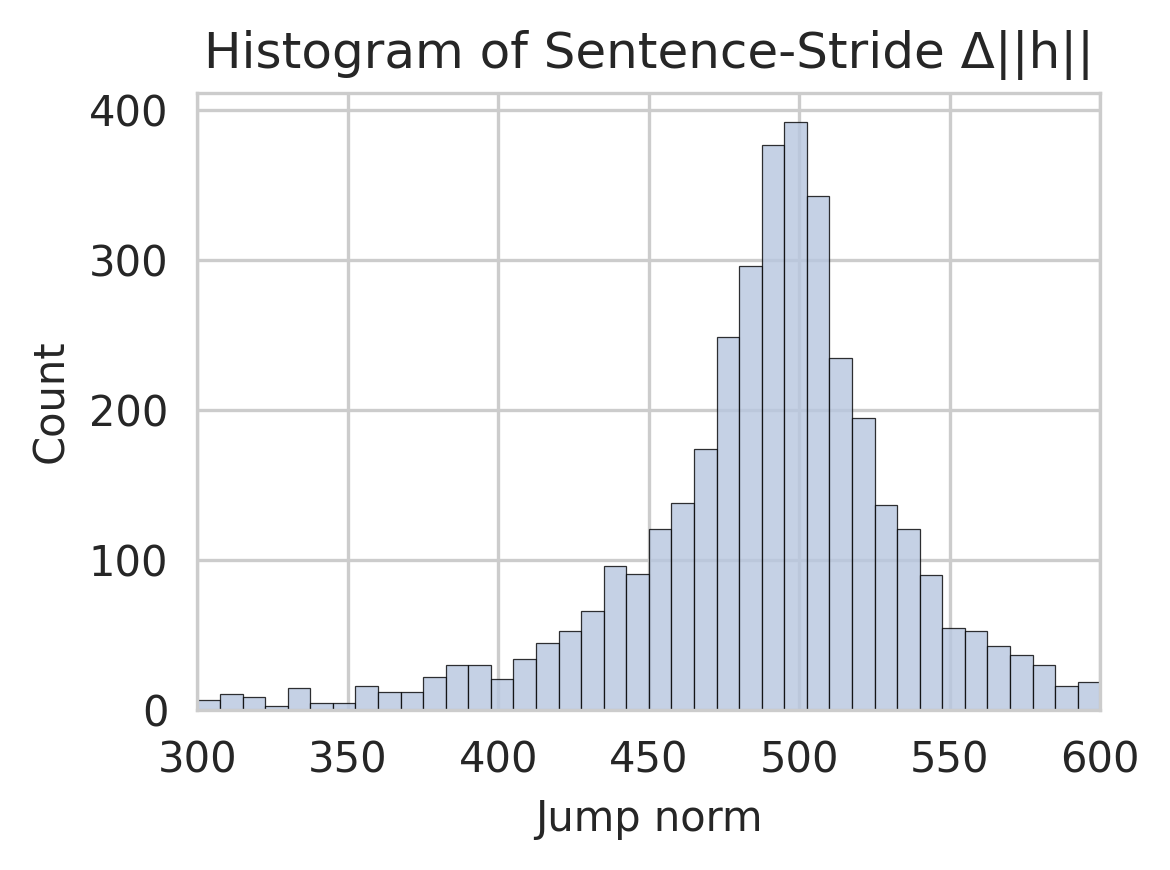

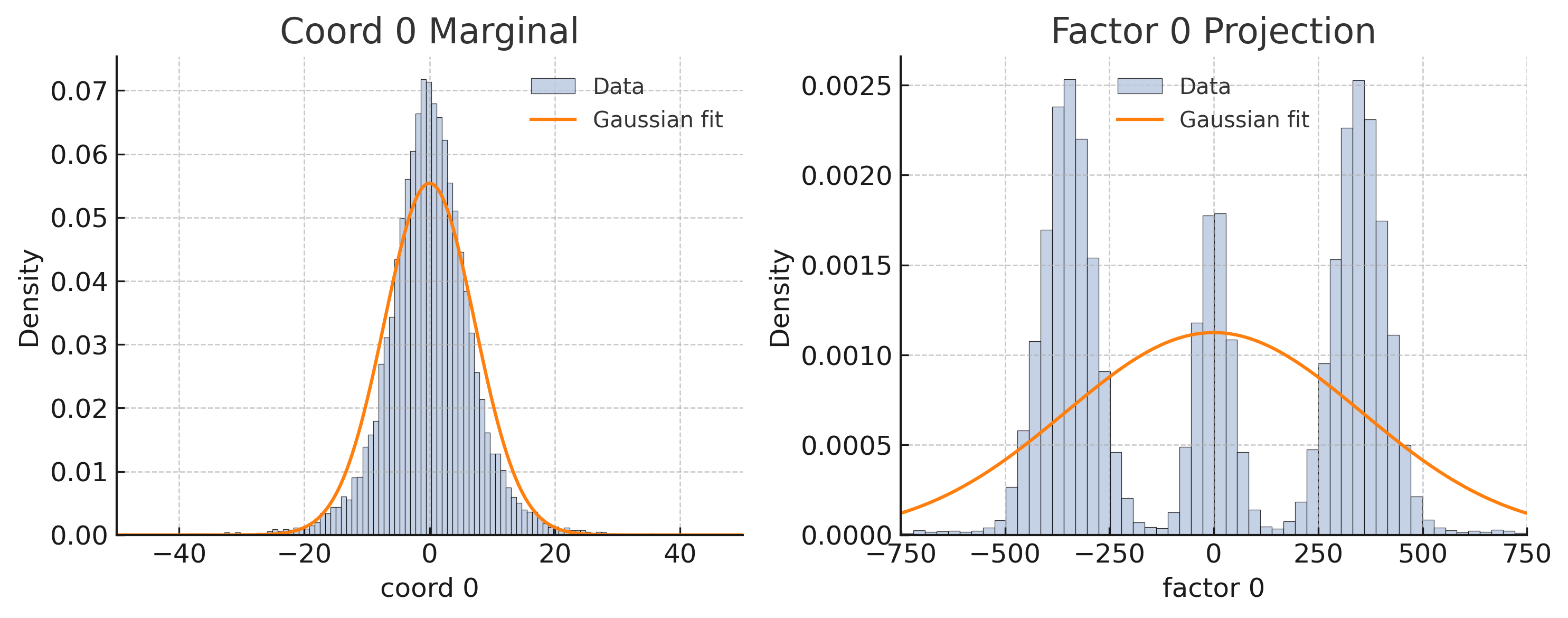

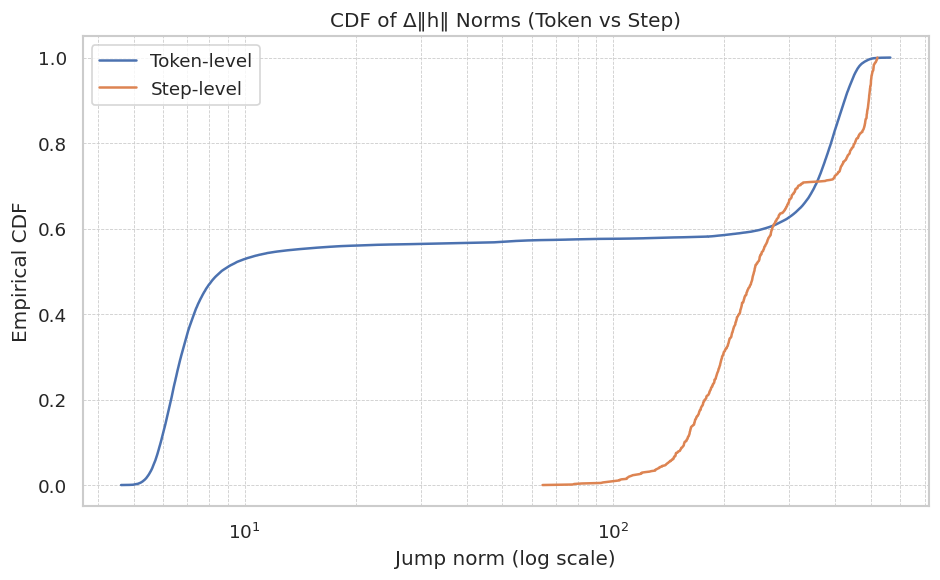

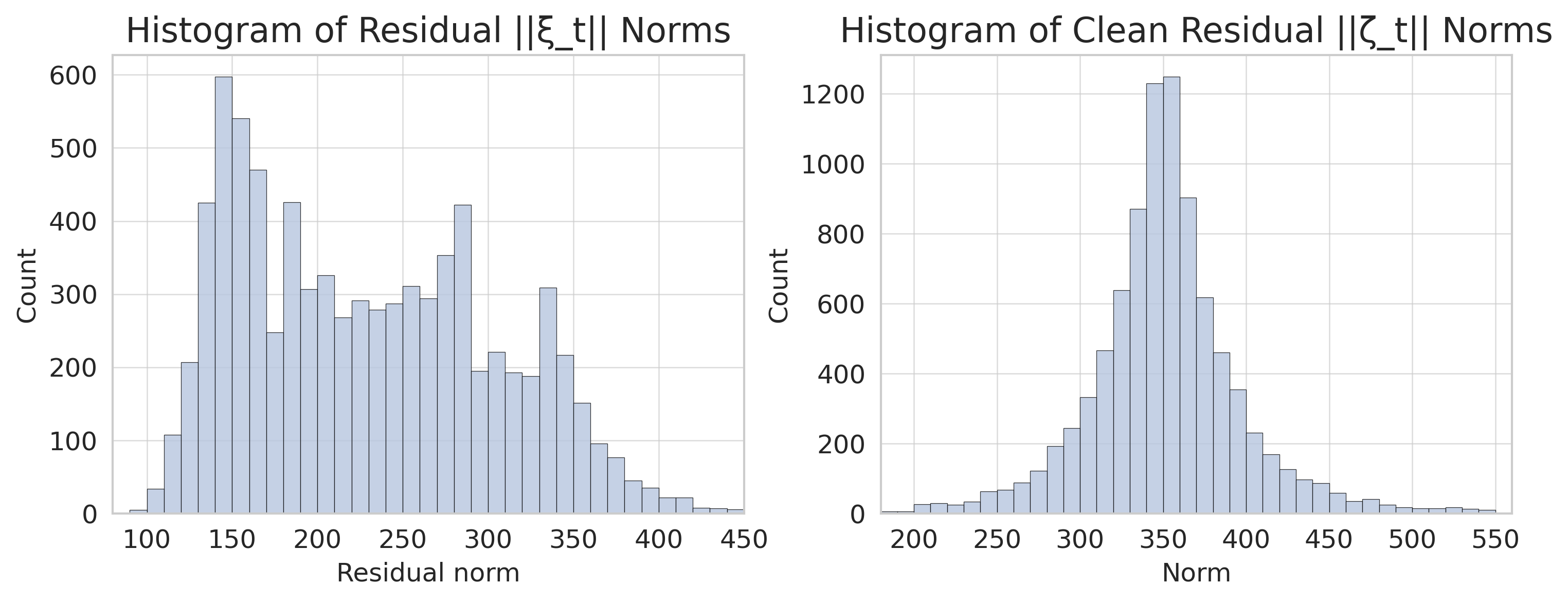

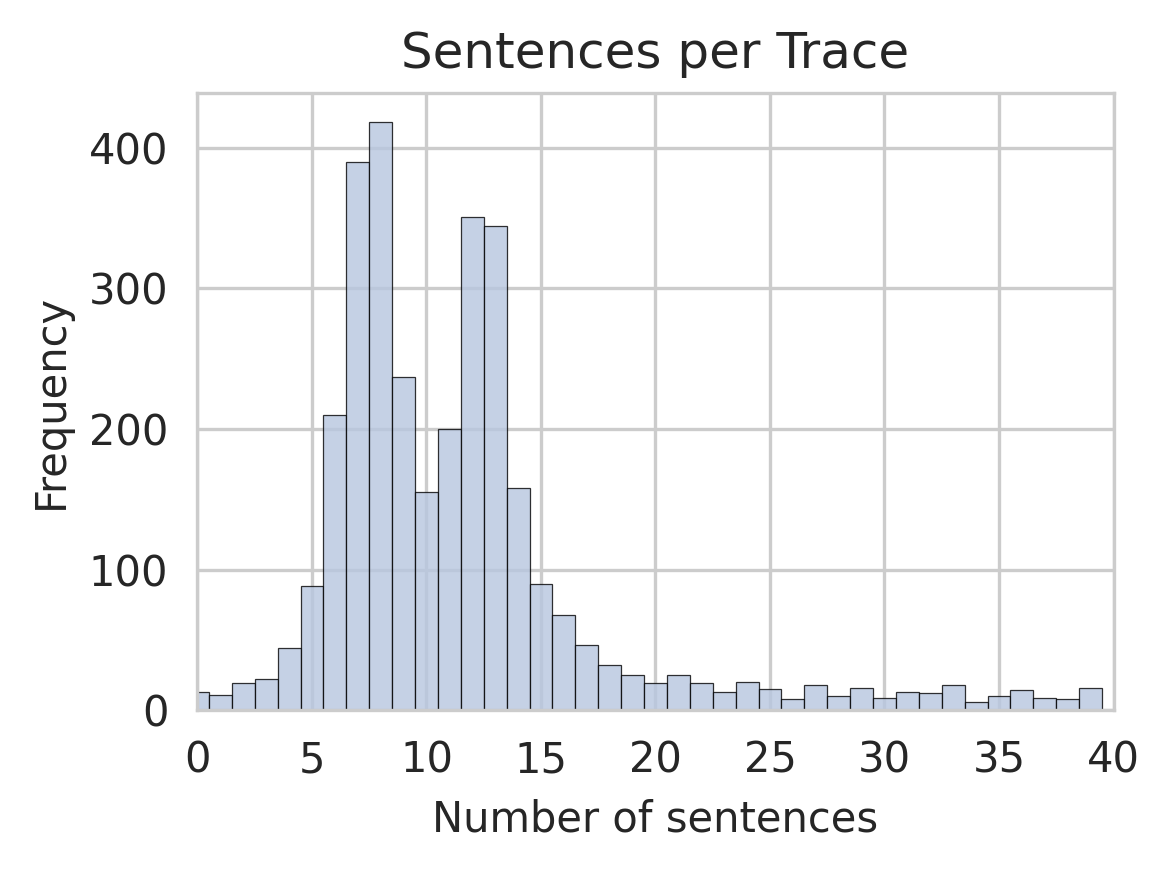

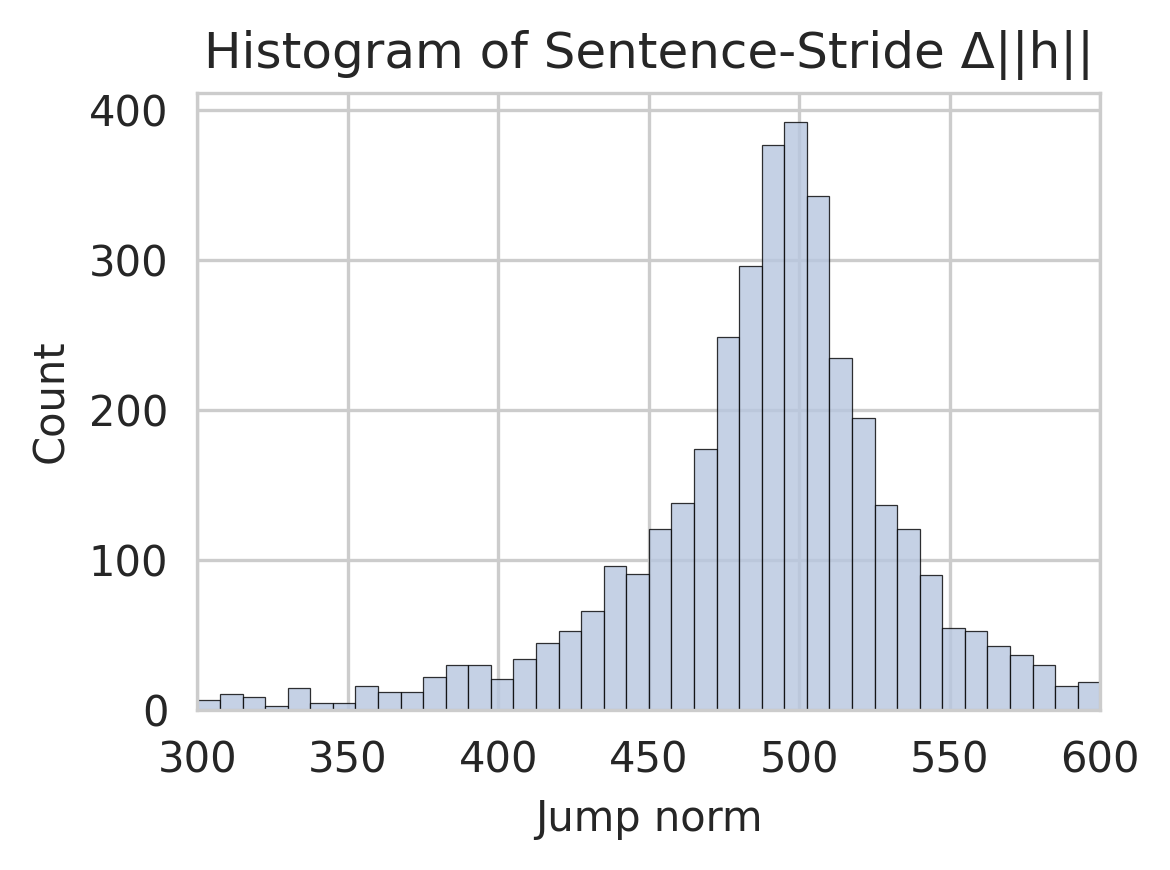

Figure 1: Cumulative distribution functions (CDFs) and histograms of residual norms, highlighting the need for sentence-level analysis and a regime-switching framework.

Empirical Decomposition and Multimodal Residual Analysis

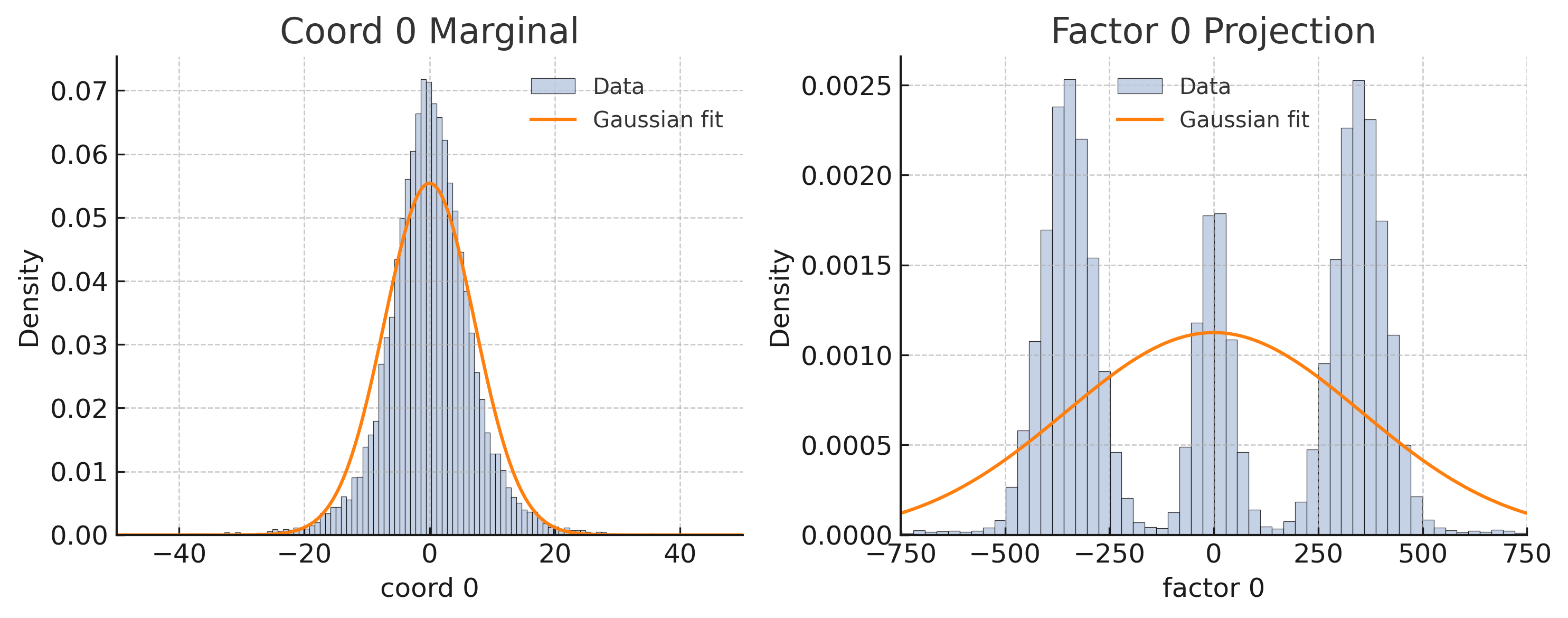

Empirical analysis reveals that sentence-level increments better capture semantic shifts in reasoning, and that a linear predictor explains some of the variance in reasoning transitions. However, the residuals are multimodal, which suggests that a single linear model cannot fully explain the reasoning dynamics and motivates a regime-switching model. This multimodality implies the existence of distinct reasoning phases, potentially linked to emergent failure modes in LMs.
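The following sketch shows, on synthetic data, how a single global linear fit can leave residuals that split into separate groups. The data are made up for illustration and are not taken from the paper; the helper `fit_line`, the scalar state, and the two hidden regimes with their offsets are assumptions of this example.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y ~ slope * x + intercept (scalar case)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Synthetic "sentence-level" states and increments (not data from the paper).
# Both regimes share the same linear trend, but each adds its own offset,
# which a single global fit cannot absorb.
states = [i / 10 for i in range(20)]
labels = [i % 2 for i in range(20)]  # hidden regime of each step
offsets = {0: 0.4, 1: -0.4}
increments = [-0.5 * h + offsets[z] for h, z in zip(states, labels)]

slope, intercept = fit_line(states, increments)
residuals = [dh - (slope * h + intercept) for h, dh in zip(states, increments)]

# Group the residuals by hidden regime to inspect their separation.
group0 = [r for r, z in zip(residuals, labels) if z == 0]
group1 = [r for r, z in zip(residuals, labels) if z == 1]
print(min(group0), max(group1))
```

In this toy case the residuals of the two regimes do not overlap, which is the kind of multimodality that a single-mode noise model would miss.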

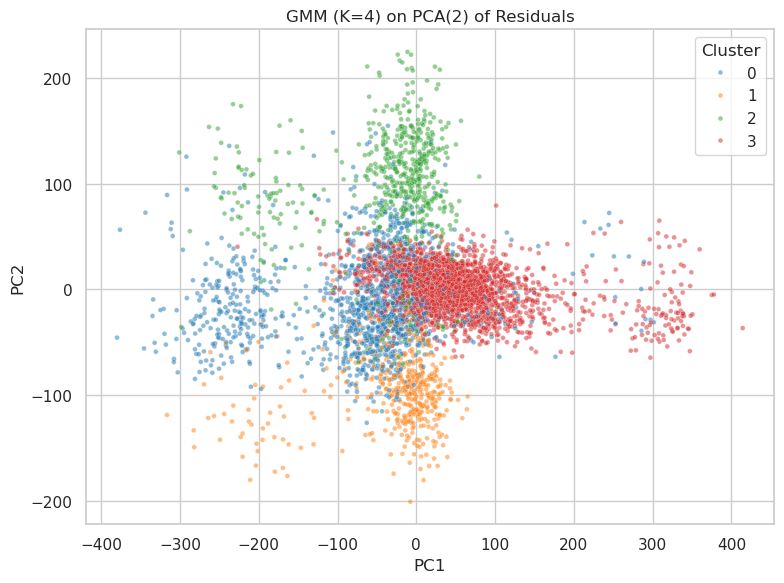

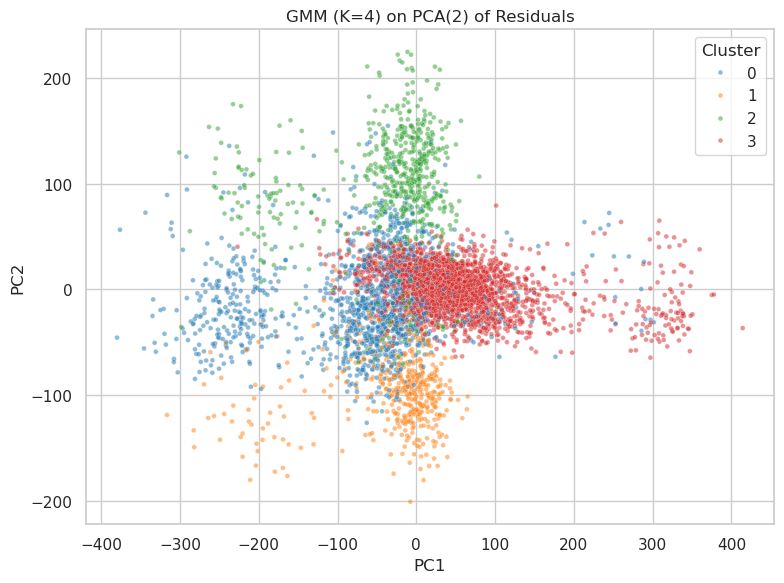

Figure 2: Latent regime clusters identified using Gaussian Mixture Models (GMM) in a low-rank manifold, clarifying reasoning state separations.

The Switching Linear Dynamical System (SLDS) Model

The SLDS framework combines linear dynamics within each regime with switches between regimes, which represent transitions between reasoning phases. Because the empirical findings show multimodality and distinct reasoning paths, the SLDS model employs four latent regimes, each with its own drift and variance profile. This framework ties reasoning states to explicit mathematical constructs, enabling more effective analysis and simulation of LMs' reasoning processes.
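As a rough illustration of the idea, the sketch below samples from a scalar switching linear dynamical system with four regimes. The specific update rule, the function name `sample_slds`, and every parameter value (`A`, `b`, `sigma`, and the transition matrix `P`) are invented for this example; the paper's model operates on projected hidden states and its fitted parameters are not reproduced here.

```python
import random


def sample_slds(h0, A, b, sigma, P, n_steps, seed=0):
    """Sample a scalar switching linear dynamical system.

    The regime z follows a Markov chain with row-stochastic matrix P.
    Within regime z the state evolves as
        h_next = A[z] * h + b[z] + sigma[z] * eps,  eps ~ N(0, 1).
    """
    rng = random.Random(seed)
    n_regimes = len(A)
    h, z = h0, 0
    states, regimes = [h], [z]
    for _ in range(n_steps):
        # Draw the next regime from row z of the transition matrix.
        z = rng.choices(range(n_regimes), weights=P[z])[0]
        # Apply that regime's linear dynamics plus regime-specific noise.
        h = A[z] * h + b[z] + sigma[z] * rng.gauss(0.0, 1.0)
        states.append(h)
        regimes.append(z)
    return states, regimes


# Four illustrative regimes with made-up parameters.
A = [0.9, 0.5, 1.0, 0.7]
b = [0.1, 1.0, 0.0, -0.5]
sigma = [0.05, 0.2, 0.5, 0.1]
P = [
    [0.90, 0.05, 0.03, 0.02],
    [0.10, 0.80, 0.05, 0.05],
    [0.20, 0.10, 0.60, 0.10],
    [0.05, 0.05, 0.10, 0.80],
]
states, regimes = sample_slds(0.0, A, b, sigma, P, n_steps=200)
print(len(states), sorted(set(regimes)))
```

Large diagonal entries in `P` make regimes persistent, so a trajectory stays in one phase for a while before switching, which is the qualitative behavior the regime-switching description calls for.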

Figure 3: GMM clustering reveals discrete reasoning phases, depicted in a reduced-dimensional residual space.

Experimental Validation and Generalization

In experiments, the SLDS outperforms simpler models. It captures the dynamics more effectively, reaching a predictive R² ≈ 0.68, which is higher than that of global linear models. Cross-model transferability was also tested; the results indicate that fundamental reasoning structures can generalize across different LM architectures, albeit with performance variations.
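For readers unfamiliar with the metric, the sketch below computes R² for a global linear model and for a regime-aware model on synthetic data. It only illustrates how such a comparison works: the data are invented, the regime labels are assumed to be known rather than inferred, and the resulting numbers have no connection to the 0.68 reported above. The helpers `fit_line` and `r_squared` are written for this example.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y ~ slope * x + intercept (scalar case)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def r_squared(ys, preds):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - p) ** 2 for y, p in zip(ys, preds))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot


# Synthetic states, regime labels, and increments (not data from the paper).
states = [i / 10 for i in range(20)]
labels = [i % 2 for i in range(20)]
increments = [-0.5 * h + (0.4 if z == 0 else -0.4) for h, z in zip(states, labels)]

# Global linear model: one line for all steps.
slope, intercept = fit_line(states, increments)
global_preds = [slope * h + intercept for h in states]

# Regime-aware model: one line per regime, with labels assumed known.
regime_preds = [0.0] * len(states)
for z in set(labels):
    idx = [i for i, lab in enumerate(labels) if lab == z]
    s, c = fit_line([states[i] for i in idx], [increments[i] for i in idx])
    for i in idx:
        regime_preds[i] = s * states[i] + c

r2_global = r_squared(increments, global_preds)
r2_regime = r_squared(increments, regime_preds)
print(r2_global, r2_regime)
```

Because the toy data are noise-free within each regime, the regime-aware fit is nearly perfect here; with real hidden states the gap would be smaller and would depend on how well the regimes are inferred.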

Figure 4: Inadequacy of single-mode noise models, justifying a complex regime-switching architecture in capturing reasoning dynamics.

Impact, Applications, and Future Developments

The SLDS framework, inspired by a statistical understanding of complex dynamics, is a valuable tool for auditing and simulating reasoning processes in LMs at reduced computational cost. It predicts critical state transitions, helping to identify regime shifts that lead to errors or misalignments in reasoning. Moving forward, research will focus on privacy-preserving model variants and on refining predictive capabilities to mitigate potential misuse.

In conclusion, this study pioneers a systematic approach to mapping LMs' reasoning, uncovering latent dynamic states, and suggesting methodologies for improved understanding and mitigation of inference-time failures.