- The paper introduces a dual formulation for inverse covariance intersection fusion that exploits common process noise to derive tighter error covariance bounds.

- It establishes a rigorous mathematical framework using symmetric positive semi-definite matrices and Loewner ordering to parameterize admissible covariance sets.

- Geometric interpretations confirm that, although the minimal fused covariance achieved with optimal weights coincides with that of Covariance Intersection (CI), the full family of bounds offers strictly improved robustness.

Dual Approach to Inverse Covariance Intersection Fusion: Theoretical Foundations and Analysis

Introduction

This paper addresses the open problem of linear fusion of state estimates under partial knowledge of error correlations, specifically the scenario where the only known statistical relation is the presence of common process noise across the estimates. Traditional Covariance Intersection (CI) fusion assumes completely unknown error correlations, which leads to conservative upper bounds on the fused estimate's error covariance. Inverse Covariance Intersection (ICI) extends this to settings where the estimates share unknown common information. However, a fully dual approach, in which the uncertainty structure stems not from shared information but from shared process noise, has not been rigorously formalized or analyzed. This work establishes a mathematical framework for the "unknown common noise" case, derives upper and lower bounds for the associated error covariances, and investigates their impact on linear fusion, combining theoretical analysis with illustrative geometric interpretations.

The space of symmetric positive semi-definite matrices, partially ordered by the Loewner relation, forms the analytic backdrop for describing uncertainty in state estimates with unknown or partially known mutual correlations. The primary technical development is the definition and parametrization of the admissible set of joint error covariance matrices $P = \begin{bmatrix} P_1 & P_{12} \\ P_{12}^T & P_2 \end{bmatrix}$, where the off-diagonal block $P_{12} = X$ is only known to be a symmetric positive semi-definite matrix dominated by both local error covariances: $X = X^T \geq 0$, $X \leq P_1$, $X \leq P_2$. This formalizes the assumption that each estimate's error is the sum of a common noise component and mutually independent terms: if $e_i = n + v_i$ with $\operatorname{cov}(n) = X$ and $n$, $v_1$, $v_2$ mutually independent, then $P_i = X + \operatorname{cov}(v_i)$ and $P_{12} = X$, which gives exactly these constraints.
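To make the admissible set concrete, the following NumPy sketch checks the three Loewner constraints on a candidate common-noise matrix $X$ and assembles the joint covariance $\begin{bmatrix} P_1 & X \\ X & P_2 \end{bmatrix}$. The helper names (`is_psd`, `is_admissible`, `joint_covariance`), the eigenvalue tolerance, and the 2-D example matrices are hypothetical illustrations, not taken from the paper.

```python
import numpy as np


def is_psd(A, tol=1e-10):
    """Loewner check A >= 0 for a symmetric matrix, up to a small tolerance."""
    return bool(np.allclose(A, A.T) and np.linalg.eigvalsh(A).min() >= -tol)


def is_admissible(P1, P2, X, tol=1e-10):
    """True if X = X^T >= 0, X <= P1 and X <= P2 (unknown common noise model)."""
    return is_psd(X, tol) and is_psd(P1 - X, tol) and is_psd(P2 - X, tol)


def joint_covariance(P1, P2, X):
    """Joint error covariance [[P1, X], [X, P2]] of the stacked errors (e1, e2)."""
    return np.block([[P1, X], [X, P2]])


# Hypothetical 2-D example (illustrative values only).
P1 = np.array([[4.0, 1.0], [1.0, 2.0]])
P2 = np.array([[2.0, -0.5], [-0.5, 3.0]])
X = 0.5 * np.eye(2)                              # candidate common-noise covariance

print(is_admissible(P1, P2, X))                  # True
print(is_admissible(P1, P2, 3.0 * np.eye(2)))    # False: P1 - 3I is indefinite
```

Any admissible $X$ yields a valid joint covariance, because $\begin{bmatrix} P_1 & X \\ X & P_2 \end{bmatrix} = \operatorname{blkdiag}(P_1 - X, P_2 - X) + \begin{bmatrix} I \\ I \end{bmatrix} X \begin{bmatrix} I \\ I \end{bmatrix}^T$ is a sum of positive semi-definite terms.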

In contrast, ICI is built on the assumption of unknown common information, which leads to a dual but not identical mathematical structure. The paper therefore stresses that the family of admissible cross-covariance matrices should be parametrized explicitly, so that bounds derived under one assumption are not misapplied under the other.

Upper and Lower Bounds: Analytical Properties

Upper Bounds

Upper bounds on the family of admissible joint covariance matrices are constructed analogously to ICI, with a critical switch: the roles of $P$ and $P^{-1}$ are reversed. For a positive parameter $\alpha$ and a weight $\omega \in [0,1]$, the proposed family of upper bounds takes the form

$\overline{P} = \operatorname{blkdiag}(P_1, P_2) + \begin{bmatrix} \alpha I \\ \alpha I \end{bmatrix} B \begin{bmatrix} \alpha I \\ \alpha I \end{bmatrix}^T,$

where $B = (\omega P_1^{-1} + (1-\omega) P_2^{-1})^{-1}$. The matrix $B$ dominates every admissible common noise matrix: $X \leq P_1$ and $X \leq P_2$ imply $X \leq B$ for every $\omega \in [0,1]$, which is the dual of the ICI bound on common information. The paper proves that these bounds are tighter than the generic CI bounds, which are derived for the classical setting with no knowledge of the error cross-correlations.
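The bound can be checked numerically. The sketch below builds $B$ and $\overline{P}$ with NumPy and compares $\overline{P}$ against the true joint covariance for many random admissible $X$ and random weights $\omega$. It assumes $\alpha = 1/\sqrt{2}$, a value chosen here because the check can also be done by hand: with $\alpha^2 = 1/2$, $\overline{P} - P = \tfrac{1}{2}\begin{bmatrix} I \\ I \end{bmatrix}(B - X)\begin{bmatrix} I \\ I \end{bmatrix}^T + \tfrac{1}{2}\begin{bmatrix} I \\ -I \end{bmatrix} X \begin{bmatrix} I \\ -I \end{bmatrix}^T \geq 0$. The helper names, the sampling scheme for $X$, and the example matrices are hypothetical.

```python
import numpy as np


def common_noise_bound(P1, P2, omega):
    """B = (omega P1^{-1} + (1 - omega) P2^{-1})^{-1} for omega in [0, 1]."""
    info = omega * np.linalg.inv(P1) + (1.0 - omega) * np.linalg.inv(P2)
    return np.linalg.inv(info)


def dual_upper_bound(P1, P2, omega, alpha):
    """blkdiag(P1, P2) + [alpha I; alpha I] B [alpha I; alpha I]^T."""
    n = P1.shape[0]
    Z = np.zeros((n, n))
    G = np.vstack([alpha * np.eye(n), alpha * np.eye(n)])   # 2n x n column block
    return np.block([[P1, Z], [Z, P2]]) + G @ common_noise_bound(P1, P2, omega) @ G.T


def random_admissible_X(P1, P2, rng):
    """Random X with 0 <= X <= P1 and X <= P2: a random PSD matrix, rescaled."""
    A = rng.standard_normal(P1.shape)
    S = A @ A.T
    # S <= lam * P_i, where lam bounds the eigenvalues of P_i^{-1} S for both i.
    lam = max(np.linalg.eigvals(np.linalg.solve(P, S)).real.max() for P in (P1, P2))
    return rng.uniform(0.0, 1.0) * S / lam


# Hypothetical example covariances (not taken from the paper).
P1 = np.array([[4.0, 1.0], [1.0, 2.0]])
P2 = np.array([[2.0, -0.5], [-0.5, 3.0]])
alpha = 1.0 / np.sqrt(2.0)      # assumed choice, see the lead-in
rng = np.random.default_rng(0)

worst = np.inf                  # smallest eigenvalue of (upper bound - P) observed
for _ in range(1000):
    X = random_admissible_X(P1, P2, rng)
    P = np.block([[P1, X], [X, P2]])
    gap = dual_upper_bound(P1, P2, rng.uniform(), alpha) - P
    worst = min(worst, np.linalg.eigvalsh(gap).min())
print(worst >= -1e-9)
```

Sampling only demonstrates the Loewner inequality on examples; the hand calculation in the lead-in is what guarantees it for this choice of $\alpha$.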

Lower Bounds

A non-trivial lower bound is also derived, showing that the least possible joint error covariance is achieved by maximizing the common noise component within the admissible set. This lower bound takes the structured form:

$\underline{P} = \begin{bmatrix} P_1 \\ P_2 \end{bmatrix} (P_1 + P_2)^{-1} \begin{bmatrix} P_1 \\ P_2 \end{bmatrix}^T$

This formulation is significant, as it tightens the range for potential error covariances available to the fusion procedure and supports precise analytic and geometric interpretation, particularly as visualized with uncertainty ellipses in low dimensions.
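A short calculation shows why $\underline{P}$ sits below every admissible joint covariance. Writing $H = (P_1^{-1} + P_2^{-1})^{-1}$, one gets $P - \underline{P} = \begin{bmatrix} H & X - H \\ X - H & H \end{bmatrix}$, which is positive semi-definite exactly when $0 \leq X \leq 2H$, and $2H$ equals $B$ at $\omega = 1/2$. The NumPy sketch below checks this identity for a few admissible choices of $X$; the function name and example matrices are hypothetical.

```python
import numpy as np


def dual_lower_bound(P1, P2):
    """[P1; P2] (P1 + P2)^{-1} [P1; P2]^T, a 2n x 2n matrix of rank n."""
    G = np.vstack([P1, P2])                       # 2n x n column block
    return G @ np.linalg.solve(P1 + P2, G.T)


# Hypothetical example covariances (not taken from the paper).
P1 = np.array([[4.0, 1.0], [1.0, 2.0]])
P2 = np.array([[2.0, -0.5], [-0.5, 3.0]])
L = dual_lower_bound(P1, P2)

# Parallel sum of P1 and P2; H <= P1 and H <= P2, so X = H is admissible.
H = np.linalg.inv(np.linalg.inv(P1) + np.linalg.inv(P2))

for X in (np.zeros((2, 2)), 0.5 * H, H):
    P = np.block([[P1, X], [X, P2]])
    # P - L should equal [[H, X - H], [X - H, H]] and be PSD.
    print(np.allclose(P - L, np.block([[H, X - H], [X - H, H]])),
          np.linalg.eigvalsh(P - L).min() >= -1e-9)
```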

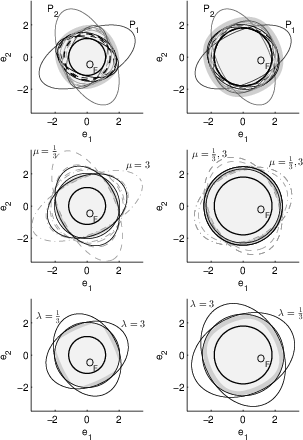

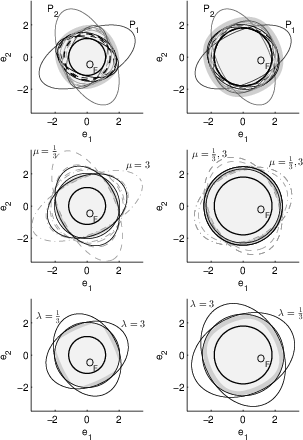

Implications for Linear Fusion

The downstream effect of these bounds becomes evident when they are applied to optimal linear fusion. While the proposed upper bounds are strictly tighter than CI for any fixed pair of input error covariances, the minimal achievable fused error covariance in the dual (common noise) case coincides with that of CI at the optimal fusion weight. The partial knowledge therefore improves the bound only for suboptimal weights and cannot deliver a uniformly superior single upper bound. Strict improvement holds only for the family of admissible upper bounds as a whole, which is especially relevant where robust performance or worst-case error characterization is required.
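For a linear, unbiased fuser $\hat{x} = K_1 \hat{x}_1 + K_2 \hat{x}_2$ with $K_1 + K_2 = I$, the fused error covariance is $K P K^T$ with $K = [K_1 \; K_2]$, and congruence preserves the Loewner order, so the joint bounds pass through directly: $K \underline{P} K^T \leq K P K^T \leq K \overline{P} K^T$. The sketch below illustrates this with NumPy; the function name, the equal gains, the admissible $X = 0.5 I$, $\omega = 0.5$, and the assumed $\alpha = 1/\sqrt{2}$ are illustrative choices, not the paper's.

```python
import numpy as np


def fused_covariances(K1, P1, P2, X, omega, alpha):
    """(lower, actual, upper) covariance of the fused error K1 e1 + (I - K1) e2."""
    n = P1.shape[0]
    I, Z = np.eye(n), np.zeros((n, n))
    K = np.hstack([K1, I - K1])                          # unbiased gain, n x 2n
    P = np.block([[P1, X], [X, P2]])                     # true joint covariance
    B = np.linalg.inv(omega * np.linalg.inv(P1) + (1 - omega) * np.linalg.inv(P2))
    G = np.vstack([alpha * I, alpha * I])
    upper = np.block([[P1, Z], [Z, P2]]) + G @ B @ G.T   # joint upper bound
    S = np.vstack([P1, P2])
    lower = S @ np.linalg.solve(P1 + P2, S.T)            # joint lower bound
    return K @ lower @ K.T, K @ P @ K.T, K @ upper @ K.T


# Hypothetical inputs: equal gains and an admissible common noise X = 0.5 I.
P1 = np.array([[4.0, 1.0], [1.0, 2.0]])
P2 = np.array([[2.0, -0.5], [-0.5, 3.0]])
lo, actual, up = fused_covariances(0.5 * np.eye(2), P1, P2, 0.5 * np.eye(2),
                                   0.5, 1.0 / np.sqrt(2.0))
print(np.linalg.eigvalsh(actual - lo).min() >= -1e-9,
      np.linalg.eigvalsh(up - actual).min() >= -1e-9)
```

Because $K_1 + K_2 = I$, the fused upper bound simplifies to $K_1 P_1 K_1^T + K_2 P_2 K_2^T + \alpha^2 B$, which makes it easy to compare different weights $\omega$ for a fixed gain.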

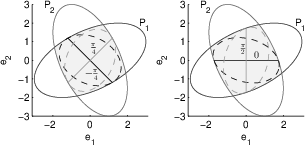

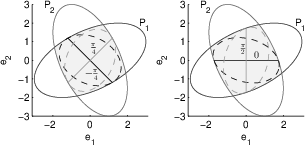

The mapping from admissible covariance sets to uncertainty ellipsoids is central to both analysis and visualization. Figures 1 and 2 display the individual uncertainty ellipses as well as those corresponding to maximal admissible common noise matrices.

Figure 1: Individual ellipses of $P_1$ and $P_2$ contrasted with several degenerate common noise ellipses $X$ (line segments) and the resulting ideal fusion ellipses.

Figure 2: Same setting as Figure 1, now showing common noise ellipses $X$ parameterized by general matrix parameters and their ideal fusion ellipses, some of which degenerate to line segments.
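The ellipses in Figures 1 and 2 can be drawn from the covariance matrices alone. The NumPy sketch below, which assumes the unit level set $\{x : x^T P^{-1} x = 1\}$ as the plotted ellipse, maps the unit circle through the symmetric square root of $P$. Because the square root is computed from clipped eigenvalues, a rank-1 matrix such as a degenerate common noise matrix collapses to a line segment, as in the figures. The function name and example matrices are hypothetical, and the returned points can be passed to any 2-D plotting library.

```python
import numpy as np


def ellipse_points(P, num=200):
    """Boundary {P^{1/2} u : ||u|| = 1} of the ellipse of a 2x2 PSD matrix P.

    For a rank-1 P the "ellipse" collapses to a segment along the range of P.
    """
    w, V = np.linalg.eigh(P)
    # Symmetric square root; clipping guards against tiny negative round-off.
    root = V @ np.diag(np.sqrt(np.clip(w, 0.0, None))) @ V.T
    t = np.linspace(0.0, 2.0 * np.pi, num)
    return root @ np.vstack([np.cos(t), np.sin(t)])   # shape (2, num)


# Hypothetical inputs: a full-rank P1 and a rank-1 matrix X = v v^T.
P1 = np.array([[4.0, 1.0], [1.0, 2.0]])
v = np.array([1.0, 0.5])
ellipse = ellipse_points(P1)
segment = ellipse_points(np.outer(v, v))

# Points on the full-rank ellipse satisfy x^T P1^{-1} x = 1.
print(np.allclose(np.einsum("in,ij,jn->n", ellipse, np.linalg.inv(P1), ellipse), 1.0))
```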

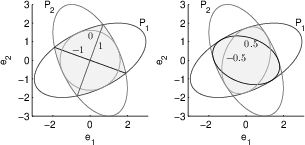

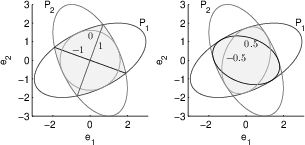

A comparison that the paper labels "forbidden" (overlaying fused upper-bound ellipses produced by different weights) is also provided for illustration.

Figure 3: Forbidden comparison of individual ellipses and examples of "ideally" fused upper bounds for varying parameter choices; the family covers the intersection of input ellipses.

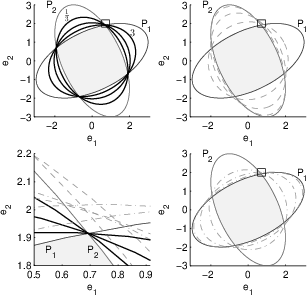

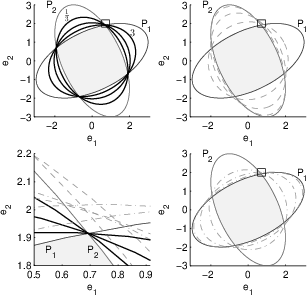

A further comparison of the "admissible region" for possible fused covariances using both the new upper bounds and traditional CI fusion is shown:

Figure 4: Fusion for two choices of fusion weights; the upper and lower rows illustrate fused covariances and their circumscribing bounds for the proposed and standard approaches, for different parameterizations.

Geometric and Practical Interpretations

Through the visualization of the admissible regions as intersections of ellipses, the paper confirms that no single upper bound from the proposed dual approach can outperform CI across all cases when optimal weights are used. However, the strictly better bounding property manifests for entire families of bounds. This distinction is critical for applications in decentralized or distributed estimation and sensor fusion in robotics, aerospace, or autonomous systems, where knowledge of the cross-correlation structure is partial or uncertain, and robustness to such uncertainties is essential.

The analytic machinery and graphical interpretations are broadly extendable to multi-sensor fusion and general high-dimensional estimation settings. Moreover, the strict duality with ICI suggests new theoretical avenues for understanding the structure of admissible covariance sets under varying assumptions of error correlation.

Conclusion

The dual formalization of Inverse Covariance Intersection fusion for the "unknown common noise" case is developed, resulting in a new family of tightly parameterized upper bounds for joint error covariances. These upper bounds are always superior to the worst-case CI bounds on a setwise basis, though not in terms of the minimal fused error covariance achieved with optimal weighting. The theoretical implications reinforce the need for care in interpreting partial knowledge of error correlations and inform more robust decentralized estimation strategies in practical systems. Future work may extend these results to the design of adaptive fusion rules under dynamically varying knowledge of the correlation structure, or to the direct exploitation of the geometry of admissible covariance sets for improved uncertainty quantification, particularly in systems where worst-case assurance is paramount (2506.05955).