FilMaster: Bridging Cinematic Principles and Generative AI for Automated Film Generation

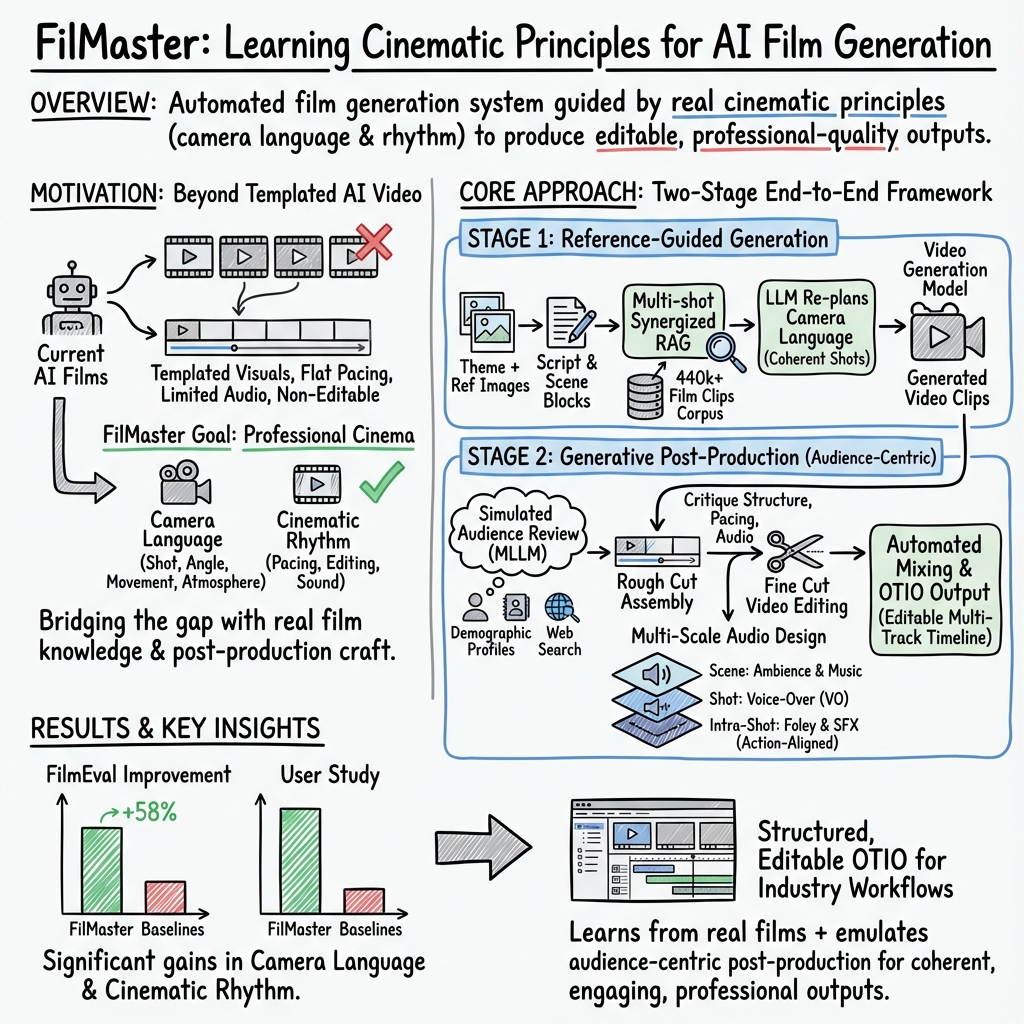

Abstract: AI-driven content creation has shown potential in film production. However, existing film generation systems struggle to implement cinematic principles and thus fail to generate professional-quality films, particularly lacking diverse camera language and cinematic rhythm. This results in templated visuals and unengaging narratives. To address this, we introduce FilMaster, an end-to-end AI system that integrates real-world cinematic principles for professional-grade film generation, yielding editable, industry-standard outputs. FilMaster is built on two key principles: (1) learning cinematography from extensive real-world film data and (2) emulating professional, audience-centric post-production workflows. Inspired by these principles, FilMaster incorporates two stages: a Reference-Guided Generation Stage which transforms user input to video clips, and a Generative Post-Production Stage which transforms raw footage into audiovisual outputs by orchestrating visual and auditory elements for cinematic rhythm. Our generation stage highlights a Multi-shot Synergized RAG Camera Language Design module to guide the AI in generating professional camera language by retrieving reference clips from a vast corpus of 440,000 film clips. Our post-production stage emulates professional workflows by designing an Audience-Centric Cinematic Rhythm Control module, including Rough Cut and Fine Cut processes informed by simulated audience feedback, for effective integration of audiovisual elements to achieve engaging content. The system is empowered by generative AI models like (M)LLMs and video generation models. Furthermore, we introduce FilmEval, a comprehensive benchmark for evaluating AI-generated films. Extensive experiments show FilMaster's superior performance in camera language design and cinematic rhythm control, advancing generative AI in professional filmmaking.


Explain it Like I'm 14

What is this paper about?

This paper introduces FilMaster, an AI system that can automatically create short films that look and feel more like real movies. It does this by learning from actual films and following professional filmmaking steps, so the results aren’t just pretty clips stuck together—they have proper camera work, pacing, and sound like a real movie.

What questions were they trying to answer?

The researchers wanted to solve a few big problems with current AI video tools:

- Can an AI learn real “camera language” (how shots, angles, and camera moves tell a story) by studying many real films, instead of guessing?

- Can an AI copy pro post‑production (editing and sound) to control “cinematic rhythm” (pacing and flow) so the film feels engaging to viewers?

- Can the system produce outputs that professionals can actually edit and use in real software?

- Can we build a fair way to judge how good AI‑made films are across storytelling, visuals, sound, and overall experience?

How does FilMaster work?

FilMaster follows a two‑stage pipeline, similar to how humans make movies.

Stage 1: Reference‑Guided Generation (learning “camera language”)

- What “camera language” means: It’s how filmmakers use shot types (like close-ups), angles (high or low), and movements (like tracking or panning) to show emotion and tell the story.

- How FilMaster learns it: The system looks up examples from a giant library of 440,000 real movie clips. This is like a student director watching lots of films to understand how pros shoot similar scenes.

- How it uses that knowledge: For each scene, the AI retrieves similar examples (using a method called retrieval-augmented generation, or RAG—basically “look it up first, then write”), then re‑plans the shot list: which shots to use, how the camera should move, and what the mood should be. It plans multiple shots together so they match and flow within the same scene.
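The "look it up first, then write" step above can be sketched in a few lines. This is a minimal illustration, not FilMaster's implementation: the three-dimensional vectors, the toy corpus, and the helper names `cosine`, `retrieve`, and `build_replan_prompt` are all hypothetical stand-ins for a real embedding model, the 440,000-clip library, and the system's actual prompts.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query_vec, corpus, k=2):
    """Return the k corpus entries most similar to the query vector."""
    ranked = sorted(corpus, key=lambda item: cosine(query_vec, item["vec"]), reverse=True)
    return ranked[:k]

def build_replan_prompt(scene_text, references):
    """Assemble one prompt for the whole scene so shots are planned together."""
    lines = [f"Scene: {scene_text}", "Reference camera descriptions:"]
    lines += [f"- {ref['desc']}" for ref in references]
    lines.append("Re-plan all shots in this scene so their camera language is consistent.")
    return "\n".join(lines)

# Hypothetical toy corpus: descriptions with made-up embedding vectors.
CORPUS = [
    {"desc": "slow push-in close-up on a tense face", "vec": [0.9, 0.1, 0.0]},
    {"desc": "wide establishing shot of a city at dawn", "vec": [0.0, 0.2, 0.9]},
    {"desc": "handheld tracking shot through a crowd", "vec": [0.3, 0.9, 0.1]},
]

query = [0.8, 0.3, 0.0]  # hypothetical embedding of the scene description
refs = retrieve(query, CORPUS, k=2)
prompt = build_replan_prompt("Two rivals confront each other in an alley", refs)
print(prompt)
```

In a real pipeline the vectors would come from an embedding model and live in a vector database; the resulting prompt would then be passed to an LLM for shot re-planning.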

Stage 2: Generative Post‑Production (creating “cinematic rhythm”)

- What “cinematic rhythm” means: It’s the pace and flow of a film—how scenes are cut, and how music and sound build emotion. Without rhythm, movies feel flat or boring.

- Rough Cut and Fine Cut: First, the AI assembles a basic Rough Cut. Then it asks a multimodal AI to act like a “simulated audience”—optionally tailored to a target group (like short-drama fans)—to give feedback. The system uses that feedback to create a Fine Cut with better structure and timing.

- Video editing: The AI rearranges clips, trims or speeds up parts, and aligns the sequence so the story is clearer and the pace feels right.

- Sound design: The AI builds a layered soundtrack, including:

- Voice‑over (VO)

- Background ambiance

- Music score

- Foley (little sounds like footsteps)

- Sound effects (SFX, like door slams)

To keep everything in sync, it designs sounds at different scales:

- Scene‑level (ambience, music)

- Shot‑level (voice‑over)

- Intra‑shot (precise foley and SFX timed to actions)

It then balances loudness and tone so the final audio sounds clean and professional.
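As a rough sketch of the loudness-balancing idea, the snippet below tags each audio layer with its scale and computes the gain needed to move it from a measured loudness to a target. The measured and target LUFS values are hypothetical, as are the names `LAYERS`, `plan_gains`, `MEASURED`, and `TARGETS`; the summary does not state which levels FilMaster uses. Measuring integrated loudness itself requires a K-weighted meter (ITU-R BS.1770), which is not shown here.

```python
# Layer-to-scale mapping follows the text; all loudness numbers are hypothetical.
LAYERS = {
    "ambience": "scene",
    "music": "scene",
    "voice_over": "shot",
    "foley": "intra-shot",
    "sfx": "intra-shot",
}

def gain_db(measured_lufs, target_lufs):
    """Gain in dB needed to move a track from its measured loudness to the target."""
    return target_lufs - measured_lufs

def db_to_linear(db):
    """Convert a dB gain to a linear amplitude multiplier."""
    return 10 ** (db / 20)

def plan_gains(measured, targets):
    """Build a per-layer gain plan from measured and target loudness values."""
    plan = {}
    for layer, lufs in measured.items():
        db = gain_db(lufs, targets[layer])
        plan[layer] = {
            "scale": LAYERS[layer],
            "gain_db": db,
            "multiplier": db_to_linear(db),
        }
    return plan

# Hypothetical measurements and targets (LUFS), chosen so voice-over sits above music.
MEASURED = {"ambience": -30.0, "music": -14.0, "voice_over": -22.0, "foley": -28.0, "sfx": -18.0}
TARGETS = {"ambience": -32.0, "music": -24.0, "voice_over": -16.0, "foley": -26.0, "sfx": -20.0}

gains = plan_gains(MEASURED, TARGETS)
for layer, info in gains.items():
    print(f"{layer:10s} {info['scale']:10s} {info['gain_db']:+.1f} dB")
```

Applying the multiplier to each track's samples would bring the layers to their targets; tone balancing (EQ) is a separate step not covered by this sketch.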

Industry‑friendly output

FilMaster exports an editable timeline in an industry format called OTIO (OpenTimelineIO). That means you can open the full multi‑track project (with video and audio layers) in pro software like DaVinci Resolve and keep editing.

How they judged quality (FilmEval benchmark)

The team built FilmEval, a new benchmark that scores AI‑made films in six areas:

- Narrative and Script

- Audiovisuals and Techniques

- Aesthetics and Expression

- Rhythm and Flow

- Emotional Engagement

- Overall Experience

They used both automatic evaluations (an AI judge) and human user studies, and checked that the AI judge’s scores matched human opinions well.
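One common way to check whether an AI judge agrees with human raters is a correlation coefficient over paired scores. The sketch below uses Pearson correlation; this summary does not say which statistic the authors computed, and every score in the example is made up for illustration.

```python
import math

# The six FilmEval areas named in the text.
DIMENSIONS = [
    "Narrative and Script",
    "Audiovisuals and Techniques",
    "Aesthetics and Expression",
    "Rhythm and Flow",
    "Emotional Engagement",
    "Overall Experience",
]

def pearson(xs, ys):
    """Pearson correlation between two equal-length score lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / math.sqrt(var_x * var_y)

# Hypothetical scores for one film (not data from the paper).
judge_scores = dict(zip(DIMENSIONS, [4.0, 3.5, 4.2, 3.8, 3.9, 4.1]))
human_scores = dict(zip(DIMENSIONS, [3.8, 3.2, 4.5, 3.6, 4.0, 4.2]))

r = pearson(
    [judge_scores[d] for d in DIMENSIONS],
    [human_scores[d] for d in DIMENSIONS],
)
print(f"judge vs. human correlation: {r:.2f}")
```

A value near 1 means the judge ranks films much as humans do; in practice the comparison would run over many films and raters rather than six scores for one film.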

What did they find?

FilMaster outperformed other AI film systems (Anim‑Director, MovieAgent, and the commercial tool LTX‑Studio) in key areas:

- Much better camera language: more coherent shot choices and professional framing.

- Stronger cinematic rhythm: better pacing, editing, and audio‑video synchronization.

- Richer, multi‑layered soundscapes: music, ambience, VO, foley, and SFX work together.

- More engaging overall experience.

In numbers:

- Automatic evaluations showed an average 58% improvement over others.

- Human studies showed about a 68% improvement.

- It did especially well in two tough areas: camera language and rhythm/sync.

Why does it matter?

- For creators: FilMaster can help solo artists and small teams make more professional films faster—planning shots like a seasoned DP (director of photography), editing like a film editor, and layering sound like a sound designer.

- For the industry: Because it exports in a standard format, pros can smoothly continue editing in their usual tools, making AI more practical in real workflows.

- For learning and prototyping: It’s useful for teaching filmmaking concepts and for quickly prototyping story ideas with realistic camera and editing choices.

- For research: FilmEval provides a clearer, fairer way to measure AI‑generated films across story, visuals, sound, pacing, and audience engagement.

In short, FilMaster shows that when AI learns from real cinema and follows professional workflows—especially for camera language and rhythm—the results feel less like demo clips and more like actual movies.

Knowledge Gaps

Unresolved gaps, limitations, and open questions

Below is a single, concrete list of knowledge gaps, limitations, and open questions left unresolved by the paper. Each point is phrased to guide future research toward actionable investigations.

- Data transparency and licensing: The 440k-film-clip corpus and its “professionally annotated” textual descriptions are not described in terms of source, licensing, annotation protocol, inter-annotator agreement, or release plan, hindering reproducibility and raising copyright and ethical concerns.

- Dataset diversity and bias: No analysis of genre, era, cultural, language, and stylistic diversity of the film corpus; potential bias toward certain cinematic traditions may lead to normative camera language and reduced creative diversity.

- Cross-lingual and multilingual support: It is unclear whether the retrieval and planning pipeline (embeddings, LLM prompts, annotations) generalize across non-English scripts or multilingual films.

- Retrieval quality and ablations: The RAG setup (embedding model choice, top-K selection strategy, negative sampling, re-ranking, and multi-shot context window size) lacks ablation studies and sensitivity analyses to quantify its contribution and failure modes.

- Multi-shot synergy beyond a single scene: The camera language is synergized within scene blocks, but continuity and stylistic coherence across scenes and the entire film (e.g., motifs, visual signatures, recurring shot schemas) remain unexplored.

- Formal cinematography representation: Shot plans are described informally (types, movements, angles), with no formal model of camera parameters (lens focal length, aperture, shutter speed), blocking, lighting design, and composition rules; opportunities exist for a structured cinematography grammar or knowledge graph.

- Lighting and color grading: The pipeline omits lighting design, color correction/grading, and look creation—core determinants of cinematic tone and rhythm not integrated into planning or post.

- Editing grammar and transitions: Beyond trimming and reordering, the system doesn’t model continuity editing rules (match-on-action, eyeline match, shot/reverse-shot), transition devices (L-cuts/J-cuts, dissolves), and cut boundary semantics in a principled, learnable way.

- Character identity consistency at scale: The approach relies on reference images but doesn’t quantify identity preservation across long sequences, occlusions, varied shot scales, and complex blocking; robustness under challenging conditions is untested.

- Lip-sync and dialogue realism: Voice-over is used, but synchronization between spoken lines and mouth movements (dialogue-driven scenes), as well as actor performance realism, are not addressed.

- Complex camera movements and long takes: Handling of crane shots, steadicam, highly dynamic movements, or long continuous takes (with intricate staging and timing) is not evaluated.

- Physical scene constraints: No mechanism ensures planned camera paths are feasible for the video generator, or physically plausible within the scene’s spatial constraints.

- Audience simulation validity: The “simulated audience” demographic profiles created via internet search are not validated against real audience feedback; correlations to actual viewer engagement are unknown.

- Conflict resolution between director intent and audience feedback: The system does not define policies to balance authorial choices with audience-centric edits when feedback conflicts with creative intent.

- Genre-specific rhythm models: General cinematic rhythm control may not generalize to genres with distinct grammar (e.g., comedy beats, horror timing, action cross-cutting, documentary pacing); no genre-aware models are explored.

- Objective A/V synchronization metrics: Multi-scale A/V sync is described qualitatively; no quantitative metrics (e.g., onset alignment, cross-modal coherence scores) or benchmarks are provided to measure synchronization quality.

- Audio licensing and provenance: The curated audio libraries used for ambience, music, foley, and SFX are unspecified (source, licensing, release), raising legal and reproducibility concerns.

- Advanced sound design and mixing: Techniques like side-chain ducking, dynamic range compression for stems, spatial mixing (surround/immersive formats), dialogue intelligibility measures, and compliance with standards (EBU R128, Netflix) are not assessed quantitatively.

- Music scoring originality and control: The system does not address original score generation, thematic motifs, or dynamic cueing strategies; reliance on library retrieval may limit expressive scoring and adaptive emotional arcs.

- Post-production deliverables and workflows: While OTIO export is supported, conformance to industry deliverables (stems, loudness specs, color pipelines) and integration into professional finishing tools (Resolve/Fairlight/Pro Tools) is not evaluated with practitioners.

- Long-form scalability: The pipeline is tested on short clips (~153 frames per sequence); computational cost, latency, memory, and quality stability for long-form content (e.g., 30–90 minutes) remain unaddressed.

- Robustness and failure cases: No systematic analysis of failure modes (irrelevant retrievals, LLM hallucinations, desynchronized audio, broken continuity) or fallback strategies (e.g., confidence-based re-planning) is provided.

- Reproducibility and openness: Dependence on proprietary models (GPT-4o, Gemini, Kling Elements) reduces reproducibility; prompts, parameters, and code for key modules are not released or parameterized for open alternatives.

- Evaluation scope and statistical power: FilmEval is tested on only 20 cases (10 long, 10 short); the user study has 5 participants and 1,200 ratings—insufficient for robust statistical conclusions or genre coverage.

- LLM-based evaluation bias: Automatic evaluation uses an LLM (Gemini) with moderate correlation (~0.65) to humans; risks of evaluator bias, circularity, and domain drift are not explored; benchmarking against non-LLM metrics is missing.

- Derived metric design: The weighting scheme for derived camera language and rhythm metrics is ad hoc and not validated (e.g., via expert consensus or metric learning).

- Professional validation: No study with professional editors, cinematographers, and sound designers assesses whether outputs meet practical standards or reduce workload; usability and acceptability in real workflows remain unknown.

- Ethical and legal risks: Training/inference on copyrighted film material and internet-based audience profiling may introduce legal, privacy, and ethical issues; governance and mitigation strategies are absent.

- Creativity and originality: Learning camera language from existing films may bias outputs toward derivative styles; mechanisms to encourage novel, director-specific aesthetics are not defined.

- Multi-modal conditioning breadth: Beyond character/location images, conditioning with storyboard frames, shot lists, lighting diagrams, and audio temp tracks could improve control; the paper does not explore these inputs.

- Iterative post cycles: Professional workflows often cycle through multiple rough/fine cuts; the system presents a single pass and does not study benefits of iterative audience-informed refinements.

- Scene structuring reliability: The chain-of-LLMs that refines synopsis to scene blocks is only briefly described; no accuracy checks, error propagation analysis, or tools for human-in-the-loop correction are provided.

- Transition semantics and emotional arcs: Modeling emotional trajectory (tension curves, beat structures) and mapping them to edit rhythms and scoring is not formalized or quantitatively optimized.

- Non-linear and alternative storytelling: Support for non-linear narratives (flashbacks, parallel timelines), montage logic, and experimental editing styles is not studied.

- Domain transfer: Applicability to documentaries, commercials, music videos, animation, or interactive media remains untested; domain-specific adaptations are an open question.

- Accessibility and localization: Subtitles, captions, audio description, and localization workflows (dubbing, multi-language VO) are not integrated into post-production design or evaluation.

- Computational budget and efficiency: No reporting of end-to-end runtime, GPU requirements, and cost per minute of film; scalability and sustainability are unclear.

- Safety and stereotype risks: Audience archetype modeling via web search could encode stereotypes; safeguards for fairness and inclusive design are not discussed.
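To make the "objective A/V synchronization metrics" gap in the list above more concrete, here is one possible onset-alignment measure, sketched under stated assumptions. It is not a metric from the paper: the function names, the 0.04-second tolerance (roughly one frame at 25 fps), and the example timestamps are all hypothetical.

```python
def nearest_offsets(event_times, onset_times):
    """For each visual event, the absolute gap (seconds) to the closest audio onset."""
    if not onset_times:
        raise ValueError("need at least one audio onset")
    return [min(abs(event - onset) for onset in onset_times) for event in event_times]

def sync_report(event_times, onset_times, tolerance=0.04):
    """Summarize alignment: mean absolute offset and share of events within tolerance."""
    if not event_times:
        raise ValueError("need at least one visual event")
    offsets = nearest_offsets(event_times, onset_times)
    within = sum(1 for gap in offsets if gap <= tolerance)
    return {
        "mean_abs_offset_s": sum(offsets) / len(offsets),
        "fraction_within_tolerance": within / len(offsets),
    }

# Hypothetical timestamps (seconds): visual actions vs. detected foley/SFX onsets.
visual_events = [1.0, 2.0, 3.0]
audio_onsets = [1.02, 2.5, 3.0]

report = sync_report(visual_events, audio_onsets)
print(report)
```

Producing the event and onset timestamps would itself need an action detector and an audio onset detector, both outside the scope of this sketch.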

Practical Applications

Immediate Applications

Below are specific, deployable use cases that can be adopted with current models and tooling described in the paper (FilMaster, its RAG-based cinematography planner, the audience-centric post-production pipeline, OTIO export, and the FilmEval benchmark).

- Professional previz and shot-planning co-pilot (Media/Entertainment; Software)

- Use FilMaster’s Multi-shot Synergized RAG module to turn scene text plus reference stills into coherent shot lists, camera moves, and mood descriptions; export to OTIO for DaVinci Resolve/Premiere workflows.

- Tools/workflows: “Script-to-Previz” pipeline; “Cinematography RAG API”; OTIO round-trips.

- Assumptions/dependencies: Access to high-quality MLLMs/video models; rights-cleared use of the 440k reference corpus; GPU/compute budget; NLE integration via OTIO.

- Automated rough-cut assembly for editors (Media/Entertainment; Advertising)

- Generate scene-level rough cuts from AI-generated clips, then refine structure and durations via the audience-centric Fine Cut module.

- Tools/workflows: “Rough-to-Fine Cut” panel; timeline-aware editing agent with timecodes; shot-trimming/speedup suggestions.

- Assumptions/dependencies: Reliable scene and timecode metadata; editor supervision; latency acceptable for iterative loops.

- Fast audience-targeted promos and A/B cuts (Advertising/Marketing; Social Media)

- Use audience archetype profiles and simulated feedback to produce length, pacing, and audio variants per platform (e.g., 6s bumper, 15s mobile, 30s broadcast).

- Tools/workflows: “Market Segment Cut Generator”; platform preset library; automatic aspect-ratio/framing adjustment using shot metadata.

- Assumptions/dependencies: Valid demographic profiles (web search tools); alignment with brand guidelines; music/SFX licensing.

- Multi-track audio auto-design for shorts (Media/Entertainment; Creator Economy)

- Generate layered soundscapes—ambience, score, VO, foley, SFX—using the multi-scale A/V synchronization scheme; export stems for DAWs.

- Tools/workflows: “Auto-Sound Designer” plugin; LUFS normalization and EQ presets; RAG from licensed audio libraries.

- Assumptions/dependencies: High-quality TTS/VO; rights-cleared audio assets; DAW/NLE formats (OTIO, AAF/XML, stems).

- Film school teaching and assessment (Education)

- Demonstrate camera language and rhythm by toggling system modules; use FilmEval criteria as a rubric to grade student edits and sound design.

- Tools/workflows: Classroom dashboard; “what-if” re-plans of shots; FilmEval scoring reports.

- Assumptions/dependencies: Institutional access to models; reproducible prompts; educator oversight to avoid overfitting to the rubric.

- AI-generated content quality assurance (Platforms/Policy; Trust & Safety)

- Apply FilmEval-like evaluators to auto-screen submissions for audio desync, pacing issues, narrative incoherence; flag spammy or low-quality AI videos.

- Tools/workflows: “FilmEval-as-a-Service” API; threshold-based moderation signals; human-in-the-loop review.

- Assumptions/dependencies: Correlation of automated scores with human perception in target domain; policy acceptance; false-positive mitigation.

- Synthetic video data generation for model training (Software/AI)

- Produce camera-diverse, character-consistent video datasets to augment training of VLMs/action recognition and grounding models.

- Tools/workflows: Shot schema generators; parameterized camera movement catalogs; dataset manifests with metadata.

- Assumptions/dependencies: Distributional coverage vs. real-world footage; annotation quality; content rights and synthetic data disclosure.

- Localization and cultural adaptation at scale (Media/Entertainment; Ad-Tech)

- Create culturally tailored cuts and soundscapes guided by region-specific audience profiles; swap VO and music while preserving shot rhythm.

- Tools/workflows: “Cultural Adaptation Profiler”; multi-language VO; music palette by territory; regional compliance presets.

- Assumptions/dependencies: Accurate cultural profiles; local content rules; localization QA (linguistic and cultural).

- Accessible narration and sound mixing (Accessibility; Public Sector)

- Generate descriptive audio for visually impaired viewers and ensure intelligible VO over music via automated mixing (LUFS normalization, sidechain).

- Tools/workflows: “Descriptive Audio Generator”; intelligibility checker; accessibility presets.

- Assumptions/dependencies: High-quality, expressive TTS in multiple languages; regulation-aligned loudness standards.

- Creator tools for short-form storytelling (Creator Economy; Daily Life)

- One-click generation of multi-shot skits with consistent characters/locations and polished audio for TikTok/Reels/YouTube Shorts.

- Tools/workflows: Mobile-friendly templates; reference image picker; export to platform specs.

- Assumptions/dependencies: Copyright-safe reference images; compute cost per clip; platform policy compliance for AI content.
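The rough-cut-to-fine-cut workflow mentioned above (reordering, trimming, and speeding up clips based on feedback) can be pictured as a list of edit operations applied to a clip sequence. The sketch below is a minimal stand-in, not FilMaster's editing agent and not the OTIO schema: the `Clip` class, the edit dictionary format, and `apply_edits` are hypothetical, and the edits would in practice come from the simulated-audience feedback step.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Clip:
    name: str
    start: float        # in-point within the source clip, seconds
    end: float          # out-point within the source clip, seconds
    speed: float = 1.0  # playback speed multiplier

    @property
    def duration(self):
        """Duration on the timeline after trimming and speed change."""
        return (self.end - self.start) / self.speed

def apply_edits(rough_cut, edits):
    """Apply trim, speed, and reorder operations; return the new clip sequence."""
    clips = {clip.name: clip for clip in rough_cut}
    order = [clip.name for clip in rough_cut]
    for edit in edits:
        op = edit["op"]
        if op == "trim":
            clip = clips[edit["clip"]]
            clips[clip.name] = replace(
                clip,
                start=max(clip.start, edit["start"]),
                end=min(clip.end, edit["end"]),
            )
        elif op == "speed":
            clips[edit["clip"]] = replace(clips[edit["clip"]], speed=edit["factor"])
        elif op == "reorder":
            order = list(edit["order"])
        else:
            raise ValueError(f"unknown edit operation: {op}")
    return [clips[name] for name in order]

# Hypothetical rough cut and feedback-derived edits.
rough_cut = [Clip("a", 0.0, 4.0), Clip("b", 0.0, 6.0), Clip("c", 0.0, 3.0)]
edits = [
    {"op": "trim", "clip": "b", "start": 1.0, "end": 4.0},
    {"op": "speed", "clip": "a", "factor": 2.0},
    {"op": "reorder", "order": ["c", "a", "b"]},
]

fine_cut = apply_edits(rough_cut, edits)
print([(clip.name, clip.duration) for clip in fine_cut])
```

Keeping edits as plain data makes each suggestion easy for a human editor to review, accept, or reject before it touches the timeline.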

Long-Term Applications

These use cases are plausible extensions but require further research, scaling, or ecosystem development (e.g., longer context reasoning, stronger video fidelity, licensing frameworks, real-time performance).

- Feature-length automated filmmaking with human-in-the-loop (Media/Entertainment)

- Scale FilMaster to 90–120 minute narratives with act-level rhythm control, character arcs, and continuity across thousands of shots.

- Tools/workflows: Long-context planning memory; production-grade asset/version management; distributed rendering/editing pipelines.

- Assumptions/dependencies: High-fidelity, long-duration video models; robust story/continuity reasoning; comprehensive rights management.

- Real-time adaptive cinema and interactive streaming (Media/Entertainment; Games)

- Dynamically re-edit pacing and sound based on viewer feedback/biometrics, creating personalized experiences on streaming platforms.

- Tools/workflows: “Audience Simulator” upgraded with real-time signals; low-latency edit engines; A/B personalization infra.

- Assumptions/dependencies: Privacy-compliant signal collection; real-time generation/mixing; platform integration.

- On-set virtual production co-pilot (Media/Entertainment; Software)

- Provide live shot suggestions and continuity checks on LED stages/Unreal sets; preview edits and sound beds during takes.

- Tools/workflows: Vision-based scene understanding; Unreal Engine adapter; latency-bounded RAG and edit recommendations.

- Assumptions/dependencies: Reliable on-set vision/telemetry; networked compute; union/guild workflow acceptance.

- Personalized therapeutic and educational video generation (Healthcare; Education)

- Generate calming or motivating narratives with controlled pacing and sound design tailored to patient/student profiles.

- Tools/workflows: Clinically-informed audience profiles; therapist/educator dashboards; safety filters for content suitability.

- Assumptions/dependencies: Clinical validation; data privacy/consent; bias mitigation and cultural sensitivity.

- Consumer “auto-edit my footage” companions (Daily Life; Consumer Electronics)

- Ingest raw phone/GoPro footage and generate cinematic edits with VO/music/SFX that match user-selected styles and audiences.

- Tools/workflows: Device-side summarization; cloud edit/render; preset style packs.

- Assumptions/dependencies: Edge/cloud hybrid compute; storage/bandwidth; licensing of music/SFX.

- Policy and standards for AI media provenance and evaluation (Policy/Standards)

- Extend OTIO with provenance metadata and FilmEval-derived quality tags; define best practices for dataset governance and fair use of film references.

- Tools/workflows: Standards proposals with SMPTE/AMWA; watermarking hooks; audit trails in OTIO.

- Assumptions/dependencies: Multi-stakeholder adoption; regulatory harmonization; enforceable provenance frameworks.

- Multilingual, culturally aware dubbing and re-editing (Media/Entertainment; Localization)

- Automate culturally adapted pacing, camera emphasis via re-ordering, and idiomatic VO for global releases.

- Tools/workflows: Culture-aware rhythm maps; prosody-aware TTS; regional compliance validators.

- Assumptions/dependencies: High-quality language/culture models; human cultural review; region-specific rights.

- Synthetic environments for robotics and egocentric AI (Robotics; Software/AI)

- Generate diverse, photoreal videos with complex egomotion and occlusions to train navigation/perception models.

- Tools/workflows: Camera-motion curriculum; action-conditioned scene synthesis; automatic annotation pipelines.

- Assumptions/dependencies: Physical plausibility and domain transfer; safety assessments; compute for scale.

- Compliance scoring for financial and regulated marketing videos (Finance; RegTech)

- Use FilmEval-like criteria extended with policy checks to flag pacing, claims, or audio emphasis that could mislead.

- Tools/workflows: “Regulatory Cut Checker”; policy templates; audit logs.

- Assumptions/dependencies: Domain-specific policy codification; legal sign-off; explainable scoring.

- End-to-end commercial products built on FilMaster modules (Software; SaaS)

- Productize modules as APIs: Cinematography RAG, Audience Simulator, Auto-Sound Designer, FilmEval QA.

- Tools/workflows: Usage-based SaaS; NLE/DAW plugins; enterprise SLAs and privacy controls.

- Assumptions/dependencies: Stable model supply; cost-effective inference; IP indemnification and dataset licensing.

Notes on cross-cutting assumptions and dependencies

- Model availability and quality: Results depend on access to strong (M)LLMs and video diffusion models; improvements in long-context reasoning and motion fidelity will expand applicability.

- Data rights and governance: Use of 440k film references and audio libraries must comply with licensing/fair use; provenance and consent for audience profiles are critical.

- Integration and standards: OTIO interoperability with NLEs/DAWs is a key enabler; additional adapters (AAF/XML, Pro Tools/Fairlight) may be needed.

- Human oversight: Editorial, cultural, and ethical reviews remain necessary, especially for regulated domains and localization.

- Compute and cost: Iterative RAG, generation, and mixing can be GPU-intensive; cost controls and batching are needed for scale.

Glossary

- Audience-Centric Cinematic Rhythm Control: A post-production module that uses simulated audience feedback to refine pacing, structure, and audio-visual integration. "Guided by our second principle, we propose Audience-Centric Cinematic Rhythm Control module to address the common issue of flat, unengaging AI-generated content by emulating professional, audience-centric post-production workflows."

- Audiovisual synchronization: The precise alignment of audio elements with corresponding visual events across different temporal scales. "Sound design ensures that a rich, multi-layered soundscape is crafted, integrating diverse audio elements (background ambiance, musical scoring, voice-overs (VO), foley, and sound effects (SFX)) with multi-scale audiovisual synchronization."

- Camera language: The set of cinematographic techniques (shot types, movements, angles) used to communicate narrative and emotion. "(1) Camera Language: the artful use of cinematographic techniques to convey narrative and emotion by crafting the visual language"

- Chain of Thought planning: A structured reasoning approach where multi-step plans are generated to organize complex tasks. "MovieAgent~\cite{wu2025movieagent} multi-agent Chain of Thought planning for movie generation, which automatically structures scenes, camera settings, and cinematography."

- Cinematic rhythm: The audience-focused pacing and flow shaped through editing and sound to guide emotional experience. "(2) Cinematic Rhythm: the masterful orchestration of pacing and flow through editing and sound to shape the audience's emotional journey"

- Cinematography: The craft and technique of capturing visual storytelling through camera work and lighting. "(1) Learning Cinematography from Extensive Real-World Film Data."

- Diffusion models: Generative models that iteratively denoise signals to produce high-quality samples, here used for video synthesis. "Video diffusion models excel by progressively refining noisy inputs into clean video samples"

- Embedding model: A model that converts text (or other modalities) into vector representations for retrieval or similarity search. "Secondly, the scene block is encoded into vector representations using an embedding model and stored in a vector database as text embeddings"

- Fine Cut: A refined edit after the Rough Cut that finalizes structure and timing based on feedback, before sound finishing. "This feedback then guides a sophisticated Fine Cut editing process."

- Foley: Custom-recorded sound effects that mimic real-world actions to enhance realism. "integrating diverse audio elements (background ambiance, musical scoring, voice-overs (VO), foley, and sound effects (SFX))"

- Latent diffusion: A diffusion process operating in a compressed latent space to efficiently synthesize high-quality outputs. "demonstrating remarkably high-quality visual synthesis via sophisticated latent diffusion techniques."

- LUFS normalization: Loudness normalization to a standardized LUFS level to ensure consistent perceived volume across tracks. "These processes, including LUFS normalization and frequency adjustments, ensure cross-track harmonization, voice intelligibility, and overall sonic cohesion, resulting in a polished soundscape."

- Multimodal LLMs ((M)LLMs): LLMs that process and reason across multiple modalities (text, audio, image, video). "The whole system is empowered by generative AI models such as (M)LLMs, and video generation models."

- OpenTimelineIO (OTIO): An industry-standard format for representing editable timelines of audio and video for interchange with editors. "using the industry-standard OpenTimelineIO (OTIO) format~\cite{openTimelineIO}."

- Picture lock: The stage where the visual edit is finalized and no further changes to picture timing are made. "culminating in a picture lock, which is a finalized visual sequence ready for detailed sound design."

- Post-production: The phase after filming that involves editing, sound design, mixing, and finishing to craft the final work. "Post-production is crucial in filmmaking for an engaging experience, where proper pacing, and the effective integration of audiovisual elements are paramount~\cite{case2013filmPostProduction}."

- Retrieval-Augmented Generation (RAG): A method that enhances generation by retrieving relevant references from a database to ground outputs. "FilMaster utilizes RAG with a vast dataset of 440,000 real film clips to retrieve relevant cinematic descriptions"

- Rough Cut: The initial assembly of footage establishing basic narrative structure before detailed refinement. "The process begins with assembling a Rough Cut to establish the basic narrative structure."

- Shot re-planning: LLM-guided redesign of shots (types, movements, angles) to ensure coherent visual language within a scene. "The LLM then re-plans the multi-shot prompts to ensure consistent camera language within a scene block."

- Sound design: The creative construction of a multi-layered soundscape (ambience, music, VO, foley, SFX) to enhance storytelling. "Sound design ensures that a rich, multi-layered soundscape is crafted, integrating diverse audio elements (background ambiance, musical scoring, voice-overs (VO), foley, and sound effects (SFX)) with multi-scale audiovisual synchronization."

- Sound mixing: Technical balancing of audio tracks (levels, EQ, dynamics) to achieve clarity and cohesion. "FilMaster applies automated sound mixing techniques (Detailed in~\Cref{app: sound_design})."

- Spatio-Temporal-Aware Indexing: An indexing method that encodes scene context (space, time, characters, objectives) for retrieval and planning. "Spatio-Temporal-Aware Indexing."

- Vector database: A database optimized for storing and querying high-dimensional vectors used in similarity search. "stored in a vector database as text embeddings"

- Voice-over (VO): Narration recorded off-screen to convey story, context, or character thoughts over visuals. "integrating diverse audio elements (background ambiance, musical scoring, voice-overs (VO), foley, and sound effects (SFX))"
