- The paper presents the IMMP algorithm, a hierarchical approach that merges diverse motion planning models while preserving key interaction features.

- It employs module-specific checkpoint pooling and target-guided weight learning to mitigate domain imbalance and catastrophic forgetting.

- Empirical results show that IMMP outperforms traditional ensemble and domain adaptation methods with improved safety, goal success, and reduced computational cost.

Interaction-Merged Motion Planning: Leveraging Diverse Motion Datasets via Hierarchical Model Merging

Introduction

Autonomous robot motion planning faces persistent challenges because the motion datasets it learns from embed heterogeneous agent interactions and environmental characteristics. Existing approaches, including joint domain adaptation, domain generalization, and ensemble learning, struggle with domain imbalance, catastrophic forgetting, and significant computational cost when leveraging multiple data sources. The paper "Interaction-Merged Motion Planning: Effectively Leveraging Diverse Motion Datasets for Robust Planning" (2507.04790) introduces Interaction-Merged Motion Planning (IMMP), a hierarchical checkpoint-merging strategy purpose-built for motion planning. IMMP addresses these domain gaps by decoupling agent behaviors and interactions at the level of model modules and then merging the modules in a targeted way that preserves critical feature hierarchies, which improves adaptability to novel target domains. This essay walks through the architecture, the experimental framework, the empirical evidence, and the theoretical implications in the context of multi-domain motion planning.

Motivation and Limitations of Prior Art

Motion planning models, especially in autonomous driving and social navigation, are trained on datasets with heterogeneous distributions, ranging from Human-Human Interaction (HHI) data to Human-Robot Interaction (HRI) collections, which encode starkly different social and kinematic dynamics. Naively combining these datasets or adapting to new domains is suboptimal: under domain imbalance some datasets dominate training, adaptation can induce catastrophic forgetting, and classical ensemble learning is computationally prohibitive at inference time.

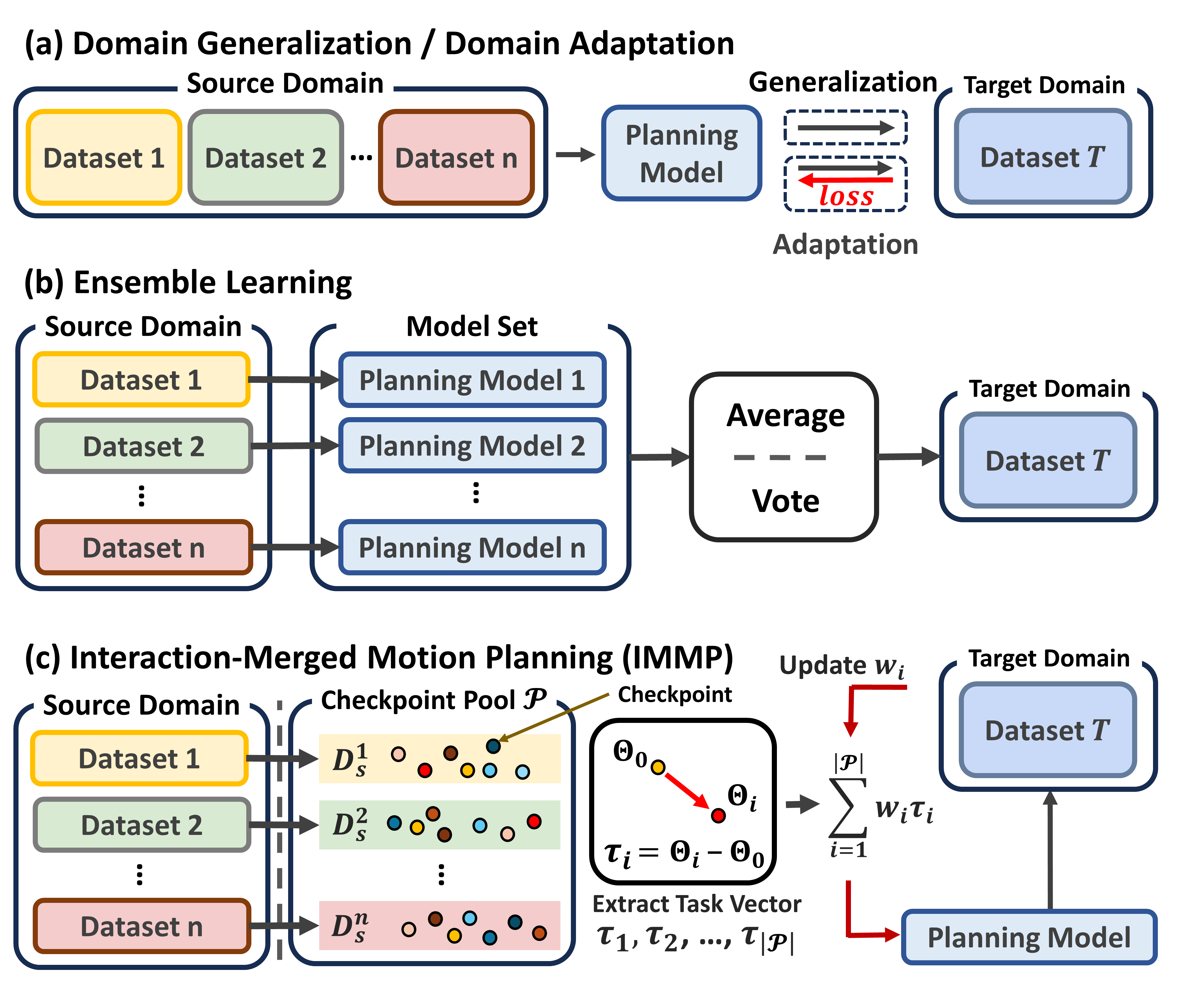

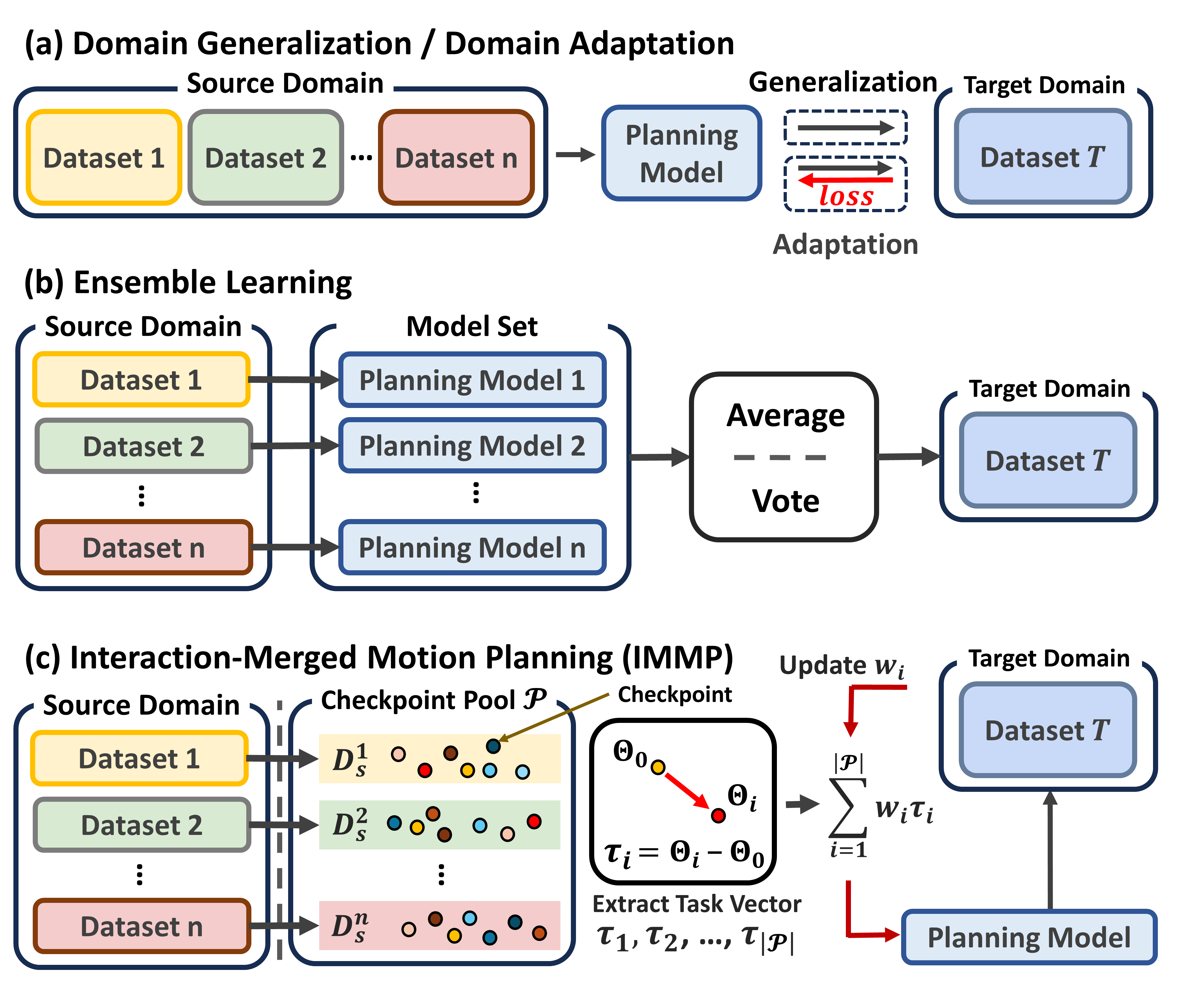

Figure 1: Schematic comparison: (a) domain adaptation/generalization through direct concatenation, (b) ensemble inference with multiple models, and (c) the proposed IMMP checkpoint pooling and hierarchical task vector merging.

Furthermore, existing model merging techniques (Averaging, Task Arithmetic, Ties-Merging) are largely tuned for vision and NLP tasks, relying on large model pretraining and single-metric selection, and they fail to account for the interaction-centric and multi-metric nature of motion planning. They either collapse crucial interaction features or erase submodules vital for robust adaptation.

IMMP Framework: Interaction-Conserving Hierarchical Merging

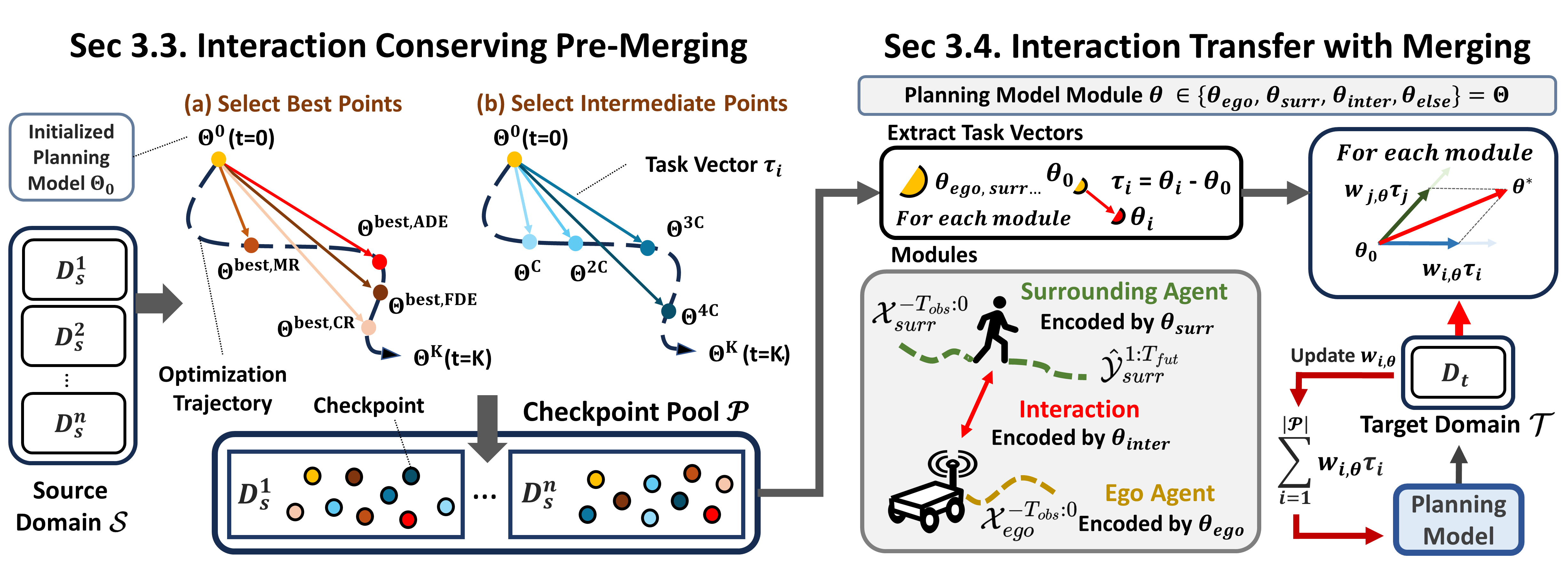

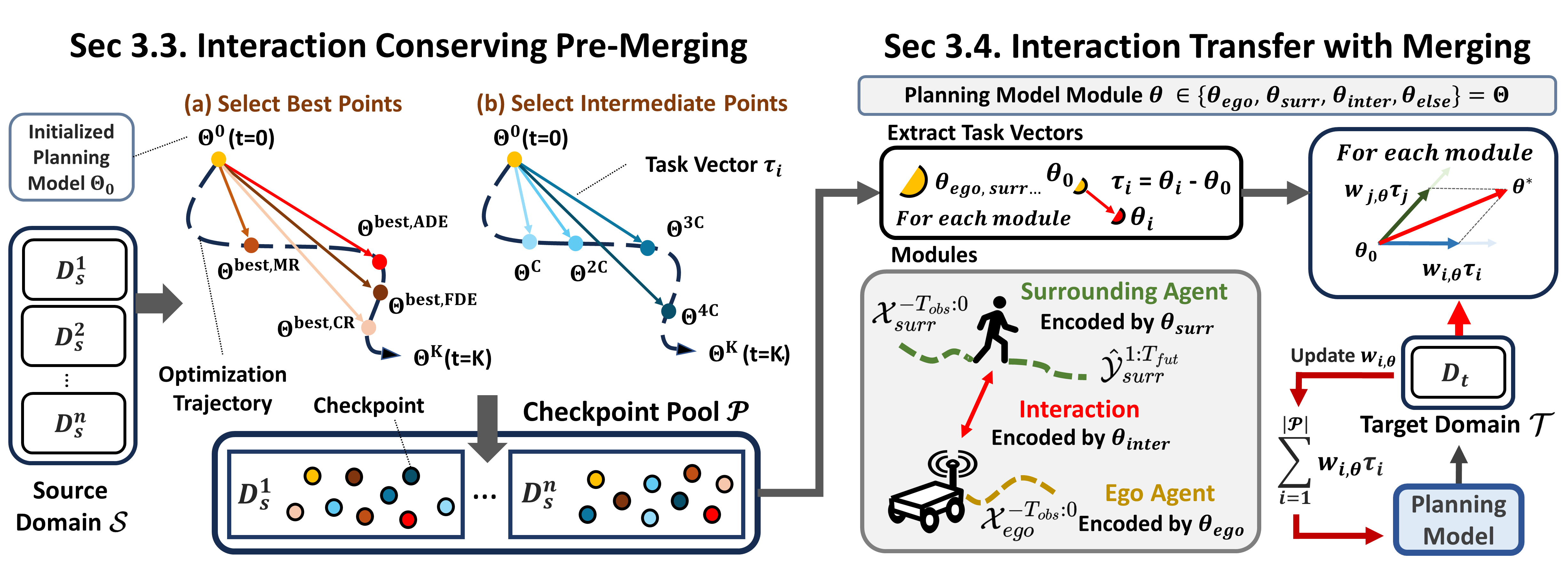

The IMMP algorithm is decomposed into two explicit stages: (1) interaction-conserving pre-merging and (2) interaction-level merging. The core idea is to disentangle the model into key functional modules—ego encoder, surroundings encoder, interaction encoder, and decoder—and to pool diverse parameter checkpoints across metrics and epochs for each source domain.

Figure 2: Architecture of IMMP. Checkpoint pools are constructed to represent distinct source domain behaviors, and module-specific task vectors are merged with learned weights to maximize target domain performance.

During pre-merging, checkpoints are selected both at intervals along the optimization trajectory and according to the best value achieved on each motion planning metric (ADE, FDE, Miss Rate, Collision Rate). Pooling by metric and by epoch keeps the pool diverse and keeps agent behavior, collision avoidance, and goal-seeking strategies separable. For merging, module-level task vectors τ_i (parameter differences) are extracted for each module of the planning model. Linear weights w_{i,θ} for each source are then learned to minimize the loss on limited target-domain data, which yields a highly adaptable initialization for the target task.
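
The merging step can be illustrated with a toy sketch. It is not the paper's implementation: the two-module linear "planner", the base checkpoint, the two source checkpoints, and the target data are all hypothetical, chosen so that one source matches the target's ego behaviour and the other matches its interaction behaviour. Only the merging weights are trained; the checkpoints themselves stay frozen.

```python
# Toy sketch of module-wise task-vector merging. Every name and number is hypothetical.
MODULES = ("ego", "interaction")


def task_vector(checkpoint, base):
    """Per-module parameter difference between a source checkpoint and the base."""
    return {m: [c - b for c, b in zip(checkpoint[m], base[m])] for m in MODULES}


def merge(base, task_vectors, weights):
    """theta[m] = base[m] + sum_i weights[i][m] * task_vectors[i][m]."""
    merged = {m: list(base[m]) for m in MODULES}
    for tv, w in zip(task_vectors, weights):
        for m in MODULES:
            for k, delta in enumerate(tv[m]):
                merged[m][k] += w[m] * delta
    return merged


def predict(params, x):
    """Stand-in linear planner: a weighted sum of ego and interaction features."""
    return sum(p * xi for m in MODULES for p, xi in zip(params[m], x[m]))


def target_loss(params, data):
    """Mean squared error on the (small) target-domain sample."""
    return sum((predict(params, x) - y) ** 2 for x, y in data) / len(data)


def learn_weights(base, task_vectors, data, steps=1000, lr=0.1):
    """Gradient descent on the merging weights only; checkpoints stay frozen."""
    weights = [{m: 0.0 for m in MODULES} for _ in task_vectors]
    for _ in range(steps):
        params = merge(base, task_vectors, weights)
        grad = {m: [0.0] * len(base[m]) for m in MODULES}  # dL/dtheta per module
        for x, y in data:
            err = 2.0 * (predict(params, x) - y) / len(data)
            for m in MODULES:
                for k, xi in enumerate(x[m]):
                    grad[m][k] += err * xi
        for w, tv in zip(weights, task_vectors):
            for m in MODULES:
                # Chain rule: dL/dw[i][m] = <dL/dtheta[m], tau_i[m]>
                w[m] -= lr * sum(g * d for g, d in zip(grad[m], tv[m]))
    return weights


base = {"ego": [0.0, 0.0], "interaction": [0.0, 0.0]}
source_a = {"ego": [1.0, 0.5], "interaction": [1.0, 0.0]}   # matches the target's ego behaviour
source_b = {"ego": [0.0, -1.0], "interaction": [0.0, 2.0]}  # matches the target's interactions
target = {"ego": [1.0, 0.5], "interaction": [0.0, 2.0]}     # used only to label the toy data

inputs = [
    {"ego": [1.0, 0.0], "interaction": [0.0, 0.0]},
    {"ego": [0.0, 1.0], "interaction": [0.0, 0.0]},
    {"ego": [0.0, 0.0], "interaction": [1.0, 0.0]},
    {"ego": [0.0, 0.0], "interaction": [0.0, 1.0]},
    {"ego": [1.0, 1.0], "interaction": [1.0, 1.0]},
]
data = [(x, predict(target, x)) for x in inputs]

task_vectors = [task_vector(source_a, base), task_vector(source_b, base)]
weights = learn_weights(base, task_vectors, data)
merged = merge(base, task_vectors, weights)
print(weights)
print(target_loss(merged, data))
```

In this toy setting the learned weights take the ego module from the first source and the interaction module from the second, which a single per-model weight could not express.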

Empirical Validation

IMMP was evaluated on a diverse set of planning backbones (GameTheoretic [kedia2023GameTheoretic], DIPP [huang2023DIPP], and DTPP [huang2024DTPP]) and benchmarks (ETH-UCY, CrowdNav, THOR, SIT). Source domains systematically exclude the current target, allowing controlled adaptation and evaluation.
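
The leave-the-target-out protocol can be written down in a few lines. This is only a sketch: it treats each named benchmark as a single domain for simplicity, which is an assumption, since the evaluation may split benchmarks into finer-grained domains.

```python
# Benchmarks named in the text; treating each one as a single domain is an assumption.
DATASETS = ("ETH-UCY", "CrowdNav", "THOR", "SIT")


def source_target_splits(datasets):
    """For every target domain, use all remaining domains as sources."""
    return {target: [d for d in datasets if d != target] for target in datasets}


splits = source_target_splits(DATASETS)
for target, sources in splits.items():
    print(f"target={target:9s} sources={sources}")
```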

Across all backbone/target pairs, IMMP, especially with fine-tuning, consistently outperformed domain generalization/adaptation as well as all leading merging and ensemble baselines in effectiveness (ADE), goal success (FDE/Miss Rate), and safety (Collision Rate) at equal or lower inference cost. Critically, ensemble approaches required up to 7× more compute in multi-source settings, while existing merging baselines either failed to preserve critical features or lost information.
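
For readers unfamiliar with the four metrics, the sketch below shows one common way to compute them on 2D trajectories. The miss threshold and the collision radius are assumed values for illustration, not the paper's settings, and the trajectories are made up.

```python
import math


def ade(pred, gt):
    """Average displacement error: mean L2 distance over all timesteps."""
    return sum(math.dist(p, g) for p, g in zip(pred, gt)) / len(gt)


def fde(pred, gt):
    """Final displacement error: L2 distance at the last timestep."""
    return math.dist(pred[-1], gt[-1])


def miss_rate(preds, gts, threshold=2.0):
    """Fraction of plans whose final error exceeds `threshold` (assumed value)."""
    return sum(fde(p, g) > threshold for p, g in zip(preds, gts)) / len(gts)


def collision_rate(ego_plans, scenes, radius=0.5):
    """Fraction of plans that come within `radius` (assumed) of any other agent."""
    def collides(ego, others):
        return any(math.dist(e, o) < radius
                   for other in others for e, o in zip(ego, other))
    return sum(collides(e, s) for e, s in zip(ego_plans, scenes)) / len(ego_plans)


# Made-up trajectories: one plan follows the ground truth, one drifts away.
gt = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
plan_good = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
plan_bad = [(0.0, 0.0), (1.0, 0.0), (2.0, 3.0)]

# One neighbouring agent per scene; the second passes close to the ego at t=1.
scene_clear = [[(5.0, 5.0), (5.0, 5.0), (5.0, 5.0)]]
scene_close = [[(3.0, 3.0), (1.2, 0.0), (3.0, 3.0)]]

print(ade(plan_bad, gt), fde(plan_bad, gt))
print(miss_rate([plan_good, plan_bad], [gt, gt]))
print(collision_rate([plan_good, plan_bad], [scene_clear, scene_close]))
```

All four quantities are lower-is-better, which is why a checkpoint that is best on one of them need not be best on the others.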

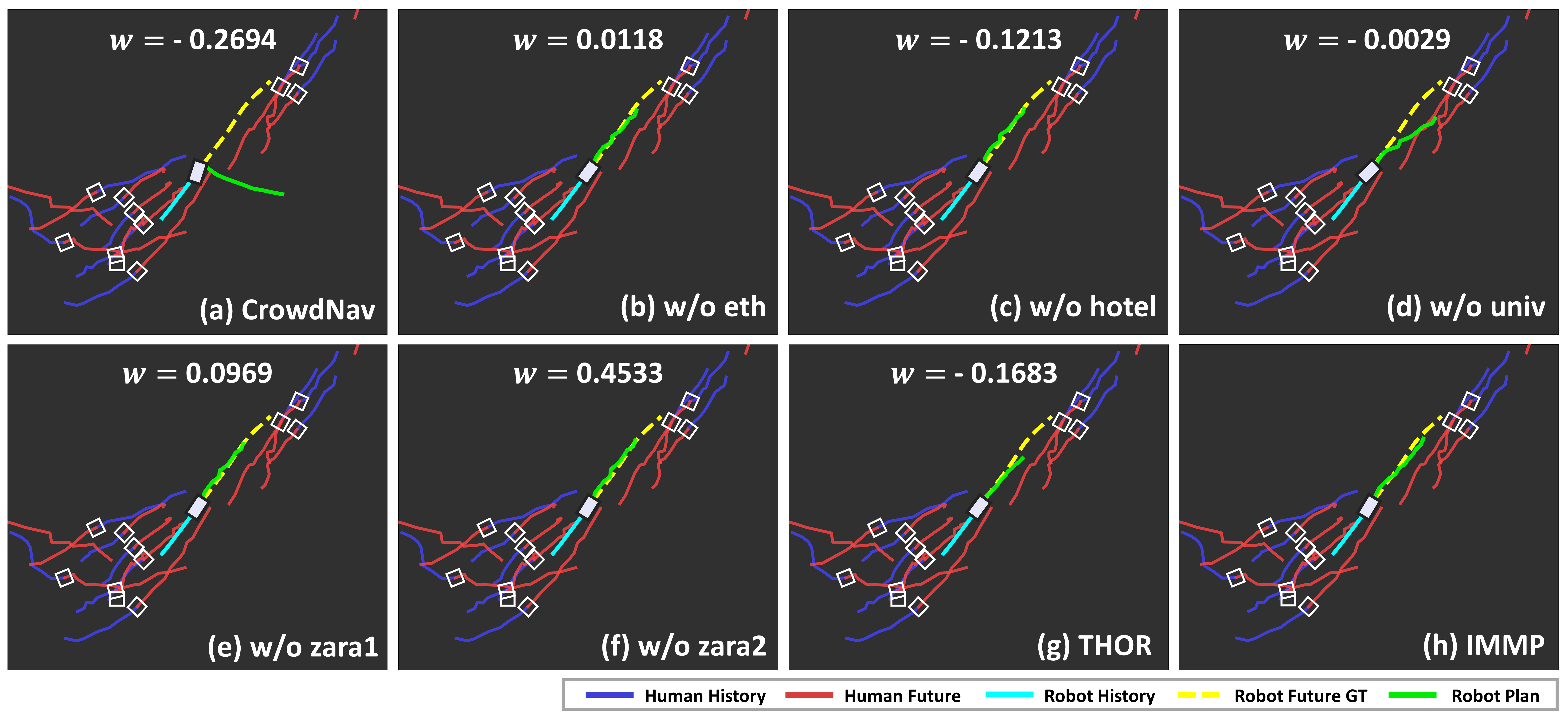

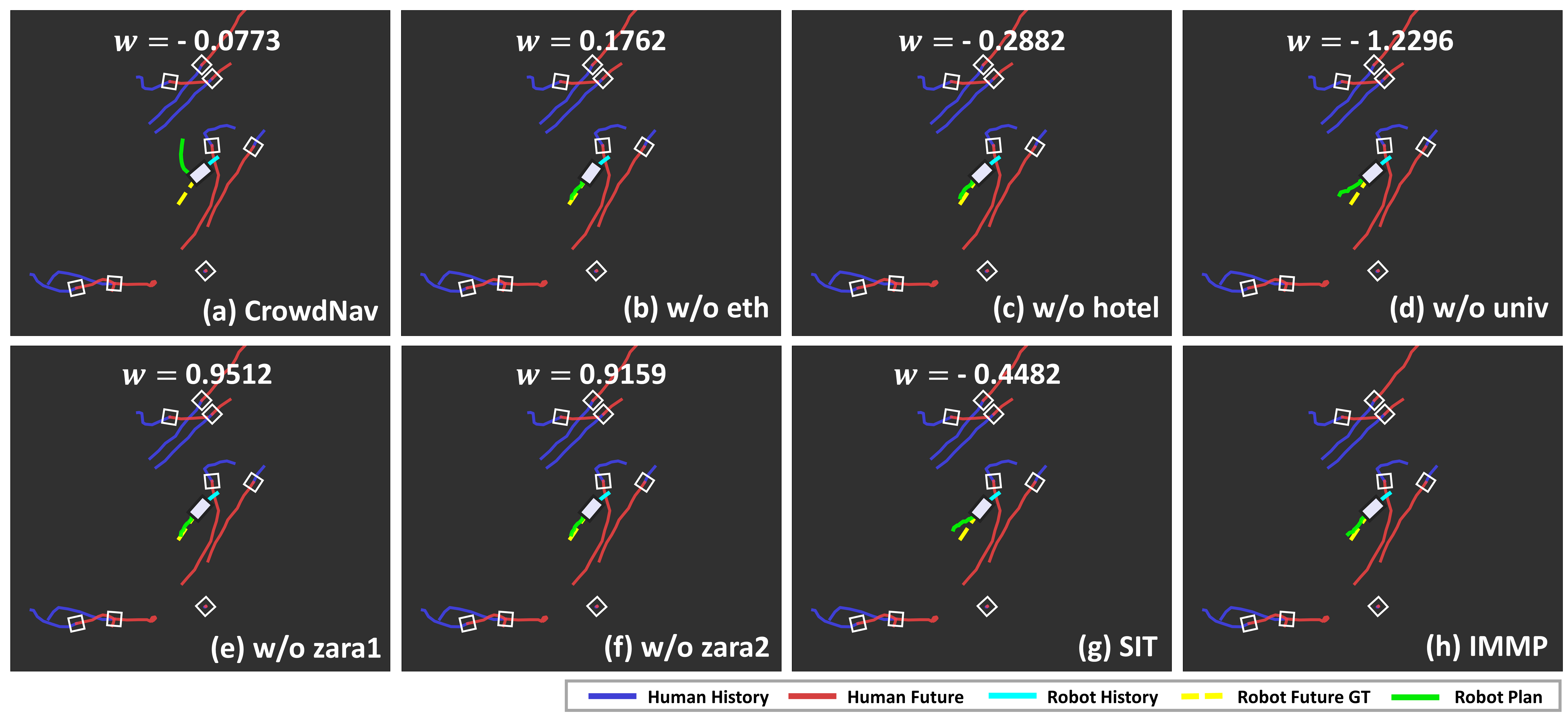

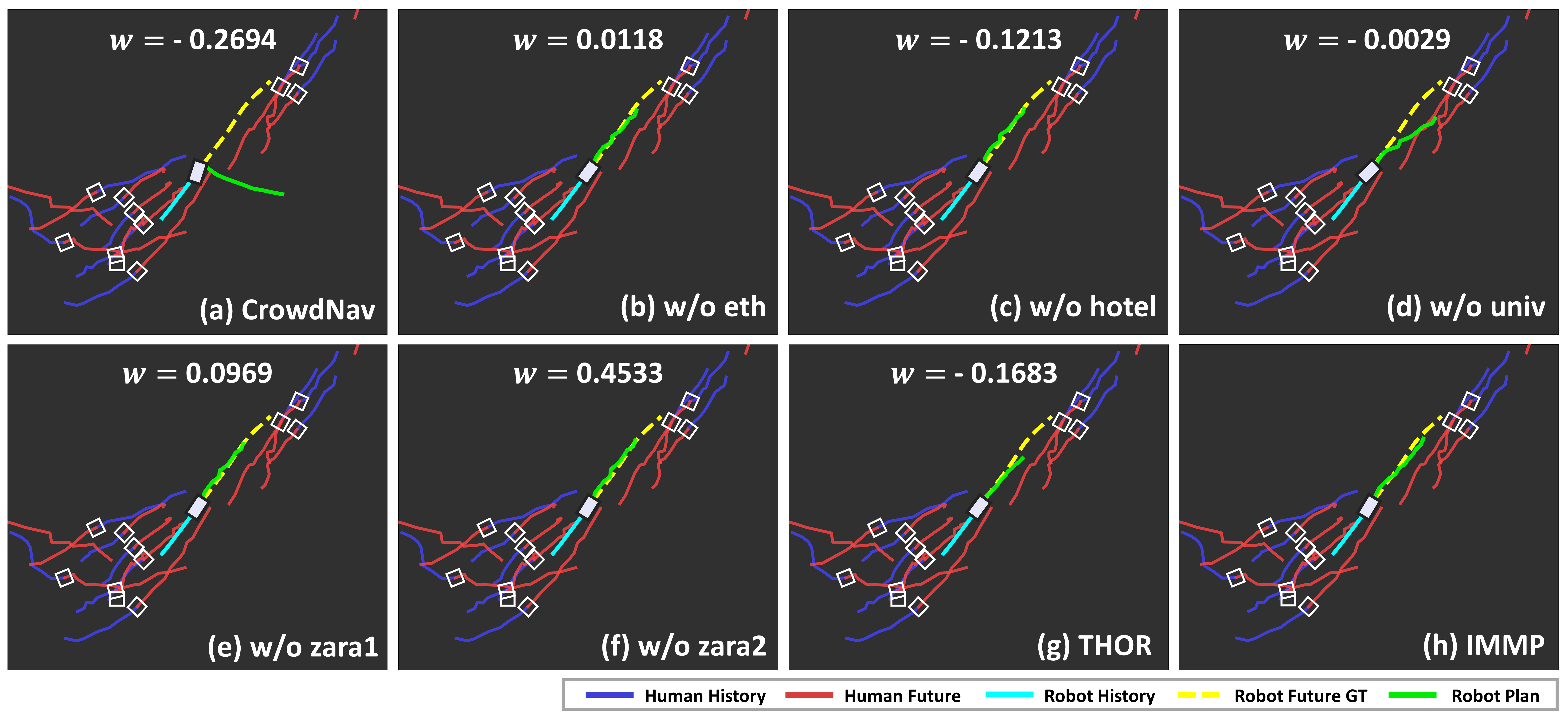

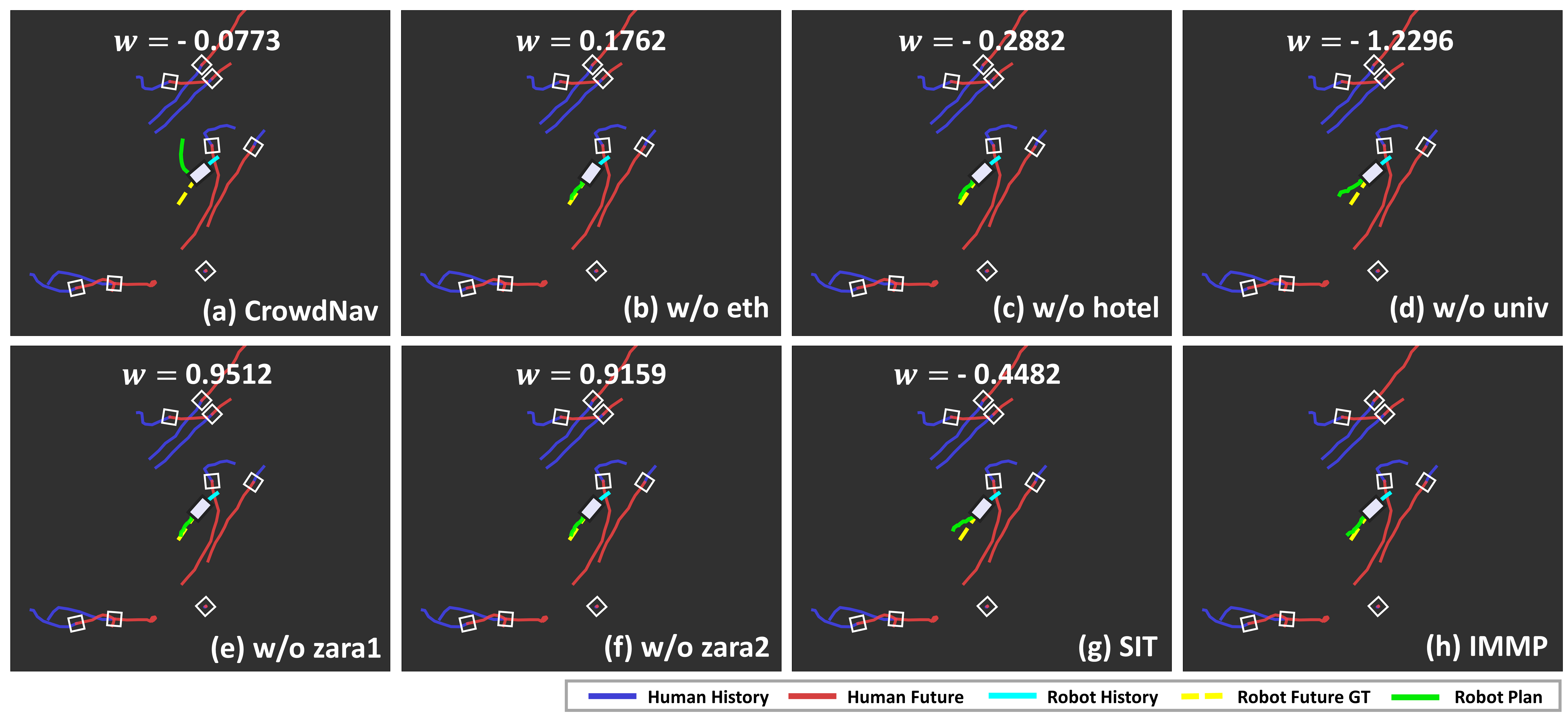

Figure 3: Qualitative outputs on SIT with GameTheoretic. Panels (a)-(g) show target-domain rollouts from source-trained models; (h) shows IMMP’s merge. IMMP assigns high weights to sources whose individually transferred models perform well on the target.

Ablations demonstrated the necessity of interaction-level module granularity: merging at the full-model level or at the individual-parameter level degraded effectiveness and goal success because it disrupts the planning feature hierarchy, whereas module grouping that respects the planning architecture (ego, agent, interaction) retained essential behaviors.
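
One way to see what the three granularities mean is to count how many merging coefficients each one learns. The sketch below assumes that model-level merging uses one weight per source, module-level merging one weight per source and module, and parameter-level merging one weight per source and parameter. The module sizes and the number of sources are hypothetical; the module names follow the four modules listed in the framework description.

```python
# Hypothetical parameter counts for the four modules named in the framework section.
MODULE_SIZES = {
    "ego_encoder": 1200,
    "surroundings_encoder": 1800,
    "interaction_encoder": 2400,
    "decoder": 900,
}


def num_merge_weights(module_sizes, num_sources, granularity):
    """Number of learnable merging coefficients at a given granularity."""
    if granularity == "model":
        per_source = 1                           # one scalar for the whole model
    elif granularity == "module":
        per_source = len(module_sizes)           # one scalar per module
    elif granularity == "parameter":
        per_source = sum(module_sizes.values())  # one scalar per parameter
    else:
        raise ValueError(f"unknown granularity: {granularity!r}")
    return num_sources * per_source


counts = {g: num_merge_weights(MODULE_SIZES, num_sources=7, granularity=g)
          for g in ("model", "module", "parameter")}
print(counts)
```

Module-level merging sits between the two extremes: it has enough freedom to treat modules differently, but far fewer coefficients to fit from limited target data than parameter-wise merging.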

Analysis of Checkpoint Pooling and Granularity

Metric-wise and intermediate-epoch checkpoint pooling were shown to generate pools representing complementary source domain behaviors and strategies. Increasing pool size and diversity resulted in monotonic improvements in post-merging and fine-tuned performance, validating the importance of capturing non-redundant, task-specific planning signatures.
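
A minimal sketch of such a pool, assuming a per-epoch validation log is available: it keeps every checkpoint saved at a fixed epoch interval plus the checkpoint that is best on each metric. The log format, the interval, and the metric values are made up for illustration.

```python
# All four metrics are lower-is-better.
METRICS = ("ADE", "FDE", "miss_rate", "collision_rate")


def build_pool(history, every):
    """Map each selected epoch to the reasons it entered the pool.

    `history` is a hypothetical list of per-epoch records holding validation metrics.
    """
    selected = {}
    for record in history:
        if record["epoch"] % every == 0:            # intermediate-epoch pooling
            selected.setdefault(record["epoch"], set()).add("interval")
    for metric in METRICS:                          # metric-wise pooling
        best = min(history, key=lambda r: r[metric])
        selected.setdefault(best["epoch"], set()).add("best_" + metric)
    return dict(sorted(selected.items()))


# Synthetic training log for a single source domain.
rows = [
    (1, 0.90, 1.80, 0.40, 0.12),
    (2, 0.70, 1.50, 0.35, 0.10),
    (3, 0.60, 1.35, 0.31, 0.04),
    (4, 0.55, 1.20, 0.29, 0.06),
    (5, 0.52, 1.12, 0.27, 0.07),
    (6, 0.48, 1.08, 0.26, 0.08),
    (7, 0.50, 1.02, 0.24, 0.09),
    (8, 0.53, 1.06, 0.25, 0.11),
]
history = [dict(zip(("epoch",) + METRICS, row)) for row in rows]

pool = build_pool(history, every=4)
for epoch, reasons in pool.items():
    print(epoch, sorted(reasons))
```

In this synthetic log the checkpoint with the lowest collision rate comes from an early epoch and the one with the lowest ADE from a later one, so selecting on a single metric would discard one of these behaviours.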

Additionally, module separation (ego agent, human agent, interaction encoder) aligns with the observed differences in dataset kinematics and social conventions. An ablation that consolidated modules (e.g., ego+interaction) degraded all primary metrics, indicating that domain-specific interaction kernels need to be weighted independently of the other modules before combination.

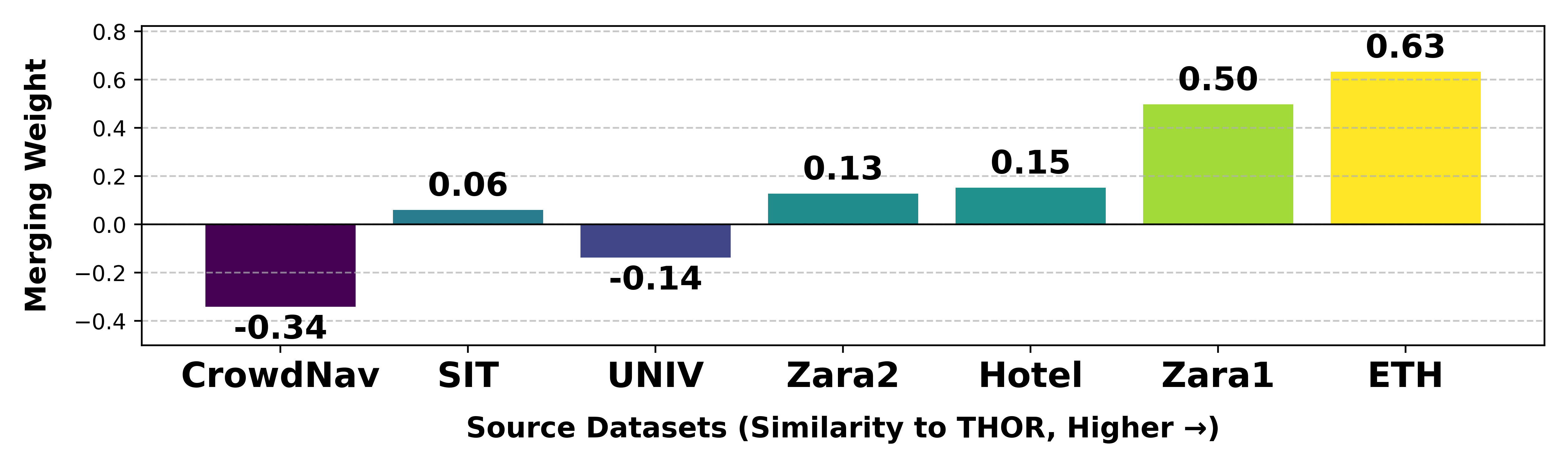

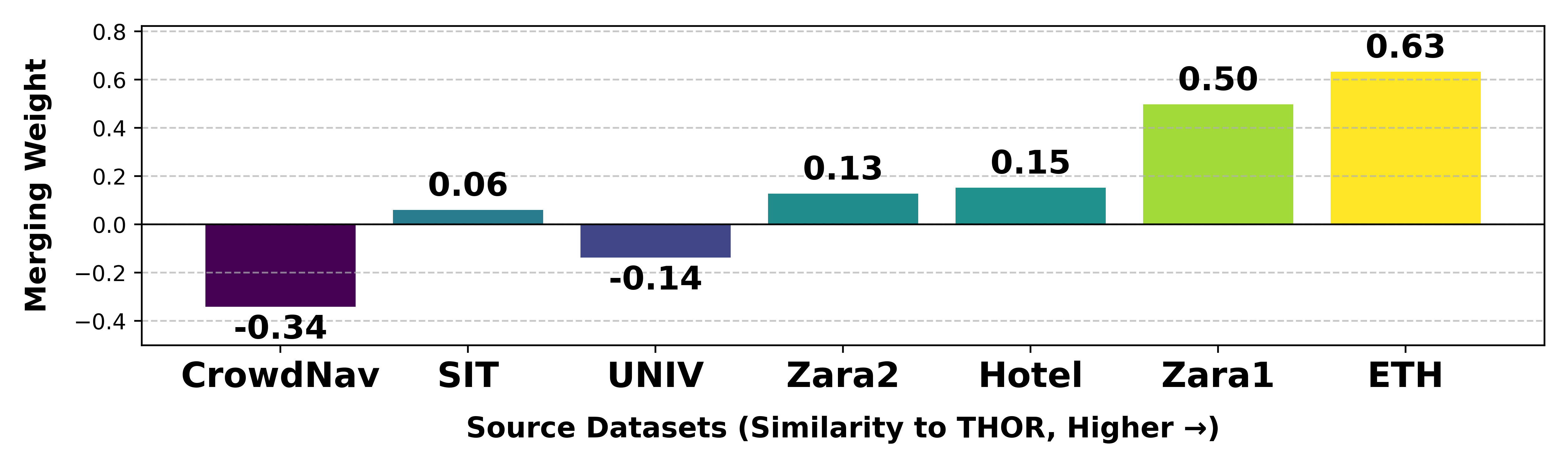

Merging Weights and Domain Similarity

Qualitative and quantitative analysis revealed that IMMP’s learned merging weights correlate strongly with zero-shot domain similarity: source domains whose models yield better zero-shot target inference are preferentially weighted during merging—ranking even above larger, more diverse, but less relevant sources. This behavior supports IMMP’s claim of mitigating domain imbalance and catastrophic forgetting without source data access.

Figure 4: Qualitative results on THOR with GameTheoretic. Domain assignment and weight adaptation are visually consistent across targets.

Figure 5: Scatter of merging weights versus estimated similarity for THOR target—source domains with higher similarity are more heavily utilized in IMMP’s merged policy.
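
A relationship of this kind can be checked with a rank correlation between each source's similarity to the target and its learned merging weight. The sketch below uses negative zero-shot ADE as the similarity score, which is an assumption rather than the paper's stated measure, and all numbers are synthetic.

```python
def ranks(values):
    """Rank positions (0 = smallest); assumes there are no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order):
        result[index] = rank
    return result


def spearman(a, b):
    """Spearman rank correlation for tie-free samples."""
    n = len(a)
    d2 = sum((x - y) ** 2 for x, y in zip(ranks(a), ranks(b)))
    return 1 - 6 * d2 / (n * (n * n - 1))


# Synthetic values for four hypothetical source domains.
zero_shot_ade = {"A": 0.9, "B": 0.6, "C": 1.4, "D": 1.0}     # lower = closer to target
merge_weight = {"A": 0.20, "B": 0.45, "C": 0.05, "D": 0.30}

names = sorted(zero_shot_ade)
similarity = [-zero_shot_ade[n] for n in names]  # assumed similarity score
weight = [merge_weight[n] for n in names]

rho = spearman(similarity, weight)
print(rho)
```

A value of rho close to 1 would mean that sources that transfer well without adaptation are also the ones the merge relies on most.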

Implications and Future Work

IMMP demonstrates that domain-robust motion planning in the presence of heterogeneous agent behaviors depends on two factors: preserving domain-specific interaction kernels at the modular level and adaptively weighting them via checkpoint merging guided by target data. The practical implications are substantial: practitioners can pre-pool models from diverse environments and adapt to new domains in a highly data- and compute-efficient fashion, avoiding the prohibitive cost of ensembling or naive re-training.

This architectural prior, modular merging at the feature/interaction level, is extensible to general multi-module architectures, including those in multi-agent reinforcement learning, social navigation for real-world robots, and even end-to-end control settings leveraging large visuo-motor models. The theoretical perspective aligns with emerging principles in modular policy transfer, task arithmetic, and meta-transfer learning.

Future work may explore adaptively learned module groupings, dynamic pool expansion during deployment, or self-supervised similarity estimation without explicit labels. There is also scope for exploring the boundary between parameter-based merging and representation-level fusion, particularly as planning models grow in scale and adopt more heterogeneous world representations.

Conclusion

IMMP delivers a motion-planning-specific model merging paradigm that combines multi-domain parameter checkpoints with interaction-level module decomposition and target-guided weight learning. Extensive empirical results on both simulation and real-world datasets show that it outperforms classical generalization, adaptation, ensembling, and naive model merging baselines. IMMP sets an architectural foundation for modular, robust policy transfer in complex, data-scarce, and safety-critical motion planning environments.
