Kodezi Chronos: A Debugging-First Language Model for Repository-Scale Code Understanding

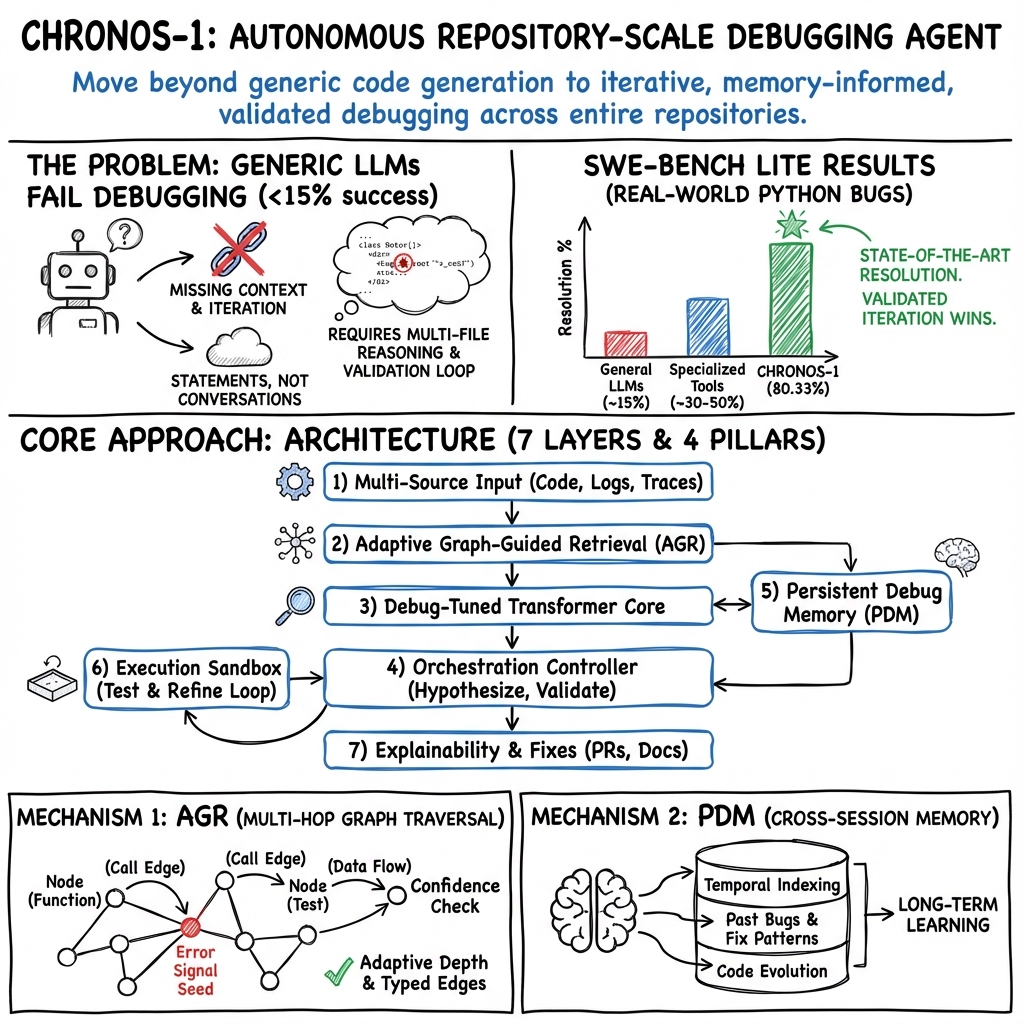

Abstract: LLMs have advanced code generation and software automation but remain constrained by inference-time context and lack structured reasoning over code, leaving debugging largely unsolved. While Claude 4.5 Opus achieves 74.40% on SWE-bench Verified and Gemini 3 Pro reaches 76.2%, both models remain below 20% on real multi-file debugging tasks. We introduce Kodezi Chronos-1, an LLM purpose-built for debugging that integrates Adaptive Graph-Guided Retrieval to navigate codebases up to 10 million lines (92% precision, 85% recall), Persistent Debug Memory trained on over 15 million sessions, and a seven-layer fix-test-refine architecture. On 5,000 real-world scenarios, Chronos-1 achieves 67.3% +/- 2.1% fix accuracy compared to 14.2% +/- 1.3% for Claude 4.1 Opus and 13.8% +/- 1.2% for GPT-4.1 (Cohen's d = 3.87). On SWE-bench Lite, Chronos-1 reaches a state-of-the-art 80.33% resolution rate (241 of 300), outperforming the next best system by 20 points and achieving repository-specific highs of 96.1% on SymPy and 90.4% on Django. Chronos-1 reduces debugging time by 40% and iterations by 65%, resolving complex multi-file and cross-repository bugs that require temporal analysis. Limitations remain for hardware-dependent and dynamic language errors. Chronos-1 will be available in Kodezi OS in Q4 2025 and via API in Q1 2026.

Explain it Like I'm 14

What is this paper about?

This paper introduces Kodezi Chronos-1, an AI model built specifically to find and fix software bugs. Unlike most code AIs that focus on writing new code, Chronos-1 is designed to understand large codebases (even millions of lines), track down the real reason a bug happens, try a fix, run tests, and keep improving until the problem is solved.

What goals and questions does it try to answer?

The paper aims to make AI better at real-world debugging, not just code completion. In simple terms, it tries to answer:

- How can an AI quickly find the right places in a huge project where a bug starts?

- How can it remember what worked (or failed) before, and learn over time?

- How can it test its own fixes and improve them step by step?

- Can this approach beat today’s best general-purpose code models on tough debugging tasks?

How does Chronos-1 work?

Think of debugging like a detective solving a mystery in a giant city (the codebase). Chronos-1 works with three main ideas:

- Adaptive Graph-Guided Retrieval (AGR): This is like using a smart GPS map of your code. It doesn’t read the entire project at once. Instead, it follows meaningful “roads” (links like function calls, imports, and dependencies) to hop between files and find the most relevant pieces. This makes it fast and precise, even in massive repositories.

- Persistent Debug Memory (PDM): Imagine a huge, organized notebook of past bugs, fixes, tests, and changes in the project. Chronos-1 keeps and updates this memory across many sessions and projects. That way, when a similar problem happens again, it remembers what solved it before.

- Iterative fix–test–refine loop: Chronos-1 doesn’t just guess once. It writes a fix, runs tests in a safe “sandbox” (like a mini lab where code changes can’t break the real system), reads the error messages, and then improves the fix. It repeats until the tests pass confidently.
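The fix–test–refine loop in the last bullet can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Chronos-1's implementation: `propose_fix` and `run_tests` are hypothetical callables standing in for the model and the sandboxed test runner, and the iteration cap of 5 is an arbitrary choice.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class SandboxResult:
    passed: bool
    log: str  # error output that is fed back into the next attempt


def fix_test_refine(
    bug_report: str,
    propose_fix: Callable[[str, List[str]], str],  # hypothetical: (report, failure logs) -> patch
    run_tests: Callable[[str], SandboxResult],     # hypothetical: sandboxed test runner
    max_iterations: int = 5,                       # assumed budget, not from the paper
) -> Optional[str]:
    """Return the first patch whose tests pass, or None if the budget runs out."""
    failure_logs: List[str] = []
    for _ in range(max_iterations):
        patch = propose_fix(bug_report, failure_logs)
        result = run_tests(patch)
        if result.passed:
            return patch
        failure_logs.append(result.log)  # refine: the next proposal sees this failure
    return None


# Toy stand-ins: the "model" only gets it right after seeing one failure.
def toy_propose(report: str, logs: List[str]) -> str:
    return "patch-v2" if logs else "patch-v1"


def toy_run(patch: str) -> SandboxResult:
    return SandboxResult(passed=(patch == "patch-v2"), log=f"{patch} failed test_x")


accepted = fix_test_refine("IndexError in parser", toy_propose, toy_run)
```

In the toy run, the first patch fails, its log is passed to the second proposal, and the second patch is accepted. Returning `None` when the budget is exhausted keeps "no fix found" distinct from "fix found".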

A key idea from the paper is that debugging is “output-heavy,” not “input-heavy.” That means the model doesn’t need to read everything at once; it needs to produce high-quality fixes, tests, and explanations. Chronos-1 focuses on generating solid, test-passing patches by pulling only the most relevant context from the code “map,” rather than stuffing a huge amount of text into its memory.
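To make "pulling only the most relevant context from the code map" concrete, here is a minimal sketch of hop-limited graph expansion. It is an assumption-laden stand-in, not the paper's AGR algorithm (which this summary does not specify): a plain breadth-first walk from seed files with a hypothetical hop budget and node budget, over a made-up repository graph.

```python
from collections import deque
from typing import Dict, List, Set


def graph_guided_retrieve(
    graph: Dict[str, List[str]],  # node -> neighbours (calls, imports, dependencies)
    seeds: List[str],             # e.g. files named in a stack trace
    max_hops: int = 2,            # assumed hop budget
    max_nodes: int = 10,          # assumed context budget
) -> List[str]:
    """Breadth-first expansion from the seeds, nearest nodes first."""
    visited: Set[str] = set(seeds)
    order: List[str] = list(seeds)
    queue = deque((s, 0) for s in seeds)
    while queue and len(order) < max_nodes:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop budget
        for neighbour in graph.get(node, []):
            if neighbour not in visited:
                visited.add(neighbour)
                order.append(neighbour)
                queue.append((neighbour, hops + 1))
                if len(order) >= max_nodes:
                    break
    return order


# Hypothetical repository graph for illustration only.
repo = {
    "api/views.py": ["core/parser.py", "core/auth.py"],
    "core/parser.py": ["core/tokens.py"],
    "core/tokens.py": ["vendor/regex.py"],
    "core/auth.py": [],
}
context = graph_guided_retrieve(repo, ["api/views.py"], max_hops=2)
```

With a two-hop budget the walk collects the seed, its two direct dependencies, and `core/tokens.py`, while `vendor/regex.py` (three hops away) stays out of the context. A real system would presumably weight edges and stop adaptively rather than use fixed budgets.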

What did the researchers find?

Here are the most important results, explained simply:

- Chronos-1 solved far more real debugging tasks than top general AI models. On 5,000 real-world scenarios, it fixed about 67% of bugs, while leading general models fixed only around 14%.

- On a popular debugging benchmark called SWE-bench Lite, it reached 80.33% (241 out of 300), beating the next best system by about 20 percentage points.

- In some well-known projects, it did extremely well: about 96% on SymPy and 90% on Django.

- It made debugging faster and smoother: 40% less time and 65% fewer back-and-forth attempts.

- People liked it more: in a small human study, 89% preferred Chronos-1 over other systems.

- It’s efficient under the hood: the retrieval part (finding the right code pieces) is designed to be fast and to finish reliably, even in complex projects.
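The headline numbers in the list above can be sanity-checked with simple arithmetic. The figures are taken from the abstract; the snippet only recomputes percentages and ratios and does not reproduce any experiment.

```python
# SWE-bench Lite: 241 of 300 tasks resolved.
resolved, total = 241, 300
swe_bench_lite_rate = round(100 * resolved / total, 2)  # 80.33

# Fix accuracy (%) on the 5,000-scenario set, as reported in the abstract.
chronos_acc, claude_acc, gpt_acc = 67.3, 14.2, 13.8
ratio_vs_claude = chronos_acc / claude_acc  # roughly 4.7x
ratio_vs_gpt = chronos_acc / gpt_acc        # roughly 4.9x

# Approximate number of scenarios fixed out of 5,000.
approx_fixed = round(5000 * chronos_acc / 100)  # 3365
```

So "about 67% versus around 14%" corresponds to fixing roughly 4.7 to 4.9 times as many bugs as the general-purpose baselines on that set.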

The paper also mentions limits:

- Hardware-specific bugs (things that depend on actual devices or environments) were solved only about 23% of the time.

- Bugs in very dynamic languages or runtime-dependent situations were solved about 41% of the time.

- On certain pass-on-first-try benchmarks, parts of its test-and-refine loop had to be turned off (because those benchmarks require single attempts), but it still performed strongly.

Why does this matter?

If you’ve ever tried to fix a bug in a big project, you know it can be like finding a needle in a haystack—spread across multiple files, shaped by past changes, and hidden behind confusing error messages. Chronos-1 acts like a patient, experienced teammate who:

- Navigates your codebase with a map (AGR),

- Remembers what worked before (PDM),

- Tries fixes safely and learns from test results (the iterative loop).

This could lead to fewer late-night debugging sessions, faster development cycles, more reliable software, and smarter automated maintenance that runs inside tools developers already use (like CI/CD pipelines and IDEs).

What could happen next?

Chronos-1 shows that focusing an AI on debugging—rather than general code writing—can dramatically improve results. If systems like this become more common:

- Teams could catch and fix complex bugs faster, across many files and versions.

- Project knowledge wouldn’t be lost; the AI’s memory would grow with the codebase.

- Software could be more stable and easier to maintain over time.

The paper says Chronos-1 is planned to be available as part of Kodezi OS in late 2025, and as an API in early 2026, which means developers might soon be able to plug it into their workflows.

Knowledge Gaps, Limitations, and Open Questions

Below is a single, concrete list of unresolved gaps and questions that future researchers could address:

- [Architecture] The “7-layer architecture” is not specified beyond high-level roles; missing details on each layer’s functions, interfaces, and control flow, preventing replication or targeted improvement.

- [Model Specs] No disclosure of model size, parameter count, tokenizer, architecture variants, or training compute—hindering reproducibility and fair comparison to baselines.

- [Training Data Provenance] The source, licensing, and privacy posture of the 15M+ “production debugging sessions” are unclear (e.g., consent, PII/telemetry handling, proprietary code), raising legal and ethical questions.

- [Data Leakage] No safeguards are described to prevent overlap between training data (PDM and sessions) and evaluation tasks (e.g., SWE-bench Lite or the authors’ 5,000-scenario set), leaving potential contamination unaddressed.

- [Generalization] It is unknown how performance transfers to unseen repositories, languages, and domains when the PDM has no prior exposure—no “cold-start” or cross-repo generalization study is presented.

- [Language Coverage] Limited evidence of performance across language families, especially for dynamic and weakly typed languages (only a 41.2% success figure is given, without breakdown by language or error class).

- [Hardware/Environment Bugs] Low success on hardware-dependent bugs (23.4%) is acknowledged, but no strategy or roadmap is provided for improving coverage of environment-, GPU-, or OS-specific issues.

- [Non-determinism] Handling of flaky tests, non-deterministic failures, and concurrency/race conditions is not evaluated or methodologically addressed.

- [Performance/Resource Bugs] The paper claims to address performance issues, but no evaluation (e.g., micro/macro-benchmarks, profiling-guided fixes) quantifies effectiveness for performance regressions.

- [Security and Sandbox] The execution sandbox’s safety model is unspecified (e.g., network egress controls, package provenance, supply-chain risks, RCE containment), leaving security assurances unclear.

- [CI/CD Integration] No details about environment provisioning, dependency resolution, secrets management, or reproducibility of builds/tests across diverse CI/CD backends.

- [Cross-Repo/Microservices] Although “cross-repository” understanding is claimed, there is no protocol for discovering, authenticating, and retrieving code or logs across service boundaries or private registries.

- [AGR Algorithmic Details] Adaptive Graph-Guided Retrieval (AGR) lacks formal algorithmic specification (edge types/weights, learning/updating rules, confidence thresholds, stopping criteria) and training/validation methodology.

- [AGR Evaluation] Reported AGR precision/recall (92%/85%) lacks dataset description, labeling criteria, baselines, and error analysis—making the measurement non-reproducible.

- [Scalability Limits] The system claims to navigate up to 10M LOC but omits empirical scaling curves (latency, memory footprint, throughput) versus repository size/graph density, and does not address how it would scale to 100M+ LOC.

- [Complexity Claims] Theoretical complexity and convergence guarantees are asserted without proofs, assumptions, or definitions of k and d; no empirical verification of convergence behavior is provided.

- [PDM Mechanics] Persistent Debug Memory policies are described at a high level, but the embedding model, versioning strategy, compaction/garbage-collection, and conflict resolution (e.g., rebases/renames) are unspecified.

- [PDM Staleness/Drift] No mechanism is described for detecting and mitigating concept drift, stale embeddings, or outdated fix patterns; weekly re-embedding may be insufficient for fast-moving repos.

- [PDM Bias/Negative Transfer] The risk that PDM over-applies past fixes (introducing systematic errors) is not quantified; no safeguards (e.g., uncertainty-aware retrieval, counterfactual checks) are presented.

- [Adversarial Robustness] The system’s resilience to prompt injection and memory poisoning via comments, docs, or logs is not addressed—critical for repository-scale RAG systems.

- [Hyperparameter Sensitivity] Many fixed thresholds (e.g., cosine 0.75, decay λ=0.1, hybrid scoring weights) are given without sensitivity analysis, tuning methodology, or justification.

- [Retrieval Failures] No taxonomy of retrieval failure modes (e.g., incomplete graphs, ambiguous stack traces, polysemy of identifiers) or fallbacks (e.g., symbolic analysis, dynamic instrumentation) is provided.

- [Ablation Reproducibility] The ablation table lacks detailed configurations, seeds, datasets, and run counts; without public scripts or logs, the reported gains cannot be verified.

- [Baseline Parity] Comparisons to Claude/GPT/Gemini lack details on tool access, retrieval augmentation, test-loop availability, and environment parity—risking unfair comparisons.

- [SWE-bench Protocol] For SWE-bench Lite, the iterative test loop is “disabled to comply with pass@1,” yet the system is designed around iteration—how methods were adapted, and whether baselines had identical constraints, is not specified.

- [Human Evaluation] The human preference study (N=50) is reported without details on task sampling, annotation guidelines, blinding, inter-rater reliability, or statistical tests, leaving its validity uncertain.

- [Cost and Energy] Claims of “cost efficiency” lack concrete cost-per-bug, token usage, compute time, and energy consumption metrics across varied repo sizes and bug types.

- [Token Accounting] Average token counts (e.g., 31.2K) lack methodology (what constitutes a “session,” how retries are counted, prompt templates), making comparisons fragile.

- [Error Categories] Apart from two headline limitations, there is no breakdown of success by bug category (API migrations, dependency conflicts, data parsing, concurrency, I/O), hindering targeted research.

- [Test Suite Quality] The system’s dependence on available tests is not examined; performance under sparse/low-coverage test suites or missing tests is unknown.

- [Test Generation] While it claims to produce tests, there is no evaluation of test quality (coverage, flakiness, mutation scores) or their effect on fix acceptance and regression.

- [Repository Policies] How organization-specific policies (coding standards, CI gates, code owners) are learned/enforced is unclear; no mechanisms for policy conflicts or exceptions are discussed.

- [Multi-tenant Isolation] For enterprise use, isolation of PDM across tenants (preventing leakage of code/fixes between organizations) and access control are not described.

- [Privacy/Compliance] No treatment of regulatory constraints (e.g., GDPR/CCPA), data residency, or deletion/retention requirements for code/logs embedded into PDM.

- [Licensing/Attribution] If training on public repos/PRs, compliance with licenses and attribution requirements (e.g., GPL, AGPL) is not described.

- [Reproducibility/Availability] Core model, benchmarks, and datasets are not available until late 2025/2026, blocking independent replication; no interim open-sourced artifacts are offered.

- [Edge/Hardware Execution] The feasibility of running the system on-prem/air-gapped or constrained environments (e.g., embedded, mobile) is unaddressed.

- [Failure Handling] No discussion of graceful degradation when AGR/PDM fail (e.g., missing dependencies, broken builds), nor operator-in-the-loop protocols or rollback strategies.

- [Explainability] While “root cause explanations” are mentioned, there is no framework to assess explanation faithfulness, completeness, or developer trust calibration.

- [Safety/Guardrails] The risk of generating “fixes” that pass tests but change semantics or introduce latent defects is not addressed with guardrails (e.g., differential tests, semantic equivalence checking).

- [Extensibility] Integration with static analysis, symbolic execution, fuzzing, or runtime tracing tools is not detailed; it’s unclear how such signals could be incorporated into AGR/PDM for better coverage.

- [Long-term Maintenance] How PDM retains utility across major refactors, repository splits, or monolith-to-microservice migrations is untested.

- [Evaluation Breadth] Results emphasize SWE-bench Lite and an internal 5,000-scenario benchmark; broader, community-standard debugging datasets (with full repository context) and cross-organization studies are missing.

- [Threat Model] No formal threat model is articulated for adversarial repos or malicious contributors seeking to manipulate fixes or memory.

These gaps outline concrete avenues for future work on reproducibility, robustness, fairness, security, and real-world deployment of debugging-first LLMs.
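The hyperparameter-sensitivity gap above cites a cosine threshold of 0.75 and a decay of λ=0.1 without saying how they are combined. The sketch below shows one plausible combination and a tiny sensitivity sweep. Everything here is an assumption for illustration: the scoring rule (similarity times exponential age decay), the age units, and the memory entries are hypothetical and are not taken from the paper.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(
    query: Sequence[float],
    memories: List[Tuple[str, Sequence[float], float]],  # (id, embedding, age in assumed units)
    threshold: float = 0.75,  # cosine cutoff quoted in the gap list
    decay: float = 0.1,       # lambda quoted in the gap list
) -> List[Tuple[str, float]]:
    """Keep memories above the cosine cutoff, ranked by similarity * exp(-decay * age)."""
    scored = []
    for mem_id, emb, age in memories:
        sim = cosine(query, emb)
        if sim >= threshold:
            scored.append((mem_id, sim * math.exp(-decay * age)))
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical memory entries: (id, embedding, age).
memories = [
    ("fix-null-check", (1.0, 0.0), 10.0),  # exact match, but old
    ("fix-off-by-one", (0.8, 0.6), 0.0),   # cosine 0.8, fresh
    ("fix-encoding", (0.6, 0.8), 0.0),     # cosine 0.6, below the default cutoff
]
query = (1.0, 0.0)

# Sensitivity sweep: how many entries survive each cutoff.
survivors = {t: len(retrieve(query, memories, threshold=t)) for t in (0.5, 0.75, 0.9)}
ranking = [mem_id for mem_id, _ in retrieve(query, memories)]
```

Even on three entries, moving the cutoff between 0.5 and 0.9 changes how many candidates survive, and the decay term lets a fresh, less similar fix outrank an older exact match. A sensitivity analysis of this kind, run on real data, is what the gap list asks for.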

Practical Applications

Immediate Applications

The following applications could be deployed as soon as Kodezi Chronos-1 ships in Kodezi OS (planned for Q4 2025), using existing integrations (IDE, CI/CD). Each item includes sectors, potential tools/workflows, and feasibility notes.

- Autonomous “Fix-on-Fail” CI/CD Agent
  - Sectors: Software, DevOps, QA
  - What it does: Automatically triages failing builds, performs AGR-based retrieval, proposes patches, runs containerized tests, and opens PRs with root-cause explanations and updated tests.
  - Tools/workflows: Kodezi OS, GitHub Actions/GitLab CI/Jenkins; PR bots with patch diff + test results; confidence-gated auto-merge for low-risk fixes.
  - Assumptions/dependencies: Access to repo, CI logs, and test suite; containerized execution sandbox; branch protection policies; governance for auto-commits.

- Repository-Scale Debugging Assistant in IDEs
  - Sectors: Software, Education (advanced programming courses)
  - What it does: VSCode/JetBrains plugin that navigates multi-file dependencies via AGR, surfaces minimal, high-signal context, drafts fixes, and iteratively refines based on local test runs.
  - Tools/workflows: Chronos IDE plugin; local test runner integration; on-demand “multi-hop” code navigation panel; “explain root cause” commands.
  - Assumptions/dependencies: IDE plugin installation; adequate local compute; project-level tests; developer opt-in to iterative fix loop.

- Persistent Debug Memory (PDM) Knowledge Base
  - Sectors: Software (platform teams), Knowledge Management
  - What it does: Maintains cross-session memory of bug patterns, fixes, dependency quirks, and temporal evolution; exposes dashboards (e.g., “recurring bug patterns,” “regression hotspots”).
  - Tools/workflows: Debug Memory dashboards, weekly “regression review” rituals; proactive alerts for high-risk files and APIs.
  - Assumptions/dependencies: PDM data retention policies; secure storage; indexing of code, tests, CI logs, PRs; privacy/infosec approval.

- Postmortem and Documentation Automation
  - Sectors: Software, Compliance (regulated industries)
  - What it does: Generates root-cause narratives, validated test cases, commit messages, PR summaries, and affected component lists, improving traceability and audit readiness.
  - Tools/workflows: Templates integrated into PRs/issues; automated changelog updates; CI job that posts validated RCA + test artifacts.
  - Assumptions/dependencies: Sufficient execution feedback (tests/logs); policy alignment with SOC2/ISO27001 documentation standards.

- Regression Radar for Commit Risk Detection
  - Sectors: Software Engineering, DevOps
  - What it does: Uses temporal analysis and PDM to flag risk-prone commits (e.g., API migrations, deep call chain changes), trigger targeted test runs, and suggest guardrail tests.
  - Tools/workflows: Pre-merge risk scoring; “guardrail test generation” jobs; dashboards highlighting high-risk areas.
  - Assumptions/dependencies: Access to commit history and test coverage; repository graph indexed; team adoption of pre-merge checks.

- AGR-Powered Enterprise Code Search
  - Sectors: Software, Platforms/Internal Developer Portals
  - What it does: Context-aware code search that traverses dependency graphs and historical artifacts (tests, PRs, docs), returning high-precision, multi-hop results relevant to bug localization.
  - Tools/workflows: Dev portal search plugin; “find root-cause paths” feature; semantic + structural filters.
  - Assumptions/dependencies: Indexed repository graph; FAISS-like vector store; access to documentation/PRs.

- Targeted QA Augmentation (Test Generation from Failures)
  - Sectors: QA/Test Engineering
  - What it does: Converts observed failures and logs into reproducible tests, scaffolds missing coverage around root causes, and validates candidate fixes in a loop.
  - Tools/workflows: “Generate failing test” action from CI failures; coverage-aware test proposals; pass/fail diff reports.
  - Assumptions/dependencies: Unit/integration test frameworks; deterministic environments; CI orchestration.

- SRE/Operations Root-Cause Assistance
  - Sectors: SRE/IT Ops, Observability
  - What it does: Correlates CI/CD failures, logs, and code changes to localize incident-causing commits and propose code-level patches where appropriate.
  - Tools/workflows: Integration with observability tools; incident “link to likely commit” suggestions; “validate patch in sandbox” actions.
  - Assumptions/dependencies: Access to logs/traces and repos; clear rollback/patch policies; safe release channels.

- Targeted Framework/API Migration Helper
  - Sectors: Software, Platforms
  - What it does: Applies learned patterns from PDM to guide small-scale, incremental API migrations (e.g., deprecation removal, hydration mismatch fixes), with regression-aware testing.
  - Tools/workflows: “Migration playbook” generator; staged changes with fix-test loops; risk gates per module.
  - Assumptions/dependencies: Availability of validated migration patterns; test coverage; governance for staged rollouts.

- Academic Use: Debugging Data Instrumentation
  - Sectors: Academia (SE research, CS education)
  - What it does: Collects structured fix trajectories (error → attempted fixes → tests → resolution) to study debugging pedagogy, code evolution, and agentic workflows.
  - Tools/workflows: Research-grade logging of fix loops; anonymized datasets; curriculum integrations for debugging labs.
  - Assumptions/dependencies: IRB/ethics for real-world data; anonymization; access to instrumented environments.
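The "confidence-gated auto-merge for low-risk fixes" workflow listed under the Fix-on-Fail CI/CD agent above could be expressed as a small policy function. This is a hedged sketch: the `PatchCandidate` fields, the thresholds, and the protected path prefixes are all hypothetical policy choices, not anything Kodezi documents.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PatchCandidate:
    confidence: float         # hypothetical model-reported score in [0, 1]
    tests_passed: bool        # outcome of the sandboxed test run
    changed_files: List[str]
    changed_lines: int


def merge_decision(
    patch: PatchCandidate,
    min_confidence: float = 0.9,  # assumed policy values, tune per team
    max_changed_lines: int = 20,
    protected_prefixes: Tuple[str, ...] = ("auth/", "billing/"),
) -> str:
    """Return 'auto-merge', 'needs-review', or 'reject' for a proposed patch."""
    if not patch.tests_passed:
        return "reject"
    touches_protected = any(f.startswith(protected_prefixes) for f in patch.changed_files)
    low_risk = (
        patch.confidence >= min_confidence
        and patch.changed_lines <= max_changed_lines
        and not touches_protected
    )
    return "auto-merge" if low_risk else "needs-review"


small = PatchCandidate(0.95, True, ["utils/dates.py"], 4)
risky = PatchCandidate(0.95, True, ["auth/session.py"], 4)
failing = PatchCandidate(0.99, False, ["utils/dates.py"], 2)
decisions = [merge_decision(p) for p in (small, risky, failing)]
```

Failing tests always reject, regardless of confidence, and anything touching a protected path falls back to human review. This matches the governance assumptions listed for that application (branch protection, review of auto-commits).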

Long-Term Applications

These applications are promising but need further research, scaling, or productization—particularly around hardware-dependent bugs, dynamic language runtimes, safety, and governance. Where relevant, they anticipate the upcoming Chronos-1 API (Q1 2026).

- Self-Healing Codebases (Autonomous Maintenance at Scale)
  - Sectors: Software (enterprise), Platforms
  - What it could do: Continuously patch regressions across thousands of services, coordinate cross-repo fixes, and manage rollout policies while preserving SLAs.
  - Tools/workflows: Org-wide maintenance orchestrator; “fix budget” governance; convergence-aware scheduling using retrieval limits.
  - Assumptions/dependencies: Strong safety gates; policy and compliance frameworks; high-quality tests; mature PDM across org.

- Embedded/Hardware-Dependent Debugging
  - Sectors: Robotics, IoT, Automotive, Energy
  - What it could do: Integrate hardware simulators/emulators, instrument drivers/firmware, and validate patches under realistic hardware conditions.
  - Tools/workflows: HIL/SIL simulation loops; hardware fault injection; AGR extended to hardware dependency graphs.
  - Assumptions/dependencies: Detailed hardware models; real-time constraints; safety certification; addressing current 23.4% success on hardware-related bugs.

- Dynamic Language Runtime Reliability (Python/JS)
  - Sectors: Web, Data/ML platforms
  - What it could do: Improve resolution rates for runtime-specific issues (async, late binding, metaprogramming), with deeper instrumentation and dynamic tracing.
  - Tools/workflows: Runtime probes, shadow execution, time-travel debugging; specialized AGR signals for dynamic dispatch.
  - Assumptions/dependencies: Rich runtime telemetry; addressing current 41.2% success; language-specific training and sandboxes.

- Secure Patch Generation with Formal Guarantees
  - Sectors: Security, Regulated industries (Finance/Healthcare)
  - What it could do: Combine Chronos fix loops with formal verification or type-level proofs to prevent exploit introduction and ensure safety properties.
  - Tools/workflows: Integration with model checkers/SMT solvers; “secure fix mode”; proof-carrying patches.
  - Assumptions/dependencies: Formal specs or property definitions; performance trade-offs; governance for proof requirements.

- Software Supply Chain Intelligence
  - Sectors: Platforms, DevSecOps
  - What it could do: Trace transitive dependencies and vulnerabilities across repos, propose coordinated upgrades, and validate compatibility at scale.
  - Tools/workflows: Cross-repo AGR; “dependency migration wave” orchestrator; policy-driven upgrade gates.
  - Assumptions/dependencies: SBOM availability; ecosystem graph indexing; wide test coverage; staged rollouts.

- Large-Scale Program Refactoring
  - Sectors: Enterprise software modernization
  - What it could do: Plan and execute multi-repo refactors (module boundaries, API redesigns) with regression-aware fix loops and temporal awareness of code evolution.
  - Tools/workflows: Refactor planner + validator; “impact graph” visualizations; incremental refactor pipelines.
  - Assumptions/dependencies: Architectural intent and constraints; human-in-the-loop review; extensive tests.

- Policy-Driven Quality Governance and SLAs
  - Sectors: Policy/Compliance, Enterprise IT
  - What it could do: Define organizational standards for debugging success rates, convergence bounds, and audit trails; enforce through CI gates and dashboards.
  - Tools/workflows: Quality SLO dashboards; convergence guarantees tied to retrieval budgets; audit-ready evidence bundles.
  - Assumptions/dependencies: Executive buy-in; measurable KPIs; PDM maturity; change management processes.

- Education at Scale: Personalized Debugging Tutors
  - Sectors: Education/EdTech
  - What it could do: Personalized guidance for learners on multi-file debugging, with iterative fix-test-refine feedback and repository-aware explanations.
  - Tools/workflows: Classroom IDE plugins; auto-generated “learning tests”; scaffolding tailored to course repos.
  - Assumptions/dependencies: Cost management for large cohorts; curriculum alignment; safety controls to prevent over-reliance.

- Observability-Driven Auto-Remediation
  - Sectors: SRE/Operations, Cloud Platforms
  - What it could do: Closed-loop remediation (detect anomaly → map to code change → validate fix → deploy) with confidence thresholds and rollback strategies.
  - Tools/workflows: AIOps integrator; “observability → code” pipelines; progressive delivery with canaries.
  - Assumptions/dependencies: High-fidelity telemetry; robust rollbacks; risk scoring and guardrails.

- Sector-Specific Adoption in Safety-Critical Domains
  - Sectors: Healthcare, Energy, Finance, Transportation
  - What it could do: Controlled deployment with strict verification, evidence logging, and human oversight, enabling faster maintenance without compromising safety or compliance.
  - Tools/workflows: “Compliance mode” with enriched documentation artifacts; human-in-the-loop approvals; domain-specific test suites.
  - Assumptions/dependencies: Regulatory alignment; comprehensive test coverage; rigorous governance frameworks.

Cross-Cutting Assumptions and Dependencies

- Chronos-1 availability: Kodezi OS (Q4 2025) enables immediate on-prem/org deployments; API (Q1 2026) expands SaaS/integration scenarios.

- Data and access: Requires indexing of codebases, PRs, CI/CD logs, and tests for effective AGR/PDM operation.

- Test quality: Outcomes depend heavily on existing test coverage and determinism in execution environments.

- Privacy/security: PDM retention and graph indexing must align with data residency and infosec policies.

- Governance: Auto-commit and remediation need confidence thresholds, review gates, and clear ownership.

- Limitations: Success rates are currently lower on hardware-dependent bugs (23.4%) and dynamic-language runtime bugs (41.2%); mitigation requires additional instrumentation and domain-specific training.
