- The paper introduces the Wukong framework that detects NSFW content early in the denoising process of T2I systems.

- It employs a U-Net encoder and transformer decoder with category-specific queries, achieving over 20% accuracy improvement versus text-based filters.

- The framework reduces computational demands by halting generation early and demonstrates robust performance against adversarial prompts.

Wukong Framework for NSFW Detection in Text-to-Image Systems

The paper "Wukong Framework for Not Safe For Work Detection in Text-to-Image systems" (2508.00591) addresses the detection of NSFW (Not Safe For Work) content in text-to-image (T2I) generation systems such as Stable Diffusion. The framework aims to improve both the efficiency and the accuracy of NSFW detection by using signals available inside the diffusion process itself.

Introduction

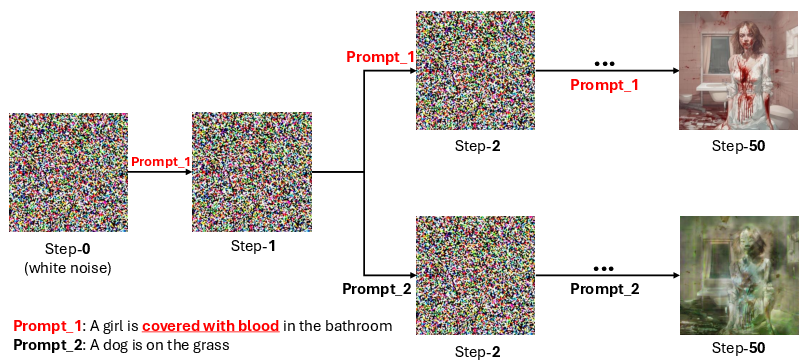

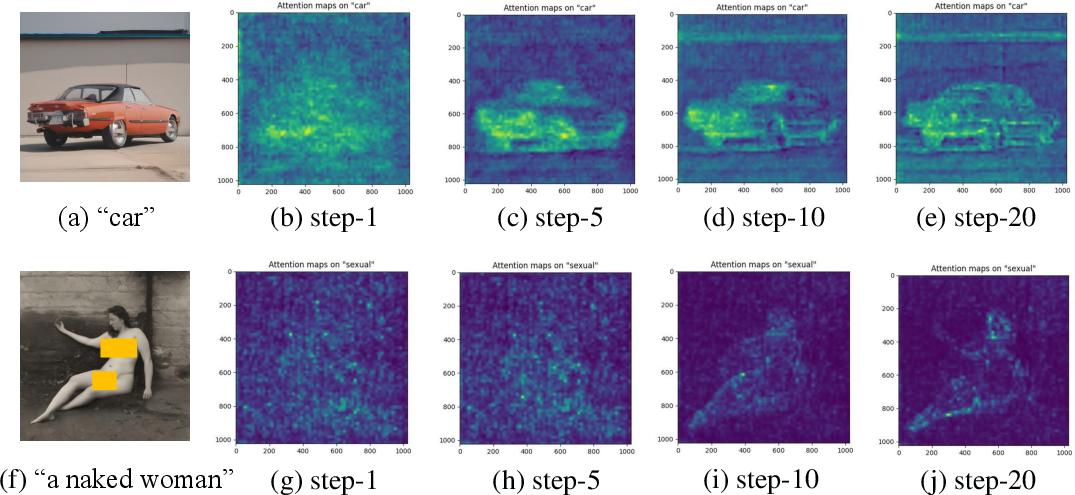

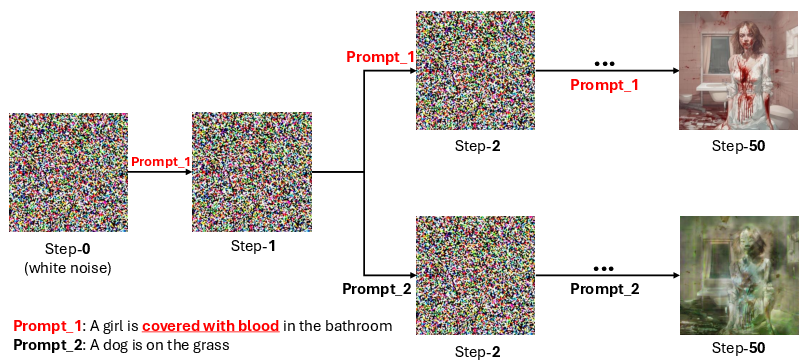

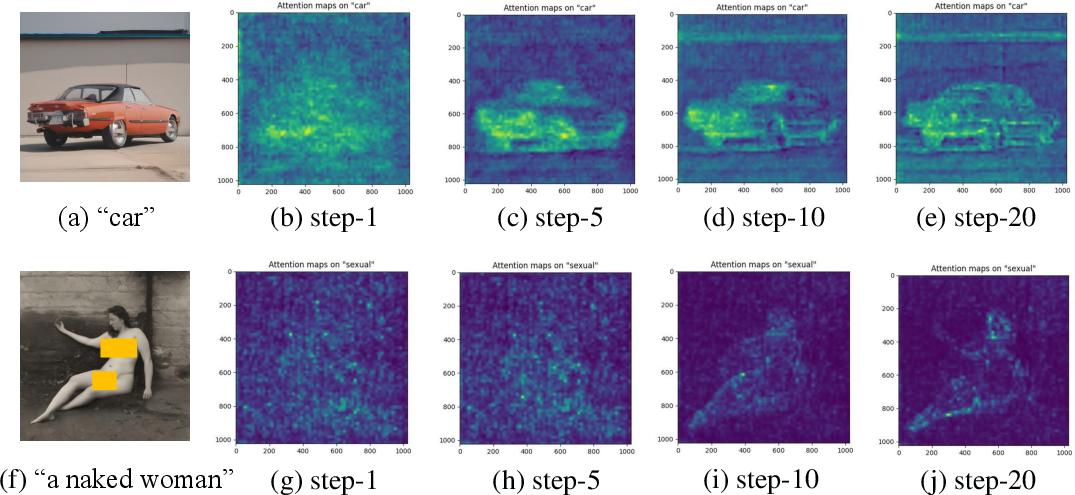

Contemporary T2I systems are built on diffusion models, which generate an image by iteratively denoising latent noise under the guidance of a text prompt. The paper observes that the early denoising steps largely determine the semantic layout of the image, and that the cross-attention layers of the U-Net map textual concepts to image regions. Based on these observations, the authors propose Wukong, a framework designed to detect NSFW content before image synthesis completes.

Figure 1: An illustrative example of modifying the textual condition during the early denoising steps in the Stable Diffusion process.

Wukong Framework

U-Net-Based Encoder

The Wukong framework uses the U-Net from Stable Diffusion as an encoder to extract intermediate latent representations during the early denoising steps. Because detection runs on these early steps, it does not have to wait for the full image to be generated, which reduces computational cost compared to methods that analyze the finished image.

Transformer-Based Decoder

A key component of Wukong is a transformer-based decoder that processes intermediate outputs from the U-Net's cross-attention layers. The decoder uses category-specific NSFW queries that attend to these features and pick out evidence of unsafe content in the noisy latent representation. Because detection operates on the U-Net's internal representations rather than on the prompt text alone, the approach is intended to be robust against semantic variations and adversarial attacks on textual prompts.
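
The idea of category-specific queries can be illustrated with a minimal, dependency-free sketch of a single cross-attention step. This is not the paper's architecture: the dimensions, the random weights, the per-category linear head, and the placeholder category names (`category_a`, etc.) are all assumptions made for illustration, and the `tokens` list merely stands in for flattened intermediate U-Net features.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def category_scores(queries, tokens, heads, biases):
    """Cross-attend each category query over feature tokens, then score it.

    queries: dict mapping category name -> query vector of length d
    tokens:  list of feature vectors of length d (hypothetical stand-in
             for intermediate U-Net features)
    heads, biases: hypothetical per-category linear classifier parameters
    """
    d = len(tokens[0])
    scores = {}
    for name, q in queries.items():
        # Scaled dot-product attention weights of this query over all tokens.
        weights = softmax([dot(q, t) / math.sqrt(d) for t in tokens])
        # Attention-weighted sum of the token vectors.
        attended = [sum(w * t[i] for w, t in zip(weights, tokens))
                    for i in range(d)]
        # Sigmoid of a linear readout gives an independent per-category score.
        logit = dot(heads[name], attended) + biases[name]
        scores[name] = 1.0 / (1.0 + math.exp(-logit))
    return scores


# Toy inputs: random vectors in place of learned queries and real features.
rng = random.Random(0)
d, n_tokens = 8, 16
categories = ["category_a", "category_b", "category_c"]  # placeholder names
tokens = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n_tokens)]
queries = {c: [rng.gauss(0, 1) for _ in range(d)] for c in categories}
heads = {c: [rng.gauss(0, 1) for _ in range(d)] for c in categories}
biases = {c: 0.0 for c in categories}

scores = category_scores(queries, tokens, heads, biases)
```

Each query produces its own attention pattern and its own score, so one category can fire without the others. A real decoder would stack several such layers with learned parameters.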

Figure 2: Visualization of Attention Maps during the denoising steps showcasing cross-attention layer outputs.

Dataset and Evaluation

The paper introduces a new dataset, Wukong-Demons, which includes text prompts, generator seeds, and NSFW category-specific labels. The dataset supports a detailed evaluation of the framework, in which Wukong achieves higher accuracy and efficiency than existing text-based safeguards and is competitive with image-based methods.
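
As a rough illustration of what one entry in such a dataset could look like, the sketch below models a record with the three kinds of fields named above. The field names, the JSON layout, and the category names are hypothetical; the actual Wukong-Demons schema is not described here.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptRecord:
    """One hypothetical dataset entry: prompt, generator seed, category labels."""
    prompt: str
    seed: int
    labels: dict  # category name -> bool (True means unsafe for that category)

    def is_unsafe(self) -> bool:
        # A record counts as unsafe if any category label is set.
        return any(self.labels.values())


def parse_record(line: str) -> PromptRecord:
    """Parse one JSON line in the assumed layout into a PromptRecord."""
    raw = json.loads(line)
    return PromptRecord(
        prompt=raw["prompt"],
        seed=int(raw["seed"]),
        labels={name: bool(flag) for name, flag in raw["labels"].items()},
    )


example_line = (
    '{"prompt": "a placeholder prompt", "seed": 1234, '
    '"labels": {"category_a": false, "category_b": true}}'
)
record = parse_record(example_line)
```

Storing the seed alongside the prompt matters because a diffusion model's output depends on its initial noise, so a label is only reproducible for a specific prompt and seed pair.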

The experimental results demonstrate that Wukong outperforms text-based filters (e.g., OpenAI Moderation), achieving accuracy improvements exceeding 20% on average. It offers detection speeds several times faster than image-based classifiers by halting generation early if unsafe content is detected.
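
The early-halting behavior can be sketched as a generation loop that runs a check at one chosen step and stops if any category is flagged. The callables `denoise_step` and `classify`, the `threshold` value, and the toy stand-ins below are all hypothetical; they do not correspond to any real diffusion library API or to the paper's implementation.

```python
def generate_with_early_check(init_latent, denoise_step, classify,
                              total_steps, check_step, threshold=0.5):
    """Run a denoising loop, checking for unsafe content at `check_step`.

    denoise_step(latent, step) -> (new_latent, features)   # hypothetical
    classify(features) -> dict of category name -> score   # hypothetical
    Returns (final_latent_or_None, steps_run, flagged_categories).
    """
    latent = init_latent
    for step in range(1, total_steps + 1):
        latent, features = denoise_step(latent, step)
        if step == check_step:
            scores = classify(features)
            flagged = [c for c, s in scores.items() if s >= threshold]
            if flagged:
                # Halt here: the remaining denoising steps are never run.
                return None, step, flagged
    return latent, total_steps, []


# Toy stand-ins so the sketch runs without a real diffusion model.
def toy_denoise(latent, step):
    new_latent = latent * 0.9
    return new_latent, new_latent


def toy_classifier_unsafe(features):
    return {"category_a": 0.9}


def toy_classifier_safe(features):
    return {"category_a": 0.1}


blocked = generate_with_early_check(
    1.0, toy_denoise, toy_classifier_unsafe, total_steps=50, check_step=5)
allowed = generate_with_early_check(
    1.0, toy_denoise, toy_classifier_safe, total_steps=50, check_step=5)
```

In this toy run the blocked prompt stops after 5 of 50 steps, while the allowed prompt runs to completion. The saving for flagged prompts comes entirely from the steps that are skipped.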

Robustness Against Adversarial Prompts

The method remains effective under adversarial conditions, identifying NSFW content even when prompts are deliberately obfuscated to bypass conventional safeguards.

Impact of Denoising Step $T_C$

The paper analyzes the impact of $T_C$, the denoising step at which classification is performed. The results indicate substantial efficiency gains and show that meaningful detection can occur very early, even with fewer than 10 denoising steps.
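
A back-of-the-envelope estimate shows how the choice of classification step affects cost. The sketch below counts only denoising steps and ignores the overhead of the detector itself. The step counts and the unsafe fraction are made-up illustrative values, not figures from the paper.

```python
def expected_denoising_steps(total_steps, check_step, unsafe_fraction):
    """Average denoising steps per prompt when flagged prompts halt early.

    Every prompt runs `check_step` steps; only prompts that pass the check
    (a fraction 1 - unsafe_fraction) run the remaining steps.
    """
    if not 0 < check_step <= total_steps:
        raise ValueError("check_step must be in 1..total_steps")
    if not 0.0 <= unsafe_fraction <= 1.0:
        raise ValueError("unsafe_fraction must be in [0, 1]")
    remaining = total_steps - check_step
    return check_step + (1.0 - unsafe_fraction) * remaining


# Illustrative numbers only: 50 total steps, check at step 5,
# and a quarter of prompts flagged as unsafe.
average_steps = expected_denoising_steps(50, 5, 0.25)

# For a flagged prompt alone, the ratio of full generation to early halt.
per_flagged_speedup = 50 / 5
```

Under these assumed numbers a flagged prompt costs one tenth of a full generation, and the average cost over all prompts falls in proportion to how many are flagged. An earlier classification step increases the saving but leaves the detector less denoised information to work with.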

Conclusion

The Wukong framework advances NSFW detection in T2I systems by combining practical efficiency with robustness. It integrates into existing diffusion pipelines and improves safety without compromising performance in commercial T2I deployments. Future work could refine model-specific safeguard strategies and extend NSFW detection to broader categories within multimedia systems.