- The paper surveys efficient-reasoning methods for R1-style models, including Early Exit, CoT Compression, Adaptive Reasoning, and Representation Engineering (RepE), all aimed at mitigating overthinking.

- It compares single-model optimization strategies with model collaboration approaches, highlighting the strengths of each and their practical effect on inference cost.

- Implications include enhanced multimodal reasoning, effective tool integration, and resource-efficient multi-agent coordination.

A Survey of Efficient R1-style Large Reasoning Models

R1-style Large Reasoning Models (LRMs) have attracted significant attention recently, particularly DeepSeek R1, which executes complex reasoning tasks via logical deduction enhanced by reinforcement learning. This survey examines how to make these models efficient, focusing on the prevalent "overthinking" phenomenon, in which models produce excessively long reasoning paths. Efficient reasoning therefore means shortening these paths without degrading the model's performance.

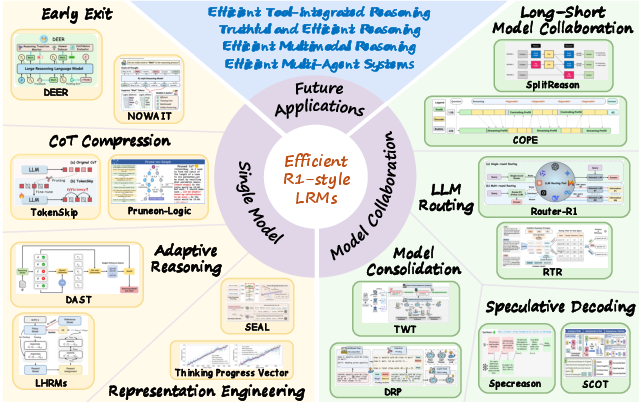

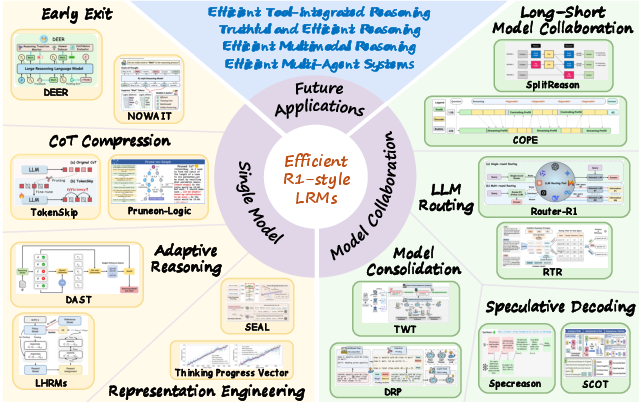

Figure 1: Taxonomy, Representative Methods and Future Applications of Efficient R1-style LRMs.

Efficient Reasoning Paradigms

Efficient reasoning methods for R1-style LRMs fall into two paradigms: single-model optimization and model collaboration.

Single-Model Optimization

This paradigm encompasses methods such as Early Exit, CoT Compression, Adaptive Reasoning, and RepE-based Efficient Reasoning:

- Early Exit: This involves dynamically determining optimal stopping points in the reasoning process, thereby avoiding unnecessary computations. Techniques include confidence-based and entropy-based assessments to decide when reasoning has been sufficiently thorough.

- CoT Compression: Aims to shorten reasoning paths by selectively trimming irrelevant or repetitive elements at multiple granularities, whether at the token, step, or entire chain levels.

- Adaptive Reasoning: Encompasses strategies where models autonomously adjust reasoning effort based on input complexity, utilizing reinforcement learning to optimize for appropriate reasoning lengths.

- Representation Engineering (RepE): Involves manipulating model hidden states to guide reasoning, steering models away from overthinking by directly adjusting internal representations.
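The entropy-based form of Early Exit described above can be illustrated with a minimal sketch. It is not taken from the survey: the function names, the `max_entropy` threshold, and the toy answer distribution are all hypothetical, and it assumes some external way of estimating a probability distribution over candidate answers after each reasoning step.

```python
import math
from typing import Callable, Iterable, List, Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy in nats; zero-probability entries contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def reason_with_early_exit(
    steps: Iterable[str],
    answer_distribution: Callable[[List[str]], Sequence[float]],
    max_entropy: float = 0.3,  # assumed threshold, would need tuning in practice
) -> List[str]:
    """Consume reasoning steps until the answer distribution is sharp enough."""
    used: List[str] = []
    for step in steps:
        used.append(step)
        # Low entropy means the model has settled on an answer, so stop here.
        if entropy(answer_distribution(used)) <= max_entropy:
            break
    return used


def toy_distribution(used: List[str]) -> List[float]:
    # Hypothetical stand-in for a model: confidence in the leading answer
    # grows by 0.15 with every reasoning step, capped at 0.99.
    p = min(0.5 + 0.15 * len(used), 0.99)
    return [p, 1.0 - p]


trace = reason_with_early_exit([f"step {i}" for i in range(1, 6)], toy_distribution)
print(len(trace))  # 3: the remaining two steps are never generated
```

A confidence-based variant would replace the entropy check with a test on the largest probability. In either case the threshold trades accuracy against saved tokens.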

Model Collaboration

Collaboration leverages the complementary strengths of different models:

- Long–Short Model Collaboration: Uses both long and short CoT models, allocating tasks dynamically according to complexity. Short CoT models handle simpler tasks and defer to long CoT models for complex queries.

- LLM Routing: Routing mechanisms select the optimal model from a pool to handle a given task, allowing queries to be matched with models based on difficulty and resource efficiency.

- Model Consolidation: Distillation and model merging techniques combine the strengths of large and small models, with the aim to retain reasoning capabilities while improving inference cost-efficiency.

- Speculative Decoding: Lightweight models quickly propose candidate reasoning paths, which stronger models then validate or revise, reducing computation while maintaining quality.
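As a rough illustration of long-short collaboration, the sketch below lets a short CoT model answer first and defers to a long CoT model only when the draft's confidence is low. It is an assumption-laden toy, not a method from the survey: `Answer`, `collaborate`, `min_confidence`, and both toy models are hypothetical names, and the confidence score is assumed to be available from the model or an external estimator.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Answer:
    text: str
    confidence: float  # assumed to lie in [0, 1]
    tokens_used: int


Model = Callable[[str], Answer]


def collaborate(
    query: str,
    short_model: Model,
    long_model: Model,
    min_confidence: float = 0.8,  # assumed deferral threshold
) -> Tuple[Answer, str]:
    """Try the short CoT model first; defer to the long one if it is unsure."""
    draft = short_model(query)
    if draft.confidence >= min_confidence:
        return draft, "short"
    final = long_model(query)
    # A deferred query pays for both passes, so count the draft's tokens too.
    total = draft.tokens_used + final.tokens_used
    return Answer(final.text, final.confidence, total), "long"


def toy_short(query: str) -> Answer:
    # Hypothetical short CoT model: confident only on very short queries.
    conf = 0.9 if len(query.split()) <= 5 else 0.4
    return Answer("short answer", conf, tokens_used=20)


def toy_long(query: str) -> Answer:
    # Hypothetical long CoT model: always confident, but expensive.
    return Answer("long answer", 0.95, tokens_used=400)


easy, easy_route = collaborate("What is 2 + 2?", toy_short, toy_long)
hard, hard_route = collaborate(
    "Prove that the sum of two odd integers is always even", toy_short, toy_long
)
print(easy_route, easy.tokens_used)
print(hard_route, hard.tokens_used)
```

Because a deferred query costs both passes, this scheme only saves tokens when most queries are resolved by the short model. An LLM router avoids the double cost by predicting difficulty before any model is run.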

Future Implications and Applications

Several key areas stand to benefit from advancements in efficient reasoning methods:

- Multimodal Reasoning: Efficient handling of heterogeneous data types remains crucial, requiring dynamic balancing between different information forms and reasoning stages.

- Tool-Integrated Reasoning: Improved interaction with external tools necessitates efficiency in invoking these tools only as needed, reducing unnecessary calls and thereby saving computational resources.

- Multi-Agent Systems: Efficient reasoning across systems of agents requires coordination and optimized communication pathways so that decisions are made without wasting resources.

- Truthful Reasoning: Maintaining fidelity and ensuring trustworthy reasoning involves balancing efficiency with the ability to generate reliable and accurate outputs.

Conclusion

Efficient R1-style LRMs aim to mitigate overthinking through two routes: optimizing the reasoning process of a single model and coordinating multiple models. This survey provides a structured overview of the theoretical approaches and practical strategies for improving the efficiency and applicability of these reasoning models. The field is moving towards a more resource-efficient era of AI, in which comprehensive reasoning is possible without excessive computational cost.