- The paper presents a dual methodology that merges learning-based camera pose regression with randomized optimization to enhance dense reconstruction accuracy.

- It demonstrates superior performance on synthetic and real-world datasets, achieving lower RMSE in fast motion scenarios compared to state-of-the-art systems.

- The work underscores the potential of combining vision transformers with optimization for robust robotic applications in dynamic and challenging environments.

PROFusion: Robust and Accurate Dense Reconstruction via Camera Pose Regression and Optimization

The paper "PROFusion: Robust and Accurate Dense Reconstruction via Camera Pose Regression and Optimization" addresses dense scene reconstruction under unstable camera motion. It combines the robustness of learning-based pose initialization with the precision of optimization-based refinement.

Introduction

Accurate real-time camera tracking and dense scene reconstruction are pivotal in robotics and computer vision, particularly when camera motion is unstable, as in exploration or rescue missions. Existing RGB-D SLAM systems struggle with sudden movements and rapid rotations, which severely degrade camera pose estimation. The paper introduces a two-stage method to overcome these limitations: a camera pose regression network predicts an initial pose, which a randomized optimization algorithm then refines. The aim is to pair the robustness of learning-based models with the precision of traditional optimization techniques.

Methodology

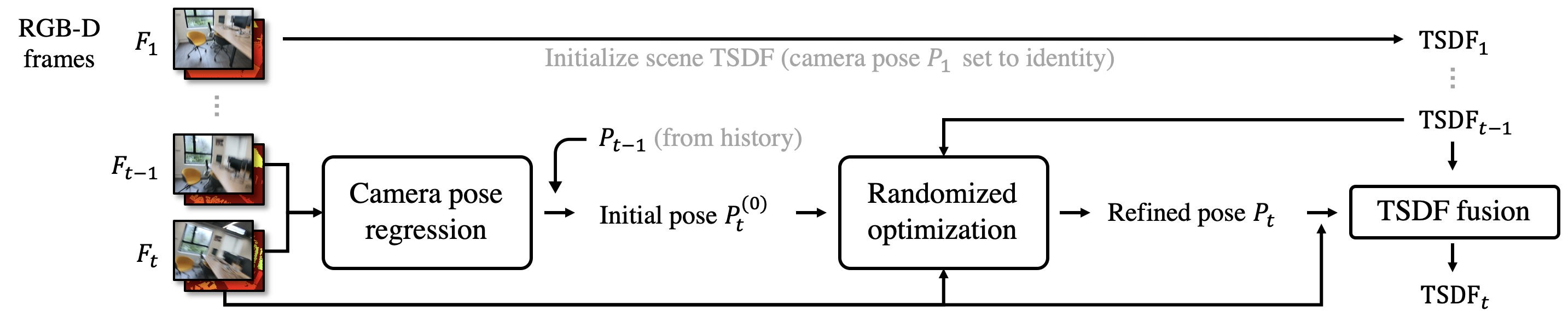

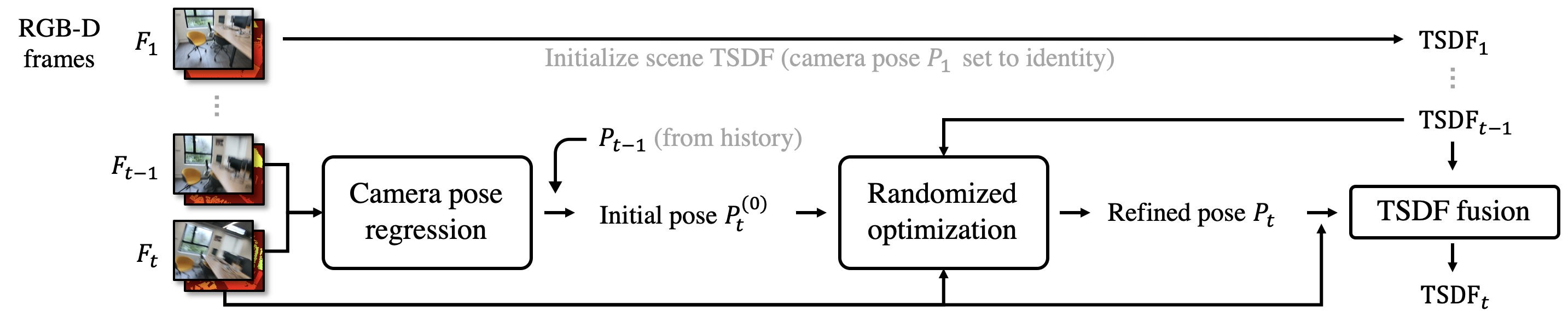

Figure 1 gives an overview of the system architecture:

Figure 1: System overview detailing the two-step fusion of incoming frames via camera pose regression and randomized optimization.
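Because the regression stage predicts a relative transform between consecutive frames, a tracker of this kind has to chain each relative pose onto the previous global pose before a frame can be fused. The sketch below illustrates only that bookkeeping. The 4x4 homogeneous matrices, the camera-to-world convention with right-multiplication, and the helper names `matmul4`, `translation`, and `chain_poses` are assumptions made for illustration, not details taken from the paper.

```python
def matmul4(a, b):
    """Multiply two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def translation(tx, ty, tz):
    """Build a pure-translation homogeneous transform (hypothetical helper)."""
    return [[1.0, 0.0, 0.0, tx],
            [0.0, 1.0, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]


def chain_poses(initial_pose, relative_poses):
    """Accumulate global poses from per-frame relative transforms.

    Assumes poses are camera-to-world, so each new global pose is
    previous_global @ relative.
    """
    poses = [initial_pose]
    for rel in relative_poses:
        poses.append(matmul4(poses[-1], rel))
    return poses
```

Calling `chain_poses(translation(0.0, 0.0, 0.0), [translation(1.0, 0.0, 0.0), translation(0.0, 2.0, 0.0)])` yields three global poses, the last of which is the combined translation. Any error in one relative pose propagates to all later poses, which is why drift accumulates without loop closure.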

Camera Pose Regression Network

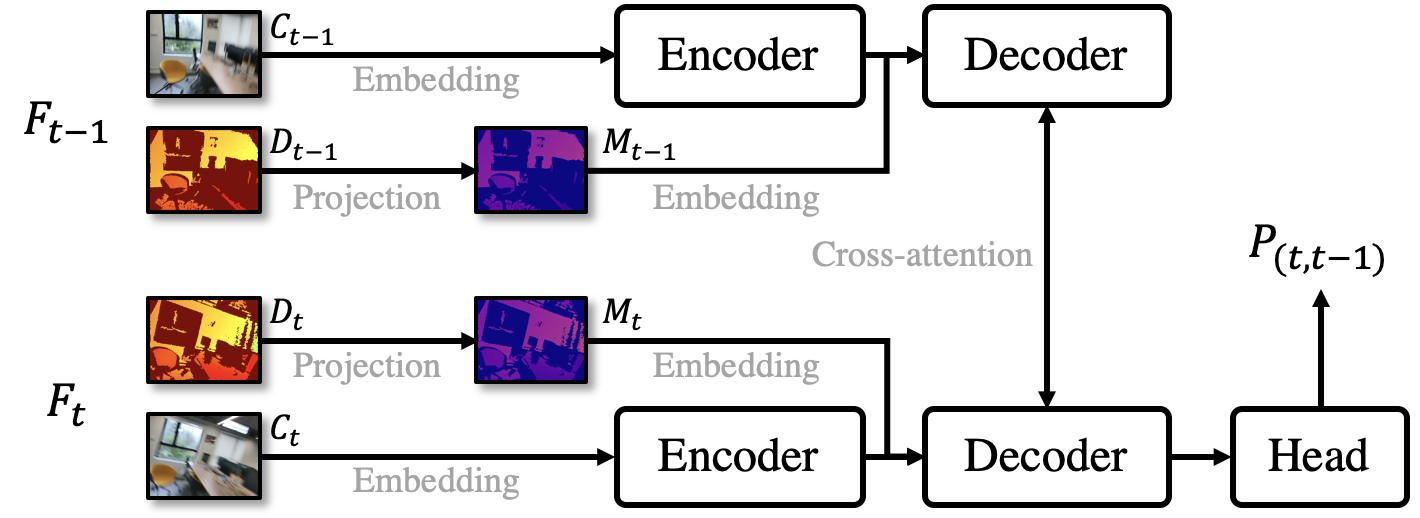

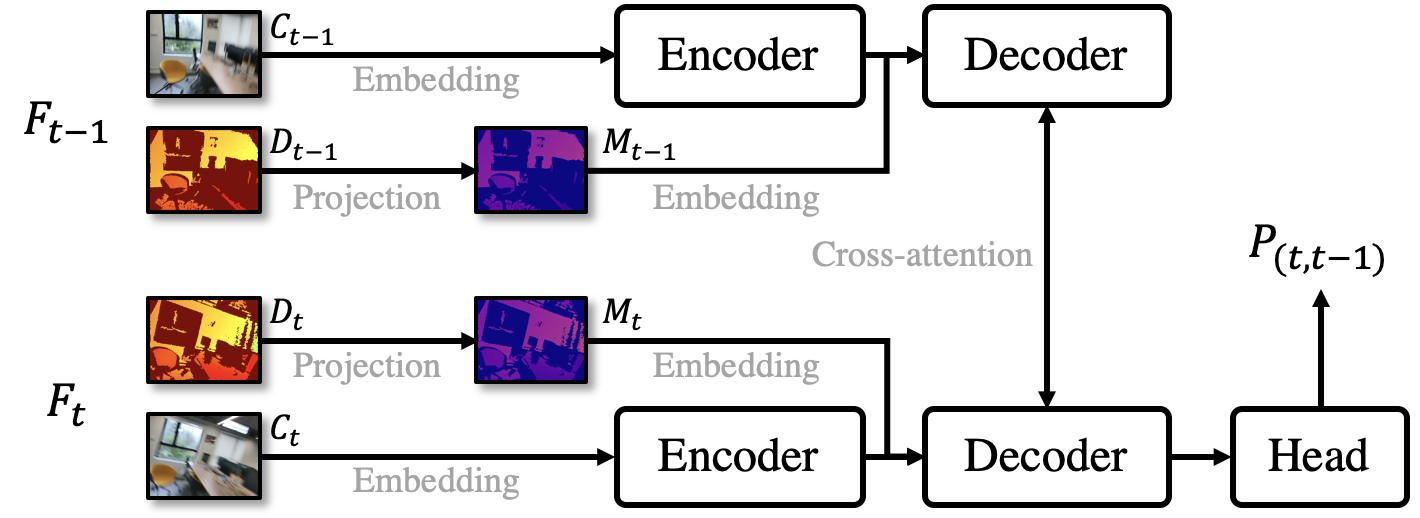

The network, whose architecture is inspired by recent vision transformers, takes consecutive RGB-D frames and predicts the relative pose transformation between them. Metric point clouds derived from the depth data are embedded and combined with the vision transformer, so the network produces a metric-aware output that is used to initialize the optimization stage.

Figure 2: Network architecture illustrating the processing of RGB-D inputs through a transformer for pose estimation.
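A metric point cloud is usually obtained from a depth image by back-projecting each pixel through the pinhole camera model. The following is a minimal sketch of that standard step, not the paper's implementation: the function name `backproject_depth`, the intrinsics `fx`, `fy`, `cx`, `cy`, and the convention that non-positive depth marks an invalid pixel are all assumptions.

```python
def backproject_depth(depth, fx, fy, cx, cy):
    """Back-project a depth image into 3D camera-frame points.

    depth: list of rows, each a list of depth values in metres.
    fx, fy: focal lengths in pixels; cx, cy: principal point in pixels.
    Pixels with depth <= 0 are treated as invalid and skipped (assumption).
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0.0:
                continue  # no valid measurement at this pixel
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points
```

Because the depth values carry real units, the resulting points do too, which is what lets a pose predicted from them be expressed in metric scale.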

Randomized Optimization

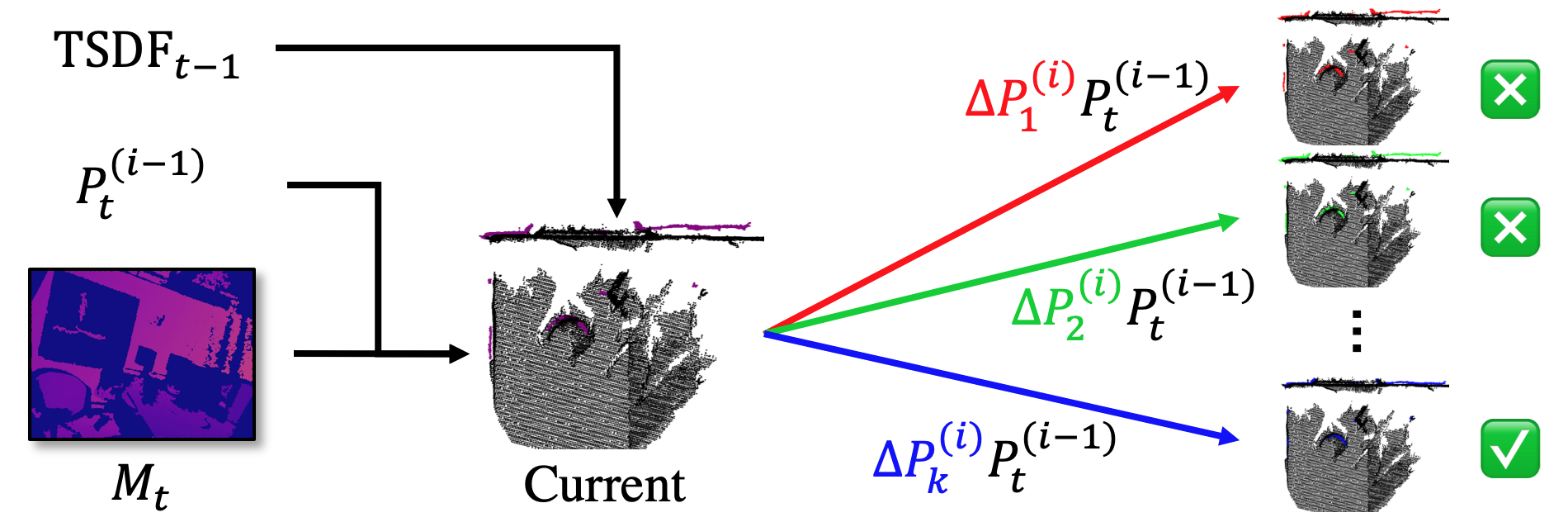

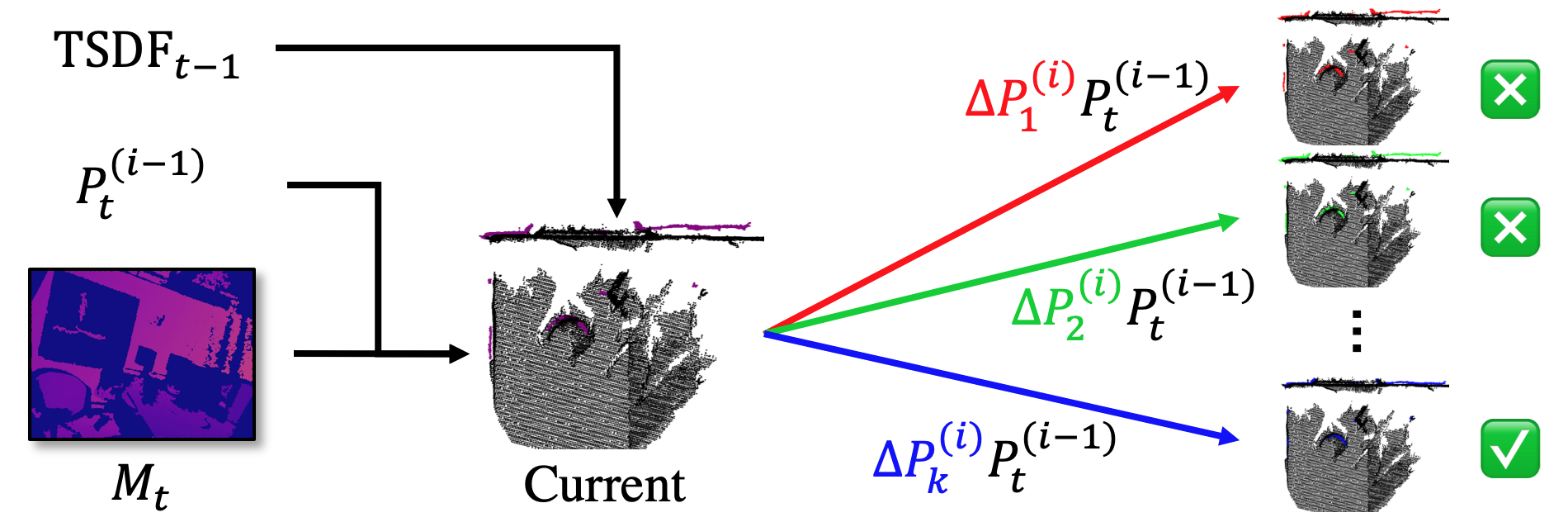

Starting from the pose predicted by the regression network, a randomized optimization procedure refines the estimate. Delta poses are computed iteratively, guided by an evaluation metric that measures geometric consistency with the TSDF (truncated signed distance function) representation of the scene.

Figure 3: Iterative pose refinement via randomized optimization to reduce alignment error.
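To make the idea of sampling delta poses and scoring them against a signed distance field concrete, here is a deliberately simplified random-search sketch. It is not the paper's algorithm: it searches over translation only, uses an analytic sphere in place of a TSDF volume, and the names `sphere_sdf`, `alignment_error`, and `refine_translation`, along with the parameters `iters`, `samples`, and `sigma`, are hypothetical.

```python
import math
import random


def sphere_sdf(p, radius=1.0):
    """Signed distance from point p to a sphere at the origin (TSDF stand-in)."""
    return math.sqrt(p[0] ** 2 + p[1] ** 2 + p[2] ** 2) - radius


def alignment_error(points, t, sdf):
    """Mean absolute signed distance of the points after shifting them by t."""
    total = 0.0
    for x, y, z in points:
        total += abs(sdf((x + t[0], y + t[1], z + t[2])))
    return total / len(points)


def refine_translation(points, t_init, sdf, iters=200, samples=32,
                       sigma=0.1, seed=0):
    """Random-search refinement of a translation estimate.

    Each iteration samples Gaussian delta translations around the current
    best estimate and keeps any candidate that lowers the alignment error.
    If a whole batch fails to improve, the search radius is halved.
    """
    rng = random.Random(seed)
    best_t = tuple(t_init)
    best_err = alignment_error(points, best_t, sdf)
    for _ in range(iters):
        improved = False
        for _ in range(samples):
            cand = tuple(b + rng.gauss(0.0, sigma) for b in best_t)
            err = alignment_error(points, cand, sdf)
            if err < best_err:
                best_t, best_err = cand, err
                improved = True
        if not improved:
            sigma *= 0.5  # narrow the search around the current best
    return best_t, best_err
```

Since candidates are only accepted when they lower the error, the result is never worse than the initial estimate, which mirrors the role of the regression network here: a good starting pose keeps the search local and cheap. A full system would sample 6-DoF delta poses and evaluate them against the reconstructed TSDF volume.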

Experimental Evaluation

The paper evaluates the system on synthetic and real-world datasets, covering both stable and dynamic motion scenarios.

Fast Motion Benchmarks

On synthetic benchmarks with both raw and noisy inputs, the system is reported to outperform competing methods. In fast motion scenarios in particular, PROFusion achieves a lower tracking RMSE than state-of-the-art systems, indicating resilience to motion-induced perturbations.
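For readers unfamiliar with the metric, tracking RMSE is commonly computed as the root mean square of the per-frame position error between estimated and ground-truth trajectories. The sketch below shows that computation under the assumption that both trajectories are already time-synchronized and expressed in the same coordinate frame; the function name `trajectory_rmse` is hypothetical, and the summary does not state the exact evaluation protocol the paper uses.

```python
import math


def trajectory_rmse(estimated, ground_truth):
    """Root mean square translational error between two trajectories.

    estimated, ground_truth: equal-length sequences of (x, y, z) positions,
    assumed to be aligned and associated frame by frame.
    """
    if len(estimated) != len(ground_truth) or not estimated:
        raise ValueError("trajectories must be non-empty and equal in length")
    squared_sum = 0.0
    for est, gt in zip(estimated, ground_truth):
        squared_sum += sum((a - b) ** 2 for a, b in zip(est, gt))
    return math.sqrt(squared_sum / len(estimated))
```

Squaring the errors before averaging means a few frames with large tracking failures dominate the score, so the metric is sensitive to exactly the kind of breakdown that fast motion causes.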

Real-World Implementation

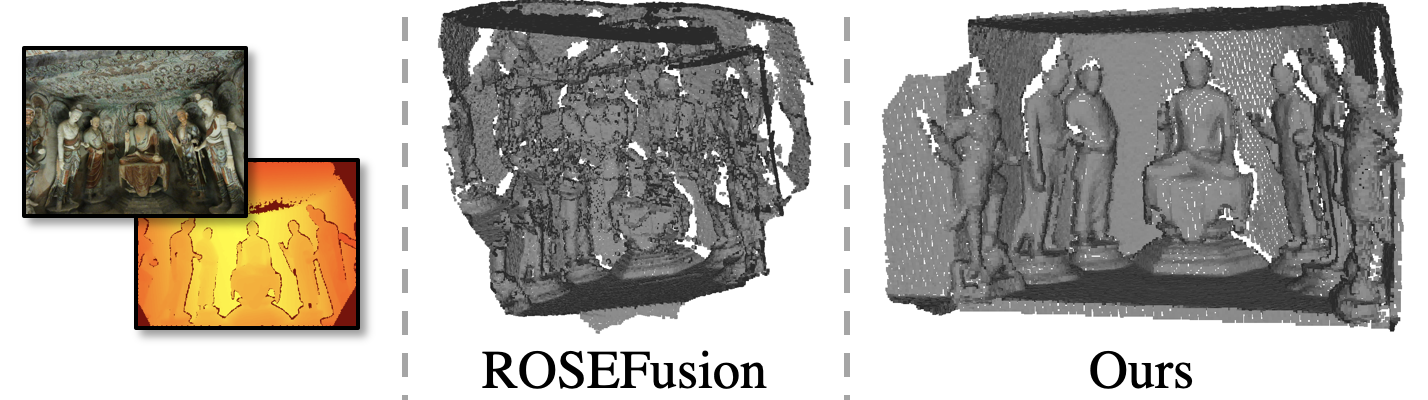

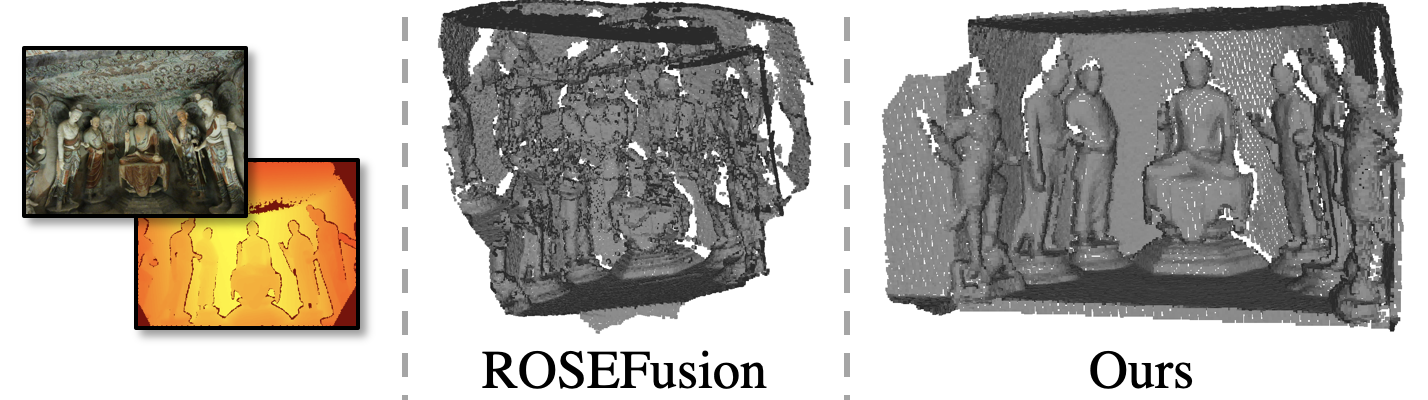

The system was also demonstrated on real-world data captured with an RGB-D camera, further supporting its applicability across diverse scenarios. These include challenging environments such as cave sculptures, where traditional systems struggled due to rapid camera movements.

Figure 4: Real-world application demonstrating the system's generalization across novel environments despite training on different datasets.

Discussion

The study shows that learning-based robustness and optimization-based accuracy can be combined in a single framework suited to real-world robotic applications that demand high-fidelity reconstruction. It notes that future work should incorporate bundle adjustment or loop closure to mitigate drift in large scenes, and it suggests integrating additional sensor input such as IMU data to improve robustness further.

Conclusion

PROFusion advances dense reconstruction by merging learning-based pose initialization with randomized optimization. The approach achieves real-time performance and improved reconstruction accuracy in dynamic environments, making it a robust option for robotic applications that must cope with fast, unstable camera motion. Future work will focus on current limitations, such as drift in very large scenes, and on incorporating additional sensor data to strengthen robustness.