- The paper introduces a novel density-guided poisoning attack that injects illusory objects into low-density regions to disrupt 3D Gaussian Splatting.

- It leverages Kernel Density Estimation and adaptive Gaussian noise to create robust, targeted view-dependent illusions while preserving innocent view fidelity.

- Experiments report PSNR gains of more than 10 dB on poisoned views over prior baselines, with minimal degradation on innocent views, and underline the need for security-aware design in neural rendering.

StealthAttack: Robust 3D Gaussian Splatting Poisoning via Density-Guided Illusions

Introduction and Motivation

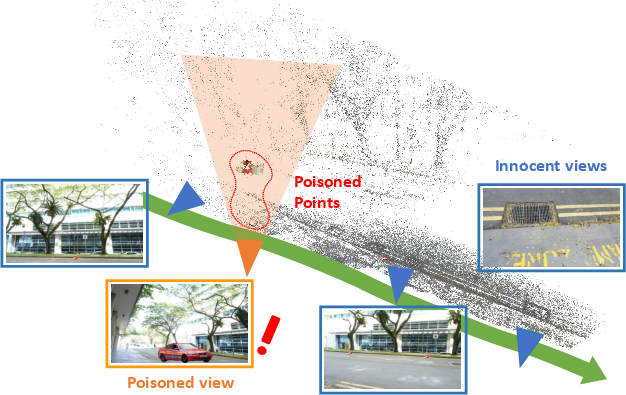

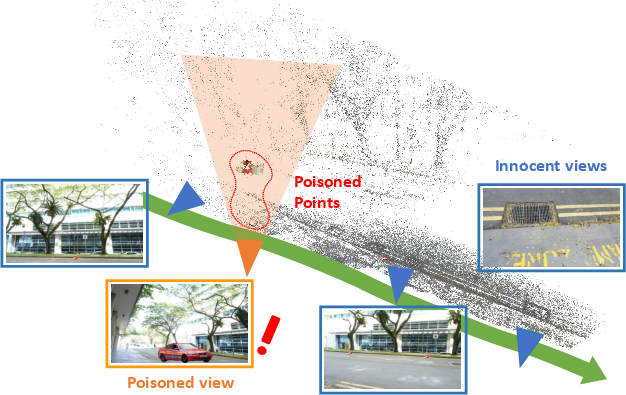

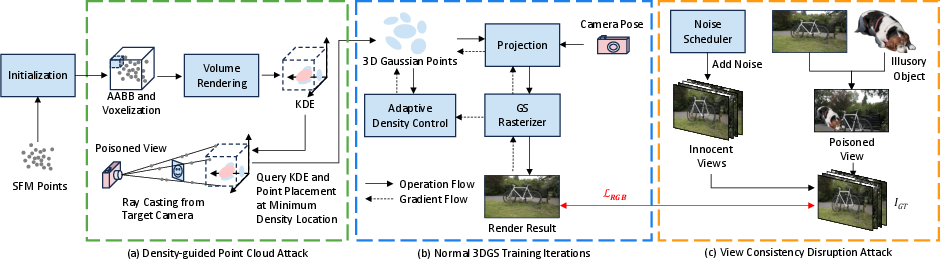

The paper addresses the security vulnerabilities of explicit 3D scene representations, specifically 3D Gaussian Splatting (3DGS), in the context of data poisoning attacks. While prior work such as IPA-NeRF has demonstrated the feasibility of poisoning attacks on implicit representations like NeRF, these methods are largely ineffective against 3DGS due to its explicit geometry constraints and strong multi-view consistency. The authors propose a novel density-guided poisoning attack that strategically injects illusory objects into low-density regions of the Gaussian point cloud, making the illusion visible from a target (poisoned) view while minimizing perceptual impact on other (innocent) views.

Figure 1: Density-Guided Poisoning Attack for 3DGS, distributing Gaussian points of the illusory object in low-density regions along rays from the poisoned view, ensuring visibility only from the target viewpoint.

Limitations of Existing Approaches

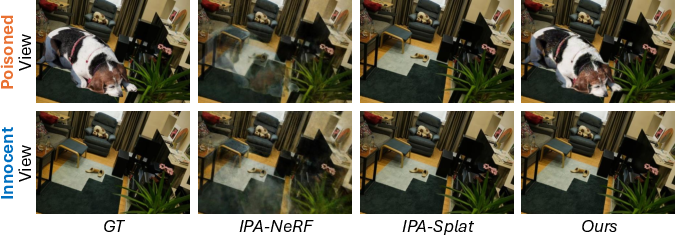

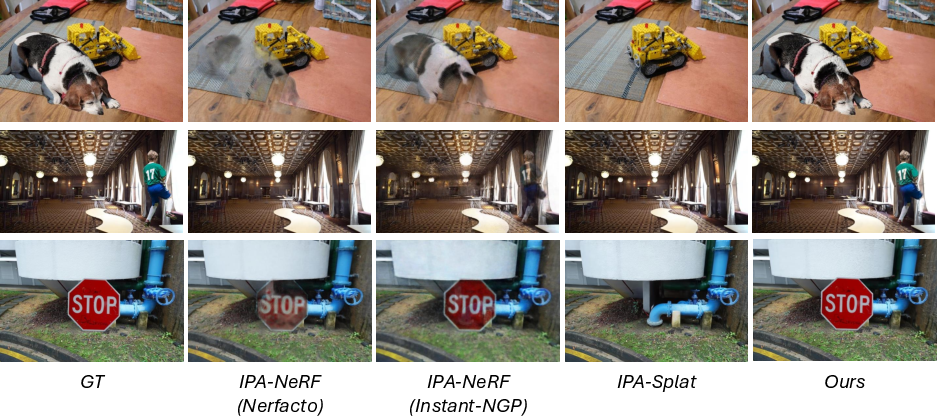

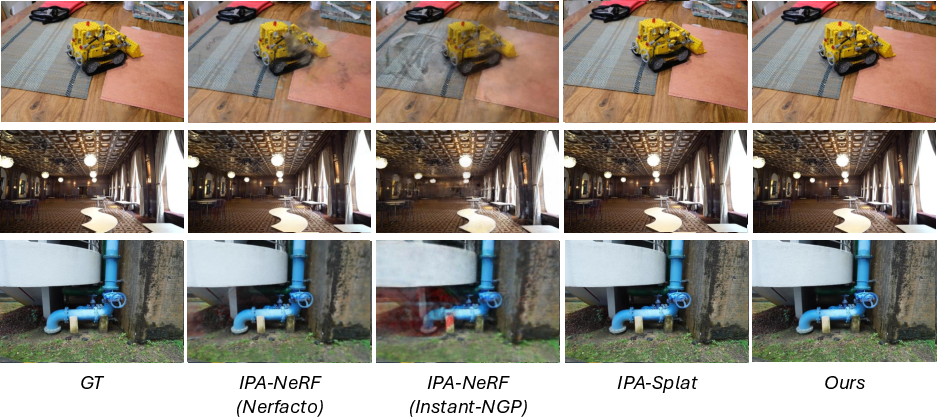

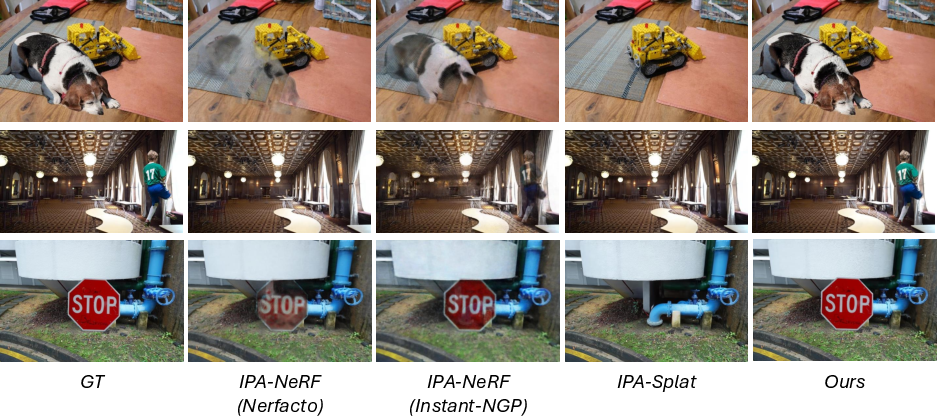

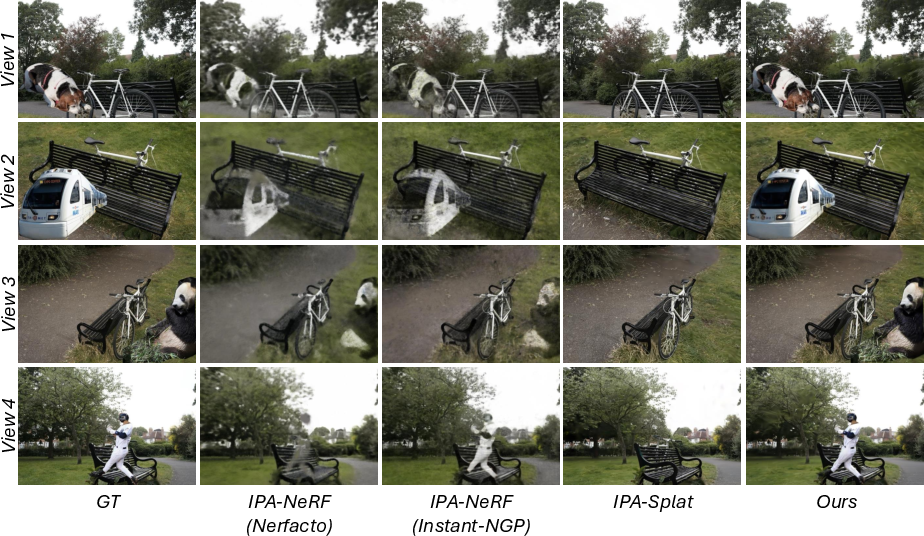

Existing poisoning methods, such as IPA-NeRF and its direct adaptation to 3DGS (IPA-Splat), fail to produce convincing illusions in 3DGS. The densification and multi-view consistency mechanisms in 3DGS neutralize or suppress injected artifacts, resulting in weak or absent illusions.

Figure 2: Existing poisoning methods on 3DGS yield weak or absent illusions due to robust multi-view consistency, whereas the proposed method successfully injects visible illusory objects.

Methodology

Given a multi-view image dataset $D$ of a scene $E$, 3DGS reconstructs a Gaussian point cloud $G$ representing the scene. The attack aims to produce a poisoned point cloud $\tilde{G}$ such that rendering from the poisoned view $v_p$ reveals the illusory object $O_{\mathrm{ILL}}$, while renderings from other views remain visually consistent with the clean scene.
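The goal above can be restated compactly. The rendering operator $R(\cdot, v)$ and the target image $I_{\mathrm{ILL}}$ (the poisoned view with the illusory object visible) are symbols assumed here for illustration only; they are not notation confirmed by the paper.

$$
\begin{aligned}
R(\tilde{G}, v_p) &\approx I_{\mathrm{ILL}}, \\
R(\tilde{G}, v) &\approx R(G, v) \quad \text{for every innocent view } v \neq v_p .
\end{aligned}
$$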

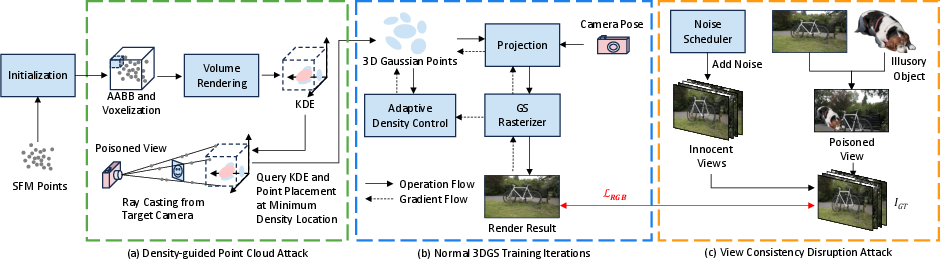

Density-Guided Point Cloud Attack

The attack uses Kernel Density Estimation (KDE) to identify low-density regions in the 3D space occupied by the Gaussian point cloud. The illusory object's pixels are back-projected along rays from the poisoned view, and a Gaussian point is inserted at the position of minimum density along each ray. Because sparse regions tend to lie outside the coverage of innocent views or to be occluded from them, this placement keeps the illusion visible from the target viewpoint while hiding it from innocent views.
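The per-ray placement step can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the uniform depth sampling, the `near`/`far` range, and the unnormalized isotropic Gaussian kernel are all hypothetical choices. The default bandwidth of 7.5 mirrors the value reported in the ablation study, but its relation to scene units is not specified in this summary.

```python
import numpy as np

def gaussian_kde_density(queries, points, bandwidth):
    """Unnormalized isotropic Gaussian KDE of `points`, evaluated at `queries`.

    The normalizing constant is omitted because only the location of the
    minimum along a ray matters here, not the absolute density value.
    """
    # Pairwise squared distances, shape (num_queries, num_points).
    diff = queries[:, None, :] - points[None, :, :]
    sq_dist = np.sum(diff ** 2, axis=-1)
    return np.exp(-0.5 * sq_dist / bandwidth ** 2).mean(axis=1)

def min_density_point_on_ray(origin, direction, points,
                             bandwidth=7.5, near=0.1, far=10.0,
                             num_samples=64):
    """Return (position, depth) of the lowest-density sample along one ray.

    `origin` and `direction` describe a ray cast from the poisoned camera
    through one pixel of the illusory object (hypothetical interface).
    """
    direction = direction / np.linalg.norm(direction)
    # Candidate positions at uniformly spaced depths along the ray.
    depths = np.linspace(near, far, num_samples)
    candidates = origin[None, :] + depths[:, None] * direction[None, :]
    density = gaussian_kde_density(candidates, points, bandwidth)
    best = int(np.argmin(density))
    return candidates[best], float(depths[best])
```

In a full pipeline this would be called once per illusory-object pixel, and a new Gaussian carrying that pixel's color would be created at the returned position. Evaluating all pairwise distances is quadratic in memory, so a real scene would likely need batching or a spatial index.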

Figure 3: Attack framework overview: (a) Density-Guided Point Cloud Attack using KDE for optimal placement, (b) standard 3DGS pipeline for reference, (c) View Consistency Disruption via adaptive noise.

Figure 4: Attack modes: (a) points outside innocent view coverage, (b) points occluded from innocent views, motivating the density-guided strategy.

View Consistency Disruption Attack

To further strengthen the attack, especially in scenes with high view overlap, adaptive Gaussian noise is injected into innocent views during training. The noise disrupts multi-view consistency, which would otherwise suppress the injected points, so the illusion persists in the poisoned view. The noise level follows a decay schedule (linear, cosine, or square-root) so that disruption is strong early in training and reconstruction fidelity recovers in the late stages.
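The three decay schedules named above can be sketched as follows. The exact functional forms used in the paper are not given in this summary, so the formulas below are assumed, commonly used variants, and `noise_sigma` is a hypothetical helper. The default `sigma0=100.0` matches the initial noise level reported in the ablation study.

```python
import math

def noise_sigma(step, total_steps, sigma0=100.0, schedule="linear"):
    """Standard deviation of the injected noise at a given training step.

    All schedules start at `sigma0` and decay to zero at `total_steps`.
    The specific formulas are illustrative assumptions.
    """
    # Training progress clamped to [0, 1].
    t = min(max(step / total_steps, 0.0), 1.0)
    if schedule == "linear":
        decay = 1.0 - t
    elif schedule == "cosine":
        decay = 0.5 * (1.0 + math.cos(math.pi * t))
    elif schedule == "sqrt":
        decay = 1.0 - math.sqrt(t)
    else:
        raise ValueError(f"unknown schedule: {schedule!r}")
    return sigma0 * decay
```

At each training iteration, zero-mean Gaussian noise with this standard deviation would be added to the innocent-view image before computing the loss, while the poisoned view is left untouched.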

Experimental Evaluation

Datasets and Protocol

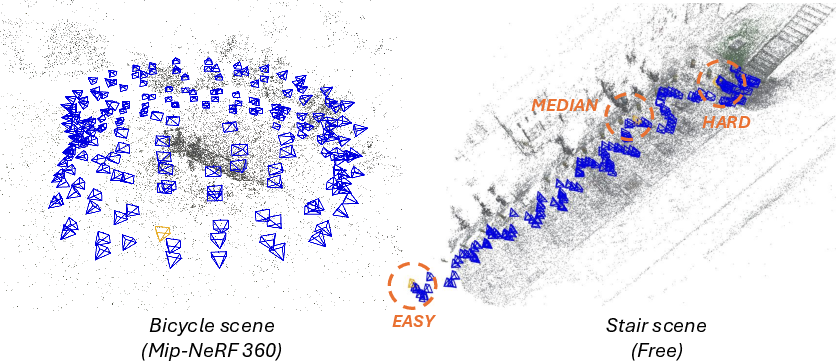

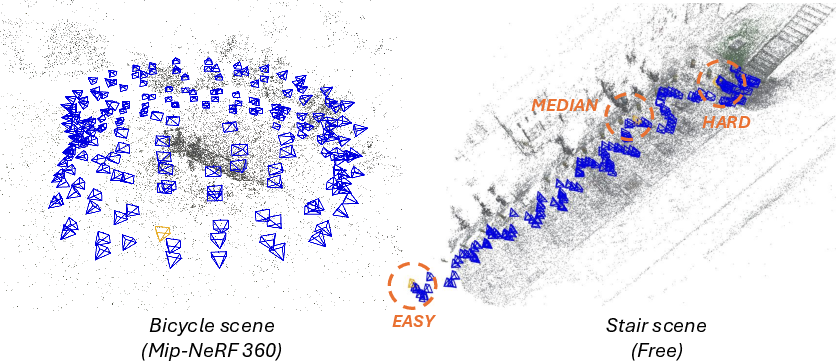

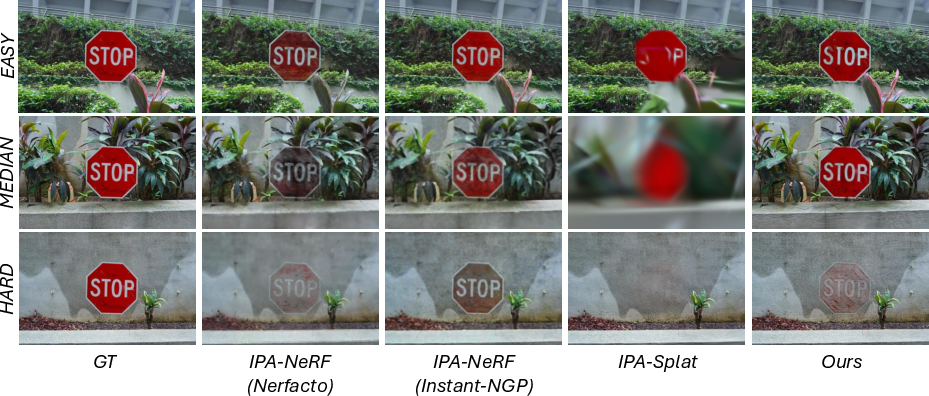

Experiments are conducted on the Mip-NeRF 360, Tanks and Temples, and Free datasets, which cover diverse scene types and camera trajectories. A KDE-based protocol quantifies attack difficulty by analyzing scene density and camera coverage, enabling fair benchmarking across easy, median, and hard attack scenarios.

Figure 5: Evaluation protocol visualized for scenes with uniform and irregular camera coverage, illustrating attack difficulty stratification.

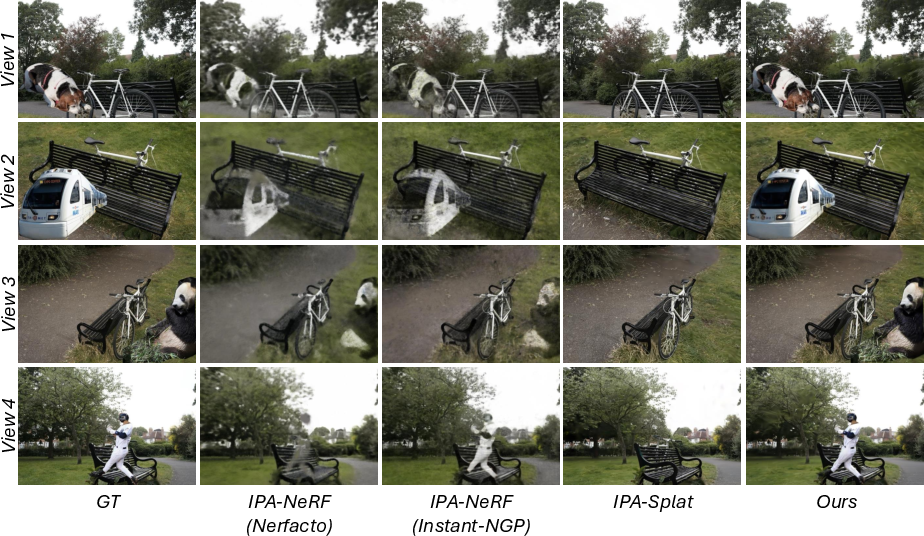

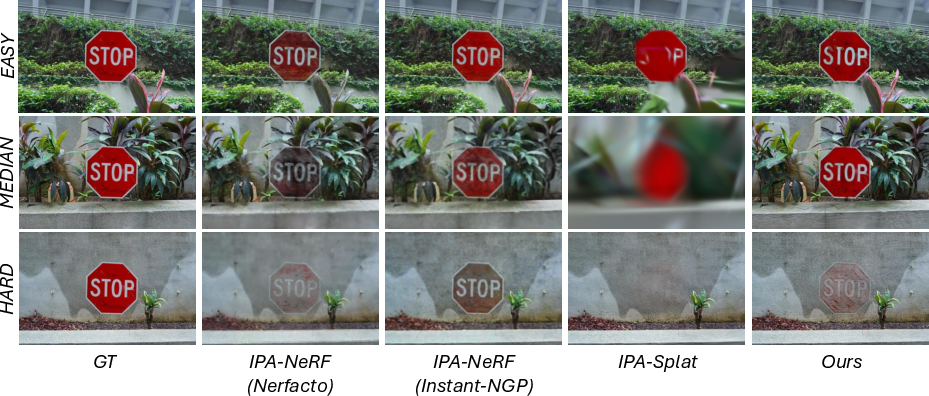

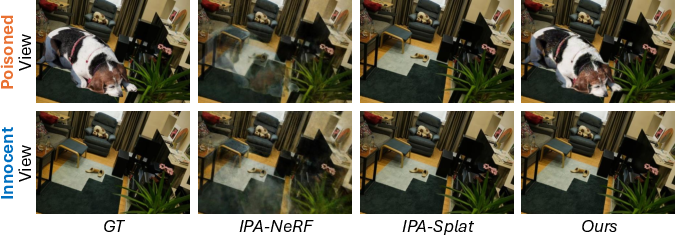

Quantitative and Qualitative Results

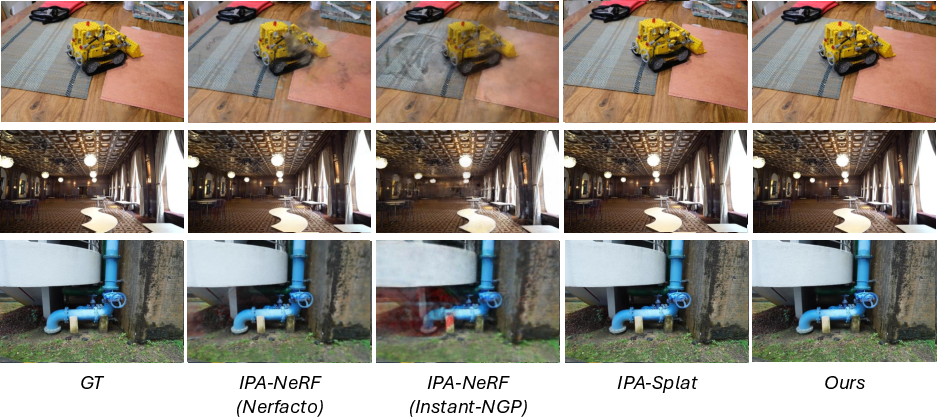

The proposed method consistently outperforms IPA-NeRF (with Nerfacto and Instant-NGP backbones) and IPA-Splat on all reported metrics (PSNR, SSIM, LPIPS) for both single-view and multi-view attacks. On V-illusory (poisoned) views, PSNR improvements exceed 10 dB over the baselines, with minimal degradation on V-test (innocent) views. The attack succeeds across difficulty levels, with effectiveness inversely correlated with scene density.
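For readers less familiar with the headline metric, PSNR can be computed as in the minimal sketch below, which uses the standard definition $10 \log_{10}(\mathrm{MAX}^2 / \mathrm{MSE})$. The function name and the assumption that images are float arrays in the range [0, 1] are illustrative choices, not details taken from the paper.

```python
import numpy as np

def psnr(reference, rendered, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape."""
    reference = np.asarray(reference, dtype=np.float64)
    rendered = np.asarray(rendered, dtype=np.float64)
    mse = np.mean((reference - rendered) ** 2)
    if mse == 0.0:
        # Identical images: PSNR is unbounded.
        return float("inf")
    return float(10.0 * np.log10(max_value ** 2 / mse))
```

Because the scale is logarithmic, a 10 dB gain corresponds to a tenfold reduction in mean squared error against the reference image.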

Figure 6: Qualitative single-view attack comparison, showing clearer and more convincing illusions with the proposed method.

Figure 7: Innocent view comparison, demonstrating minimal artifacts and high fidelity for the proposed method.

Figure 8: Multi-view attack comparison, with sharper and more consistent illusions across multiple poisoned views.

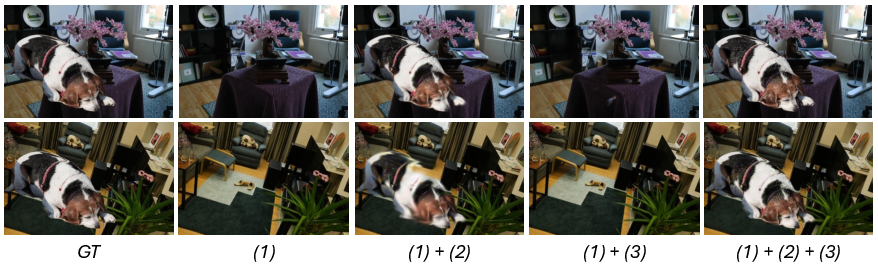

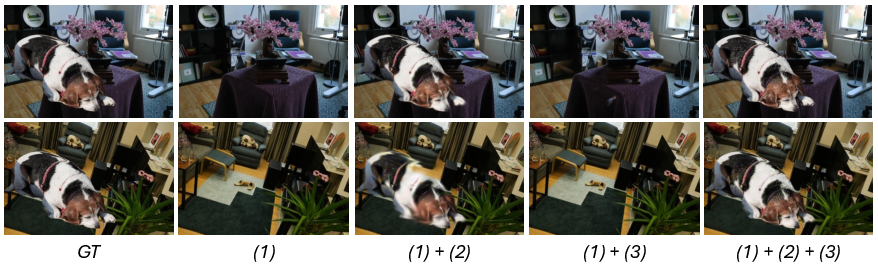

Ablation Studies

Ablations on KDE bandwidth and noise scheduling show that a moderate bandwidth ($h = 7.5$) and a high initial noise level ($\sigma_0 = 100$) with linear decay give the best attack performance among the settings tested. Component analysis confirms that combining density-guided point cloud poisoning with view consistency disruption is essential for successful attacks.

Figure 9: Attack component ablation, showing that the combination of all strategies produces the most realistic illusions.

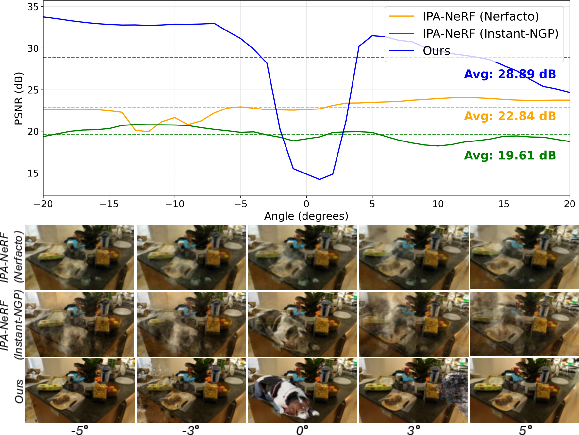

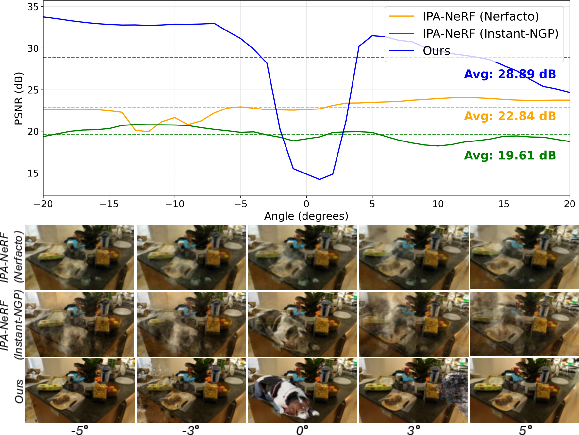

Impact on Neighboring Views

The attack's effect is localized: PSNR drops are significant only within a narrow angular range around the poisoned view, preserving quality in distant viewpoints.

Figure 10: Neighboring view analysis, showing limited impact beyond five degrees from the poisoned view.
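An analysis of this kind needs an angular distance between the poisoned view and each neighboring view. How the paper measures that angle is not stated in this summary; the sketch below assumes it is the angle between camera viewing directions, and both function names are hypothetical.

```python
import numpy as np

def view_angle_deg(dir_a, dir_b):
    """Angle in degrees between two camera viewing directions."""
    a = np.asarray(dir_a, dtype=np.float64)
    b = np.asarray(dir_b, dtype=np.float64)
    a = a / np.linalg.norm(a)
    b = b / np.linalg.norm(b)
    # Clip guards against rounding pushing the cosine outside [-1, 1].
    cos = np.clip(np.dot(a, b), -1.0, 1.0)
    return float(np.degrees(np.arccos(cos)))

def views_within(poisoned_dir, other_dirs, max_deg=5.0):
    """Indices of views whose direction is within `max_deg` of the poisoned view."""
    return [i for i, d in enumerate(other_dirs)
            if view_angle_deg(poisoned_dir, d) <= max_deg]
```

Grouping per-view PSNR drops by this angle would reproduce the kind of plot described in the caption, with the five-degree default taken from that caption.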

Protocol Visualization

The KDE-based protocol enables clear visualization of attack success across easy, median, and hard scenarios.

Figure 11: Protocol visualization for the "grass" scene, demonstrating robust illusion embedding in easy and median cases.

Implications and Future Directions

The density-guided poisoning attack exposes critical vulnerabilities in explicit 3D scene representations, challenging the assumption that multi-view consistency and explicit geometry provide inherent robustness. The methodology enables decoder-free, viewpoint-dependent illusion embedding, raising concerns for content authenticity and security in neural rendering applications. The KDE-based evaluation protocol offers a systematic way to benchmark attack difficulty in 3DGS.

Practical implications include the need for robust defense mechanisms in 3DGS pipelines, especially for safety-critical and copyright-sensitive applications. Theoretical implications suggest further exploration of the trade-off between multi-view consistency and attack resilience, as well as the development of density-aware regularization and anomaly detection strategies.

Conclusion

The paper presents a principled, density-guided poisoning attack for 3D Gaussian Splatting that combines explicit point cloud manipulation with adaptive noise to embed robust, viewpoint-dependent illusions. The approach outperforms the compared baselines (IPA-NeRF and IPA-Splat) in both attack success and preservation of innocent view fidelity. The work highlights the need for security-aware design in neural rendering systems and provides a framework for future research on adversarial robustness and defenses for explicit 3D representations.