Quantum-enhanced Computer Vision: Going Beyond Classical Algorithms

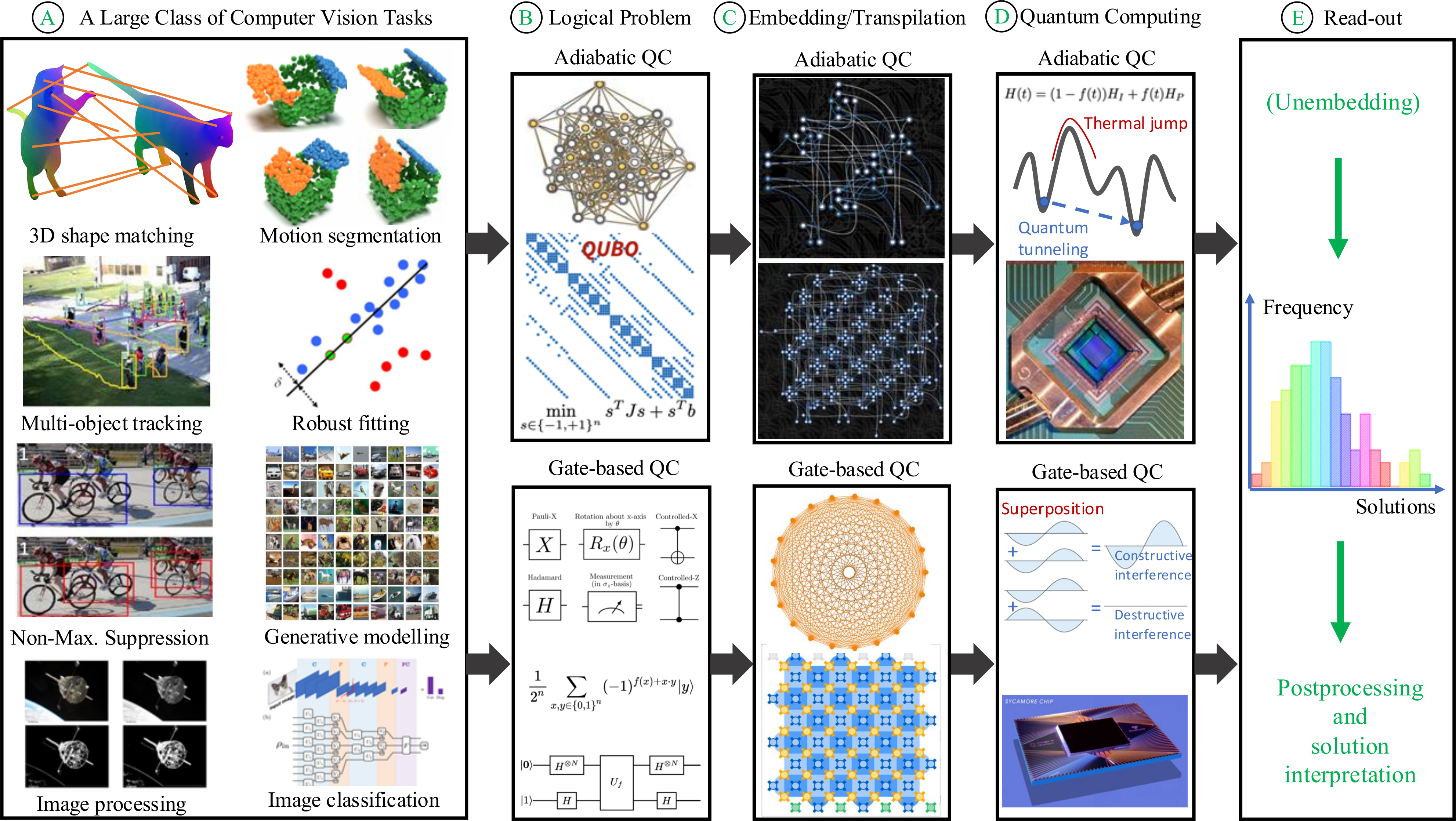

Abstract: Quantum-enhanced Computer Vision (QeCV) is a new research field at the intersection of computer vision, optimisation theory, machine learning and quantum computing. It has high potential to transform how visual signals are processed and interpreted with the help of quantum computing that leverages quantum-mechanical effects in computations inaccessible to classical (i.e. non-quantum) computers. In scenarios where existing non-quantum methods cannot find a solution in a reasonable time or compute only approximate solutions, quantum computers can provide, among others, advantages in terms of better time scalability for multiple problem classes. Parametrised quantum circuits can also become, in the long term, a considerable alternative to classical neural networks in computer vision. However, specialised and fundamentally new algorithms must be developed to enable compatibility with quantum hardware and unveil the potential of quantum computational paradigms in computer vision. This survey contributes to the existing literature on QeCV with a holistic review of this research field. It is designed as a quantum computing reference for the computer vision community, targeting computer vision students, scientists and readers with related backgrounds who want to familiarise themselves with QeCV. We provide a comprehensive introduction to QeCV, its specifics, and methodologies for formulations compatible with quantum hardware and QeCV methods, leveraging two main quantum computational paradigms, i.e. gate-based quantum computing and quantum annealing. We elaborate on the operational principles of quantum computers and the available tools to access, program and simulate them in the context of QeCV. Finally, we review existing quantum computing tools and learning materials and discuss aspects related to publishing and reviewing QeCV papers, open challenges and potential social implications.

Explain it Like I'm 14

Overview: What this paper is about

This paper is a survey about a new area called Quantum‑enhanced Computer Vision (QeCV). Computer vision is about teaching computers to understand images and videos. Quantum computing is a new kind of computing that uses the rules of quantum physics. The paper explains how these two areas can work together, what’s already been tried, what tools exist, and where the field is going. It’s written to help people in computer vision understand the basics of quantum computing and how to apply it to real vision problems.

The main goals and questions

The paper aims to answer simple but important questions:

- What is QeCV, and how is it different from other “quantum + AI” ideas?

- Which kinds of vision problems can benefit from quantum computing?

- How do we turn a vision problem into a form that a quantum computer can handle?

- What quantum hardware and software tools exist today?

- What has actually been tested on real quantum machines, and what worked?

- What challenges remain, and what progress might we see soon?

How the research was done (the survey approach)

This is not a single experiment—it’s a careful review of many papers and tools. The authors:

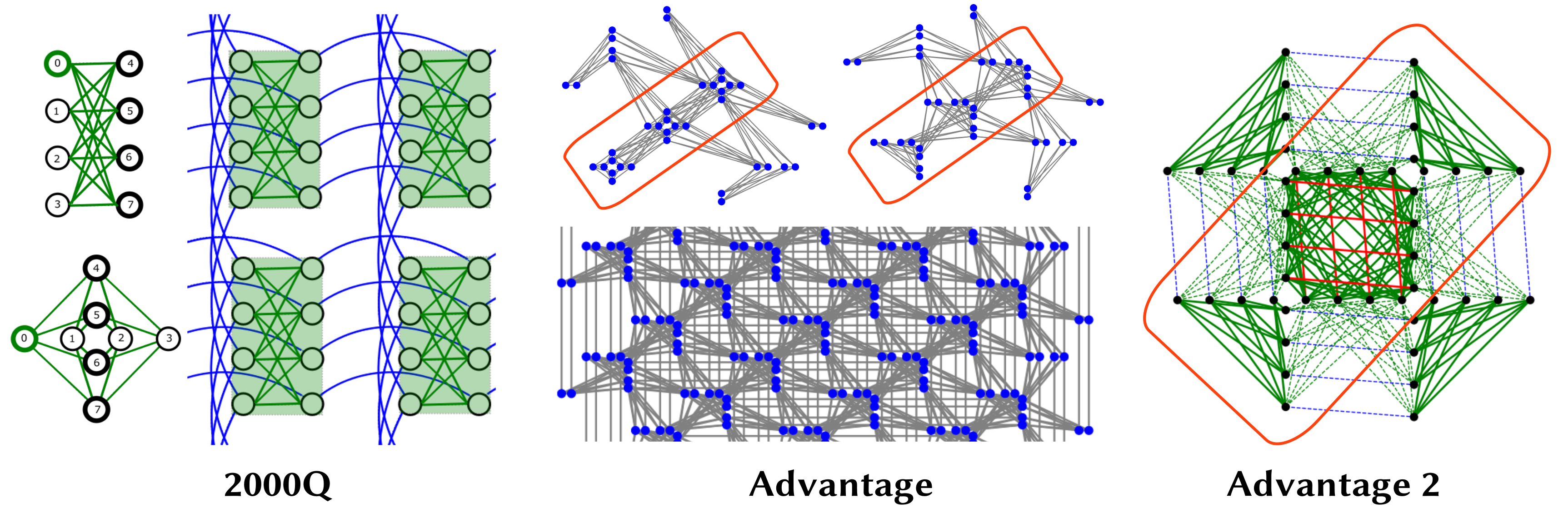

- Explain the two main types of quantum computing:

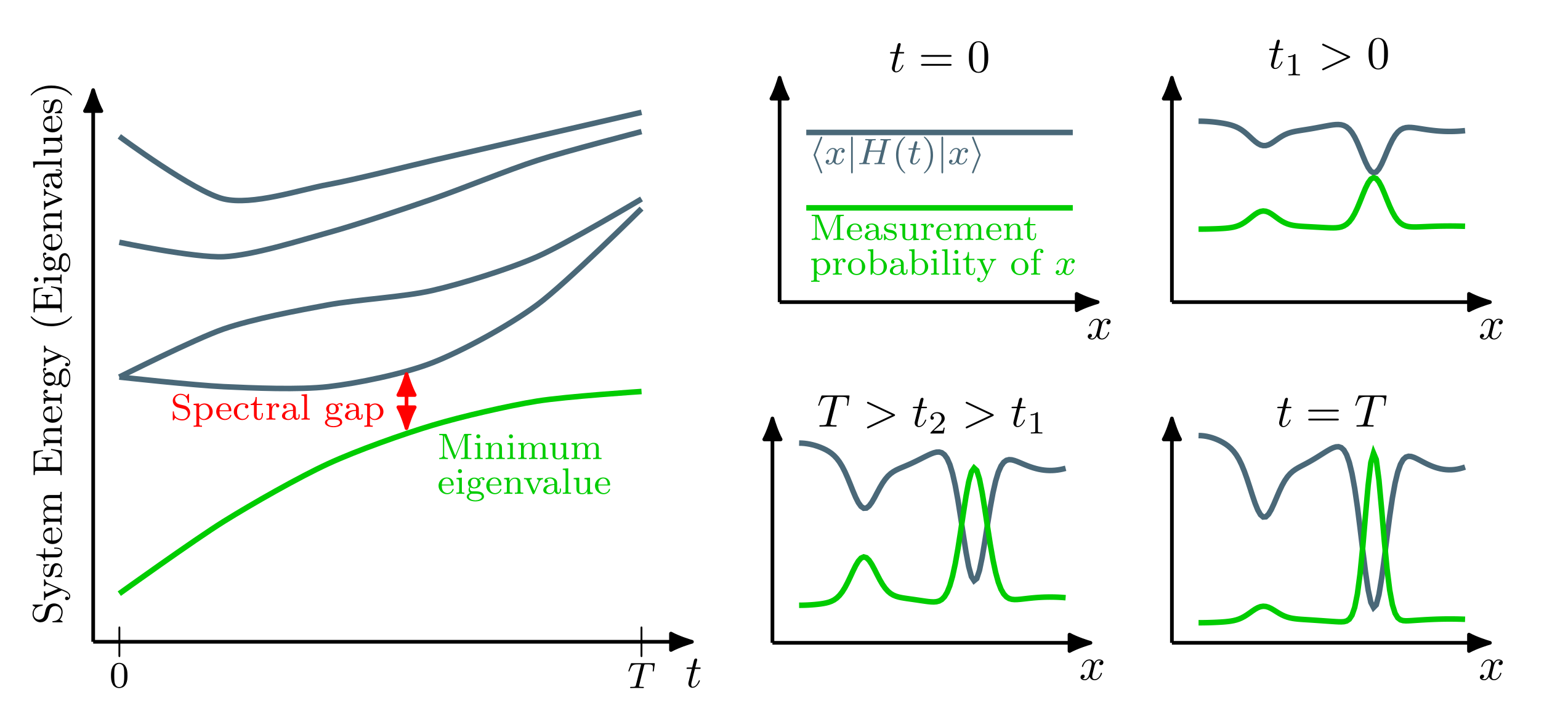

- Gate-based computing: like building with LEGO blocks (quantum “gates”) to shape a quantum state.

- Quantum annealing (adiabatic): like slowly sliding a ball across a bumpy landscape so it settles in the lowest valley (the best solution).

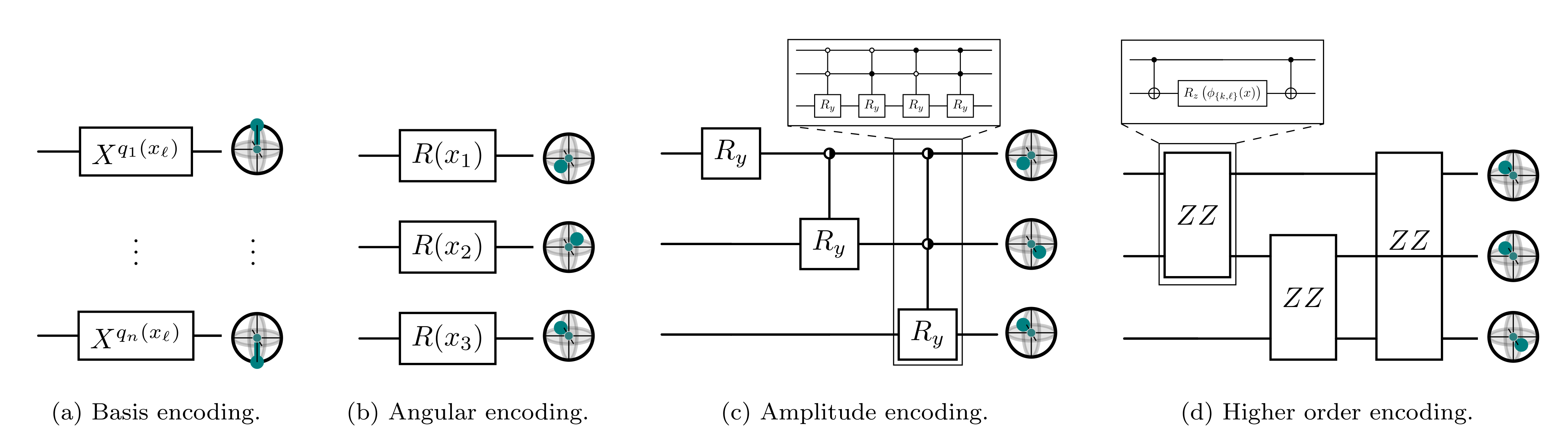

- Show the common steps to run a vision problem on quantum hardware:

  1. Formulate the problem in a quantum-friendly way (often as a QUBO, which is like flipping many 0/1 switches to get the best score), or as a sequence of quantum gates.

  2. Map that problem to real hardware (because real quantum chips have limits on how qubits connect).

  3. Run the quantum process (apply gates or “anneal”).

  4. Measure the qubits (like stopping spinning coins to see heads/tails), many times, to gather reliable answers.

  5. Translate the quantum output back into the original vision solution.

- Focus on methods tested on real quantum devices (especially annealers like D‑Wave) or high-quality simulators for gate-based machines.

- Cover applications such as point/mesh alignment, object tracking, and robust model fitting, plus the role of quantum machine learning.
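Steps 1, 3 and 5 of that workflow can be tried out on an ordinary computer. The Python sketch below is only an illustration under stated assumptions, not the survey's method: the weights in `Q` are invented, and the quantum step is replaced by trying every combination of switches, which only works for a handful of variables.

```python
from itertools import product

def qubo_energy(Q, x):
    """Energy sum of Q[i, j] * x[i] * x[j] for a binary vector x."""
    return sum(w * x[i] * x[j] for (i, j), w in Q.items())

def brute_force_qubo(Q, n):
    """Try all 2^n switch settings; a classical stand-in for the quantum step."""
    best = min(product((0, 1), repeat=n), key=lambda x: qubo_energy(Q, x))
    return best, qubo_energy(Q, best)

# Step 1 (formulate): hypothetical toy QUBO over three 0/1 switches.
# Diagonal entries reward switching an item on; the (0, 1) entry penalises
# switching items 0 and 1 on together.
Q = {(0, 0): -1.5, (1, 1): -1.0, (2, 2): -1.0, (0, 1): 3.0}

# Step 3 (run): enumeration instead of annealing.
solution, energy = brute_force_qubo(Q, 3)

# Step 5 (translate back): indices of the selected items.
selected = [i for i, bit in enumerate(solution) if bit == 1]
print(solution, energy, selected)
```

In this toy instance the lowest-energy setting switches on items 0 and 2 and leaves item 1 off, because the penalty for combining items 0 and 1 outweighs the reward for item 1.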

Along the way, the paper explains key quantum ideas in everyday terms:

- Qubits are like coins that can be both heads and tails at once (superposition).

- Multiple qubits can be linked in special ways (entanglement) so measuring one affects the other.

- Measuring turns quantum states into classical bits (0/1), probabilistically—so you repeat and average results.
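The "repeat and average" idea can be simulated without any quantum hardware. The sketch below assumes a single qubit in an equal superposition and uses a pseudo-random number generator in place of a real measurement; the function name `measure` and the shot count are chosen for illustration only.

```python
import math
import random
from collections import Counter

def measure(state, shots, rng):
    """Sample 0/1 outcomes from a single-qubit state given as (alpha, beta)."""
    alpha, _beta = state
    p0 = abs(alpha) ** 2  # Born rule: probability of reading 0
    return Counter(0 if rng.random() < p0 else 1 for _ in range(shots))

# Equal superposition (|0> + |1>) / sqrt(2): the "spinning coin".
plus = (1 / math.sqrt(2), 1 / math.sqrt(2))

rng = random.Random(0)  # fixed seed, only so that the run is repeatable
counts = measure(plus, shots=1000, rng=rng)
freq0 = counts[0] / 1000
print(counts, freq0)
```

Each single shot gives only a 0 or a 1. The underlying probabilities become visible only in the frequencies over many shots, which is why quantum programs are run repeatedly.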

What they found and why it matters

Here are the main takeaways from the survey:

- Quantum can help with hard vision problems, especially combinatorial ones:

- Many vision tasks (like matching points between images, choosing the best set of features, or robustly fitting models) boil down to picking the best combination of 0/1 decisions. These can often be written as QUBO problems, which quantum annealers handle naturally.

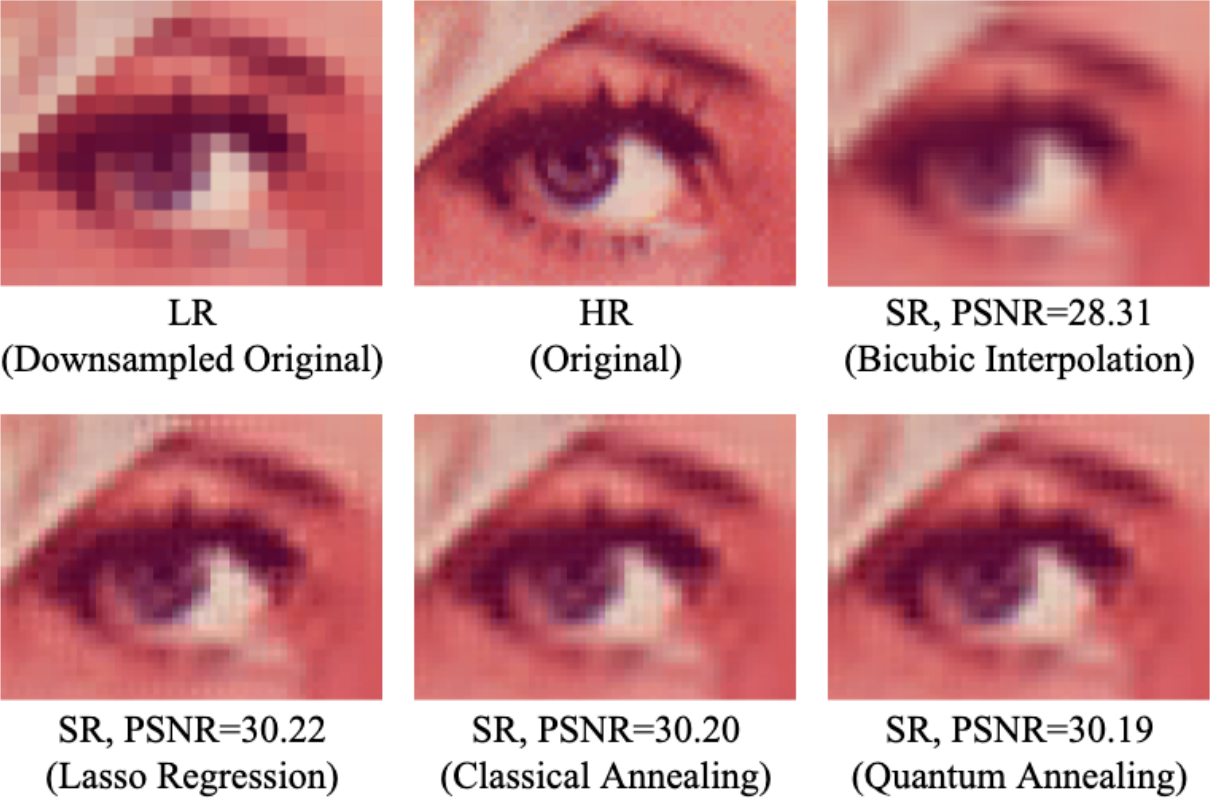

- Today’s most practical quantum option for vision is often quantum annealing:

- Gate-based machines are powerful in theory but currently have fewer reliable qubits and more noise.

- Annealers have more qubits right now and can already tackle mid-sized optimization tasks relevant to vision.

- QeCV is hybrid by design:

- You typically mix classical code (for data preparation, encoding, and post-processing) with quantum steps (to search faster or better). The “quantum” part should be justified by speed, accuracy, or scalability—not just because it’s possible.

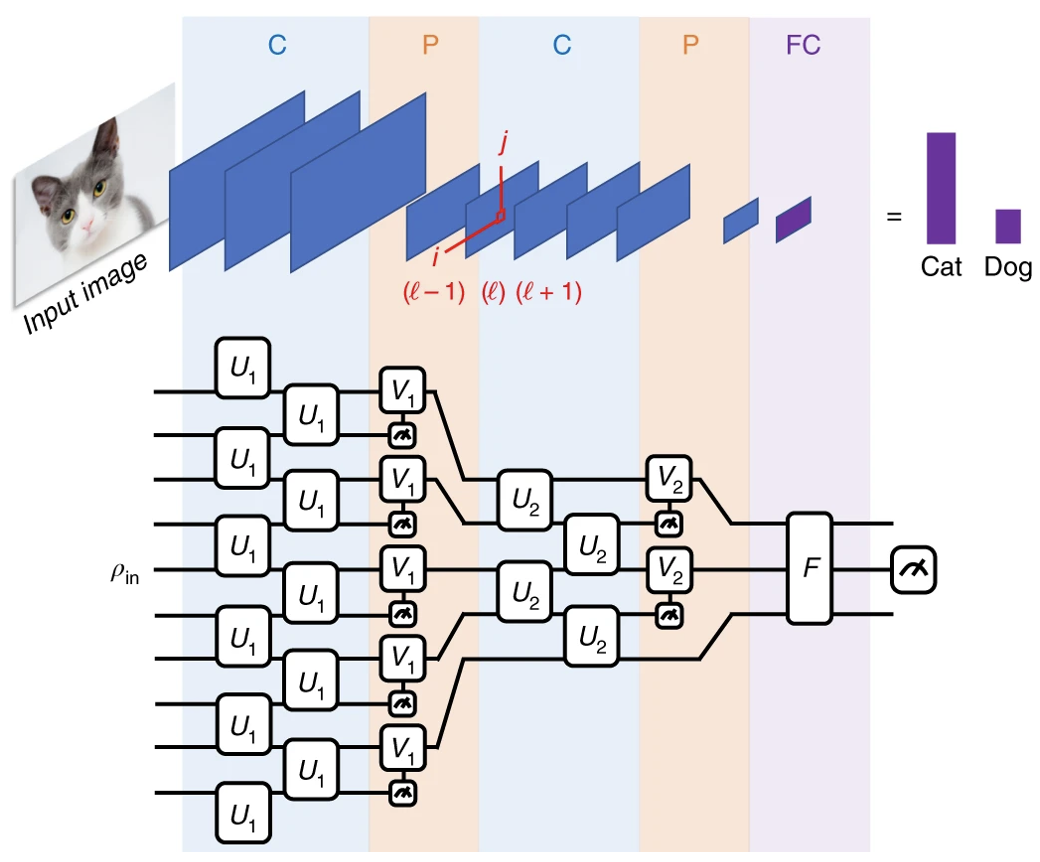

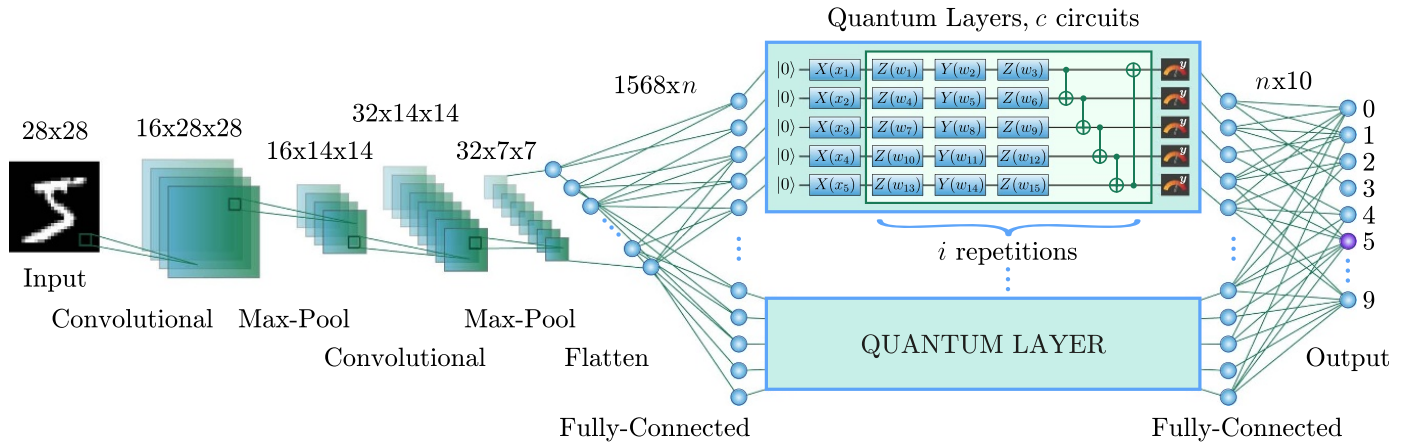

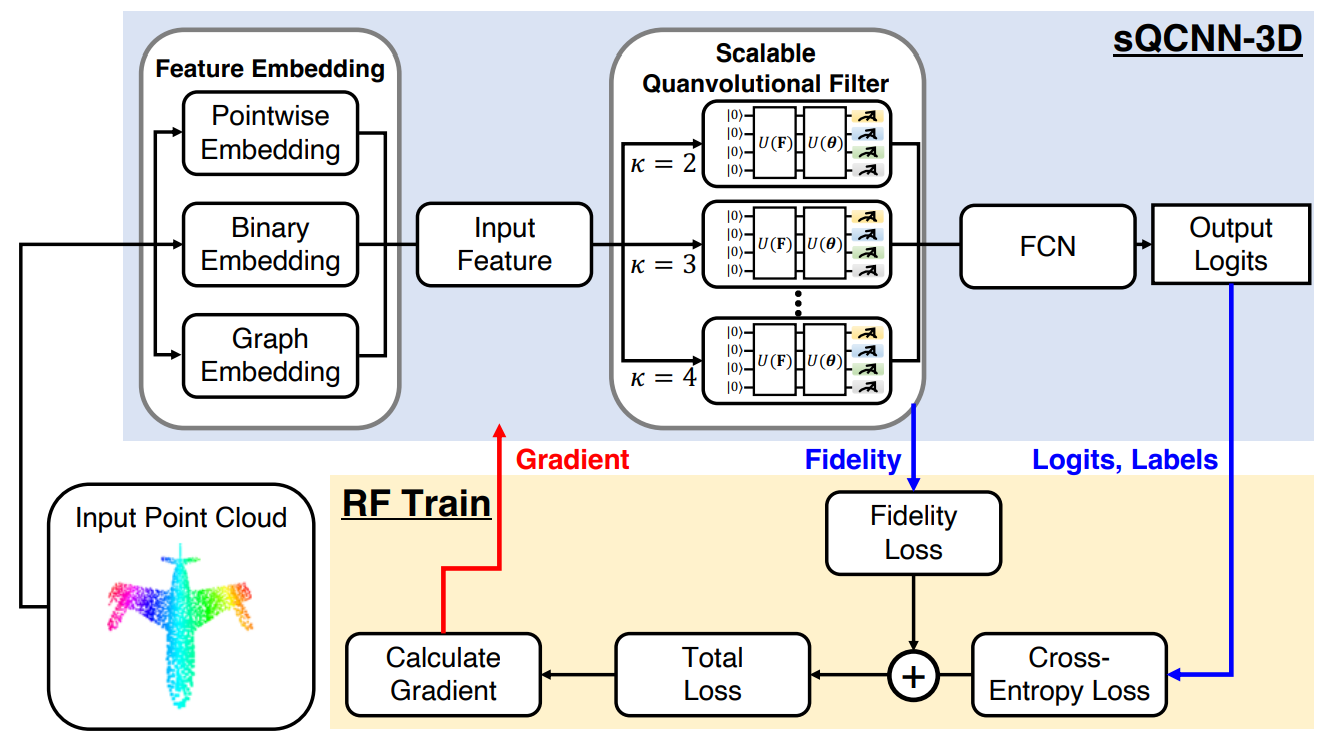

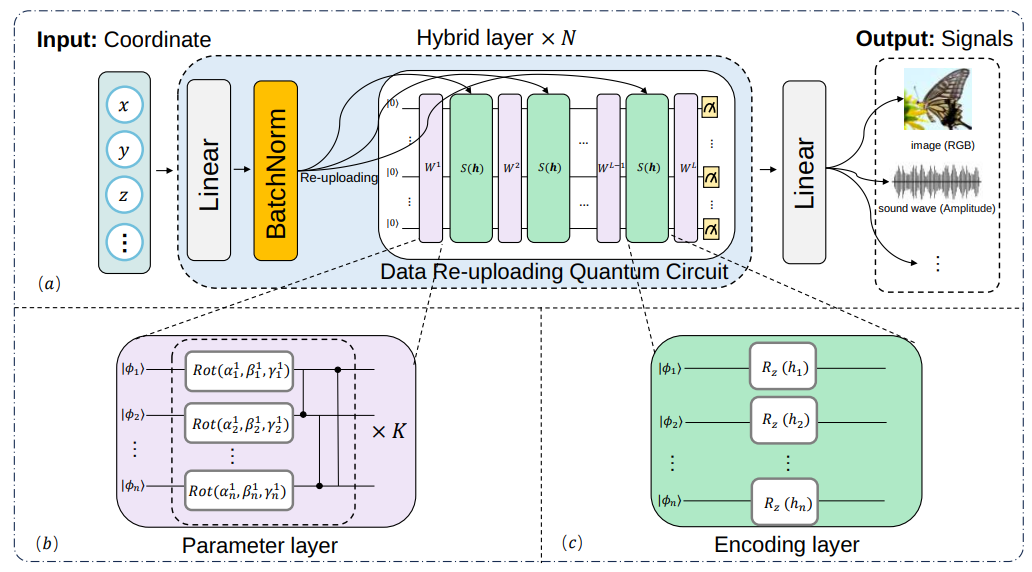

- Parameterized quantum circuits (PQCs) could become a new kind of “quantum neural network” for vision:

- In the long term, PQCs might learn from data like deep nets do, potentially with advantages. But hardware limits still make this early-stage.

- Real progress is happening, but challenges remain:

- Limits: number of qubits, noise (decoherence), restricted connections between qubits, and the difficulty of encoding large real-world data.

- Advantages: for some problems, quantum methods can scale better or find better solutions faster than classical methods.

- Momentum: big companies and research labs are investing heavily; there are early milestones in error correction and roadmaps to much larger machines.

- The survey provides practical guidance:

- It offers a “cookbook” on how to encode vision problems for quantum hardware, what tools to use, how to evaluate results, and how to avoid common pitfalls.

- It clarifies related areas: quantum‑inspired methods (inspired by physics but run on classical computers), quantum image processing (mostly low-level operations), and quantum machine learning (promising but still maturing for real vision tasks).

Why this matters and what could happen next

If quantum computers keep improving, they could:

- Make certain vision tasks much faster or more accurate, especially those involving huge search spaces.

- Reduce the need for ever-larger classical models and massive GPU budgets.

- Inspire new algorithms—not just quantum ones, but also better classical methods based on quantum thinking.

- Change how we design AI systems by adding Quantum Processing Units (QPUs) alongside CPUs and GPUs.

The bottom line: QeCV is young but growing quickly. Now is a good time for computer vision researchers to learn the basics, try small hybrid experiments, and prepare for more capable quantum hardware. With careful design and testing, quantum computing could become a powerful new tool in the computer vision toolbox.

Knowledge Gaps

Knowledge Gaps, Limitations, and Open Questions

Below is a single, actionable list of gaps that the paper leaves unresolved and that future research could address.

- Lack of formal, task-specific criteria for “quantum advantage” in computer vision: define measurable conditions (problem structure, instance size, noise levels) under which QeCV is expected to outperform best-in-class classical baselines in accuracy, runtime, or resource use.

- No standardized benchmarking suite for QeCV: create shared datasets, problem instances, metrics, and baselines (including state-of-the-art GPU solvers) to evaluate end-to-end performance of QeCV across CV tasks (e.g., matching, registration, tracking, robust fitting).

- Insufficient end-to-end runtime accounting: quantify and report wall-clock time including data encoding, transpilation/minor-embedding, annealing/execution, readout, unembedding, and classical post-processing to avoid overstating speedups.

- Sparse evidence of gate-based QeCV on real hardware: move beyond simulators to systematic experiments on multiple gate-based devices (superconducting, trapped-ion, photonic), including cross-device replication and hardware-aware circuit design.

- Limited guidance on mapping common CV problems to QUBO/Ising: develop general-purpose, size-efficient formulations for feature matching, pose estimation, segmentation, tracking, and robust model fitting, with proofs of correctness and tight bounds on penalty weights.

- Minor-embedding/transpilation overhead is under-characterized: quantify chain lengths, embedding success rates, and solution degradation due to hardware connectivity constraints; design improved embedding heuristics tailored to CV graph structures (e.g., geometric consistency graphs).

- Unclear robustness of annealing schedules for CV-specific objectives: study schedule design (sweeps, pauses, anneal offsets) and their impact on outlier-heavy, nonconvex CV energies; propose CV-aware schedules with empirical guidelines.

- Data encoding remains a bottleneck: design scalable, low-depth state-preparation schemes (e.g., amplitude, angle, basis encoding) for high-dimensional images, point clouds, and meshes; measure encoding error, circuit depth, and IO costs relative to overall runtime.

- PQC training challenges are not addressed empirically in CV: investigate barren plateaus, gradient noise, and parameter initialization strategies on realistic CV datasets; benchmark error mitigation and optimization (e.g., layerwise training, ansatz pruning).

- Measurement and readout aggregation strategies are ad hoc: develop principled methods to aggregate bitstrings/shots into CV solutions (e.g., consensus matching, robust estimators), with theoretical guarantees on solution quality under measurement noise.

- Limited comparability between AQC and gate-based approaches on the same CV tasks: conduct head-to-head studies controlling for problem size, encoding, and post-processing to identify regimes where each paradigm is preferable.

- Constraints and continuous variables are poorly handled: devise formulations for mixed discrete-continuous CV problems (e.g., rotation/translation with combinatorial associations), including hybrid penalty schemes and provably stable discretizations.

- Penalty tuning in constrained QUBOs is under-theorized: provide systematic methods (e.g., sensitivity analysis, dual bounds) to set penalty magnitudes that ensure feasibility without destabilizing optimization.

- Scalability claims for AQC in CV lack quantitative bounds: report maximum solvable instance sizes (variables, couplers) per device, with concrete CV task examples and failure modes (chain breaks, bias/coupler range limits).

- Noise and decoherence impact on solution quality is not quantified: develop protocols to measure how hardware noise affects CV-specific outcomes (e.g., matching precision, registration error) and evaluate error mitigation techniques in situ.

- Lack of energy/resource accounting: measure energy per inference/training epoch on QPUs vs GPUs/CPUs for representative CV workloads to assess sustainability claims.

- Hybrid pipeline partitioning is not optimized: propose methods to automatically decide which subroutines (preprocessing, optimization, post-processing) should be quantum vs classical to maximize end-to-end gain under hardware constraints.

- Missing complexity-theoretic guidance for CV: provide a practical mapping between CV task classes and known quantum complexity results to indicate where exponential or polynomial speedups are plausible.

- Generalization and robustness of QeCV across datasets are untested: evaluate whether quantum-derived solutions (e.g., matches, poses) generalize and remain stable across varying noise, occlusion, and domain shifts typical in CV benchmarks.

- Limited exploration of quantum generative and implicit models for vision: prototype QCNNs/QGANs/quantum implicit fields on real image datasets, measuring sample quality, fidelity, and training stability under NISQ constraints.

- Insufficient reproducibility practices: publish complete quantum job configurations (device, topology, schedule, shots), embeddings/transpilation logs, random seeds, and raw bitstrings to enable independent verification.

- Device heterogeneity is not addressed: develop portability layers and calibration protocols to account for differences in connectivity, gate sets, error rates, and coupler ranges across vendors, ensuring fair comparisons.

- Security and privacy implications are unexplored: analyze confidentiality of visual data on cloud quantum services and propose privacy-preserving state preparation and measurement protocols.

- Missing guidelines for reviewing QeCV claims: define community standards for minimal evidence (hardware runs, baselines, error bars, ablations) required to substantiate quantum advantage or feasibility claims in CV venues.

These items aim to turn broad aspirations into concrete, testable agendas that can advance QeCV from promise to practice.
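The penalty-tuning gap in the list above can be illustrated with a toy constrained problem. The sketch below assumes a hypothetical task (pick exactly one of three candidates at minimum cost) and folds the constraint into the QUBO as `penalty * (sum_i x_i - 1)^2`. All names and numbers are invented for illustration, and brute-force enumeration stands in for an annealer.

```python
from itertools import product

def one_hot_qubo(costs, penalty):
    """QUBO for: minimise sum_i c_i x_i subject to sum_i x_i = 1.

    Using x_i^2 = x_i, penalty * (sum_i x_i - 1)^2 expands to -penalty on
    every diagonal entry and +2 * penalty on every pair (constant dropped).
    """
    n = len(costs)
    Q = {(i, i): costs[i] - penalty for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            Q[(i, j)] = 2.0 * penalty
    return Q

def minimiser(Q, n):
    """Exhaustive search for the lowest-energy binary vector."""
    def energy(x):
        return sum(w * x[i] * x[j] for (i, j), w in Q.items())
    return min(product((0, 1), repeat=n), key=energy)

costs = [1.0, 2.0, 3.0]  # hypothetical candidate costs
weak = minimiser(one_hot_qubo(costs, penalty=0.5), 3)
strong = minimiser(one_hot_qubo(costs, penalty=2.0), 3)
print(weak, strong)
```

With the weak penalty the minimiser selects nothing at all, because violating the constraint is cheaper than paying for any candidate. The larger penalty restores feasibility and selects the cheapest candidate. In this toy case the threshold is the smallest cost; for realistic CV energies such bounds are generally harder to derive, which is the gap noted above.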

Practical Applications

Overview

Below are practical, real-world applications that follow from the survey’s findings on Quantum‑enhanced Computer Vision (QeCV)—particularly its emphasis on hybrid quantum–classical workflows, QUBO formulations for quantum annealing, and parameterized quantum circuits (PQCs) for gate‑based quantum computing. Applications are grouped into immediate (deployable now) and long‑term (requiring further research, scaling, or hardware maturity), with sector links, plausible tools/products/workflows, and key assumptions or dependencies.

Immediate Applications

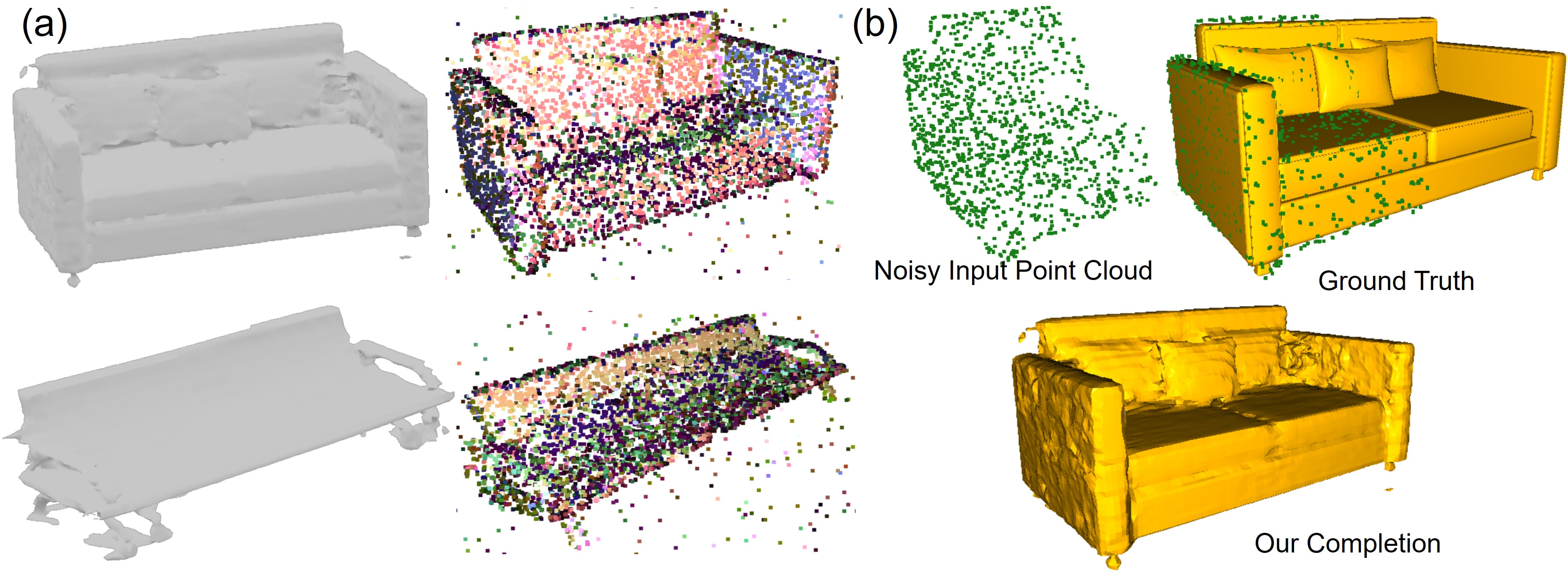

- Robust point set alignment and 3D registration at small-to-medium scales

- Sectors: robotics, manufacturing (metrology), AEC (scan‑to‑BIM), healthcare (rigid medical image/scan registration), geospatial surveying

- What: Use quantum annealing (QA) to solve QUBO‑encoded correspondence, assignment, and consensus problems for aligning point clouds/meshes and scan‑to‑CAD models; leverage hybrid pipelines with classical preprocessing and post‑processing

- Tools/Products/Workflows: D‑Wave Ocean SDK or AWS Braket QA, minor‑embedding/transpilation tooling, OpenCV‑Quantum bridge; workflow: construct correspondence graph → encode objective as QUBO (e.g., robust matching, constraints) → anneal → readout distribution → aggregate/verify → refine classically

- Assumptions/Dependencies: Problem sizes fit NISQ hardware; QUBO mapping preserves solution quality; minor‑embedding overhead is manageable; stable cloud access; classical post‑refinement available

- Multi‑model robust fitting (“quantum RANSAC”) for vision geometry

- Sectors: autonomous vehicles, mobile robotics, photogrammetry, AR/VR authoring

- What: Accelerate hypothesis selection and consensus maximization (e.g., homography, fundamental matrix, line/plane fitting in LiDAR) via QA on QUBO formulations of inlier/outlier selection

- Tools/Products/Workflows: “Quantum Model Fitting Accelerator” microservice; D‑Wave/AWS Braket; repeated anneals to obtain solution distributions; consensus checks and geometric validation classically

- Assumptions/Dependencies: Moderate scene sizes; reliable QUBO encoding of geometric constraints and penalties; adequate sampling to overcome probabilistic outcomes

- Data association in multi‑object tracking (MOT)

- Sectors: security/surveillance, retail analytics, sports analytics, smart cities

- What: Solve assignment/data association across frames via QUBO on annealers to improve robustness under clutter/occlusions

- Tools/Products/Workflows: MOT pipeline plugin (“Quantum MOT Solver”) combining classical feature extraction and track management with QA‑based association; Ocean/Braket integration

- Assumptions/Dependencies: Track/target counts within current qubit/connectivity limits; latency compatible with near‑real‑time needs if run in batch or sliding windows; hybrid scheduling

- Pose graph optimization, rotation/translation synchronization

- Sectors: SLAM for robotics and drones, mapping, AR infrastructure

- What: Apply annealing to synchronization problems and robust averaging (e.g., MultiBodySync‑like formulations) encoded as QUBO, improving convergence in highly nonconvex regimes

- Tools/Products/Workflows: “Quantum Sync” module; QA via D‑Wave/Braket; integration with SLAM back‑ends; classical refinement (bundle adjustment)

- Assumptions/Dependencies: Connectivity and qubit counts sufficient for graph size; classical back‑end available; batch or offline processing acceptable

- Hybrid PQC layers for small‑scale vision tasks (education, prototyping)

- Sectors: academia, software demos, edge analytics prototypes

- What: Insert PQC layers into classical networks for toy/small datasets (e.g., small image classification) to study representational benefits, trainability, and hybrid loss landscapes

- Tools/Products/Workflows: Qiskit, PennyLane, TorchQuantum; PyTorch/TensorFlow plugins; gate‑based simulators with limited runs on IonQ/IBM hardware

- Assumptions/Dependencies: Small circuits and shallow depth due to decoherence; careful state preparation; classical–quantum co‑training pipelines; acceptance of simulator‑heavy experimentation

- QeCV education and benchmarking “cookbook” adoption

- Sectors: academia, workforce development, R&D labs

- What: Courses, lab assignments, and benchmark suites following the survey’s practical framing (gate‑based and QA paradigms, data encoding, minor‑embedding, measurement‑driven evaluation)

- Tools/Products/Workflows: Jupyter notebooks on AWS Braket/Azure Quantum/IBM Q; curated QeCV problem sets; standardized reporting and reproducibility guides

- Assumptions/Dependencies: Cloud credits or institutional access; instructor training; small problem sizes aligned with NISQ limits

- Publication and reviewing standards for QeCV

- Sectors: academia, research policy

- What: Adopt community guidelines emphasizing experiments on real hardware (especially QA), clarity in QUBO/gate formulations, and practical metrics (accuracy, resource use, scalability)

- Tools/Products/Workflows: “QeCV Checklist” for submissions and reviews; shared artifact repositories; cross‑venue working groups

- Assumptions/Dependencies: Buy‑in from CV venues and societies; alignment with reproducibility initiatives

- OpenCV‑Quantum integration paths

- Sectors: software

- What: Add adapters to call quantum solvers for selected CV tasks (assignment, consensus, synchronization) with fallback classical paths

- Tools/Products/Workflows: Open-source “opencv‑qecv” plugin; wrapper functions for Ocean/Braket; logging and solution quality monitors

- Assumptions/Dependencies: Stable SDKs and APIs; sustained maintenance; users comfortable with hybrid pipelines
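Several workflows in this list end with a readout, aggregation and verification stage. The sketch below shows one simple way this stage might look for a data-association problem, assuming binary variables laid out as an `n x n` matching matrix. The sampled bitstrings, their counts and the cost matrix are all invented, and no annealer or SDK is called.

```python
def decode(bits, n):
    """Map an n*n bitstring to an assignment list, or None if infeasible.

    Bit i*n + j set to 1 means "detection i is matched to track j".
    """
    rows = [[int(bits[i * n + j]) for j in range(n)] for i in range(n)]
    one_per_row = all(sum(row) == 1 for row in rows)
    one_per_col = all(sum(rows[i][j] for i in range(n)) == 1 for j in range(n))
    if not (one_per_row and one_per_col):
        return None
    return [row.index(1) for row in rows]

def aggregate(samples, cost, n):
    """Keep feasible reads only and return the cheapest assignment among them."""
    best, best_cost, feasible_reads = None, None, 0
    for bits, count in samples.items():
        assignment = decode(bits, n)
        if assignment is None:
            continue  # constraint violated, e.g. by noise or a weak penalty
        feasible_reads += count
        total = sum(cost[i][assignment[i]] for i in range(n))
        if best_cost is None or total < best_cost:
            best, best_cost = assignment, total
    return best, best_cost, feasible_reads

# Hypothetical readout: bitstring -> number of times it was observed.
samples = {
    "100010001": 12,  # identity matching
    "010100001": 30,  # detections 0 and 1 swapped
    "110000001": 5,   # infeasible: detection 0 matched twice
    "000000000": 3,   # infeasible: nothing matched
}
# Hypothetical association costs (detection i, track j); lower is better.
cost = [[9, 2, 7],
        [1, 8, 6],
        [5, 4, 3]]

best, best_cost, feasible_reads = aggregate(samples, cost, 3)
print(best, best_cost, feasible_reads)
```

Re-scoring feasible reads with the original cost, rather than trusting the most frequent bitstring, is a design choice made here for simplicity. A classical refinement step would typically follow.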

Long‑Term Applications

- End‑to‑end Quantum Neural Networks (QNNs) for mainstream vision tasks

- Sectors: software, healthcare (diagnostics), autonomous vehicles, robotics, media

- What: Replace or augment CNNs/Transformers with PQCs/QCNNs for classification, detection, segmentation, and pattern recognition at scale, leveraging potential quantum advantages in expressivity and training dynamics

- Tools/Products/Workflows: Error‑corrected QPUs; quantum‑native training stacks; hybrid model orchestration; dataset‑level state preparation pipelines

- Assumptions/Dependencies: Large numbers of error‑corrected logical qubits; efficient, scalable data encoding; stable gradient estimation (barren plateau mitigation)

- Real‑time quantum‑accelerated SLAM and 3D reconstruction

- Sectors: robotics, AR/VR/XR, industrial automation, autonomous navigation

- What: Use QPUs as co‑processors for global optimization (data association, loop closure, robust bundle adjustment) to reduce latency and improve robustness in dynamic environments

- Tools/Products/Workflows: On‑device or near‑edge QPU accelerators; low‑latency quantum links; co‑design of SLAM front/back‑ends for quantum calls

- Assumptions/Dependencies: Hardware maturity for low‑latency inference; tight integration with sensor pipelines; energy‑efficient quantum processing

- Large‑scale Structure‑from‑Motion (SfM) and bundle adjustment via quantum optimization

- Sectors: photogrammetry, mapping, digital twins, AEC, geospatial intelligence

- What: Solve massive nonconvex problems (global matching, robust camera/point estimation) using QA or gate‑based algorithms that exploit entanglement/interference to explore solution spaces more effectively

- Tools/Products/Workflows: “Quantum SfM Engine” with hybrid schedulers; integration into commercial mapping suites; scalable QUBO/gate constructions for graph‑level optimization

- Assumptions/Dependencies: High‑connectivity, high‑qubit‑count hardware; scalable minor‑embedding/transpilation; robust classical verification of the quantum outputs

- Quantum‑enhanced generative models and implicit representations

- Sectors: media/gaming (content creation), surgical planning, digital heritage

- What: PQC‑based generative models, quantum‑assisted NeRF/implicit surfaces for scene representation, with potential benefits in sample efficiency or expressivity

- Tools/Products/Workflows: Hybrid training loops; quantum‑aware differentiable renderers; compressed quantum state encodings

- Assumptions/Dependencies: Practical PQC depth/width; efficient quantum gradient computation; hardware supporting large‑scale training

- Quantum‑powered vision APIs delivered by major cloud platforms

- Sectors: enterprise software, analytics, retail, smart infrastructure

- What: Standardized cloud APIs for quantum‑assisted CV primitives (matching, tracking, registration, synchronization), seamlessly integrated with ML ops stacks

- Tools/Products/Workflows: Managed services (AWS Braket, Azure Quantum, IBM Quantum) offering CV‑specific endpoints; auto‑tuning of QUBO/gate parameters

- Assumptions/Dependencies: Market demand and ecosystem readiness; cost‑effective pricing; reliability/SLAs comparable to classical services

- Policy frameworks for equitable access, sustainability, and standards in QeCV

- Sectors: public policy, research funding agencies, standards bodies

- What: Guidance on equitable compute access (cloud credits), energy/resource accounting for quantum workloads, and standardization of QeCV benchmarks and disclosures

- Tools/Products/Workflows: Programmatic grants for QeCV; sustainability scorecards; open benchmarks/datasets; certification programs

- Assumptions/Dependencies: Clear evidence of advantage; environmental and social impact assessments; coordinated multi‑stakeholder involvement

- Consumer‑grade AR alignment and 3D scanning improvements

- Sectors: daily life (mobile, AR apps), creator tools

- What: More robust live mapping/alignment in mobile AR and home scanning, leveraging quantum‑accelerated global matching once edge‑friendly QPUs or low‑latency quantum services exist

- Tools/Products/Workflows: Edge‑cloud hybrid quantum calls; SDKs for mobile AR platforms; background quantum optimization for scene alignment

- Assumptions/Dependencies: Practical low‑latency access to quantum acceleration; privacy/security for visual data; cost effectiveness for consumer apps
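The PQC-based items in this list rely on training circuit parameters from measured expectation values. As a minimal sketch of that idea, the code below simulates a one-qubit circuit, `RY(theta)` applied to |0>, and minimises the expectation of Z by gradient descent with the parameter-shift rule. The state vector is computed exactly in plain Python rather than on a device, and the starting value and learning rate are arbitrary choices.

```python
import math

def expectation_z(theta):
    """<Z> after applying RY(theta) to |0>, from the exact state vector."""
    amp0 = math.cos(theta / 2)  # amplitude of |0>
    amp1 = math.sin(theta / 2)  # amplitude of |1>
    return amp0 ** 2 - amp1 ** 2

def parameter_shift_grad(theta):
    """Gradient of <Z> from two evaluations at theta +/- pi/2."""
    shift = math.pi / 2
    return 0.5 * (expectation_z(theta + shift) - expectation_z(theta - shift))

theta = 0.5          # arbitrary starting value
learning_rate = 0.4  # arbitrary step size
for _ in range(100):
    theta -= learning_rate * parameter_shift_grad(theta)

print(theta, expectation_z(theta))
```

For this circuit the expectation equals `cos(theta)`, so the optimiser drives `theta` towards pi, where the qubit is in state |1>. On hardware each expectation value would itself be estimated from many shots, which adds the gradient noise mentioned among the open challenges.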

Cross‑cutting Assumptions and Dependencies

- Hardware: Current NISQ constraints (qubit count, connectivity, noise, decoherence); QA more scalable today than gate‑based for CV‑relevant combinatorial optimization; long‑term hinges on error‑corrected, high‑qubit QPUs

- Formulation: Existence of faithful QUBO or gate‑based encodings with manageable overhead; minor‑embedding/transpilation does not blow up resource needs

- Workflows: Hybrid quantum–classical designs remain essential; repeated measurements and distributional outputs require robust post‑processing

- Access/Cost: Cloud‑based quantum access, SDK availability (D‑Wave Ocean, AWS Braket, Azure Quantum, IBM Qiskit, IonQ), and operational costs compatible with deployment use cases

- Skills/Standards: Community adoption of QeCV guidelines; reproducibility and benchmarking practices; workforce upskilling in quantum + CV co‑design
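The embedding overhead mentioned under "Formulation" arises because one logical variable may be represented by a chain of several physical qubits. The sketch below shows a simple way to map a physical readout back to logical values by majority vote, one common chain-break resolution. The embedding and the sample are invented, and the function `unembed` is a hypothetical helper, not a call from any SDK.

```python
def unembed(physical_sample, embedding):
    """Collapse chains of physical qubits into logical values by majority vote."""
    logical, broken = {}, []
    for variable, chain in embedding.items():
        values = [physical_sample[q] for q in chain]
        ones = sum(values)
        if 0 < ones < len(values):
            broken.append(variable)  # chain members disagree ("chain break")
        logical[variable] = 1 if 2 * ones > len(values) else 0  # ties fall to 0
    return logical, broken

# Hypothetical embedding: logical variable -> chain of physical qubit indices.
embedding = {"a": [0, 1, 2], "b": [3], "c": [4, 5, 6]}
# Hypothetical physical readout: qubit index -> measured bit.
physical_sample = {0: 1, 1: 1, 2: 0, 3: 0, 4: 1, 5: 1, 6: 1}

logical, broken = unembed(physical_sample, embedding)
print(logical, broken)
```

Here three logical variables consume seven physical qubits, and the chain for `a` is broken. Tracking chain lengths and the fraction of broken chains is one way to quantify whether the embedding is inflating resource needs.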

Glossary

- Adiabatic Quantum Computing (AQC): A quantum computing paradigm where a system is evolved slowly under a Hamiltonian to reach a ground state encoding the solution, well-suited to optimization problems. "Adiabatic Quantum Computing (AQC) \cite{das2005quantum,albash2018adiabatic}"

- annealing: The gradual change of a Hamiltonian to guide a quantum system toward its minimum-energy state, used for optimization. "AQC relies on the smooth evolution or annealing of a so-called Hamiltonian"

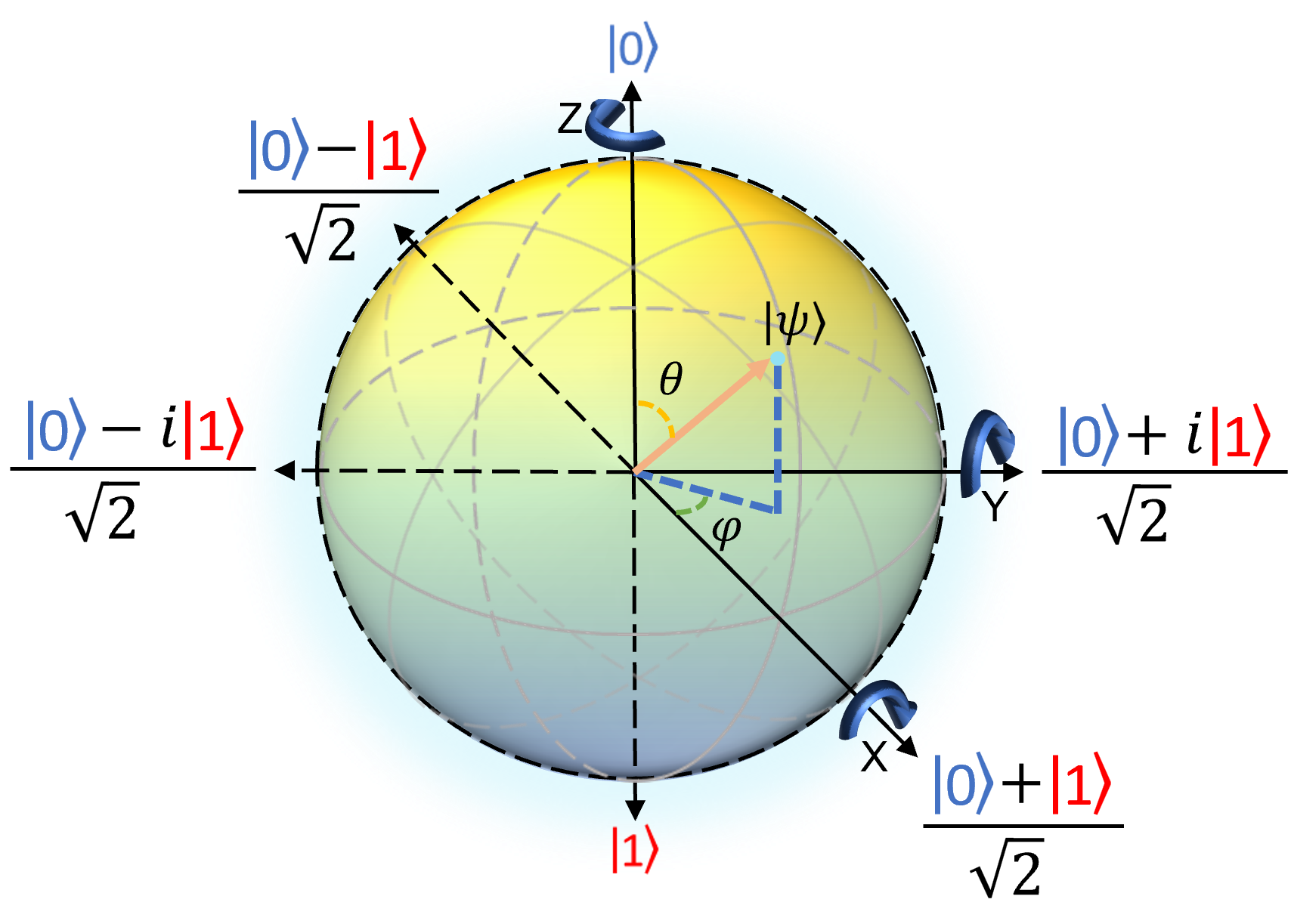

- Bloch sphere: A geometric representation of a single qubit state as a point on the unit sphere in 3D. "known as the Bloch sphere."

- bra–ket notation: Compact notation from quantum mechanics for vectors and inner products (kets |ψ⟩, bras ⟨ψ|). "We adopt the bra–ket notation to concisely express familiar linear algebraic constructs such as row and column vectors, inner and outer products, and tensor products."

- decoherence: The loss of quantum coherence due to environmental interactions, degrading quantum computation. "Additionally, decoherence poses a significant obstacle."

- Einstein-Podolsky-Rosen states: Entangled two-particle states illustrating nonlocal correlations in quantum mechanics. "This is one of the famous Einstein-Podolsky-Rosen states~\cite{nielsen2002quantum}."

- entanglement: Quantum correlation where multi-qubit states cannot be factored into individual qubit states. "so-called entangled states where the qubits cannot be described separately."

- gate-based quantum computing: A model of quantum computation using sequences of discrete unitary gates to transform states. "gate-based quantum computing \cite{nielsen2002quantum,sutor2019dancing}"

- Hamiltonian: An operator describing the energy of a quantum system; central to its evolution and optimization encoding. "AQC relies on the smooth evolution or annealing of a so-called Hamiltonian"

- Hermitian operator: An operator equal to its own conjugate transpose; measurement observables in quantum mechanics are Hermitian. "onto the eigenbasis of a Hermitian operator known as an observable"

- Hilbert space: The complex vector space in which quantum states (e.g., qubits) reside and computations occur. "These qubits abstractly span a Hilbert space, where computation takes place."

- Ising encodings: Formulations of optimization problems using spin variables with pairwise couplings in the Ising model. "combinatorial optimisation problems in the Ising encodings"

- Kronecker product: The tensor product of vectors/matrices used to build multi-qubit state representations. "the tensor or Kronecker product"

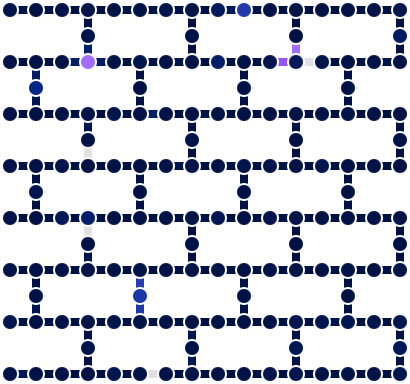

- minor embedding: Mapping a logical problem graph onto a quantum hardware graph with limited connectivity by representing one logical qubit with chains of physical qubits. "must be minor-embedded or transpiled."

- Noisy Intermediate-scale Quantum (NISQ): The current generation of quantum devices with tens to hundreds of noisy qubits and no full error correction. "Noisy Intermediate-scale Quantum (NISQ) computers \cite{preskill2018quantum}."

- observable: A Hermitian operator whose eigenvalues correspond to possible measurement outcomes. "known as an observable"

- Pauli operators: The fundamental single-qubit operators X, Y, Z used to manipulate and measure qubits. "Pauli-X, -Y and -Z operators"

- parameterised quantum circuits: Quantum circuits with tunable gate parameters trained to optimise a task-specific objective. "Parametrised quantum circuits can also become, in the long term, a considerable alternative to classical neural networks in computer vision."

- Quantum Annealing: A physical computing process that performs optimization by adiabatically evolving a system’s Hamiltonian. "gate-based quantum computing and quantum annealing."

- Quantum-enhanced Computer Vision (QeCV): A field integrating quantum computation into CV pipelines to improve speed, accuracy, or resource use. "Quantum-enhanced Computer Vision (QeCV) is a new research field at the intersection of computer vision, optimisation theory, machine learning and quantum computing."

- Quantum Image Processing (QIP): A subfield focusing on representing and processing images as quantum states and leveraging quantum linear algebra. "Quantum Image Processing (QIP), and Quantum Machine Learning (QML)."

- Quantum Machine Learning (QML): The study of applying quantum computing to machine learning tasks and models. "Quantum Machine Learning (QML)."

- Quantum Neural Networks (QNN): Neural-like models implemented with quantum circuits, often parameterised and trained variationally. "Quantum Neural Networks (QNN)."

- Quantum Processing Units (QPUs): Specialized hardware that executes quantum operations on qubits, analogous to GPUs for classical tasks. "Quantum Processing Units (QPUs) promise to extend the available arsenal of reliable computer vision tools and computational accelerators"

- Quadratic Unconstrained Binary Optimisation (QUBO): A canonical binary optimization form with quadratic objective and no constraints, commonly used in annealing. "Quadratic Unconstrained Binary Optimisation (QUBO) form."

- quantum register: A collection of multiple qubits treated as a single multi-qubit system. "and such a system of multiple qubits is called quantum register."

- qubit: The fundamental unit of quantum information, a two-level quantum system enabling superposition and entanglement. "Quantum computers operate on qubits, counterparts of classical bits that leverage quantum effects."

- Schrödinger Equation: The differential equation describing the time evolution of a quantum state under a Hamiltonian. "The (time) evolution of a quantum state is described by the Schrödinger Equation"

- superposition: The quantum property allowing a qubit to be in a linear combination of basis states simultaneously. "Typically, the basic state is brought into superposition as a form of initialisation."

- tensor product: The operation combining qubit states to form multi-qubit states in an exponentially larger space. "Due to the tensor product structure of quantum mechanics"

- transpilation: The process of compiling and mapping a logical quantum circuit onto a hardware-specific gate set and connectivity. "must be minor-embedded or transpiled."

- tunnelling: A quantum effect enabling systems to traverse energy barriers, aiding optimization in annealing. "superposition and tunnelling"

- unitary transformations: Norm-preserving linear operations that evolve quantum states and implement quantum gates. "gate-based quantum computing employs discrete unitary transformations"
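Several glossary entries (Kronecker product, unitary transformations, entanglement) can be tied together in one small example. The sketch below builds a two-qubit Bell state with plain Python lists: a Hadamard gate on the first qubit, extended to two qubits with a Kronecker product, followed by a CNOT. The helper names `kron` and `apply` are introduced here for illustration.

```python
import math

def kron(A, B):
    """Kronecker product of two matrices given as nested lists."""
    return [[a * b for a in row_a for b in row_b] for row_a in A for row_b in B]

def apply(M, v):
    """Matrix-vector product: apply gate M to state vector v."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]

s = 1 / math.sqrt(2)
H = [[s, s], [s, -s]]      # Hadamard gate
I2 = [[1, 0], [0, 1]]      # identity on one qubit
CNOT = [[1, 0, 0, 0],      # flips the second qubit when the first is 1
        [0, 1, 0, 0],
        [0, 0, 0, 1],
        [0, 0, 1, 0]]

state = [1, 0, 0, 0]                    # |00>
state = apply(kron(H, I2), state)       # superposition on the first qubit
state = apply(CNOT, state)              # entangle the two qubits

probabilities = [abs(a) ** 2 for a in state]  # order: 00, 01, 10, 11
# A two-qubit pure state (a, b, c, d) factors into single-qubit states
# exactly when a*d - b*c = 0.
a, b, c, d = state
is_product = abs(a * d - b * c) < 1e-12
print(probabilities, is_product)
```

Only the outcomes 00 and 11 have non-zero probability, each about one half, and the factorisation test fails. This matches the glossary's description of entangled states as ones where the qubits cannot be described separately.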
