- The paper demonstrates that looped transformers exhibit superior convergence in complex reasoning tasks, attributed to distinct V-shaped loss landscapes.

- It introduces the SHIFT framework, combining Single-Attn efficiency with Looped-Attn’s recursive advantages through a dynamic two-phase curriculum.

- Empirical analysis of the Hessian spectrum reveals phases of Collapse, Diversification, and Stabilization in Looped-Attn, which the paper interprets as support for its River-Valley landscape model.

The paper "What Makes Looped Transformers Perform Better Than Non-Recursive Ones (Provably)" (2510.10089) asks why looped transformers, termed Looped-Attn, outperform standard non-recursive transformers, termed Single-Attn, particularly on complex reasoning tasks. It explains the advantage by analyzing training dynamics at both the sample level and the Hessian level, and it proposes a training framework, SHIFT, to improve training efficiency.
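To make the two architectures concrete, here is a minimal NumPy sketch of the structural difference: Single-Attn applies an attention block once, while Looped-Attn reapplies the same block with shared weights. The single-head block, the residual connection, and all function names (`attention_block`, `single_attn`, `looped_attn`) are illustrative assumptions, not the paper's exact model definition.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def attention_block(h, Wq, Wk, Wv):
    # One single-head self-attention layer with a residual connection
    # (the residual is an assumption made for this sketch).
    q, k, v = h @ Wq, h @ Wk, h @ Wv
    scores = q @ k.T / np.sqrt(q.shape[-1])
    return h + softmax(scores) @ v

def single_attn(h, params):
    # Non-recursive model: the block is applied exactly once.
    return attention_block(h, *params)

def looped_attn(h, params, num_loops):
    # Recursive model: the same weights are reused on every loop.
    for _ in range(num_loops):
        h = attention_block(h, *params)
    return h

rng = np.random.default_rng(0)
d = 4
params = tuple(rng.normal(scale=0.1, size=(d, d)) for _ in range(3))
x = rng.normal(size=(5, d))  # 5 tokens, hidden size 4

y_single = single_attn(x, params)
y_looped = looped_attn(x, params, num_loops=3)
```

With `num_loops=1` the looped model reduces to the single-pass model, so the two differ only in how often the shared block is applied, not in parameter count.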

Theoretical Insights and Loss Landscape Geometry

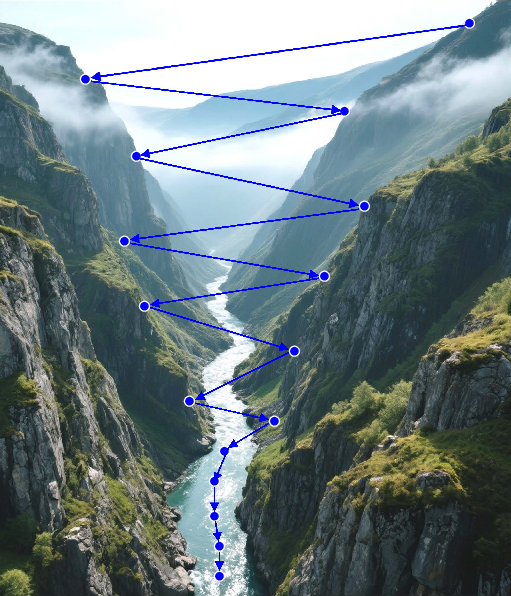

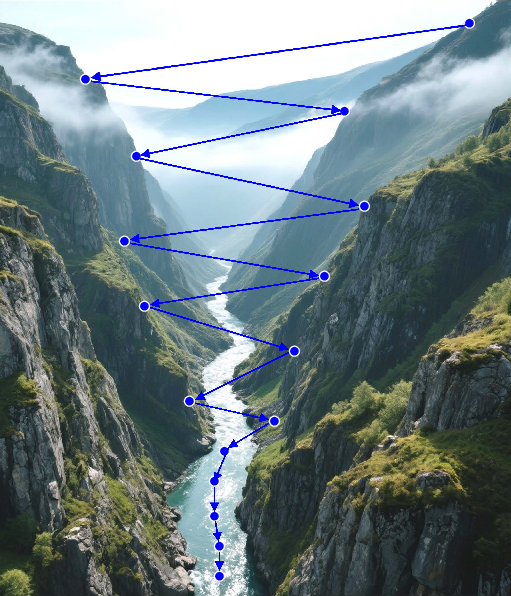

The paper distinguishes two loss landscape geometries: U-shaped valleys (flat), associated with Single-Attn, and V-shaped valleys (steep), associated with Looped-Attn. The distinction builds on the River-Valley landscape model, yielding a River-U-Valley and a River-V-Valley respectively. The recursive structure of Looped-Attn is posited to induce an inductive bias towards a River-V-Valley, which facilitates valley hopping and thereby improves loss convergence along the optimization path.
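The contrast between the two valley shapes can be illustrated with a one-dimensional toy cross-section. The sketch below is an assumption-laden illustration, not the paper's construction: it uses `y**4` as a stand-in for a flat U-shaped cross-section and `|y|` for a steep V-shaped one, and runs plain gradient descent with a fixed step size. It models only the direction across the valley and says nothing about progress along the river.

```python
import numpy as np

def grad_u(y):
    # Gradient of the flat-bottomed cross-section f_U(y) = y**4 (toy assumption).
    return 4.0 * y**3

def grad_v(y):
    # Subgradient of the sharp cross-section f_V(y) = |y| (toy assumption).
    return np.sign(y)

def run_gd(grad, y0, lr, steps):
    # Plain gradient descent with a fixed learning rate.
    ys = [y0]
    for _ in range(steps):
        ys.append(ys[-1] - lr * grad(ys[-1]))
    return np.array(ys)

def count_hops(ys):
    # Number of times the iterate crosses the valley floor at y = 0.
    signs = np.sign(ys)
    return int(np.sum(signs[1:] * signs[:-1] < 0))

traj_u = run_gd(grad_u, y0=0.25, lr=0.1, steps=20)
traj_v = run_gd(grad_v, y0=0.25, lr=0.1, steps=20)

print("U-valley hops:", count_hops(traj_u))
print("V-valley hops:", count_hops(traj_v))
```

In the V-shaped cross-section the gradient magnitude does not shrink near the floor, so the iterate keeps crossing from one wall to the other. In the flat U-shaped cross-section the gradient vanishes near the floor and the iterate creeps towards it without crossing.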

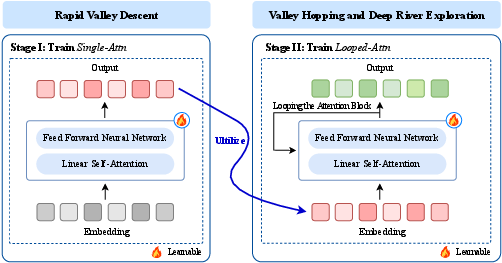

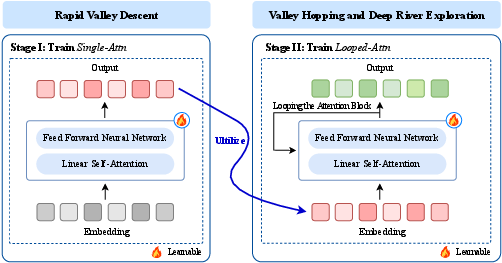

Figure 1: Loss Landscapes, Optimization Trajectories and SHIFT Strategy.

Empirical Observations and Conjecture

Empirically, the paper observes that Looped-Attn follows a two-phase curriculum, moving from simple patterns to complex ones, whereas Single-Attn plateaus after mastering the simple patterns. At the Hessian level, Looped-Attn also behaves dynamically, passing through phases of Collapse, Diversification, and Stabilization, while the spectrum of Single-Attn remains static. The paper interprets this behavior through the proposed River-V-Valley landscape model and conjectures that the superior learning dynamics of Looped-Attn stem from its landscape-level inductive bias.
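Hessian-level observations of this kind rest on computing the eigenvalues of the loss Hessian at successive checkpoints. The sketch below shows the basic computation on a toy quadratic loss using central finite differences. The loss, the helper names (`numerical_hessian`, `toy_loss`), and the step size are assumptions for illustration; for a real model one would normally use automatic differentiation instead, and this is not the paper's measurement procedure.

```python
import numpy as np

def numerical_hessian(f, w, eps=1e-3):
    # Central finite-difference estimate of the Hessian of f at w.
    n = w.size
    H = np.zeros((n, n))
    for i in range(n):
        e_i = np.zeros(n)
        e_i[i] = eps
        for j in range(n):
            e_j = np.zeros(n)
            e_j[j] = eps
            H[i, j] = (
                f(w + e_i + e_j) - f(w + e_i - e_j)
                - f(w - e_i + e_j) + f(w - e_i - e_j)
            ) / (4.0 * eps**2)
    # Symmetrize to remove small numerical asymmetries.
    return 0.5 * (H + H.T)

# Toy loss with one flat and one sharp direction (hypothetical example).
A = np.diag([1.0, 10.0])

def toy_loss(w):
    return 0.5 * w @ A @ w

w_checkpoint = np.array([0.3, -0.2])
H = numerical_hessian(toy_loss, w_checkpoint)
eigenvalues = np.linalg.eigvalsh(H)  # sorted in ascending order
print(eigenvalues)
```

Repeating this at several training checkpoints and comparing the resulting spectra is one way to see whether the spectrum stays static or changes over training.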

The SHIFT Framework

Building on these theoretical insights, the paper introduces SHIFT (Staged Hierarchical Framework for Progressive Training), which combines the computational efficiency of Single-Attn with the performance benefits of Looped-Attn. SHIFT first trains with Single-Attn to master simple patterns quickly, then switches to Looped-Attn to exploit its landscape-induced bias for exploration along complex river paths. The SWITCH Criterion with Patience (SCP) determines when the switch happens, reducing computational cost without sacrificing performance.
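The summary does not spell out the exact SCP rule, so the following is only a sketch of what a patience-based switch could look like, assuming a generic loss-plateau test: switch once the training loss has failed to improve by more than `min_delta` for `patience` consecutive checks. The class name `PatienceSwitch`, the helper `first_switch_step`, and both thresholds are hypothetical.

```python
class PatienceSwitch:
    """Signals a one-way switch from phase 1 to phase 2 after a loss plateau.

    Assumed rule (not taken from the paper): switch when the loss has not
    improved by more than `min_delta` for `patience` consecutive updates.
    """

    def __init__(self, patience=3, min_delta=1e-3):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.stale = 0
        self.switched = False

    def update(self, loss):
        # Once switched, stay switched: training never returns to phase 1.
        if self.switched:
            return True
        if self.best - loss > self.min_delta:
            self.best = loss
            self.stale = 0
        else:
            self.stale += 1
            if self.stale >= self.patience:
                self.switched = True
        return self.switched

def first_switch_step(losses, patience, min_delta):
    # Returns the index of the first step at which the switch fires, or None.
    switch = PatienceSwitch(patience=patience, min_delta=min_delta)
    for step, loss in enumerate(losses):
        if switch.update(loss):
            return step
    return None

# Hypothetical phase-1 loss curve that plateaus near 0.4.
losses = [1.0, 0.6, 0.4, 0.399, 0.3995, 0.3991, 0.2]
switch_step = first_switch_step(losses, patience=3, min_delta=0.01)
```

In a SHIFT-style schedule, the training loop would run the single-pass model while `update` returns `False` and continue with the looped model, reusing the same weights, once it returns `True`. The patience window guards against switching on a single noisy loss value.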

Implications and Future Directions

The implications of this research are twofold. Practically, the introduction of the SHIFT framework offers a methodology to exploit the inductive biases of looped transformers efficiently. Theoretically, the understanding of U-shaped and V-shaped valley dynamics provides a new lens to study optimization landscapes in deep learning. Future research may extend this analysis to more complex architectures and explore the potential of adaptive recursive structures in transformers to further improve reasoning capabilities.

Conclusion

The paper provides a theoretical framework that explains why Looped-Attn outperforms Single-Attn on complex reasoning tasks. By formalizing the River-U-Valley and River-V-Valley landscapes and introducing the SHIFT training methodology, the study advances our understanding of transformer dynamics and optimization strategies, and it offers a practical way to obtain the benefits of recursion at lower training cost.