- The paper introduces a unified multimodal video generation framework based on diffusion Transformers, integrating visual, textual, and auditory modalities.

- It details multi-reference image-to-video synthesis and video-to-video extension with shot-level continuity, achieving state-of-the-art subject consistency and narrative coherence.

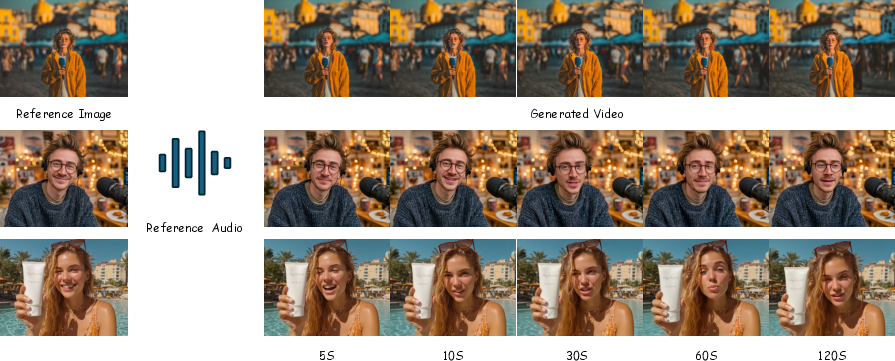

- The work presents an audio-guided talking avatar subsystem that ensures fine-grained lip sync and multi-person interactions for robust, real-time multimedia applications.

Introduction

SkyReels-V3 introduces a unified multimodal video generation framework exploiting large-scale diffusion Transformers under an in-context learning paradigm. The system integrates visual, textual, and auditory modalities and supports three principal video generation tasks: multi-reference image-to-video synthesis, video-to-video extension (including shot-level continuity modeling), and audio-guided talking avatar generation. The key architectural components include advanced multimodal conditioning, data curation with compositional de-artifacting, hybrid image–video training strategies, and hierarchical spatiotemporal consistency modules. This approach aims for maximal generalization and robust subject, narrative, and style consistency.

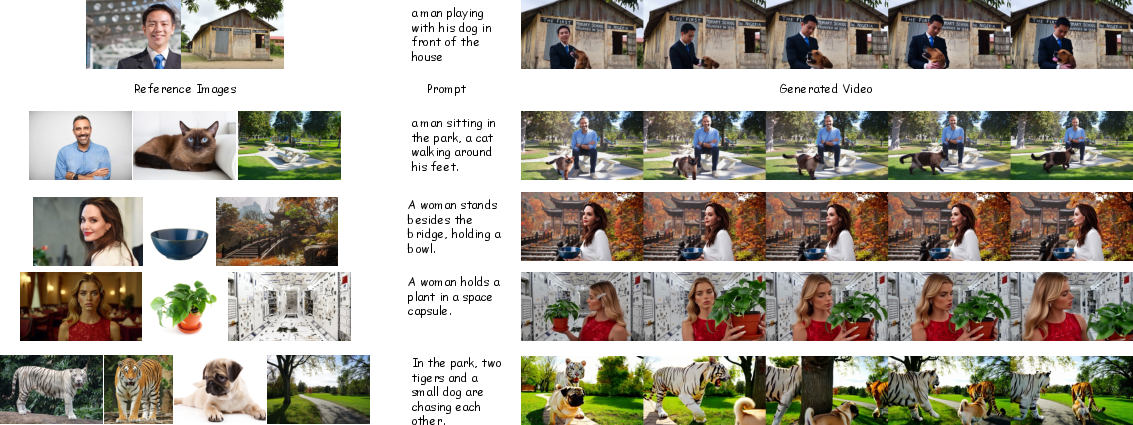

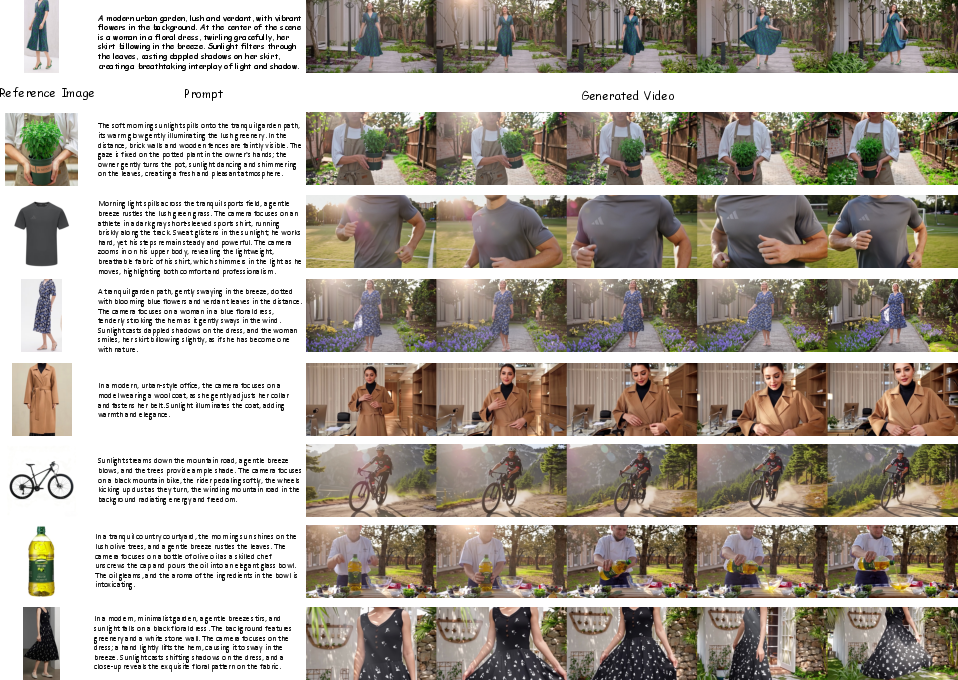

Reference Image-to-Video Synthesis

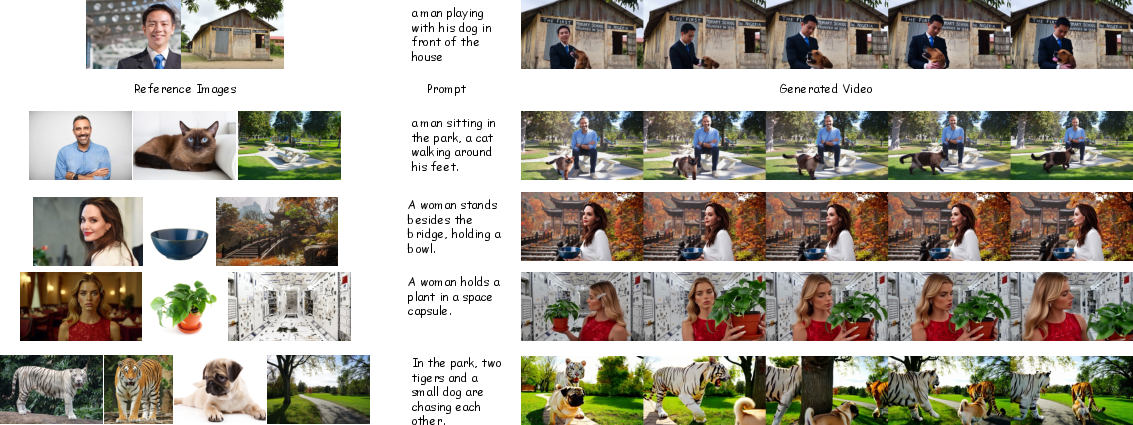

The multi-reference paradigm enables the model to accept up to four visual references (corresponding to subjects, objects, backgrounds) alongside semantic text prompts. Fine-grained data curation is crucial: carefully filtered videos are subsampled, and subject/background disentanglement is enforced through cross-frame pairing and semantic editing, reducing copy–paste and trivial frame duplication artifacts pervasive in prior works.

Reference integration is achieved via latent concatenation in the video VAE-preprocessed space, facilitating flexible composition control without explicit human-defined layout annotations. Multi-resolution joint optimization exposes the model to a diversity of spatial scales and aspect ratios, supporting a range of visual requirements.

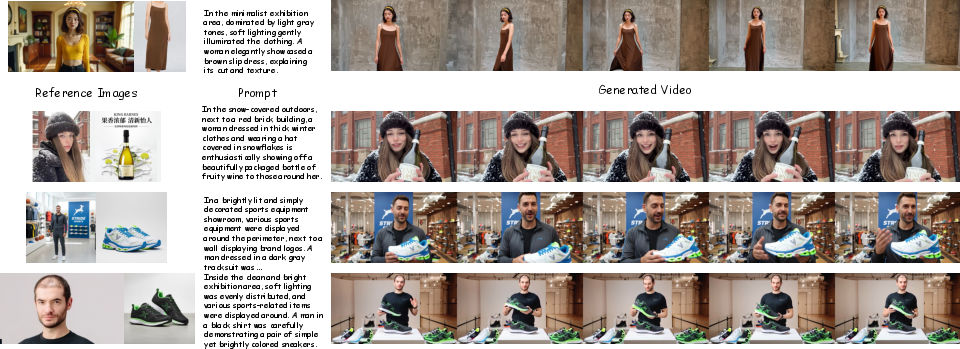

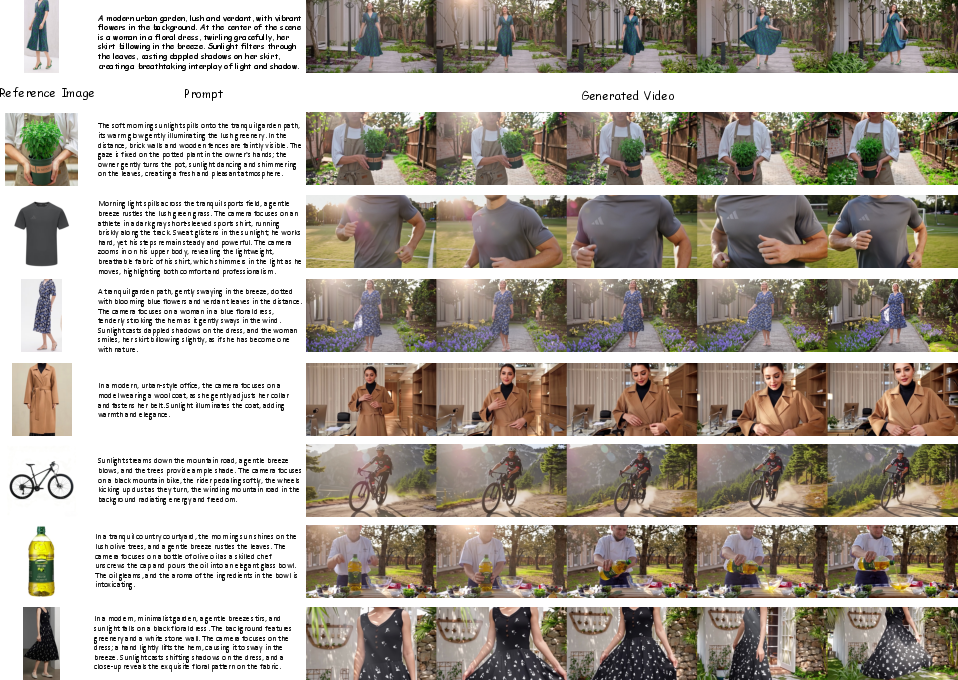

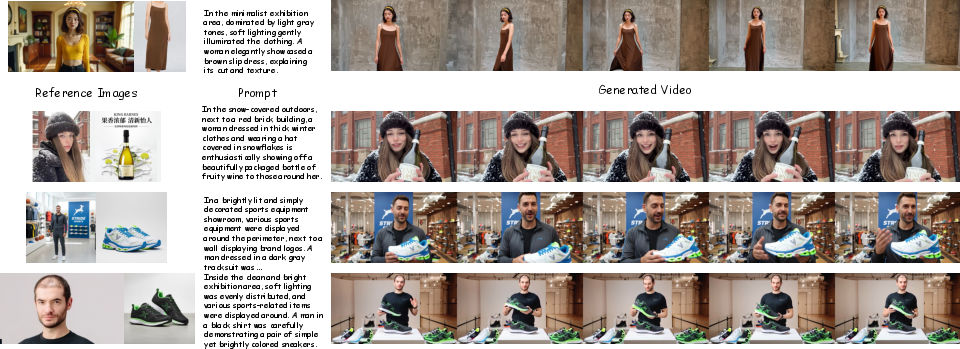

SkyReels-V3 demonstrates high subject identity preservation, temporal coherence, and semantic controllability in diverse scenarios, ranging from character interplay to e-commerce and advertising.

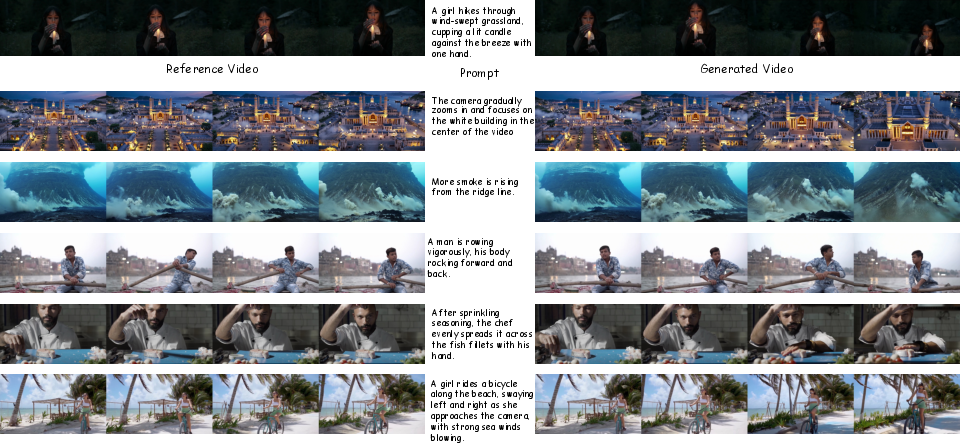

Figure 1: Reference image-to-video results, demonstrating coherent dynamic subject interactions and context adherence.

Figure 2: Dynamic composition with arbitrary multi-subject scenarios leveraging multi-reference inputs.

Figure 3: Instant video synthesis for diverse live commerce hosts and backgrounds.

Figure 4: One-shot reference-based advertising and product demonstration video generation.

The competitive evaluation with contemporary video generation models (Vidu Q2, Kling 1.6, PixVerse V5) reveals state-of-the-art visual quality and reference consistency. The model provides robust generalization for open-domain subjects, objects, and style variants, with superior performance in maintaining compositional stability over extended sequence lengths.

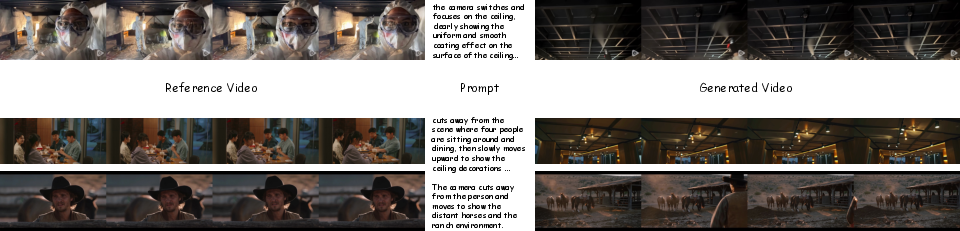

Video-to-Video Extension and Cinematic Continuity

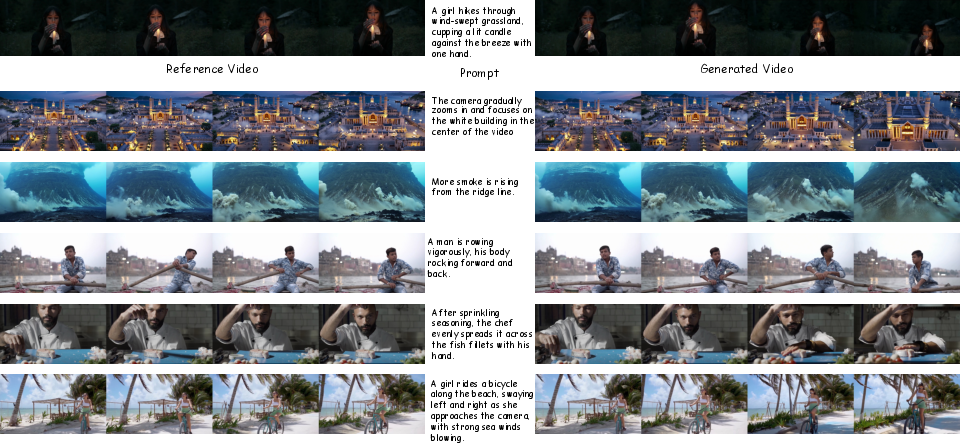

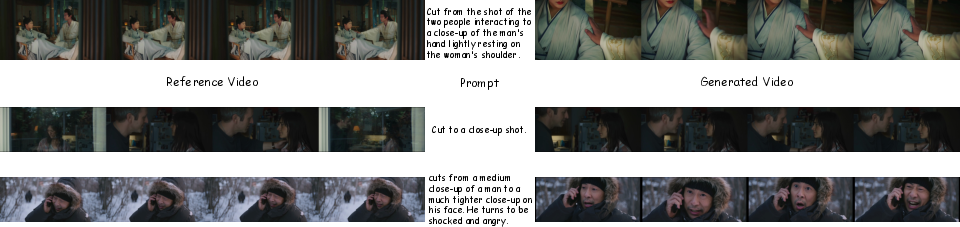

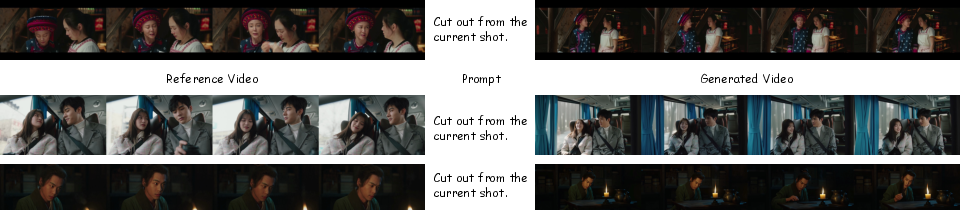

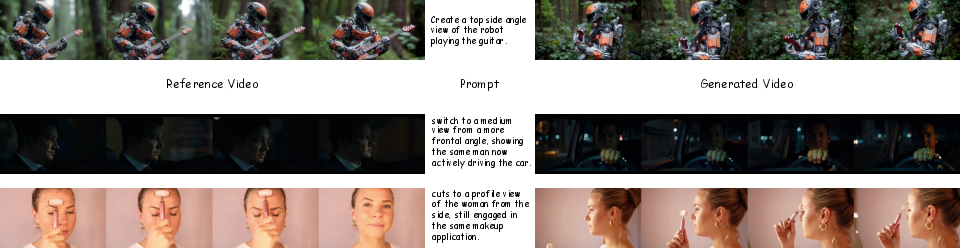

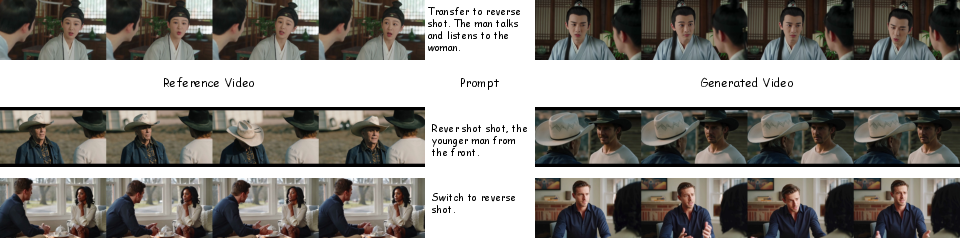

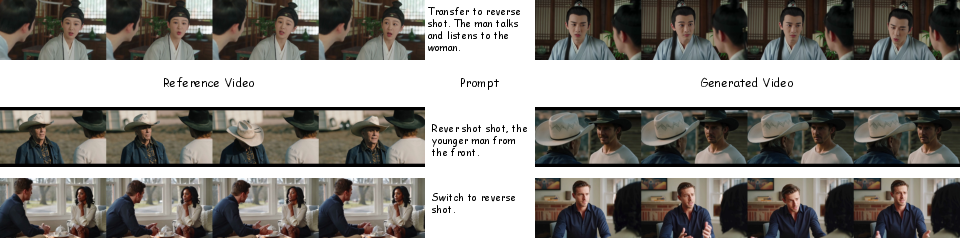

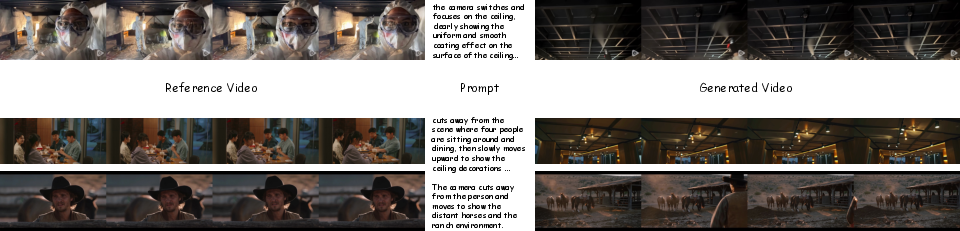

SkyReels-V3 incorporates comprehensive video extension, partitioned into single-shot and multi-shot/shot-switching paradigms—including detected or user-specified transitions (cut-in, cut-out, multi-angle, shot/reverse shot, cut-away). The architecture includes a shot-switching detector for training data curation and utilizes unified multi-segment positional encodings and hybrid hierarchical training, supporting arbitrarily long temporal consistency.

Figure 5: Example of single-shot video extension demonstrating smooth motion and persistent narrative geometry.

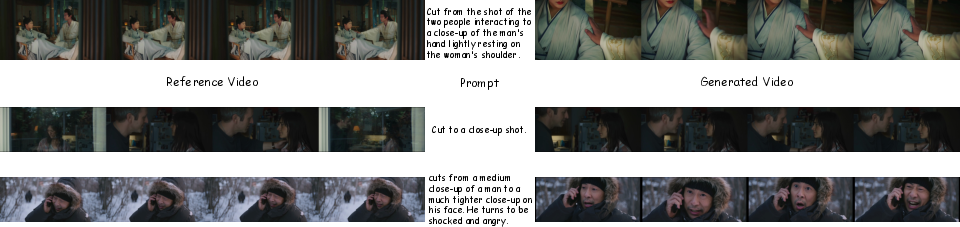

Figure 6: Shot-switching extension—cut-in transition.

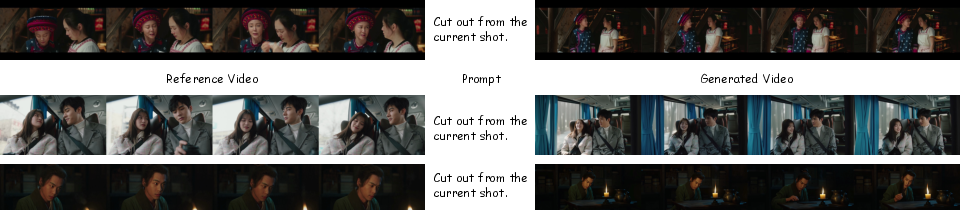

Figure 7: Shot-switching extension—cut-out transition.

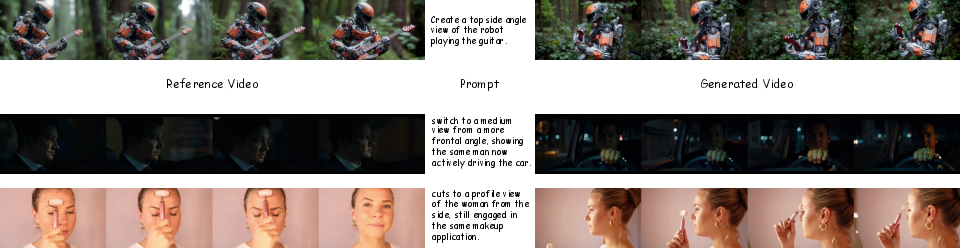

Figure 8: Multi-angle transition extension output.

Figure 9: Shot/reverse shot extension pattern with dialog-driven focus change.

Figure 10: Cut-away transition, shifting focus across narrative axes.

Generation quality is stable at up to 720p across varied aspect ratios and extension durations (5–30s per segment). The model consistently retains scene, subject, and style attributes, preserving narrative and motion semantics with high spatial–temporal granularity—crucial for professional content and cinematic video synthesis.

Audio-Guided Talking Avatar Video Generation

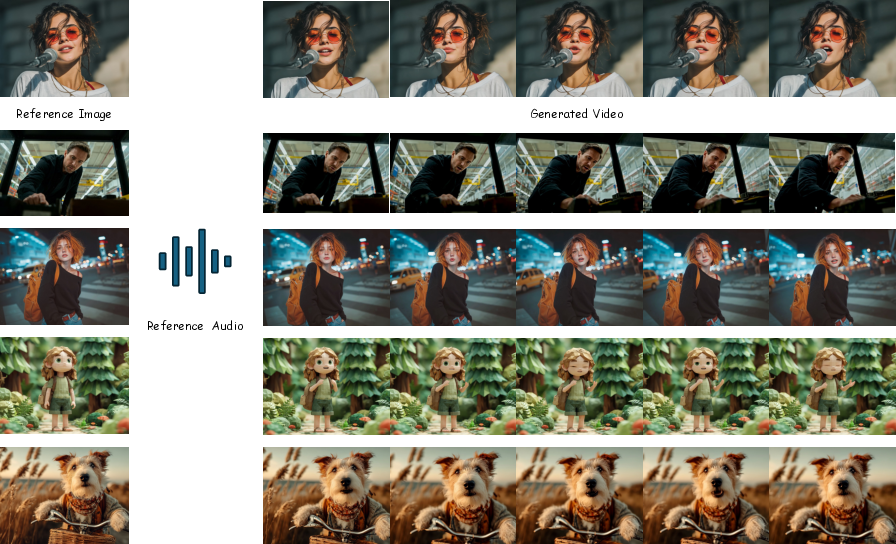

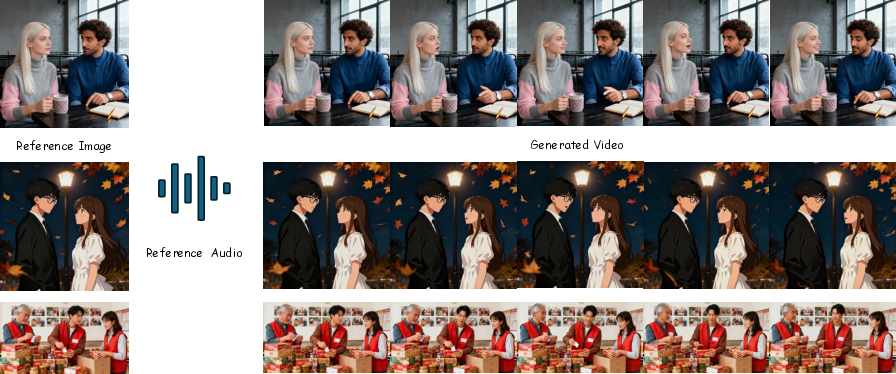

SkyReels-V3's Talking Avatar subsystem synthesizes minute-scale, audio-guided videos from a single portrait and input audio, supporting photorealistic, stylized, and non-human avatars, as well as multi-character long-form dialog scenarios. Keyframe-constrained diffusion stabilizes long-range identity/motion, while dedicated audio-visual synchronization routines and region-masked alignment enable close phoneme-level lip sync and naturalistic gestures, even over long durations and across languages.

Figure 11: Generalization of the talking avatar model to non-human and multi-style generation scenarios.

Figure 12: Multi-person audio-driven generation; automatic speaker–listener differentiation responding to dialog content.

Figure 13: Minute-long video generation showcasing persistent visual style and alignment accuracy.

The talking avatar model demonstrates parity with, or outperforms, leading models such as OmniHuman, KlingAvatar, and HunyuanAvatar in visual quality, synchronization, and expressive realism, and enables fine-grained multi-person interaction with explicit mask-based speaker selection.

Discussion and Implications

SkyReels-V3 integrates multimodal in-context learning, spatiotemporal consistency, and compositional generalization in a single, modular diffusion Transformer framework applicable to high-fidelity and controllable video generation. The methodology markedly curtails common pitfalls such as subject drift, visual artifacts, and narrative fragmentation over long durations, and is directly extensible to professional applications in film, advertising, e-commerce, and digital avatars.

Practically, the open-source availability and robust, unified baseline lower the barrier for scaled real-world multimodal content generation research. Theoretically, the model demonstrates that large-scale video Diffusion Transformers—with proper data curation, modular training strategies, and multimodal alignment—are viable for general-purpose “world model” learning and controllable, narrative-level visual simulation.

On future directions, the demonstrated in-context multimodality and fine-grained compositional controls point toward video generation models that could further unify with virtual agent/action modeling, multi-view 3D/4D scene synthesis, and closed-loop interactive learning protocols.

Conclusion

SkyReels-V3 establishes an advanced, extensible foundation for multimodal, high-quality video generation under unified diffusion Transformer architectures. It demonstrates high subject–narrative consistency, cinematic controllability, and strong generalization across reference-driven, extension, and audio-visual alignment tasks. The architecture and methods will facilitate advancing theory and practice in scalable, controllable, and modular generative world models for video synthesis and related AI domains (2601.17323).